Apache Airflow

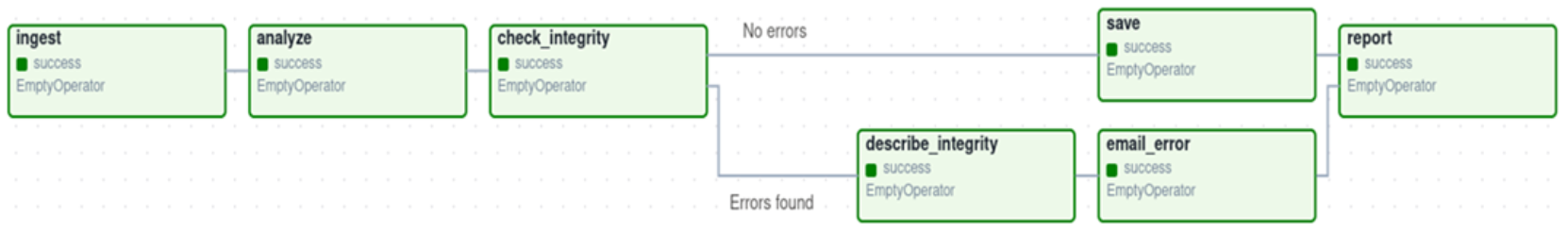

Apache Airflow is a platform for building and running workflows, which it represents as Directed Acyclic Graphs (DAGs). A workflow consists of individual tasks, each defining a specific piece of work, arranged according to their dependencies and data flows. The DAG specifies these dependencies and therefore the order in which the tasks run.

Figure 3. Apache Airflow DAG example
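
To illustrate how a DAG's dependencies determine execution order, the following is a minimal sketch that uses only Python's standard `graphlib` module. It is not Airflow's API or scheduler; the task names (`fetch_data`, `clean_data`, `validate_data`, `run_analysis`) and the `execution_order` helper are hypothetical and chosen only for illustration.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical workflow: each task maps to the set of tasks it depends on.
dependencies = {
    "fetch_data": set(),
    "clean_data": {"fetch_data"},
    "validate_data": {"fetch_data"},
    "run_analysis": {"clean_data", "validate_data"},
}


def execution_order(deps):
    """Return one valid run order in which every task follows its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)

# A graph with a cycle is not a DAG, so no valid order exists.
try:
    execution_order({"a": {"b"}, "b": {"a"}})
    cycle_detected = False
except CycleError:
    cycle_detected = True
```

Because `clean_data` and `validate_data` do not depend on each other, either may come first; the only guarantee is that `fetch_data` precedes both and `run_analysis` follows both. The acyclic requirement matters for the same reason: a cycle would leave no task that is safe to run first.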

Tasks in an Airflow workflow can cover a wide range of activities, such as fetching data, running analyses, or triggering external systems. Airflow itself is agnostic to what a task does, which lets it orchestrate many kinds of operations. It integrates with other systems either through high-level support from providers or by running commands directly with shell or Python operators. This flexibility lets Airflow serve varied use cases across different domains.
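
The idea that an orchestrator can stay agnostic to what its tasks do can be sketched in plain Python. The example below is a toy model under stated assumptions, not Airflow's operator classes: `shell_task`, `python_task`, and `run_all` are hypothetical helpers that loosely mirror the roles of a shell operator, a Python operator, and a runner that treats every task as an opaque callable.

```python
import subprocess


def shell_task(command):
    """Wrap a shell command as a task (hypothetical stand-in for a shell operator)."""
    def run():
        result = subprocess.run(
            command, shell=True, check=True, capture_output=True, text=True
        )
        return result.stdout.strip()
    return run


def python_task(func, *args):
    """Wrap a Python callable as a task (hypothetical stand-in for a Python operator)."""
    def run():
        return func(*args)
    return run


def run_all(tasks):
    """Run tasks in the given order without inspecting what each one does."""
    return {name: task() for name, task in tasks}


# Hypothetical two-step workflow mixing a shell command and a Python function.
tasks = [
    ("extract", shell_task("echo extracted")),
    ("summarize", python_task(sum, [1, 2, 3])),
]
results = run_all(tasks)
print(results)
```

The runner only calls each task and records its result, so adding a new kind of work means adding a new wrapper rather than changing the runner. That separation is the design choice the paragraph above describes.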