
Why Sensor Fusion?


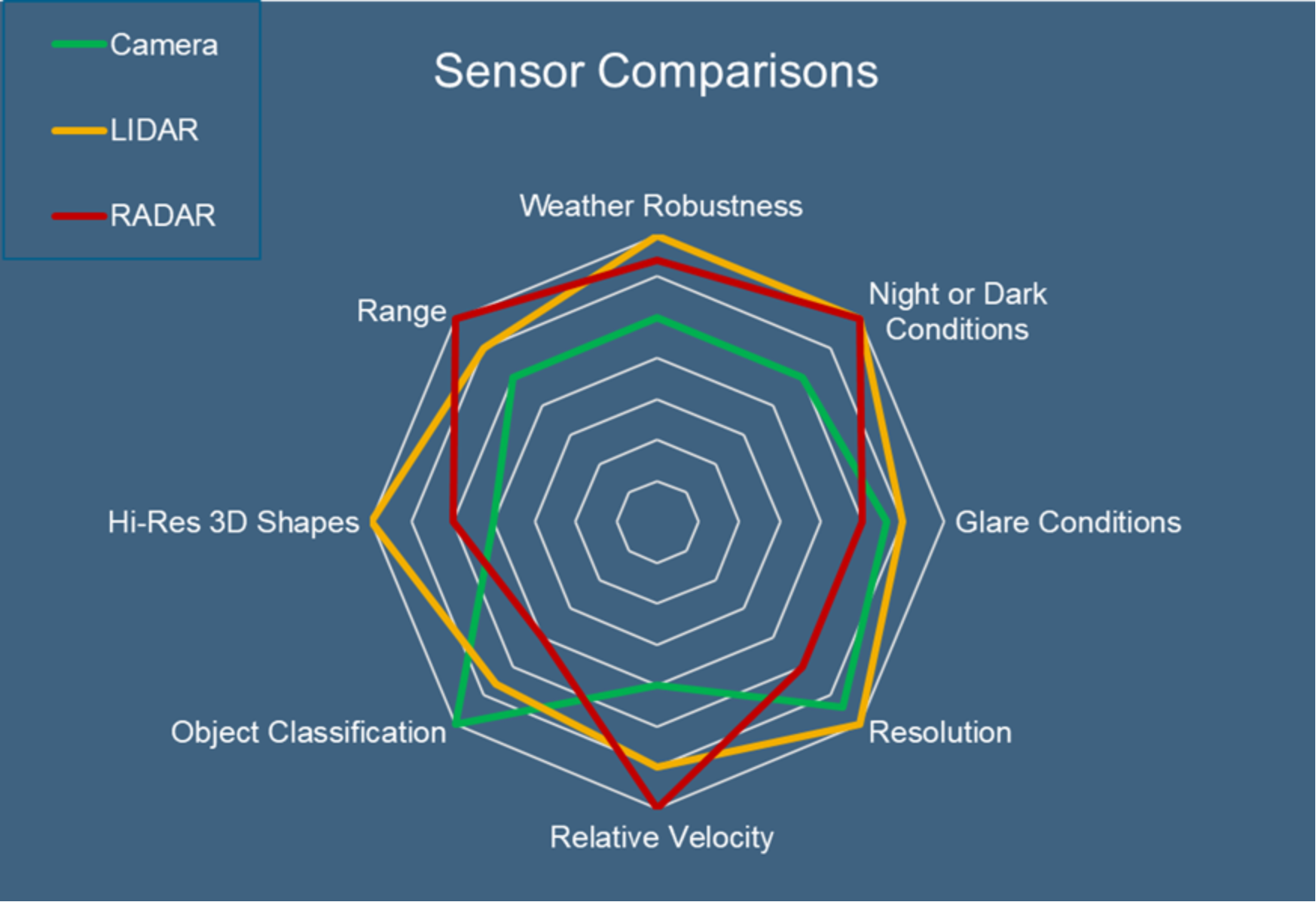

The following figure shows a spider graph comparing three primary sensor types: video camera, LiDAR, and RADAR:

Figure 13. Spider diagram showing qualitative strengths and weaknesses of each sensor type

Each sensor has its strengths and weaknesses. Sensor fusion combines data from multiple sensors so that each can compensate for the limitations and errors of the others. By cross-referencing and validating data from different sources, it is possible to achieve a higher level of accuracy and reliability in measurements and estimations. Sensor fusion also increases redundancy, fault tolerance, and environmental awareness, and improves robustness in extreme weather and noise conditions.
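As a rough illustration of how combining sensors can improve accuracy, the sketch below fuses independent distance estimates with inverse-variance weighting, one simple and widely used fusion technique. It is not presented as the method used in this reference architecture. The function name `fuse_estimates`, the sensor readings, and the variances are all hypothetical values chosen for the example.

```python
def fuse_estimates(measurements):
    """Fuse independent estimates of one quantity by inverse-variance weighting.

    measurements: list of (value, variance) pairs, each variance > 0.
    Returns (fused_value, fused_variance).
    """
    if not measurements:
        raise ValueError("at least one measurement is required")
    if any(var <= 0 for _, var in measurements):
        raise ValueError("variances must be positive")

    # A more precise sensor (smaller variance) receives a larger weight.
    weights = [1.0 / var for _, var in measurements]
    total_weight = sum(weights)

    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total_weight
    # The fused variance is never larger than the best single-sensor variance.
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical range readings (metres) to the same object; variances are assumed.
readings = [
    (25.4, 0.04),  # LiDAR: assumed most precise for range
    (25.1, 0.25),  # RADAR
    (26.0, 1.00),  # video camera: assumed least precise for range
]

distance, variance = fuse_estimates(readings)
print(f"fused distance: {distance:.2f} m, variance: {variance:.4f}")
```

Under these assumed numbers, the fused variance is lower than that of the best individual sensor, which is the sense in which cross-referencing independent sources can raise accuracy. A production system would more likely use a recursive estimator such as a Kalman filter, and would need to account for correlated errors and sensor faults.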

For each demanding environmental scenario, one or two sensor types typically perform best. For instance, for high-resolution 3D shape recognition, LiDAR outperforms both RADAR and video cameras. For object classification, however, the video camera is the top performer.