Playbooks

Dell Technologies’ Ansible-based deployment installs the Kubernetes Web UI (Dashboard) for graphical management of your cluster, as shown in the following figure:

Figure 6. Kubernetes management using Dashboard

Dashboard enables you to manage and monitor nodes, pods, services, persistent volumes, and more from a single interface. You can also run scripts that convert HPC nodes to Kubernetes nodes and back again, which allows flexible workload scheduling on a single pool of resources. To enable dynamic scheduling, run the partition-switching script from the CLI. The Ansible deployment produces a cluster similar to the Bright deployment, with both Slurm and Kubernetes personalities.

Ansible playbooks provide a convenient way to install the software for the HPC Validated Design for AI and Data Analytics by using PowerEdge servers with factory-installed CentOS images. Playbooks are available on GitHub (https://github.com/dellhpc/omnia).

The following tables provide details about the Ansible playbook environment:

Table 12. Ansible playbook environment capabilities

| Capability | Technology |
| --- | --- |
| Container runtime with accelerator support | Docker containers |
| Container orchestration | Kubernetes |
| System monitoring | Prometheus |
| CNI-compliant software-defined network (SDN) | Flannel and Calico |
| Service discovery | CoreDNS |
| Ingress and proxy | Nginx |

Table 13. Ansible playbook environment components – Omnia 1.0

| Component | Version |
| --- | --- |
| Operating system (management node) | CentOS 8.4 |
| Operating system deployed by Omnia on bare-metal servers | CentOS 7.9 2009 Minimal Edition |
| Cobbler | 3.2.1 |
| Ansible AWX | 19.0.0 |
| Slurm Workload Manager | 20.11.2 |
| Kubernetes Controllers on Management Station | 1.21.0 |
| Kubernetes Controllers on Manager and Compute | 1.16.7 or 1.19.3 |
| Kubeflow | 1.0 |
| Prometheus | 2.23.0 |
| Helm | 3.0.1 |

To prepare systems for software deployment by using Ansible, ensure that each server is racked, powered, and networked so that the server can download software packages from the Internet or from a full CentOS mirror.

The HPC Validated Design for AI and Data Analytics assumes that you have two networks:

- A management network that uses the integrated Ethernet for iDRAC-based management

- A high-bandwidth fabric that is built on either 100 GbE or Enhanced Data Rate (EDR) InfiniBand

Hosting the management network and the high-speed fabric on two separate, private IP spaces is a best practice. As an example, the management network might use 192.168.x.x, while the high-speed fabric uses 10.1.x.x. Also, assign hostnames to systems. You can assign both names and IP addresses manually or by using Dynamic Host Configuration Protocol (DHCP).
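The address-space separation described above can be sanity-checked before deployment. The following is a minimal sketch that uses Python's standard `ipaddress` module. The `/16` prefixes are an assumption inferred from the `x.x` placeholders in the example, and the function name `check_address_plan` is hypothetical.

```python
import ipaddress


def check_address_plan(mgmt_cidr: str, fabric_cidr: str) -> list:
    """Return a list of problems found in the two-network address plan."""
    mgmt = ipaddress.ip_network(mgmt_cidr)
    fabric = ipaddress.ip_network(fabric_cidr)
    problems = []
    # Both networks are expected to live in private address space.
    for label, net in (("management", mgmt), ("fabric", fabric)):
        if not net.is_private:
            problems.append(f"{label} network {net} is not private address space")
    # The two networks must not share any addresses.
    if mgmt.overlaps(fabric):
        problems.append(f"{mgmt} and {fabric} overlap")
    return problems


if __name__ == "__main__":
    # Assumed prefixes matching the 192.168.x.x and 10.1.x.x example.
    issues = check_address_plan("192.168.0.0/16", "10.1.0.0/16")
    print(issues if issues else "address plan looks consistent")
```

An empty list means that both ranges are private and do not overlap. It says nothing about whether the addresses are actually routed or assigned correctly.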

The Ansible playbooks assume that, at a minimum, each node has SSH access on the high-speed fabric. Ansible uses the IP addresses of the high-speed fabric to establish the SDN for the Kubernetes installation.
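Because the playbooks depend on SSH access over the high-speed fabric, it can help to confirm that each node accepts TCP connections on the SSH port before you run them. The sketch below is one possible check, not part of the playbooks. The function name `ssh_port_open` is hypothetical, and port 22 is assumed to be the SSH port.

```python
import socket


def ssh_port_open(host: str, port: int = 22, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False
```

For example, `ssh_port_open("10.1.0.1")` would test one node at an assumed fabric address. The check confirms only that the port accepts connections; it does not verify keys or credentials.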

Ansible uses roles to customize installation on different servers. Each server is given a specific role using the Ansible inventory file. Servers can be master nodes, unaccelerated compute nodes, or accelerated compute nodes.

The master role must list a single node to be used for Slurm and Kubernetes scheduling and orchestration, as well as for managing and monitoring the system. It does not require any accelerators and is not used for compute work.

List unaccelerated (that is, CPU-only) compute nodes in the compute section of the inventory file. Because GPU enablement is an add-on to the basic compute node provisioning process, list GPU-accelerated compute nodes in that unaccelerated section as well as in the GPU section.

Here is a sample inventory file:

[master]

master

[compute]

compute[000:005]

[gpus]

compute001

compute002

### DO NOT EDIT BELOW THIS LINE ###

[workers:children]

compute

gpus

[cluster:children]

master

workers
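The sample inventory uses a numeric host range (`compute[000:005]`) and lists each GPU node in both the `compute` and `gpus` groups. The following sketch expands such a range and reports GPU hosts that are missing from the compute group. It is a simplified illustration rather than Ansible's own parser: it handles only plain numeric ranges, and the function names are hypothetical.

```python
import re

# Matches a numeric host range such as compute[000:005].
_RANGE = re.compile(r"^(?P<prefix>.*)\[(?P<start>\d+):(?P<end>\d+)\](?P<suffix>.*)$")


def expand_hosts(pattern: str) -> list:
    """Expand one inventory host pattern into individual hostnames."""
    match = _RANGE.match(pattern)
    if match is None:
        return [pattern]  # a plain hostname, nothing to expand
    start, end = match["start"], match["end"]
    width = len(start)  # leading zeros in the start value set the padding
    return [
        f"{match['prefix']}{number:0{width}d}{match['suffix']}"
        for number in range(int(start), int(end) + 1)  # both ends are included
    ]


def gpus_missing_from_compute(compute_patterns, gpu_patterns) -> list:
    """Return GPU hosts that are not also listed in the compute group."""
    compute = {h for p in compute_patterns for h in expand_hosts(p)}
    gpus = {h for p in gpu_patterns for h in expand_hosts(p)}
    return sorted(gpus - compute)


if __name__ == "__main__":
    print(expand_hosts("compute[000:005]"))
    print(gpus_missing_from_compute(["compute[000:005]"], ["compute001", "compute002"]))
```

For the sample inventory, the second call returns an empty list because `compute001` and `compute002` both fall inside the `compute[000:005]` range.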

You can install Ansible on the master node by using the yum package manager (as root):

yum install ansible

To download Ansible playbooks, go to the following GitHub page: https://github.com/dellhpc/omnia

After networking is set up, Ansible is installed on the master node, and the inventory file is created, run the build-cluster.yml playbook to deploy the cluster:

ansible-playbook -i host_inventory_file build-cluster.yml
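If you script the deployment, one option is to run a syntax check before the real run. The sketch below builds the same command line as above and optionally appends the `ansible-playbook` option `--syntax-check`, which parses the playbook without executing tasks. The helper names `playbook_command` and `run` are hypothetical, and the wrapper is an illustration rather than part of the playbooks.

```python
import shlex
import subprocess


def playbook_command(inventory: str, playbook: str, syntax_check: bool = False) -> list:
    """Build the ansible-playbook argument list without running it."""
    command = ["ansible-playbook", "-i", inventory, playbook]
    if syntax_check:
        command.append("--syntax-check")  # parse the playbook only; no tasks run
    return command


def run(command: list) -> int:
    """Echo the command, run it, and return its exit status."""
    print("+", shlex.join(command))
    return subprocess.run(command, check=False).returncode
```

A script might call `run(playbook_command("host_inventory_file", "build-cluster.yml", syntax_check=True))` first and continue with the full run only if the result is `0`.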

The playbook installs all necessary dependencies on the master and compute nodes, and it ensures that nodes are joined to the Kubernetes cluster. The process takes approximately 30 minutes, depending on your Internet connection speed.
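To confirm that nodes have joined the Kubernetes cluster, you can inspect the node list that `kubectl get nodes -o json` returns. The following sketch reads that JSON structure and reports nodes whose `Ready` condition is not `True`. The function name `not_ready_nodes` is hypothetical, and the sample data is illustrative only, not output captured from a deployed cluster.

```python
import json


def not_ready_nodes(node_list: dict) -> list:
    """Return names of nodes whose Ready condition is not "True"."""
    pending = []
    for node in node_list.get("items", []):
        conditions = node.get("status", {}).get("conditions", [])
        ready = any(
            c.get("type") == "Ready" and c.get("status") == "True"
            for c in conditions
        )
        if not ready:
            pending.append(node["metadata"]["name"])
    return pending


if __name__ == "__main__":
    # Illustrative sample in the shape of a Kubernetes NodeList.
    sample = json.loads("""
    {"items": [
      {"metadata": {"name": "master"},
       "status": {"conditions": [{"type": "Ready", "status": "True"}]}},
      {"metadata": {"name": "compute003"},
       "status": {"conditions": [{"type": "Ready", "status": "False"}]}}
    ]}
    """)
    print(not_ready_nodes(sample))
```

On a live cluster, the input could come from `json.loads` applied to the standard output of `kubectl get nodes -o json`.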

When the cluster is set up, you can install additional applications on the Kubernetes partition by using Helm and on the Slurm partition by using yum.

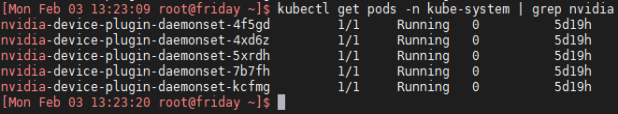

The following figures show validation that the services are running on a cluster deployed with this architecture:

Figure 7. Container runtime with accelerator support

Figure 8. SDN with Flannel/Calico

Figure 9. Flannel SDN running on an Ansible-deployed system

Figure 10. Helm chart configuration