Unleash the Power of NVMe over TCP with Enterprise SONiC by Dell Technologies

Mon, 27 Feb 2023 19:40:41 -0000

If you’re looking into NVMe/TCP as your new Storage Area Network (SAN) solution, then you already know that you can save on CAPEX and OPEX by using Ethernet instead of Fibre Channel. When selecting an Ethernet switch operating system, check out Enterprise SONiC Distribution by Dell Technologies, which offers a full feature set that covers all of your NVMe/TCP needs and more.

SONiC (Software for Open Networking in the Cloud) is an open-source network operating system based on Debian Linux. Enterprise SONiC Distribution by Dell Technologies builds on the open-source version by adding new features that have been hardened and validated for use in our customers’ environments, all of which is backed by Dell’s world-class support. Combine this with PowerStore or PowerMax storage, PowerSwitch networking, and PowerEdge hosts, and you’ve got a one-stop shop for your NVMe/TCP solution. To make deploying and managing the solution easier, Dell provides the industry’s first NVMe/TCP Centralized Discovery Controller – SmartFabric Storage Software (SFSS). For more about SFSS, see The NVMe/TCP Dating App!

To learn more about NVMe/TCP, see the NVMe, NVMe/TCP, and Dell SmartFabric Storage Software Overview - IP SAN Solution Primer.

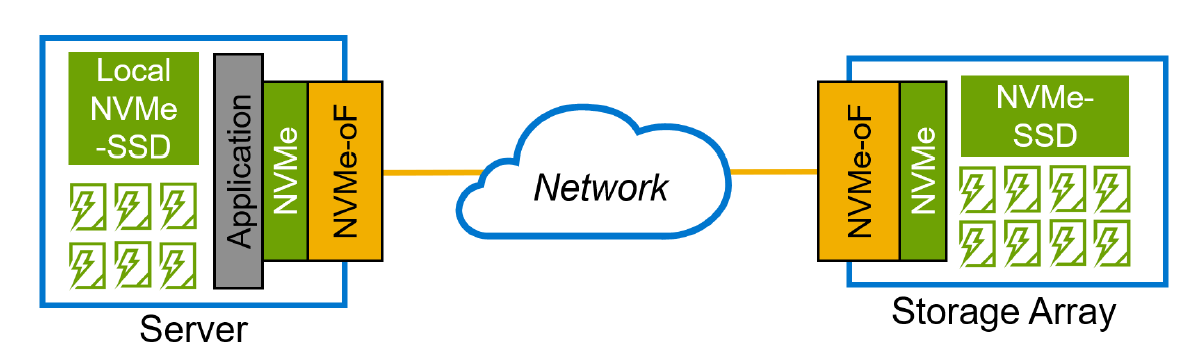

From the networking perspective, NVMe/TCP’s requirements are relatively simple, especially when compared with iSCSI, RoCE, FCoE, and even FC. The network only needs to do what it does best: forward packets from source to destination. The host and storage operating systems, such as ESXi, Linux, PowerStoreOS, and PowerMax, take care of the rest!

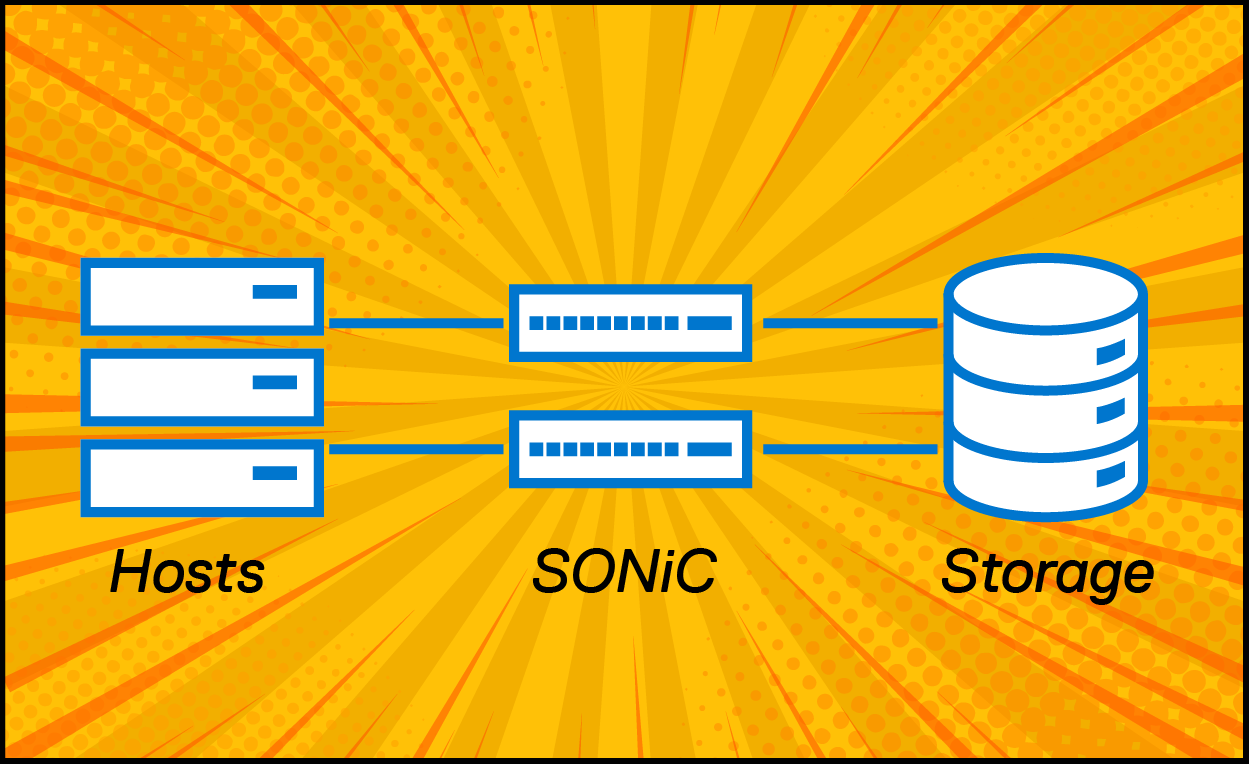

Figure 1: The endpoints do the NVMe/TCP processing, and the network just forwards IP traffic.

Enterprise SONiC Distribution by Dell Technologies is a highly scalable and customizable network operating system that can be used to build a storage network of any size, ranging from a simple two-switch setup to a large-scale spine/leaf fabric with thousands of endpoints. SONiC offers several features and functionalities that make it well suited for storage networking, including support for a variety of switching hardware, a modular design that enables administrators to add or remove features as needed, and a strong focus on network automation and programmability.

The SONiC CLI is very similar to that of other commonly used operating systems, meaning it is easy for administrators to learn and adopt. Here’s how simple the switch interface configuration is:

SAN Switch A:

    interface vlan1921
     description SAN_A
     mtu 9216
    !
    interface Eth1/1
     description NVMeTCP_Endpoint_Port_1
     mtu 9216
     speed 25000
     switchport trunk allowed vlan 1921
     no shutdown

SAN Switch B:

    interface vlan1922
     description SAN_B
     mtu 9216
    !
    interface Eth1/1
     description NVMeTCP_Endpoint_Port_2
     mtu 9216
     speed 25000
     switchport trunk allowed vlan 1922
     no shutdown
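Because the two switch configurations differ only in the VLAN ID and the descriptions, they lend themselves to templating. The following is a minimal Python sketch, not a Dell tool: the function name `san_switch_config` and its parameters are hypothetical, and the rendered lines simply mirror the example configuration above.

```python
def san_switch_config(vlan_id, san_name, port, port_description,
                      mtu=9216, speed=25000):
    """Render the example interface configuration for one SAN switch."""
    lines = [
        f"interface vlan{vlan_id}",
        f" description {san_name}",
        f" mtu {mtu}",
        "!",
        f"interface {port}",
        f" description {port_description}",
        f" mtu {mtu}",
        f" speed {speed}",
        f" switchport trunk allowed vlan {vlan_id}",
        " no shutdown",
    ]
    return "\n".join(lines)


# Values taken from the SAN A / SAN B example in this post.
SAN_A = san_switch_config(1921, "SAN_A", "Eth1/1", "NVMeTCP_Endpoint_Port_1")
SAN_B = san_switch_config(1922, "SAN_B", "Eth1/1", "NVMeTCP_Endpoint_Port_2")

print(SAN_A)
print()
print(SAN_B)
```

Keeping the VLAN ID as the single varying input makes it harder to accidentally trunk SAN A's VLAN on the SAN B switch.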

As NVMe/TCP implementations gain traction in data centers, monitoring the performance of the network will be important for network administrators to ensure they get the most from their investment. The good news is that monitoring solutions - like the one offered by Augtera, a Dell partner - make it easy to fold the NVMe/TCP fabric into standard network management solutions. Read more about how Augtera provides proactive detection and isolation of NVMe/TCP congestion using Machine Learning here.

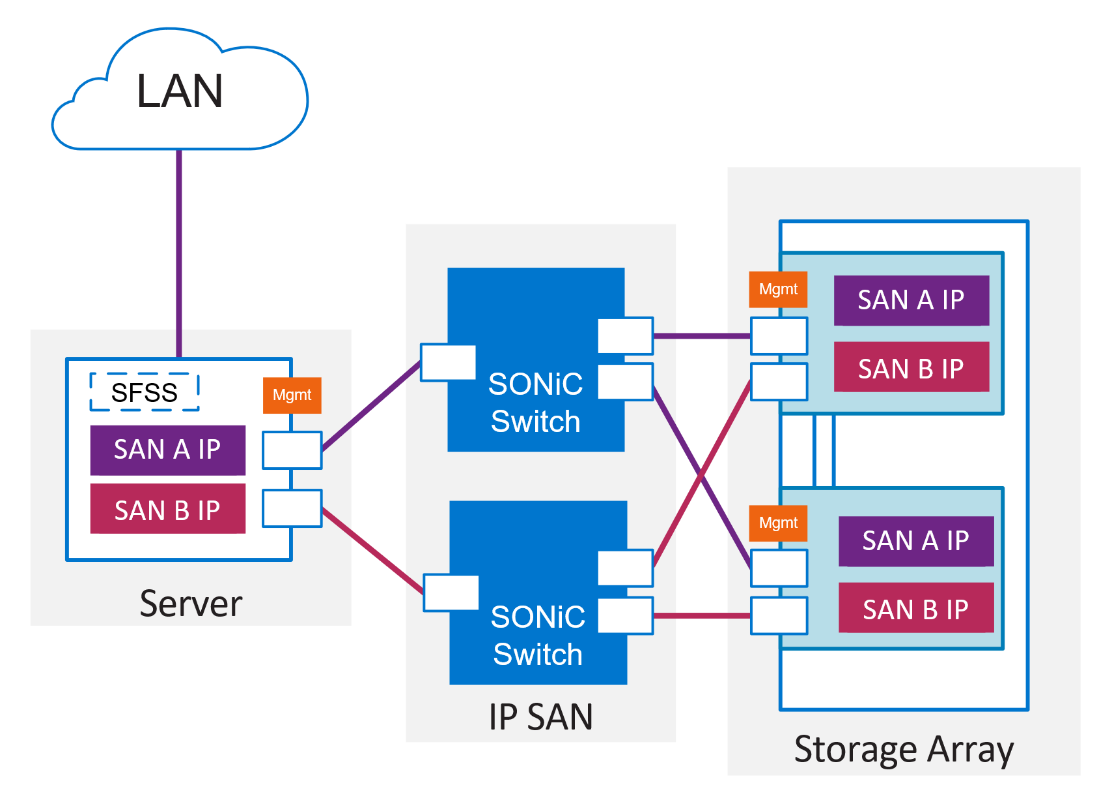

We made quick work of configuring SONiC on Dell PowerSwitch S5248F-ON in our Proof-of-Concept lab. For resiliency and security, we used two air-gapped IP SAN Switches, one for SAN A and one for SAN B, each with a Layer 2 network dedicated to NVMe/TCP traffic. Using dedicated switches and ports for NVMe/TCP removes the risk of contention with LAN traffic. The dual SAN topology creates an active/active resilient solution. Furthermore, this topology provides additional security by constraining NVMe/TCP traffic within Layer 2 networks, resulting in Layer 3 isolation from the rest of the network.
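To make the resiliency claim concrete, here is a small Python sketch that checks whether a set of paths survives the loss of any one switch. The inventory and the port and switch names are hypothetical and are not taken from our lab.

```python
# Hypothetical inventory: (host port, switch, storage port).
DUAL_SAN_PATHS = [
    ("host1-p1", "san-a", "array-p1"),
    ("host1-p2", "san-b", "array-p2"),
]

SINGLE_SWITCH_PATHS = [
    ("host1-p1", "san-a", "array-p1"),
    ("host1-p2", "san-a", "array-p2"),
]


def surviving_paths(paths, failed_switch):
    """Return the paths that do not traverse the failed switch."""
    return [p for p in paths if p[1] != failed_switch]


def survives_any_single_switch_failure(paths):
    """True if at least one path remains after any one switch fails."""
    switches = {switch for _, switch, _ in paths}
    if not switches:
        return False
    return all(surviving_paths(paths, sw) for sw in switches)


print(survives_any_single_switch_failure(DUAL_SAN_PATHS))       # True
print(survives_any_single_switch_failure(SINGLE_SWITCH_PATHS))  # False
```

With one path per SAN, losing either switch still leaves an active path, which is the property the dual SAN topology is meant to provide.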

Figure 2: Proof of Concept Topology

Enterprise SONiC Distribution by Dell Technologies is used by some of the largest cloud service providers. It is a practical choice for customers who are looking for improved performance, flexibility, reduced cost, and ease of deployment. The combination of Enterprise SONiC with NVMe/TCP makes an attractive option for organizations that need to build large and complex storage networks to meet the demands of their data-intensive workloads.

Contact your sales representative to learn more about how NVMe/TCP with SONiC can benefit your organization. Our experts can provide you with the information you need to make an informed decision and guide you through the implementation process.

Resources

To explore more of SONiC’s features, check out the Enterprise SONiC Spec Sheet.

To learn more about Enterprise SONiC Distribution by Dell Technologies, see Enterprise SONiC Networking Solutions.

Special thanks to Claire O'Keeffe, Zain Raza, and Aarti Utreja!

Related Blog Posts

Build your own NVMe/TCP Sandbox

Fri, 18 Aug 2023 13:29:07 -0000

Whether your summer is at an end in the North, or just getting started in the South, there is always time to play in a sandbox - an NVMe/TCP SANdbox that is…

Here are four ways to try NVMe/TCP:

- Hardware: Build a physical lab.

- No hardware: Contrary to the idiom, you can “build sandcastles in the sky” using the SANdbox GitHub repo for the AWS cloud platform!

- Hardware, but no setup: Contact Dell or a Dell Partner for access to our Hands-on Lab, which is running ESXi 8.x and PowerStore 3.x with SFSS.

- No setup at all: You can experience the deployment process by using the Dell Technologies Interactive Demo.

Build a physical lab

You can leave the bucket and spade behind; here’s what you’ll need:

- A server running Linux or ESXi. Choose a version listed in the NVMe/TCP Host/Storage Interoperability Matrix.

- A switch or two. Interoperable switches include, but are not limited to, those listed in the NVMe/TCP Switch Interoperability Matrix. Note the KB article for non-validated switches.

- A storage array from the NVMe/TCP Host/Storage Interoperability Matrix.

- SmartFabric Storage Software (optional, good for large solutions).

  - See The NVMe/TCP Dating App blog.

  - This software is free to try.

  - See the SFSS Interoperability Matrix for compatible OSs.

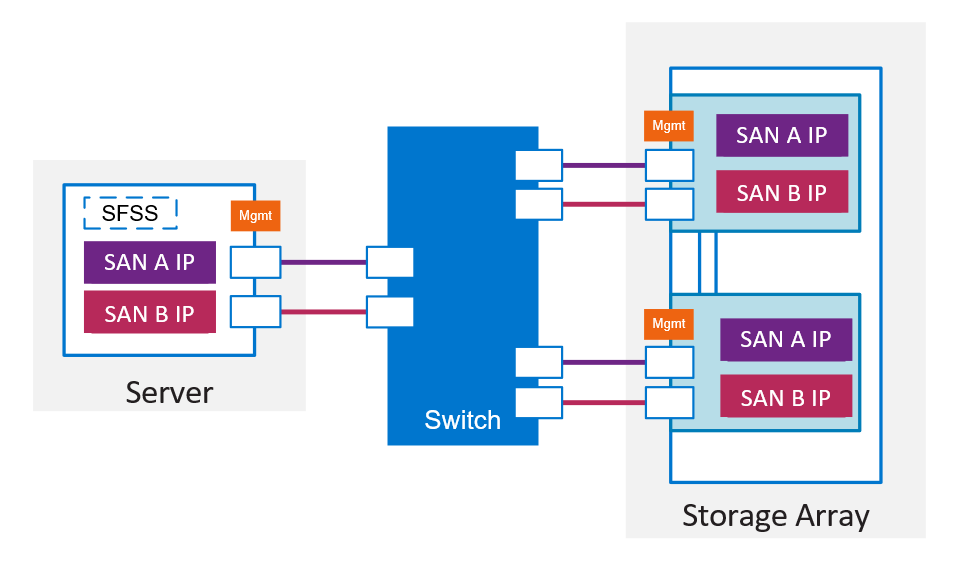

Figure 1 Minimum setup for PowerStore NVMe/TCP with optional SmartFabric Storage Software

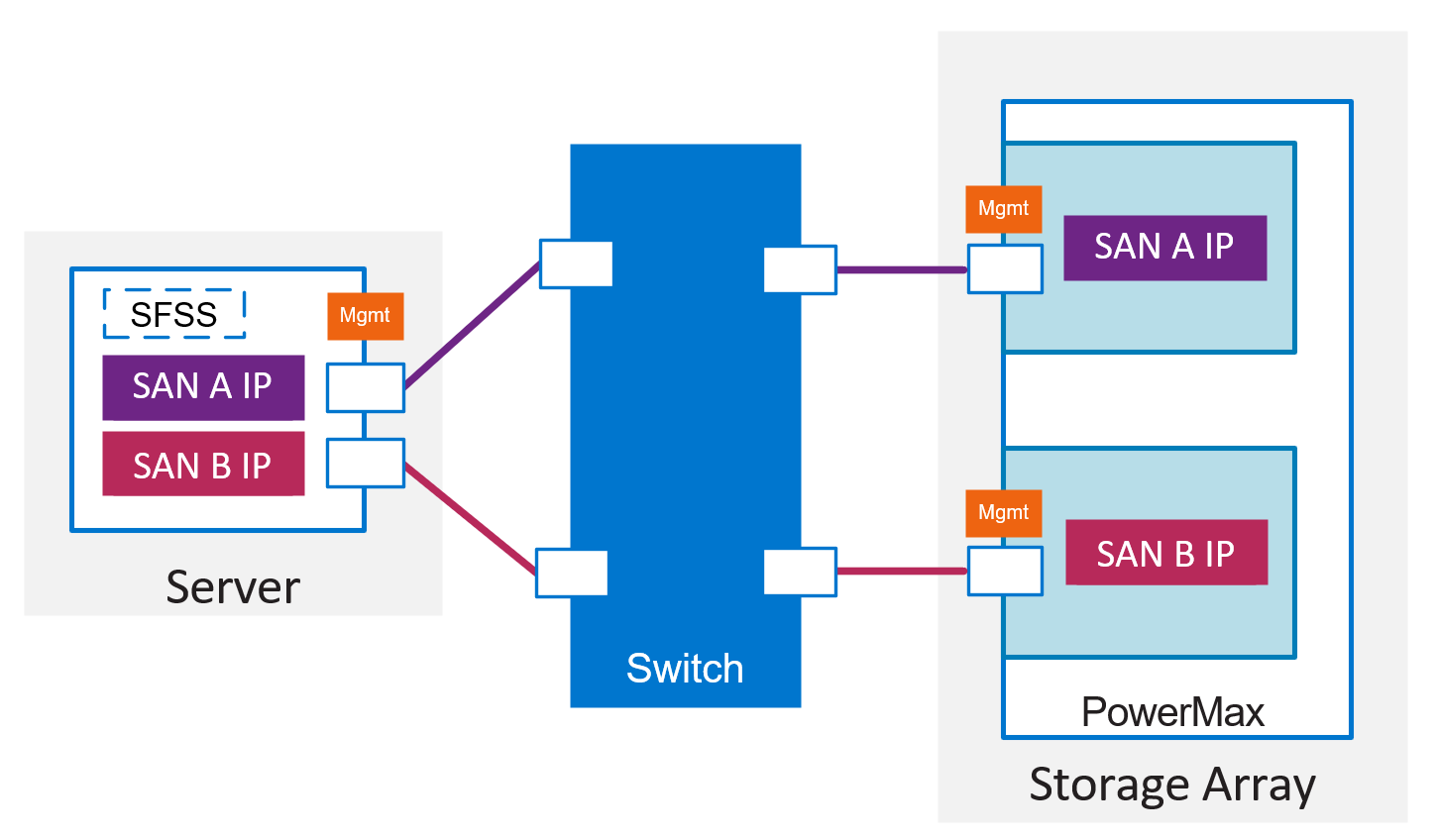

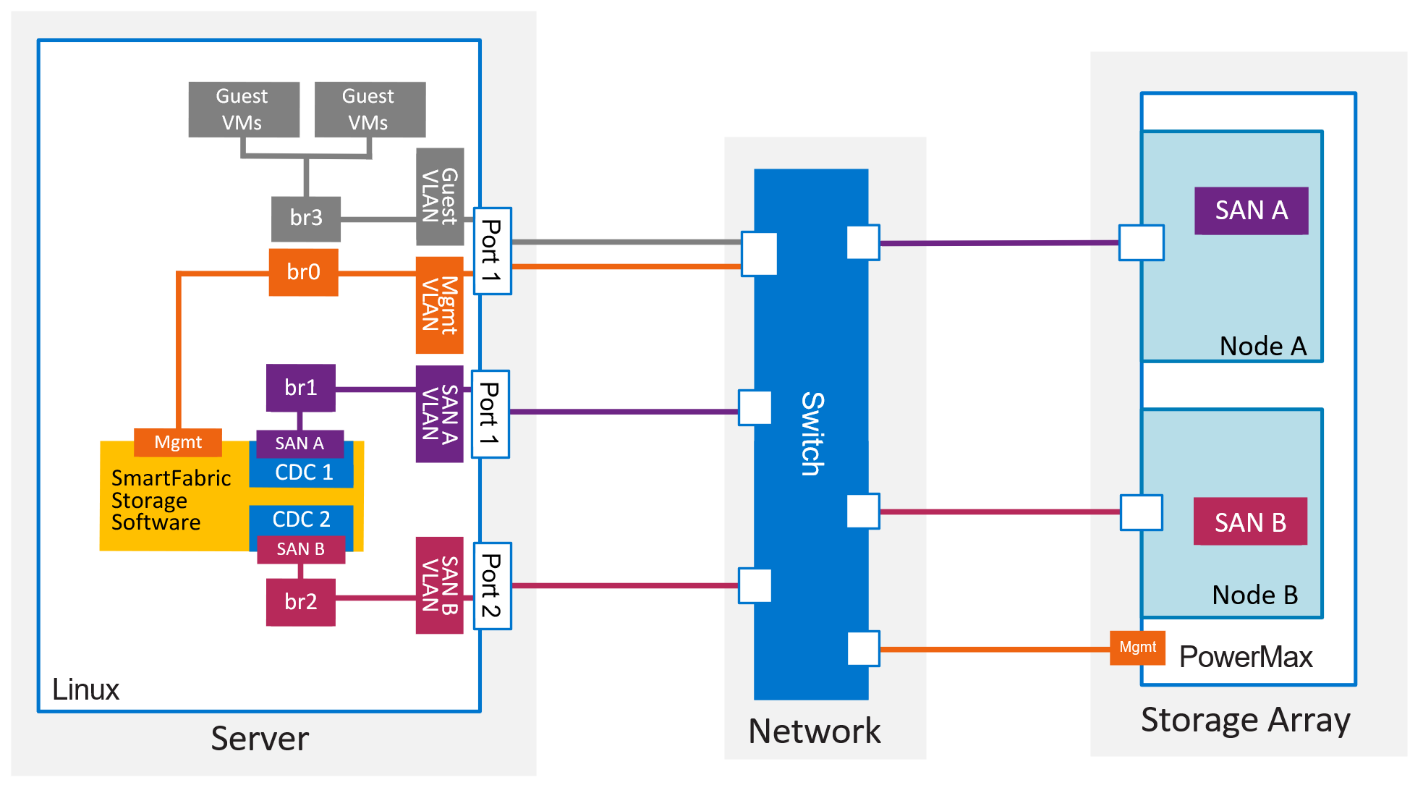

Figure 2 Minimum setup for PowerMax NVMe/TCP with optional SmartFabric Storage Software

Your lab setup will depend on what you want to learn from it. Below are four common reasons for building a sandbox. You can deploy according to best practices or minimise configuration depending on your use case and components of most interest to you.

Attribute | How to deploy using best practices | How to configure and verify connectivity | Performance | Behaviour during failure scenarios |

Bandwidth | Minimum of 25Gbps end to end | 10Gbps will suffice. | Minimum of 25Gbps. | 10Gbps will suffice. |

Physical architecture | Resiliency or redundancy across the solution. SFSS shouldn’t be installed on a host it controls. | One of each device will suffice. | One of each device will suffice. | Two of each component is needed. |

Number of switches | Two | | Two, if switch performance is part of the test. Otherwise, one will suffice. | Two, because switch failure/reboot should be tested. |

Port Usage 1 | Dedicated ports for SAN traffic. Two on the host, two on PowerMax, or four on PowerStore. | A single SAN would achieve this purpose, i.e. one VLAN. | Ensure SAN traffic is not contending with LAN traffic. | Emulate your production design. |

Number of SmartFabric Storage Software VMs (Optional) | Two, with a Centralized Discovery Controller (CDC) each. It’s free to download and will have full functionality for 90 days. | Two, with a CDC instance in each, is recommended. It’s free to download and will have full functionality for 90 days. If there are limited resources, one will suffice. | One, with two CDC instances, will suffice since SFSS is not in the data plane. | Try using two, with a CDC in each. It’s free to download and will have full functionality for 90 days. |

1. On ESXi, configuring teaming on uplinks won’t enable failover of paths from one vmhba to the other.

The high-level steps are:

- Configure the switch(es) with two IP SAN VLANs. Set flow control to transmit off/receive off. IPv6 solutions should disable MLD snooping.

- Configure the virtual network interfaces on the host and storage array.

- Connect the host to the subsystem.

  - To use a centralized discovery controller, install SFSS using the Deployment Guide.

  - Or, for direct discovery, connect to the storage subsystems directly from the host using the instructions in the Host Connectivity Guides.

- Provision storage and deploy guest VMs (if not bare metal Linux).

- Complete your selected tests, take the rest of the day off, and head to the beach!
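For the direct discovery option on a Linux host, the connection step is commonly done with the `nvme-cli` utility. The sketch below only builds and prints the commands rather than running them. It assumes `nvme-cli` is installed, that the array's discovery service listens on the standard NVMe/TCP discovery port 8009, and it uses hypothetical documentation addresses (RFC 5737) in place of your array's SAN A and SAN B interfaces. The Host Connectivity Guides remain the authoritative procedure.

```python
import shlex

DISCOVERY_PORT = 8009  # standard NVMe/TCP discovery service port


def discover_cmd(traddr, port=DISCOVERY_PORT):
    """Command that lists the subsystems a discovery controller reports."""
    return ["nvme", "discover", "-t", "tcp", "-a", traddr, "-s", str(port)]


def connect_all_cmd(traddr, port=DISCOVERY_PORT):
    """Command that connects to every subsystem in the discovery log."""
    return ["nvme", "connect-all", "-t", "tcp", "-a", traddr, "-s", str(port)]


# Hypothetical storage addresses, one per SAN (RFC 5737 documentation ranges).
SAN_TARGETS = {"SAN_A": "192.0.2.10", "SAN_B": "198.51.100.10"}

for san, addr in SAN_TARGETS.items():
    print(f"# {san}")
    print(shlex.join(discover_cmd(addr)))
    print(shlex.join(connect_all_cmd(addr)))
```

Running the discovery against both SANs is what gives the host a path through each switch. On ESXi, the equivalent steps are performed with VMware's own tooling instead.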

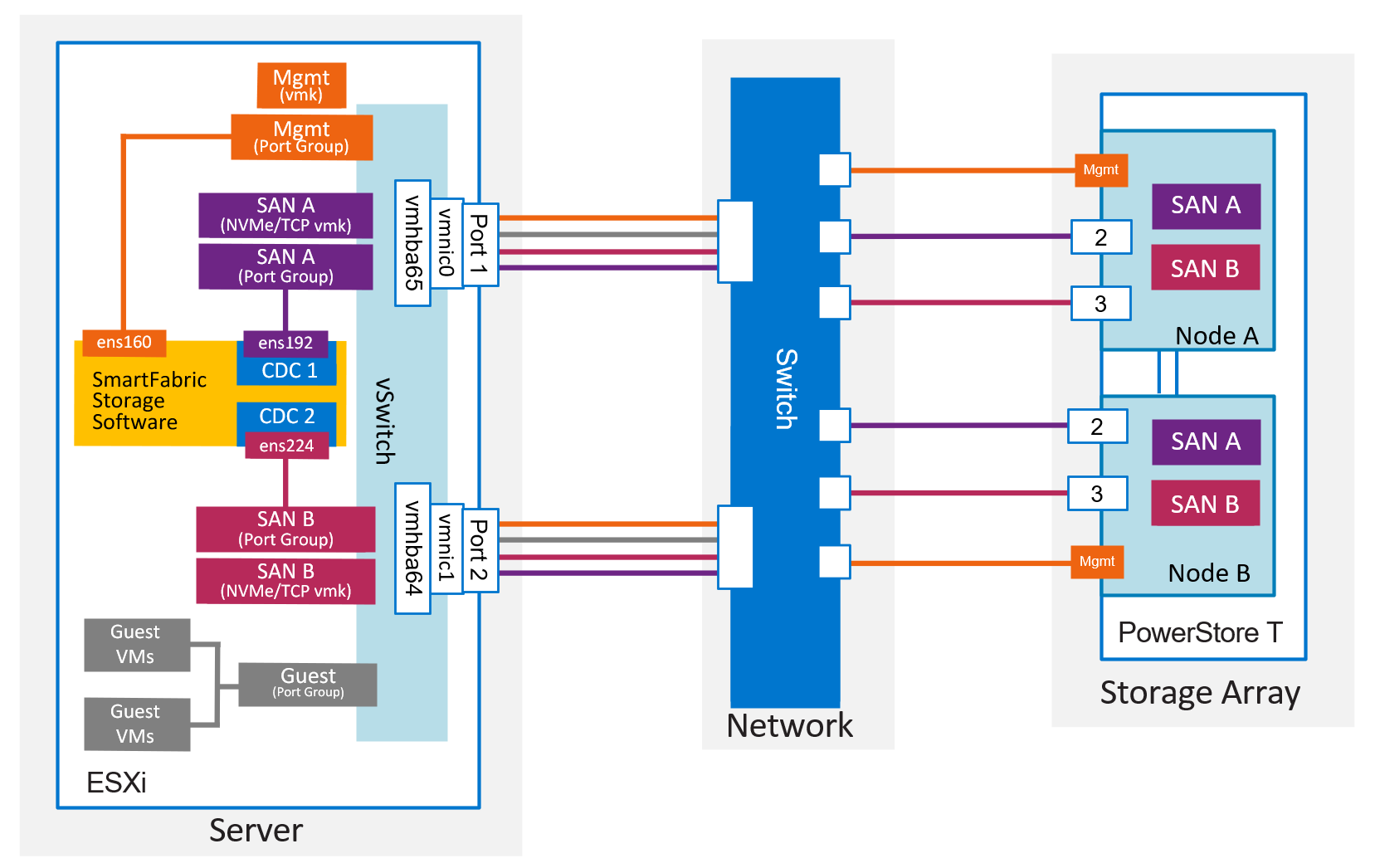

Figure 3 Detailed view of a lab setup for NVMe/TCP with ESXi and PowerStore T

Figure 4 Detailed view of a lab setup for NVMe/TCP with Linux and PowerMax

For the configuration steps to deploy an NVMe/TCP lab with SFSS, see the SmartFabric Storage Software Deployment Guide.

For the steps to deploy NVMe/TCP on ESXi and Linux without SFSS, see the Host Connectivity Guides.

For the steps to deploy NVMe/TCP on PowerStore without SFSS, using direct discovery only, see the PowerStore Host Configuration Guide.

For a white paper on NVMe/TCP, see the NVMe, NVMe/TCP, and Dell SmartFabric Storage Software Overview - IP SAN Solution Primer.

Build a virtual lab in AWS

This solution will help you become comfortable with SAN automation without the need for hardware. Primarily focused on SmartFabric Storage Software, SANdbox is a GitHub repo that provides access to the resources you will need when automating your NVMe IP-Based SAN.

It includes:

- Links to relevant documentation

- Centralized Discovery Controller (CDC) downloads

- Sample scripts that automate configuration steps

- Instructions for creating a virtual lab in AWS

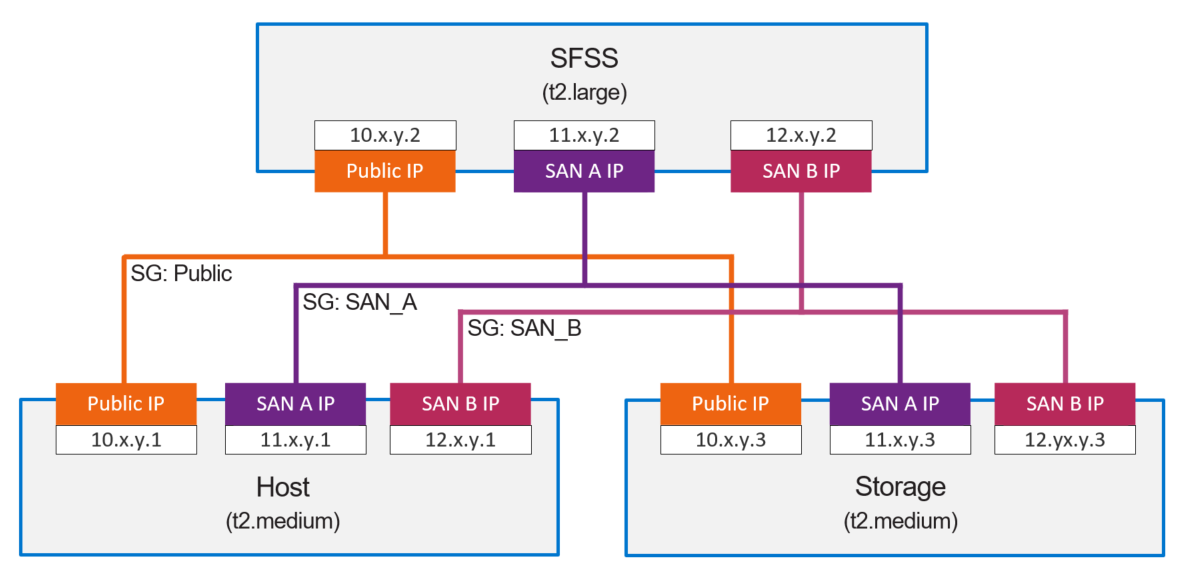

Figure 5 SANdbox VPC

Set up a free AWS account if you don’t already have one. Then follow the instructions on the BrassTacks blog, which provides details on how to configure this lab option:

- Let’s play in an AWS based NVMe/TCP SANdbox!

- Let’s play in an AWS based SANdbox – Part 2 Setting up your AWS VPC and networks

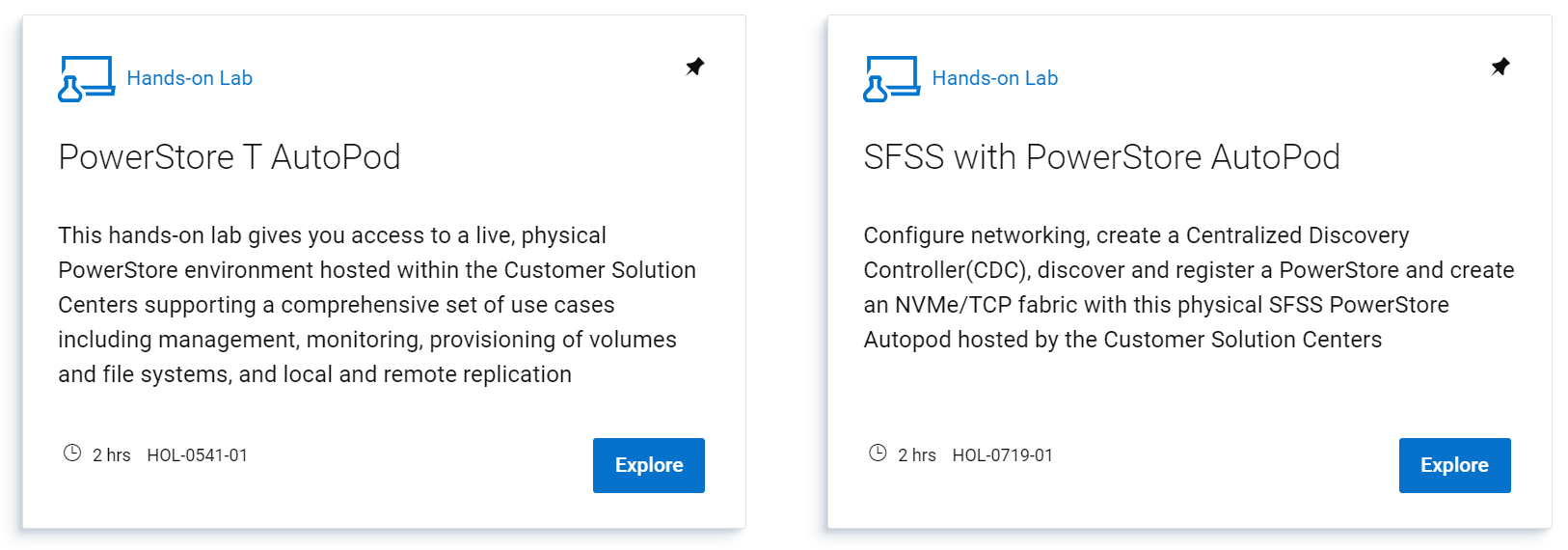

Hands on Labs

The following hands on labs are available in Dell’s Demo Center:

Interactive Demos

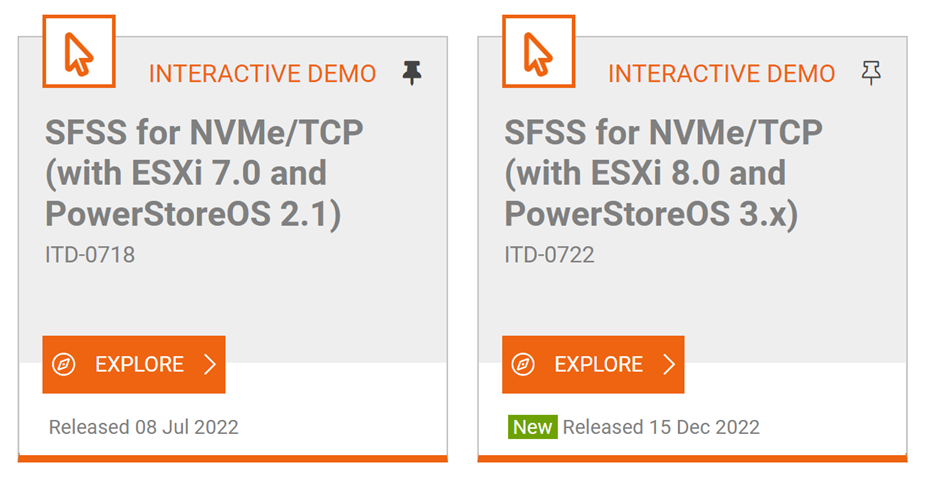

Experience the configuration steps by going to the Dell Demo Center’s SmartFabric Storage Software Interactive Demo. You can do all of the modules or select the ones that are most interesting to you.

- SFSS for NVMe/TCP (with ESXi 8.0 and PowerStoreOS 3.x)

- SFSS for NVMe/TCP (with ESXi 7.0 and PowerStoreOS 2.1.x)

Figure 6 Interactive Demos in Dell's Demo Center

We hope that this is a helpful step in your journey towards an NVMe/TCP solution.

Resources

NVMe/TCP

- NVMe IP SAN Solution Brief

- NVMe IP SAN on dell.com

- PowerStore and PowerStore Networking Documentation

- Host connectivity guides

SmartFabric Storage Software

- SmartFabric Storage Software on InfoHub (Deployment Guide, White Paper, Videos, Support & Interoperability Matrix)

- SmartFabric Storage Software on Dell Support (User Guide, Troubleshooting Guide, API Guide, Release Notes, Security Configuration Guide)

- Interactive Demo

- SFSS on Dell Technologies (Solution Brief, Top 5 Reasons, Spec Sheet)

The NVMe/TCP Dating App!

Fri, 18 Aug 2023 13:31:38 -0000

Did you know that online dating started in 1959 using a mainframe computer with punch cards? Of course, it has come a long way since then, and now, even Dell Technologies has created a dating app. But it’s not for lonely hearts; it’s for NVMe/TCP servers and storage!

Over the last few years, a new storage networking technology has emerged: NVMe/TCP. This new specification gives us a Fibre Channel-like experience over an IP network. But what happens when servers and storage (aka endpoints) come online and wish to meet like-minded NVMe/TCP individuals in their area? Alone and lost in the Ether(net), they wait to be set up with a friend of a friend who, hopefully, speaks the same language, is a good listener, and maybe even has a GSOH? Or they could just register with Dell SmartFabric Storage Software!

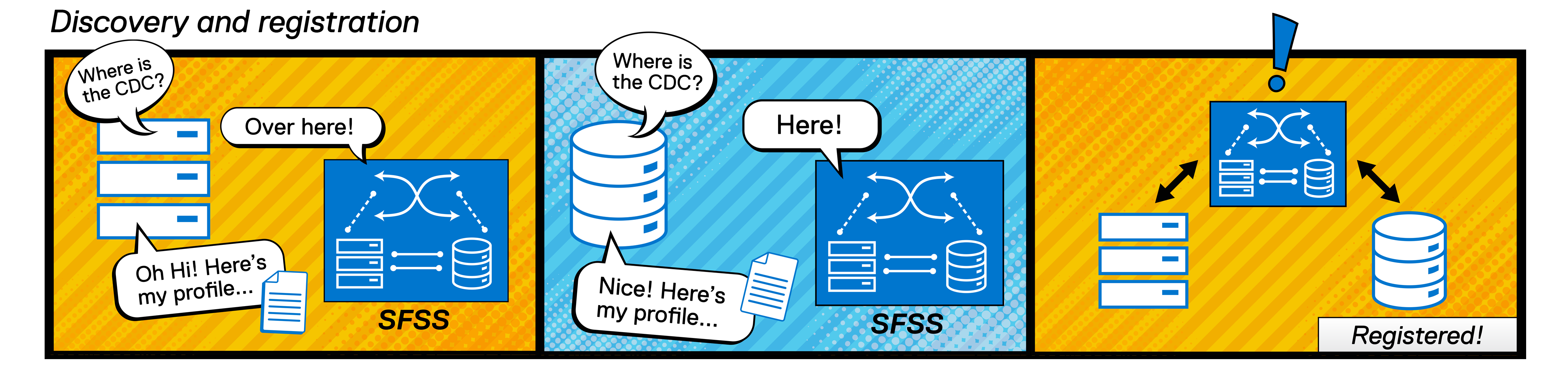

NVMe/TCP endpoints register with the Centralized Discovery Controllers (CDCs) in the SFSS virtual machine. Here’s how it will work:

Compatible endpoints look for a CDC by sending out multicast DNS queries. Once the CDCs in SFSS have responded, the endpoints register by sending their name and dating profile, or “Log Page.” For endpoints that operate according to specifications TP-8009 and TP-8010, the discovery and registration process will be fully automated.
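To give a feel for what such a query looks like on the wire, here is a minimal Python sketch that builds (but does not send) a multicast DNS PTR question. The service name `_nvme-disc._tcp.local` is our understanding of the DNS-SD service type used for NVMe discovery controllers and should be treated as an assumption here, as should the helper names. The multicast group and port are the standard mDNS values.

```python
import struct

MDNS_GROUP = "224.0.0.251"  # standard IPv4 mDNS multicast group
MDNS_PORT = 5353            # standard mDNS UDP port
SERVICE = "_nvme-disc._tcp.local"  # assumed service name, see lead-in


def encode_name(name):
    """Encode a dotted name as length-prefixed DNS labels."""
    out = b""
    for label in name.split("."):
        raw = label.encode("ascii")
        out += bytes([len(raw)]) + raw
    return out + b"\x00"  # root label terminates the name


def build_ptr_query(service=SERVICE):
    """Build a DNS message containing one PTR question for the service."""
    # Header fields: id, flags, qdcount, ancount, nscount, arcount.
    header = struct.pack("!6H", 0, 0, 1, 0, 0, 0)
    # Question: name, then QTYPE 12 (PTR) and QCLASS 1 (IN).
    question = encode_name(service) + struct.pack("!2H", 12, 1)
    return header + question


query = build_ptr_query()
print(len(query), query.hex())
```

An endpoint would send these bytes in a UDP datagram to `MDNS_GROUP` on `MDNS_PORT` and wait for a CDC to answer. Real endpoints rely on their operating system's mDNS support rather than hand-built packets.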

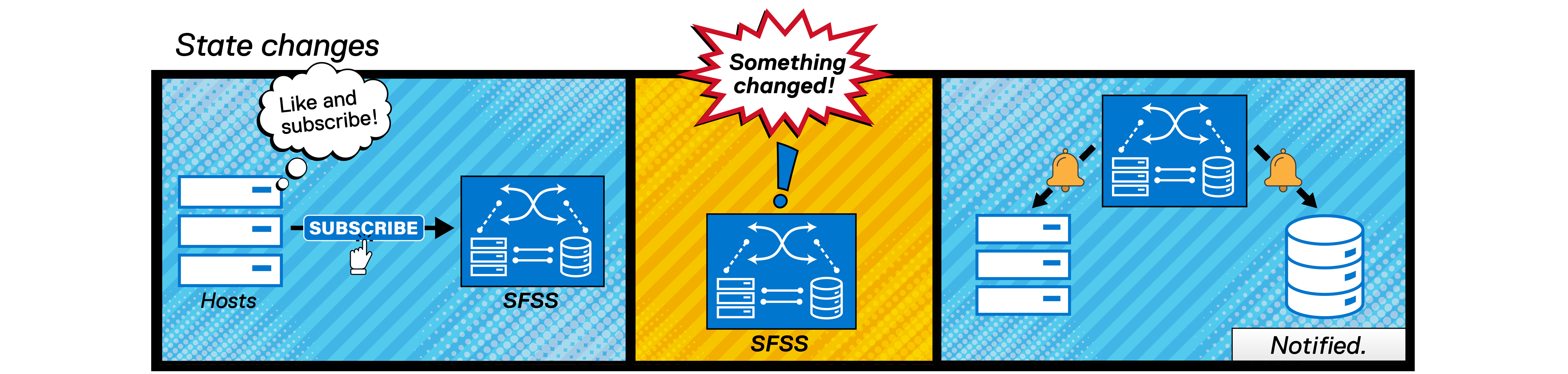

Endpoints subscribe to the SFSS’s notification service by sending an Asynchronous Event Request (AER). When a change occurs, such as a new storage subsystem, or an endpoint’s relationship status changes to “It’s complicated,” the registered endpoints are notified. This is called Asynchronous Event Notification (AEN) and is like Fibre Channel’s Registered State Change Notification (RSCN) service.
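The subscribe-then-notify pattern can be illustrated with a toy Python model. This is not the SFSS API or the NVMe wire protocol: the class and method names are hypothetical, and the real exchange (where a host typically re-reads the discovery log page after being notified) is simplified away.

```python
class ToyDiscoveryController:
    """Toy stand-in for a CDC's notification service."""

    def __init__(self):
        self._subscribers = {}   # endpoint name -> callback
        self.log_page = set()    # names of registered subsystems

    def async_event_request(self, endpoint, callback):
        """An endpoint asks to be told about future changes."""
        self._subscribers[endpoint] = callback

    def register(self, subsystem):
        """A new subsystem registers, so every subscriber is notified."""
        self.log_page.add(subsystem)
        self._notify(f"discovery log changed: {subsystem} added")

    def _notify(self, event):
        for callback in self._subscribers.values():
            callback(event)


received = []
cdc = ToyDiscoveryController()
cdc.async_event_request("host1", received.append)
cdc.register("array1")
print(received)
```

The point of the pattern is that hosts do not need to poll: they learn about a new subsystem only when something actually changes.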

Next, we need a “match-maker.” The SFSS administrator will add hosts and storage to zones so that they reach out to each other directly.

The hosts are now able to connect and access the storage over high-speed Ethernet.

Here’s where it gets a bit awkward…

If, like me, you come from an application networking background, you’re used to setting up a network that gets a packet from A to B. However, with storage networking, we need a network that gets packets from A1 and A2, to B1, B2, B3, and B4. “Multi-pathing” is key! Storage networking is rather promiscuous compared to application networking! And, if you’re from a storage networking background, you might be thinking, “But what about single-initiator and single-target zoning?” Well, that’s no longer best practice either. Once zoning is configured, hosts will connect with all accessible subsystems in the zone for optimal resiliency.
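A short Python sketch shows how zone membership turns into connections. The zone names and the split of ports between zones are hypothetical: we assume one zone per SAN, with host port A1 and storage ports B1 and B2 on one SAN, and A2, B3, and B4 on the other.

```python
from itertools import product

# Hypothetical zones, one per SAN (an assumption for illustration).
ZONES = {
    "zone_san_a": {"hosts": ["A1"], "subsystems": ["B1", "B2"]},
    "zone_san_b": {"hosts": ["A2"], "subsystems": ["B3", "B4"]},
}


def allowed_connections(zones):
    """Each host connects to every subsystem that shares a zone with it."""
    pairs = set()
    for members in zones.values():
        pairs.update(product(members["hosts"], members["subsystems"]))
    return sorted(pairs)


for host, subsystem in allowed_connections(ZONES):
    print(f"{host} -> {subsystem}")
```

Under these assumptions the host ends up with four active paths, two per SAN, instead of the single A-to-B path an application network would need.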

What does the network look like?

The people who designed NVMe/TCP took the lessons they learned from Fibre Channel and applied them wisely. In FC, the convention is to deploy switches that will only bridge traffic between hosts and storage. Storage networks favor resiliency over redundancy, creating multiple active paths instead of creating idle paths ready to take over in the event of a failure.

We cover network planning in detail in the SmartFabric Storage Software Deployment Guide, but here are some highlights.

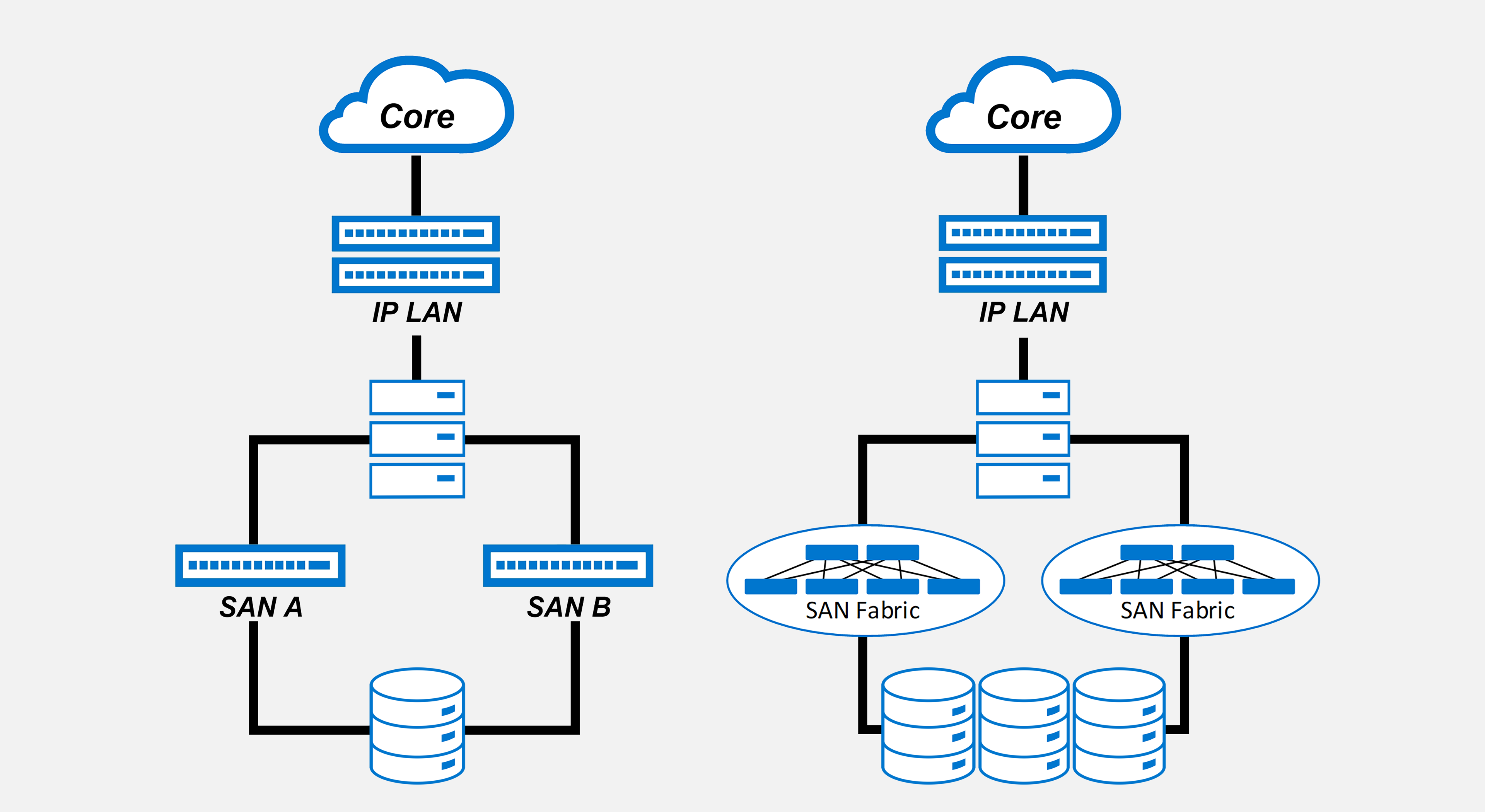

Our favorite topology is the dual-SAN topology with dedicated, air-gapped SAN switches. On the left is a small-scale version, with isolated SAN switches connecting hosts directly to the storage subsystem. On the right is a large-scale version with multiple arrays, an entire switch fabric for SAN A, and another separate fabric for SAN B.

Because Dell SmartFabric Services automates 99% of network deployment, storage engineers can deploy large switch fabrics without years of training and experience. Dell OpenManage Network Integration software provides a single portal for administering multiple SFSS and SFS instances.

It’s possible to use the same switches for application and NVMe/TCP storage traffic (we call this the converged topology), but we must plan carefully to prevent congestion spreading and incast. After all, we don’t want anything to get in the way of true love!
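Incast happens when several senders converge on one receiving port at the same time. As a rough planning aid, the sketch below computes how far the offered load could exceed the receiver's link speed. The function name and the port speeds are illustrative assumptions, not measurements.

```python
def incast_ratio(sender_gbps, receiver_gbps):
    """Worst-case offered load divided by the receiver's link speed."""
    return sum(sender_gbps) / receiver_gbps


# Illustrative case: four 25 Gbps storage ports answering one 25 Gbps host port.
ratio = incast_ratio([25, 25, 25, 25], 25)
print(ratio)  # 4.0
```

A ratio well above 1 means the switch must buffer or drop the excess, so it is worth accounting for when application and storage traffic share the same switches.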

The only way is up for NVMe/TCP. Ethernet speeds are increasing and becoming more affordable, and application developers will focus on software that can take full advantage of easier access to large amounts of data. We will see Dell and other vendors including TP-8009 and TP-8010 functionality in their products so that they may take part in the revolution.

For more help with planning your network for SFSS for NVMe/TCP, check out the Network Planning section in the SmartFabric Storage Software Deployment Guide.

Keep an eye out for new compatible endpoints appearing on the Dell Networking Support & Interoperability Matrix.

If you’d like to try configuring SFSS for yourself, take our Interactive Demo for a test drive!

Happy Valentine’s Day 2022!

Resources

- SmartFabric Storage Software on InfoHub (Deployment Guide, White Paper, Videos, Support & Interoperability Matrix)

- SmartFabric Storage Software on Dell Support (User Guide, Troubleshooting Guide, API Guide, Release Notes, Security Configuration Guide)

- Interactive Demo

- SFSS on Dell Technologies (Solution Brief, Top 5 Reasons, Spec Sheet)

Special thanks to Erik Smith, Heather Morgan, and Alex Loy.