Support Predictive Maintenance Analytics Workloads with Dell AI Starter Kit

Wed, 02 Aug 2023 20:47:04 -0000

Summary

Deep Learning (DL) techniques have enabled great successes in many fields, such as computer vision, natural language processing (NLP), autonomous driving, and predictive maintenance (PdM), by enabling a model to learn from existing data and then make corresponding predictions. This is the heart of PdM, a technique designed to help determine the condition of in-service equipment in order to estimate when maintenance should be performed to avoid unplanned outages, increase worker safety, and reduce downtime.
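The idea of learning from existing data to estimate when maintenance is due can be sketched in a few lines. The example below is a deliberately simple stand-in, not the deep learning approach discussed in this document: it fits a straight line to a sensor signal and extrapolates to a failure threshold. The vibration readings, the threshold value, and the function names are all hypothetical and chosen only for illustration.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def hours_until_threshold(hours, readings, threshold):
    """Extrapolate the fitted trend to the failure threshold.

    Returns the estimated remaining hours after the last reading, or None
    if the signal is not trending upward toward the threshold.
    """
    slope, intercept = fit_line(hours, readings)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - hours[-1])


# Hypothetical vibration readings (mm/s) sampled every 100 operating hours.
hours = [0, 100, 200, 300, 400]
vibration = [2.0, 2.5, 3.0, 3.5, 4.0]

# Hypothetical level at which the equipment should be serviced.
remaining = hours_until_threshold(hours, vibration, threshold=7.0)
print(f"Estimated hours until maintenance is due: {remaining:.0f}")
```

A DL model would replace the straight-line fit with a learned function of many sensor channels, but the workflow is the same: train on historical data, then predict how long the equipment can run before service.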

The success of DL in PdM is due to a combination of improved algorithms, access to larger datasets, and increased computational power. The choice and design of the system components, carefully selected and tuned for DL use-cases, can have a big impact on the speed, accuracy, and business value of implementing AI techniques for PdM.

In such a complex environment, it is critical that organizations be able to rely on vendors that they trust. Over the last few years, Dell and NVIDIA have established a strong partnership to help organizations fast-track their AI initiatives. This document demonstrates how Dell PowerScale all-flash scale-out NAS, Dell PowerEdge servers with NVIDIA GPUs, and Dell PowerSwitch networking can be used to provide an excellent environment for small teams performing data science, AI, and deep learning for PdM. The kit described here is intended to be a quick starter architecture for experimenting with and tuning models, for small production environments, or for single solutions within larger production data centers.

Dell PowerScale

An efficient data science team often requires the ability to share massive amounts of data while providing high performance, reliability, and seamless access from multiple operating systems. Dell PowerScale scale-out NAS (network attached storage) provides this critical capability. With its ability to scale capacity and performance easily, PowerScale allows data science teams to collaborate effectively and to share data across different applications and systems. Its flexible deployment options, including on-premises, hybrid cloud, and multi-cloud, provide the necessary agility to adapt to changing business needs and to future proof the deployment.

PowerScale also offers advanced security features, such as file system and volume-level encryption and secure access zones, to ensure the confidentiality and integrity of sensitive data. These features make PowerScale an essential component in any data science team's toolkit, enabling them to efficiently manage, analyze, and derive insights from large amounts of data.

PowerScale all-flash storage platforms, powered by the OneFS operating system, provide a powerful yet simple scale-out storage architecture to speed up access to massive amounts of unstructured data while dramatically reducing cost and complexity. They deliver extreme performance and efficiency for your most demanding unstructured data applications and workloads.

Support any workload

Choose from all-flash, hybrid and archive nodes for the best fit for your data. Run multiple data protocols with simultaneous access to avoid storage silos. Deploy as an on-prem NAS appliance, in APEX, or in the Cloud.

Scalable data management

Scale up, down, or out non-disruptively to tens of petabytes. Manage your storage infrastructure with a single UI with CloudIQ. Manage your datasets across your enterprise.

Protect your data

PowerScale provides built-in availability, redundancy, security, data protection, and replication with OneFS. It offers protection from cyber attacks with integrated ransomware defense and smart AirGap, and is designed for 6x9s availability.
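To put the "6x9s" design target in perspective, the short calculation below converts an availability expressed as a number of nines into the maximum downtime per year that it allows. This is plain arithmetic, not a Dell tool; the function name is hypothetical and a year is assumed to be 365.25 days.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # assumption: average year length


def max_downtime_seconds(nines: int) -> float:
    """Maximum yearly downtime allowed by an availability of N nines."""
    unavailability = 10 ** (-nines)  # e.g. 6 nines -> 0.000001
    return unavailability * SECONDS_PER_YEAR


for nines in (3, 4, 5, 6):
    print(f"{nines} nines: {max_downtime_seconds(nines):10.1f} s/year")
```

Under these assumptions, six nines (99.9999%) corresponds to roughly half a minute of downtime per year.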

Dell PowerEdge

The latest generation of PowerEdge servers enhances both business agility and time to market, and can support transformational workloads such as databases and analytics, virtualization, software-defined storage, virtual desktop infrastructure (VDI), containerization, HPC, AI, and ML. Dell PowerEdge systems can draw from NVIDIA’s full AI stack — including GPUs, DPUs, and the NVIDIA AI Enterprise software suite — providing enterprises the foundation required for a wide range of AI applications, including speech recognition, cybersecurity, recommendation systems, and a growing number of groundbreaking language-based services.

Performance and scale

Next-generation PowerEdge servers deliver improved performance, including for AI inferencing. You can order PowerEdge systems with NVIDIA BlueField data processing units (DPUs), which provide additional offload, acceleration, and workload isolation capabilities and help improve power efficiency in private, hybrid, and multicloud deployments.

Designed for sustainability

Dell Smart Flow design is a new feature within the Dell Smart Cooling suite that increases airflow and reduces fan power by up to 52% as compared to previous generation servers. The Smart Flow design supports greater server performance and requires less power to cool systems for more efficient data centers. An array of air movers is available from turnkey to high-end to best meet server cooling needs.

Reliability and security

Dell’s cyber-resilient architecture is a layered security approach, consisting of a web of security solution elements designed to protect, detect, and recover from threats. We enhance supply chain security with the Secured Component Verification (SCV) offering. SCV allows customers to verify cryptographically that the components set in the factory match what was delivered to them.

PowerEdge servers help accelerate Zero Trust adoption within organizations' IT environments. The devices constantly verify access by assuming that every user and device is a potential threat. At the hardware level, a silicon-based hardware root of trust, together with elements such as Dell Secured Component Verification (SCV), helps verify supply chain security from design to delivery.

Dell PowerSwitch

Dell Technologies is keenly aware of the challenges that exist in the networking space and what must be done to address the limitations brought on by slow-moving, legacy, proprietary networks and their impact on AI initiatives. With Dell Technologies Open Networking, we offer a complete strategy that combines networking scalability and agility with standards-based hardware, innovative, best-in-class software solutions, and automation tools that reduce the amount of manual intervention. You’ll be in a better position to meet workflow and application demands with greater network flexibility and control.

Software-Defined Networking

Dell Networking Operating Systems deliver full-featured Software-Defined Networking functionality with Layer 2 and Layer 3 connectivity that meets your needs with software from Dell and Open Networking ecosystem partners.

Software for Open Networking in the Cloud (SONiC)

Dell Technologies offers a finely tuned, enterprise-ready, and globally supported distribution of SONiC, called Enterprise SONiC Distribution by Dell Technologies, to help bring the benefits of hyperscale-focused SONiC contributions to the rest of the enterprise and telco markets and use cases.

Architecture

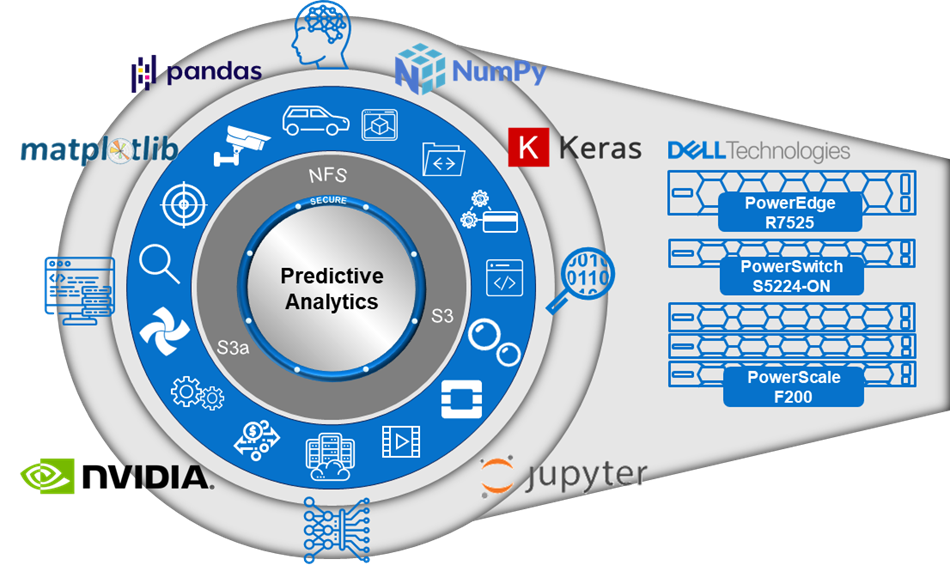

Figure 1. Predictive analytics stack components

Architecture components

Predictive Analytics architectures incorporate a variety of hardware and software components. Dell Technologies offers a large selection of hardware to build such architecture, starting from compute servers with the PowerEdge Family, PowerSwitch for the networking, and PowerScale for distributed storage. In this solution we used Dell PowerEdge servers populated with NVIDIA GPUs, running Ubuntu 20.04 LTS release.

To leverage NVIDIA GPUs, we used the NVIDIA Container Toolkit, which allows users to build and run GPU-accelerated containers. For more details about this toolkit, see the NVIDIA website. Finally, we used a customized Docker container based on NVIDIA’s TensorFlow Docker image. This image provides a large ecosystem of tools that allows engineers and data scientists to develop ML applications using JupyterLab, TensorFlow, Keras, RAPIDS cuDF libraries, and many more. The main advantage of this approach is the flexibility that Docker offers: users can build and customize their own images and deploy specific Docker containers based on their needs.
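As a rough illustration of how such a container might be launched, the sketch below assembles a `docker run` command that exposes the host GPUs to the container through the NVIDIA Container Toolkit (`--gpus all`) and starts JupyterLab. The image tag, the host data path, and the function name are assumptions made for this example; the customized image used in this solution is not published here, so a stock NVIDIA TensorFlow image from NGC stands in for it.

```python
import shlex


def jupyter_gpu_command(image, host_data_dir, port=8888):
    """Build a `docker run` command for a GPU-enabled JupyterLab session."""
    return [
        "docker", "run", "--rm", "-it",
        "--gpus", "all",                           # expose all host GPUs
        "-p", f"{port}:8888",                      # publish JupyterLab port
        "-v", f"{host_data_dir}:/workspace/data",  # mount shared dataset
        image,
        "jupyter", "lab", "--ip=0.0.0.0", "--allow-root", "--no-browser",
    ]


# Assumed values: a stock NGC TensorFlow image and a hypothetical NFS
# mount point for the PowerScale export on the PowerEdge host.
cmd = jupyter_gpu_command(
    image="nvcr.io/nvidia/tensorflow:23.07-tf2-py3",
    host_data_dir="/mnt/powerscale/pdm",
)
print(shlex.join(cmd))
```

The script only prints the command. Running it requires Docker and the NVIDIA Container Toolkit to be installed on the host.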

Table 1. Core hardware and software components

Component | Description |

PowerScale F200 | PowerScale F200 delivers the performance of flash storage in a cost-effective form factor to address the needs of a wide variety of workloads. Each node allows you to scale raw storage capacity from 3.84 TB to 30.72 TB and up to 7.7 PB of raw capacity per cluster. The F200 includes in-line compression and deduplication. The minimum number of PowerScale nodes per cluster is three while the maximum cluster size is 252 nodes. |

PowerSwitch S5224-ON | The S5200-ON is a complete family of switches: 12-port, 24-port, and 48-port 25GbE/100GbE ToR switches, a 96-port 25GbE/100GbE Middle of Row (MoR)/End of Row (EoR) switch, and a 32-port 100GbE Multi-Rate Spine/Leaf switch. |

PowerEdge R7525 | The Dell PowerEdge R7525 is a two-socket, 2U rack-based server that is designed to run complex workloads using highly scalable memory, I/O capacity, and network options. The system is based on the 2nd Gen AMD EPYC processor (up to 64 cores), has up to 32 DIMMs, PCI Express (PCIe) 4.0-enabled expansion slots, and supports up to three double wide 300W or six single wide 75W accelerators. |

NVIDIA Container Toolkit | The NVIDIA Container Toolkit allows users to build and run GPU accelerated containers. The toolkit includes a container runtime library and utilities to automatically configure containers to leverage NVIDIA GPUs. |

JupyterLab | JupyterLab is the latest web-based interactive development environment for notebooks, code, and data. Its flexible interface allows users to configure and arrange workflows in data science, scientific computing, computational journalism, and machine learning. |
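The PowerScale F200 figures in Table 1 can be cross-checked with simple arithmetic. The sketch below multiplies the per-node raw capacity by the node count for the smallest and largest cluster sizes quoted above. It assumes decimal units (1 PB = 1000 TB) and ignores protection overhead, compression, and deduplication, so it describes raw capacity only.

```python
# Values taken from Table 1 (PowerScale F200 row).
NODE_RAW_TB_MIN = 3.84
NODE_RAW_TB_MAX = 30.72
MIN_NODES = 3
MAX_NODES = 252


def cluster_raw_tb(nodes, tb_per_node):
    """Raw capacity of a cluster, before any protection overhead."""
    if not MIN_NODES <= nodes <= MAX_NODES:
        raise ValueError(f"cluster size must be {MIN_NODES}..{MAX_NODES} nodes")
    return nodes * tb_per_node


smallest_tb = cluster_raw_tb(MIN_NODES, NODE_RAW_TB_MIN)
largest_pb = cluster_raw_tb(MAX_NODES, NODE_RAW_TB_MAX) / 1000  # TB -> PB

print(f"Smallest cluster: {smallest_tb:.2f} TB raw")
print(f"Largest cluster:  {largest_pb:.2f} PB raw")
```

The largest configuration works out to about 7.74 PB, which is consistent with the "up to 7.7 PB" figure in the table.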

References

Dell PowerEdge Servers

Dell PowerScale Scale-Out File Storage

Dell PowerSwitch Networking

Related Documents

Accelerating High-Performance Computing with Dell PowerEdge XE9680: A Look at HPL Performance

Tue, 28 Mar 2023 23:05:16 -0000

Executive Summary

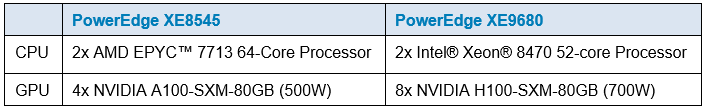

The Dell PowerEdge XE9680 is a high-performance server designed and optimized to enable uncompromising performance for artificial intelligence, machine learning, and high-performance computing workloads. Dell PowerEdge is launching our innovative 8-way GPU platform with advanced features and capabilities.

- 8x NVIDIA H100 80GB 700W SXM GPUs or 8x NVIDIA A100 80GB 500W SXM GPUs

- 2x Fourth Generation Intel® Xeon® Scalable Processors

- 32x DDR5 DIMMs at 4800MT/s

- 10x PCIe Gen 5 x16 FH Slots

- 8x SAS/NVMe SSD Slots (U.2) and BOSS-N1 with NVMe RAID

This Direct from Development (DfD) tech note offers valuable performance insights for High-Performance Linpack (HPL), a widely accepted benchmark for measuring HPC system performance.

Testing

The TOP500 list frequently relies on HPL to assess and rank supercomputer performance. Utilizing the Linpack library, HPL measures FLOPS (floating-point operations per second) by creating and solving linear equations, making it a reliable benchmark for evaluating HPC system efficiency.
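The principle behind the benchmark can be illustrated with a toy example: solve a dense linear system, time it, and divide a nominal operation count by the elapsed time. The sketch below uses a pure-Python Gaussian elimination with partial pivoting and the operation count conventionally credited to an LU-based solve of an n×n system, 2/3·n³ + 3/2·n². It is not HPL, which is a blocked, distributed implementation; the matrix size and all function names are chosen only for illustration.

```python
import random
import time


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Work on an augmented copy so the caller's data is untouched.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for k in range(n):
        # Pick the row with the largest entry in column k as the pivot.
        pivot = max(range(k, n), key=lambda i: abs(m[i][k]))
        m[k], m[pivot] = m[pivot], m[k]
        for i in range(k + 1, n):
            factor = m[i][k] / m[k][k]
            for j in range(k, n + 1):
                m[i][j] -= factor * m[k][j]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def nominal_flop_count(n):
    """Nominal floating-point operations for an LU-based solve of size n."""
    return (2.0 / 3.0) * n**3 + 1.5 * n**2


n = 120
rng = random.Random(0)
a = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
b = [rng.uniform(-1, 1) for _ in range(n)]

start = time.perf_counter()
x = solve(a, b)
elapsed = time.perf_counter() - start

# Check the answer: the largest entry of |a x - b| should be tiny.
residual = max(
    abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
)
rate = nominal_flop_count(n) / elapsed
print(f"n={n}  time={elapsed:.4f} s  rate={rate / 1e6:.2f} MFLOPS  "
      f"residual={residual:.2e}")
```

An interpreted solver like this reaches only a few MFLOPS, which is why production runs rely on tuned, GPU-accelerated libraries and much larger problem sizes.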

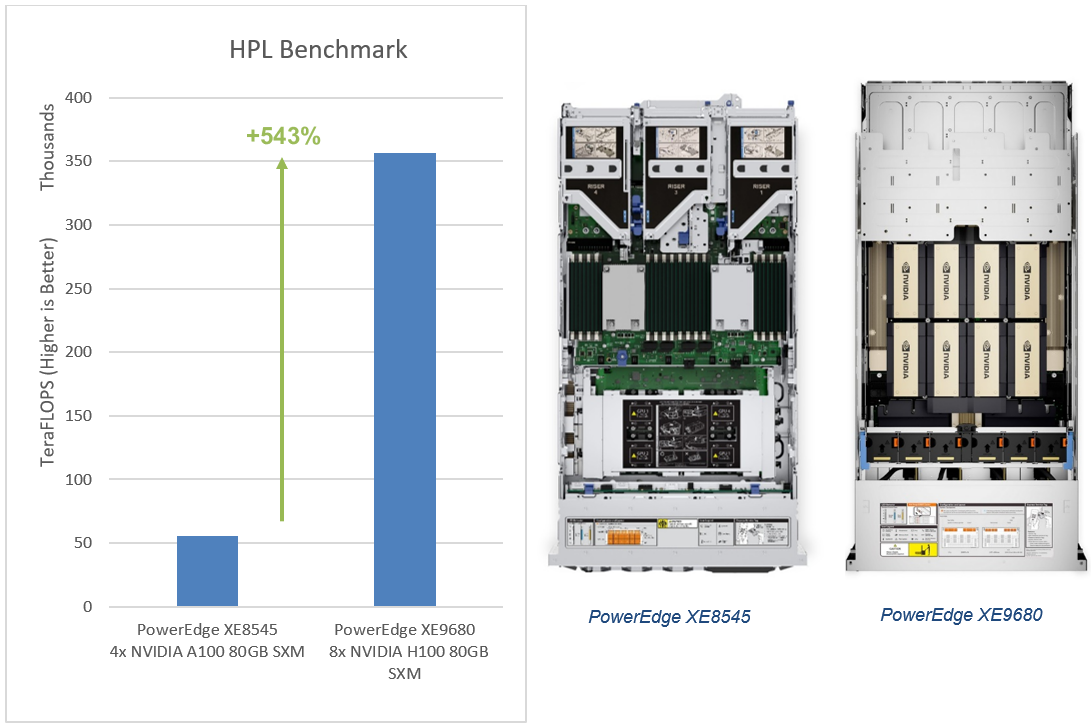

The Dell HPC & AI Innovation Lab used HPL to compare the performance of the PowerEdge XE9680 to our last generation PowerEdge XE8545. There are two key differentiators between the servers that affect HPL performance here: the quantity and model of GPUs supported by each platform.

Regarding GPU configuration, the PowerEdge XE9680 was equipped with 8x H100 80GB SXM GPUs, while the PowerEdge XE8545 was outfitted with 4x A100 80GB SXM GPUs.

Performance

In the HPL benchmark, the PowerEdge XE9680 equipped with NVIDIA's latest H100 80GB SXM GPUs delivers 543% more TeraFLOPS than the PowerEdge XE8545 [1].

The PowerEdge XE9680, with the latest NVIDIA H100 SXM GPU, advances HPC performance. With exceptional HPL performance, the PowerEdge XE9680 sets a high benchmark for today’s and tomorrow's HPC demands. Contact your account executive or visit www.dell.com to learn more.
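The headline percentage can be turned into a speedup factor with a short calculation. The sketch below assumes that "543% more" means a relative increase over the XE8545 result, so the XE9680 result is 1 + 5.43 times as large. The per-GPU figure simply normalizes by the GPU counts used in the test (8 versus 4). It is a rough indication only, because the two servers also differ in CPUs, memory, and interconnect. The function name is hypothetical.

```python
def speedup_from_percent_more(percent_more: float) -> float:
    """Convert 'X% more' into a multiplicative speedup factor."""
    return 1 + percent_more / 100


XE9680_GPUS = 8  # H100 80GB SXM
XE8545_GPUS = 4  # A100 80GB SXM

system_speedup = speedup_from_percent_more(543)
per_gpu_speedup = system_speedup * XE8545_GPUS / XE9680_GPUS

print(f"System-level speedup: {system_speedup:.2f}x")
print(f"Per-GPU speedup:      {per_gpu_speedup:.2f}x")
```

Under this reading, the XE9680 system is about 6.4 times as fast on HPL, or roughly 3.2 times as fast per GPU.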

Table 1. Server configuration

[1] Testing conducted by Dell in March 2023. Performed on PowerEdge XE9680 with 8x NVIDIA H100 SXM5-80GB and PowerEdge XE8545 with 4x NVIDIA A100-SXM-80GB. Actual results will vary.

MLPerf™ Inference v4.0 Performance on Dell PowerEdge R760 with NVIDIA L40S GPUs

Fri, 17 May 2024 16:25:45 -0000

Summary

Artificial intelligence is rapidly transforming a wide range of industries with new applications emerging every day. As this technology becomes more pervasive, the right infrastructure is necessary to support its growth.

This Direct from Development (DfD) tech note describes the new capabilities you can expect from the PowerEdge R760, coupled with NVIDIA L40S GPU. This document covers the product features, MLPerf benchmark, and test configuration results to help determine the Artificial Intelligence use cases best suited for enterprises looking to invest in this mainstream rack server.

Market positioning

Organizations in multiple industries are adopting server accelerators to outpace the competition — honing product and service offerings with data-gleaned insights, enhancing productivity with better application performance, optimizing operations with fast and powerful analytics, and shortening time to market by doing it all faster than ever before. Dell Technologies offers a choice of server accelerators in Dell PowerEdge servers, so you can turbo-charge your applications.

PowerEdge R760 Rack Server

The Dell PowerEdge R760 is Dell’s latest two-socket rack server that is designed to run complex workloads using highly scalable memory, I/O, and network options. Gain the performance you need with this full-featured enterprise server, designed to optimize even the most demanding workloads, such as Artificial Intelligence and Machine Learning.

It is powered by up to 2 x 4th Gen Intel® Xeon® Scalable or Intel® Xeon® Max Processors with up to 56 cores. It can also support up to 2 x 5th Gen Intel® Xeon® Scalable Processors with up to 64 cores.

Figure 1. Dell PowerEdge R760 server

These R760 servers can support up to two double wide 350 W, or six single wide 75 W accelerators. For the purpose of this testing with MLPerf 4.0, we have used NVIDIA’s latest L40S GPUs, which are built to power the next generation of data center workloads—from generative AI and large language model (LLM) inference and training, to 3D graphics, rendering, and video.

Figure 2. Inside the system with full length risers and GPU

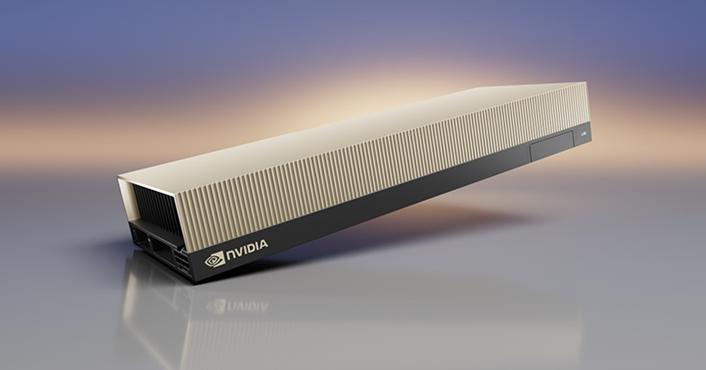

NVIDIA L40S: Ada Lovelace GPU architecture

NVIDIA Ada architecture GPUs deliver outstanding performance for graphics, AI, and compute workloads with exceptional architectural and power efficiency. The Ada RT Core has been enhanced to deliver 2x faster ray-triangle intersection testing and includes two important new hardware units. It supports Shader Execution Reordering (SER), which dynamically organizes and reorders shading workloads to improve RT shading efficiency. It also provides an Optical Flow Accelerator and AI frame generation, which boost DLSS 3 frame rates by up to 2x over the previous DLSS 2.0.

Figure 3. NVIDIA L40S GPU

Table 1. L40S GPU Details

Model | NVIDIA L40S |

Form factor | PCIe Gen4 |

GPU architecture | Ada Lovelace |

CUDA cores | 18176 |

Memory size | 48 GB |

Memory type | GDDR6 |

Base clock | 1110 MHz |

Boost clock | 2520 MHz |

Memory clock | 2250 MHz |

MIG support | No |

Peak memory bandwidth | 864 GB/s |

Total board power | 350 W |
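Two of the values in Table 1 can be related to each other with back-of-the-envelope arithmetic. The sketch below derives the peak memory bandwidth from the listed memory clock and a peak FP32 rate from the CUDA core count and boost clock. Three inputs are assumptions that do not appear in the table: a 384-bit memory bus, eight data transfers per listed memory clock cycle for GDDR6, and two FP32 operations (one fused multiply-add) per CUDA core per clock.

```python
# Values from Table 1.
CUDA_CORES = 18176
BOOST_CLOCK_MHZ = 2520
MEMORY_CLOCK_MHZ = 2250

# Assumptions not stated in the table.
BUS_WIDTH_BITS = 384          # assumed memory bus width
TRANSFERS_PER_CLOCK = 8       # assumed GDDR6 data rate multiplier
FLOPS_PER_CORE_PER_CLOCK = 2  # assumed: one fused multiply-add


def peak_bandwidth_gbs(clock_mhz, transfers_per_clock, bus_bits):
    """Peak memory bandwidth in GB/s (decimal units)."""
    bits_per_second = clock_mhz * 1e6 * transfers_per_clock * bus_bits
    return bits_per_second / 8 / 1e9


def peak_fp32_tflops(cores, clock_mhz, flops_per_clock):
    """Peak single-precision throughput in TFLOPS."""
    return cores * clock_mhz * 1e6 * flops_per_clock / 1e12


bandwidth_gbs = peak_bandwidth_gbs(
    MEMORY_CLOCK_MHZ, TRANSFERS_PER_CLOCK, BUS_WIDTH_BITS
)
fp32_tflops = peak_fp32_tflops(
    CUDA_CORES, BOOST_CLOCK_MHZ, FLOPS_PER_CORE_PER_CLOCK
)

print(f"Peak memory bandwidth: {bandwidth_gbs:.0f} GB/s")
print(f"Peak FP32 throughput:  {fp32_tflops:.1f} TFLOPS")
```

With these assumptions, the derived bandwidth matches the 864 GB/s listed in the table. Both results are theoretical peaks, not measured performance.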

NVIDIA L40S specifications

- Fourth-Generation Tensor Cores: Deliver up to 4X higher inference performance over the previous generation (FP8).

- Advanced Video and Vision AI Acceleration: Can host up to 3X more video streams concurrently than the previous generation.

- Third-Generation RT Cores: Deliver up to 2X the ray-tracing performance over the previous generation.

MLPerf Benchmark

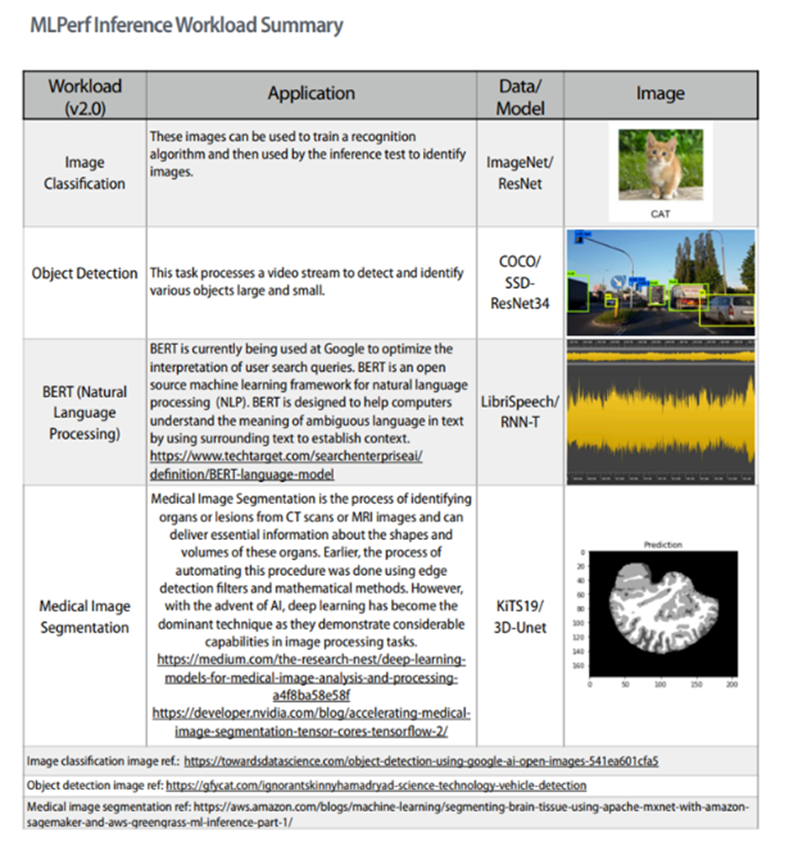

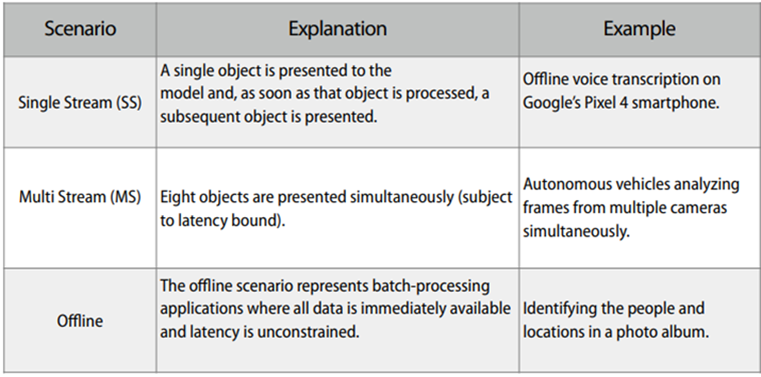

The primary output of MLCommons centers around its jointly-developed, open-source benchmarking suite: MLPerf™. MLPerf provides benchmarking suites that include both the “training” and “inference” aspects of ML. (For more about those topics, see the section Appendix - MLPerf workloads and scenarios.) MLPerf benchmarking suites offer multiple processing scenarios in Image classification, Object detection, Speech-to-text, and Natural language processing. The MLPerf benchmarking tool is free to use for both vendors and end-users, and members and non-members alike. MLCommons also hosts a repository where vendors (primarily) can post “reviewed” results that have been submitted for formal review by MLCommons. These are available for reference by the general public. For more information, see MLPerf Inference: Datacenter Benchmark Suite Results.

Test Configuration

For our testing, we used the following PowerEdge and system configurations:

Table 2. Dell PowerEdge Server - hardware configuration

System Name | PowerEdge R760 |

Status | Available |

System Type | Data Center |

Number of Nodes | 1 |

Host Processor Model | Intel Xeon Platinum 8580 |

Host Processors per Node | 2 |

Host Memory Capacity | 16x 96GB 5600 MT/s |

Host Storage Capacity | 6TB, NVME |

Accelerator Model Name | NVIDIA L40S |

Accelerator Per Node | 2 |

Accelerator Memory Configuration | 48GB, GDDR6 |

Table 3. Dell PowerEdge Server - software configuration

OS | Ubuntu 20.04.6 |

Software Stack | TensorRT 9.3.0, CUDA 12.3, cuDNN 8.9.6, Driver 545.23.08, DALI 1.28.0 |

Host Memory Configuration | 16x 96GB 5600 MT/s |

Framework | TensorRT 9.3.0, CUDA 12.3 |

Results

MLPerf v4.0 benchmark results are based on the Dell PowerEdge R760 server with two NVIDIA L40S GPUs and optimized software stacks. In this section, we show the performance observed in various scenarios.

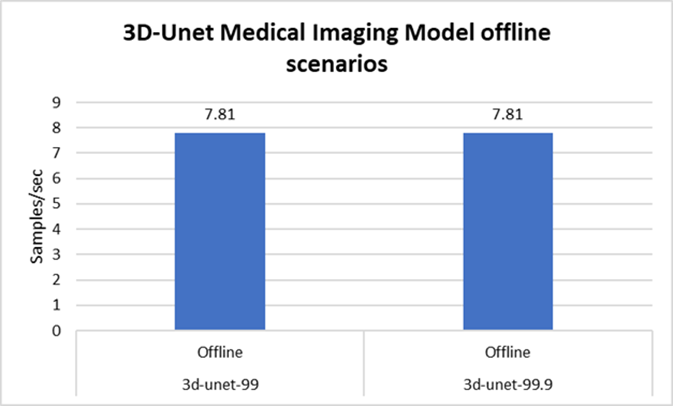

With increasing demand on healthcare facilities, providers are turning to artificial intelligence for easier and faster data management. Higher throughput on medical imaging data makes scalable and affordable options possible.

Figure 4. Medical image segmentation model

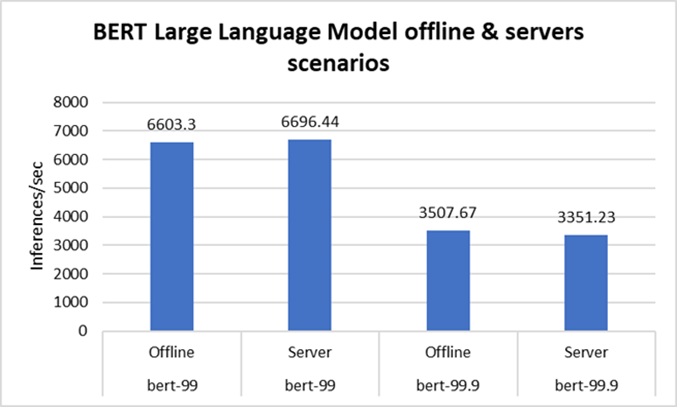

Rack servers continue to provide applications such as web hosting. AI-powered Natural Language Processing algorithms can help analyze user queries and provide real-time responses.

Figure 5. Natural Language Processing model

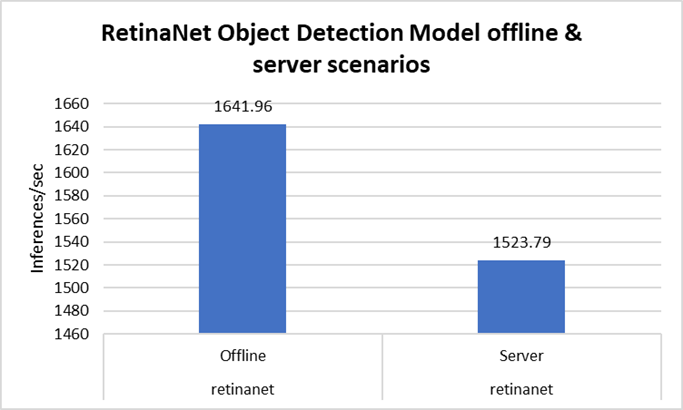

For compute-intensive tasks, AI algorithms and deep learning models can help with both inferencing and training. Object detection and image recognition are increasingly used for video surveillance in retail and for worker safety applications in manufacturing.

Figure 6. Object detection model

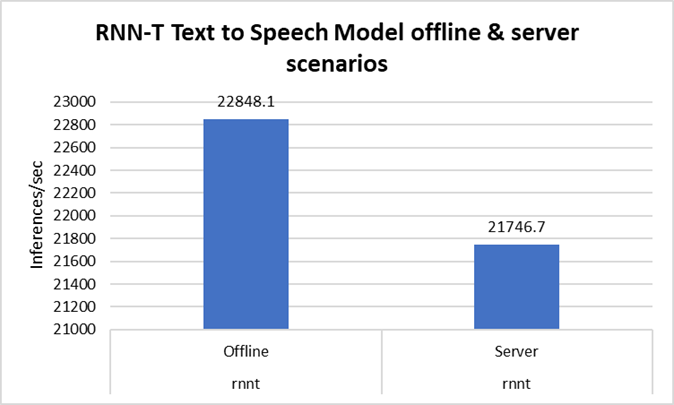

Speech-to-text applications are gaining popularity, along with voice assistants that support multiple languages. The R760 offers a great opportunity to support those use cases.

Figure 7. Speech-to-text model

Note: All testing was conducted in the Solutions and Performance Analysis Lab at Dell Technologies in February 2024.

Conclusion

The R760 supports various deep learning inference scenarios in the MLPerf benchmark, as well as other complex workloads, such as database and advanced analytics. It is an ideal solution for data center modernization to drive operational efficiency, lead to higher productivity, and minimize total cost of ownership (TCO).

The high performance and versatility are demonstrated across natural language processing, image classification, object detection, medical imaging, and speech-to-text inference scenarios. As AI advances in all segments, Dell PowerEdge servers can help you choose the right configuration for your performance requirements.

Appendix - MLPerf workloads and scenarios

References

- NVIDIA Ada Lovelace Professional GPU Architecture

- NVIDIA L40S - Unparalleled AI and graphics performance for the data center

- Dell Technologies - Acceleration-Optimized servers and accelerator portfolio