Performance study of a VMware vSphere 7 virtualized HPC cluster

Mon, 28 Mar 2022 16:35:13 -0000


High Performance Computing (HPC) involves processing complex scientific and engineering problems at high speed across a cluster of compute nodes. Performance is one of the most important features of HPC. While most HPC applications run on bare metal servers, there has been growing interest in running HPC applications in virtual environments. In addition to providing resiliency and redundancy for the virtual nodes, virtualization offers the flexibility to quickly instantiate a secure virtual HPC cluster.

Most HPC workloads run on dedicated hardware, typically server compute nodes interconnected by high-speed networks to maximize performance. Virtualization, by contrast, abstracts the underlying hardware and adds a software layer that emulates it. With this in mind, engineers at the Dell Technologies HPC & AI Innovation Lab and VMware conducted a study comparing the performance of running and scaling HPC workloads on dedicated bare metal nodes with a vSphere 7-based virtualized infrastructure. The team also tuned the physical and virtual infrastructure to achieve optimal virtual performance and shares those findings and recommendations here.

Performance test details

Our team evaluated tightly coupled HPC applications, that is, message passing interface (MPI)-based workloads, and observed promising results. These applications consist of parallel processes (MPI ranks) that use multiple cores and are designed to scale computation across multiple compute servers (or VMs) to solve complex mathematical models or scientific simulations in a timely manner. Examples of tightly coupled HPC workloads include computational fluid dynamics (CFD), used to model airflow in automotive and aircraft designs; weather research and forecasting models, used to predict the weather; and reservoir simulation codes, used in oil discovery.

To evaluate the performance of these tightly coupled HPC applications, we built a 16-node HPC cluster using Dell PowerEdge R640 vSAN Ready Nodes. The Dell PowerEdge R640 is a 1U, dual-socket server with Intel® Xeon® Scalable processors. The same cluster was configured both as a bare metal HPC cluster and as a virtual cluster running VMware vSphere.

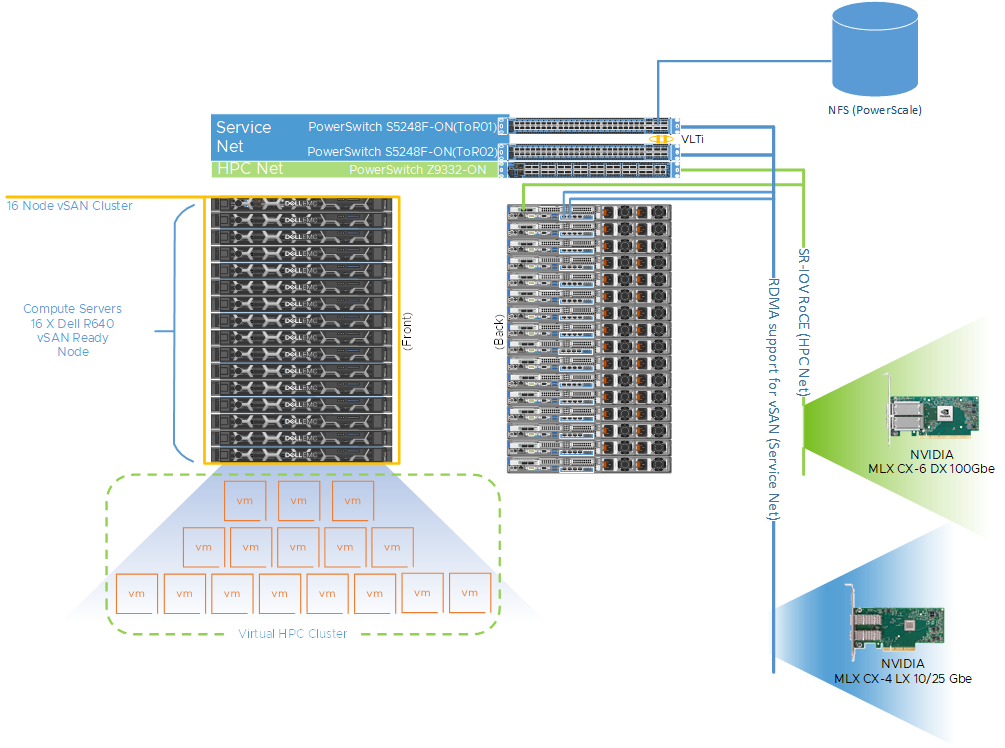

Figure 1 shows a representative network topology of this cluster. The cluster was connected to two separate physical networks. We used the following components for this cluster:

- A Dell PowerSwitch Z9332 switch connecting NVIDIA® ConnectX®-6 100 GbE adapters to provide a low-latency, high-bandwidth, RDMA-based 100 GbE HPC network for the MPI-based HPC workloads

- A separate pair of Dell PowerSwitch S5248 25 GbE top-of-rack (ToR) switches for the hypervisor management, VM access, and VMware vSAN networks of the virtual cluster

The ToR switches provided redundancy and were connected by a Virtual Link Trunking interconnect (VLTi). A VMware vSAN cluster was created to host the VMDKs for the virtual machines. To maximize CPU utilization, we also used vSAN's support for RDMA, which provides direct memory access between the nodes participating in the vSAN cluster without involving the operating system or the CPU. RDMA offers low latency, high throughput, and high IOPS that are more difficult to achieve with traditional TCP-based networks. It also lets the HPC workloads consume more CPU for their own work without impacting vSAN performance.

Figure 1: A 16-Node HPC cluster test bed

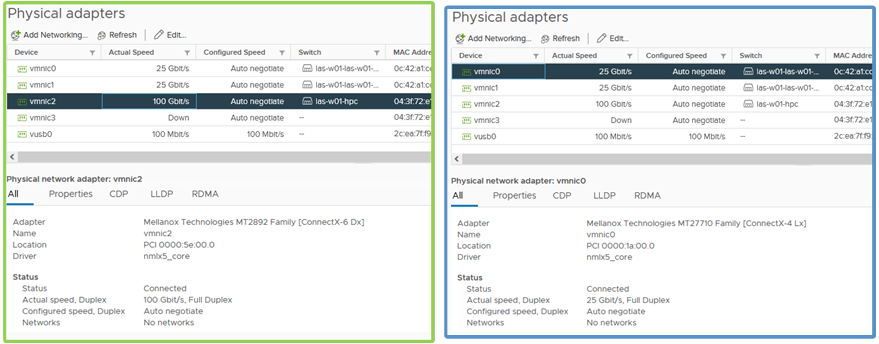

Figure 2: Physical adapter configuration for HPC network and service network

Table 1 describes the configuration details of the physical nodes and the network connectivity. For the virtual cluster, a single VM was provisioned per node, for a total of 16 VMs (virtual compute nodes). Each VM was configured with 44 vCPUs and 144 GB of memory, with VM CPU and memory reservations enabled and VM latency sensitivity set to High. Figure 1 also provides an example of how the hosts are cabled to each fabric. For the HPC network fabric, one port of each host's NVIDIA Mellanox ConnectX-6 adapter is connected to the Dell PowerSwitch Z9332. For the service network fabric, two ports of each host's NVIDIA Mellanox ConnectX-4 adapter are connected to the Dell PowerSwitch S5248 ToR switches.

Table 1: Configuration details for the bare metal and virtual clusters

| Environment | Bare Metal | Virtual |
|---|---|---|
| Server | PowerEdge R640 vSAN Ready Node | Same as bare metal |
| Processor | 2 × Intel Xeon Gold 6240R (2nd Generation Intel Xeon Scalable) | Same as bare metal |
| Cores | All 48 cores used | 44 vCPUs used |
| Memory | 12 × 16 GB @ 3200 MT/s | 144 GB reserved for the VM |
| Operating System | CentOS 8.3 | Host OS: VMware vSphere 7.0u2 |
| HPC Network NIC | 100 GbE NVIDIA Mellanox ConnectX-6 | Same as bare metal |
| Service Network NIC | 10/25 GbE NVIDIA Mellanox ConnectX-4 | Same as bare metal |
| HPC Network Switch | Dell PowerSwitch Z9332F-ON | Same as bare metal |
| Service Network Switch | Dell PowerSwitch S5248F-ON | Same as bare metal |
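The VM sizing in Table 1 leaves part of each host unreserved. The Python sketch below makes that arithmetic explicit. It assumes 24 physical cores per Xeon Gold 6240R socket (consistent with the 48-core bare metal row), counts one vCPU per physical core as the Cores row does, and takes installed memory as 12 × 16 GB = 192 GB. `HostSpec`, `VmSpec`, and `headroom` are hypothetical names for illustration, not part of any VMware API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostSpec:
    """Physical host capacity (hypothetical helper, values from Table 1)."""
    sockets: int
    cores_per_socket: int
    dimm_count: int
    dimm_size_gb: int

    @property
    def physical_cores(self) -> int:
        return self.sockets * self.cores_per_socket

    @property
    def memory_gb(self) -> int:
        return self.dimm_count * self.dimm_size_gb


@dataclass(frozen=True)
class VmSpec:
    """Reserved VM size (hypothetical helper)."""
    vcpus: int
    memory_gb: int


def headroom(host: HostSpec, vm: VmSpec) -> dict:
    """Return capacity left outside the VM reservation, treating 1 vCPU as 1 physical core."""
    if vm.vcpus > host.physical_cores or vm.memory_gb > host.memory_gb:
        raise ValueError("VM reservation exceeds host capacity")
    return {
        "spare_cores": host.physical_cores - vm.vcpus,
        "spare_memory_gb": host.memory_gb - vm.memory_gb,
        "cpu_reserved_pct": 100 * vm.vcpus / host.physical_cores,
        "mem_reserved_pct": 100 * vm.memory_gb / host.memory_gb,
    }


# Two 24-core sockets and 12 x 16 GB DIMMs per R640 (assumed from Table 1).
r640 = HostSpec(sockets=2, cores_per_socket=24, dimm_count=12, dimm_size_gb=16)
hpc_vm = VmSpec(vcpus=44, memory_gb=144)
print(headroom(r640, hpc_vm))
```

With these inputs the VM reserves about 92 percent of the cores and 75 percent of the memory, leaving 4 cores and 48 GB per host. The study does not say how that headroom is used; presumably it remains available to ESXi and vSAN.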

Table 2 lists the HPC applications, spanning multiple vertical domains, along with the benchmark datasets used for the performance comparison.

Table 2: Application and Benchmark Details

| Application | Vertical Domain | Benchmark Dataset |
|---|---|---|
| OpenFOAM | Manufacturing – Computational Fluid Dynamics (CFD) | |
| Weather Research and Forecasting (WRF) | Weather and Environment | |
| Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) | Molecular Dynamics | |
| GROMACS | Life Sciences – Molecular Dynamics | HECBioSim Benchmarks – 3M Atoms |
| Nanoscale Molecular Dynamics (NAMD) | Life Sciences – Molecular Dynamics | STMV – 1.06M Atoms |

Performance Results

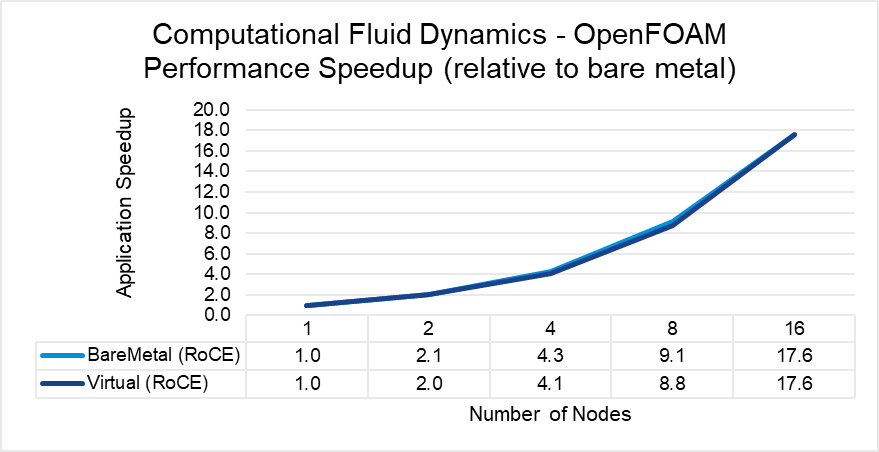

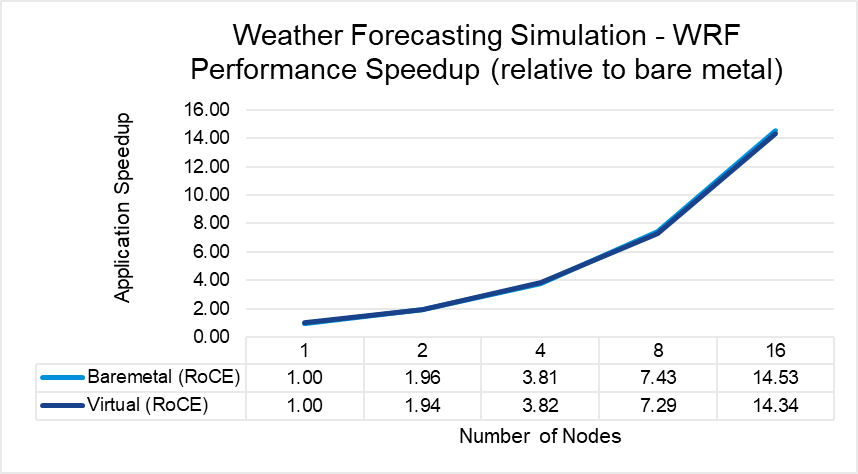

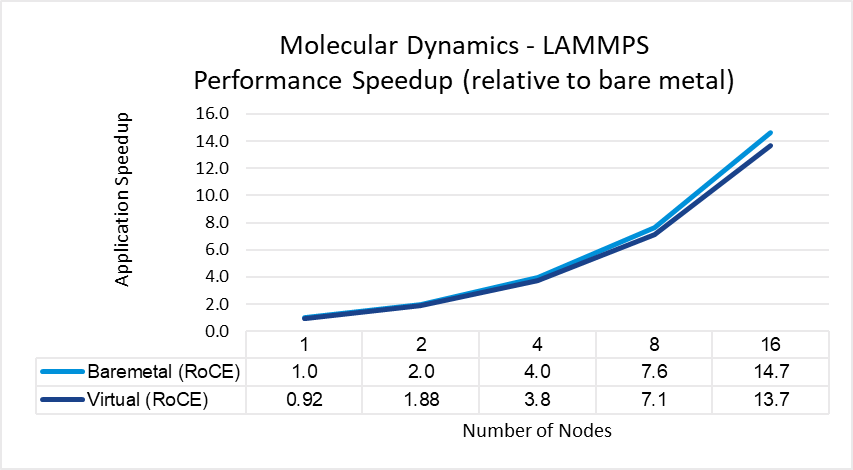

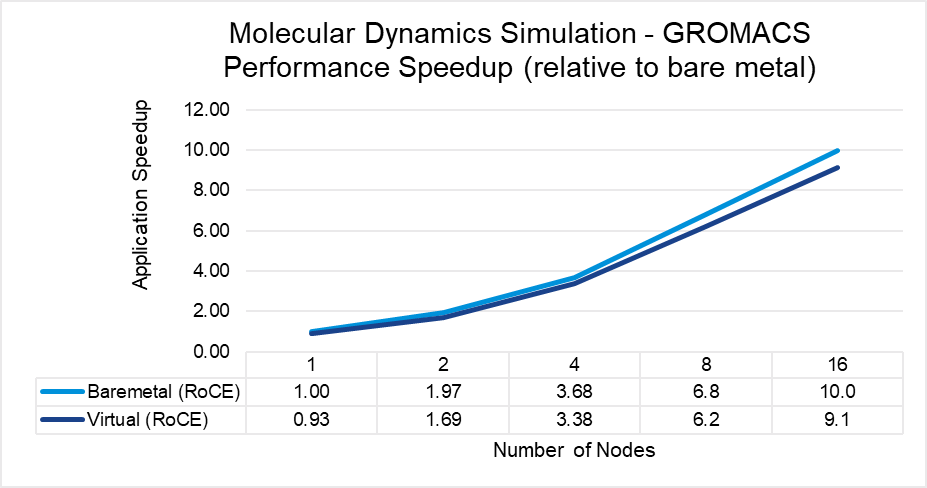

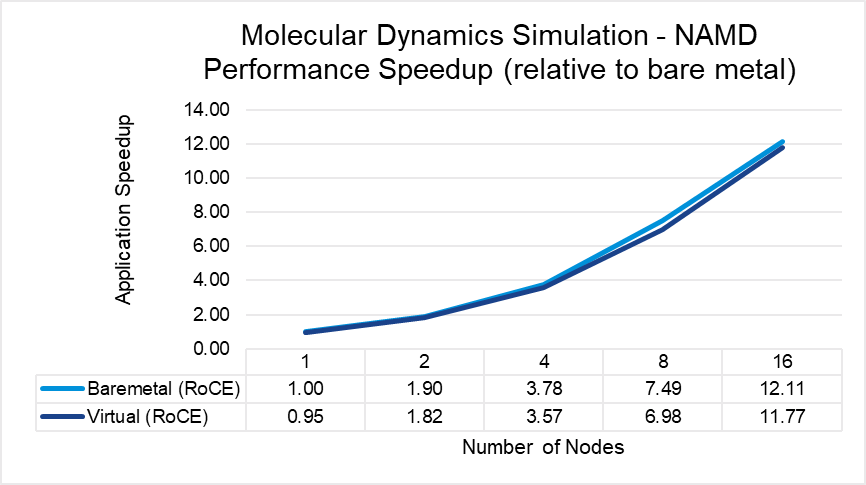

Figures 3 through 7 show the performance, scalability, and difference in performance for five representative HPC applications in the CFD, weather, and science domains. Each of the applications was run to scale from 1 node through 16 nodes on a bare metal and a virtual cluster. All five applications demonstrate efficient speedup when computation is scaled out to multiple systems. The relative speedup for the application is plotted (the baseline is application performance on a bare metal single node).

The results indicate that MPI application performance running in a virtualized infrastructure (with proper tuning and following best practices for latency-sensitive applications in a virtual environment) is close to performance in a bare metal infrastructure. The single node performance delta ranges from no difference for WRF to a maximum of 8 percent difference observed with LAMMPS. Similarly, as the nodes are scaled, the performance observed on the virtual nodes is comparable to that on the bare-metal infrastructure with the largest delta being 10% when running LAMMPS on 16 nodes.

Figure 3: OpenFOAM performance comparison between virtual and bare-metal systems

Figure 4: WRF performance comparison between virtual and bare-metal systems

Figure 5: LAMMPS performance comparison between virtual and bare-metal systems

Figure 6: GROMACS performance comparison between virtual and bare-metal systems

Figure 7: NAMD performance comparison between virtual and bare-metal systems
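The relative speedup and performance delta discussed in this section reduce to simple ratios. The following Python sketch shows one way to compute them, assuming a higher-is-better performance metric (for wall-clock times, use the reciprocal). The per-node-count figures in it are invented for illustration and are not the study's measurements; the function names are hypothetical.

```python
def relative_speedup(perf: dict[int, float], baseline: float) -> dict[int, float]:
    """Speedup at each node count relative to a single bare metal node."""
    return {nodes: p / baseline for nodes, p in perf.items()}


def percent_delta(bare_metal: float, virtual: float) -> float:
    """How much slower the virtual run is than bare metal, in percent."""
    return 100.0 * (bare_metal - virtual) / bare_metal


def parallel_efficiency(speedup: float, nodes: int) -> float:
    """Fraction of ideal linear scaling achieved at a given node count."""
    return speedup / nodes


# Hypothetical performance ratings (higher is better) for 1 to 16 nodes.
bare = {1: 10.0, 2: 19.8, 4: 39.0, 8: 76.0, 16: 148.0}
virt = {1: 9.5, 2: 18.9, 4: 37.0, 8: 72.0, 16: 138.0}

base = bare[1]  # both curves are normalized to the bare metal single node
bare_su = relative_speedup(bare, base)
virt_su = relative_speedup(virt, base)

for n in sorted(bare):
    print(
        f"{n:2d} nodes: bare {bare_su[n]:5.2f}x  virtual {virt_su[n]:5.2f}x  "
        f"delta {percent_delta(bare[n], virt[n]):4.1f}%  "
        f"virtual efficiency {parallel_efficiency(virt_su[n], n):.2f}"
    )
```

Because both curves share the same bare metal baseline, the gap between them at any node count directly reflects the virtualization overhead at that scale.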

Tuning for Optimal Performance

A key element of a viable virtualized HPC solution is a set of tuning best practices that enable optimal performance. We observed a significant improvement over the out-of-box configuration after a few minor adjustments. These tunings are critical to ensuring that customers achieve results that justify not only implementing a virtual HPC environment but also adopting a more cloud-ready ecosystem that provides operational efficiencies and multi-workload support.

Table 3 outlines the parameters that we found work best for MPI applications. Because MPI relies heavily on a low-latency network for parallel communication, we recommend enabling the VM Latency Sensitivity setting available in vSphere 7.0. This setting reduces scheduling delay for latency-sensitive applications by 1) giving the VM exclusive access to physical resources to reduce resource contention, 2) bypassing virtualization layers that provide no value for these workloads, and 3) tuning virtualization layers to reduce unnecessary overhead. Table 3 also lists the additional physical host and hypervisor tunings that complete these best practices.

Table 3: Recommended performance tunings for tightly coupled HPC workloads

| Setting | Value |
|---|---|
| Physical server | |
| BIOS Power Profile | Performance per watt (OS) |
| BIOS Hyper-threading | On |
| BIOS Node Interleaving | Off |
| BIOS SR-IOV | On |
| Hypervisor | |
| ESXi Power Policy | High Performance |
| Virtual machine | |
| VM Latency Sensitivity | High |
| VM CPU Reservation | Enabled |
| VM Memory Reservation | Enabled |
| VM Sizing | Maximum VM size with CPU/memory reservation |
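To check a deployment against Table 3, the recommendations can be captured as data and compared with what is actually configured. The Python sketch below does that. The setting keys and the `observed` dictionary are hypothetical; in practice the values would be read from the BIOS setup, the ESXi host power management settings, and the VM's settings in the vSphere Client. The VM sizing row is omitted because it depends on the host rather than being a fixed value.

```python
# Table 3 recommendations keyed by hypothetical setting names.
RECOMMENDED = {
    "bios.power_profile": "Performance per watt (OS)",
    "bios.hyper_threading": "On",
    "bios.node_interleaving": "Off",
    "bios.sr_iov": "On",
    "esxi.power_policy": "High Performance",
    "vm.latency_sensitivity": "High",
    "vm.cpu_reservation": "Enabled",
    "vm.memory_reservation": "Enabled",
}


def audit(observed: dict[str, str]) -> list[str]:
    """Return readable mismatches; an empty list means the settings match Table 3."""
    problems = []
    for key, want in RECOMMENDED.items():
        got = observed.get(key)
        if got is None:
            problems.append(f"{key}: not reported (expected {want!r})")
        elif got.casefold() != want.casefold():  # case-insensitive comparison
            problems.append(f"{key}: {got!r} (expected {want!r})")
    return problems


# Example: a VM whose latency sensitivity is still at the vSphere default.
observed = {**RECOMMENDED, "vm.latency_sensitivity": "Normal"}
for line in audit(observed):
    print(line)
```

The example models an out-of-box VM whose latency sensitivity is still at the vSphere default of Normal, so the audit reports a single mismatch.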

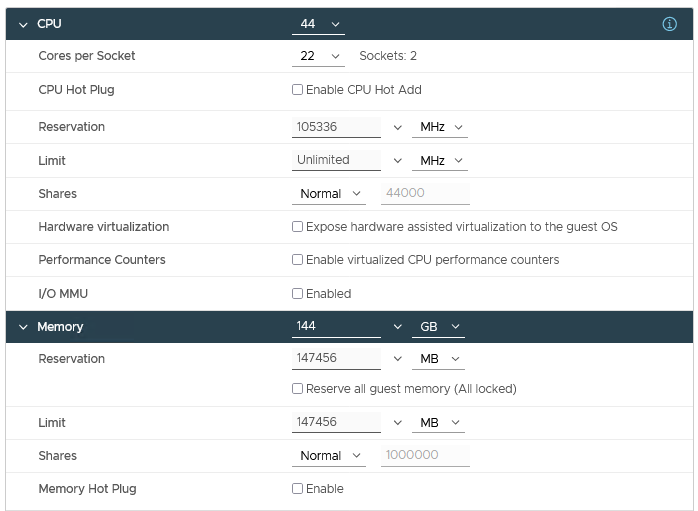

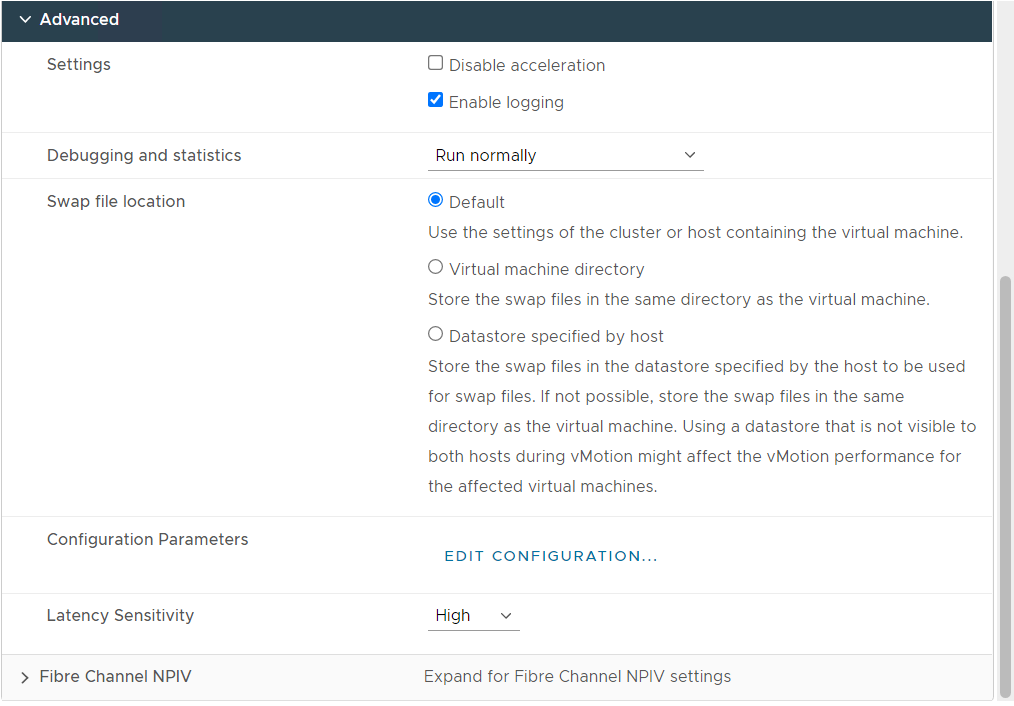

Figure 8: Virtual Machine Configuration with the recommended tuning settings

Figure 8 shows a screenshot of the recommended tuning settings as applied to a virtual machine used as a virtual node in the HPC cluster.

Conclusion

Achieving optimal performance is a key consideration for running an HPC application. While most HPC applications enjoy the performance benefits of dedicated bare metal hardware, our results indicate that with appropriate tuning the performance gap between virtual and bare metal nodes has narrowed, making it feasible to run certain HPC applications in a virtualized environment. We also observed that the tested HPC applications demonstrate efficient speedups when computation is scaled out to multiple virtual nodes.

Additional resources

To learn more about our previous and ongoing work at the Dell Technologies HPC & AI Innovation Lab, see the High Performance Computing overview and the Dell Technologies Info Hub blog page for HPC solutions.

Acknowledgements

The authors thank Martin Hilgeman from Dell Technologies, Ramesh Radhakrishnan and Michael Cui from VMware, and Martin Feyereisen for their contributions to the study.