Overview of MLPerf™ Training 4.0 Results

Thu, 13 Jun 2024 14:08:03 -0000

Today marks the release of another round (v4.0) of MLPerf Training results. Dell Technologies has contributed to these results since the benchmark began. The results are essential for making effective decisions about modern AI data center deployment. As AI spreads into more domains, building infrastructure that can support that growth becomes important, and Dell Technologies is well positioned to meet your AI solution needs.

Our excellent MLPerf results help our customers make the best choices for their needs based on the major tasks of their workloads. The tasks in our MLPerf submission included large language model (LLM) fine-tuning, graph neural networks (GNNs), image classification, medical image segmentation, object detection, language modeling, and recommendation.

What’s new with MLPerf Training 4.0 and the Dell Technologies submissions?

The following benchmarks were introduced for this submission:

- Llama 2 70B LLM fine-tuning using LoRA with the SCROLLS Government Report dataset. Fine-tuned LLMs serve many use cases, such as domain-specific language generation for understanding medical or legal documents, interpreting jargon, task-specific work such as RAG question answering, and content creation.

- GNN with RGAT using the IGBFull dataset. Use cases for this model include:

  - Knowledge graph completion and link prediction, where the intent is to predict missing relationships or links between entities in a knowledge graph

  - Node classification in relational graphs, where relationships between nodes carry semantic meaning

  - Applications in social networks, biological networks, and citation networks

  - Fraud detection, recommender systems, and so on
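To give a sense of why LoRA makes fine-tuning a 70B-parameter model practical, the following minimal sketch counts trainable parameters for one weight matrix. It relies only on the general LoRA idea (a frozen weight matrix plus two trainable low-rank factors); the layer shape and rank are hypothetical values chosen for illustration and are not the settings used in the benchmark or in our submission.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Parameters updated when fine-tuning the whole d_out x d_in weight matrix."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer shape and LoRA rank, for illustration only.
d_out, d_in, rank = 8192, 8192, 16

full = full_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
fraction = lora / full

print(f"full fine-tuning: {full:,} parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"fraction trained: {fraction:.4%}")
```

With these assumed values, the adapter trains well under one percent of the layer's parameters, which is why LoRA fine-tuning needs far less optimizer state and memory than full fine-tuning.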

Overview of results

Dell Technologies submitted 19 results. These results were submitted using four different systems:

- The PowerEdge R760xa server, powered by four NVIDIA L40S GPUs

- The PowerEdge XE8640 server, powered by four NVIDIA H100 Tensor Core GPUs

- The PowerEdge XE9640 server, powered by four NVIDIA H100 Tensor Core GPUs

- The PowerEdge XE9680 server, powered by eight NVIDIA H100 Tensor Core GPUs

These results consisted of models such as BERT, DLRM_DCNV2, ResNet, SSD, UNET3D, LLAMA2_70B_LORA, and GNN, showcasing different use cases.

Points of interest

Points of interest include:

- Dell PowerEdge XE9680, XE8640, XE9640, and R760xa servers secured top performer titles (#1 titles) among other systems that were equipped with the same number of NVIDIA Tensor Core GPUs.

- We made submissions to the new Llama 2 70B fine-tuning with LoRA and GNN benchmarks. LLM fine-tuning is important because of its use cases such as chatbots, function calling, question answering, code generation, and so on. GNNs are used in recommender systems, connection optimizations, fraud detection, and so on.

- Results for the PowerEdge R760xa server with four NVIDIA L40S GPUs were submitted so that end users can compare its performance with servers that have four NVIDIA H100 PCIe GPUs.

- The eight-way PowerEdge XE9680 server, the four-way PowerEdge XE9640 server with liquid-assisted air cooling (LAAC), and the PowerEdge XE8640 server scale efficiently across multiple nodes, as shown in our previous submission. Customers can expect near-linear scaling with these servers.

- At the recent Dell Technologies World (DTW) announcement, the PowerEdge XE9680 server was unveiled with liquid cooling technology. This cooling solution improves efficiency, allowing large compute training workloads to scale with superior thermal management and performance. Modern data center workloads are expanding, and customers can use these servers for their generative and predictive AI use cases. Our MLPerf results serve as a reference to support data center design and like-for-like comparison with other submitters.

- The results for the 2U PowerEdge XE9640 server with LAAC are similar to those for the 4U air-cooled PowerEdge XE8640 server. Because the PowerEdge XE9640 server delivers comparable performance in half the rack height, it allows higher GPU density per rack than the 4U PowerEdge XE8640 server and makes better use of rack space where liquid cooling is an option. In short, air cooling and liquid cooling offer approximately the same performance, while liquid cooling offers more GPU density in the rack.
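The density and scaling points above can be made concrete with a little arithmetic. The sketch below is an upper-bound estimate, not a deployment guide: the 42U rack size is an assumption, and it ignores power, cooling capacity, and space for switches. The `scaling_efficiency` helper is a hypothetical name that expresses one common way to quantify "near-linear" scaling; the timing values passed to it are placeholders, not measured results.

```python
def gpus_per_rack(rack_units: int, server_height_u: int, gpus_per_server: int) -> int:
    """Upper bound on GPUs per rack if every rack unit could hold servers."""
    servers = rack_units // server_height_u
    return servers * gpus_per_server


def scaling_efficiency(single_node_minutes: float, multi_node_minutes: float, nodes: int) -> float:
    """Speedup divided by node count; 1.0 means perfectly linear scaling."""
    speedup = single_node_minutes / multi_node_minutes
    return speedup / nodes


RACK_UNITS = 42  # assumption: a common full-height rack, fully usable

# Server heights and GPU counts as described in this blog.
xe9640_gpus = gpus_per_rack(RACK_UNITS, server_height_u=2, gpus_per_server=4)  # 84
xe8640_gpus = gpus_per_rack(RACK_UNITS, server_height_u=4, gpus_per_server=4)  # 40

print(f"2U PowerEdge XE9640: up to {xe9640_gpus} GPUs per rack")
print(f"4U PowerEdge XE8640: up to {xe8640_gpus} GPUs per rack")

# Placeholder timings for illustration only, not MLPerf results.
print(f"example efficiency: {scaling_efficiency(100.0, 26.0, nodes=4):.2f}")
```

Under these assumptions, the 2U form factor roughly doubles the theoretical GPU count per rack, which is the sense in which similar per-server results translate into higher rack density.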

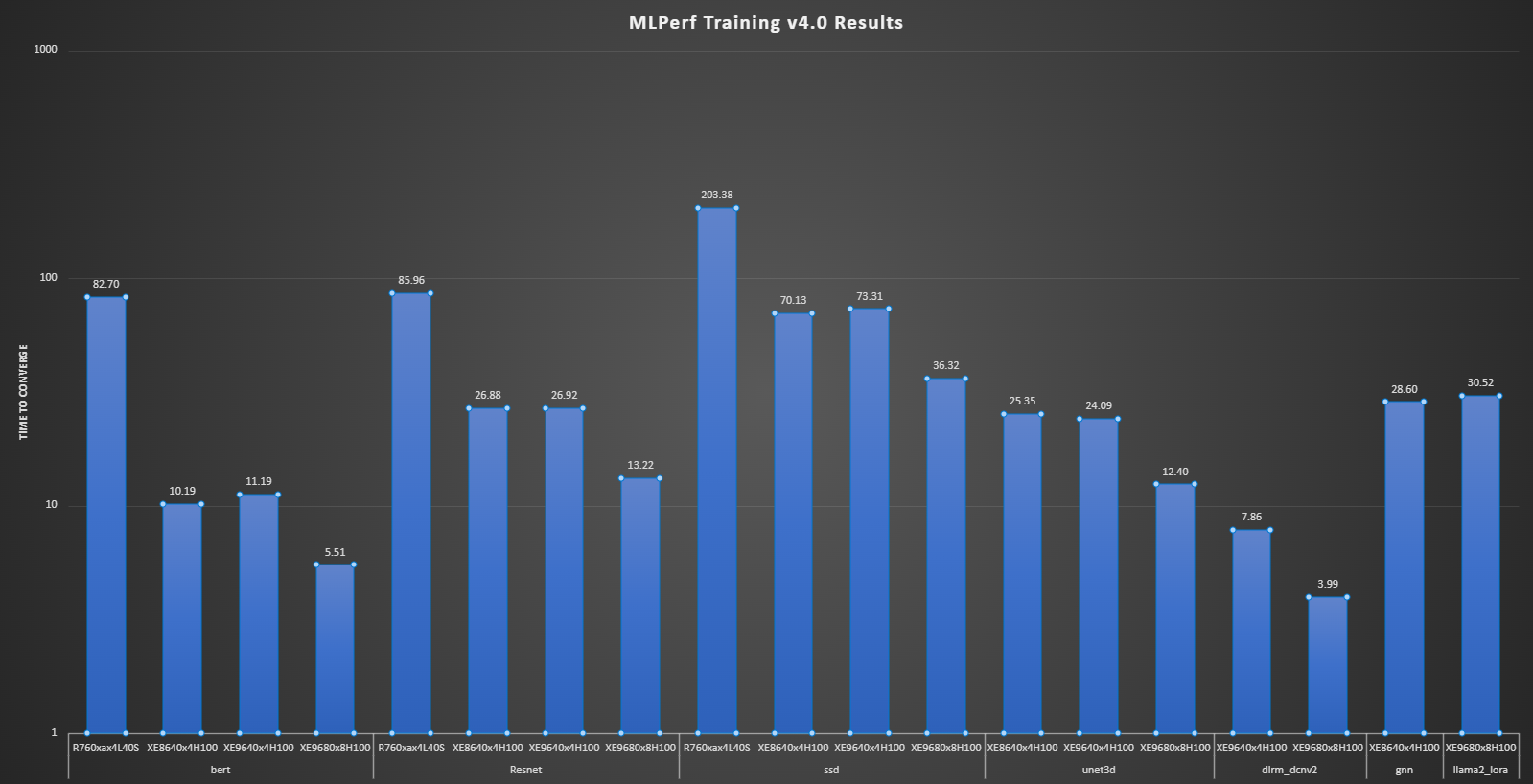

The following figure represents the convergence time in minutes for all the results in the submission. Note that the same graph includes all benchmarks, therefore, the y axis is expressed logarithmically. Overall, these numbers show an excellent time to converge for the specified workloads.

Figure 1: Logarithmically scaled results from different systems and benchmarks
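As a brief illustration of why a logarithmic axis helps when one chart mixes benchmarks with very different convergence times, consider the sketch below. The benchmark names and times are hypothetical placeholders, not values from our submission; they only show how a log scale keeps short runs visible next to long ones.

```python
import math

# Hypothetical placeholder times in minutes, NOT results from the submission.
times_minutes = {"benchmark_a": 2.0, "benchmark_b": 30.0, "benchmark_c": 400.0}

largest = max(times_minutes.values())
smallest = min(times_minutes.values())

# On a linear axis, the shortest bar is this fraction of the tallest bar.
linear_ratio = smallest / largest

# On a log10 axis, each value is placed at its base-10 logarithm.
log_positions = {name: math.log10(t) for name, t in times_minutes.items()}

print(f"linear axis: shortest bar is {linear_ratio:.1%} of the tallest")
for name, pos in log_positions.items():
    print(f"log axis position of {name}: {pos:.2f}")
```

In a plotting library such as matplotlib, the same effect is typically obtained by calling `ax.set_yscale("log")` on the axes before drawing the bars.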

Conclusion

All our results are compliant with the MLCommons Training v4.0 benchmark. These results are based on the latest generation of Dell PowerEdge XE9680, XE8640, XE9640, and R760xa servers powered by NVIDIA H100 Tensor Core GPUs and L40S GPUs. The results are impressive and showcase what end users can expect while running similar workloads in their data center. These workloads span LLM fine-tuning, GNNs, image classification, medical image segmentation, object detection, language modeling, and recommendation. With generative AI expanding towards all domains and vertical markets, Dell Technologies empowers customers to enable faster AI adoption with high-performance systems for demanding compute-intensive workloads.

Furthermore, the launch of Dell AI Factory with NVIDIA accelerates transformation during this period of unprecedented generative AI growth.

Next steps

Watch for blogs coming soon that provide more benchmarks for other systems, including some multi-node benchmarks.

MLCommons Results

https://mlcommons.org/benchmarks/training/

The preceding graph shows MLCommons results for MLPerf IDs 4.0-0019 through 4.0-0022.

The MLPerf™ name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use strictly prohibited. See www.mlcommons.org for more information.