DevEdgeOps Defined

Mon, 25 Sep 2023 07:30:48 -0000

|Read Time: 0 minutes

What is a DevEdgeOps Platform?

As the demand for edge computing continues to grow, organizations are seeking comprehensive solutions that streamline the development and operational processes in edge environments. This has led to the emergence of DevEdgeOps platforms, with specialized processes and tools. Including frameworks designed to support the unique requirements of developing, deploying, and managing applications in edge computing architectures.

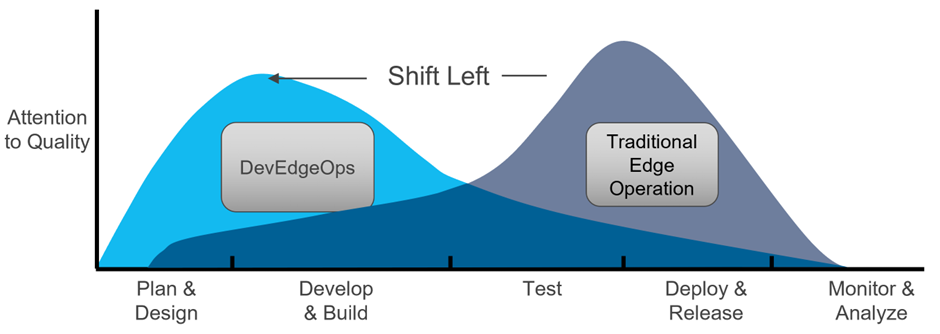

Edge Operations Shift Left

Figure 1: DevEdgeOps platform, as part of the Shift Left movement, focuses on pushing the operational challenges of edge computing to the development stage.

Figure 1: DevEdgeOps platform, as part of the Shift Left movement, focuses on pushing the operational challenges of edge computing to the development stage.

Shift Left refers to the practice of moving activities that were traditionally performed mostly at production stage to an earlier in the development stage. It is often applied in software development and DevOps to integrate testing, security, and other considerations earlier in the development lifecycle. Similarly, in the world of edge computing, we are moving operational tasks to an earlier stage, just like how we did with Shift Left in DevOps. We call this new idea, DevEdgeOps.

A DevEdgeOps platform facilitates collaboration between developers and operations teams, addressing challenges like network connectivity, security, scalability, and edge deployment management.

In this blog post, we introduce edge computing, its use cases, and architecture. We explore DevEdgeOps platforms, discussing their features and impact on edge computing development and operations.

Introduction to Edge Computing

Edge computing is a distributed computing paradigm that brings data processing and analysis closer to the source of data generation, rather than relying on centralized cloud or datacenter resources. It aims to address the limitations of traditional cloud computing, such as latency, bandwidth constraints, privacy concerns, and the need for real-time decision-making.

To learn more about the use cases, unique challenges, typical architecture and taxonomy refer to my previous post: Edge Computing in the Age of AI: An Overview

Figure 2: Innovation and momentum building at the edge. Source: Edge PowerPoint slides, Dell Technologies World 2023

Figure 2: Innovation and momentum building at the edge. Source: Edge PowerPoint slides, Dell Technologies World 2023

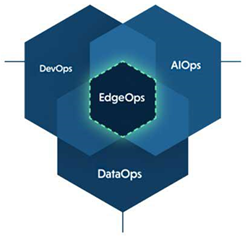

DevEdgeOps

DevEdgeOps is a term that combines elements of development (DevOps) and edge computing. It refers to the practices, methodologies, and tools used for managing and deploying applications in edge computing environments while leveraging the principles of DevOps. In other words, it aims to enable efficient development, deployment, and management of applications in edge computing environments, combining the agility and automation of DevOps with the unique requirements of edge deployments.

DevEdgeOps Platform

A DevEdgeOps platform provides developers and operations teams with a unified environment for managing the entire lifecycle of edge applications, from development and testing to deployment and monitoring. These platforms typically combine essential DevOps practices with features specific to edge computing, allowing organizations to build, deploy, and manage edge applications efficiently.

Key Features of DevEdgeOps Platforms

- Centralized edge application management—DevEdgeOps platforms provide centralized management capabilities for edge applications. They offer dashboards, interfaces, and APIs that allow operations teams to monitor the health, performance, and status of edge deployments in real-time. These platforms may also include features for configuration management, remote troubleshooting, and log analysis, enabling efficient management of distributed edge nodes.

- Integration with edge infrastructure—DevEdgeOps platforms often integrate with edge infrastructure components such as edge gateways, edge servers, or cloud-based edge computing services. This integration simplifies the deployment process by providing seamless connectivity between the development platform and the edge environment, facilitating the deployment and scaling of edge applications.

- Edge-aware development tools—DevEdgeOps platforms offer development tools tailored for edge computing. These tools assist developers in optimizing their applications for edge environments, providing features such as code editors, debuggers, simulators, and testing frameworks specifically designed for edge scenarios.

- CI/CD pipelines for edge deployments—DevEdgeOps platforms enable the automation of continuous integration and deployment processes for edge applications. They provide pre-configured pipelines and templates that consider the unique requirements of edge environments, including packaging applications for different edge devices, managing software updates, and orchestrating deployments to distributed edge nodes.

- Edge simulation and testing capabilities—DevEdgeOps platforms often include simulation and testing features that help developers validate the functionality and performance of edge applications in various scenarios. These features simulate edge-specific conditions such as low-bandwidth networks, intermittent connectivity, and edge device failures, allowing developers to identify and address potential issues proactively.

Final Words

The emergence of new edge use cases that combine cloud-native infrastructure and AI introduces an increased operational complexity and demands more advanced application lifecycle management. Traditional management approaches may no longer be sustainable or efficient in addressing these challenges.

In my previous post, How the Edge Breaks DevOps, I referred to the unique challenges that the edge introduces and the need for a generic platform that will abstract the complexity associated with those. In this blog, I introduced DevEdgeOps platforms that combine essential DevOps practices with features specific to edge computing. I also described the set of features that are expected to be part of this new platform category. By embracing these approaches, organizations can effectively manage operational complexity and fully harness the potential of edge computing and AI.