Dell NativeEdge Speeds Edge Deployments with FIDO Device Onboard (FDO)

Tue, 26 Sep 2023 19:15:00 -0000


Edge computing is generally defined as “a distributed computing paradigm that brings computation and data storage closer to the sources of data.”1 The goal of this approach is to improve response times and save bandwidth.

Beyond this definition, edge computing is critical for enterprises to drive innovation and business outcomes. Existing approaches to the edge have led to technology silos, unscalable operations, poor infrastructure utilization, and inflexible legacy ecosystems. The massive proliferation of diverse edge devices has also increased exposure to cyberattacks. Dell has addressed these challenges with the new NativeEdge solution, a key feature of which is the ability to deploy edge devices swiftly and securely. At the root of this capability is FIDO Device Onboard (FDO), an open standard defined by technology leaders within the FIDO Alliance to automatically and securely onboard devices within edge deployments as diverse as retail, manufacturing, and energy. The FDO implementation used by Dell is based on the open-source implementation that has been contributed to the Linux Foundation Edge project by Intel.

The integration of FIDO Device Onboard (FDO) with the Dell NativeEdge solution helps organizations deploy and manage infrastructure at the edge by using zero-trust principles and a streamlined supply chain to secure the edge environment at scale. “Intel developed and contributed the base technology that became FDO. Our work with Dell and the FIDO Alliance is a great example of the power of collaboration to address the continuously evolving threat landscape faced by our edge customers,” said Sunita Shenoy, Senior Director, Edge Technology Product Management at Intel.

“Edge computing is transforming industries and we are delighted that FDO is a key component in Dell's innovative NativeEdge platform,” said Andrew Shikiar, executive director and CMO of the FIDO Alliance. See the press release here: FIDO Device Onboard (FDO) Certification Program is Launched to Enable Faster, More Secure, Deployments of Edge Nodes and IoT Devices

In this blog, we will look at the edge challenges and three key elements that seek to address them: first, Dell’s NativeEdge solution (described here); second, the FIDO Device Onboard (FDO) standard; and last, the Linux Foundation Edge open-source software implementation of FDO (described here).

Business Challenges at the Edge

Recent years have seen a significant shift towards the edge, as more companies deploy devices that generate data and drive demand for analytics close to where that data is produced. By deploying devices to the edge, companies can reduce latency, improve the speed of data processing, and enhance security. Deploying devices at the edge can also help reduce bandwidth consumption and minimize the costs that are associated with transmitting large amounts of data to the cloud. The deployment of devices at the edge has therefore become a crucial component of modern technology infrastructure, enabling businesses to improve their operational efficiency and deliver better customer experiences.

The Dell NativeEdge Solution

The NativeEdge operations software platform enables organizations to securely deploy and manage infrastructure at the edge. NativeEdge supports a wide range of NativeEdge Endpoints. It uses zero-trust principles, combined with a holistic factory integration approach and application orchestration, to create a secure edge environment. It can start small with a single device and scale out as needed, and it can be deployed centrally or globally, regardless of network connectivity challenges, absence of technical staff, or facility environment.

Driving Improved Return on Investment at the Edge

In an internal Dell analysis2 consisting of return-on-investment modeling together with nearly a hundred Dell customer interviews, and a third-party environmental consultant review for methodology validation, Dell examined the potential economic impact of running NativeEdge across 25 facilities of a composite manufacturing company.

The study found that after three years, the company could expect to see the following benefits:

- Up to 132-percent return on investment for Dell NativeEdge platform costs

- An average time saving of 20 minutes per month for every edge infrastructure asset managed with NativeEdge

- Savings on transportation costs by decreasing the need for site-support dispatches, helping to reduce travel time, and eliminating up to 14 metric tons of carbon dioxide emissions
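To put the first two figures in perspective, the arithmetic can be sketched as follows. This is only an illustration: the analysis does not state which ROI formula it used, so the conventional definition ROI = (benefit - cost) / cost is assumed here, and the platform cost is a hypothetical placeholder rather than a number from the study.

```python
# Illustrative arithmetic only. The ROI formula is an assumption, and the
# cost figure used in the demo is a hypothetical placeholder.

MINUTES_SAVED_PER_ASSET_PER_MONTH = 20  # figure quoted in the analysis
STUDY_MONTHS = 36                       # three years
ROI_PERCENT = 132                       # upper bound quoted in the analysis


def hours_saved_per_asset(minutes_per_month: int, months: int) -> float:
    """Total time saved for one managed asset over the study period, in hours."""
    return minutes_per_month * months / 60


def total_benefit_for_roi(cost: float, roi_percent: float) -> float:
    """Total benefit implied by ROI = (benefit - cost) / cost (assumed formula)."""
    return cost * (1 + roi_percent / 100)


if __name__ == "__main__":
    hours = hours_saved_per_asset(MINUTES_SAVED_PER_ASSET_PER_MONTH, STUDY_MONTHS)
    print(f"Hours saved per asset over three years: {hours}")

    hypothetical_cost = 100_000.0  # placeholder, not from the study
    benefit = total_benefit_for_roi(hypothetical_cost, ROI_PERCENT)
    print(f"Implied total benefit for that cost: {benefit:,.0f}")
```

Under these assumptions, 20 minutes per month adds up to 12 hours per managed asset over three years, and a 132-percent ROI means the total benefit is 2.32 times the platform cost.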

Key Elements of Dell NativeEdge

As these figures show, NativeEdge is designed to address the major aspects of managing an edge system. The first two of these aspects are closely linked: zero-touch provisioning (also known as onboarding) and zero-trust security, a key tenet of which is “Never trust, always verify.”

Automating the Onboarding Process with FIDO Device Onboard (FDO)

Traditionally, the installation of edge devices has been a cumbersome and time-consuming process. Edge installers, who could be individuals such as retail store managers or factory plant managers, may lack the expertise to manage complex edge devices and operating system installations. This highlights the importance of ensuring that edge devices are user-friendly and straightforward to deploy, as mistakes in manual onboarding can lead to security issues as well as service outages.

With NativeEdge, anyone can easily set up a NativeEdge Endpoint by simply plugging in a network cable, powering on the device, and stepping away. By leveraging the FIDO Alliance’s open standard known as FIDO Device Onboard Specification 1.1, Dell assures a streamlined installation process that is as easy as possible. The FIDO Alliance is a standards organization with over 250 members that was formed in 2012 with the goal of “simpler, stronger authentication.”

Technology leaders within the FIDO Alliance (including Intel, Amazon, Google, Qualcomm, and Arm) created FDO. It is an open specification that defines an approach which combines 'plug and play'-like simplicity with the highest levels of security. It fully aligns with the zero-trust security framework in that neither the edge device nor the platform onto which it is being onboarded is trusted before onboarding takes place. FDO extends zero trust from the installation point back to the manufacturer.

How FIDO Device Onboard (FDO) Works

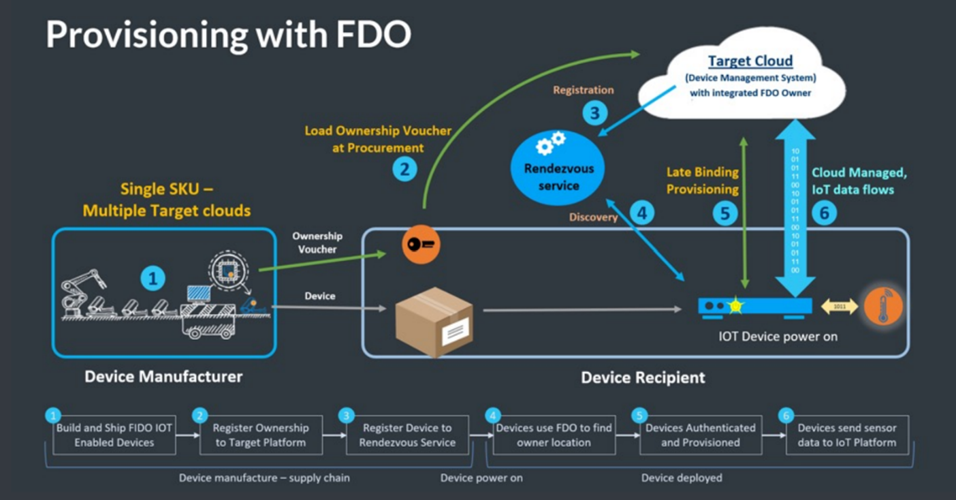

The following steps are aligned with the numbers in the figure:

- At the manufacturing stage of the device (or later if preferred), the FDO software client is installed on the device. A trusted key (sometimes called an IDevID or LDevID) is also created inside the device to uniquely identify it. This key may be built into the silicon processor (or an associated Trusted Platform Module, known as a TPM) or protected within the file system. Other FDO credentials are also placed in the device. A digital proof of ownership, known as the Ownership Voucher (represented as the orange/black key shape in the figure), is created outside the device. This self-protected digital document can be transmitted as a text file. The Ownership Voucher allows the owner of the device to identify themselves during the onboarding process.

- The device passes its way through the supply chain (for example, from distributor to VAR). The Ownership Voucher file follows a parallel path.

- Once the target cloud or platform is selected by the device owner, the Ownership Voucher is sent to that cloud/platform. In turn, the Ownership Voucher is registered with the Rendezvous Server (RV). The RV acts in a comparable way to a Domain Name System (DNS) service.

- When the time for device onboarding comes, the device is connected to the network and powered on. After the device boots up, it uses the Rendezvous Server (RV) to find its target cloud/platform. On-premise and cloud-based RVs can be programmed into the device.

- Based on the information provided by the RV, the device contacts the cloud/platform. The device uses its trusted key to uniquely identify itself to the cloud/platform, and in return the cloud/platform identifies itself as the device owner using the Ownership Voucher. Next, the device and owner perform a cryptographic trick called a key exchange to create a secured, encrypted tunnel between them.

- The cloud/platform can now download credentials and software agents over this encrypted tunnel (or whatever else is needed for correct device operation and management). FDO allows any kind of credential to be downloaded, so that solution owners do not have to change their existing solution when they adopt FDO.

Finally, having finished the FDO process, the device contacts its management platform, which is the platform that manages it for the rest of its lifecycle. FDO then lies dormant, although it can be re-awakened if needed, such as if the device is sold or repurposed.
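The key exchange mentioned in the steps above can be illustrated with a toy Diffie-Hellman exchange. This is a minimal sketch for intuition only, not the FDO implementation: the parameters are deliberately small and insecure, the function names are hypothetical, and the mutual identification that precedes the exchange (trusted device key and Ownership Voucher) is left out.

```python
import hashlib
import secrets
from typing import Tuple

# Toy parameters for illustration only: far too small for real-world use.
PRIME = 2**127 - 1  # a Mersenne prime
GENERATOR = 3


def make_keypair() -> Tuple[int, int]:
    """Return (private, public) values for one side of the exchange."""
    private = secrets.randbelow(PRIME - 3) + 2  # value in [2, PRIME - 2]
    public = pow(GENERATOR, private, PRIME)
    return private, public


def derive_session_key(own_private: int, peer_public: int) -> bytes:
    """Combine our private value with the peer's public value into a 32-byte key."""
    shared = pow(peer_public, own_private, PRIME)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


if __name__ == "__main__":
    # Each side generates its own pair and sends only the public half.
    device_private, device_public = make_keypair()
    owner_private, owner_public = make_keypair()

    device_key = derive_session_key(device_private, owner_public)
    owner_key = derive_session_key(owner_private, device_public)

    # Both sides arrive at the same key without ever transmitting it.
    print(device_key == owner_key)
```

The point of the sketch is that the device and the owner each compute the same session key from values exchanged in the open, which is what allows an encrypted tunnel to be set up over an untrusted network.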

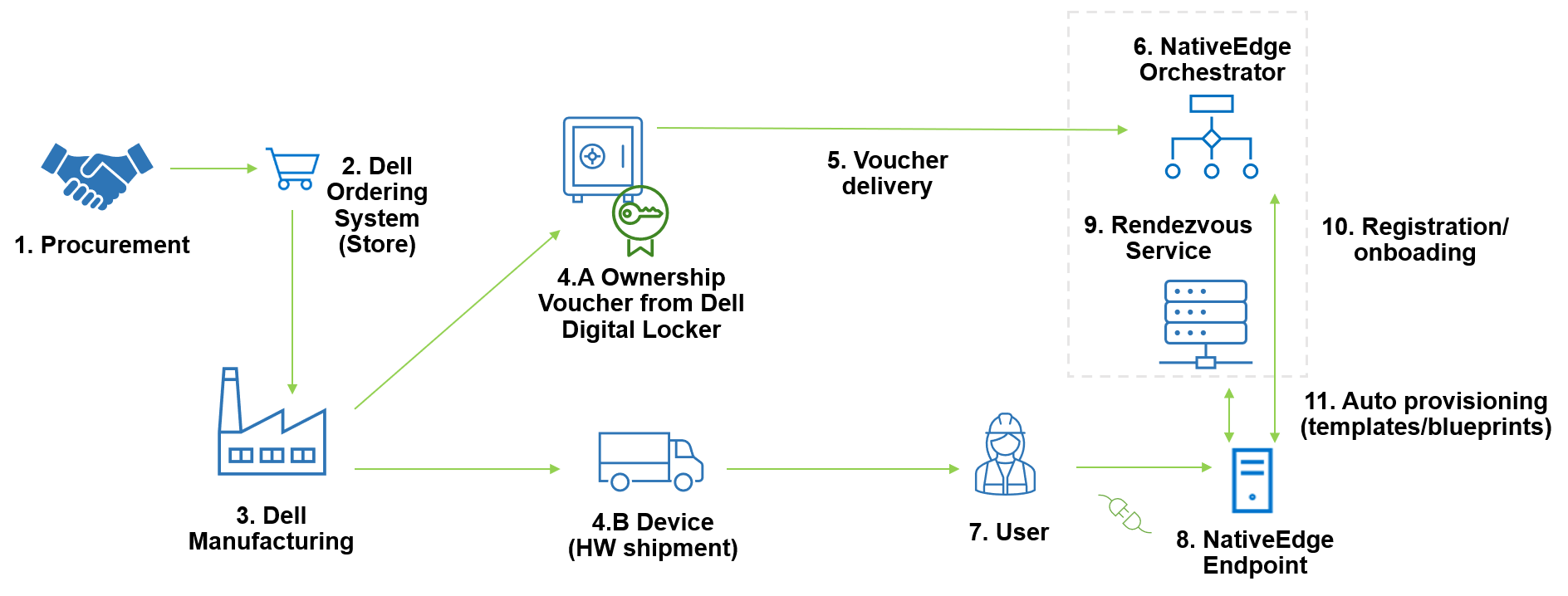

Dell NativeEdge FDO End-to-End Integration

Dell has integrated FDO into many elements of its NativeEdge solution, from its secure manufacturing facilities to the Dell Digital Locker used to store Ownership Vouchers to the NativeEdge Orchestrator. A full and detailed description of how FDO has been dovetailed into NativeEdge is available here.

The following diagram shows the FDO process applied within the NativeEdge environment.

The numbered steps in the diagram are explained in detail below:

- In the procurement process, the user selects the device configuration and places an order in the Dell store.

- The Dell store receives the order and sends information to the Dell manufacturing facility.

- The Dell manufacturing facility builds the device and creates the Ownership Voucher.

- The following sub-steps occur simultaneously:

  - The Dell manufacturing facility transfers the Ownership Voucher to the end user. This credential is passed through the supply chain, allowing the device owner to verify the device, and also giving the device a mechanism to verify its owner.

  - The Dell manufacturing facility ships the NativeEdge Endpoint device to the user.

- The Ownership Voucher is delivered to the Edge Orchestrator that will control the device.

- The Edge Orchestrator now holds the device Ownership Voucher.

- Staff without IT skills unbox the device, cable the device to the network, and power it on.

- Once connected to the network, the device contacts the Rendezvous Service configured in the device.

- The Rendezvous Service provides information to the device about which orchestrator it belongs to. The Rendezvous Server (which may be part of the NativeEdge Orchestrator or a separate system) is a service that acts as a rendezvous point between a newly powered-on device and the owner onboarding service.

- Once the device connects to the NativeEdge Orchestrator that holds its Ownership Voucher, it starts the Secure Component Verification (SCV) process, and if it passes, it starts the registration and onboarding. This secure onboarding process includes device and ownership identification as well as component validation. SCV is part of Dell Supply Chain Security (described here).

- Once the onboarding is finished, the device is automatically provisioned with the deployment of pre-defined templates and blueprints that have been assigned to the device.
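The last few steps (rendezvous lookup, voucher check, component verification, and provisioning) can be sketched as a small simulation. Everything here is hypothetical and heavily simplified: the class names, the `power_on` helper, and the identifiers are invented for illustration, the Ownership Voucher is reduced to a device ID on file, and the component comparison is only a stand-in for Secure Component Verification rather than a description of how NativeEdge implements it.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


class RendezvousService:
    """Maps a device ID to the address of the orchestrator that owns it."""

    def __init__(self) -> None:
        self._owners: Dict[str, str] = {}

    def register(self, device_id: str, orchestrator_address: str) -> None:
        self._owners[device_id] = orchestrator_address

    def lookup(self, device_id: str) -> Optional[str]:
        return self._owners.get(device_id)


@dataclass
class Orchestrator:
    address: str
    vouchers: Set[str] = field(default_factory=set)                 # devices with a voucher on file
    manifests: Dict[str, List[str]] = field(default_factory=dict)   # components recorded at the factory
    blueprints: Dict[str, List[str]] = field(default_factory=dict)  # templates assigned per device

    def onboard(self, device_id: str, reported_components: List[str]) -> List[str]:
        if device_id not in self.vouchers:
            raise PermissionError("no Ownership Voucher on file for this device")
        # Stand-in for component verification: the hardware the device reports
        # must match what was recorded at manufacturing time.
        if sorted(reported_components) != sorted(self.manifests.get(device_id, [])):
            raise ValueError("component verification failed")
        # Onboarding succeeded: return the blueprints to deploy.
        return self.blueprints.get(device_id, [])


def power_on(device_id: str, components: List[str], rendezvous: RendezvousService,
             orchestrators: Dict[str, Orchestrator]) -> List[str]:
    """Simulate a device booting, finding its orchestrator, and onboarding."""
    address = rendezvous.lookup(device_id)
    if address is None:
        raise LookupError("device is not registered with the Rendezvous Service")
    return orchestrators[address].onboard(device_id, components)


if __name__ == "__main__":
    orchestrator = Orchestrator(address="orchestrator.example")
    orchestrator.vouchers.add("device-001")
    orchestrator.manifests["device-001"] = ["cpu-a", "nic-b"]
    orchestrator.blueprints["device-001"] = ["base-os", "retail-app"]

    rendezvous = RendezvousService()
    rendezvous.register("device-001", orchestrator.address)

    print(power_on("device-001", ["nic-b", "cpu-a"], rendezvous,
                   {orchestrator.address: orchestrator}))
```

In this sketch a device with a swapped component, or one whose voucher never reached the orchestrator, is rejected before any blueprint is deployed, which mirrors the order of checks described in the steps.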

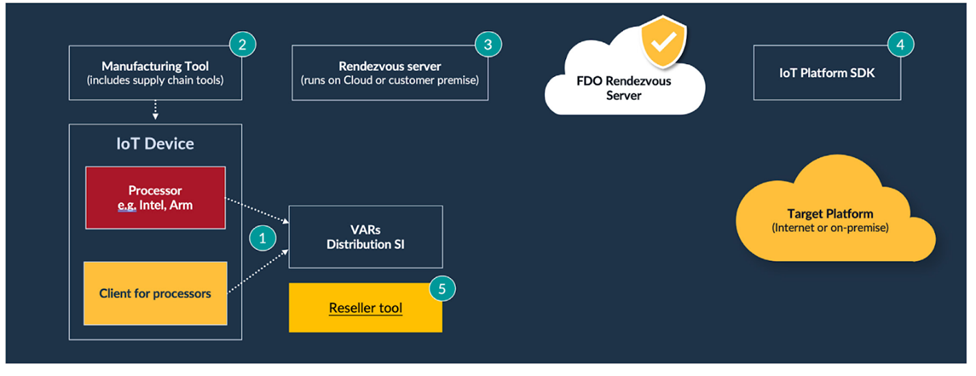

Implementing FDO with the Linux Foundation Edge Open-Source Implementation

Software implementations of FDO consist of several functional elements, which are highlighted in the following generic FDO tool diagram.

The numbered steps in the diagram are described in further detail as follows:

- The FDO client is placed on the device.

- The Manufacturing Tool installs the device credentials and creates the Ownership Voucher.

- The Rendezvous Server can be run in the cloud or on-premise.

- The FDO Platform Software Development Kit (SDK) is integrated into the target cloud or on-premise platform.

- A Reseller tool can be used by the supply chain ecosystem to extend the Ownership Voucher’s cryptographic key.

- Additionally, tools provide initial network access for the device (not shown).
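To give a feel for what extending an Ownership Voucher through the supply chain involves, the following sketch models the voucher as a hash-linked chain of entries, one per handover. It is a structural illustration under stated assumptions, not the Linux Foundation Edge code: all names are hypothetical, the owner names stand in for public keys, and the digital signatures that a real voucher relies on are omitted because the Python standard library has no asymmetric signing.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class VoucherEntry:
    previous_hash: str  # hash of the preceding entry, or of the header for the first entry
    next_owner: str     # stand-in for the next owner's public key


def header_hash(device_id: str, manufacturer: str) -> str:
    """Hash of the voucher header created at manufacturing time."""
    return hashlib.sha256(json.dumps([device_id, manufacturer]).encode()).hexdigest()


def entry_hash(entry: VoucherEntry) -> str:
    payload = json.dumps([entry.previous_hash, entry.next_owner]).encode()
    return hashlib.sha256(payload).hexdigest()


def extend(header: str, chain: List[VoucherEntry], next_owner: str) -> List[VoucherEntry]:
    """Return a new chain with one more entry handing the voucher to next_owner."""
    previous = entry_hash(chain[-1]) if chain else header
    return chain + [VoucherEntry(previous, next_owner)]


def verify(header: str, chain: List[VoucherEntry]) -> bool:
    """Check that every entry links back, unbroken, to the header."""
    expected = header
    for entry in chain:
        if entry.previous_hash != expected:
            return False
        expected = entry_hash(entry)
    return True


if __name__ == "__main__":
    header = header_hash("device-001", "manufacturer")
    chain: List[VoucherEntry] = []
    for owner in ["distributor", "var", "customer"]:
        chain = extend(header, chain, owner)
    print(verify(header, chain))

    # Rewriting an earlier handover breaks the links that follow it.
    tampered = [VoucherEntry(header, "attacker")] + chain[1:]
    print(verify(header, tampered))
```

Because each entry commits to the one before it, a party later in the chain can detect whether an earlier handover was altered. In a real voucher, signatures additionally prove that each handover was authorized by the previous holder.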

Companies have a range of options when implementing the FDO software. They can develop the software themselves directly from the specification, use one of the commercially available implementations of FDO (for example, Red Hat), or they can use the Linux Foundation Edge implementation (described here).

The FDO software within the Linux Foundation Edge has been developed and contributed by Intel, one of the authors of the FDO specification. The code is a mixture of C and Java (depending on which part of the FDO system is being implemented). It offers client software for both Intel and other processors including Arm.

NativeEdge - Delivering on the Edge Promise

With NativeEdge, Dell set a simple but critical goal: allow customers to deploy edge solutions quickly and securely and then manage them effectively throughout their lifetime. As with all simple goals, the challenge is in developing a solution that fully delivers on the promise. With NativeEdge, Dell has taken full advantage of FIDO Device Onboard (FDO) together with the Linux Foundation Edge FDO project code to build on top of an industry onboarding technology that fully supports Dell’s mission to simplify deployment and management at the edge while delivering the highest levels of security. NativeEdge is now available for customers to deploy at scale.

1 https://en.wikipedia.org/wiki/Edge_computing

2 Based on internal analysis, May 2023. The internal analysis consisted of internal modeling, customer interviews, and third-party environmental consultant review for methodology validation.