Dell ECS Data Protection and Data Path Overview Part I

Fri, 23 Aug 2024 05:17:47 -0000

|Read Time: 0 minutes

Dell ECS is an industry-leading object storage platform designed to provide enterprise-grade scalability, performance, and reliability. ECS is built to support both modern cloud-native applications and traditional workloads, offering versatile data storage solutions. It enables organizations to efficiently manage and store vast amounts of unstructured data, ensuring easy access and retrieval across diverse applications. Its compatibility with S3, Atmos, CAS, Swift, NFS protocols allows for straightforward adoption and migration, making Dell ECS an ideal choice for enterprises seeking a flexible and scalable object storage solution.

This blog will introduce the data path of ECS. Please refer to ECS architecture and overview paper if you want to know more about ECS.

Before we introduce the data path, let us first talk about chunk, metadata. If you are already familiar with these concepts, please refer to the Dell ECS Data protection and data path overview II, which will introduce data protection and dataflow.

Chunk

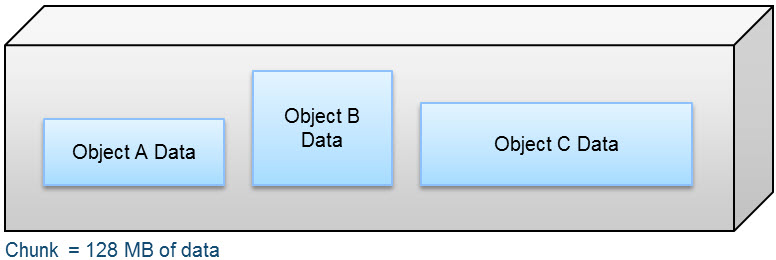

A chunk is a logical container that ECS uses to store all types of data, including object data, custom client provided metadata, and system metadata. Chunks contain 128 MB of data consisting of one or more objects, as shown in the following figure.

Metadata

Metadata is defined as the data providing information about one or more aspects of the data. It includes system metadata like owner, creation date, size and custom metadata. An example of customer metadata could be client=Dell, event=DellWorld and ID=123.

Data management

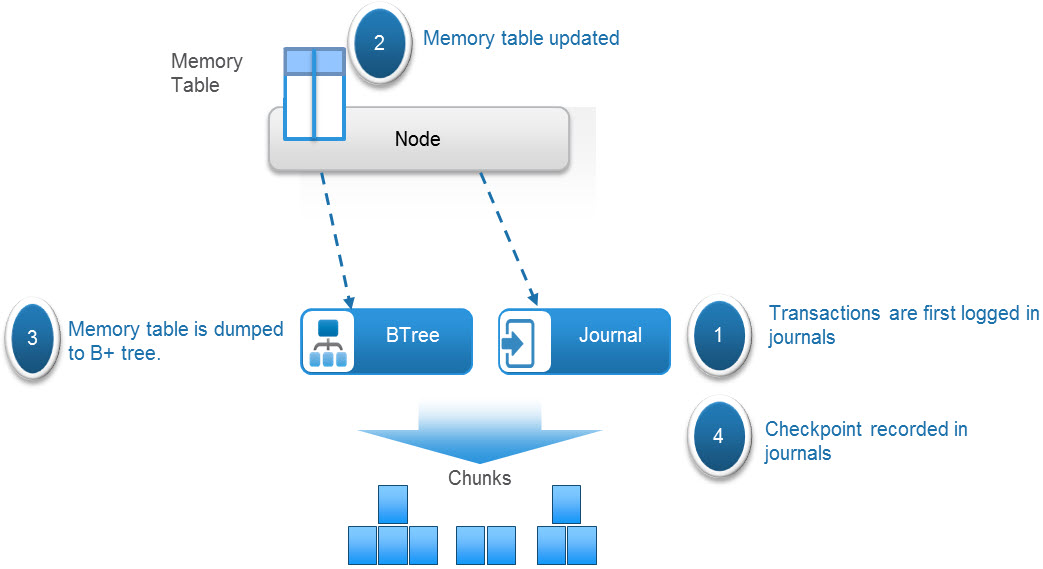

ECS maintains the metadata that tracks data locations and transaction history. This metadata is maintained in logical tables and journals.

The tables hold key-value pairs to store information relating to the objects. A hash function is used to locate which table partition the key is stored. These key-value pairs are stored in a B+ tree for fast indexing of data locations. In addition, to further enhance query performance of these logical tables, ECS implements a two-level log-structured merge (LSM) tree. Thus, there are two tree-like structures where a smaller tree is in memory (memory table) and the main B+ tree resides on disk. A lookup of key-value pairs first queries the memory table and if the value is not memory, it looks in the main B+ tree on disk.

Transaction history is recorded in journal logs, which are written to disks. The journals track the index transactions not yet committed to the B+ tree. After the transaction is logged into a journal, the memory table is updated. After the table in memory becomes full or after a set period of time, the table is merged, sorted, or dumped to B+ tree on disk. A checkpoint is then recorded in the journal.

ECS uses several different tables, and each table can get quite large. To optimize performance of table lookups, each table is divided into partitions that are distributed across the nodes. The node where the partition is written and then becomes the owner or authority of that partition or section of the table.

One such table is a chunk table, which tracks the physical location of chunk fragments and replica copies on disk. The following table shows a sample of a partition of the chunk table. Each chunk identifies its physical location by listing the disk within the node, the file within the disk, the offset within that file, and the length of the data. Chunk ID C1 is erasure coded, and chunk ID C2 is triple mirrored as shown in table 1.

Table 1: Sample chunk table partition

Chunk ID | Chunk location |

C1 | Node1:Disk1:File1:offset:length Node2:Disk1:File1:offset:length Node3:Disk1:File1:offset:length Node4:Disk1:File1:offset:length Node5:Disk1:File1:offset:length Node6:Disk1:File1:offset:length Node7:Disk1:File1:offset:length Node8:Disk1:File1:offset:length Node1:Disk2:File1:offset:length Node2:Disk2:File1:offset:length Node3:Disk2:File1:offset:length Node4:Disk2:File1:offset:length Node5:Disk2:File1:offset:length Node6:Disk2:File1:offset:length Node7:Disk2:File1:offset:length Node8:Disk2:File1:offset:length |

C2 | Node1:Disk3:File1:offset:length Node2:Disk3:File1:offset:length Node3:Disk3:File1:offset:length |

Another example is an object table, which is used for object name to chunk mapping. The following table shows an example of a partition of an object table that lists the chunk or chunks and shows where an object resides in the chunk or chunks.

Table 2: Sample object table

Object name | Chunk ID |

ImgA | C1:offset:length |

FileA | C4:offset:length C6:offset:length |

A distributed KV (Key-Value) store, which runs on part of the nodes, maintains the mapping of table partition owners. The following table shows an example of a portion of an KV mapping table.

Table 3: Sample mapping table

Table ID | Table partition owner |

Table 0 P1 | Node 1 |

Table 0 P2 | Node 2 |

Due to the words limitation in the blog, I will introduce the ECS data protection and workflow in the Dell ECS Data Protection and Data Path Overview Part II session.

Resources

The following Dell Technologies documentation provides additional information related to this blog. Access to these documents depends on an individual’s login credentials. For access to a document, contact a Dell Technologies representative.