
Zero-Touch Provisioning deployment


You can use Zero-Touch Provisioning (ZTP) to deploy OpenShift Container Platform in a hub-spoke architecture, in which a single hub cluster manages multiple spoke clusters in a disconnected environment. For more information, see the ZTP documentation.

Prerequisites

- An operational OpenShift 4.14 cluster that can be used as a hub cluster.

- Red Hat Advanced Cluster Management (ACM) Operator 2.9.1 installed on the hub cluster along with an instance of the MultiClusterHub resource.

Note: The hypershift add-on agent must contain runAsNonRoot: false.

- Cluster-admin access to ACM.

- Network connectivity between the hub cluster and the spoke cluster.

- Persistent volume on the hub cluster.

Add RHCOS images to the CSAH node

CSAH nodes host the RHCOS ISO and RootFS images that are used to provision the distributed unit bare-metal hosts.

- Export the required image names and OpenShift version as environment variables by running the following commands:

[core@csah ~]$ export ISO_IMAGE_NAME=rhcos-4.14.15-x86_64-live.x86_64.iso

[core@csah ~]$ export ROOTFS_IMAGE_NAME=rhcos-4.14.15-x86_64-live-rootfs.x86_64.img

[core@csah ~]$ export OCP_VERSION=4.14.15

- Download the images into the web server directory on the CSAH node by running the following commands:

[core@csah ~]$ sudo wget https://mirror.openshift.com/pub/openshift-v4/dependencies/rhcos/4.14/${OCP_VERSION}/${ISO_IMAGE_NAME} -O /var/www/html/${ISO_IMAGE_NAME}

[core@csah ~]$ sudo wget https://mirror.openshift.com/pub/openshift-v4/dependencies/rhcos/4.14/${OCP_VERSION}/${ROOTFS_IMAGE_NAME} -O /var/www/html/${ROOTFS_IMAGE_NAME}
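The download URLs above follow a fixed pattern, so they can be derived from the version alone. The following is a minimal sketch, assuming the mirror layout shown in the commands above stays the same for other 4.x releases; the function name rhcos_url is hypothetical and not part of any Red Hat tooling.

```shell
# Sketch: compose RHCOS mirror URLs from the OpenShift version.
# Assumption: the mirror path is <base>/<major.minor>/<full version>/<image>.
MIRROR_BASE="https://mirror.openshift.com/pub/openshift-v4/dependencies/rhcos"

# rhcos_url VERSION IMAGE_NAME
# Prints the download URL. The minor-release directory (for example 4.14)
# is derived by stripping the patch component from VERSION.
rhcos_url() {
  printf '%s/%s/%s/%s\n' "$MIRROR_BASE" "${1%.*}" "$1" "$2"
}

OCP_VERSION="${OCP_VERSION:-4.14.15}"
ISO_IMAGE_NAME="rhcos-${OCP_VERSION}-x86_64-live.x86_64.iso"
ROOTFS_IMAGE_NAME="rhcos-${OCP_VERSION}-x86_64-live-rootfs.x86_64.img"

# Print both URLs so they can be reviewed before downloading.
rhcos_url "$OCP_VERSION" "$ISO_IMAGE_NAME"
rhcos_url "$OCP_VERSION" "$ROOTFS_IMAGE_NAME"
```

Each printed URL can then be passed to the wget command shown above. Deriving the names from OCP_VERSION keeps the three variables from drifting apart when the version changes.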

Prepare the hub cluster

To prepare the hub cluster, you must:

- Enable the assisted installer service

- Configure the hub cluster for ZTP

Enable the assisted installer service

The assisted installer service deploys OpenShift Container Platform clusters.

1. Create an instance of the AgentServiceConfig CR by using this sample YAML file and updating the required parameters. Then run the following command:

[core@csah ~]$ oc apply -f <agentServiceConfig.yaml>

2. To enable the bare-metal operator to watch all namespaces, run the following command:

[core@csah ~]$ oc patch provisioning provisioning-configuration --type merge -p '{"spec":{"watchAllNamespaces": true}}'
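To illustrate what the AgentServiceConfig CR described above might contain, the following sketch renders a manifest that points the assisted installer at the RHCOS images hosted on the CSAH node. It is not the guide's sample file. The function name, the host name csah.example.com, and the storage sizes are assumptions. The version field normally holds the RHCOS build version; the OpenShift release is used here only as a placeholder. Check all field names against the CRD installed on your hub cluster.

```shell
# Sketch: render an AgentServiceConfig manifest to stdout.
# render_agent_service_config HTTP_BASE OCP_VERSION
#   HTTP_BASE   - URL of the web server that hosts the images (assumption)
#   OCP_VERSION - full release, for example 4.14.15
render_agent_service_config() {
  http_base="$1"
  version="$2"
  cat <<EOF
apiVersion: agent-install.openshift.io/v1beta1
kind: AgentServiceConfig
metadata:
  name: agent
spec:
  databaseStorage:
    accessModes:
      - ReadWriteOnce
    resources:
      requests:
        storage: 10Gi    # assumed size
  filesystemStorage:
    accessModes:
      - ReadWriteOnce
    resources:
      requests:
        storage: 100Gi   # assumed size
  osImages:
    - openshiftVersion: "${version%.*}"
      version: "${version}"   # placeholder; replace with the RHCOS build version
      cpuArchitecture: x86_64
      url: ${http_base}/rhcos-${version}-x86_64-live.x86_64.iso
      rootFSUrl: ${http_base}/rhcos-${version}-x86_64-live-rootfs.x86_64.img
EOF
}

# Example: write the manifest to a file for review before applying it.
render_agent_service_config "http://csah.example.com" "4.14.15" > agentServiceConfig.yaml
```

After reviewing the generated file, it can be applied with the oc apply command shown in the first step. The storage requests rely on the persistent volume listed in the prerequisites.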

Configure the hub cluster for ZTP

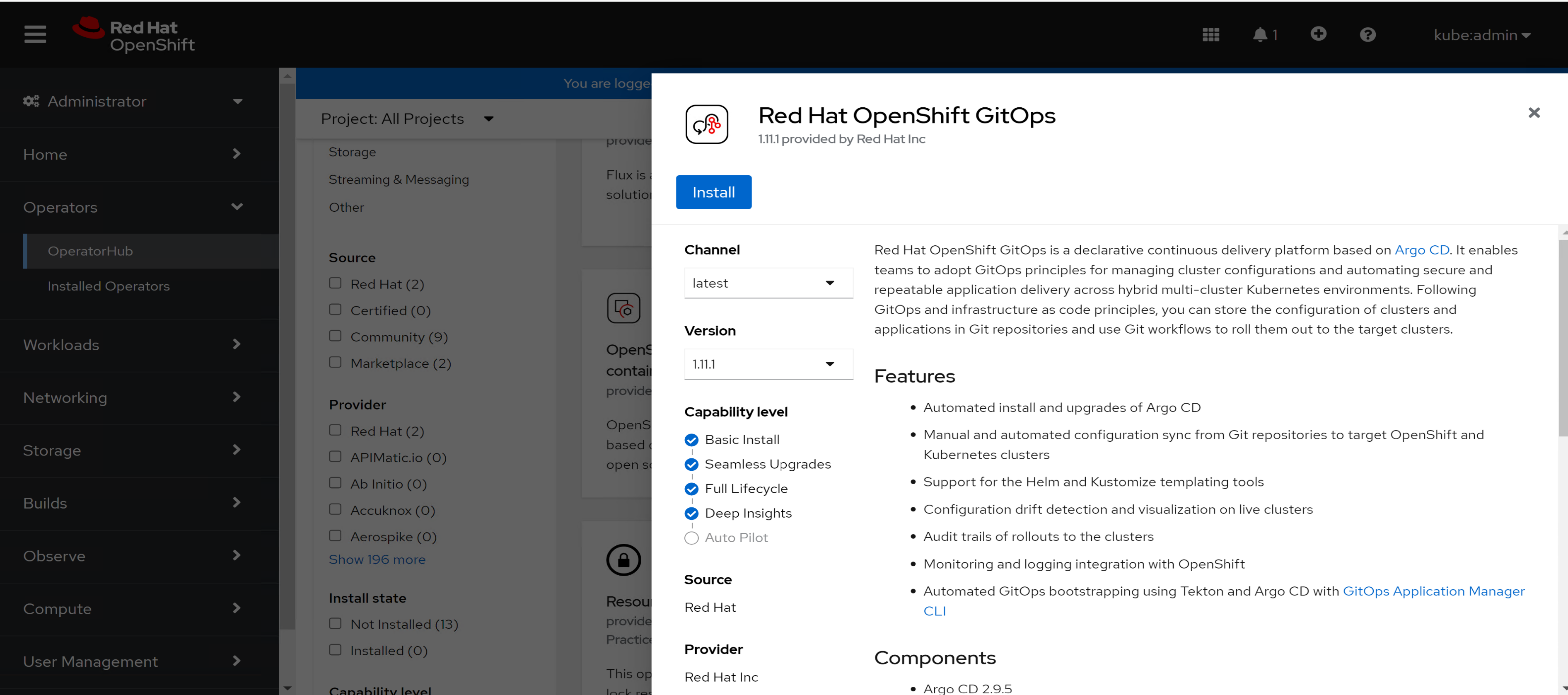

Start by installing the Red Hat OpenShift GitOps operator from the embedded operator hub. This installation automatically installs Argo CD. Then follow these steps:

1. Log in to the OpenShift web console and select Operators > Operator Hub.

2. Search for Red Hat OpenShift GitOps and select it.

The following page opens:

Figure 10. OpenShift GitOps operator installation

3. Click Install and install the operator under the default operator-provided namespace.

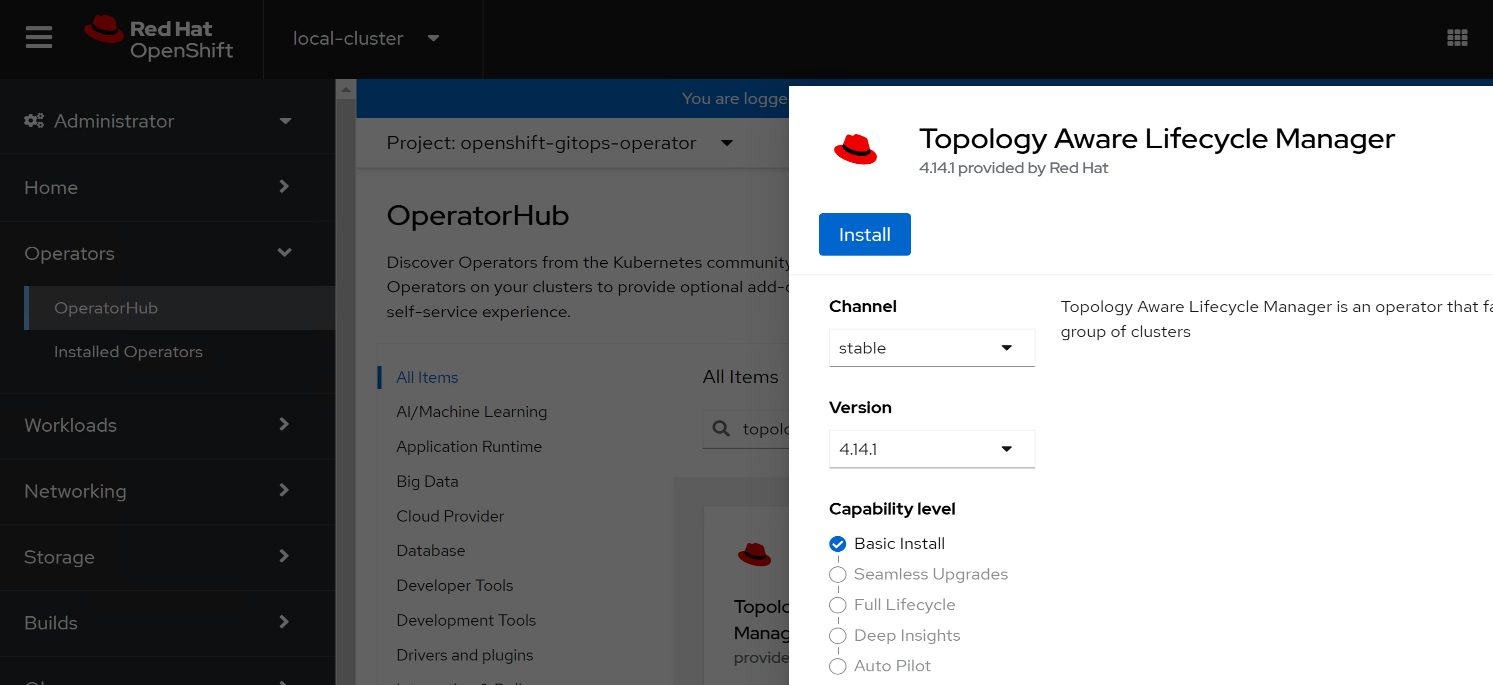

4. Install the Topology Aware Lifecycle Manager (TALM) operator from the embedded operator hub by performing the following steps:

- Log in to the OpenShift web console and select Operators > Operator Hub.

- Search for Topology Aware Lifecycle Manager and select it.

The Topology Aware Lifecycle Manager page opens:

Figure 11. Topology Aware Lifecycle Manager operator installation

- Click Install and install the operator under the default operator-provided namespace.

6. Prepare the ArgoCD pipeline configuration by performing the following steps:

- Create a Git repository:

i. Export the argocd directory from the ztp-site-generate container image by running the following commands:

[core@csah ~]$ mkdir gitops

[core@csah ~]$ cd gitops

[core@csah gitops]$ podman pull registry.redhat.io/openshift4/ztp-site-generate-rhel8:v4.14

Note: If the pull command fails, verify that you are logged in using the podman login registry.redhat.io command and Red Hat credentials.

[core@csah gitops]$ mkdir -p ./out

[core@csah gitops]$ podman run --log-driver=none --rm registry.redhat.io/openshift4/ztp-site-generate-rhel8:v4.14 extract /home/ztp --tar | tar x -C ./out

ii. Create a directory structure with separate paths for the SiteConfig and PolicyGenTemplate CRs in your GitHub repository following the structure in the out/argocd/example directory.

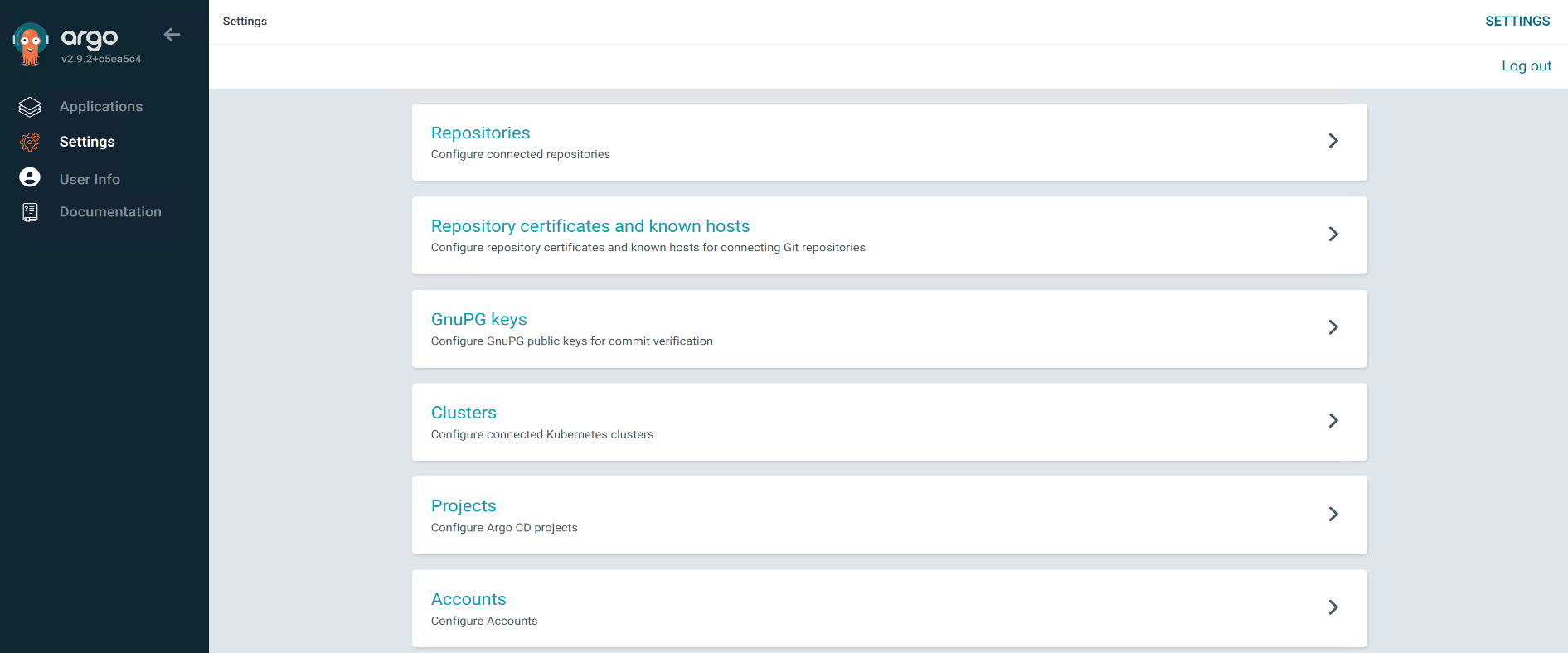

- To configure access to the repository using the ArgoCD UI:

i. In the OpenShift console, click the Bento menu icon in the upper right corner and select Cluster Argo CD. Log in using your OpenShift credentials.

Figure 12. Argo CD settings

ii. Under Settings, configure access to the repository:

- Repositories: Add the connection information and credentials. The URL must end in .git, for example, https://github.com/dell-esg/openshift-bare-metal.git

- Certificates: Add the public certificate for the repository, if required.

- Modify the ArgoCD application out/argocd/deployment/clusters-app.yaml based on your Git repository. See this sample file for guidance.

If required, update the out/argocd/deployment/policies-app.yaml file.
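The repository layout described in step 6 can be prepared with a short script. This is a minimal sketch, assuming the extracted example directory contains siteconfig and policygentemplates subdirectories. The function name init_ztp_repo and the paths in the usage comment are hypothetical.

```shell
# Sketch: seed a local Git clone with separate SiteConfig and
# PolicyGenTemplate paths, copied from the extracted example directory.
# init_ztp_repo EXTRACT_DIR REPO_DIR
#   EXTRACT_DIR - directory that holds the extracted argocd tree (./out above)
#   REPO_DIR    - local clone of your Git repository
init_ztp_repo() {
  src="$1/argocd/example"
  dest="$2"
  for dir in siteconfig policygentemplates; do
    # Always create the path so the Argo CD applications have a target.
    mkdir -p "$dest/$dir"
    # Copy the example CRs as a starting point when they are present.
    if [ -d "$src/$dir" ]; then
      cp -R "$src/$dir/." "$dest/$dir/"
    fi
  done
}

# Usage (paths are examples only):
#   init_ztp_repo ./out "$HOME/ztp-repo"
```

Keeping the two CR types in separate paths lets clusters-app.yaml and policies-app.yaml each point at their own directory in the repository.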

7. Update the ArgoCD instance in the hub cluster using the patch file that you previously extracted into the out/argocd/deployment/ directory. Run the following command:

[core@csah gitops]$ oc patch argocd openshift-gitops \

-n openshift-gitops --type=merge \

--patch-file out/argocd/deployment/argocd-openshift-gitops-patch.json

8. Apply the pipeline configuration by running the following command:

[core@csah gitops]$ oc apply -k out/argocd/deployment

Install a spoke cluster

ZTP starts the site deployment when you push the site CRs to your Git repository. Before you push them, prepare the hub cluster for the site deployment:

1. Create the required secrets for the site.

These resources must be in a namespace with a name that matches the cluster name.

2. Create the namespace for the cluster by running the following commands:

$ export CLUSTERNS=compact

$ oc create namespace $CLUSTERNS

3. Create a secret that contains the pull secret for the cluster by using this sample file. Run the following command:

$ oc create -f <file name>

4. Create a secret for the BMC credentials of the cluster nodes using this sample file. Run the following command:

$ oc create -f <file name>

Repeat this step for each cluster node so that every node has its own BMC credentials secret.
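Because the BMC secret is created once per node, a small loop can generate the manifests. This is a sketch only, not the guide's sample file. The function name, node names, secret names, and credentials are hypothetical, and the secret is assumed to use the standard username and password keys.

```shell
# Sketch: render one BMC credentials Secret per cluster node.
# render_bmc_secret NAME NAMESPACE USERNAME PASSWORD
render_bmc_secret() {
  # Secret data values must be base64 encoded; strip any line wrapping.
  user_b64="$(printf '%s' "$3" | base64 | tr -d '\n')"
  pass_b64="$(printf '%s' "$4" | base64 | tr -d '\n')"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $1
  namespace: $2
type: Opaque
data:
  username: ${user_b64}
  password: ${pass_b64}
EOF
}

# The namespace must match the cluster name.
CLUSTERNS="${CLUSTERNS:-compact}"

# Hypothetical node names and credentials; replace with your own values.
for node in node1 node2 node3; do
  render_bmc_secret "${node}-bmc-secret" "$CLUSTERNS" "root" "changeme"
  echo "---"
done
```

The output is a multi-document YAML stream that can be saved to a file and passed to oc create -f. The secret names must match the names that the SiteConfig CR references for each node.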

5. Create a SiteConfig CR for the cluster in your local clone of the Git repository. Use this sample file and update site-specific values such as the IP addresses, MAC addresses, and installation disk as required.

6. Add the SiteConfig CR YAML file name that you created in the previous step to the generators section of the kustomization.yaml file. The file name must be similar to the name in the sample file.

7. In the Git repository, commit the SiteConfig CR and associated kustomization.yaml files.

8. Push the changes to the Git repository.

The ArgoCD pipeline detects the changes and begins the site deployment.
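The kustomization.yaml update from step 6 amounts to listing each SiteConfig file under generators. The following sketch renders such a file. The function name and the file name compact.yaml are hypothetical; use the file name of your own SiteConfig CR.

```shell
# Sketch: render a kustomization.yaml that lists SiteConfig files
# under the generators section.
# render_kustomization FILE...
render_kustomization() {
  printf 'apiVersion: kustomize.config.k8s.io/v1beta1\n'
  printf 'kind: Kustomization\n'
  printf 'generators:\n'
  for f in "$@"; do
    printf '  - %s\n' "$f"
  done
}

# Example: one SiteConfig file for the "compact" cluster.
render_kustomization compact.yaml
```

Redirect the output to the kustomization.yaml file in the SiteConfig directory of your clone, then commit and push it together with the SiteConfig CR.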

9. The GitOps ZTP infrastructure generates a set of installation CRs on the hub cluster in response to a SiteConfig CR being pushed to the Git repository. To view the installation CRs, run the following command:

$ oc get AgentClusterInstall -n compact

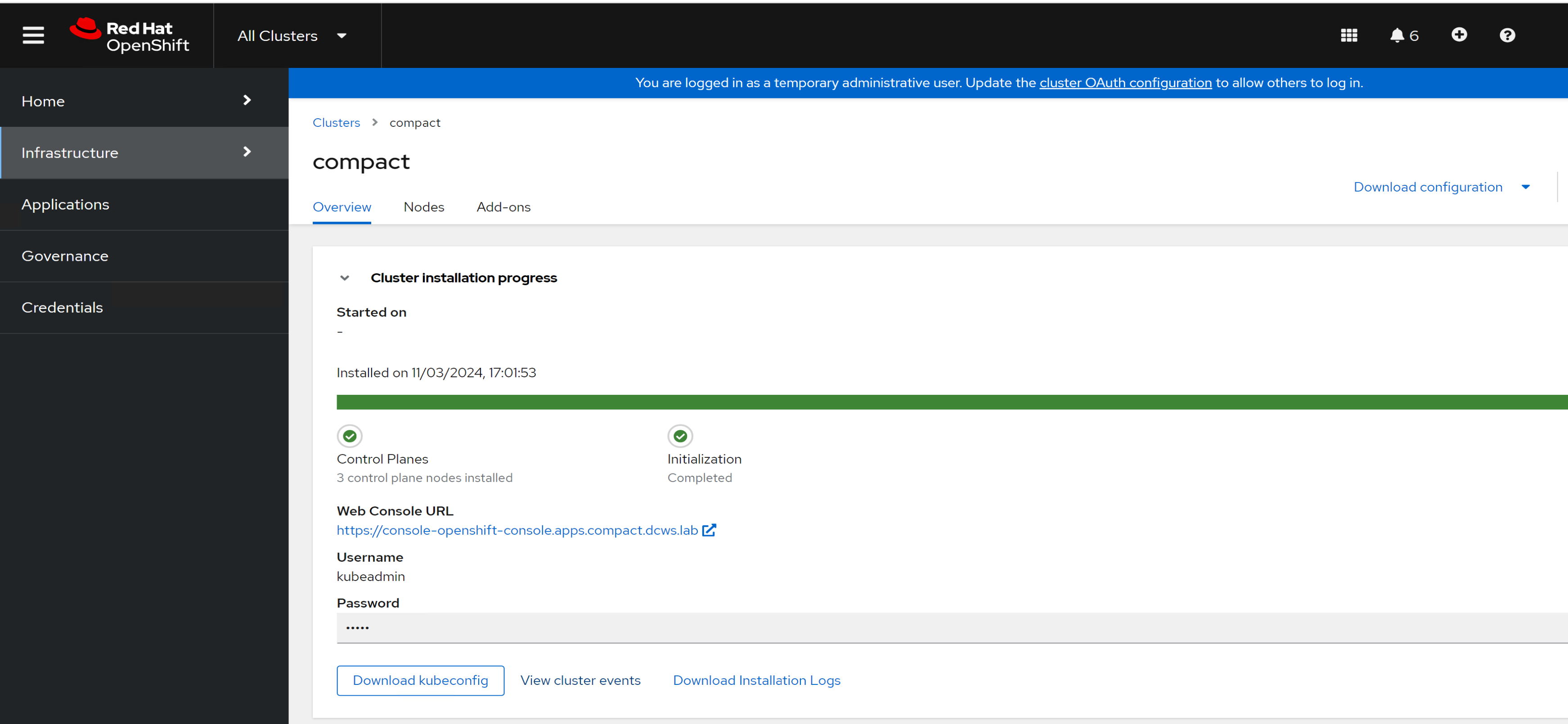

10. Verify the installation status from ACM, as shown in the following figure:

Figure 13. Installation status

11. Download the kubeconfig and kubeadmin credentials to access the cluster.