Hosted Control Plane

Overview

OpenShift clusters can be deployed with either a stand-alone or a hosted control plane. In the stand-alone setup, the control plane runs on dedicated physical or virtual machines. With hosted control planes, the control plane runs as pods on a hosting cluster, which removes the need for dedicated physical or virtual machines. For more information, see Hosted control planes overview.

Prerequisites

Ensure that:

- An OpenShift cluster is installed using the IPI or Assisted Installer method.

- CSI drivers are installed and a default StorageClass is configured on the cluster.

- DNS records are created for the API and ingress IP addresses of the hosted cluster.

Point the API records to a worker node of the hub cluster and the wildcard ingress record to a worker node of the spoke cluster, as follows:

api.<spoke cluster>.<hub cluster>.<domain> Worker node IP of Hub cluster

api-int.<spoke cluster>.<hub cluster>.<domain> Worker node IP of Hub cluster

*.apps.<spoke cluster>.<hub cluster>.<domain> Worker node IP of Spoke cluster
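The record layout above can be derived from the cluster names and two worker node IP addresses. The following is a minimal sketch, not part of the original procedure: the function name `dns_records` and all sample values are hypothetical, and the script only prints the records so that they can be entered into whatever DNS server is in use.

```shell
# Sketch: print the three DNS records that the hosted cluster needs.
# Arguments (all placeholders): spoke name, hub name, base domain,
# hub worker node IP, spoke worker node IP.
dns_records() {
  local suffix="$1.$2.$3"
  printf '%s %s\n' "api.${suffix}" "$4"
  printf '%s %s\n' "api-int.${suffix}" "$4"
  printf '%s %s\n' "*.apps.${suffix}" "$5"
}

# Example with made-up values (192.0.2.0/24 is a documentation range).
dns_records spoke1 hub1 example.com 192.0.2.10 192.0.2.20
```

The API and internal API names resolve to the hub worker node because the hosted control plane runs there, while application traffic goes to the spoke worker node.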

Configure the hub cluster for HyperShift

Perform the following steps:

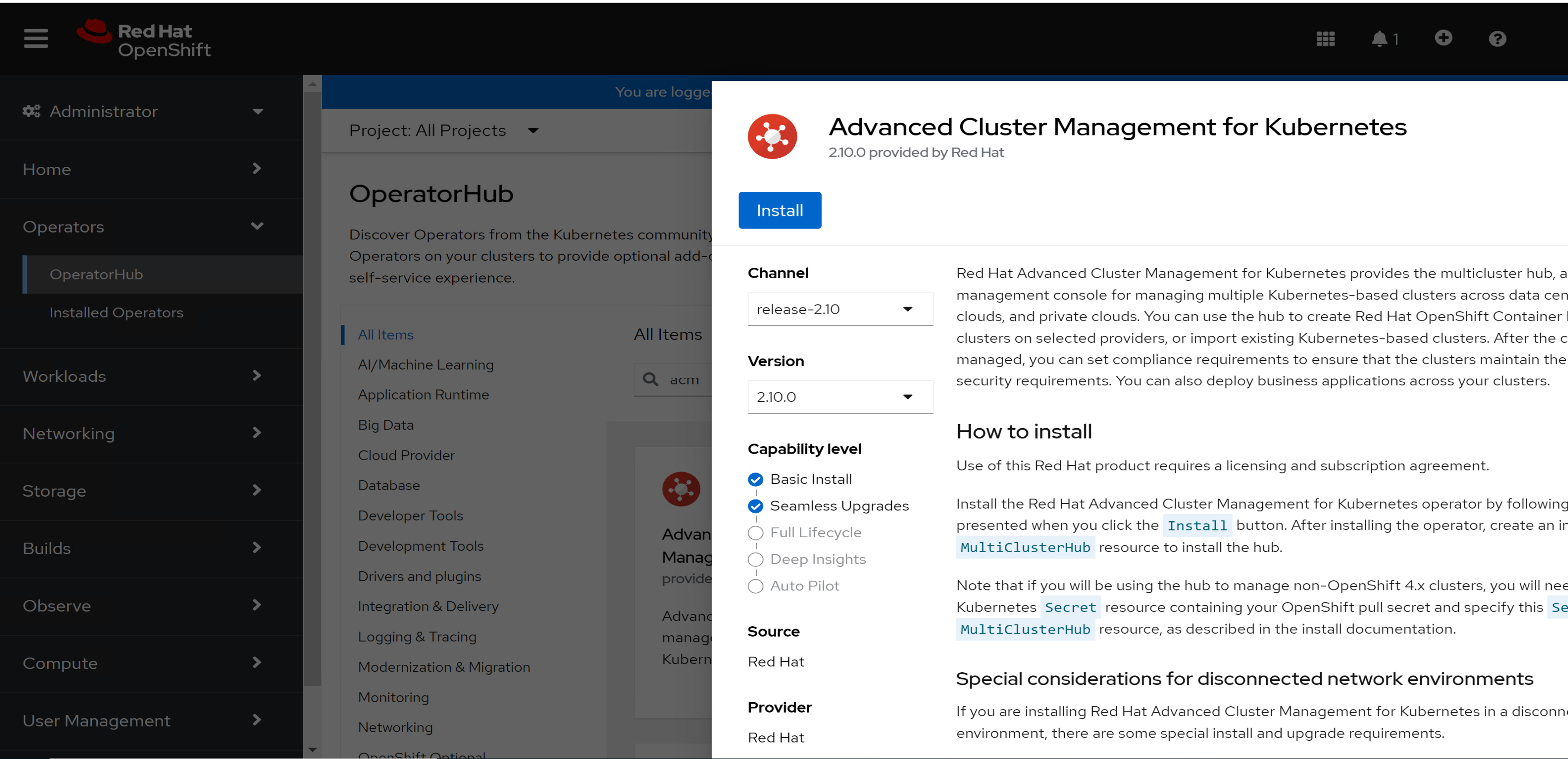

1. Install the Advanced Cluster Management for Kubernetes operator from OperatorHub:

- Log in to the OpenShift web console and select Operators > OperatorHub.

- Search for Advanced Cluster Management for Kubernetes and select it.

Figure 15. ACM operator Installation

2. Click Install and install the operator under the default operator-provided namespace.

3. Select Operators > Installed Operators and click Advanced Cluster Management for Kubernetes.

4. Click Create MultiClusterHub CR.

5. Verify that all pods are running:

oc get pods -n open-cluster-management

6. Verify that the MultiClusterEngine CR has the HyperShift component enabled by running the following command:

oc get mce multiclusterengine -o yaml

7. Verify that the HyperShift ManagedClusterAddOn is created in the local-cluster namespace by running the following command:

oc get managedclusteraddons.addon.open-cluster-management.io -n local-cluster
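The pod check above can be scripted instead of read by eye. The following is a minimal sketch under stated assumptions: the helper name `not_ready_pods` is hypothetical, and it relies on the default `oc get pods --no-headers` column layout, in which the third column is the pod status.

```shell
# Sketch: list pods in a namespace that are neither Running nor Completed.
# $1 is the namespace, for example open-cluster-management.
not_ready_pods() {
  oc get pods -n "$1" --no-headers |
    awk '$3 != "Running" && $3 != "Completed" { print $1 }'
}
```

Running `not_ready_pods open-cluster-management` prints nothing when every pod has settled; any names it prints are pods that still need attention.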

Enable the Central Infrastructure Management service

To enable the service:

1. Configure the Provisioning resource to watch all namespaces:

oc patch provisioning provisioning-configuration --type merge -p '{"spec":{"watchAllNamespaces": true }}'

2. Create the AgentServiceConfig resource from the sample file by running the following command:

oc create -f <file name>

3. Enable the desired OpenShift version by editing its ClusterImageSet and setting the visible label to "true", as shown in the following output:

oc edit clusterimageset img4.14.0-x86-64-appsub

apiVersion: hive.openshift.io/v1
kind: ClusterImageSet
metadata:
  labels:
    channel: fast
    visible: "true"
  name: img4.14.0-x86-64-appsub

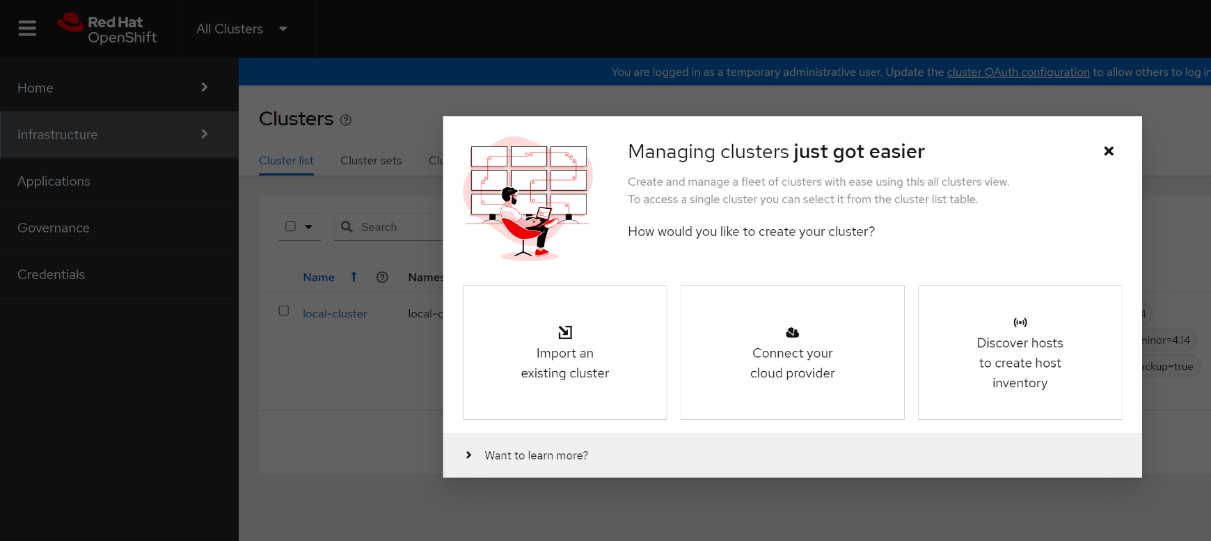

4. Log in to the OpenShift web console and select All clusters from the dropdown menu.

5. To build a new hosted control plane cluster on bare-metal servers, select Discover hosts to create host inventory.

Figure 16. ACM console

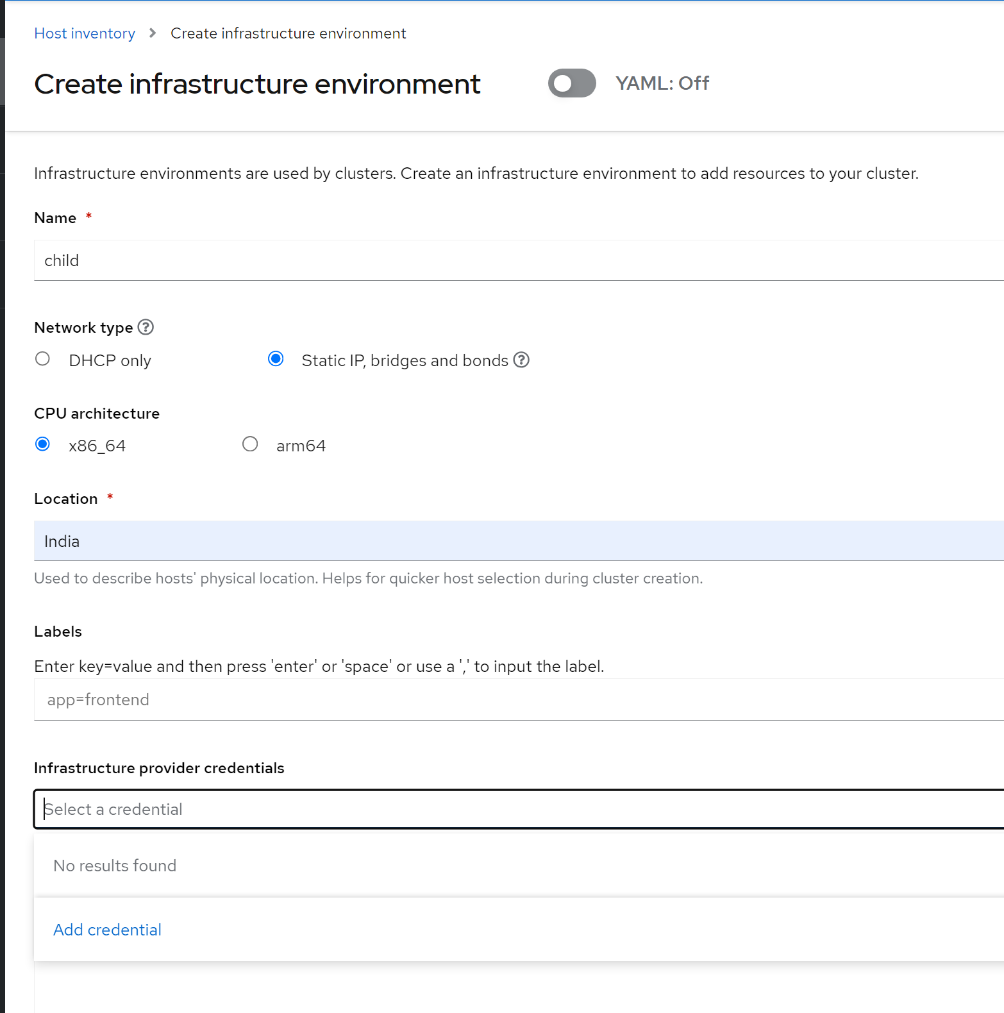

6. Click Create Infrastructure environment to launch the wizard.

Figure 16. Create infrastructure environment wizard

7. Provide the following information:

- The name of the infrastructure environment.

- The network type: Static IP, bridges and bonds.

- The CPU architecture: x86_64.

8. Click Add credential for Infrastructure provider credentials and provide the following information:

- A name for the credential secret and namespace.

- The base DNS domain in the format <hub cluster>.<base domain>

9. Click Next.

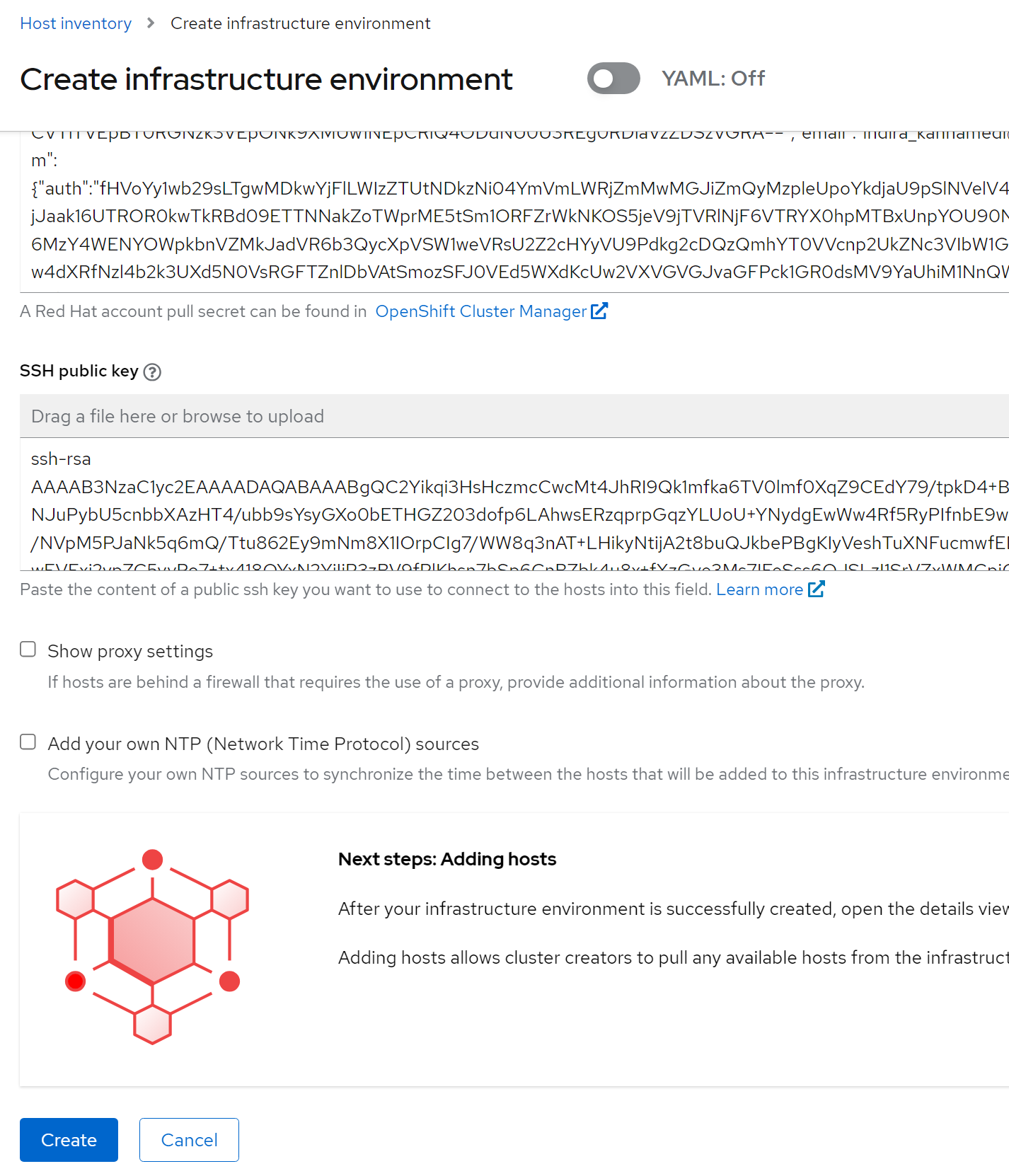

10. Paste in the pull secret and the SSH public key, and then click Next.

11. Review the details that you provided for the secret and click Add.

12. After the credentials are added, click Create to launch the Infrastructure environment wizard, as shown in the following figure:

Figure 17. Infrastructure environment wizard

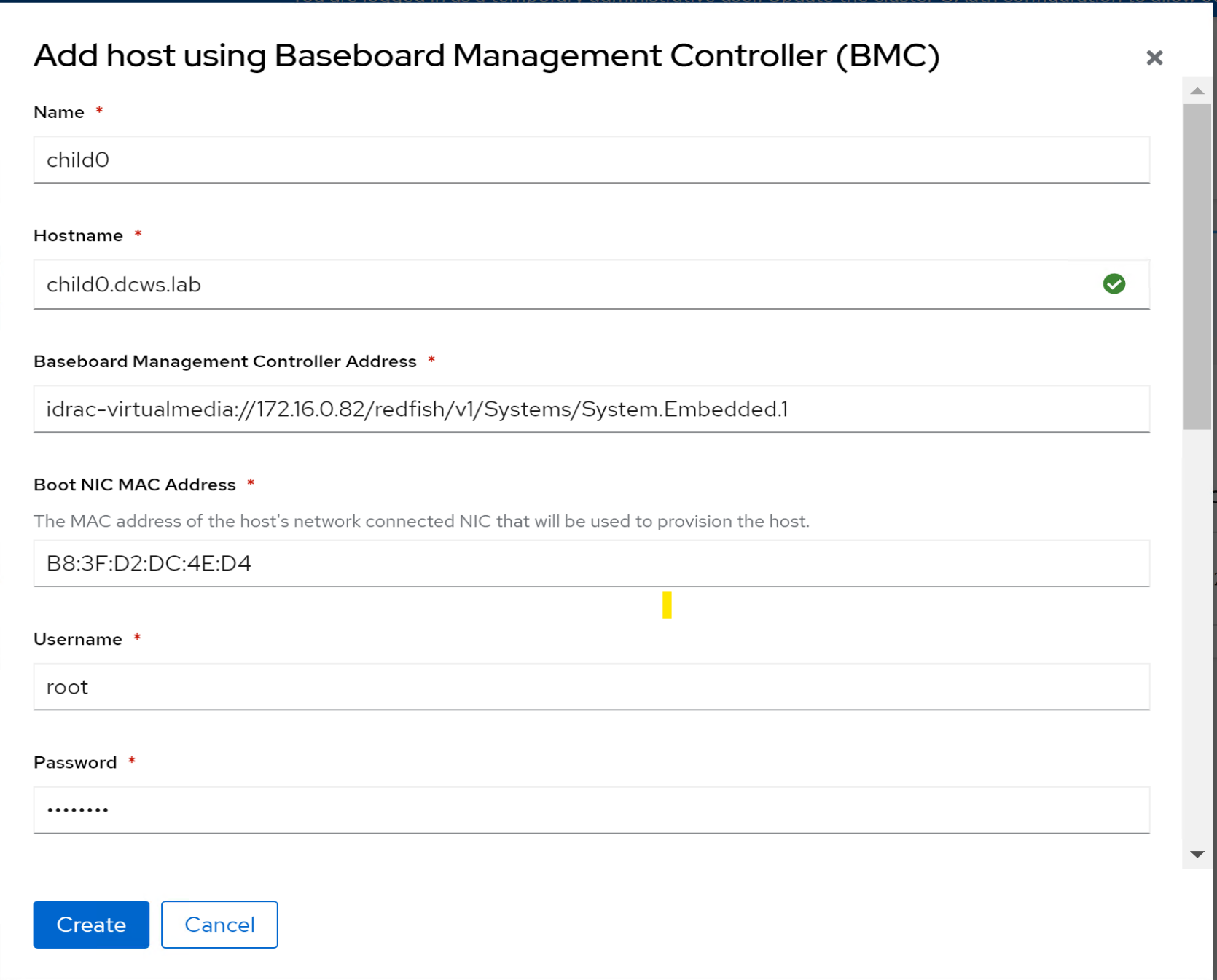

13. Click Add Hosts on the Hosts tab and select the Baseboard Management Controller (BMC). Then provide the following information:

- Enter the Name, Hostname, and Boot NIC MAC Address of the bare-metal server.

- Enter the BMC address in the following format: idrac-virtualmedia://<IDRAC IP of baremetal server>/redfish/v1/Systems/System.Embedded.1

- Enter the login credentials of the iDRAC console.

- Use the sample file to create the NMStateConfig that configures a bond on the nodes.

- Provide a MAC address and NIC names for mapping.

14. Click Create.

Figure 18. Adding Baseboard Management Controller hosts

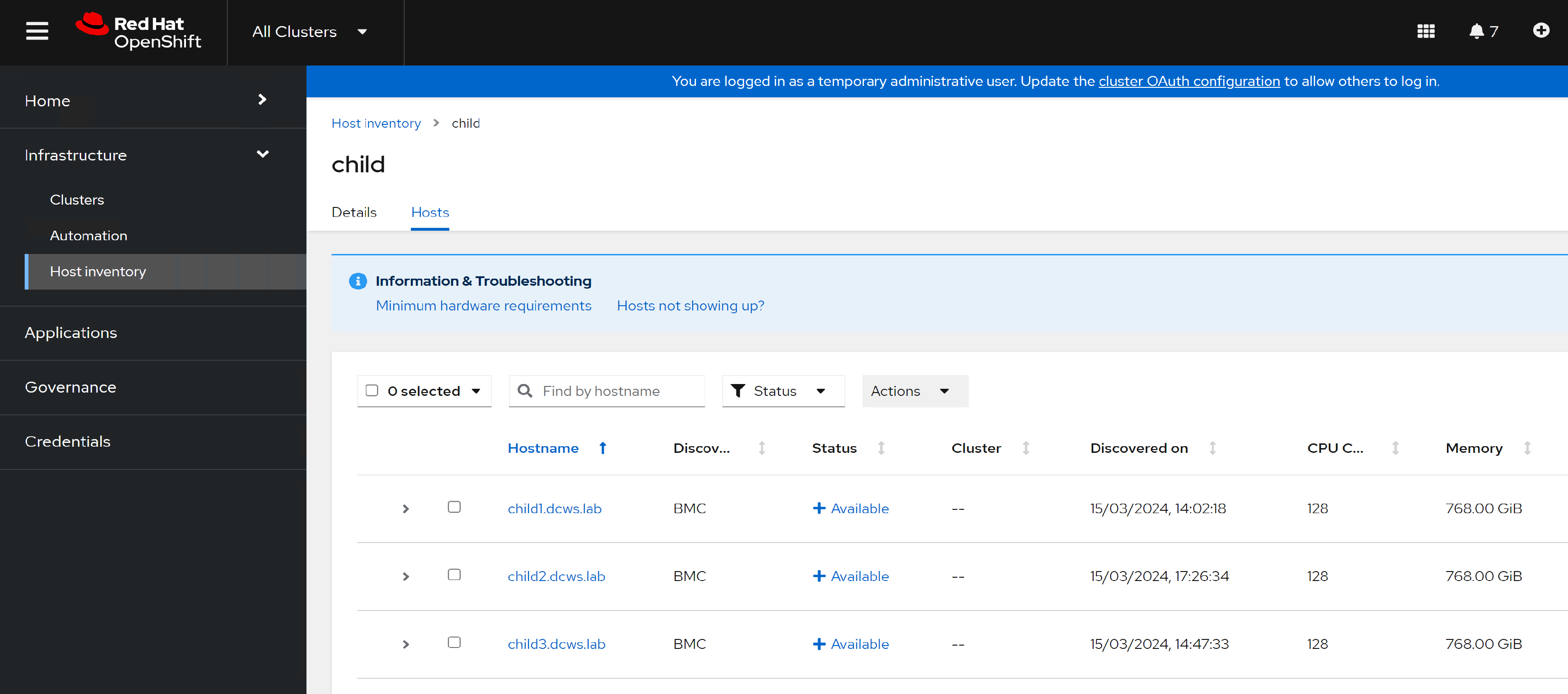

The bare-metal servers are booted using the RHCOS Live image. The status of the nodes on the console changes from Registering -> Provisioning -> Provisioned -> Available.

The following figure shows the available hosts:

Figure 19. Host inventory
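Three of the inputs in the steps above lend themselves to scripting: the AgentServiceConfig manifest from step 2, the visible label from step 3, and the BMC address from step 13. The following is a minimal sketch rather than the guide's own sample file. The function names are hypothetical, the storage sizes and access modes are illustrative assumptions to be sized for your environment, and the volumes are expected to bind through the default StorageClass listed in the prerequisites.

```shell
# Sketch for steps 2, 3, and 13. Function names and sizes are assumptions.

# Step 2: write a minimal AgentServiceConfig manifest to the file in $1.
# The resource must be named "agent"; the storage sizes are illustrative.
write_agent_service_config() {
  cat > "$1" <<'EOF'
apiVersion: agent-install.openshift.io/v1beta1
kind: AgentServiceConfig
metadata:
  name: agent
spec:
  databaseStorage:
    accessModes:
      - ReadWriteOnce
    resources:
      requests:
        storage: 10Gi
  filesystemStorage:
    accessModes:
      - ReadWriteOnce
    resources:
      requests:
        storage: 100Gi
  imageStorage:
    accessModes:
      - ReadWriteOnce
    resources:
      requests:
        storage: 50Gi
EOF
}

# Step 3: set the visible label on a ClusterImageSet without an editor.
show_cluster_image_set() {
  oc label clusterimageset "$1" visible=true --overwrite
}

# Step 13: build the BMC address for the iDRAC IP address in $1.
idrac_bmc_address() {
  printf 'idrac-virtualmedia://%s/redfish/v1/Systems/System.Embedded.1\n' "$1"
}
```

For example, `write_agent_service_config agent.yaml` followed by `oc create -f agent.yaml` covers step 2, and `idrac_bmc_address 192.0.2.5` prints the address string to paste into the Add Hosts form.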

Create the hosted control plane

To create the hosted control plane after adding the nodes:

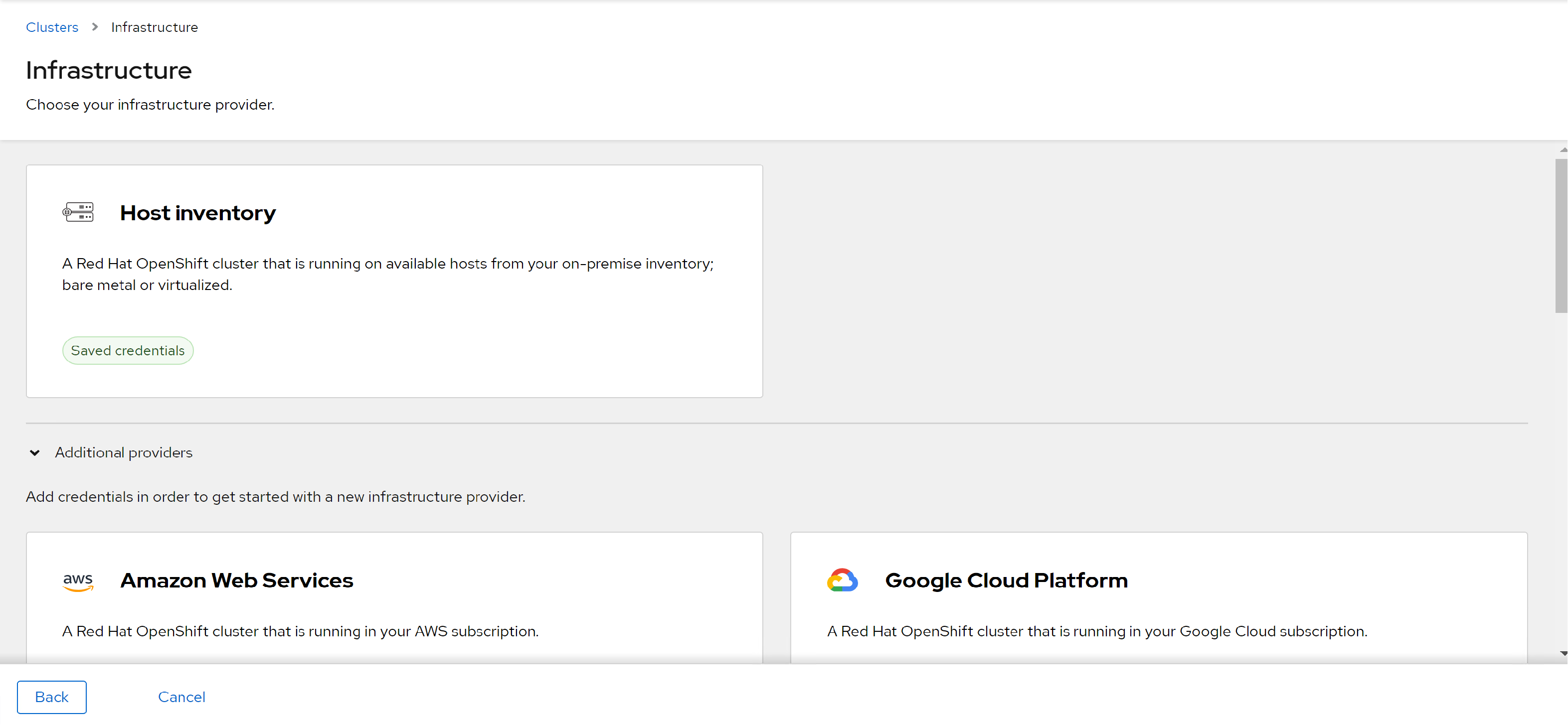

1. In the Clusters tab, select Host Inventory.

Figure 20. Host Inventory page on the ACM console

2. Select Hosted as the Control plane type. The Create Cluster wizard opens.

3. In the Cluster details tab:

- Select the Infrastructure provider credentials from the dropdown menu.

- Enter the Cluster name, Base Domain, and OpenShift version, and paste in the Pull secret. Click Next.

Figure 21. Hosted control plane installation

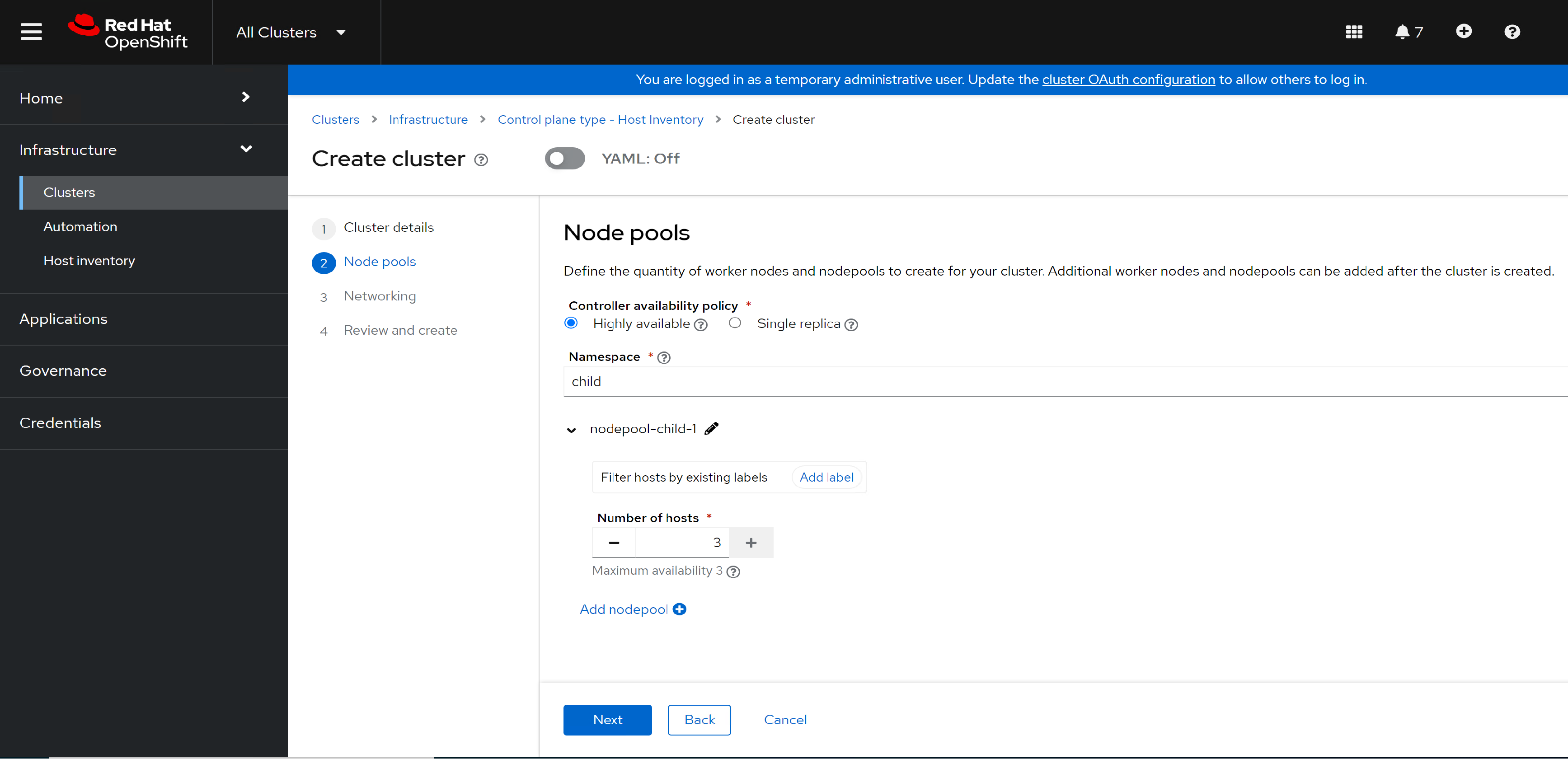

4. On the Node pools tab, select 3 as the number of hosts and click Next.

5. On the Networking tab:

- Select NodePort as the API server publishing strategy.

- Enter a worker node IP address as the Host Address.

- Paste in the SSH key.

Click Next.

6. On the Review and Create tab, click Create.

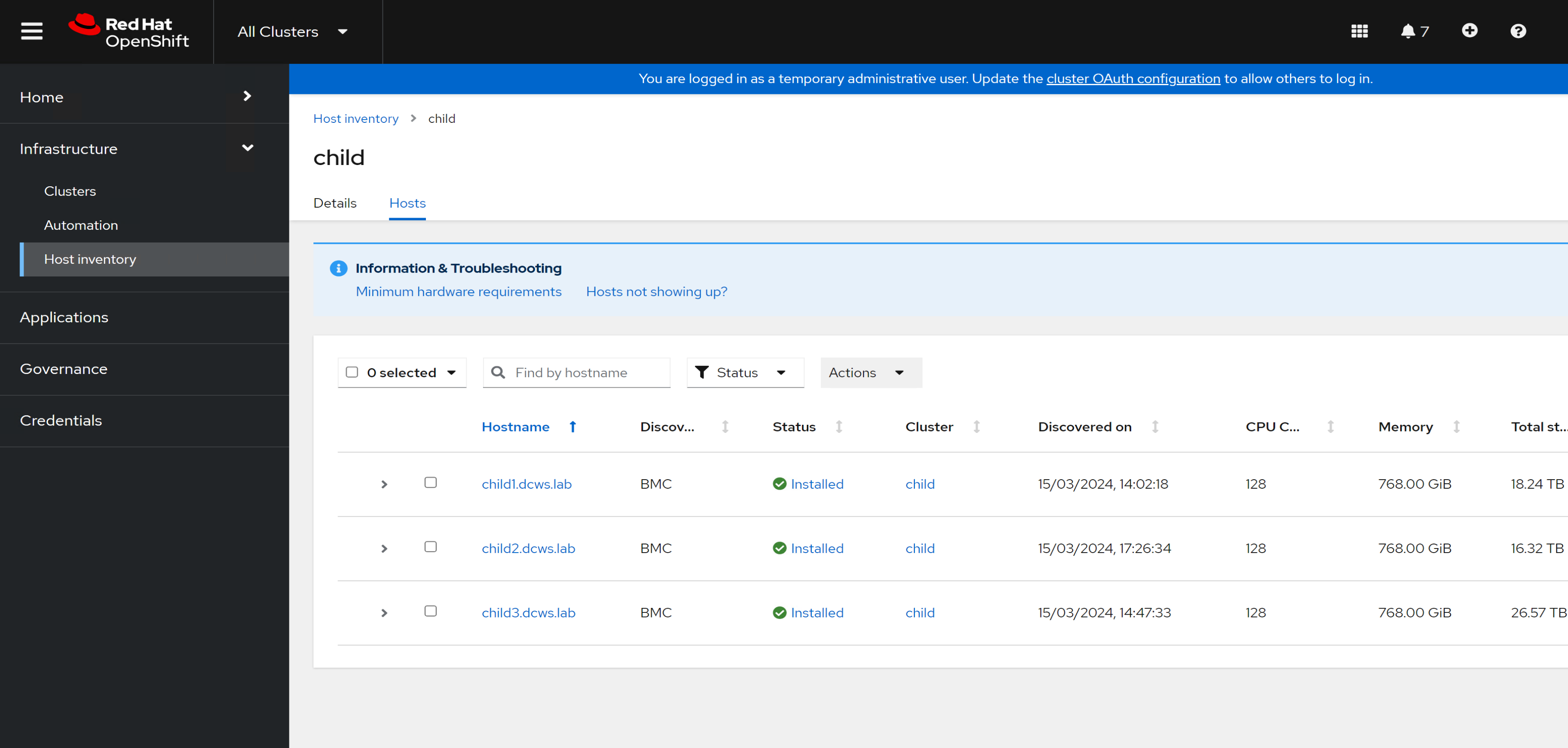

The cluster is installed. You can verify the host status from the Host Inventory tab, as shown in the following figure:

Figure 22. Hosted Control Plane node status

7. After the HyperShift cluster is installed, extract the kubeconfig and the kubeadmin password by running the following commands:

oc -n <spoke cluster> extract secret/<spoke cluster>-admin-kubeconfig --to=- > <spoke cluster>-kubeconfig

oc -n <spoke cluster> extract secret/<spoke cluster>-kubeadmin-password --to=- > <spoke cluster>-kubeadmin-password

8. List the pods by using the spoke cluster kubeconfig to confirm that the cluster is reachable:

oc get pods -n <spoke cluster namespace> --kubeconfig <path to the spoke cluster kubeconfig file>

9. Scale out the ingress controller pods by running the following command:

oc patch -n openshift-ingress-operator ingresscontroller/default --patch '{"spec":{"replicas": 3}}' --type=merge --kubeconfig=<path to spoke cluster kubeconfig file>
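The credential extraction and ingress scaling commands above can be wrapped in two small helpers so that the spoke cluster name is typed once. This is a minimal sketch: the function names are hypothetical, and it assumes, as the commands above do, that the secrets live in a namespace named after the spoke cluster. The `chmod` call is an added precaution that is not part of the original procedure.

```shell
# Sketch: wrap the extraction and scaling commands shown above.
# $1 is the spoke cluster name (placeholder).
fetch_spoke_credentials() {
  oc -n "$1" extract "secret/$1-admin-kubeconfig" --to=- > "$1-kubeconfig"
  oc -n "$1" extract "secret/$1-kubeadmin-password" --to=- > "$1-kubeadmin-password"
  # Keep the extracted credentials readable by the current user only.
  chmod 600 "$1-kubeconfig" "$1-kubeadmin-password"
}

# $1 is the spoke cluster name, $2 the desired ingress replica count.
scale_spoke_ingress() {
  oc patch -n openshift-ingress-operator ingresscontroller/default \
    --type=merge --patch "{\"spec\":{\"replicas\": $2}}" \
    --kubeconfig="$1-kubeconfig"
}
```

With three worker nodes in the node pool, `fetch_spoke_credentials <spoke cluster>` followed by `scale_spoke_ingress <spoke cluster> 3` reproduces the commands above.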