
Deploying ObjectScale


Overview

ObjectScale is a software-defined object storage solution based on a containerized architecture. This chapter describes how to deploy ObjectScale on an OpenShift cluster.

To deploy ObjectScale, you must:

- Create and prepare the necessary namespaces in OpenShift.

- Deploy bare-metal CSI drivers for ObjectScale.

- Install ObjectScale on OpenShift.

- Create an object store.

Ensure that:

- Helm v3.12.1 is installed on the CSAH or admin node. See Installing Helm.

- You have downloaded Dell ObjectScale Community Edition v1.4.0 from Dell Support. Copy and extract the .zip file onto the CSAH node.

- A Docker Hub account for retrieving images is available.

- Crash and core dump files from pods are saved to the expected location (/crash-dump). To configure this:

- Log in as root.

- Run the following commands on all the cluster nodes:

$ sudo su -

# echo /crash-dump/core-%e > /proc/sys/kernel/core_pattern
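Running the command node by node can be scripted from the CSAH node. The following is a minimal sketch, assuming oc is logged in as a cluster-admin user; set_core_pattern_all_nodes is a hypothetical helper name. It reaches each node with oc debug and chroot /host. Because values written under /proc/sys are runtime-only, the setting reverts when a node reboots.

```shell
# Sketch: apply the core_pattern setting on every cluster node from the CSAH node.
# Assumes "oc" is logged in as a cluster-admin user.
# Values written under /proc/sys are not persistent and reset on reboot.
set_core_pattern_all_nodes() {
  local pattern="${1:-/crash-dump/core-%e}"
  local node
  # "oc get nodes -o name" prints one entry per node, for example node/worker-0.
  for node in $(oc get nodes -o name); do
    echo "Setting core_pattern on ${node}"
    # Write the pattern as root inside the node's host file system.
    oc debug "${node}" -- chroot /host \
      sh -c "echo '${pattern}' > /proc/sys/kernel/core_pattern"
  done
}
```

Call set_core_pattern_all_nodes with no argument to use /crash-dump/core-%e, or pass a different pattern as the first argument.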

Set the node parameters

On each worker node:

1. Connect to the node by running the following command:

oc debug node/<worker node>

2. When the prompt appears, run the following command:

chroot /host

3. Make the following parameter changes in the /etc/crio/crio.conf file:

- Under [crio.runtime], set pids_limit to 16384, adding the parameter if it is not already present.

- Under [crio.image], add insecure_registries = ["0.0.0.0/0"] if it is not already present.

Use the following excerpt as a reference for the added parameters:

[crio.runtime]

pids_limit = 16384

[crio.image]

insecure_registries = ["0.0.0.0/0"]

4. Restart the crio service for the changes to take effect by running the following command:

sudo systemctl restart crio
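To confirm the crio.conf edits on a node, the check can be scripted. The following is a minimal sketch, assuming it runs on the node after chroot /host and that awk is available there; check_crio_conf is a hypothetical helper name. It tracks the current TOML table header and reports any setting that is missing from its expected table.

```shell
# Sketch: verify that crio.conf contains the two settings from step 3
# in the correct TOML tables. Exit status 0 means both are present.
check_crio_conf() {
  local conf="${1:-/etc/crio/crio.conf}"
  awk '
    # Remember the current table header, for example [crio.runtime].
    /^[ \t]*\[/ { section = $0; gsub(/[ \t]/, "", section); next }
    # Commented-out lines start with "#" and therefore do not match.
    section == "[crio.runtime]" && /^[ \t]*pids_limit[ \t]*=[ \t]*16384[ \t]*$/ { pids = 1 }
    section == "[crio.image]" && /^[ \t]*insecure_registries[ \t]*=/ && index($0, "\"0.0.0.0/0\"") { reg = 1 }
    END {
      if (!pids) print "missing: pids_limit = 16384 under [crio.runtime]"
      if (!reg)  print "missing: insecure_registries under [crio.image]"
      exit !(pids && reg)
    }
  ' "$conf"
}
```

Run check_crio_conf before restarting CRI-O; a non-zero exit status means at least one setting is missing or is in the wrong table.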

Create namespaces

To create the necessary namespaces in OpenShift:

1. On the CSAH node, set the environment variables by running the following commands:

export CSI_NS=csi-baremetal

export SSO_NS=openshift-secondary-scheduler-operator

export OBJECTSCALE_NS=objectscale-system

2. Create namespaces for the secondary scheduler, bare-metal CSI, and ObjectScale by running the following commands:

oc create ns $SSO_NS

oc create ns $CSI_NS

oc create ns $OBJECTSCALE_NS

3. To apply privileged pod security labels to the namespaces that you created in the previous step, run the following commands:

oc label --overwrite ns $SSO_NS pod-security.kubernetes.io/enforce=privileged pod-security.kubernetes.io/audit=privileged pod-security.kubernetes.io/warn=privileged security.openshift.io/scc.podSecurityLabelSync="false"

oc label --overwrite ns $CSI_NS pod-security.kubernetes.io/enforce=privileged pod-security.kubernetes.io/audit=privileged pod-security.kubernetes.io/warn=privileged security.openshift.io/scc.podSecurityLabelSync="false"

oc label --overwrite ns $OBJECTSCALE_NS pod-security.kubernetes.io/enforce=privileged pod-security.kubernetes.io/audit=privileged pod-security.kubernetes.io/warn=privileged security.openshift.io/scc.podSecurityLabelSync="false"

4. Create the role and role binding based on the Role sample file and the RoleBinding sample file. Update any fields as necessary.

oc project $CSI_NS

oc create -f <role yaml file>

oc create -f <role binding yaml file>

5. Create a Docker registry pull secret in each of the three namespaces:

oc create secret docker-registry ocp-secret --docker-server=docker.io/objectscale --docker-username=<docker username> --docker-password=<docker password> --docker-email=<docker account email> -n $SSO_NS

oc create secret docker-registry csi-secret --docker-server=docker.io/objectscale --docker-username=<docker username> --docker-password=<docker password> --docker-email=<docker account email> -n $CSI_NS

oc create secret docker-registry obj-secret --docker-server=docker.io/objectscale --docker-username=<docker username> --docker-password=<docker password> --docker-email=<docker account email> -n $OBJECTSCALE_NS
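The namespace preparation steps repeat the same commands for each namespace, so they can be looped. The following minimal sketch covers steps 2, 3, and 5; the role and role binding in step 4 depend on the sample files and are left out. It assumes the variables from step 1 are exported, and DOCKER_USER, DOCKER_PASS, and DOCKER_EMAIL are hypothetical variables holding your Docker Hub credentials. The helper function names are also hypothetical.

```shell
# Sketch: steps 2, 3, and 5 as loops over the three namespaces.
# Assumes SSO_NS, CSI_NS, and OBJECTSCALE_NS are exported (step 1) and that the
# hypothetical DOCKER_USER, DOCKER_PASS, and DOCKER_EMAIL hold Docker Hub credentials.

# Create one namespace and apply the privileged pod security labels to it.
prepare_namespace() {
  local ns="$1"
  oc create ns "$ns"
  oc label --overwrite ns "$ns" \
    pod-security.kubernetes.io/enforce=privileged \
    pod-security.kubernetes.io/audit=privileged \
    pod-security.kubernetes.io/warn=privileged \
    security.openshift.io/scc.podSecurityLabelSync="false"
}

# Create a docker-registry pull secret with the given name in the given namespace.
create_pull_secret() {
  local name="$1" ns="$2"
  oc create secret docker-registry "$name" \
    --docker-server=docker.io/objectscale \
    --docker-username="$DOCKER_USER" \
    --docker-password="$DOCKER_PASS" \
    --docker-email="$DOCKER_EMAIL" \
    -n "$ns"
}

prepare_all_namespaces() {
  local ns
  for ns in "$SSO_NS" "$CSI_NS" "$OBJECTSCALE_NS"; do
    prepare_namespace "$ns"
  done
  # The secret names must match the global.registrySecret values passed to Helm later.
  create_pull_secret ocp-secret "$SSO_NS"
  create_pull_secret csi-secret "$CSI_NS"
  create_pull_secret obj-secret "$OBJECTSCALE_NS"
}
```

Call prepare_all_namespaces once, and then create the role and role binding as described in step 4.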

Deploy bare-metal CSI drivers for ObjectScale

To deploy the bare-metal CSI drivers:

1. On the CSAH node, set the environment variables by running the following commands:

export CSI_OPERATOR_VERSION=1.5.1-134.6eac267

export CSI_VERSION=1.5.1-670.9738a24

export REGISTRY=docker.io/objectscale

2. Extract the CSI Helm charts .tgz file by running the following command:

tar zxf dellemc-csi-helm-charts-1.5.1-134.6eac267.tgz

3. Install the secondary scheduler operator by running the following command:

helm install secondary-scheduler-operator secondaryscheduleroperator-${CSI_OPERATOR_VERSION}.tgz -n $SSO_NS --set global.registry=${REGISTRY} --set global.registrySecret=ocp-secret --set image.tag=${CSI_OPERATOR_VERSION} --set csv.version=secondaryscheduleroperator.v1.2.0

4. Wait for the secondary scheduler operator to start. Verify that the secondary scheduler operator pods are in the Running state by running the following command:

oc get pod -n $SSO_NS

5. Install the CSI bare-metal operator by running the following command:

helm install csi-baremetal-operator csi-baremetal-operator-${CSI_OPERATOR_VERSION}.tgz --set image.tag=${CSI_OPERATOR_VERSION} --set global.registry=${REGISTRY} --set log.level=debug --set global.registrySecret=csi-secret -n $CSI_NS

6. Wait for the CSI bare-metal operator pods to start, and then verify that the operator pod is in the Running state by running the following command:

oc get pod -n $CSI_NS

7. Start the CSI bare-metal deployment by running the following command:

helm install csi-baremetal csi-baremetal-deployment-${CSI_OPERATOR_VERSION}.tgz --set image.tag=${CSI_VERSION} --set global.registry=${REGISTRY} --set global.registrySecret=csi-secret --set scheduler.patcher.enable=true --set platform=openshift --set driver.drivemgr.type=halmgr --namespace $CSI_NS

The CSI bare-metal deployment starts after approximately five minutes.

8. Verify that the new CSI storage classes are created by running the following command:

oc get sc

The following excerpt is sample output from the command:

NAME                             PROVISIONER     RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
csi-baremetal-sc (default)       csi-baremetal   Delete          WaitForFirstConsumer   false                  2m9s
csi-baremetal-sc-hdd             csi-baremetal   Delete          WaitForFirstConsumer   false                  2m9s
csi-baremetal-sc-hddlvg          csi-baremetal   Delete          WaitForFirstConsumer   true                   2m9s
csi-baremetal-sc-nvme            csi-baremetal   Delete          WaitForFirstConsumer   false                  2m9s
csi-baremetal-sc-nvme-raw-part   csi-baremetal   Delete          WaitForFirstConsumer   false                  2m9s
csi-baremetal-sc-nvmelvg         csi-baremetal   Delete          WaitForFirstConsumer   true                   2m9s
csi-baremetal-sc-ssd             csi-baremetal   Delete          WaitForFirstConsumer   false                  2m9s
csi-baremetal-sc-ssdlvg          csi-baremetal   Delete          WaitForFirstConsumer   true                   2m9s
csi-baremetal-sc-syslvg          csi-baremetal   Delete          WaitForFirstConsumer   true                   2m9s

9. Verify that all the new CSI pods are in the Running state and that all their containers are ready (for example, 4/4 in the READY column) by running the following command:

oc get pod -n $CSI_NS

10. Grant the anyuid security context constraint (SCC) to all service accounts in the ObjectScale namespace by running the following command:

oc adm policy add-scc-to-group anyuid system:serviceaccounts:$OBJECTSCALE_NS
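Steps 4, 6, and 9 ask you to wait until pods reach the Running state. A polling loop such as the following minimal sketch can replace repeated manual checks. It assumes the default oc get pod column order (NAME, READY, STATUS, RESTARTS, AGE); all_pods_ready and wait_for_pods are hypothetical helper names, and the 10-second interval and 600-second default timeout are arbitrary choices.

```shell
# Sketch: poll a namespace until every pod is Running with all containers
# ready (or Completed), instead of re-running "oc get pod" by hand.

# Reads "oc get pod --no-headers" output on stdin. Succeeds only if at least
# one pod is listed and every pod is Completed or Running with READY n/n.
all_pods_ready() {
  awk '
    { total++ }
    $3 == "Completed" { next }
    {
      split($2, r, "/")
      if ($3 != "Running" || r[1] != r[2]) bad++
    }
    END { exit !(total > 0 && bad == 0) }
  '
}

# Polls the namespace every 10 seconds until all pods are ready or the timeout expires.
wait_for_pods() {
  local ns="$1" timeout="${2:-600}" waited=0
  until oc get pod -n "$ns" --no-headers 2>/dev/null | all_pods_ready; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "Timed out after ${timeout}s waiting for pods in ${ns}" >&2
      return 1
    fi
    sleep 10
    waited=$((waited + 10))
  done
  echo "All pods in ${ns} are ready"
}
```

For example, wait_for_pods "$CSI_NS" 900 after step 7 waits up to 15 minutes for the CSI bare-metal pods.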

Install ObjectScale

To install ObjectScale:

1. Extract the ObjectScale Helm charts .tgz file by running the following command:

tar zxf objectscale-helm-charts-1.4.0.tgz

2. Install Postgres by running the following command:

Note: You can modify the storage class settings in the following commands to tailor the storage resources that are used (global.storageClass for Postgres; global.storageClassName and global.secondaryStorageClass for the ObjectScale Portal). The preferred storage class for ObjectScale depends on the available capacity on the system drives of the nodes in the cluster.

helm install postgres ./postgres-ha-3.0.2-293.d7ddd9b.tgz --set global.registry=${REGISTRY} --set global.storageClass=csi-baremetal-sc-nvmelvg --namespace=${OBJECTSCALE_NS} --timeout=30m

3. Install the ObjectScale REST API package by running the following commands:

export VERSION=1.4.0

helm install objectscale-restapi ./objectscale-restapi-$VERSION.tgz --namespace=$OBJECTSCALE_NS --set global.registry=${REGISTRY} --set global.registrySecret=obj-secret --set global.platform=OpenShift --set global.deploymentmodel=application:openshift

4. To view the Dell End User License Agreement (EULA), run the following command:

helm show readme objectscale-portal-$VERSION.tgz | more

The EULA Revision Date value is shown in the last line of the readme file in the format ddMMMYYYY.

5. Set an environment variable to the EULA Revision Date. For example:

export EULA_DATE=09Sep2020

6. Using a cluster-admin user, deploy ObjectScale in your OpenShift cluster by running the following command:

helm install objectscale ./objectscale-portal-$VERSION.tgz --namespace $OBJECTSCALE_NS --set global.registry=${REGISTRY} --set global.storageClassName=csi-baremetal-sc-nvmelvg --set global.secondaryStorageClass=csi-baremetal-sc-nvmelvg --set global.platform=OpenShift --set accept_eula=$EULA_DATE --set global.schedulerName=csi-baremetal-scheduler --set global.deploymentmodel=application:openshift --set global.registrySecret=obj-secret

This command installs the ObjectScale Portal UI, ObjectScale Manager, DECKS, and KAHM on the OpenShift cluster. Wait 15 to 20 minutes for all the services to start.

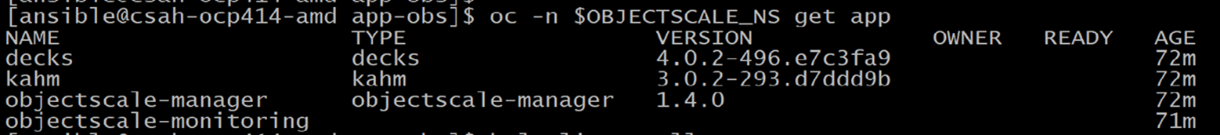

7. Get a list of the Kubernetes applications to ensure that all the ObjectScale components are present by running the following command:

oc get application.app

Figure 28. Sample command output – ObjectScale applications

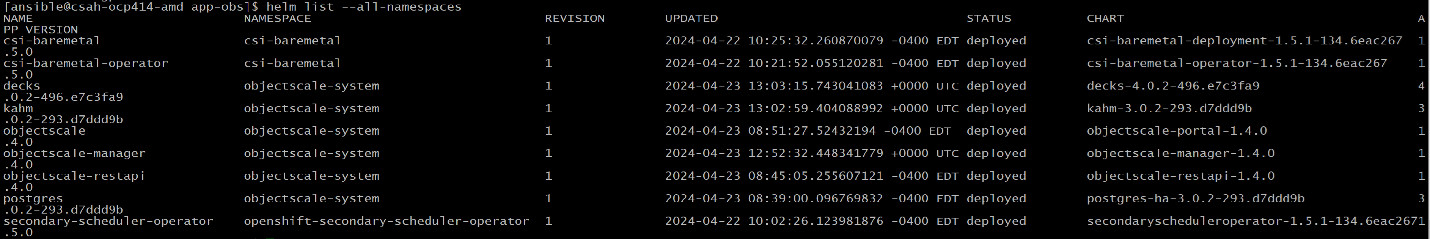

8. After a few minutes, verify that all the ObjectScale components show the deployed status by running the following command:

helm list --all-namespaces

Figure 29. Sample output – ObjectScale components status

9. Install the csi-baremetal-alerts chart into the CSI bare-metal namespace by running the following command:

helm install csi-baremetal-alerts --namespace $CSI_NS ./csi-baremetal-alerts-$VERSION.tgz

10. When the installation is complete, edit the objectscale-portal-external service to change it to the NodePort type:

oc edit svc objectscale-portal-external -n $OBJECTSCALE_NS

Follow this sample spec definition:

spec:
  clusterIP: 172.30.190.110
  clusterIPs:
  - 172.30.190.110
  externalTrafficPolicy: Cluster
  internalTrafficPolicy: Cluster
  ipFamilies:
  - IPv4
  ipFamilyPolicy: SingleStack
  ports:
  - name: https
    nodePort: 31464
    port: 4443
    protocol: TCP
    targetPort: 4443
  selector:
    app.kubernetes.io/component: objectscale-portal
    app.kubernetes.io/name: objectscale-manager
  sessionAffinity: None
  type: NodePort

11. Identify the port to be used to access the ObjectScale UI by running the following command:

oc get svc -A | grep objectscale-portal-external

objectscale-system objectscale-portal-external-np NodePort 172.30.190.110 <none> 4443:31464/TCP 3m33s

Based on the command output, the NodePort for the web UI is 31464, so you can access the ObjectScale console in a browser at https://<worker-node-ip>:31464.

12. To log in to the ObjectScale Portal for the first time, use the default root user credentials:

Username: root

Password: ChangeMe

Note: The ObjectScale Portal prompts you to change the password for root on login.

Figure 30. ObjectScale login page
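Some of the manual steps above can be scripted. The following minimal sketch assumes that the EULA Revision Date is the only ddMMMYYYY token on the last line of the readme, that OBJECTSCALE_NS is exported as in "Create namespaces", and that the portal service exposes port 4443 as in the sample spec. extract_eula_date, expose_portal_nodeport, and portal_url are hypothetical helper names, and oc patch --type=merge is used as a non-interactive alternative to oc edit.

```shell
# Sketch helpers for the EULA date, NodePort change, and portal URL steps.
# Assumes OBJECTSCALE_NS is exported and the portal service listens on port 4443.

# Prints the ddMMMYYYY date (for example, 09Sep2020) found on the last line of stdin.
extract_eula_date() {
  tail -n 1 | grep -oE '[0-9]{2}[A-Z][a-z]{2}[0-9]{4}' | head -n 1
}

# Non-interactive alternative to "oc edit": switch the portal service to NodePort.
expose_portal_nodeport() {
  oc patch svc objectscale-portal-external -n "$OBJECTSCALE_NS" \
    --type=merge -p '{"spec":{"type":"NodePort"}}'
}

# Prints the console URL for a worker node IP, using the NodePort assigned to port 4443.
portal_url() {
  local node_ip="$1" port
  port=$(oc get svc objectscale-portal-external -n "$OBJECTSCALE_NS" \
    -o jsonpath='{.spec.ports[?(@.port==4443)].nodePort}')
  [ -n "$port" ] || return 1
  echo "https://${node_ip}:${port}"
}
```

For example, export EULA_DATE=$(helm show readme objectscale-portal-$VERSION.tgz | extract_eula_date) covers step 5, and portal_url <worker-node-ip> prints the console address after the service type is changed.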

Creating an ObjectStore

To create an object store, you must:

- Apply an ObjectScale license.

- Create an account and a user.

- Create an ObjectStore instance.

- Add accounts to the ObjectStore.

Apply an ObjectScale license

To apply an ObjectScale license:

- Log in to the ObjectScale web UI.

- Expand the Administration tab to view the ObjectScale license settings.

- Select Licensing and click Apply.

- In the Apply License area, upload the ObjectScale license file and click Apply.

- Expand the license in the Licensing table to display details about the ObjectScale license and the enabled features and capacities.

Create an account and a user

Note: You can create a bucket in an ObjectStore only after associating one or more accounts with the ObjectStore. A key pair (access key and secret access key) is required for bucket authentication.

To create an account and a user:

- On the Accounts tab, click New Account.

- Provide a name in the alias field, enable or disable encryption, and click Save.

- Click the newly created account name and select the Users tab.

- Provide a name for the user.

- On the Permissions tab, you can assign policies for the user to have access to different components in the ObjectScale console. You can choose to assign predefined policies or assign a policy later.

- On the Policies tab, select all the policies that you want to assign to the user.

- Under Tags, add necessary tags for user identification.

- Review the details and create the user.

- Download the .csv file containing the access key and secret access key for the user.

Create an ObjectStore instance

Start by creating a new namespace on the cluster by running the following command:

oc new-project objectstore

1. On the ObjectScale Portal UI, expand Administration in the left pane.

2. Select ObjectScale > Object Stores.

3. Select the new namespace from the dropdown menu and click New Object Store.

4. Provide a name for the new object store instance, verify the namespace, and select the version that you want.

5. Choose the User Storage Class and System Storage Class names from the dropdown menus and click Advanced.

6. On the Topology page, select the nodes to be used for creating the object store instance.

7. On the Storage tab, edit the storage and memory details of the nodes as required. For example, edit the requested raw capacity and replicas for server storage and volumes to determine the storage capacity available for user buckets in this object store instance.

8. On the Connectivity tab, change the S3 service type from LoadBalancer to NodePort.

This change exposes HTTP ports for insecure connections.

9. Verify the details in the Review and Save tab, and then create the object store instance.

When all the components are created and all the pods are running, the object store instance State changes to "Started" and its Health changes to "Available."

Add accounts to the ObjectStore

To add accounts to the object store instance:

- Expand Administration in the left pane and click the ObjectStore tab.

- On the ObjectStore tab, click the name of the object store instance and select Accounts.

- Click Add and select the Account ID of the account to add to the object store instance. Enable or disable encryption as required.

- If required, add a bucket default quota to limit the bucket size. To do this, enter values for Block Writes at Quota and Notification and click Save.

Note: Leave these fields empty if no restrictions are to be applied.

All users in the account gain access to buckets in the object store instance based on the policies you attach to the individual user.

Create a bucket

Buckets are object containers that are used to store and control access to objects.

To create a bucket on the Buckets tab:

- Select the namespace, object store instance, and account from the dropdown menus. Click New Bucket.

- On the General tab, provide a name for the bucket and verify the namespace, object store instance, and account details. Click Next.

- On the Policy tab, create a bucket policy if needed. Click Next.

- On the Control tab, enable or disable versioning, object lock, quotas, and encryption. Click Next.

- If required, create events on the Event Rule tab. Click Next.

- Review the details in the Review tab and click Save.

Provision ObjectScale as image registry storage

To perform application builds, you must first configure the image registry for the OpenShift cluster.

To provision storage for the image registry, create a bucket on ObjectStore and assign the storage to the image registry configuration.

1. Log in to the ObjectScale UI and create a bucket on ObjectStore with the name image-reg.

2. Create a user in the account that is attached to the object store in which the bucket is created.

3. Create a secret that provides the credentials of the user that you created in the previous step by running the following command:

oc create secret generic image-registry-private-configuration-user --from-literal=REGISTRY_STORAGE_S3_ACCESSKEY=<access key> --from-literal=REGISTRY_STORAGE_S3_SECRETKEY=<secret access key> --namespace openshift-image-registry

4. Identify a port for accessing the ObjectStore S3 endpoint in the object store namespace by running the following command:

oc get svc -n objectstore | grep s3

objs-s3 NodePort 172.30.187.209 <none> 443:31020/TCP,80:31925/TCP 96m

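5. Edit the image registry configuration to use the ObjectScale S3 bucket by running the following command:

oc edit configs.imageregistry.operator.openshift.io

6. Update the spec as follows:

- Change the value of spec.managementState from Removed to Managed.

- Under spec.storage, set managementState to Unmanaged and add an s3 definition that points to the image-reg bucket. Set regionEndpoint to http://<worker-node-ip>:<NodePort>/, where <NodePort> is the port mapped to port 80 in the output of the previous step. For example: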

spec:
  storage:
    managementState: Unmanaged
    s3:
      bucket: image-reg
      region: test-region
      regionEndpoint: http://192.168.35.81:31141/
      virtualHostedStyle: false
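The S3 endpoint lookup and the registry edit can also be done without an interactive editor. The following minimal sketch assumes the objs-s3 service in the objectstore namespace (as in the sample output), the image-reg bucket and test-region values from the sample spec, and that no other storage type is already set in the registry configuration. nodeport_for, configure_registry_s3, and WORKER_IP are hypothetical names.

```shell
# Sketch: derive the HTTP NodePort of the S3 service and merge-patch the
# image registry configuration instead of using "oc edit".

# Given a PORTS value such as 443:31020/TCP,80:31925/TCP and a service port,
# prints the NodePort mapped to that port.
nodeport_for() {
  local ports="$1" port="$2" entry
  local IFS=','
  for entry in $ports; do
    case "$entry" in
      "${port}:"*)
        entry="${entry#*:}"   # for example, 31925/TCP
        echo "${entry%%/*}"   # for example, 31925
        return 0 ;;
    esac
  done
  return 1
}

# Points the cluster image registry at the image-reg bucket on the given endpoint.
configure_registry_s3() {
  local endpoint="$1"
  oc patch configs.imageregistry.operator.openshift.io/cluster --type=merge -p "{
    \"spec\": {
      \"managementState\": \"Managed\",
      \"storage\": {
        \"managementState\": \"Unmanaged\",
        \"s3\": {
          \"bucket\": \"image-reg\",
          \"region\": \"test-region\",
          \"regionEndpoint\": \"${endpoint}\",
          \"virtualHostedStyle\": false
        }
      }
    }
  }"
}

# Example usage (WORKER_IP is a hypothetical variable holding a worker node IP):
#   ports=$(oc get svc objs-s3 -n objectstore --no-headers | awk '{print $5}')
#   configure_registry_s3 "http://${WORKER_IP}:$(nodeport_for "$ports" 80)/"
```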

Validate the image registry

To validate the image registry:

1. To expose the route, enable the defaultRoute parameter in the configs.imageregistry.operator.openshift.io resource by running the following command:

oc patch configs.imageregistry.operator.openshift.io/cluster --patch '{"spec":{"defaultRoute":true}}' --type=merge

2. Verify that the AVAILABLE column displays True for all the cluster operators by running the following command:

oc get co

Note: While the image registry cluster operator status is being verified, the status of cluster operators such as operator-lifecycle-manager and kube-apiserver might change. Review all the cluster operators before continuing.

3. Ensure that the image registry pods are in the Running state (the image-pruner job pods report Completed), as shown in the following sample output:

oc get pods -n openshift-image-registry

NAME                                              READY   STATUS      RESTARTS   AGE
cluster-image-registry-operator-8cd57fd97-4rg94   1/1     Running     0          5d21h
image-pruner-28569600-djgtj                       0/1     Completed   0          2d5h
image-pruner-28571040-gm6pt                       0/1     Completed   0          29h
image-pruner-28572480-g4rt9                       0/1     Completed   0          5h21m
image-registry-8599b4f7d4-qnjtv                   1/1     Running     0          4m18s
node-ca-5km7s                                     1/1     Running     3          47d
node-ca-f6fgf                                     1/1     Running     2          47d
node-ca-fbw6b                                     1/1     Running     4          47d
node-ca-t9xll                                     1/1     Running     3          47d

4. Get the default registry route:

HOST=$(oc get route default-route -n openshift-image-registry --template='{{ .spec.host }}')

5. Test the connection to the image registry service by running the following command:

podman login -u kubeadmin -p $(oc whoami -t) $HOST

You should see the following output:

Login Succeeded!
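Steps 2 through 5 can be combined into one check. The following minimal sketch assumes the default oc get co column order (NAME, VERSION, AVAILABLE, ...) with a populated VERSION column; unavailable_operators and registry_login are hypothetical helper names.

```shell
# Sketch: confirm that all cluster operators are available, then log in to
# the default registry route with the current user's token.

# Reads "oc get co --no-headers" output on stdin and prints the names of
# operators whose AVAILABLE column (third field) is not True.
unavailable_operators() {
  awk '$3 != "True" { print $1 }'
}

registry_login() {
  local bad host
  bad=$(oc get co --no-headers | unavailable_operators)
  if [ -n "$bad" ]; then
    echo "Cluster operators not available: $bad" >&2
    return 1
  fi
  host=$(oc get route default-route -n openshift-image-registry \
    --template='{{ .spec.host }}')
  podman login -u kubeadmin -p "$(oc whoami -t)" "$host"
}
```

If registry_login reports unavailable operators, wait and run it again; as the note in step 2 says, operator status can change while the registry configuration is applied.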