
Energy optimization


Challenge: Optimizing energy consumption in Kubernetes nodes is crucial for achieving energy efficiency and reducing operational costs in telco cloud environments.

Solution: Kubernetes Operators for energy optimization

Managing energy consumption efficiently in Kubernetes nodes is difficult, but several Kubernetes operators address this challenge and enable effective energy optimization within the telco cloud.

The Kubernetes Power Manager Operator offers power management and energy optimization capabilities in Kubernetes nodes. This operator allows administrators to dynamically adjust the power states of nodes, including the ability to turn off CPU cores that are not required for current workloads. By selectively turning off idle CPU cores, the operator reduces power consumption without compromising the performance of running workloads. This approach is particularly effective for workloads with varying resource demands, because it allows granular control over energy usage.

The Kubernetes Node Feature Discovery Operator plays a vital role in energy optimization by providing insights into the hardware capabilities of Kubernetes nodes. It enables the discovery of node features like power management capabilities and advanced power-saving features. This information helps identify nodes with specific power-saving capabilities, such as Frequency Invariant Scheduler (FIS), which enables intelligent CPU frequency scaling based on workload demand.

By leveraging these operators, telcos can optimize energy consumption in their Kubernetes nodes and achieve significant energy savings. However, it is essential to consider the impact of different tunings on energy consumption. For example, reducing CPU frequency or disabling CPU cores can result in lower power consumption but may affect the performance of applications that require high CPU utilization. Adjusting memory configurations, disk I/O settings, and network bandwidth allocation can also impact energy usage.
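The frequency trade-off described above can be illustrated with the textbook approximation that dynamic CPU power scales with voltage squared times frequency. The following is a minimal sketch under that assumption only: it ignores static (leakage) power, assumes a purely CPU-bound task whose runtime scales inversely with frequency, and all function names are hypothetical.

```python
def relative_dynamic_power(freq_ratio: float, voltage_ratio: float) -> float:
    """Dynamic power relative to baseline, using P ~ C * V^2 * f.

    freq_ratio and voltage_ratio are new/baseline ratios (for example 0.8).
    """
    if freq_ratio <= 0 or voltage_ratio <= 0:
        raise ValueError("ratios must be positive")
    return voltage_ratio ** 2 * freq_ratio


def relative_runtime(freq_ratio: float) -> float:
    """Runtime relative to baseline, assuming a purely CPU-bound task."""
    if freq_ratio <= 0:
        raise ValueError("freq_ratio must be positive")
    return 1.0 / freq_ratio


def relative_dynamic_energy(freq_ratio: float, voltage_ratio: float) -> float:
    """Dynamic energy for a fixed amount of work: power multiplied by time."""
    return relative_dynamic_power(freq_ratio, voltage_ratio) * relative_runtime(freq_ratio)
```

With illustrative ratios of 0.8 for frequency and 0.9 for voltage, this model gives roughly 65% of the baseline dynamic power, a 25% longer runtime, and about 81% of the baseline dynamic energy for the same work. Under these assumptions the energy saving comes from the lower voltage, while the lower frequency mainly trades power for time.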

Regularly monitoring and analyzing energy consumption metrics is essential to fine-tune node configurations for optimal energy efficiency. By carefully adjusting parameters based on workload requirements and conducting performance evaluations, telcos can balance energy optimization and performance. This iterative approach ensures ongoing optimization and delivers sustainable energy savings.
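As one illustration of turning raw readings into a metric that can be tracked, the sketch below converts samples of a cumulative energy counter into average power. It assumes a counter reported in microjoules that wraps back to zero after reaching a known range, similar in spirit to the counters some Linux platforms expose through the powercap interface. The function name, the `counter_range_uj` parameter, and the sample format are hypothetical.

```python
from typing import List, Tuple


def average_power_watts(samples: List[Tuple[float, int]], counter_range_uj: int) -> float:
    """Average power over a series of (timestamp_seconds, energy_microjoules) samples.

    The energy values are readings of a cumulative counter that is assumed to
    wrap to zero after counter_range_uj microjoules.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    total_uj = 0
    for (_, previous), (_, current) in zip(samples, samples[1:]):
        delta = current - previous
        if delta < 0:
            # The counter wrapped between these two readings.
            delta += counter_range_uj
        total_uj += delta
    elapsed_s = samples[-1][0] - samples[0][0]
    if elapsed_s <= 0:
        raise ValueError("timestamps must be increasing")
    # Microjoules to joules, then joules per second gives watts.
    return total_uj / 1e6 / elapsed_s
```

Comparing this value before and after a tuning change, under the same workload, gives a simple basis for the iterative evaluation described above. The sketch assumes the counter wraps at most once between consecutive samples, so the sampling interval has to be short enough for that to hold.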

Kubernetes Power Manager Operator: https://github.com/intel/kubernetes-power-manager

Low latency impact on power consumption

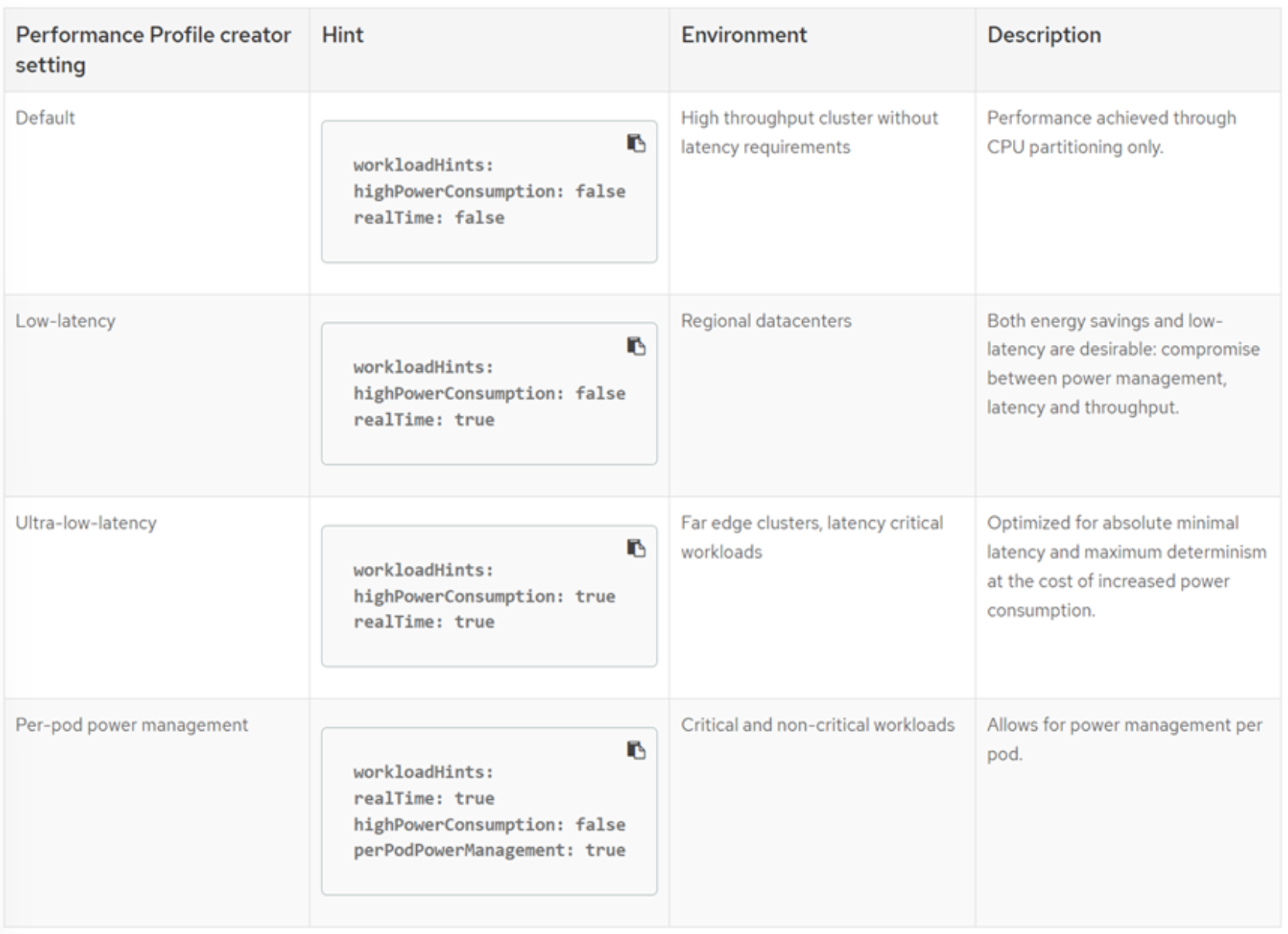

When optimizing a node for low latency, it is essential to understand that there is a trade-off between latency and power efficiency. Tuning for low latency typically turns off power-saving features in the CPU, chipset, and operating system to achieve the lowest deterministic latency possible.

Power-saving features such as CPU frequency scaling, CPU idle states, and chipset power management are typically disabled or set to high-performance modes when tuning for low latency. While beneficial for reducing power consumption and improving energy efficiency in normal operating conditions, these features introduce additional latency and variability, which is undesirable in low-latency scenarios.
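To make the latency cost of CPU idle states concrete, the sketch below filters a set of idle states by their exit latency against a latency budget. Linux reports a per-state exit latency in microseconds under the cpuidle sysfs directory of each CPU; the state names and values used in the usage note, and the function name itself, are hypothetical and for illustration only.

```python
from typing import Dict, List


def allowed_idle_states(exit_latency_us: Dict[str, int], budget_us: int) -> List[str]:
    """Return the idle states whose exit latency fits within the budget.

    exit_latency_us maps a state name to its wake-up latency in microseconds.
    The result is ordered from the shallowest to the deepest permitted state.
    """
    if budget_us < 0:
        raise ValueError("budget_us must not be negative")
    ordered = sorted(exit_latency_us.items(), key=lambda item: item[1])
    return [name for name, latency in ordered if latency <= budget_us]
```

For example, with illustrative latencies of `{"POLL": 0, "C1": 2, "C1E": 10, "C6": 133}` and a budget of 10 microseconds, the function keeps `POLL`, `C1`, and `C1E` and excludes `C6`. A tighter budget excludes more of the deeper states, which are the ones that save the most power, and that is the trade-off this section describes.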

By turning off these power-saving features, the node operates at higher power levels and consumes more energy, reducing power efficiency. The focus is on achieving consistent and predictable low-latency performance rather than optimizing for energy efficiency.

When tuning a node for specific low-latency requirements, carefully consider the trade-off between latency and power consumption. Balance the desired latency goals against the overall system's power and efficiency requirements so that the node delivers the required performance while keeping power consumption at an acceptable level.

Figure 11. Impact of performance profile selection on power consumption

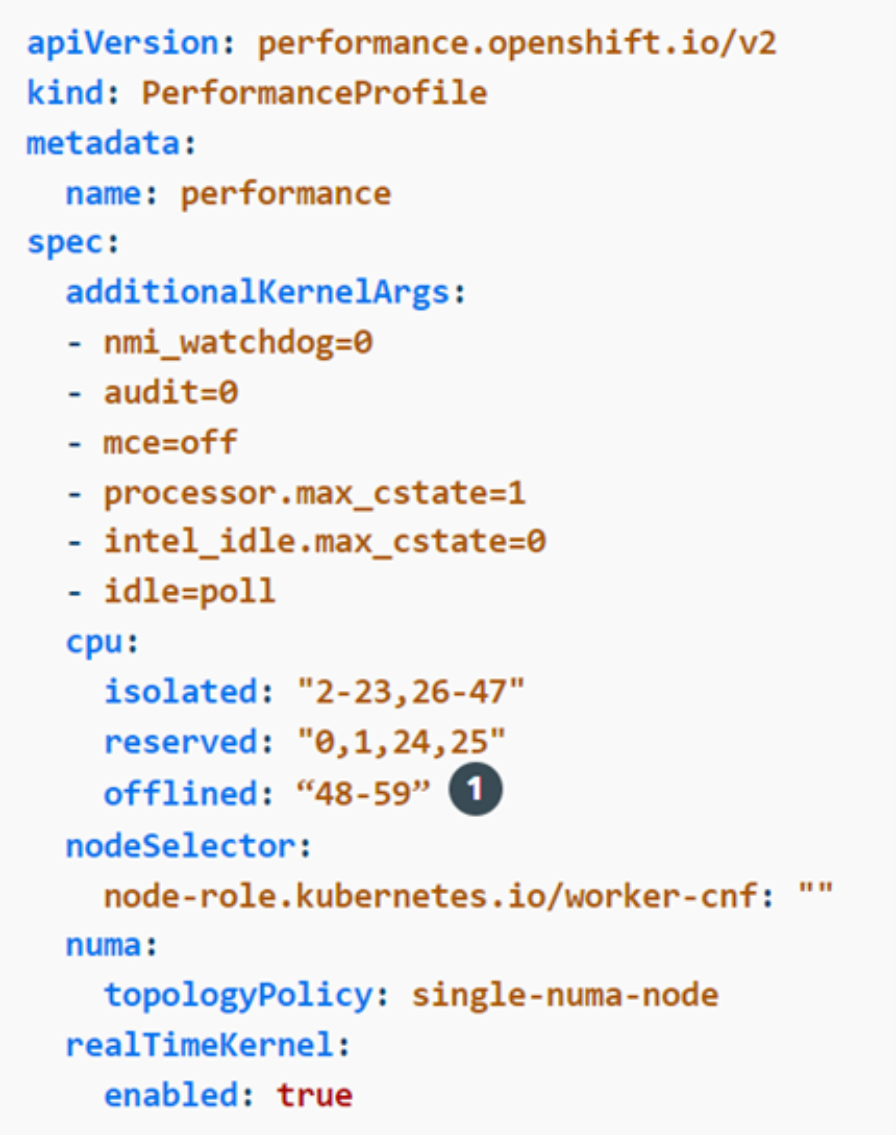

Optimize by turning off CPU cores

When procuring CPUs, it is common to choose models with high core counts to accommodate future growth and scalability. However, not all of these resources are required on day one. Significant energy savings can be achieved by strategically turning off unused CPU cores and right-sizing the infrastructure according to workload demands. This approach allows for efficient resource utilization and helps minimize power consumption.

With the help of the Node Tuning Operator, turning CPU cores on and off becomes a streamlined process. By deactivating unneeded CPU cores through the operator’s configuration, the system can operate with a reduced power footprint while still meeting the current workload requirements. This dynamic approach allows for flexibility in resource allocation and ensures that the infrastructure aligns with the immediate needs of the workload.

Should the workload demand increase, the previously turned-off CPU cores can easily be reactivated by enabling them through the Node Tuning Operator. This on-demand activation of CPU cores enables efficient scaling and adaptability, ensuring that the infrastructure can seamlessly accommodate future growth while maintaining optimal energy efficiency.
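The right-sizing decision described above can be sketched as a small planning helper that splits a CPU list into cores to keep online and cores to turn off. This is only an illustration of the bookkeeping, not the operator's own logic: the function names are hypothetical, the CPU lists use the common Linux range notation (for example `0-3,8-11`), and the sketch ignores sibling hardware threads and NUMA placement, which a real plan would need to respect.

```python
from typing import List, Tuple


def parse_cpu_list(text: str) -> List[int]:
    """Parse a CPU list such as '0-3,8-11' into a sorted list of CPU numbers."""
    cpus = set()
    for part in text.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-", 1)
            cpus.update(range(int(low), int(high) + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def format_cpu_list(cpus: List[int]) -> str:
    """Format CPU numbers back into compact range notation."""
    ranges: List[List[int]] = []
    for cpu in sorted(set(cpus)):
        if ranges and cpu == ranges[-1][1] + 1:
            ranges[-1][1] = cpu  # extend the current range
        else:
            ranges.append([cpu, cpu])  # start a new range
    return ",".join(str(lo) if lo == hi else f"{lo}-{hi}" for lo, hi in ranges)


def split_for_demand(available: str, needed: int) -> Tuple[str, str]:
    """Return (keep_online, turn_off) CPU lists for the requested core count.

    The lowest-numbered CPUs are kept online, which also keeps CPU 0 active.
    """
    cpus = parse_cpu_list(available)
    if not 1 <= needed <= len(cpus):
        raise ValueError("needed must be between 1 and the number of CPUs")
    return format_cpu_list(cpus[:needed]), format_cpu_list(cpus[needed:])
```

For a node with CPUs `0-15` and a current demand of 6 cores, `split_for_demand("0-15", 6)` returns `("0-5", "6-15")`. When demand grows, calling the helper again with a larger `needed` value yields a smaller turn-off list, which mirrors the reactivation step described above.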

Figure 12. Turning off unneeded CPUs with the Node Tuning Operator