
Expanding a dynamic pool


To provide flexibility, a user can expand an existing tier by one or more drives, or add a new drive tier to a pool, under most circumstances. An expansion is disallowed only when the number of drives being added to an existing tier is more than enough to fill a drive partnership group but not enough to also meet the minimum drive requirement to start another. In this instance, either fewer or more drives must be added to the pool. This topic is covered in further detail in the Supported Configurations section of this paper.
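The expansion rule above can be sketched as a small check. This is only an illustration, not the system's actual validation logic: the function name and parameters are hypothetical, the group size limit and minimum drive count are passed in by the caller because this section does not state them, and the sketch assumes any overflow lands in a single new drive partnership group.

```python
def expansion_allowed(drives_in_group: int, drives_added: int,
                      group_max: int, min_new_group: int) -> bool:
    """Return True if the added drives fit in the current drive partnership
    group, or overflow it by enough drives to start another group.

    group_max and min_new_group are caller-supplied assumptions; the real
    limits are covered in the Supported Configurations section.
    """
    free_slots = group_max - drives_in_group
    if drives_added <= free_slots:
        # The new drives fit (or exactly fill) the existing group.
        return True
    # Drives left over after the existing group is full.
    overflow = drives_added - free_slots
    return overflow >= min_new_group


# Illustrative values only: a group limit of 64 and a minimum of 6 drives.
print(expansion_allowed(60, 4, group_max=64, min_new_group=6))   # fits
print(expansion_allowed(60, 7, group_max=64, min_new_group=6))   # 3 left over
print(expansion_allowed(60, 10, group_max=64, min_new_group=6))  # 6 left over
```

With these illustrative limits, adding 7 drives is rejected because 3 drives would be left over, so the user would need to add either 4 or fewer drives, or 10 or more.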

When a single drive is successfully added to a dynamic pool, the space is added either as usable capacity for storage resources or as spare space. The drive’s capacity is added only as spare space if the requirement for spare space extents has increased. In this instance, one or more further drives need to be added to the pool to increase its usable capacity, based on the hot spare capacity setting for the tier. To make the usable capacity available to the pool, a rebalance of drive extents within the dynamic pool drive partnership group must occur. This process is necessary because a RAID extent cannot be built entirely from drive extents on the newly added drive. In the background, drive extents are relocated throughout the drive partnership group. During this time, CPU resources are used for relocation operations, but priority is always given to user I/O, and the relocation process throttles itself so that it does not impact performance. This operation takes time to complete. The capacity becomes available within the pool once the job completes.

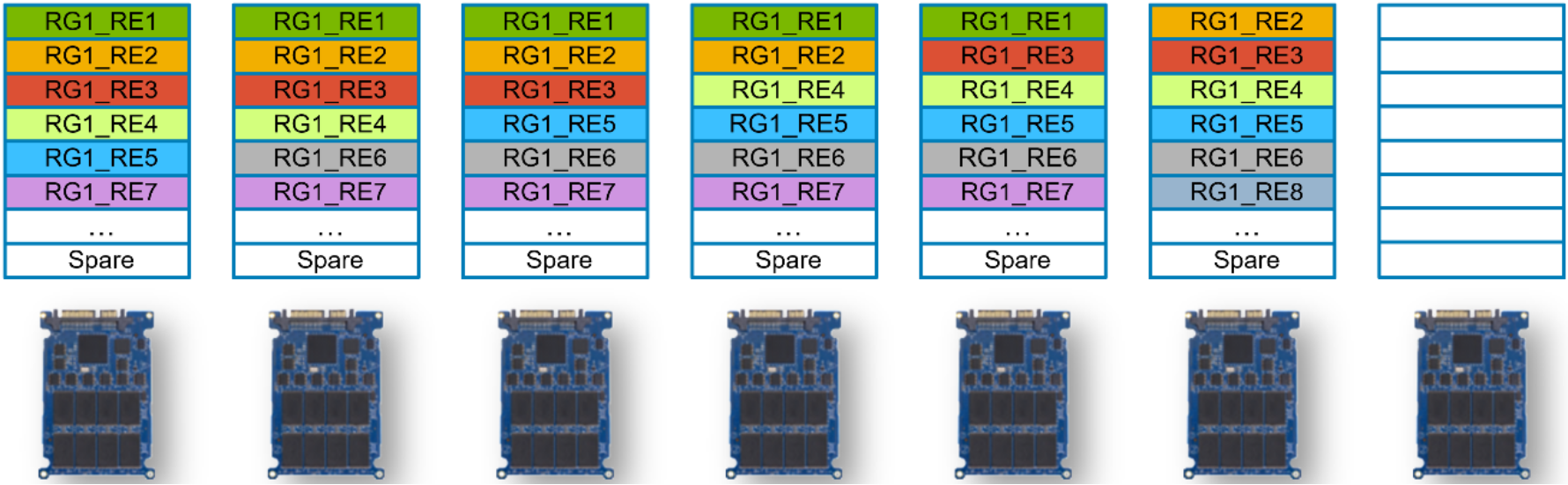

Figure 9 shows an example of expanding a dynamic pool by a single drive which does not increase the spare space requirement. If the spare space requirement increases, a drive extent relocation still occurs and spare space extents are spread out across the drives within the drive partnership group. In this example, drive extents are first created on the newly added drive. Since no two extents from a drive can be placed into a RAID extent, the drive extents must be rebalanced. To rebalance drive extents, the dynamic pool algorithms first identify a drive extent to relocate, and a location on the new drive to place it. This movement is a copy operation, which frees the original location of the drive extent for use. This process is repeated until all new space is spread across the drives within the drive partnership group.

Figure 9 Dynamic pool single drive expansion example with additional drive
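The relocation loop described for Figure 9 can be modelled with a toy simulation. This is a minimal sketch under stated assumptions, not the dynamic pool algorithm itself: the function name is hypothetical, each drive is reduced to a count of used drive extents, and the choice to always relocate from the fullest existing drive is an assumption, since the paper does not say how the source extent is selected.

```python
def rebalance_onto_new_drive(used: list[int]) -> list[int]:
    """Model adding one empty drive and relocating used drive extents to it.

    used[i] is the number of used drive extents on existing drive i.
    Returns the per-drive used counts with the new drive last.
    """
    if not used:
        return [0]
    counts = used + [0]          # the new drive starts with no used extents
    new = len(counts) - 1
    while True:
        # Assumption: pick the fullest existing drive as the source.
        src = max(range(new), key=lambda i: counts[i])
        if counts[src] - counts[new] <= 1:
            break                # the new drive is now as full as the rest
        # Copy one extent to the new drive; the original location is freed.
        counts[src] -= 1
        counts[new] += 1
    return counts


before = [10, 10, 10, 10, 10]
after = rebalance_onto_new_drive(before)
print(after, "free extents released per old drive:",
      [b - a for b, a in zip(before, after)])
```

Each relocation frees one drive extent on an existing drive, so after the loop the free space is spread across different drives and can be combined into RAID extents.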

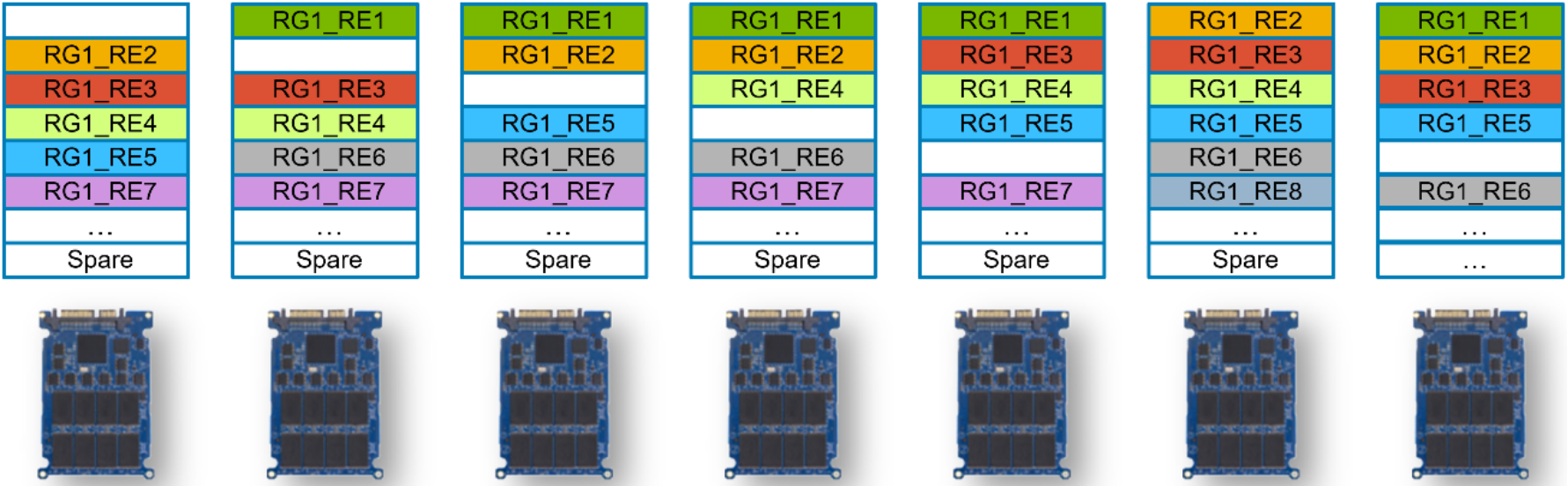

Figure 10 shows the state of the drive partnership group after the relocation of drive extents has occurred. Used drive extents from various drives within the drive partnership group have been relocated to the newly added drive to free space. At this point, free drive extents from different drives can be combined into RAID extents, and a new private dynamic pool RAID group and LUN will be created. Once this process completes, the usable capacity of the pool will be increased for use.

Figure 10 Dynamic pool single drive expansion example with rebalance complete

When the number of drives added to the pool for a particular tier matches the stripe width plus one drive and the spare space amount does not increase, space can quickly be made available to the pool. In this situation, drive extents, RAID extents, and the private RAID group and LUN can quickly be created on the new drives, and no upfront relocations need to occur. After the space is made available to the pool, a background relocation process is started to spread used and spare space extents out across the drives within the drive partnership group. This process ensures that application workloads, wear, and spare space are spread out among the drives within the drive partnership group.

In Dell UnityOS version 4.5, the dynamic pool expansion job for particular drive counts may be treated as multiple operations within the system. When a flash tier is expanded by a drive count equal to or less than the stripe width, a single drive expansion process occurs before the remaining drives are added to the pool. These operations are seen as a single job in Unisphere. This change does not affect the total amount of space being added to the pool, and it allows a portion of the space to be made available during the expansion process if the spare space requirement is not increased by the first drive of the expansion. This change does not affect single drive expansions, expanding the pool with a drive count equal to the stripe width + 1 or more, expanding an existing performance or capacity tier, or expanding the pool with a new drive type.

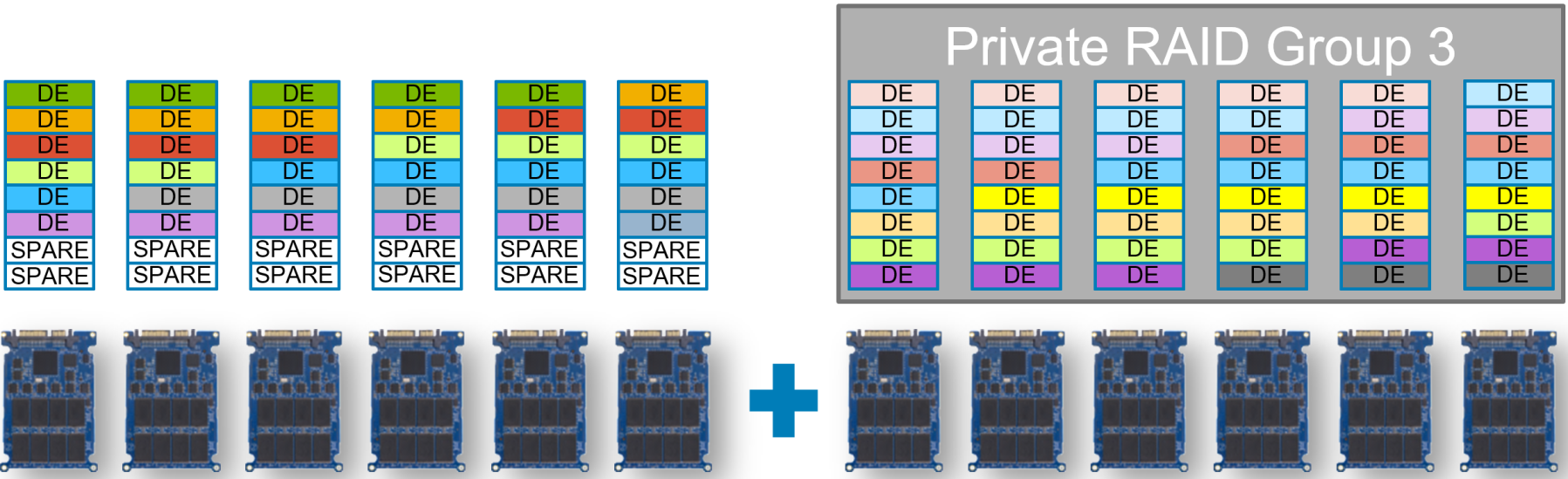

Figure 11 shows an example of a pool created with six drives. For this example, assume the pool is a RAID 5 (4+1), and after some period of time the pool is expanded using an additional six drives, bringing the total drive count to 12. This additional drive count matches the current stripe width of 4+1 plus an additional drive. In this instance, all dynamic pool components can quickly be created on the new drives, and space can be made available to the pool. After this is done, drive extents are relocated across the existing and new drives to spread out the spare space extents, as well as the drive extents containing user data. This operation is a background process, which will throttle as needed so it does not impact application I/O.

Figure 11 Dynamic pool multiple drive expansion example
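The drive-count rules from the preceding paragraphs can be written as two small predicates. This is a sketch under stated assumptions rather than the system's decision logic: the function names and the tier label `"flash"` are hypothetical, and the staged behavior is assumed to apply only as described for UnityOS 4.5.

```python
def stripe_width(data: int, parity: int) -> int:
    """Stripe width of a RAID configuration, e.g. RAID 5 (4+1) -> 5."""
    return data + parity


def quick_expansion(drives_added: int, data: int, parity: int,
                    spare_increases: bool) -> bool:
    """True when space can be made available without upfront relocations:
    at least stripe width + 1 drives are added and the spare space
    requirement does not increase."""
    return drives_added >= stripe_width(data, parity) + 1 and not spare_increases


def staged_flash_expansion(drives_added: int, data: int, parity: int,
                           tier: str) -> bool:
    """True when the job is handled as a single drive expansion followed by
    the remaining drives: a flash tier expanded by more than one drive but
    no more than the stripe width."""
    return tier == "flash" and 1 < drives_added <= stripe_width(data, parity)


# Figure 11 example: RAID 5 (4+1) pool expanded by six drives.
print(quick_expansion(6, data=4, parity=1, spare_increases=False))
# Three flash drives added to the same pool would be staged instead.
print(staged_flash_expansion(3, data=4, parity=1, tier="flash"))
```

For the Figure 11 example, six added drives equal the 4+1 stripe width plus one, so the first predicate holds and relocation runs only as a background task afterwards.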

When a drive expansion process is started, a job is created in the system and is run as a background task. The additional capacity is not available within the dynamic pool being expanded until the process completes. The job’s progress can be tracked in Unisphere by reviewing the Jobs page, or through Unisphere CLI or REST API. The time the overall expansion process takes depends directly on the number of drives being added and on their size and type.