
NVMe over Fabrics

Beginning with PowerMaxOS 5978.444.444, the PowerMax array introduced nonvolatile memory express (NVMe) over Fabrics (NVMeoF). Nonvolatile memory (NVM) is the media itself, such as NAND-based flash or storage class memory (SCM), which constitutes the PowerMax backend. NVMe is a group of standards that define a PCI Express (PCIe) interface used to efficiently access data storage volumes on NVM. NVMe provides concurrency, parallelism, and scalability to drive performance, and it replaces the SCSI interface. NVMeoF is the specification that details how to access that NVMe storage over the network between host and storage. The network transport can be Fibre Channel, TCP, RoCE, or any number of other next-generation fabrics. Dell supports NVMe over Fibre Channel, known as FC-NVMe, on the PowerMax 2000, 8000, 2500, and 8500. Dell also supports NVMe over TCP, known as NVMe/TCP, on the PowerMax 2500 and 8500.

FC-NVMe requires 32 Gb/s Fibre Channel modules (SLICs). The FN emulation is assigned to ports on these modules to support FC-NVMe. In addition, using FC-NVMe with a host requires a supported operating system and a supported HBA. With NVMeoF, targets are presented to a host as namespaces (equivalent to SCSI LUNs) in active/active or asymmetrical access modes (ALUA).

NVMe/TCP on the PowerMax 2500 and 8500 requires 25 Gb/s network modules (SLICs). The OR emulation is assigned to ports on these modules to support NVMe/TCP. In addition, using NVMe/TCP with a host requires a supported operating system and a supported network card. As with FC-NVMe, targets are presented to the host as namespaces in active/active or asymmetrical access modes (ALUA).

VMware vSphere 7 is the first release to support NVMeoF. It offers both NVMe over Fibre Channel (FC-NVMe) and NVMe over Remote Direct Memory Access (RDMA) using RoCE v2. Of these two, Dell supports only FC-NVMe, with vSphere 7 and higher on the PowerMax 2000, 8000, 2500, and 8500. Dell also supports NVMe/TCP with vSphere 7 U3 and higher on the PowerMax 2500 and 8500. ESXi hosts discover and use the presented namespaces, emulate the NVMeoF targets as SCSI targets internally, and present them.

Note: The PowerMax 2000 and 8000 do not support NVMe/TCP. The PowerMax 2500 and 8500 support FC-NVMe when running a minimum of PowerMaxOS 10.1.0.0.
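The protocol, array model, and vSphere combinations described above can be captured in a small lookup. The following is a minimal sketch only: the names `SUPPORT` and `is_supported` are illustrative and are not part of any Dell or VMware tool, and the table reflects only the combinations stated in this section. It does not check PowerMaxOS code levels, fixes, or hardware.

```python
from typing import Tuple

# Support matrix transcribed from the text above (illustrative names).
# vSphere versions are (major, update), e.g. (7, 3) means vSphere 7 U3.
SUPPORT = {
    "FC-NVMe": {"models": {"2000", "8000", "2500", "8500"}, "min_vsphere": (7, 0)},
    "NVMe/TCP": {"models": {"2500", "8500"}, "min_vsphere": (7, 3)},
}


def is_supported(protocol: str, model: str, vsphere: Tuple[int, int]) -> bool:
    """Return True if the combination appears in the matrix above.

    This does not validate PowerMaxOS levels, SLICs, HBAs, or switches.
    """
    entry = SUPPORT.get(protocol)
    if entry is None:
        # Protocol not listed in this section (for example RoCE).
        return False
    # Tuple comparison orders by major version first, then update level.
    return model in entry["models"] and vsphere >= entry["min_vsphere"]
```

For example, `is_supported("NVMe/TCP", "8000", (8, 0))` returns `False`, because the PowerMax 8000 is not listed for NVMe/TCP.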

Dell requires the following to use FC-NVMe with vSphere 7 or 8:

- A 32 Gb SLIC on the PowerMax array at a minimum code level of 5978.479.479 with fix 104661.

- A host HBA (Gen6) that supports FC-NVMe on the ESXi host.

- A Gen5 (8, 16 Gbps) or Gen6 (32 Gbps) switch.

Dell requires the following to use NVMe/TCP with vSphere 7 U3 and above:

- A host network card that supports NVMe/TCP on the ESXi host (the network speed does not have to match that of the array).

- A 25 Gb SLIC on the PowerMax array at a minimum code level of PowerMaxOS 10.0 (6079).

- A network switch that supports 25 Gb/s.

Both Dell and VMware place several restrictions on NVMeoF, which are listed below:

- A device can be presented to an ESXi host from a PowerMax by only one emulation: either NVMeoF or traditional FC/iSCSI, not both.

- No SRDF/Metro support.

- No support for VMware SRM using VMFS on the PowerMax.

- RDMs are not supported.

- No shared VMDKs until vSphere 7.0 U1.

- No SCSI-2 or SCSI-3 reservations since NVMeoF uses a different command set.

- There is no equivalent NVMeoF command for SCSI VAAI XCOPY. VMware uses the default software host copy instead.

- Namespaces (devices) and paths are limited depending on the version of vSphere.

Despite these restrictions, there is nothing unique about the configuration of NVMeoF on the PowerMax for VMware. The general, Dell-recommended best practices should be followed.

NVMeoF device presentation

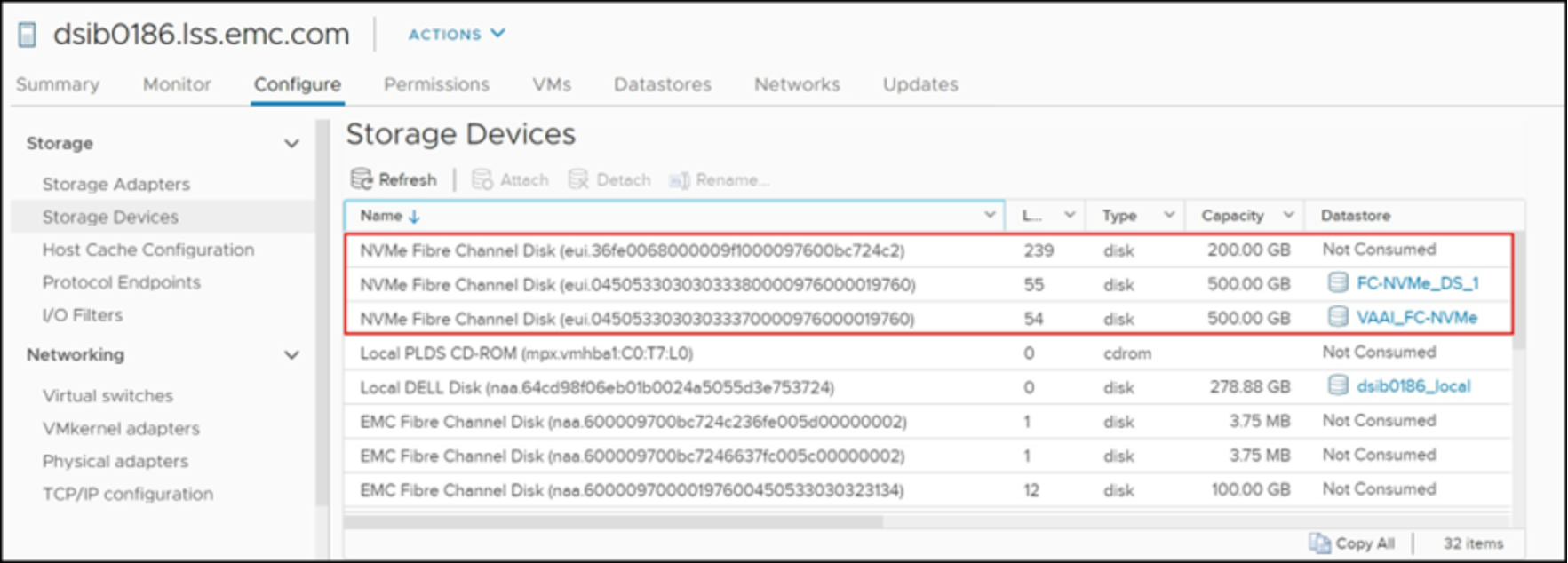

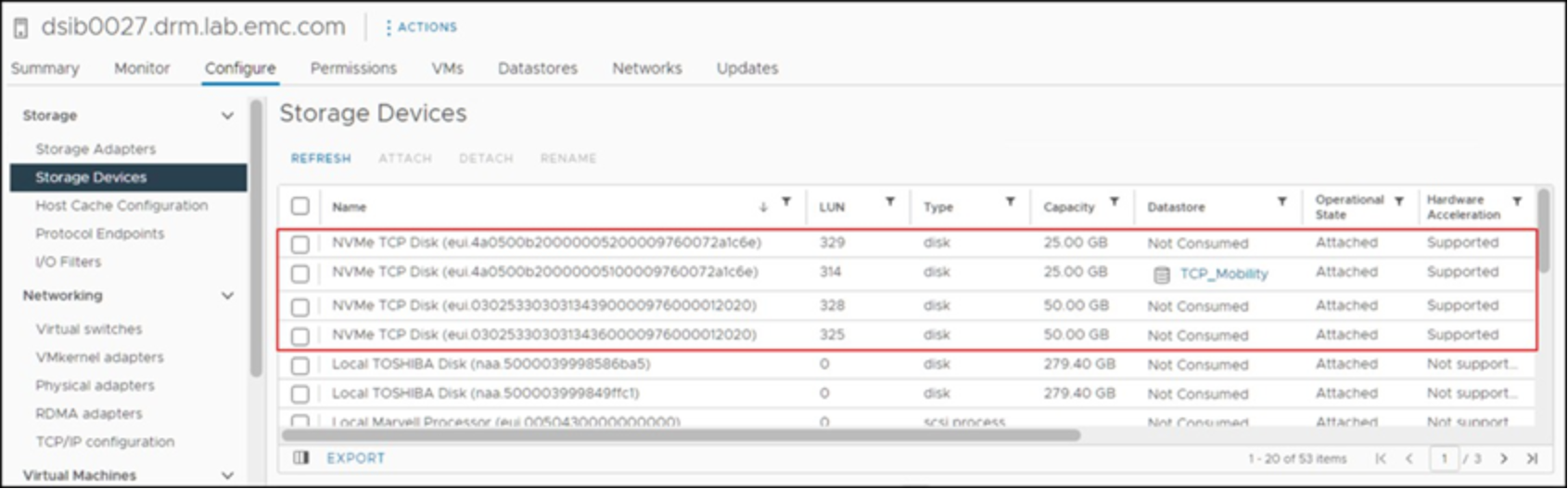

NVMeoF devices use the EUI (Extended Unique Identifier) prefix rather than the NAA (Network Addressing Authority) prefix. Either identifier, however, is unique to that LUN. The storage device generates the NAA or EUI number. Since the NAA or EUI is unique to the LUN, the same identifier is used on every ESXi host to which the device is presented. This scheme is what permits ESXi hosts to recognize the same datastore on that LUN, even if those hosts are in different vCenters (or standalone). Figure 3 shows how FC-NVMe devices appear in the vSphere Client, and Figure 4 shows NVMe/TCP devices. They are classified as NVMe disks rather than as (Dell) EMC disks. Like devices that are presented over FC and iSCSI, NVMeoF devices support both the mobility ID and compatibility ID formats, each represented below.

Figure 3. FC-NVMe devices in the vSphere Client

Figure 4. NVMe/TCP devices in the vSphere Client
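The prefix distinction described above can be used to tell how a device is presented. The sketch below assumes that the identifier is a string beginning with `eui.` or `naa.`. The function name `presentation_type` and the sample identifiers are hypothetical and are not taken from a real array.

```python
def presentation_type(device_id: str) -> str:
    """Classify a device identifier by its prefix.

    Assumes the prefix precedes the first '.' in the identifier.
    Identifiers with any other prefix are reported as 'unknown'.
    """
    prefix = device_id.split(".", 1)[0].lower()
    if prefix == "eui":
        return "NVMeoF"
    if prefix == "naa":
        return "SCSI (FC/iSCSI)"
    return "unknown"


if __name__ == "__main__":
    # Hypothetical identifiers, for illustration only.
    for dev in ("eui.0123456789abcdef", "naa.0123456789abcdef0123456789abcdef"):
        print(dev, "->", presentation_type(dev))
```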

NVMeoF detail in vSphere

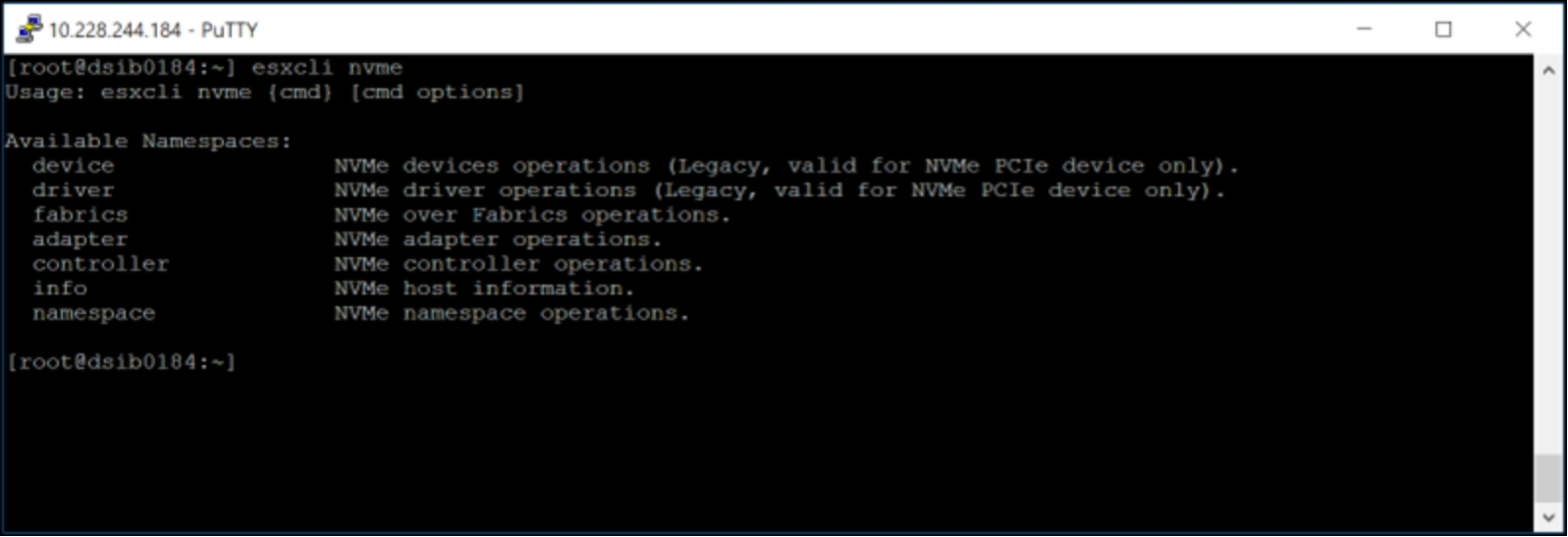

VMware offers specific esxcli commands for NVMe to list namespaces, controllers, drivers, and so forth. The available options are shown in Figure 5.

Figure 5. Esxcli nvme command
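As a rough illustration of the esxcli namespace mentioned above, the following sketch builds the argument lists for a few `esxcli nvme ... list` commands without running them. The helper name `nvme_list_command` is hypothetical, and the set of targets is an assumption that should be checked against the `esxcli nvme` help output on the ESXi release in use.

```python
# Targets assumed to accept a "list" subcommand under "esxcli nvme".
# Verify against the help output of the ESXi release in use.
NVME_LIST_TARGETS = ("adapter", "controller", "namespace")


def nvme_list_command(target: str) -> list:
    """Return the argv list for 'esxcli nvme <target> list'.

    The command is only constructed here, never executed.
    """
    if target not in NVME_LIST_TARGETS:
        raise ValueError("unsupported target: " + target)
    return ["esxcli", "nvme", target, "list"]


if __name__ == "__main__":
    for target in NVME_LIST_TARGETS:
        # Print each command so that it can be pasted into an ESXi shell.
        print(" ".join(nvme_list_command(target)))
```

Building the commands as lists keeps the sketch testable off-host. On an ESXi host the same strings can be entered directly in the shell.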

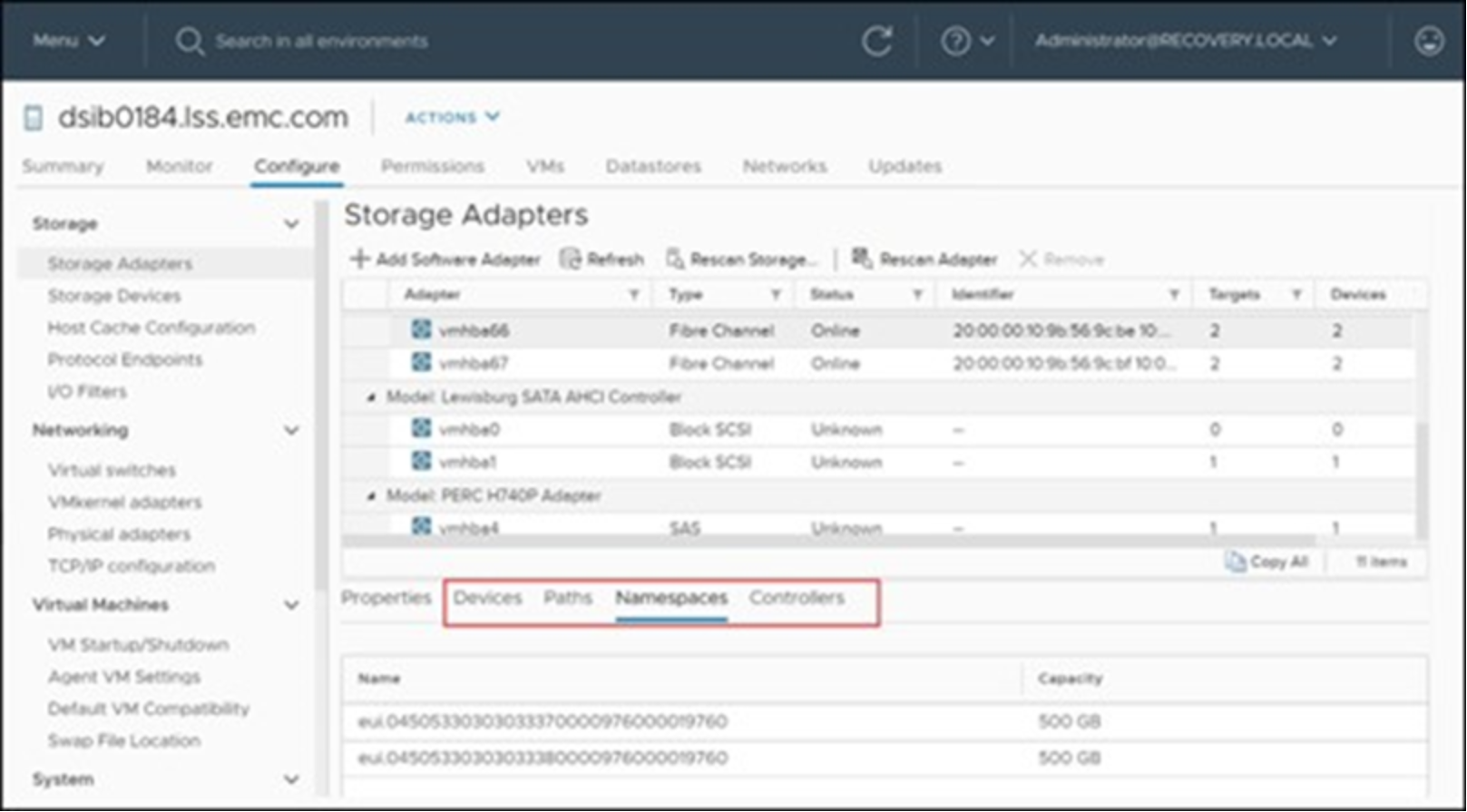

In addition, many of the command outputs are available in the vSphere Client. Highlight the storage adapter through which the NVMe devices are presented. Figure 6 shows the details that are available for devices, paths, namespaces, and controllers.

Figure 6. NVMeoF detail in the vSphere Client

Migration from FC/iSCSI to NVMeoF

There are two supported methods for migrating a VM from a VMFS datastore on a SCSI-presented device to an NVMe-presented device: online and offline.

The online method uses VMware Storage vMotion to move the VM between protocols. This method requires two devices: the SCSI VMFS datastore where the VM resides, and a new, NVMe-presented device with a new VMFS datastore.

The offline method entails resignaturing the VMFS datastore after presenting it over the new NVMe protocol. Recall that the signature of a VMFS datastore partially consists of the device ID. Since SCSI and NVMe present different formats for the ID, VMware shows a mismatch when the device is presented through a different protocol. Therefore, the device must be resignatured, and the VMs re-registered.

Each method has its pros and cons. The online method involves no downtime, but it uses double the storage (at least initially) and can be time consuming. The offline method is fast, but it requires downtime, however short. A customer should weigh these pros and cons to come up with a suitable plan for migration. For small environments, Storage vMotion may be perfectly acceptable. Large environments, however, may require both methods, with the customer determining which datastores must remain online and which can tolerate downtime.
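The trade-off above can be sketched as a simple per-datastore plan. This is one possible policy and not a Dell or VMware procedure: the function name `plan_migration` and the datastore names are hypothetical, and the only input considered is whether a datastore must remain online.

```python
def plan_migration(datastores: dict) -> dict:
    """Map each datastore name to 'online' or 'offline'.

    datastores: {name: must_stay_online}. Datastores that must stay
    online are assigned Storage vMotion ('online'), which needs a second,
    NVMe-presented device. All others are assigned the faster resignature
    approach ('offline'), which requires downtime.
    """
    return {
        name: "online" if must_stay_online else "offline"
        for name, must_stay_online in datastores.items()
    }


if __name__ == "__main__":
    # Hypothetical datastores, for illustration only.
    print(plan_migration({"prod_db": True, "test_lab": False}))
```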

Dell, together with VMware, validated the offline method in the KB article "SCSI to NVMe VMware VMFS Datastore Offline Migration Steps."