RoCE brings application performance and efficiency improvements for customers running RDMA applications in a converged Ethernet infrastructure.

A converged Ethernet environment carries storage, regular user data, and RDMA traffic. Each traffic type is assigned a particular Class of Service (CoS) value. Each value is mapped to a particular output queue, and the infrastructure or fabric processes each queue differently.

The fabric operating system supporting RoCE ensures lossless RDMA traffic by implementing a full set of Quality-of-Service (QoS) features such as policing, marking, and specific link bandwidth allocation.

With RoCEv1, all RDMA traffic is restricted to a common Layer 2 domain, so the client and server hosts must be part of the same Layer 2 domain.

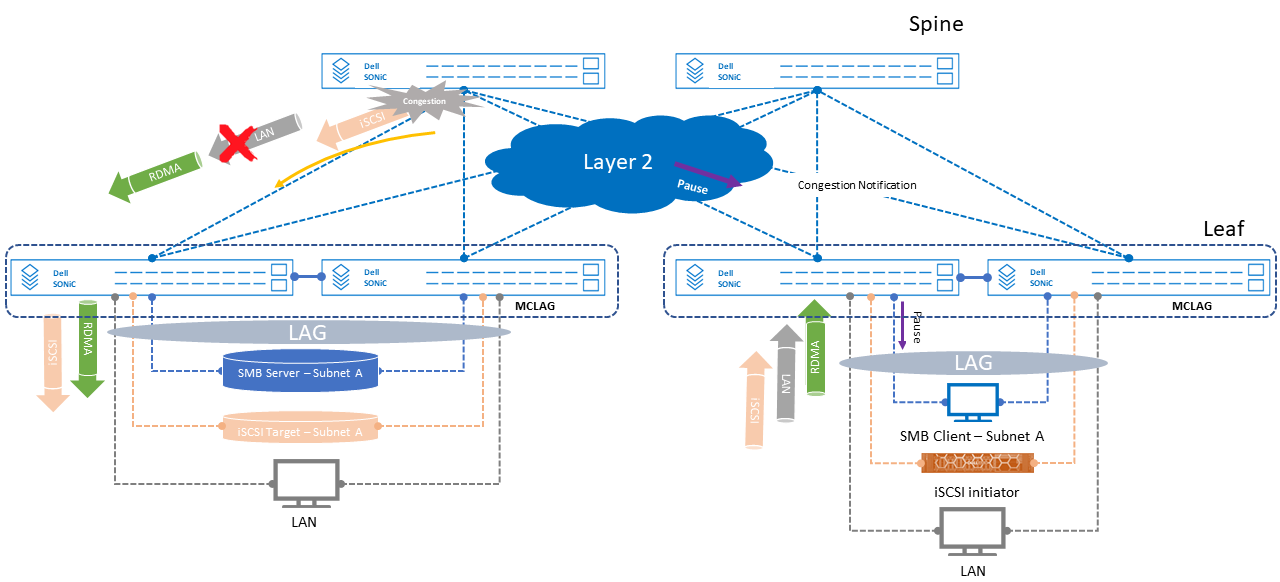

The following figure shows a common converged environment with three types of data traffic: RDMA, iSCSI, and regular local area network (LAN) traffic. Each type of data traffic is assigned a unique class of service (802.1p) value.

MC-LAG is configured on the leaf switches and Link Aggregation (LAG) is configured on the switches and end-hosts.

With Dell Enterprise SONiC version 4.1, RoCEv1 and RoCEv2 are enabled through a single CLI command, "roce enable", entered in configuration mode. This command autoconfigures the necessary features to deploy RoCE on the fabric. The autoconfiguration profile assigns RoCE traffic to 802.1p traffic-class 3, iSCSI traffic to traffic-class 4, congestion notification packets (CNP) to traffic-class 6, and all other traffic to traffic-class 0.
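The assignments made by the autoconfiguration profile can be pictured as a small lookup table. The Python sketch below is only an illustrative model of the assignments listed above, not switch configuration or a Dell API; the names `PROFILE_TRAFFIC_CLASS`, `DEFAULT_TRAFFIC_CLASS`, and `traffic_class_for` are hypothetical.

```python
# Illustrative model of the traffic-class assignments described in the text.
# These names are hypothetical and do not correspond to any switch API.
PROFILE_TRAFFIC_CLASS = {
    "roce": 3,   # RDMA (RoCE) traffic
    "iscsi": 4,  # iSCSI storage traffic
    "cnp": 6,    # congestion notification packets
}
DEFAULT_TRAFFIC_CLASS = 0  # all other traffic


def traffic_class_for(traffic_type: str) -> int:
    """Return the traffic class the profile assigns to a traffic type."""
    return PROFILE_TRAFFIC_CLASS.get(traffic_type.lower(), DEFAULT_TRAFFIC_CLASS)
```

For example, `traffic_class_for("iscsi")` returns 4, and any type not listed, such as `"lan"`, falls back to traffic class 0.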

RDMA and iSCSI traffic are given equal weight so that they share the link bandwidth equally. CNPs are given strict priority so that this important notification can be sent either downstream or upstream, depending on where congestion takes place in the fabric.
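The effect of combining strict priority with equal weights can be sketched with a little arithmetic. The Python function below is a simplified model, not the switch scheduler: it assumes a work-conserving scheduler (bandwidth one class does not use is offered to the other), it ignores regular LAN traffic, and the name `share_link` and its parameters are hypothetical. All rates are in the same unit, for example Gb/s.

```python
def share_link(link_rate, cnp_load, rdma_load, iscsi_load):
    """Split link_rate among CNP, RDMA, and iSCSI offered loads.

    Simplified model: CNP is served first (strict priority); the rest of
    the link is split equally between RDMA and iSCSI. Unused share is
    handed to the other class (work-conserving behavior is an assumption).
    """
    # Strict priority: CNP takes what it needs before anything else.
    cnp_bw = min(cnp_load, link_rate)
    remaining = link_rate - cnp_bw

    # Equal weights: each storage class is entitled to half of the rest.
    half = remaining / 2
    rdma_bw = min(rdma_load, half)
    iscsi_bw = min(iscsi_load, half)

    # Redistribute whatever one class left unused to the other class.
    leftover = remaining - rdma_bw - iscsi_bw
    extra_rdma = min(rdma_load - rdma_bw, leftover)
    rdma_bw += extra_rdma
    leftover -= extra_rdma
    iscsi_bw += min(iscsi_load - iscsi_bw, leftover)

    return {"cnp": cnp_bw, "rdma": rdma_bw, "iscsi": iscsi_bw}
```

Under these assumptions, on a link of rate 100 with a CNP load of 1 and RDMA and iSCSI each offering 80, the two storage classes each receive 49.5. If RDMA offers only 20 and iSCSI offers 90 with no CNP load, RDMA receives 20 and iSCSI receives the remaining 80.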

The following figure shows:

- Congestion at the spine switch.

- Congestion notification generated by the spine switch is sent toward the leaf switch that is connected to the RDMA client.

- Pause frames generated by the spine switch are sent toward the leaf switch that is connected to the RDMA server to temporarily pause data traffic.

- Both RDMA and iSCSI storage traffic continue to flow, equally sharing bandwidth as configured.

Because RoCEv1 is being implemented, all RDMA traffic is restricted to the same subnet or broadcast domain. iSCSI and LAN traffic, on the other hand, can be part of different subnets if necessary.

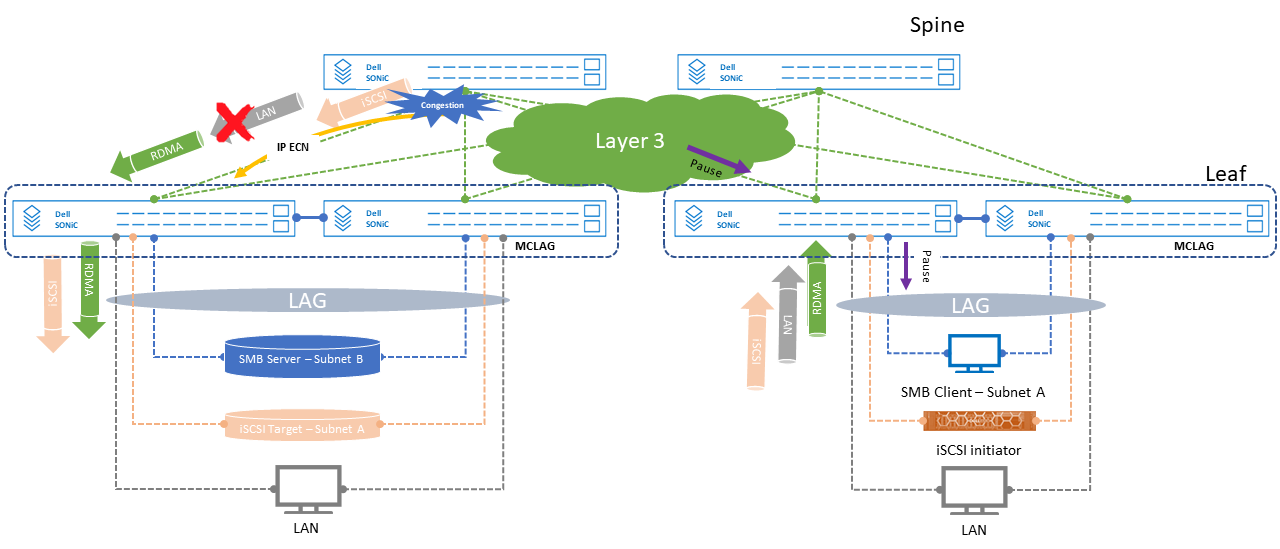

The following figure shows a RoCEv2 implementation, where the RDMA client and server are on different subnets or networks. The same set of quality-of-service settings, such as marking, classification, congestion notification, and others, is carried through the IP cloud.

The only difference is that, where RoCEv1 uses 802.1p values for classification and marking, RoCEv2 translates the 802.1p values into differentiated services code point (DSCP) values within the IP header. These DSCP values are carried across the IP cloud to ensure lossless RoCE traffic end-to-end.

The following figure shows the same set of outcomes as the previous figure; the only difference is the use of IP explicit congestion notification (ECN) for congestion notification.
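The DSCP and ECN values discussed above travel in the same byte of the IPv4 header: the upper six bits carry the DSCP and the lower two bits carry the ECN field. The Python sketch below shows that layout and how a congested device marks a packet as Congestion Experienced (CE). It is a minimal illustration, not switch code; the function names are hypothetical, and the DSCP value 26 used in the usage note is only a placeholder, not a value taken from this document.

```python
# ECN codepoints carried in the two low-order bits of the IP header byte.
NOT_ECT = 0b00  # sender does not support ECN
ECT_1 = 0b01    # ECN-capable transport, codepoint ECT(1)
ECT_0 = 0b10    # ECN-capable transport, codepoint ECT(0)
CE = 0b11       # congestion experienced


def build_tos(dscp: int, ecn: int) -> int:
    """Pack a 6-bit DSCP and a 2-bit ECN codepoint into one byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must fit in 6 bits")
    if not 0 <= ecn <= 3:
        raise ValueError("ECN must fit in 2 bits")
    return (dscp << 2) | ecn


def split_tos(tos: int) -> tuple:
    """Return (dscp, ecn) extracted from the byte."""
    return tos >> 2, tos & 0b11


def mark_congestion(tos: int) -> int:
    """Set CE on an ECN-capable packet; leave non-ECN packets unchanged."""
    dscp, ecn = split_tos(tos)
    if ecn in (ECT_0, ECT_1):
        return build_tos(dscp, CE)
    return tos
```

For instance, `mark_congestion(build_tos(26, ECT_0))` keeps DSCP 26 and changes only the ECN bits to CE, so the classification of the packet is unaffected by the congestion mark.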

Deployment best practices

Use the following best practices when implementing RoCE:

- Implement Jumbo frames.

- Implement MC-LAG peer links on the leaf switches to provide workload link redundancy.

- The minimum number of leaf interconnect links should be two.

- Separate RoCE and other traffic types through their own subnets or VLANs.

- Assign a specific 802.1p Class of Service value to RoCE.

- RoCEv2 is supported only on the S5232F-ON, S5248F-ON, S5296F-ON, and Z9332F-ON platforms.

- When RoCEv2 uses PFC and ECN simultaneously, ECN functions as the primary congestion management mechanism; PFC is secondary.

- Configure breakout ports before enabling RoCEv2.

- Lossless operation is supported only for unicast traffic. The multicast traffic pause setting is not honored.

- Lossless traffic is tagged. Use lossless traffic (lossless DSCP) with dot1p priorities 3 and 4. If you are using DSCP-based PFC, map the DSCP values assigned to traffic class 3 to VLAN dot1p priority 3, and map the DSCP values assigned to traffic class 4 to VLAN dot1p priority 4.

- On servers, Dell Technologies recommends that you set the DSCP value 48 for congestion notification packets (CNP), if possible. This setting allows a RoCEv2-enabled switch to prioritize the downstream server traffic it receives by applying strict priority queuing.

- The server and client network interface cards (NICs) must support RDMA.

- Apply all quality-of-service configurations on a port channel, not the port channel member links.
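The DSCP-based PFC guidance in the list above amounts to a two-step lookup: DSCP to traffic class, then traffic class to dot1p priority for the lossless classes. The Python sketch below models that lookup under stated assumptions. Only the DSCP value 48 for CNP and the traffic classes 3, 4, and 6 come from this document; the DSCP values 26 and 34 are hypothetical placeholders, and the table and function names are illustrative rather than any switch API.

```python
# DSCP -> traffic class. DSCP 48 (CNP) is the value recommended in the text;
# 26 and 34 are hypothetical placeholders for RoCE and iSCSI markings.
DSCP_TO_TRAFFIC_CLASS = {
    26: 3,  # hypothetical DSCP for RoCE traffic
    34: 4,  # hypothetical DSCP for iSCSI traffic
    48: 6,  # CNP
}

# Lossless traffic classes map to the dot1p priority with the same number.
LOSSLESS_TC_TO_DOT1P = {3: 3, 4: 4}


def classify(dscp: int) -> tuple:
    """Return (traffic_class, dot1p_priority, is_lossless) for a DSCP value.

    dot1p_priority is None for traffic classes that are not lossless,
    because the text only specifies the mapping for classes 3 and 4.
    """
    traffic_class = DSCP_TO_TRAFFIC_CLASS.get(dscp, 0)
    dot1p = LOSSLESS_TC_TO_DOT1P.get(traffic_class)
    return traffic_class, dot1p, dot1p is not None
```

With these placeholder values, `classify(26)` yields traffic class 3 with dot1p priority 3 and lossless handling, while `classify(48)` yields traffic class 6 with no lossless mapping.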

By reducing network latency and offloading CPU resources, RoCE increases performance in key applications such as search, storage, database, financial, and high-rate transaction applications.

Furthermore, this efficiency and application performance improvement leads to energy savings and footprint reduction in Ethernet-based data centers.

The References section of this document provides the link to the Dell Enterprise SONiC RoCE configuration chapter.