
Navigating the modern data landscape: the need for an all-in-one solution

Mon, 18 Mar 2024 19:56:59 -0000


There are two revolutions brewing inside every enterprise. We are all very familiar with the first one - the frenzied rush to expand an organization's AI capabilities, which leads to an exponential growth in data creation, a rise in availability of high-performance computing systems with multi-threaded GPUs, and the rapid advancement of AI models. The situation creates a perfect storm that is reshaping the way enterprises operate. Then, there is a second revolution that makes the first one a reality – the ability to harness this awesome power and gain a competitive advantage to drive innovation. Enterprises are racing towards a modern data architecture that seeks to bring order to their chaotic data environment.

The Need For An All-In-One Solution

Data platforms are constantly evolving. Yet despite a plethora of options, such as data lakes, data warehouses, cloud data warehouses, and even cloud data lakehouses, enterprises are still struggling. This is because the choices available today are suboptimal.

Cloud-native solutions offer simplicity and scalability, but migrating all data to the cloud can be a daunting task and can end up being significantly more expensive over the long term. Moreover, the loss of control over proprietary data, particularly in the realm of AI, is a major cause for concern. On the other hand, traditional on-premises solutions require significantly more expertise and resources to build and maintain. Many organizations simply lack the skills and capabilities needed to construct a robust data platform in-house.

A customer once told me – “We’ve heard from so many vendors but ultimately there is no easy button for us.”

When Dell Technologies set out to build that easy button, we started with what enterprises needed most: infrastructure, software, and services, all seamlessly integrated. We created a tailor-made solution with right-sized compute and a highly performant query engine that is pre-integrated and pre-optimized to streamline IT operations. We incorporated built-in enterprise-grade security that can also integrate seamlessly with third-party security tools. To enable rapid support, we staffed a bench of experts, offering end-to-end maintenance and deployment services. We also knew the solution needed to be future proof, not only anticipating future innovations but also accommodating the diverse needs of users today. To support this idea, we made the choice to use open data formats, which means an organization’s data is no longer locked in to a proprietary format or vendor. To make the transition easier, the solution makes use of built-in enterprise-ready connectors that ensure business continuity. Ultimately, our goal was to deliver an experience that is easy to install, easy to use, easy to manage, easy to scale, and easy to future-proof.

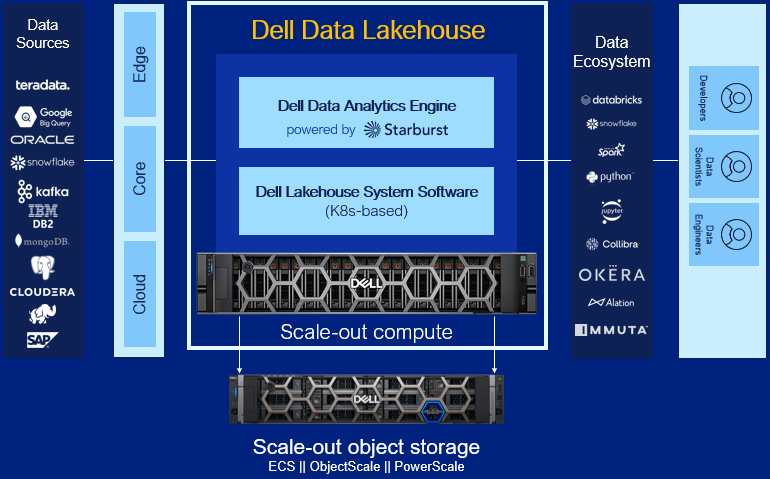

Dell Data Lakehouse’s Core Capabilities

Let’s dig into each component of the solution.

- Data Analytics Engine, powered by Starburst: A high-performance distributed SQL query engine built on Starburst, which is based on Trino. It runs fast analytic queries against data lakes, lakehouses, and distributed data sources at internet scale. It integrates global security with fine-grained access controls, supports ad hoc and long-running ELT workloads, and is a gateway to building high-quality data products and powering AI and analytics workloads. Dell’s Data Analytics Engine also includes exclusive features that help dramatically improve performance when querying data lakes. Stay tuned for more info!

- Data Lakehouse System Software: This new system software is the central nervous system of the Dell Data Lakehouse. It simplifies lifecycle management of the entire stack, drives down IT OpEx with pre-built automation and integrated user management, provides visibility into cluster health, ensures high availability, enables easy upgrades and patches, and lets admins control all aspects of the cluster from one convenient control center. Based on Kubernetes, it’s what converts a data lakehouse into an easy button for enterprises of all sizes.

- Scale-out Lakehouse Compute: Purpose-built Dell compute and networking hardware, matched to compute-intensive data lakehouse workloads, comes pre-integrated into the solution. Scale compute independently of storage by adding more as needs grow.

- Scale-out Object Storage: Dell ECS, ObjectScale and PowerScale deliver cyber-secure, multi-protocol, resilient and scale-out storage for storing and processing massive amounts of data. Native support for Delta Lake and Iceberg ensures read / write consistency within and across sites for handling concurrent, atomic transactions.

- Dell Services: Accelerate AI outcomes with help at every stage from trusted experts. Align a winning strategy, validate data sets, quickly implement your data platform and maintain secure, optimized operations.

- ProSupport: Comprehensive, enterprise-class support on the entire Dell Data Lakehouse stack from hardware to software delivered by highly trained experts around the clock and around the globe.

- ProDeploy: Expert delivery and configuration assure that you are getting the most from the Dell Data Lakehouse on day one. With 35 years of experience building best-in-class deployment practices and tools, backed by elite professionals, we can deploy 3x faster¹ than in-house administrators.

- Advisory Services Subscription for Data Analytics Engine: Work with a proactive, dedicated expert who helps maximize the value of your Dell Data Analytics Engine environment, guiding your team through the design and rollout of new use cases to optimize and scale your environment.

- Accelerator Services for Dell Data Lakehouse: Fast track ROI with guided implementation of the Dell Data Lakehouse platform to accelerate AI and data analytics.

Learn More

With these capabilities combined, Dell continues to innovate alongside our customers to help them exceed their goals in the face of data challenges. We aim to help our customers take advantage of the AI revolution and the rapid change in the market, so they can harness the power of their data, gain a competitive advantage, and drive innovation. Enterprises are racing towards a modern data architecture, and it's critical they don’t get stuck at the starting line.

For detailed information on this exciting product, refer to our technical guide. For other information, visit Dell.com/datamanagement.

Source

1 Based on a May 2023 Principled Technologies study “Using Dell ProDeploy Plus Infrastructure can improve deployment times for Dell Technology”

Journey into the analytics space with Dell & Starburst

Thu, 02 Feb 2023 14:50:00 -0000


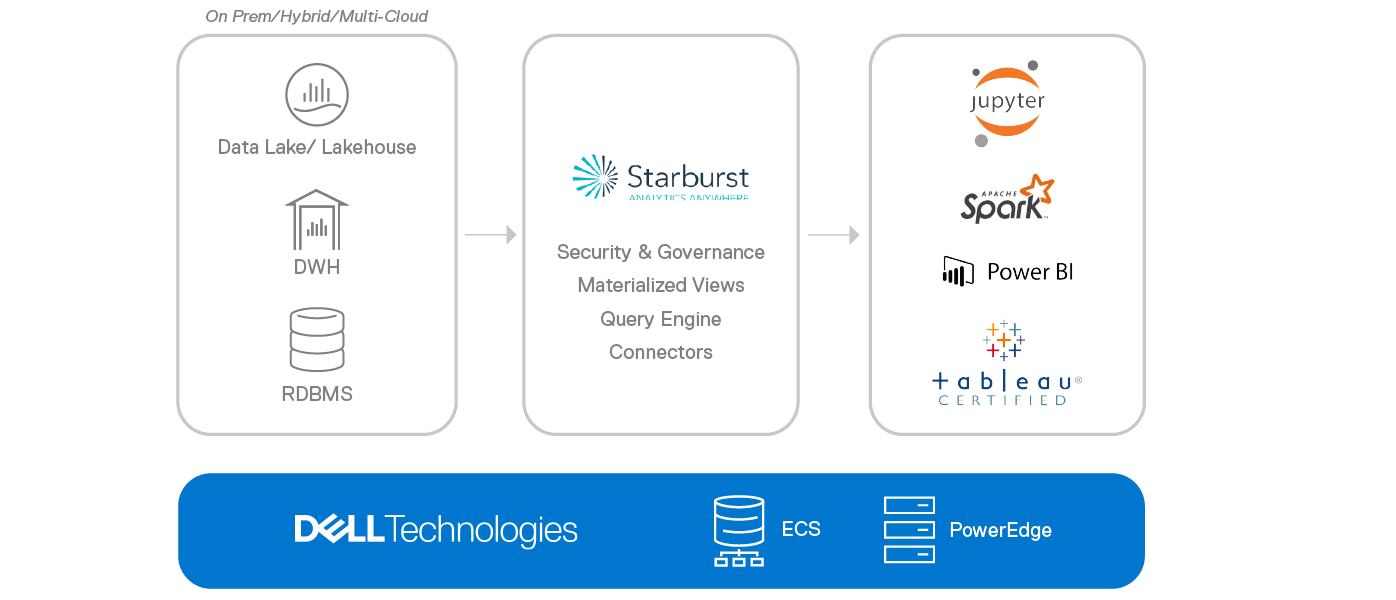

Data silos are a growing concern for enterprises today. They make it harder to discover, access, and activate data. At Dell Technologies, we have helped our customers work through these challenges for many years, from building fraud detection to enabling life-saving healthcare. We understand that getting the data strategy right can help teams solve their real-world problems. Dell Technologies has engaged in joint engineering and validation efforts to integrate our leading server product, Dell PowerEdge, and our leading object storage product, Dell ECS, with industry leaders in the data analytics space.

Today, we are happy to announce a collaboration with analytics leader Starburst Data, which will allow our analytics customers to deliver flexible and efficient architectures by combining the fastest and most secure query engine and leading hardware platforms for compute and storage.

Data virtualization and federated query analytics

Starburst is built on top of Trino, the open-source, high-performance distributed SQL engine known for running fast analytic queries against data sources ranging in size from GBs to PBs. Trino was formerly called PrestoSQL. In fact, in 2020, we released a white paper describing how Presto’s capabilities translate remarkably well to Dell ECS object storage, and how Trino’s rich feature set positions it well to win the price/performance battle against Hadoop and other technologies in most cases!
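To make the idea of a federated query a little more concrete, here is a minimal sketch in Python that only builds the SQL text for a join across two data sources. It relies on Trino's `catalog.schema.table` naming for tables. The helper `federated_join_sql` and the catalog, schema, and table names are hypothetical and chosen purely for illustration; they are not part of any Dell or Starburst product.

```python
def federated_join_sql(left: str, right: str, key: str, columns: list[str]) -> str:
    """Build a Trino-style SQL join across two fully qualified tables.

    Each table name is expected as catalog.schema.table, which is how Trino
    addresses tables that live in different data sources.
    """
    for name in (left, right):
        if len(name.split(".")) != 3:
            raise ValueError(f"expected catalog.schema.table, got {name!r}")
    select_list = ", ".join(columns)
    return (
        f"SELECT {select_list}\n"
        f"FROM {left} AS l\n"
        f"JOIN {right} AS r ON l.{key} = r.{key}"
    )


# Hypothetical catalogs: 'lake' (object storage) and 'crm' (a relational database).
query = federated_join_sql(
    left="lake.sales.orders",
    right="crm.public.customers",
    key="customer_id",
    columns=["r.name", "l.order_total"],
)
print(query)
```

The resulting statement could be submitted through whichever client you already use. The point of the sketch is that a single query can reference tables from different catalogs without first copying the data into one system.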

The Starburst Enterprise Platform distribution of Trino was created to help enterprises extract more value from their Trino deployments through global security with fine-grained access controls, stable and reliable releases, additional connectors, data caching, and enterprise support including guidance from the most qualified group of Trino experts anywhere.

For these reasons, we chose to partner with Starburst and deploy their software in our labs to evaluate its performance on Dell hardware. We used the industry-standard TPC-DS test suite to benchmark Starburst performance by measuring the total execution time as well as the per-query execution time. We also varied the hardware resources to model how Starburst’s performance varies. We detailed our setup and experiments for reproducibility in this paper. Our goal was to provide our customers with a validated design reference for deploying Starburst and scaling it appropriately as the query volume, concurrency, or data volume grows.
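As a rough illustration of the two measurements mentioned above (per-query and total execution time), the following Python sketch times a set of named queries. It is not the harness used in the paper. `time_queries` and `fake_run_query` are hypothetical names, the executor is a stand-in that just sleeps, and the query texts are placeholders rather than actual TPC-DS queries.

```python
import time
from typing import Callable, Dict, Tuple


def time_queries(
    run_query: Callable[[str], object],
    queries: Dict[str, str],
) -> Tuple[Dict[str, float], float]:
    """Run each query once; return per-query seconds and their sum."""
    per_query: Dict[str, float] = {}
    for name, sql in queries.items():
        start = time.perf_counter()
        run_query(sql)
        per_query[name] = time.perf_counter() - start
    total = sum(per_query.values())
    return per_query, total


def fake_run_query(sql: str) -> None:
    # Stand-in for a real client call; sleeps briefly to simulate work.
    time.sleep(0.01)


# Placeholder query texts, not actual TPC-DS queries.
queries = {"q1": "SELECT 1", "q2": "SELECT 2"}
per_query, total = time_queries(fake_run_query, queries)
for name, seconds in per_query.items():
    print(f"{name}: {seconds:.3f}s")
print(f"total: {total:.3f}s")
```

In a real run, `fake_run_query` would be replaced by a function that submits the SQL to the cluster and waits for the result, and the same loop could be repeated across hardware configurations to compare them.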

Deploy and scale on Dell infrastructure

Starburst is based on a distributed Coordinator-Worker architecture. In our setup, we run coordinator and worker nodes of Starburst Enterprise on Dell PowerEdge servers and use unstructured storage such as Dell Elastic Cloud Storage (ECS) for materialized views, data products, caching, and more.

We tested the reference architecture on PowerEdge R740XD (14G), but we think the latest PowerEdge server portfolio (15G) can take performance to a new level with generational improvements such as:

- High-performance computing - delivering up to 43% greater performance by leveraging Intel's 3rd Gen Xeon Scalable processors.

- PCIe Gen 4 - doubling the throughput over prior server generations, with eight lanes of data.

- Comprehensive security - with data encryption, root-of-trust protection, and supply chain verification.

- Improved energy efficiency - with the latest cooling technology, offering up to a 60% reduction in power consumption.

- Flexible, autonomous management - delivering up to 85% time savings by freeing up the skilled hands of IT professionals for other vital projects.

We used the ECS EX500 as a data lake source. ECS is the world’s most cyber-secure object storage that delivers scalable public cloud services with the reliability and control of a private-cloud infrastructure. With comprehensive protocol support for unstructured data (object and file) and a variety of deployment options (turnkey appliance or software-defined), ECS can support a wide range of workloads, especially big data analytics. And best of all, Starburst works seamlessly with ECS!

Harness data to solve real world problems

Data teams can start taking advantage of our collaboration now. Today’s announcement allows customers to:

- Quickly deploy a thoroughly tested architecture comprising Dell hardware, Starburst Enterprise Platform, and other software on-premises

- Effectively partner with IT to move data intelligently into a data lake / data warehouse based on usage patterns

- Prevent vendor lock-in with support for the most popular open table and file formats

- Separate compute and storage and scale flexibly and efficiently

- Harness the innovations in our latest generation of ECS appliances as a data lake storage

We’re very excited about the collaboration and can’t wait for you to check out the reference architecture to learn about the announcement and the solution.