MLPerf™ Inference v3.1 Edge Workloads Powered by Dell PowerEdge Servers

Tue, 19 Sep 2023 12:07:00 -0000


Abstract

Dell Technologies recently submitted results to the MLPerf Inference v3.1 benchmark suite. This blog examines the results on the Dell PowerEdge XR4520c, PowerEdge XR7620, and PowerEdge XR5610 servers with the NVIDIA L4 GPU.

MLPerf Inference background

The MLPerf Inference benchmarking suite is a comprehensive framework designed to fairly evaluate the performance of a wide range of machine learning inference tasks on various hardware and software configurations. The MLCommons™ community aims to provide a standardized set of deep learning workloads, along with fair measuring and auditing methodologies. The MLPerf Inference submission results give researchers, customers, and partners valuable information for making informed decisions about inference capabilities on various edge and data center systems.

The MLPerf Inference edge suite includes three scenarios:

- Single-stream: This scenario’s performance metric is the 90th percentile latency. A common use case is the Siri voice assistant on iOS products, where Siri’s engine waits until the query has been asked and then returns results.

- Multi-stream: This scenario uses a stricter performance metric, the 99th percentile latency. An example use case is self-driving cars, which use multiple camera and lidar inputs to make real-time driving decisions that have a direct impact on what happens on the road.

- Offline: This scenario is measured by throughput. An example of Offline processing on the edge is a phone sharing an album suggestion that is based on a recent set of photos and videos from a particular event.
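To make the three metrics concrete, the following minimal Python sketch computes a percentile latency and an Offline throughput from raw measurements. It is an illustration only: the function names, the nearest-rank percentile method, and the sample latencies are assumptions made for this example, and the official MLPerf load generator may compute its statistics differently.

```python
import math


def percentile_latency(latencies_ms, percentile):
    """Return the nearest-rank percentile of a list of latencies.

    Nearest-rank is an assumption for this sketch: the result is the
    smallest observed latency such that at least `percentile` percent
    of all samples are less than or equal to it.
    """
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    rank = math.ceil(percentile * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def offline_throughput(num_samples, elapsed_seconds):
    """Return throughput in samples per second."""
    return num_samples / elapsed_seconds


# Hypothetical latencies of 1.0 ms through 100.0 ms, not measured data.
latencies = [float(i) for i in range(1, 101)]

single_stream_metric = percentile_latency(latencies, 90)  # 90th percentile
multi_stream_metric = percentile_latency(latencies, 99)   # 99th percentile
offline_metric = offline_throughput(1000, 4.0)            # samples per second

print(single_stream_metric, multi_stream_metric, offline_metric)
```

For the latency scenarios a lower value is better, while for Offline a higher value is better.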

Edge computing

In traditional cloud computing, data from phones, tablets, sensors, and machines is sent to physically distant data centers to be processed. The data is gathered in one location and processed in another. Edge computing shifts this approach by processing data on the device itself or on local compute resources that are available nearby. These nearby compute resources are known as the “devices on the edge.” Edge computing is prevalent in several industries and applications such as self-driving cars, retail analytics, truck fleet management, smart grid energy distribution, healthcare, and manufacturing.

Edge computing complements traditional cloud computing by lowering latency, improving efficiency, enhancing security, and enabling higher reliability. Processing data on the edge eases the load on central data centers and shortens the time to receive a response to inference queries. Because computation is offloaded from data centers, network congestion becomes less of a concern for cloud users. Also, because sensitive data is processed at the edge and is not exposed to threats across a wider network, the risk of that data being compromised is lower. Furthermore, if connectivity to the cloud is disrupted or intermittent, edge computing can enable systems to continue functioning. With several devices on the edge acting as computational mini data centers, the problem of a single point of failure is mitigated and additional scalability becomes easier to achieve.

Dell PowerEdge system and GPU overview

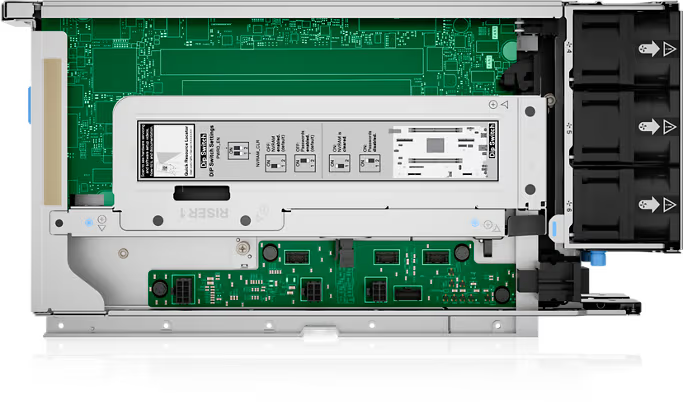

Dell PowerEdge XR4520c server

For projects that need a robust and adaptable server to handle demanding AI workloads on the edge, the PowerEdge XR4520c server is an excellent option. Dell Technologies designed the PowerEdge XR4520c server to reliably withstand challenging edge environments. With Intel Xeon Scalable processors, the PowerEdge XR4520c server delivers the power and compute required for real-time analytics on the edge. The edge-optimized design includes a rugged exterior and an extended temperature range for operation in remote locations and industrial environments. Also, the compact form factor and space-efficient design enable deployment on the edge. Like all Dell PowerEdge products, this server comes with world-class Dell support and the Integrated Dell Remote Access Controller (iDRAC) for remote management. For additional information about the technical specifications of the PowerEdge XR4520c server, see the specification sheet.

Figure 1: Front view of the Dell PowerEdge XR4520c server

Figure 2: Top view of the Dell PowerEdge XR4520c server

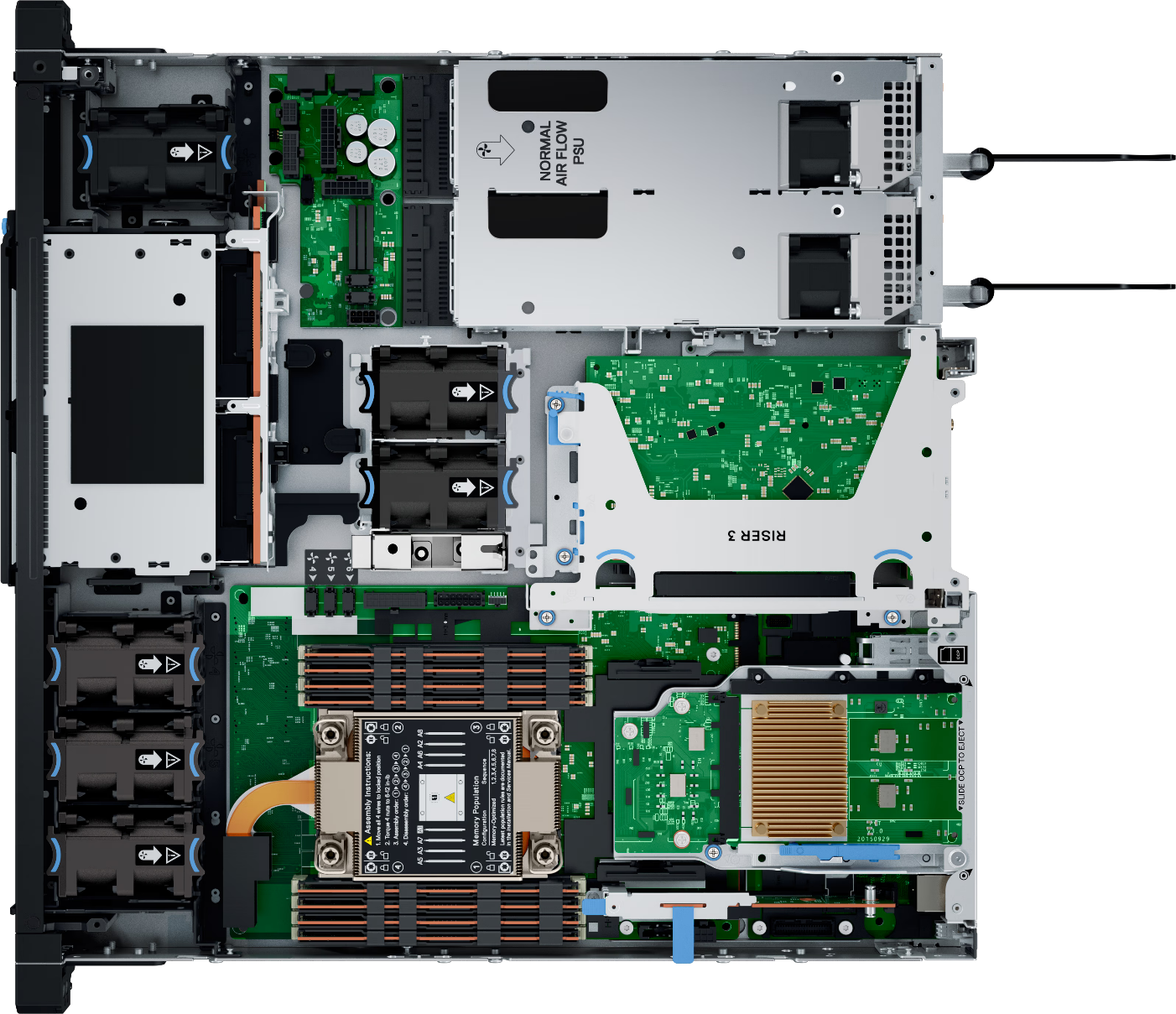

Dell PowerEdge XR7620 server

The PowerEdge XR7620 server is top-of-the-line for deep learning at the edge. Powered by the latest Intel Xeon Scalable processors, the PowerEdge XR7620 server delivers remarkable reductions in training time and increases in the number of inferences. Dell Technologies has designed this as a half-width server for rugged environments with a dust and particle filter and an extended temperature range from –5°C to 55°C (23°F to 131°F). Furthermore, Dell’s comprehensive security and data protection features include data encryption and zero-trust logic for the protection of sensitive data. For additional information about the technical specifications of the PowerEdge XR7620 server, see the specification sheet.

Figure 3: Front view of the Dell PowerEdge XR7620 server

Figure 4: Rear view of the Dell PowerEdge XR7620 server

Dell PowerEdge XR5610 server

The Dell PowerEdge XR5610 server is an excellent option for AI workloads on the edge. This all-purpose, rugged, single-socket server is a versatile edge server that has been built for telecom, defense, retail, and other demanding edge environments. As shown in the following figures, the short chassis can fit in space-constrained environments and is also a formidable option when considering power efficiency. This server is driven by Intel Xeon Scalable processors and is boosted with NVIDIA GPUs as well as high-speed NVIDIA NVLink interconnects. For additional information about the technical specifications of the PowerEdge XR5610 server, see the specification sheet.

Figure 5: Front view of the Dell PowerEdge XR5610 server

Figure 6: Top view of the Dell PowerEdge XR5610 server

NVIDIA L4 GPU

The NVIDIA L4 GPU is an excellent strategic option for the edge because it consumes less energy and space but delivers exceptional performance. The NVIDIA L4 GPU is based on the Ada Lovelace architecture and delivers extraordinary performance for video, AI, graphics, and virtualization. The NVIDIA L4 GPU comes with NVIDIA’s cutting-edge AI software stack, including CUDA, cuDNN, and support for several deep learning frameworks such as TensorFlow and PyTorch.

Systems Under Test

The following table lists the Systems Under Test (SUT) that are described in this blog.

Table 1: MLPerf Inference v3.1 system configuration of the Dell PowerEdge XR7620 and the PowerEdge XR4520c servers

| Platform | Dell PowerEdge XR7620 (1x L4, TensorRT) | Dell PowerEdge XR4520c (1x L4, TensorRT) |
| --- | --- | --- |
| MLPerf system ID | XR7620_L4x1_TRT | XR4520c_L4x1_TRT |
| Operating system | CentOS 8 | Ubuntu 22.04 |
| CPU | Dual Intel Xeon Gold 6448Y CPU @ 2.10 GHz | Single Intel Xeon D-2776NT CPU @ 2.10 GHz |
| Memory | 256 GB | 128 GB |
| GPU | NVIDIA L4 | NVIDIA L4 |
| GPU count | 1 | 1 |
| Software stack | TensorRT 9.0.0, CUDA 12.2, cuDNN 8.8.0, Driver 535.54.03, DALI 1.28.0 | TensorRT 9.0.0, CUDA 12.2, cuDNN 8.9.2, Driver 525.105.17, DALI 1.28.0 |

Performance from Inference v3.1

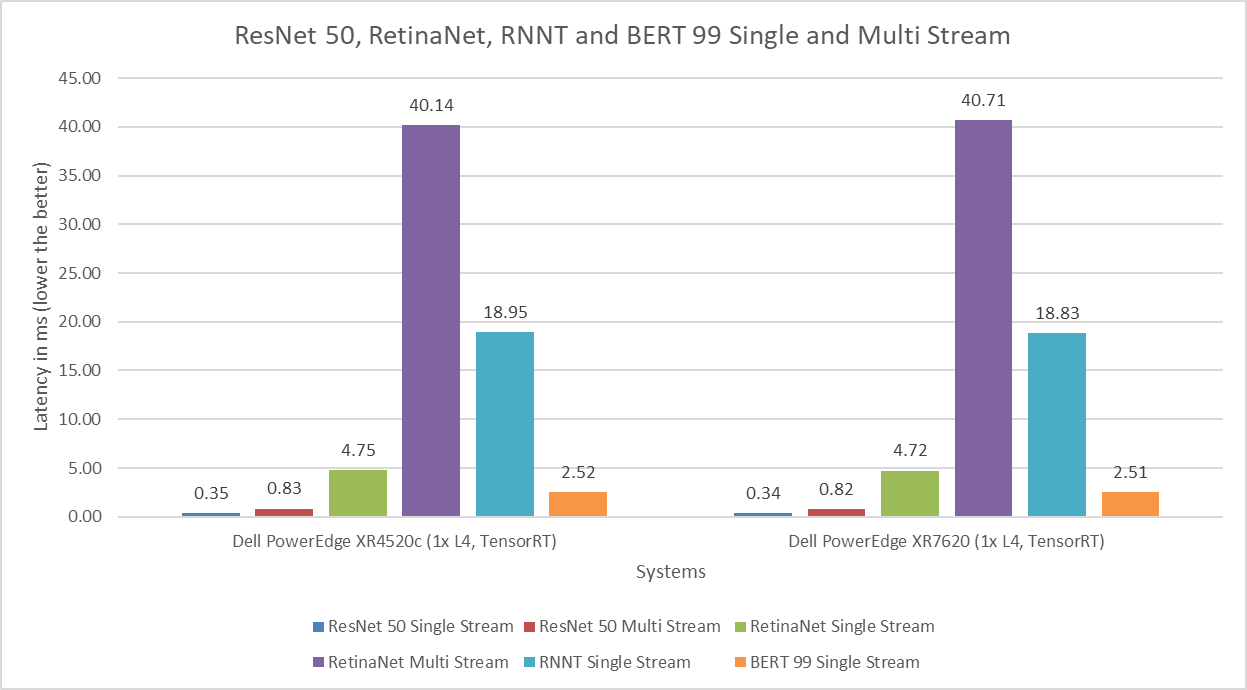

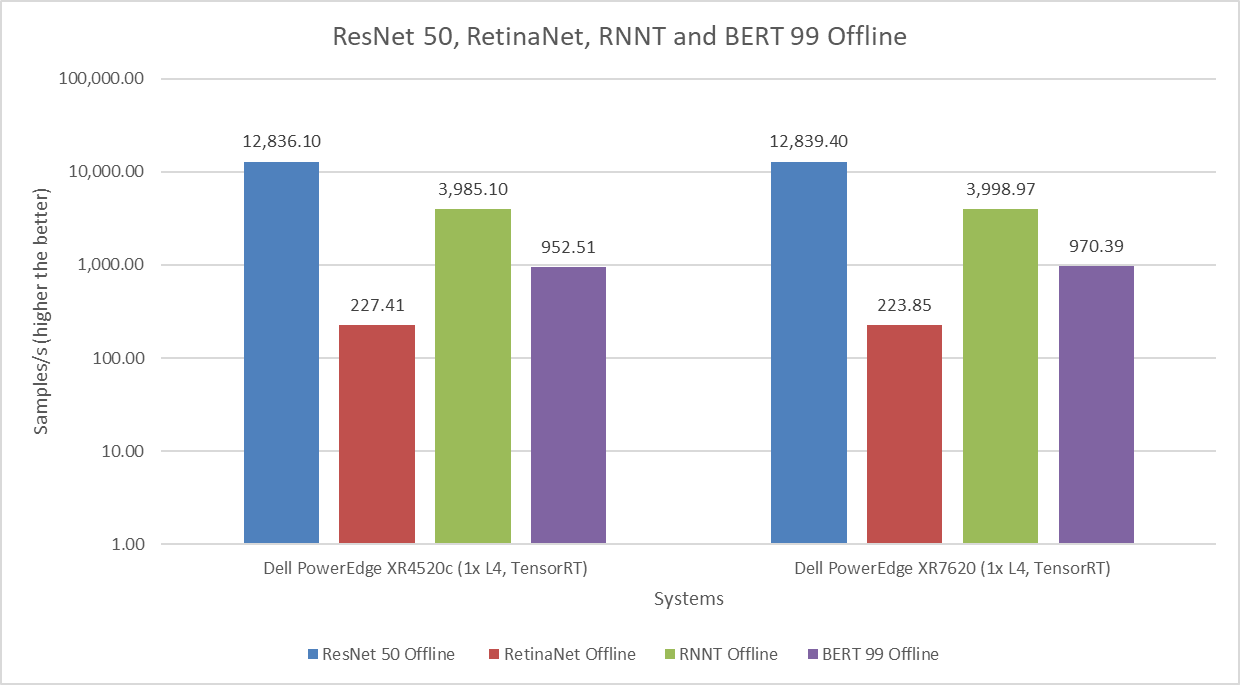

The following figures compare the Dell PowerEdge XR4520c and PowerEdge XR7620 servers across the ResNet50, RetinaNet, RNNT, and BERT 99 Single-stream, Multi-stream, and Offline benchmarks. Across all the benchmarks in this comparison, both servers, each equipped with an NVIDIA L4 GPU, deliver exceptional performance in the image classification, object detection, speech-to-text, and language processing workloads.

Figure 7: Dell PowerEdge XR4520c and PowerEdge XR7620 servers across the ResNet50, RetinaNet, RNNT, and BERT 99 Single-stream and Multi-stream benchmarks

Figure 8: Dell PowerEdge XR4520c and PowerEdge XR7620 servers across the ResNet50, RetinaNet, RNNT, and BERT 99 Offline benchmarks

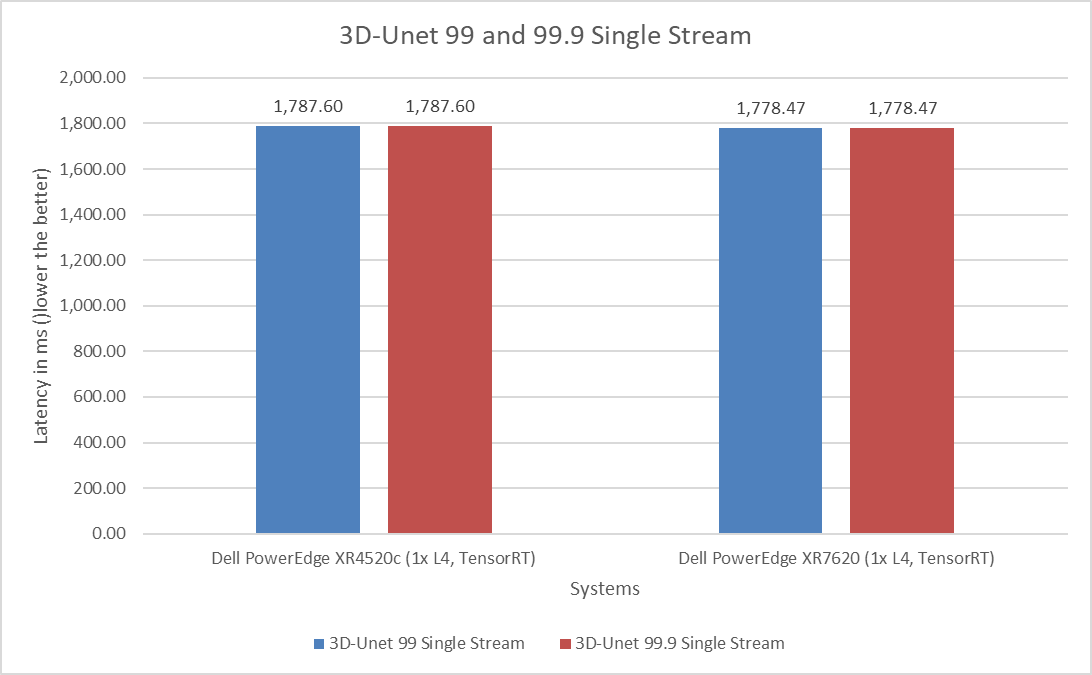

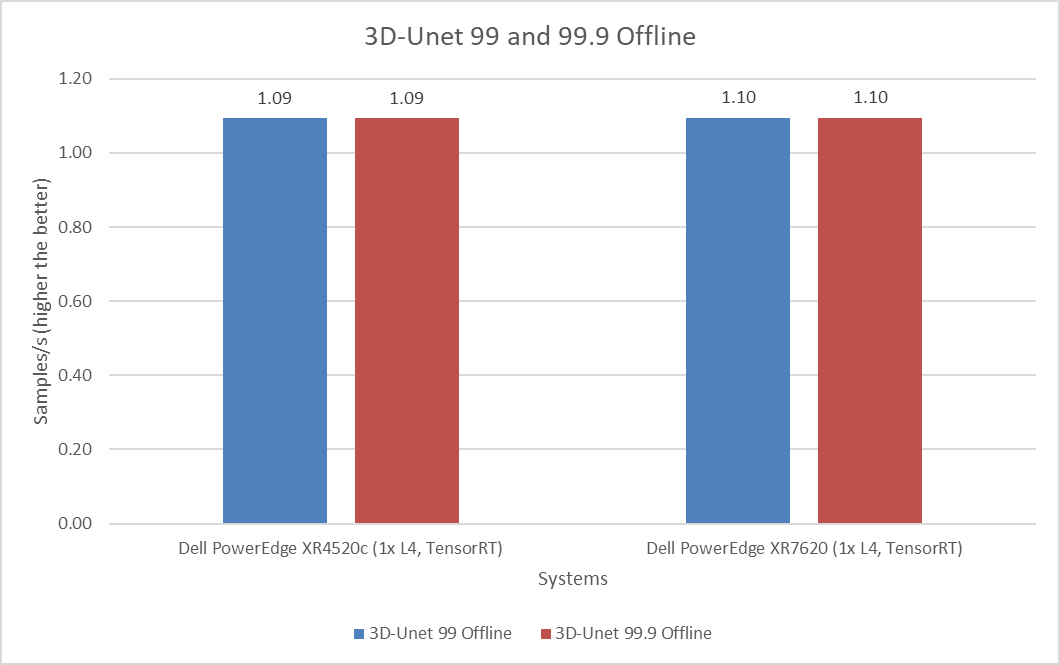

Like ResNet50 and RetinaNet, the 3D-Unet benchmark falls under the vision area but focuses on the medical image segmentation task. The following figures show identical performance for the two servers in both the default and high accuracy modes in the Single-stream and Offline scenarios.

Figure 9: Dell PowerEdge XR4520c and PowerEdge XR7620 servers across 3D-Unet Single-stream

Figure 10: Dell PowerEdge XR4520c and PowerEdge XR7620 servers across 3D-Unet Offline

Dell PowerEdge XR5610 power submission

In the MLPerf Inference v3.0 round of submissions, Dell Technologies made a power submission under the preview category for the Dell PowerEdge XR5610 server with the NVIDIA L4 GPU. For the v3.1 round of submissions, Dell Technologies made another power submission for the same server in the closed edge category. As shown in the following table, the detailed configurations of the system across the two rounds of submissions show that the hardware remained consistent, but that the software stack was updated. In terms of system performance per watt, the PowerEdge XR5610 server claims the top spot in image classification, object detection, speech-to-text, language processing, and medical image segmentation workloads.

Table 2: MLPerf Inference v3.0 and v3.1 system configuration of the Dell PowerEdge XR5610 server

| Platform | Dell PowerEdge XR5610 (1x L4, MaxQ, TensorRT) v3.0 | Dell PowerEdge XR5610 (1x L4, MaxQ, TensorRT) v3.1 |
| --- | --- | --- |
| MLPerf system ID | XR5610_L4x1_TRT_MaxQ | XR5610_L4x1_TRT_MaxQ |
| Operating system | CentOS 8.2 | CentOS 8.2 |
| CPU | Intel(R) Xeon(R) Gold 5423N CPU @ 2.10 GHz | Intel(R) Xeon(R) Gold 5423N CPU @ 2.10 GHz |
| Memory | 256 GB | 256 GB |
| GPU | NVIDIA L4 | NVIDIA L4 |
| GPU count | 1 | 1 |
| Software stack | TensorRT 8.6.0, CUDA 12.0, cuDNN 8.8.0, Driver 515.65.01, DALI 1.17.0 | TensorRT 9.0.0, CUDA 12.2, cuDNN 8.9.2, Driver 525.105.17, DALI 1.28.0 |

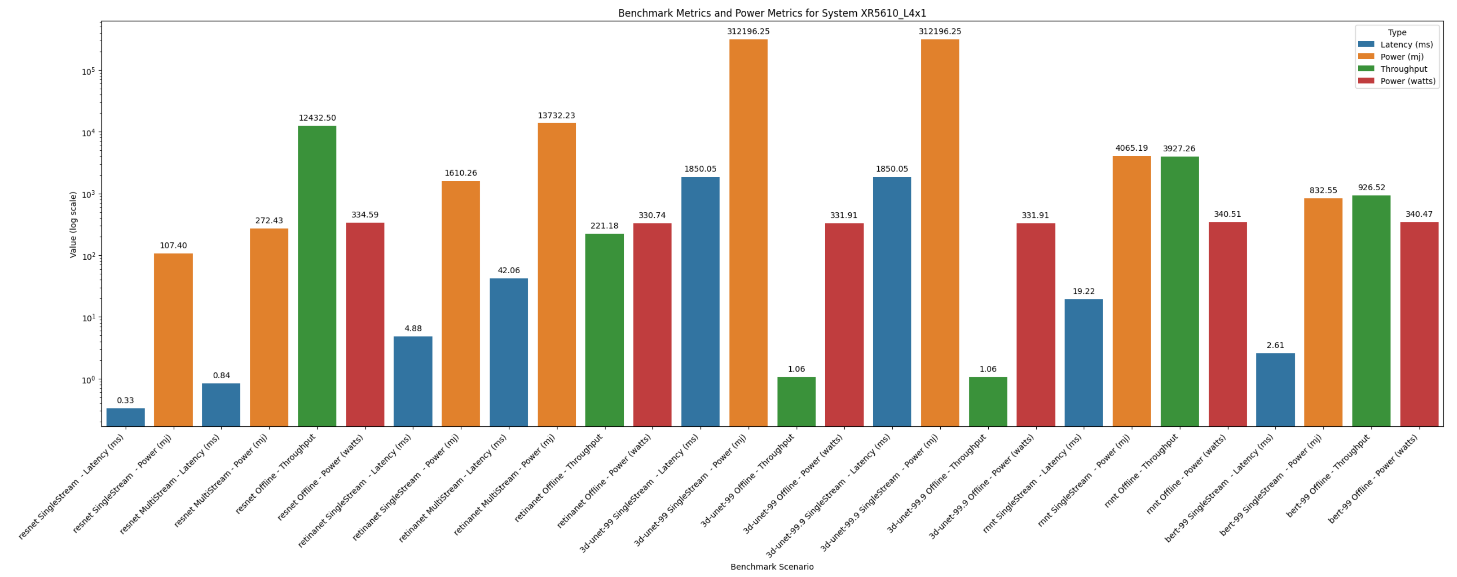

The power submission includes extra power results in each submission. Each submitted benchmark has a power metric that is paired with it. The metric for the Single-stream and Multi-stream performance results is latency in milliseconds (ms), and the corresponding energy consumption is noted in millijoules (mJ). The Offline performance numbers are recorded in samples per second (samples/s), and the corresponding power readings are delivered in watts. The following table shows how queries per millijoule and samples/s per watt are calculated.

Table 3: Breakdown of reading a power submission

| Scenario | Performance metric | Power metric | Performance per unit of energy |
| --- | --- | --- | --- |
| Single-stream | Latency (ms) | Millijoules (mJ) | 1 query/mJ -> queries/mJ |
| Multi-stream | Latency (ms) | Millijoules (mJ) | 8 queries/mJ -> queries/mJ |
| Offline | Samples/s | Watts | Samples/s / Watts -> performance per watt |
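The calculations in Table 3 can be sketched in a few lines of Python. This is a minimal illustration, not the tooling used for the submission: the function names are made up for this example, and every numeric input below is a hypothetical placeholder rather than a measured or submitted result. Following the table, the Single-stream case counts 1 query per energy reading and the Multi-stream case counts 8.

```python
def queries_per_millijoule(energy_millijoules, queries=1):
    """Queries completed per millijoule of energy.

    `queries` is 1 for Single-stream and 8 for Multi-stream,
    following the breakdown in Table 3.
    """
    if energy_millijoules <= 0:
        raise ValueError("energy must be positive")
    return queries / energy_millijoules


def samples_per_second_per_watt(samples_per_second, watts):
    """Offline performance per watt: throughput divided by power."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return samples_per_second / watts


# Hypothetical readings for illustration only, not submitted results.
single_stream = queries_per_millijoule(50.0, queries=1)
multi_stream = queries_per_millijoule(400.0, queries=8)
offline = samples_per_second_per_watt(1200.0, 60.0)

print(f"Single-stream: {single_stream:.3f} queries/mJ")
print(f"Multi-stream:  {multi_stream:.3f} queries/mJ")
print(f"Offline:       {offline:.1f} samples/s per watt")
```

In all three cases a higher value means more work done for the same energy, which makes results from different submission rounds directly comparable as a ratio.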

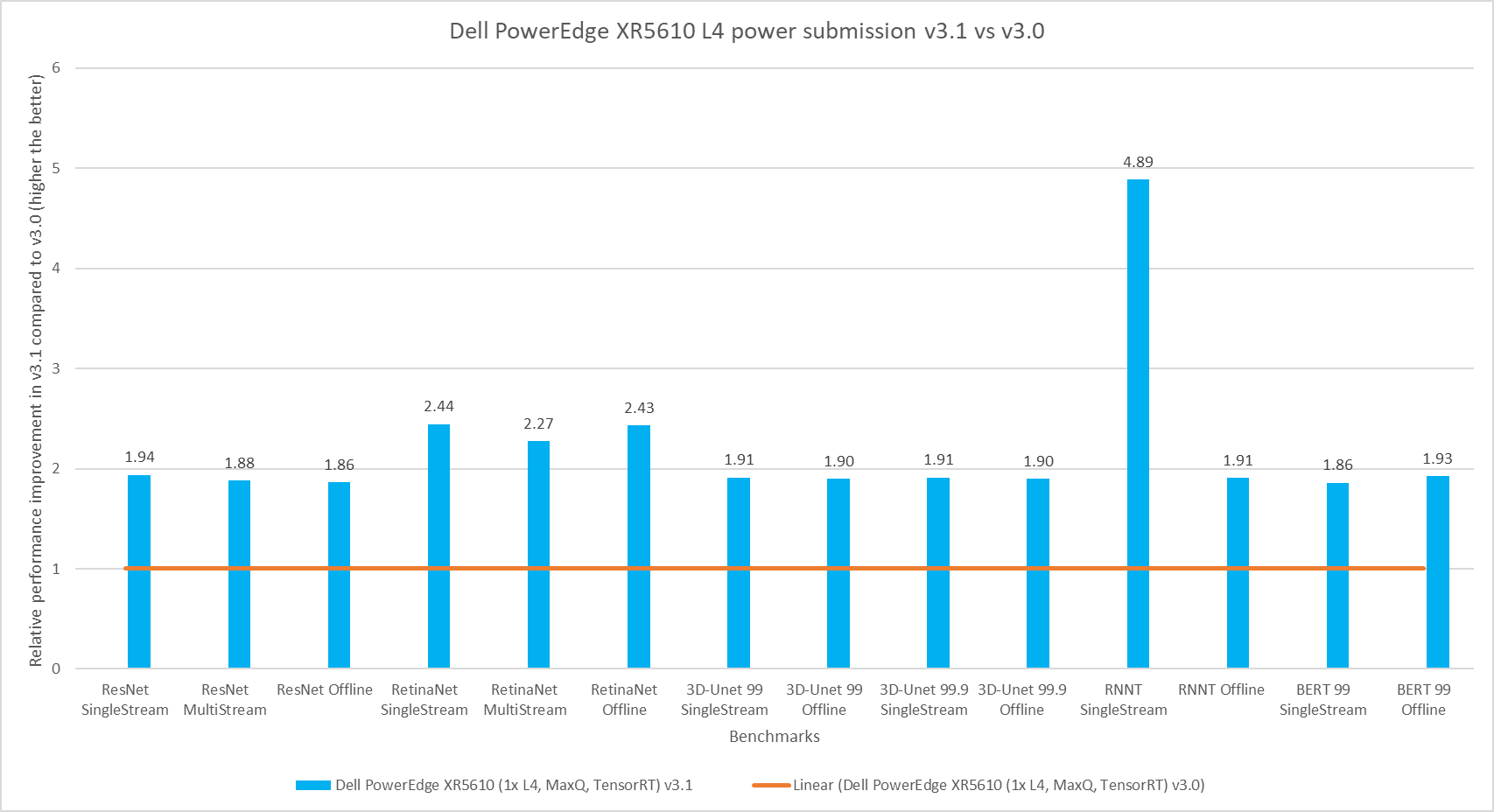

The following figure shows the improvements in performance per unit of energy on the Dell PowerEdge XR5610 server from the v3.0 to the v3.1 round of submissions. Across all the benchmarks, the server delivered double the performance per unit of energy. For the RNNT Single-stream benchmark, the server showed a jump of close to five times. The performance improvements came from hardware and software optimizations. BIOS firmware upgrades also contributed significantly.

Figure 11: Dell PowerEdge XR5610 with NVIDIA L4 GPU power submission for v3.1 compared to v3.0

The following figure shows the Single-stream and Multi-stream latency results from the Dell PowerEdge XR5610 server:

Figure 12: Dell PowerEdge XR5610 server with NVIDIA L4 GPU, v3.1 Single-stream and Multi-stream latency results

Conclusion

Both the Dell PowerEdge XR4520c and Dell PowerEdge XR7620 servers continue to showcase excellent performance in the edge suite for MLPerf Inference. The Dell PowerEdge XR5610 server showed a consistent doubling in performance per unit of energy across all benchmarks, confirming it as a power-efficient server option. Built for the edge, the Dell PowerEdge XR portfolio proves to be an outstanding option with consistent performance in the MLPerf Inference v3.1 submission. As the need for edge computing continues to grow, the MLPerf Inference edge suite shows that Dell PowerEdge servers continue to be an excellent option for any artificial intelligence workload.

MLCommons results

https://mlcommons.org/en/inference-edge-31/

MLPerf Inference v3.1 system IDs:

- 3.1-0072 - Dell PowerEdge XR4520c (1x L4, TensorRT)

- 3.1-0073 - Dell PowerEdge XR5610 (1x L4, MaxQ, TensorRT)

- 3.1-0074 - Dell PowerEdge XR7620 (1x L4, TensorRT)

The MLPerf™ name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use strictly prohibited. See www.mlcommons.org for more information.