MLPerf 4.1 Results: PowerEdge XE9680 and PowerEdge XE8640 with Nvidia Tensor Core GPUs

Wed, 28 Aug 2024 05:00:00 -0000


Introduction

The artificial intelligence (AI) landscape is evolving at an unprecedented pace, driven by groundbreaking advancements in machine learning (ML). As researchers, developers, and companies race to push the boundaries of what's possible, there is a growing need for standardized benchmarks that objectively measure and compare the performance of ML models and hardware. This is where MLPerf comes in.

MLPerf is a comprehensive benchmarking suite designed to evaluate the performance of ML hardware, software, and algorithms. It provides a standardized set of tests that cover a wide range of tasks, from image recognition and natural language processing (NLP) to reinforcement learning. These benchmarks are crucial for helping the AI community understand how different systems perform under various conditions, ensuring that innovations are practical and efficient.

The Dell PowerEdge XE9680, equipped with the latest NVIDIA H200 GPUs and powered by NVIDIA CUDA, redefines high-performance computing. Engineered for AI workloads, this scalable platform accelerates NLP, recommender systems, and generative AI breakthroughs. Now, let's explore how the NVIDIA H200 GPU compares to its predecessor, the NVIDIA H100.

Why MLPerf Matters

In the world of AI, performance is critical. Whether it's training a model faster, reducing the energy consumption of a neural network, or improving the accuracy of predictions, every improvement counts. However, comparing the performance of different AI systems has traditionally been a challenge. Different models, datasets, and hardware configurations can lead to inconsistent results, making it difficult to determine which system excels.

MLPerf addresses this challenge by providing a level playing field. Its benchmarks are designed to be fair, representative, and applicable to real-world scenarios. By using MLPerf, organizations can make informed decisions about which hardware and software solutions best suit their needs, whether developing new AI applications or scaling existing ones.

The Latest MLPerf Results

Our MLPerf 4.1 inference benchmarking on the Dell PowerEdge XE9680 and the XE8640 servers equipped with Nvidia Tensor Core GPUs demonstrated significant generational and workload advancements in LLM performance.

The following results offer a glimpse into the future of AI computing on the PowerEdge XE9680 and XE8640 servers.

PowerEdge XE9680 PowerEdge XE8640

The MLPerf ResNet benchmark evaluates the performance of machine learning systems using the ResNet-50 model for image classification on the ImageNet dataset.

The MLPerf BERT benchmark assesses the performance of machine learning systems using the BERT model for natural language processing tasks like question answering and text classification.

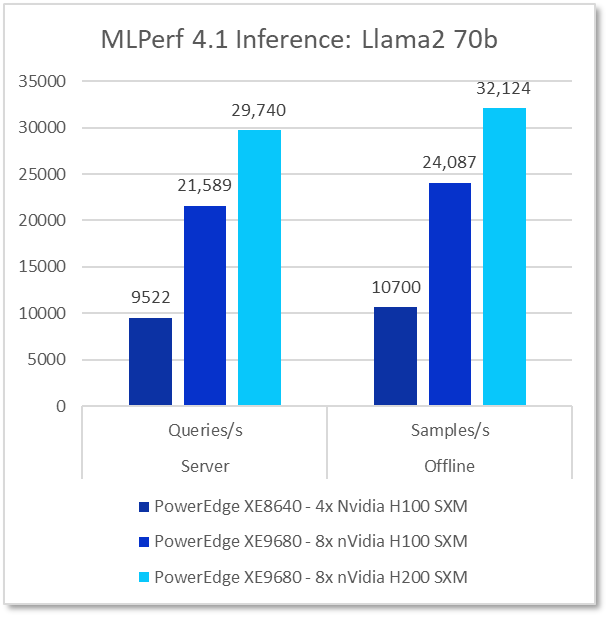

Finally, the MLPerf Llama2 70b benchmark evaluates the performance of machine learning systems using the Llama 2 70B large language model.

Key Takeaways

Performance Excellence: The Dell PowerEdge XE9680 and XE8640 servers, powered by NVIDIA Tensor Core GPUs, delivered outstanding results in the MLPerf 4.1 benchmarks, including a remarkable 37% generational performance boost on the XE9680 when comparing the NVIDIA H100 to the H200 GPUs. These GPUs excelled in handling large language models (LLMs) and other complex AI tasks, consistently achieving high throughput and low latency, underscoring their capability to power even the most demanding AI workloads.

Flexible AI Scalability: The PowerEdge XE8640 and XE9680 servers offer a scalable performance spectrum, allowing customers to select the server configuration that best aligns with their specific workload demands—from the cost-effective XE8640 to the high-powered XE9680. This ensures optimized performance without overprovisioning.

Benchmarking Leadership: NVIDIA’s GPUs continue to set the benchmark in MLPerf, establishing new standards for AI performance. These results underscore NVIDIA’s relentless commitment to innovation and its pivotal role in advancing the AI industry. Organizations can trust NVIDIA GPUs to deliver cutting-edge performance and efficiency, ensuring their AI workloads are handled with unparalleled excellence.
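To make the generational comparison in the takeaways above concrete, the following is a minimal sketch of how a percentage uplift and a per-GPU throughput figure could be derived from two benchmark results. The function names and the throughput values are hypothetical placeholders chosen for illustration; they are not measured MLPerf results and are not taken from the submissions.

```python
def percent_uplift(baseline: float, candidate: float) -> float:
    """Return the relative improvement of candidate over baseline, in percent."""
    if baseline <= 0:
        raise ValueError("baseline throughput must be positive")
    return (candidate - baseline) / baseline * 100.0


def per_gpu_throughput(system_throughput: float, gpu_count: int) -> float:
    """Normalize a whole-system throughput by the number of GPUs in the server."""
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return system_throughput / gpu_count


# Hypothetical placeholder throughputs (tokens/s), NOT measured MLPerf results.
h100_system_tokens_per_s = 1000.0
h200_system_tokens_per_s = 1370.0

uplift = percent_uplift(h100_system_tokens_per_s, h200_system_tokens_per_s)
print(f"Generational uplift: {uplift:.1f}%")

# Per-GPU normalization allows an 8-GPU server to be compared with a 4-GPU one.
print(f"Per-GPU throughput (8 GPUs): {per_gpu_throughput(h200_system_tokens_per_s, 8):.2f} tokens/s")
```

Normalizing by GPU count is one reasonable way to compare servers with different GPU counts, such as a 4-GPU and an 8-GPU configuration. It assumes throughput scales roughly linearly across GPUs, which may not hold for every workload.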

Conclusion

The 138 submissions by Dell to MLCommons MLPerf 4.1 are more than just numbers; they testify to our commitment to advancing AI technology and giving our customers the insights they need to make informed AI infrastructure decisions. The impressive results of the PowerEdge XE9680 and XE8640 servers reflect our dedication to providing a diverse portfolio of performant, right-sized servers that meet the ever-growing demands of AI computing. To see the comprehensive results, visit the MLCommons Results website linked below.

Server Configuration

| Server Model | GPU | CPU | Software |
|---|---|---|---|
| PowerEdge XE9680 | 8x Nvidia H100 SXM 80GB | 2x Intel Xeon Platinum 8468 | TensorRT 10.2.0, CUDA 12.4 |
| PowerEdge XE9680 | 8x Nvidia H200 SXM 80GB | 2x Intel Xeon Platinum 8470 | TensorRT 10.2.0, CUDA 12.4 |
| PowerEdge XE8640 | 4x Nvidia H100 SXM 80GB | 2x Intel Xeon Platinum 8468 | TensorRT 9.3.0, CUDA 12.2 |

Resources

- https://www.dell.com/en-us/shop/ipovw/poweredge-xe9680

- https://www.dell.com/en-us/shop/ipovw/poweredge-xe8640

- https://mlcommons.org/benchmarks/inference-datacenter/

Author(s): Dell HPC Lab, Frank Han, Rakshith Vasudev, Delmar Hernandez

Testing conducted by Dell in July of 2024. Performed on PowerEdge XE9680 with 8x Nvidia H100/H200 GPUs. MLPerf v4.1 Inference results for the ResNet, BERT, and Llama2 70b models. Result IDs are 4.0-0018, 4.0-0020, and 4.0-0021. The MLPerf name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use is strictly prohibited. See www.mlcommons.org for more information. Individual results may vary.