
A YugabyteDB universe (cluster) is a group of nodes (virtual machines, physical machines, or containers) that collectively function as a resilient and scalable distributed database.

The YugabyteDB Master (YB-Master) service stores the system metadata and records, such as tables and the locations of their tablets, and users and roles with their associated permissions. The YB-Master service also coordinates background operations, such as load balancing and initiating re-replication of under-replicated data, and performs administrative operations such as creating, altering, and dropping tables.
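
To make the re-replication duty more concrete, the following Python sketch shows how a master-like component might plan new tablet peers when a tablet falls below its replication factor. It is a simplified illustration under stated assumptions, not YB-Master's actual algorithm: the `TabletInfo` class, the `plan_rereplication` function, and the "least-loaded live server" placement rule are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TabletInfo:
    tablet_id: str
    peers: set[str] = field(default_factory=set)  # YB-TServers hosting a peer (hypothetical model)


def plan_rereplication(
    tablets: list[TabletInfo], live_tservers: list[str], replication_factor: int
) -> list[tuple[str, str]]:
    """Return (tablet_id, target_tserver) moves that restore the replication factor.

    Peers on servers missing from live_tservers count as lost. Each new peer goes
    to the least-loaded live server that does not already host that tablet
    (an assumed placement rule, chosen for simplicity).
    """
    # Count how many peers each live server currently hosts.
    load = {ts: 0 for ts in live_tservers}
    for tablet in tablets:
        for peer in tablet.peers:
            if peer in load:
                load[peer] += 1

    plan = []
    for tablet in sorted(tablets, key=lambda t: t.tablet_id):
        live_peers = {p for p in tablet.peers if p in load}
        for _ in range(replication_factor - len(live_peers)):
            candidates = [ts for ts in live_tservers if ts not in live_peers]
            if not candidates:
                break  # not enough live servers to fully restore this tablet
            target = min(candidates, key=lambda ts: (load[ts], ts))
            plan.append((tablet.tablet_id, target))
            live_peers.add(target)
            load[target] += 1
    return plan
```

For example, if server `ts3` fails in a replication-factor-3 cluster, the planner proposes one new peer for every tablet that had a peer on `ts3`, spreading the new peers toward the emptiest servers.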

The YugabyteDB Tablet Server (YB-TServer) service handles the input/output (I/O) of end-user requests in a YugabyteDB cluster. The data for a table is split (sharded) into tablets. Each tablet consists of one or more tablet peers, one per replica, so the replication factor determines the number of peers. Each YB-TServer hosts one or more tablet peers.
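
The following Python sketch illustrates the idea of hash sharding keys into tablets and placing each tablet's peers on distinct YB-TServers. It assumes a 16-bit hash space split evenly into tablets, uses CRC32 as a stand-in for YugabyteDB's actual partitioning hash, and places peers round-robin; `partition_hash`, `tablet_ranges`, `tablet_for_key`, and `place_peers` are hypothetical names.

```python
import zlib

HASH_SPACE = 0x10000  # assumed 16-bit partition hash space: 0x0000..0xFFFF


def partition_hash(key: bytes) -> int:
    """Stand-in partition hash (CRC32 truncated to 16 bits).

    Assumption: YugabyteDB uses a different hash function; only the idea of
    mapping every key into a fixed hash space matters here.
    """
    return zlib.crc32(key) & 0xFFFF


def tablet_ranges(num_tablets: int) -> list[tuple[int, int]]:
    """Split the hash space into num_tablets contiguous [start, end) ranges."""
    step = HASH_SPACE // num_tablets
    bounds = [i * step for i in range(num_tablets)] + [HASH_SPACE]
    return list(zip(bounds[:-1], bounds[1:]))  # last range absorbs any remainder


def tablet_for_key(key: bytes, ranges: list[tuple[int, int]]) -> int:
    """Return the index of the tablet whose hash range contains the key."""
    h = partition_hash(key)
    for index, (start, end) in enumerate(ranges):
        if start <= h < end:
            return index
    raise ValueError(f"hash {h:#06x} not covered by any tablet")


def place_peers(num_tablets: int, tservers: list[str], rf: int) -> dict[int, list[str]]:
    """Place rf peers per tablet on distinct servers, rotating the starting server."""
    if rf > len(tservers):
        raise ValueError("replication factor exceeds the number of YB-TServers")
    return {
        t: [tservers[(t + r) % len(tservers)] for r in range(rf)]
        for t in range(num_tablets)
    }
```

With four tablets and three servers at replication factor 3, every server ends up hosting one peer of every tablet; with more servers than the replication factor, the rotation spreads peers so that no single server hosts all of them.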

YugabyteDB combines technologies such as the PostgreSQL API layer and RocksDB in a two-layer architecture. This combination aims to deliver the strengths of both SQL and NoSQL databases in a unified database that can run on any cloud or on-premises.

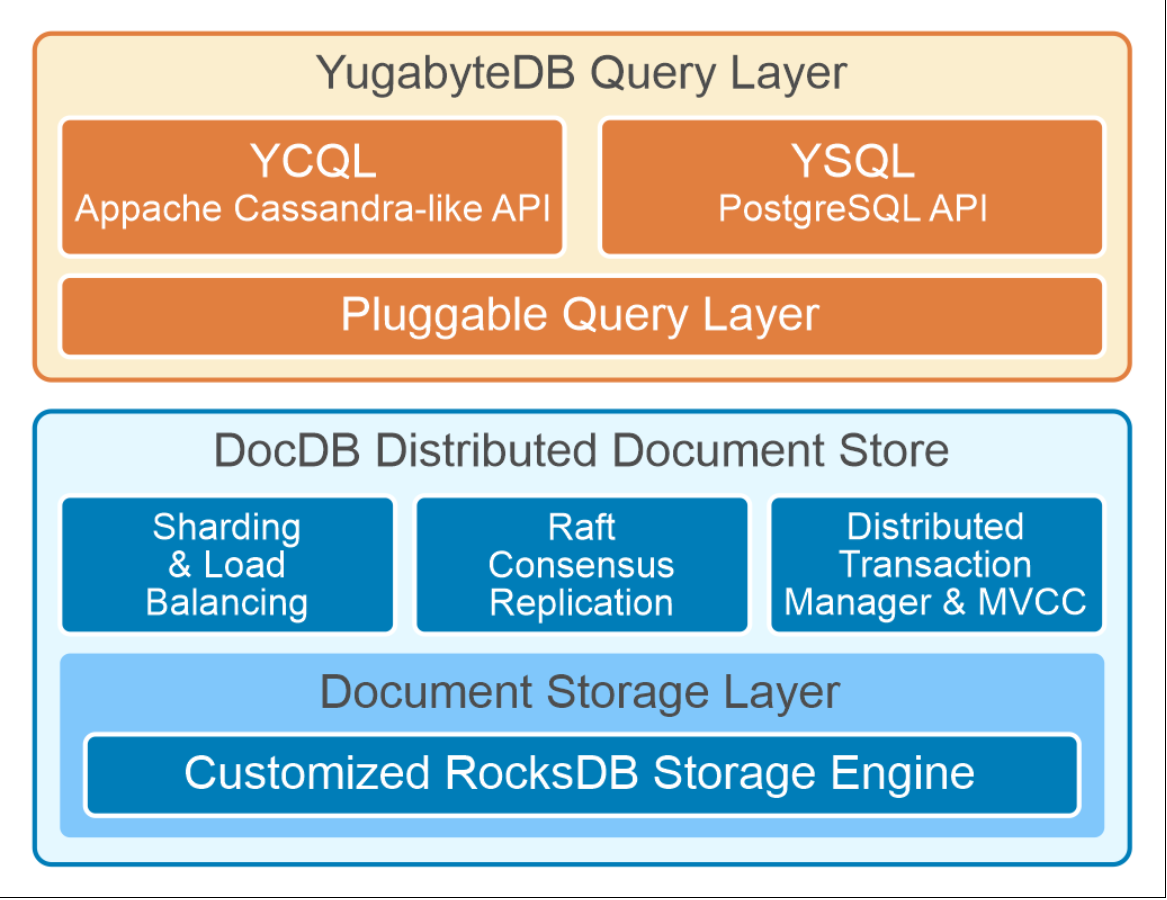

The YugabyteDB architecture follows a layered design, as shown in Figure 5. Its two logical layers are the YugabyteDB Query Layer and the DocDB distributed document store.

Figure 5. YugabyteDB architecture
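
The following toy Python sketch illustrates the division of labor between the two layers: a query layer that turns row operations into key-value operations, and a key-value layer standing in for DocDB. The classes, the tuple key layout `(table, primary key, column)`, and the liveness marker are simplifying assumptions; DocDB's real key encoding is binary and also includes the hash of the partition key and a timestamp.

```python
from typing import Any, Optional

LIVENESS = "#liveness"  # hypothetical marker entry so rows with only a primary key still exist


class ToyDocDB:
    """In-memory stand-in for the DocDB layer: a sorted key-value store.

    Sharding, replication, and MVCC are omitted for brevity.
    """

    def __init__(self) -> None:
        self._kv: dict[tuple, Any] = {}

    def put(self, key: tuple, value: Any) -> None:
        self._kv[key] = value

    def scan_prefix(self, prefix: tuple) -> list[tuple[tuple, Any]]:
        """Return (key, value) pairs whose key starts with prefix, in key order."""
        n = len(prefix)
        matches = [(k, v) for k, v in self._kv.items() if k[:n] == prefix]
        return sorted(matches, key=lambda kv: kv[0])


class ToyQueryLayer:
    """Translates row operations into key-value operations; keeps no per-client state."""

    def __init__(self, store: ToyDocDB, primary_keys: dict[str, str]) -> None:
        self.store = store
        self.primary_keys = primary_keys  # table name -> primary-key column

    def insert(self, table: str, row: dict[str, Any]) -> None:
        pk_col = self.primary_keys[table]
        pk = row[pk_col]
        self.store.put((table, pk, LIVENESS), None)
        for col, val in row.items():
            if col != pk_col:
                # One key-value entry per non-key column: (table, pk, column) -> value.
                self.store.put((table, pk, col), val)

    def select(self, table: str, pk: Any) -> Optional[dict[str, Any]]:
        entries = self.store.scan_prefix((table, pk))
        if not entries:
            return None
        row = {self.primary_keys[table]: pk}
        for (_, _, col), val in entries:
            if col != LIVENESS:
                row[col] = val
        return row
```

Because all columns of a row share the `(table, primary key)` prefix, a prefix scan reassembles the row, which is the essence of storing rows as documents in a sorted key-value store.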

YugabyteDB Query Layer

The YugabyteDB Query Layer (YQL) is the upper layer of YugabyteDB. Applications interact with the YQL using client drivers. The YQL supports two types of distributed SQL APIs:

- YSQL, a distributed SQL API wire-compatible with PostgreSQL.

- YCQL, a semi-relational API built for high performance and massive scale, with its roots in Cassandra Query Language.

From the application's perspective, YQL is stateless: clients can connect to any one or more YB-TServers on the port of the API they use (by default, 5433 for YSQL and 9042 for YCQL) to perform operations against a YugabyteDB cluster.
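
Because no YB-TServer holds client session state that others lack, a client can spread new connections across servers. The following Python sketch rotates through endpoints and skips unreachable ones. The host names, the `RoundRobinEndpoints` class, and the `is_up` health check are hypothetical; in practice a client driver or load balancer would normally handle this.

```python
import itertools
from typing import Callable

# Default client ports for the two YQL APIs.
DEFAULT_PORTS = {"ysql": 5433, "ycql": 9042}

Endpoint = tuple[str, int]


class RoundRobinEndpoints:
    """Rotate new connections across YB-TServer endpoints, skipping unreachable hosts."""

    def __init__(self, hosts: list[str], api: str) -> None:
        if api not in DEFAULT_PORTS:
            raise ValueError(f"unknown API {api!r}; expected 'ysql' or 'ycql'")
        self._count = len(hosts)
        self._cycle = itertools.cycle([(host, DEFAULT_PORTS[api]) for host in hosts])

    def next_endpoint(self, is_up: Callable[[Endpoint], bool] = lambda ep: True) -> Endpoint:
        # Try each host at most once per call before giving up.
        for _ in range(self._count):
            endpoint = next(self._cycle)
            if is_up(endpoint):
                return endpoint
        raise ConnectionError("no reachable YB-TServer")
```

For example, `RoundRobinEndpoints(["tserver-1", "tserver-2", "tserver-3"], "ysql")` hands out `("tserver-1", 5433)`, then `("tserver-2", 5433)`, and so on.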

DocDB Distributed Document Store

DocDB, the YugabyteDB distributed document store, is built on a customized version of RocksDB and is responsible for sharding data, replicating it synchronously, and balancing it evenly across the database storage nodes. The Raft consensus algorithm handles leader election and data replication. In YugabyteDB, each shard (tablet) has its own Raft group.
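
The following Python sketch shows the majority arithmetic behind Raft and why each tablet's Raft group advances independently. The function names and sample match indexes are hypothetical, and the commit rule deliberately omits Raft's requirement that the entry belong to the leader's current term.

```python
def majority(replication_factor: int) -> int:
    """Number of peers that must agree in a Raft group of this size."""
    return replication_factor // 2 + 1


def tolerated_failures(replication_factor: int) -> int:
    """Peers that can fail while a majority remains available."""
    return (replication_factor - 1) // 2


def wins_election(votes_granted: int, replication_factor: int) -> bool:
    """A candidate (counting its own vote) becomes leader once it holds a majority."""
    return votes_granted >= majority(replication_factor)


def commit_index(match_indexes: list[int]) -> int:
    """Highest log index stored on a majority of peers, leader included.

    Simplification: real Raft also requires that entry to come from the
    leader's current term before advancing the commit index this way.
    """
    ordered = sorted(match_indexes, reverse=True)
    return ordered[majority(len(ordered)) - 1]


# Each tablet has its own Raft group, so commit progress is tracked per tablet.
tablet_match_indexes = {
    "tablet-a": [12, 12, 9],  # leader, follower, lagging follower
    "tablet-b": [40, 31, 30],
}
commits = {tablet: commit_index(ix) for tablet, ix in tablet_match_indexes.items()}
```

With replication factor 3, a write commits once two peers have it, so one lagging or failed peer per tablet does not block progress.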

YugabyteDB uses hybrid logical clocks (HLC), which combine physical clocks synchronized using NTP with Lamport clocks. YugabyteDB also implements Multi-Version Concurrency Control (MVCC), which internally tracks multiple versions of the value for a given key. The last component of each key in the DocDB key-value store is a timestamp, which enables quick access to a particular version of the key.
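
The following Python sketch pairs a hybrid logical clock, following the standard HLC update rules, with a toy MVCC store whose composite keys end in the timestamp. The `(physical_micros, logical)` tuple representation, the class names, and the injectable physical clock are assumptions for illustration; YugabyteDB's actual hybrid-time and key encodings differ.

```python
import bisect
import time
from typing import Callable, Optional

HybridTime = tuple[int, int]  # assumed representation: (physical_micros, logical)


class HybridLogicalClock:
    def __init__(self, physical_clock: Callable[[], int] = lambda: time.time_ns() // 1000) -> None:
        self._pt = physical_clock  # injectable so the example is deterministic
        self._l = 0  # largest physical time seen so far
        self._c = 0  # logical counter that orders events sharing the same _l

    def now(self) -> HybridTime:
        """Timestamp a local event or an outgoing message."""
        prev = self._l
        self._l = max(prev, self._pt())
        self._c = self._c + 1 if self._l == prev else 0
        return (self._l, self._c)

    def update(self, remote: HybridTime) -> HybridTime:
        """Merge a timestamp received from another node (Lamport-style)."""
        ml, mc = remote
        prev = self._l
        self._l = max(prev, ml, self._pt())
        if self._l == prev == ml:
            self._c = max(self._c, mc) + 1
        elif self._l == prev:
            self._c += 1
        elif self._l == ml:
            self._c = mc + 1
        else:
            self._c = 0
        return (self._l, self._c)


class MVCCStore:
    """Toy MVCC store: every write adds a (key, hybrid_time) composite key."""

    def __init__(self) -> None:
        self._keys: list[tuple[str, HybridTime]] = []  # kept sorted
        self._values: dict[tuple[str, HybridTime], str] = {}

    def put(self, key: str, value: str, ht: HybridTime) -> None:
        composite = (key, ht)  # the timestamp is the last key component
        if composite not in self._values:
            bisect.insort(self._keys, composite)
        self._values[composite] = value

    def get(self, key: str, read_ht: HybridTime) -> Optional[str]:
        """Return the newest version of key written at or before read_ht."""
        i = bisect.bisect_right(self._keys, (key, read_ht))
        if i == 0:
            return None
        found_key, ht = self._keys[i - 1]
        return self._values[(found_key, ht)] if found_key == key else None
```

The clock never goes backward even if the physical clock stalls or a remote node is ahead, and a reader at a given hybrid time sees exactly the versions written at or before it.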

For more information about the YugabyteDB architecture, see Architecture.