Architecture overview


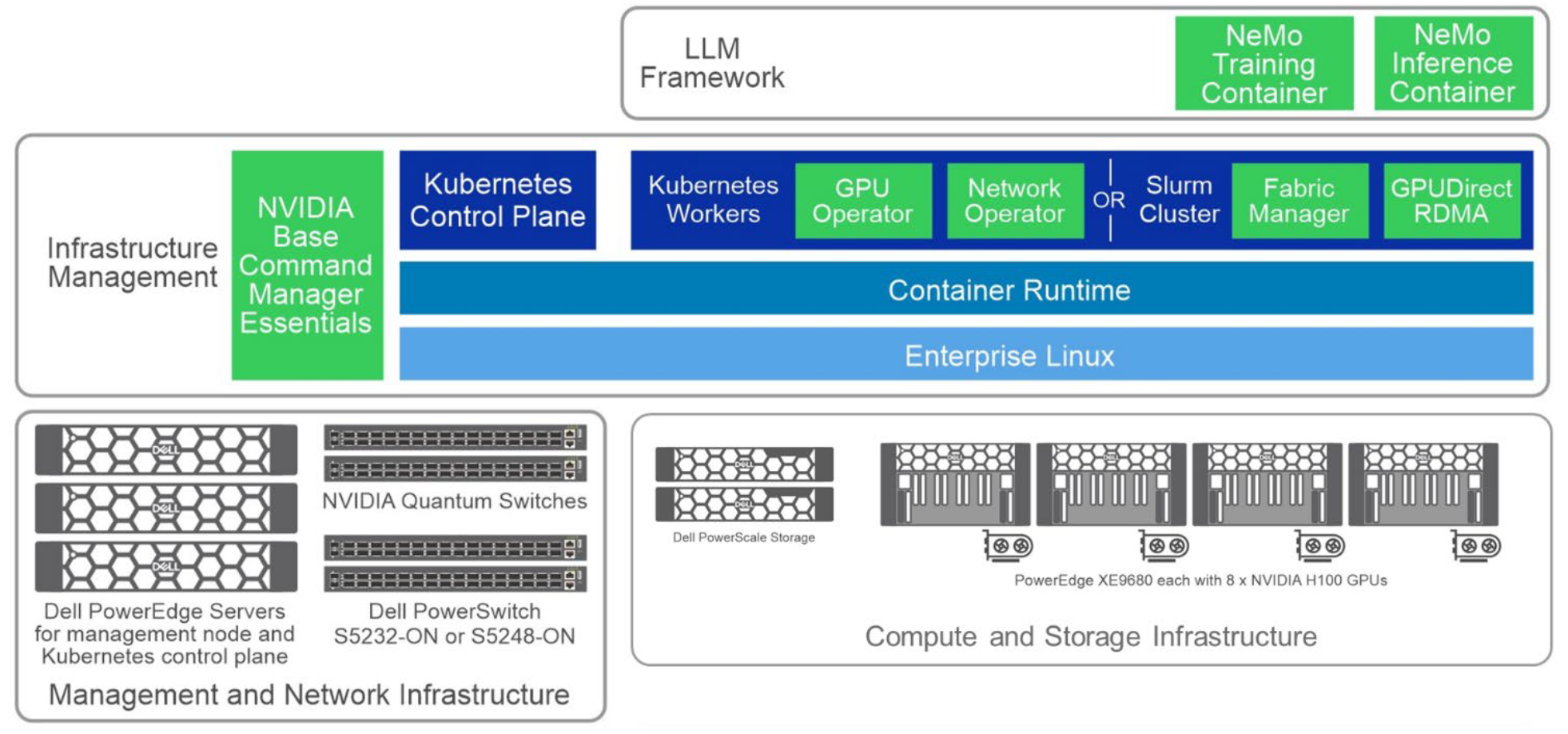

This reference design for generative AI model training addresses the challenges of training large language models (LLMs) for enterprise use cases. LLMs have shown tremendous potential in natural language processing tasks, but they require specialized infrastructure for efficient development and deployment.

This design serves as a starting point, offering organizations guidelines and best practices to implement scalable, efficient, and reliable infrastructure specifically tailored for generative AI model training. While its primary focus is LLM training, the architecture can be adapted for AI model customization and inferencing, as explained in the associated papers.

Figure 1. High-level solution architecture

The solution design presented here is modular, and each component can be scaled independently, depending on the customer’s workflow and application requirements.