
Kubernetes


Modern applications—primarily microservices that are packaged with their dependencies and configurations—are increasingly being built using container technology. Kubernetes, also known as K8s, is an open-source platform for deploying and managing containerized applications at scale. The Kubernetes container orchestration system was open-sourced by Google in 2014.

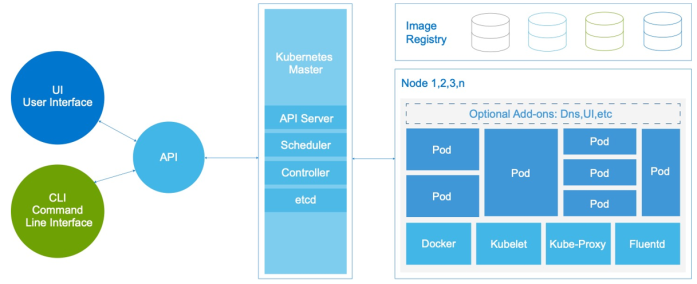

The following figure shows the Kubernetes architecture:

Figure 2. Kubernetes architecture

Kubernetes features for container orchestration at scale include:

- Auto-scaling, replication, and recovery of containers

- Communication between containers, such as IP sharing within a pod

- A single entity—a pod—for creating and managing multiple containers

- A container resource usage and performance analysis agent, cAdvisor

- Network pluggable architecture

- Load balancing

- Health check service

In a simulated dev/test scenario in Use Case 2, we used the Kubernetes container orchestration system to deploy two Docker containers in a pod.
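As a rough illustration of what "two containers in a pod" looks like to Kubernetes, the following sketch builds a minimal Pod manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The pod name, container names, and the second image are hypothetical placeholders, not the values used in Use Case 2. A real SQL Server container would also need environment settings such as EULA acceptance and an administrator password, which are omitted here.

```python
import json


def two_container_pod(pod_name, containers):
    """Build a minimal Pod manifest.

    containers is a list of (container_name, image) pairs. All names passed
    in below are illustrative assumptions, not values from the white paper.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            # Containers listed here are scheduled together and share the
            # pod's network namespace (one IP address).
            "containers": [
                {"name": name, "image": image} for name, image in containers
            ]
        },
    }


pod = two_container_pod(
    "sql-devtest",  # hypothetical pod name
    [
        ("sqlserver", "mcr.microsoft.com/mssql/server:2019-latest"),
        ("client", "example.com/devtest/client:latest"),  # hypothetical image
    ],
)
print(json.dumps(pod, indent=2))
```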

Kubernetes Container Storage Interface specification

The Kubernetes CSI specification was developed as a standard for exposing arbitrary block and file storage systems to containerized workloads through an orchestration layer. Kubernetes previously provided a powerful volume plug-in system that was part of the core Kubernetes code and shipped with the core Kubernetes binaries. Because that plug-in code was "in-tree," however, adding support for new volume plug-ins before the adoption of CSI was challenging. Vendors wanting to add support for their storage system to Kubernetes, or even fix a bug in an existing volume plug-in, were forced to align with the Kubernetes release process. In addition, third-party storage code could cause reliability and security issues in core Kubernetes binaries, and the code was often difficult, or sometimes impossible, for Kubernetes maintainers to test and maintain.

The adoption of the CSI specification makes the Kubernetes volume layer truly extensible. Using CSI, third-party storage providers can write and deploy plug-ins to expose new storage systems in Kubernetes without ever having to touch the core Kubernetes code. This capability gives Kubernetes users more storage options and makes the system more secure and reliable. Our Use Case 2 highlights these advantages by using the Dell EMC XtremIO X2 CSI plug-in to show the benefits of Kubernetes storage automation.

Kubernetes storage classes

We do not directly use Kubernetes storage classes in either of the use cases that we describe in this paper; however, the Kubernetes storage classes are closely related to CSI and the XtremIO X2 CSI plug-in. Kubernetes provides administrators an option to describe various levels of storage features and differentiate them by quality-of-service (QoS) levels, backup policies, or other storage-specific services. Kubernetes itself is unopinionated about what these classes represent. In other management systems, this concept is sometimes referred to as storage profiles.

The XtremIO X2 CSI plug-in creates three storage classes in Kubernetes during installation. The XtremIO X2 storage classes, which can be viewed from the Kubernetes dashboard, are predefined. These storage classes enable users to specify the amount of bandwidth to be made available to persistent storage that is created on the array. The following table shows the predefined storage classes:

Table 3. XtremIO X2 CSI predefined storage classes

| Storage class | MB/s per GB |
| --- | --- |
| High | 15 |
| Medium | 5 |
| Low | 1 |

The size of the requested storage volume and the storage class together determine the bandwidth that is allocated to the volume. For example, bandwidth for a 1,000 gibibyte (Gi) storage volume configured with the medium storage class is computed as follows:

Storage size (1,000 Gi) x storage class (medium at 5 MB/s per GB) = Total bandwidth (5,000 MB/s)

Note: Gi indicates power-of-two equivalents; 1 Gi is 1024³ bytes in this case.
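The calculation above can be sketched in a few lines of Python. The rates come from Table 3. The function and dictionary names are illustrative only, and the sketch follows the worked example in multiplying the Gi volume size directly by the per-GB rate.

```python
# MB/s per GB for each predefined storage class, taken from Table 3.
STORAGE_CLASS_MBPS_PER_GB = {"high": 15, "medium": 5, "low": 1}


def total_bandwidth_mbps(size_gi, storage_class):
    """Return the bandwidth limit in MB/s for a volume of size_gi.

    Mirrors the worked example: volume size multiplied by the class rate.
    """
    return size_gi * STORAGE_CLASS_MBPS_PER_GB[storage_class]


print(total_bandwidth_mbps(1000, "medium"))  # 5000
```

Under the same assumptions, the same 1,000 Gi volume would be limited to 15,000 MB/s with the high class and 1,000 MB/s with the low class.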

Using the XtremIO X2 predefined storage classes helps to efficiently scale an environment by defining performance limits. For example, a storage class of low for a pool of 100 containers limits containerized applications so that they consume no more than their allocated bandwidth. Such limitations help to maintain more reliable storage performance across the entire environment.
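In Kubernetes, a workload typically selects a storage class by naming it in a PersistentVolumeClaim. The sketch below builds such a claim as a Python dictionary. The claim name and the storage class name `xtremio-low` are hypothetical, because this paper does not list the class names that the plug-in installs; substitute the names shown in your own cluster.

```python
import json


def volume_claim(claim_name, storage_class_name, size):
    """Build a minimal PersistentVolumeClaim manifest.

    storage_class_name must match a StorageClass that exists in the cluster;
    the value used below is an assumed placeholder.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": claim_name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


claim = volume_claim("devtest-data", "xtremio-low", "100Gi")
print(json.dumps(claim, indent=2))
```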

Using QoS-based storage classes helps balance the resources that are consumed by containerized applications against the total amount of available storage bandwidth. For scenarios that require a more customized set of storage classes than the one that is created by the XtremIO X2 CSI plug-in, you can configure XtremIO X2 QoS in Kubernetes. When creating a custom QoS policy, you can define maximum bandwidth per gigabyte or, alternatively, maximum IOPS. You can also define a burst percentage, which is the amount of bandwidth or IOPS above the maximum limit that the container can use temporarily to absorb short-lived spikes in demand.
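To make the burst setting concrete, the following sketch computes the temporary ceiling for a given limit. It assumes that the burst percentage is applied as a percentage of the maximum limit. That reading matches the description above but is not confirmed against the array documentation, and the function name and the 20 percent figure are illustrative.

```python
def burst_ceiling(max_limit, burst_percent):
    """Return the temporary ceiling (same unit as max_limit, MB/s or IOPS).

    Assumes the burst allowance is burst_percent of the maximum limit,
    added on top of that limit.
    """
    return max_limit * (100 + burst_percent) / 100


# A 5,000 MB/s limit with an assumed 20 percent burst allowance.
print(burst_ceiling(5000, 20))  # 6000.0
```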

The benefits of using predefined storage classes and customized QoS policies include:

- Guaranteed service for critical applications

- Eliminating “noisy neighbor” problems by placing performance limits on nonproduction containers