
Overview


Operating system

OneFS is built on a BSD-based UNIX operating system foundation. It supports both Linux/UNIX and Windows semantics natively, including hard links, delete-on-close, atomic rename, ACLs, and extended attributes. It uses BSD as its base operating system because it is a mature and proven operating system, and the open-source community can be leveraged for innovation. From OneFS 8.2 onwards, the underlying operating system version is FreeBSD 11.

Client services

The front-end protocols that clients can use to interact with OneFS are referred to as client services. See Supported protocols for a detailed list of supported protocols. To understand how OneFS communicates with clients, we split the I/O subsystem into two halves: the top half, or the ‘initiator’, and the bottom half, or the ‘participant’. Every node in the cluster is a participant for a particular I/O operation. The node that the client connects to is the initiator, and that node acts as the ‘captain’ for the entire I/O operation. The read and write operations are detailed in later sections.

Cluster operations

In a clustered architecture, cluster jobs are responsible for the health and maintenance of the cluster itself. These jobs are all managed by the OneFS job engine. The job engine runs across the entire cluster and is responsible for dividing and conquering large storage management and protection tasks. To achieve this, it reduces a task into smaller work items and then allocates, or maps, these portions of the overall job to multiple worker threads on each node. Progress is tracked and reported throughout job execution, and a detailed report and status are presented upon completion or termination.
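The divide-and-allocate idea described above can be illustrated with a minimal Python sketch. This is not OneFS code: the function names, the fixed-size range split, and the round-robin allocation are all assumptions chosen for illustration, and the real job engine's work-item format and allocation logic are not described in this overview.

```python
from collections import defaultdict
from itertools import cycle


def split_into_work_items(total_units, item_size):
    """Split a task covering units [0, total_units) into contiguous ranges.

    Each work item is a (start, end) pair with an exclusive end.
    """
    return [
        (start, min(start + item_size, total_units))
        for start in range(0, total_units, item_size)
    ]


def allocate(work_items, nodes, threads_per_node):
    """Deal work items round-robin to (node, thread) worker slots.

    Round-robin is an illustrative assumption, not the OneFS algorithm.
    """
    workers = [(node, thread) for node in nodes for thread in range(threads_per_node)]
    assignment = defaultdict(list)
    for item, worker in zip(work_items, cycle(workers)):
        assignment[worker].append(item)
    return dict(assignment)


# Hypothetical task of 1000 units on a three-node cluster, two threads per node.
items = split_into_work_items(total_units=1000, item_size=128)
plan = allocate(items, nodes=["node1", "node2", "node3"], threads_per_node=2)

for worker, assigned in sorted(plan.items()):
    print(worker, assigned)
```

Because each work item is an independent range, any worker can process its share without coordinating with the others, which is what makes this kind of split suitable for parallel execution across nodes.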

The Job Engine includes a comprehensive checkpointing system, which allows jobs to be paused and resumed in addition to being stopped and started. The Job Engine framework also includes an adaptive impact management system.
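As a rough illustration of how checkpointing makes pause and resume possible, the sketch below saves the index of the next unprocessed work item and restarts from it. The `CheckpointedJob` class, its JSON checkpoint format, and the `max_items` pause trigger are hypothetical; the actual Job Engine checkpoint mechanism is not specified here.

```python
import json


class CheckpointedJob:
    """Toy job that can resume from a saved checkpoint (illustrative only)."""

    def __init__(self, work_items, checkpoint=None):
        self.work_items = list(work_items)
        # A checkpoint is a JSON string recording the next item to process.
        state = json.loads(checkpoint) if checkpoint else {"next_index": 0}
        self.next_index = state["next_index"]
        self.processed = []

    def run(self, max_items=None):
        """Process items from the checkpoint onward.

        Stopping after max_items simulates a pause; the returned
        checkpoint can be handed to a new instance to resume.
        """
        done = 0
        while self.next_index < len(self.work_items):
            if max_items is not None and done >= max_items:
                break
            self.processed.append(self.work_items[self.next_index])
            self.next_index += 1
            done += 1
        return self.checkpoint()

    def checkpoint(self):
        return json.dumps({"next_index": self.next_index})

    @property
    def finished(self):
        return self.next_index >= len(self.work_items)


items = ["item-%d" % i for i in range(5)]

first = CheckpointedJob(items)
saved = first.run(max_items=2)          # pause after two items

resumed = CheckpointedJob(items, checkpoint=saved)
resumed.run()                           # continue with the remaining items
```

The resumed instance picks up at the third item rather than repeating completed work, which is the practical difference between pause/resume and stop/start.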

The Job Engine typically runs jobs as background tasks across the cluster, using spare or specially reserved capacity and resources. The jobs themselves can be categorized into three primary classes:

File system maintenance jobs

These jobs perform background file system maintenance, and typically require access to all nodes. These jobs are required to run in default configurations, and often in degraded cluster conditions. Examples include file system protection and drive rebuilds.

Feature support jobs

The feature support jobs perform work that facilitates some extended storage management function, and typically only run when the feature has been configured. Examples include deduplication and anti-virus scanning.

User action jobs

These jobs are run directly by the storage administrator to accomplish some data management goal. Examples include parallel tree deletes and permissions maintenance.

The table below provides a comprehensive list of the exposed Job Engine jobs, the operations they perform, and their respective file system access methods:

Table 1. OneFS Job Engine: Job descriptions

| Job name | Job description | Access method |
| --- | --- | --- |
| AutoBalance | Balances free space in the cluster. | Drive + LIN |
| AutoBalanceLin | Balances free space in the cluster. | LIN |
| AVScan | Virus scanning job that anti-virus servers run. | Tree |
| ChangelistCreate | Create a list of changes between two consecutive SyncIQ snapshots. | Changelist |
| CloudPoolsLin | Archives data out to a cloud provider according to a file pool policy. | LIN |
| CloudPoolsTreewalk | Archives data out to a cloud provider according to a file pool policy. | Tree |
| Collect | Reclaims disk space that could not be freed due to a node or drive being unavailable while they suffer from various failure conditions. | Drive + LIN |
| ComplianceStoreDelete | SmartLock Compliance mode garbage collection job. | Tree |
| Dedupe | Deduplicates identical blocks in the file system. | Tree |
| DedupeAssessment | Dry run assessment of the benefits of deduplication. | Tree |
| DomainMark | Associates a path and its contents with a domain. | Tree |
| DomainTag | Associates a path and its contents with a domain. | Tree |
| EsrsMftDownload | ESRS managed file transfer job for license files. | |
| FilePolicy | Efficient SmartPools file pool policy job. | Changelist |
| FlexProtect | Rebuilds and re-protects the file system to recover from a failure scenario. | Drive + LIN |
| FlexProtectLin | Re-protects the file system. | LIN |
| FSAnalyze | Gathers file system analytics data that is used in conjunction with InsightIQ. | Changelist |
| IndexUpdate | Creates and updates an efficient file system index for FilePolicy and FSAnalyze jobs. | Changelist |
| IntegrityScan | Performs online verification and correction of any file system inconsistencies. | LIN |
| LinCount | Scans and counts the file system logical inodes (LINs). | LIN |
| MediaScan | Scans drives for media-level errors. | Drive + LIN |
| MultiScan | Runs Collect and AutoBalance jobs concurrently. | LIN |
| PermissionRepair | Correct permissions of files and directories. | Tree |
| QuotaScan | Updates quota accounting for domains created on an existing directory path. | Tree |
| SetProtectPlus | Applies the default file policy. This job is disabled if SmartPools is activated on the cluster. | LIN |
| ShadowStoreDelete | Frees space associated with a shadow store. | LIN |
| ShadowStoreProtect | Protect shadow stores which are referenced by a LIN with higher requested protection. | LIN |
| ShadowStoreRepair | Repair shadow stores. | LIN |
| SmartPools | Job that runs and moves data between the tiers of nodes within the same cluster. Also runs the CloudPools functionality if licensed and configured. | LIN |
| SmartPoolsTree | Enforce SmartPools file policies on a subtree. | Tree |
| SnapRevert | Reverts an entire snapshot back to head. | LIN |
| SnapshotDelete | Frees disk space that is associated with deleted snapshots. | LIN |
| TreeDelete | Deletes a path in the file system directly from the cluster itself. | Tree |
| Undedupe | Removes deduplication of identical blocks in the file system. | Tree |
| Upgrade | Upgrades cluster on a later OneFS release. | Tree |
| WormQueue | Scan the SmartLock LIN queue. | LIN |

Although the file system maintenance jobs are run by default, either on a schedule or in reaction to a particular file system event, any job engine job can be managed by configuring both its priority-level (in relation to other jobs) and its impact policy.

An impact policy can consist of one or many impact intervals, which are blocks of time within a given week. Each impact interval can be configured to use a single pre-defined impact-level which specifies the amount of cluster resources to use for a particular cluster operation. Available job engine impact-levels are:

- Paused

- Low

- Medium

- High

This degree of granularity allows impact intervals and levels to be configured per job, in order to ensure smooth cluster operation. The resulting impact policies dictate when a job runs and the resources that it can consume. In addition, job engine jobs are prioritized on a scale of one to ten, with a lower value signifying a higher priority. This is similar in concept to the UNIX scheduling utility ‘nice’.
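One way to picture an impact policy is as a set of weekly time windows, each mapped to one of the four impact levels. The sketch below models that in Python. The class names, the minutes-since-Monday encoding, the fallback `default` level, and the example job names and priorities are all assumptions for illustration; they do not reflect the OneFS configuration format or the defaults of any real job.

```python
from dataclasses import dataclass
from enum import Enum


class ImpactLevel(Enum):
    PAUSED = "Paused"
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"


MINUTES_PER_DAY = 24 * 60


def week_minute(day, hour, minute=0):
    """Minutes elapsed since Monday 00:00 (day 0 = Monday)."""
    return day * MINUTES_PER_DAY + hour * 60 + minute


@dataclass(frozen=True)
class ImpactInterval:
    start: int          # inclusive, minutes since Monday 00:00
    end: int            # exclusive
    level: ImpactLevel


@dataclass
class ImpactPolicy:
    intervals: list
    # Assumption: a fallback level applies outside every interval.
    default: ImpactLevel = ImpactLevel.LOW

    def level_at(self, minute_of_week):
        for interval in self.intervals:
            if interval.start <= minute_of_week < interval.end:
                return interval.level
        return self.default


# Hypothetical policy: pause during weekday business hours, Medium otherwise.
policy = ImpactPolicy(
    intervals=[
        ImpactInterval(week_minute(day, 8), week_minute(day, 18), ImpactLevel.PAUSED)
        for day in range(5)
    ],
    default=ImpactLevel.MEDIUM,
)

# Priorities run from 1 to 10; a lower number means a higher priority.
priorities = {"example-job-a": 6, "example-job-b": 1, "example-job-c": 4}
by_priority = sorted(priorities, key=priorities.get)

print(policy.level_at(week_minute(0, 9)))   # Monday 09:00
print(by_priority)
```

Sorting ascending by the numeric priority yields the order in which jobs would be favored, mirroring how a lower `nice` value favors a process.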

OneFS 9.8 sees the introduction of SmartThrottling. This is an intelligent impact control mechanism for the job engine that automatically adjusts the job engine resource usage to maintain client protocol latencies within specified thresholds.

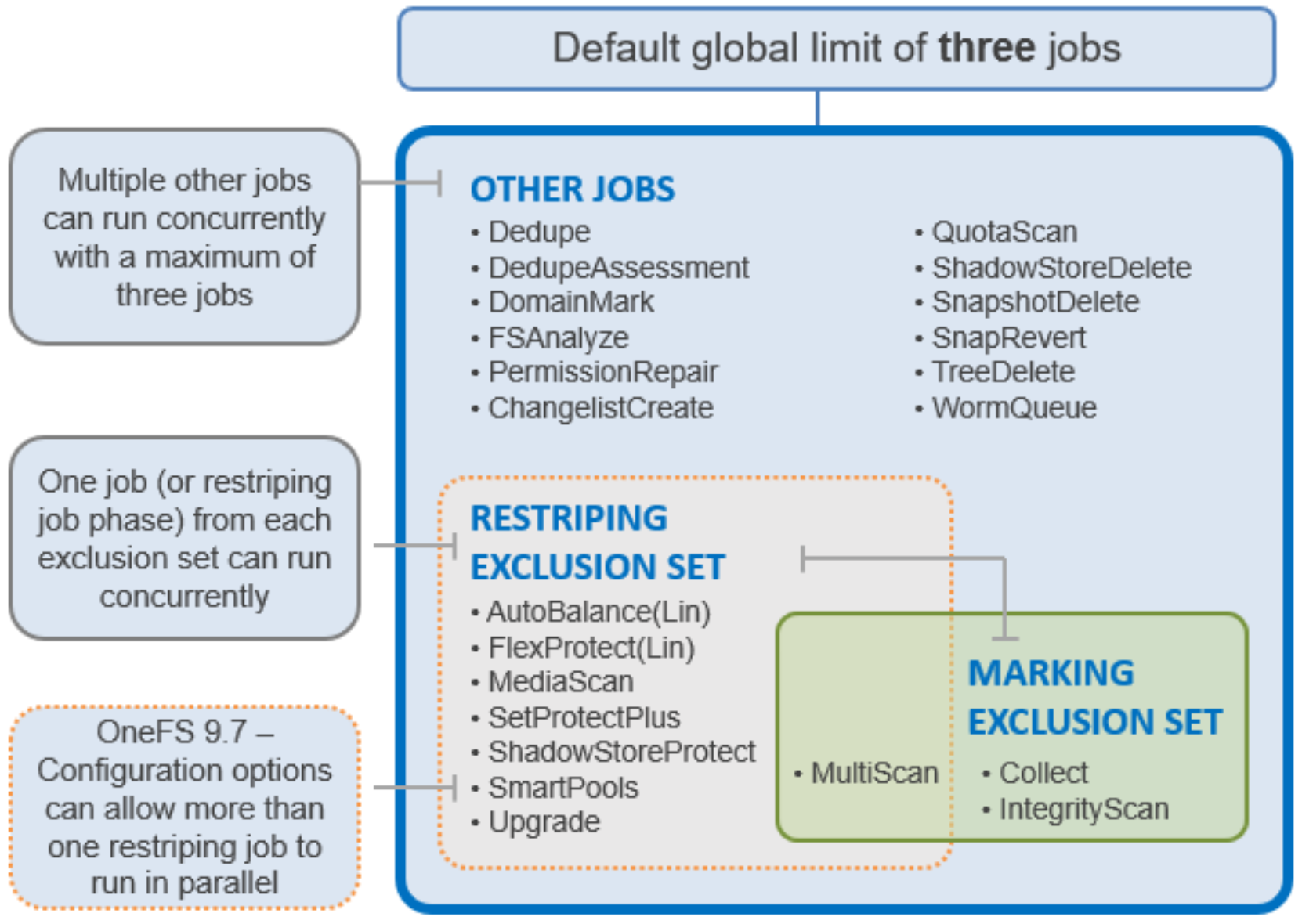

The job engine allows up to three jobs to be run simultaneously. This concurrent job execution is governed by the following criteria:

- Job Priority

- Exclusion Sets - jobs which cannot run together

- Cluster health - most jobs cannot run when the cluster is in a degraded state.

Figure 3. OneFS Job Engine Exclusion Sets
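The three criteria for concurrent execution can be combined in a small selection routine. The following Python sketch is only a model of the rules as stated in the text: the `select_jobs` function, the `runs_when_degraded` flag, and the job names and exclusion-set memberships are hypothetical and do not represent the actual OneFS exclusion sets or scheduler.

```python
MAX_CONCURRENT_JOBS = 3  # limit stated in the text


def select_jobs(queued, exclusion_sets, cluster_degraded=False):
    """Pick which queued jobs may run together.

    queued: list of dicts with 'name', 'priority' (1 = highest, 10 = lowest)
            and 'runs_when_degraded' (hypothetical flag).
    exclusion_sets: list of sets; two jobs in the same set cannot run together.
    """
    running = []
    # Consider the highest-priority (lowest number) jobs first.
    for job in sorted(queued, key=lambda j: j["priority"]):
        if len(running) == MAX_CONCURRENT_JOBS:
            break
        if cluster_degraded and not job["runs_when_degraded"]:
            continue
        conflict = any(
            job["name"] in group and other["name"] in group
            for group in exclusion_sets
            for other in running
        )
        if not conflict:
            running.append(job)
    return [job["name"] for job in running]


# Hypothetical queue and exclusion sets, for illustration only.
queued = [
    {"name": "job-a", "priority": 1, "runs_when_degraded": True},
    {"name": "job-b", "priority": 2, "runs_when_degraded": True},
    {"name": "job-c", "priority": 4, "runs_when_degraded": False},
    {"name": "job-d", "priority": 5, "runs_when_degraded": False},
    {"name": "job-e", "priority": 6, "runs_when_degraded": False},
]
exclusion_sets = [{"job-a", "job-b"}, {"job-c", "job-d"}]

healthy = select_jobs(queued, exclusion_sets)
degraded = select_jobs(queued, exclusion_sets, cluster_degraded=True)
print(healthy)
print(degraded)
```

In this model a job that conflicts with an already selected job is simply skipped for the current pass; whether the real job engine queues, defers, or preempts such a job is not covered by this overview.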

Further information is available in the OneFS Job Engine white paper.