
SmartConnect


SmartConnect, a licensable software module of the PowerScale OneFS operating system, simplifies client management across the enterprise. Through a single host name, SmartConnect provides client connection load balancing and dynamic NFS failover and failback of client connections across storage nodes, making efficient use of cluster resources.

Client Connection Load Balancing

SmartConnect balances client connections across nodes based on policies designed to make optimal use of cluster resources. Working with your existing network infrastructure, SmartConnect adds a layer of intelligence that lets all clients and users point to a single hostname, which simplifies management of a large and growing number of clients. Based on user-configurable policies, SmartConnect distributes clients across the cluster using one of several balancing algorithms (CPU utilization, aggregate throughput, connection count, or round robin) to optimize client performance and the end-user experience. SmartConnect can also be configured with multiple zones, which can provide different levels of service to different groups of clients. All of this is transparent to the end user.
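To make the four balancing policies concrete, here is a minimal Python sketch of how a balancer might choose a node for a new client connection. It is a toy model, not the OneFS implementation: the `NodeStats` fields, the policy strings, and the `HypotheticalBalancer` class are all hypothetical names chosen for illustration, and the real metric collection and weighting inside SmartConnect are not described here.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class NodeStats:
    # Hypothetical per-node metrics; SmartConnect gathers its own internally.
    name: str
    cpu_percent: float
    throughput_mbps: float
    connections: int


class HypotheticalBalancer:
    """Toy model of policy-based node selection (not the OneFS implementation)."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        # Round robin simply walks the node list in order, forever.
        self._round_robin = cycle(self.nodes)

    def assign(self, policy):
        if policy == "round_robin":
            node = next(self._round_robin)
        elif policy == "connection_count":
            # Fewest current client connections wins.
            node = min(self.nodes, key=lambda n: n.connections)
        elif policy == "throughput":
            # Lowest aggregate throughput wins.
            node = min(self.nodes, key=lambda n: n.throughput_mbps)
        elif policy == "cpu":
            # Lowest CPU utilization wins.
            node = min(self.nodes, key=lambda n: n.cpu_percent)
        else:
            raise ValueError(f"unknown policy: {policy!r}")
        node.connections += 1  # record the newly assigned client connection
        return node
```

Round robin ignores load entirely, while the other three policies pick the least-loaded node by a single metric, which is why the choice of policy matters most when node load is uneven.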

NFS failover using a dynamic IP pool

SmartConnect uses a virtual IP failover scheme that is designed specifically for PowerScale scale-out NAS storage and requires no client-side drivers. The PowerScale cluster shares a “pool” of virtual IPs that is distributed across all nodes of the cluster, and the cluster assigns these IP addresses to NFS (Linux and UNIX) clients according to a client connection balancing policy.

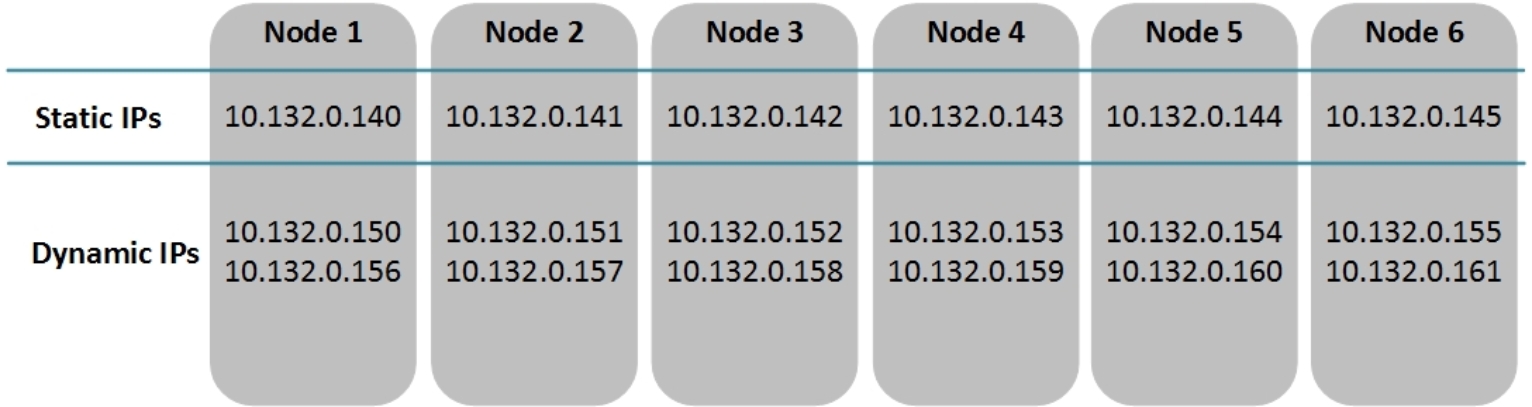

The following example illustrates how NFS failover works. As shown in Figure 2, in a six-node OneFS cluster, one IP address pool assigns a single static IP (10.132.0.140 – 10.132.0.145) to an interface on each node. A second pool of dynamic IPs (NFS failover IPs, 10.132.0.150 – 10.132.0.161) has been created and distributed across the cluster.
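The address layout in this example can be reproduced with Python's standard `ipaddress` module. The sketch assumes the 12 dynamic IPs are spread evenly, two per node, in round-robin order; that even spread is an illustrative assumption, since the actual initial placement OneFS chooses may differ. The `ip_range` and `distribute` helpers are hypothetical.

```python
import ipaddress


def ip_range(first, last):
    """Inclusive list of IPv4 addresses from first to last."""
    start = int(ipaddress.IPv4Address(first))
    end = int(ipaddress.IPv4Address(last))
    return [ipaddress.IPv4Address(i) for i in range(start, end + 1)]


def distribute(ips, nodes):
    """Hypothetical even round-robin spread of dynamic IPs across nodes."""
    assignment = {node: [] for node in nodes}
    for i, ip in enumerate(ips):
        assignment[nodes[i % len(nodes)]].append(ip)
    return assignment


nodes = [f"node{i}" for i in range(1, 7)]
# Static pool: exactly one fixed IP per node.
static = dict(zip(nodes, ip_range("10.132.0.140", "10.132.0.145")))
# Dynamic pool: 12 failover IPs shared across the six nodes.
dynamic = distribute(ip_range("10.132.0.150", "10.132.0.161"), nodes)
```

With this assumption, node1 holds the static IP 10.132.0.140 and the dynamic IPs 10.132.0.150 and 10.132.0.156.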

Figure 2. Dynamic IPs and Static IPs

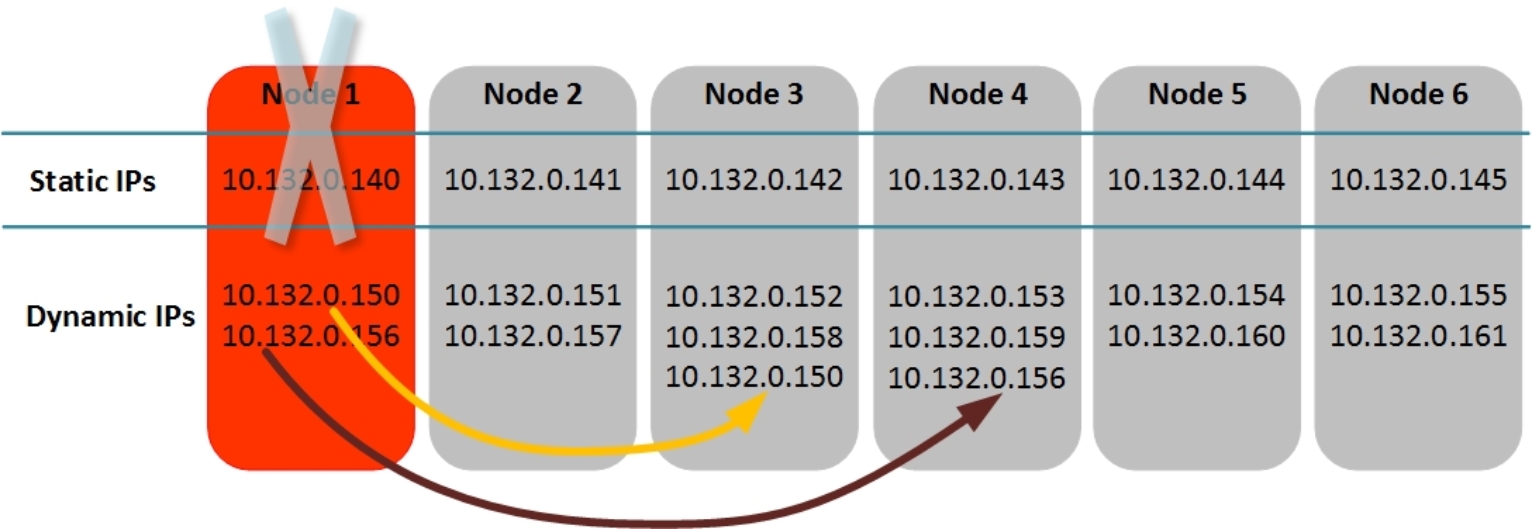

When Node 1 goes offline, the NFS failover IPs and the clients connected to Node 1 fail over to the remaining nodes according to the configured IP failover policy (Round Robin, Connection Count, Network Throughput, or CPU Usage). As shown in Figure 3, the static IP for Node 1 is no longer available.
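The sketch below models what happens to Node 1's dynamic IPs in this scenario, starting from a hypothetical even layout of two dynamic IPs per node. Each orphaned IP is moved to whichever surviving node currently holds the fewest IPs, with ties going to the first node in order. That rule is only a rough stand-in for a connection-count style policy (IP count is used as a proxy for load); `fail_over` is a hypothetical helper, not a OneFS API.

```python
def fail_over(assignment, failed_node):
    """Return a new mapping with failed_node's dynamic IPs moved to survivors.

    Hypothetical least-loaded rule: each orphaned IP goes to the surviving
    node holding the fewest IPs at that moment (ties -> first node in order).
    """
    survivors = {n: list(ips) for n, ips in assignment.items() if n != failed_node}
    for ip in assignment[failed_node]:
        target = min(survivors, key=lambda n: len(survivors[n]))
        survivors[target].append(ip)
    return survivors


# Hypothetical starting layout: two dynamic IPs per node
# (node1 -> .150/.156, node2 -> .151/.157, ..., node6 -> .155/.161).
initial = {
    f"node{n}": [f"10.132.0.{150 + n - 1}", f"10.132.0.{156 + n - 1}"]
    for n in range(1, 7)
}
after = fail_over(initial, "node1")
```

Under this rule, 10.132.0.150 moves to node2 and 10.132.0.156 moves to node3, so all 12 dynamic IPs remain reachable on the five surviving nodes while the static IP of node1 simply disappears.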

Figure 3. NFS Failover with Dynamic IP Pool

Therefore, a dynamic IP pool is recommended for NFS workloads to provide NFS service resilience. If a node with established client connections goes offline, the behavior depends on the protocol. Because NFSv3 is a stateless protocol, workflows can move easily from the failed node to another cluster node: the client automatically reestablishes the connection with the IP on its new interface and retries the last NFS operation. NFSv4.x is a stateful protocol, so OneFS maintains the connection state between the client and the node to support NFSv4.x failover; in OneFS 8.x and later, OneFS keeps this NFSv4.x state information synchronized across multiple nodes. When a node fails, the client can resume its workflow with the same IP on the new node, using the previously maintained connection state.

The number of IPs in the dynamic pool directly affects how well the cluster load balances failed-over connections. For small clusters of N nodes (N ≤ 10), the formula N × (N - 1) gives a reasonable number of IPs for the pool. For larger clusters, the number of IPs per pool should fall between the number of nodes and the number of clients; the exact requirement depends heavily on the workflow and the number of clients.
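One way to see why N × (N - 1) works for small clusters: with N × (N - 1) IPs spread evenly, each node holds N - 1 of them, so when one node fails its N - 1 IPs can move one to each of the N - 1 surviving nodes, spreading the failed-over load evenly. The sketch below turns the sizing guidance into a small helper. The function name, the (low, high) return shape, and the rejection of clusters with fewer than two nodes (a single node has nowhere to fail over to) are assumptions for illustration; the large-cluster range only encodes the bounds stated above, not a precise recommendation.

```python
def dynamic_pool_guidance(num_nodes: int, num_clients: int) -> tuple[int, int]:
    """Hypothetical helper: inclusive (low, high) range of dynamic pool IPs."""
    if num_nodes < 2:
        # Failover needs at least one surviving node to move IPs to.
        raise ValueError("need at least two nodes for IP failover")
    if num_nodes <= 10:
        # Small clusters: N * (N - 1), i.e. N - 1 IPs per node.
        size = num_nodes * (num_nodes - 1)
        return (size, size)
    # Larger clusters: between the node count and the client count;
    # the right value within this range depends on the workflow.
    return (num_nodes, max(num_nodes, num_clients))
```

For the six-node example, the helper returns (30, 30), or five dynamic IPs per node; for a 20-node cluster with 500 clients it returns the range (20, 500).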