GMP scalability

In OneFS 8.2 and later, the maximum cluster size is extended from 144 to 252 nodes. To support this increase in scale, GMP transaction latency has been improved by eliminating serialization and reducing reliance on exclusive merge locks. Instead, GMP now employs a shared merge locking model.

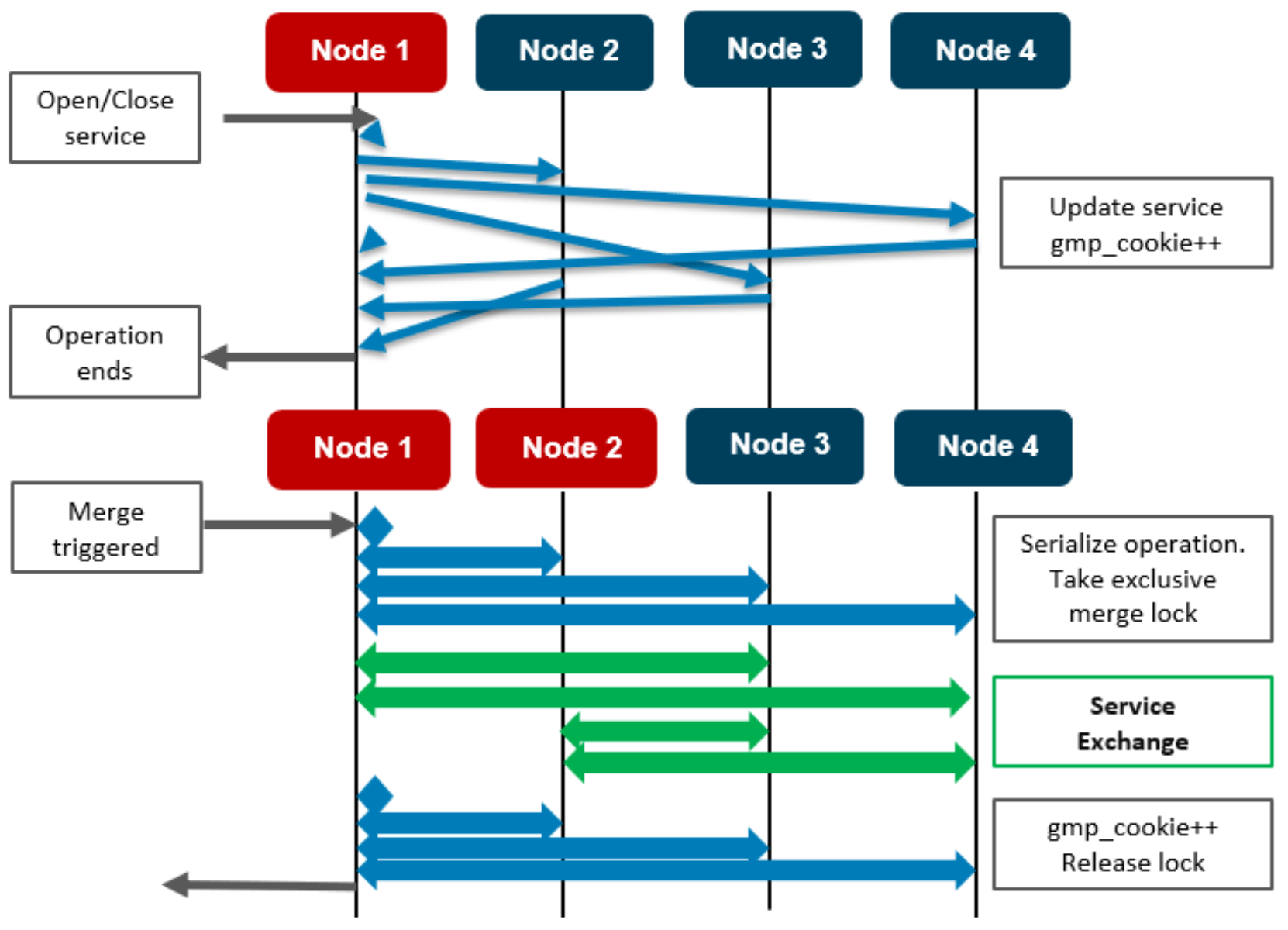

Figure 1. GMP shared merge locking

In the serialized locking example above, on a four-node cluster, the interaction between the two operations is condensed, illustrating how each node can finish its operation independently of its peers. The diamond icons represent the ‘loopback’ messaging to node 1.

Each node takes its local exclusive merge lock. Because the operation is not serialized or locked across the cluster, the impact of a group change is drastically reduced, which allows OneFS to support greater node counts. It is expensive to stop GMP messaging on all nodes to allow this. Although state is not synchronized immediately, it converges to the same value on all nodes after a short time. A call that makes a service change does not return until all nodes have been updated; once all nodes have replied, the service change is complete. Multiple nodes may change a service simultaneously, and multiple services on the same node may change at the same time.
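The behavior described above can be sketched as a small simulation. This is an illustrative model only, not OneFS code: the `Node` class, the `change_service` function, and the `"nfs"` service name are all hypothetical. It shows each node applying a change under its own local lock, while the caller returns only after every node has replied.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class Node:
    """Hypothetical stand-in for a cluster node; not a OneFS data structure."""

    def __init__(self, node_id):
        self.node_id = node_id
        self.merge_lock = threading.Lock()  # this node's local exclusive merge lock
        self.services = {}                  # (origin node, service) -> "up" / "down"

    def apply(self, origin, service, state):
        # Only this node's own lock is held, so peers are never blocked.
        with self.merge_lock:
            self.services[(origin, service)] = state
        return self.node_id                 # acts as the reply to the caller


def change_service(nodes, origin, service, state):
    """Send the change to every node; return only after all have replied."""
    with ThreadPoolExecutor(max_workers=len(nodes)) as pool:
        replies = list(pool.map(lambda n: n.apply(origin, service, state), nodes))
    return sorted(replies)


nodes = [Node(i) for i in range(1, 5)]
acked = change_service(nodes, origin=1, service="nfs", state="down")
```

In this sketch no cluster-wide lock exists: the only coordination point is the caller waiting for all replies, which mirrors the statement that the service change completes once every node has answered.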

The example above illustrates nodes {1,2} merging with nodes {3,4}. The operation is serialized, and the exclusive merge lock is taken. In the diagram, the wide arrows represent multiple messages being exchanged, and the green arrows show the new service exchange. Each node sends its service state to all the nodes that are new to it and receives the state from all new nodes. A node does not need to send its current service state to nodes that were already in its group before the merge.
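To make the exchange pattern in the merge concrete, the following minimal sketch enumerates which node sends its service state to which peer. The `merge_exchange` function is a hypothetical helper written for illustration; it models only the message pairs and does not represent the actual GMP wire protocol.

```python
def merge_exchange(group_a, group_b):
    """Return (sender, receiver) pairs for the service-state exchange in a merge.

    Each node sends its state only to nodes that are new to it, that is,
    nodes from the other pre-merge group.
    """
    messages = []
    for sender in sorted(group_a):
        messages.extend((sender, receiver) for receiver in sorted(group_b))
    for sender in sorted(group_b):
        messages.extend((sender, receiver) for receiver in sorted(group_a))
    return messages


msgs = merge_exchange({1, 2}, {3, 4})
```

For the {1,2} and {3,4} merge this yields eight messages, such as `(1, 3)` and `(3, 1)`, and none between nodes that already shared a group, such as `(1, 2)`.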

During a node split, there are no synchronization issues because either order results in the services being down, and the existing OneFS algorithm still applies.