
Cluster composition, quorum, and group state


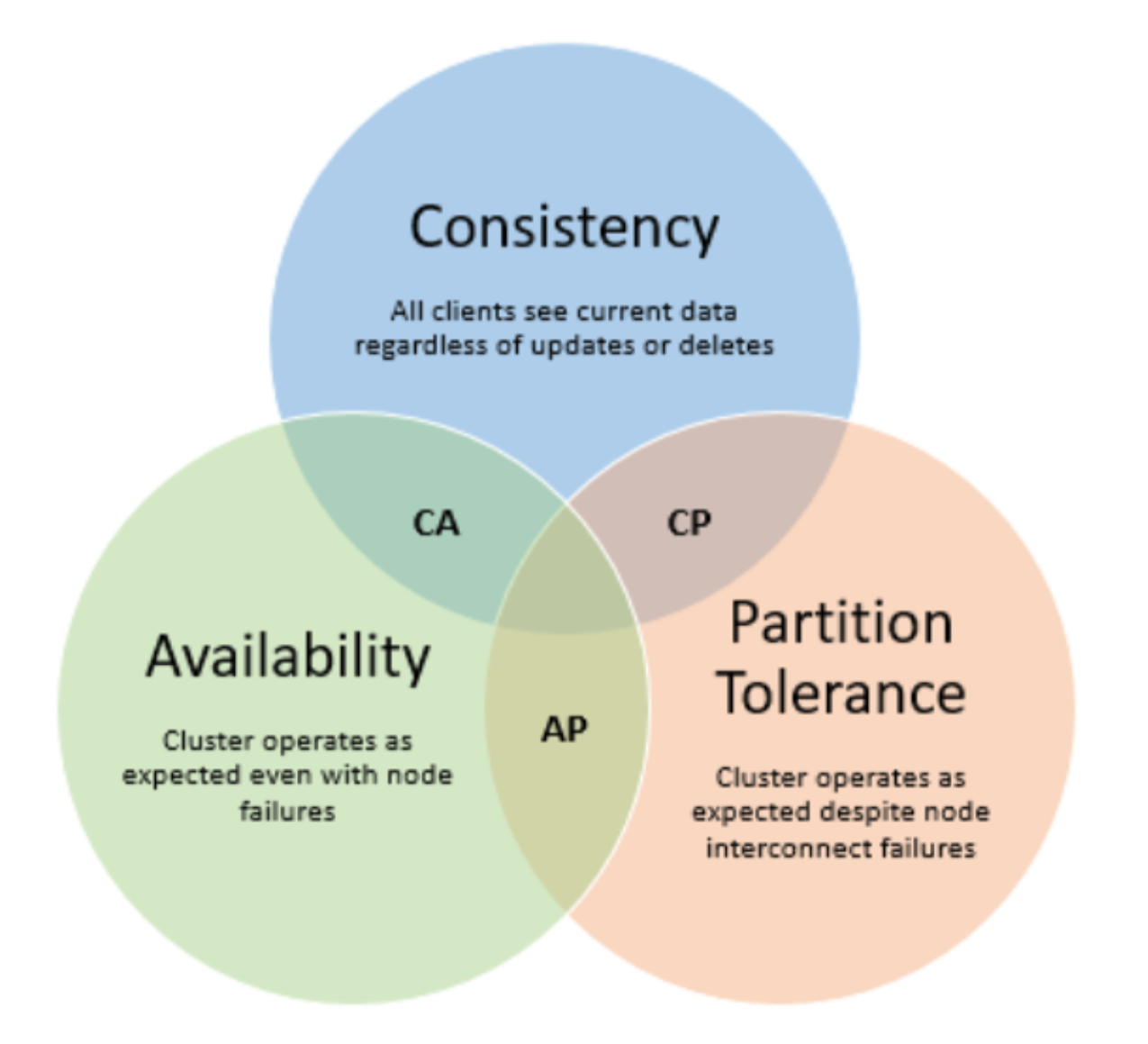

For a cluster to function properly and accept data writes, a quorum (a majority) of nodes must be active and responding. OneFS uses quorum to prevent “split-brain” conditions that could arise if a cluster temporarily split into two clusters. OneFS clustering is based on the CAP theorem, which states that a distributed data store cannot simultaneously provide more than two of the following three guarantees: consistency, availability, and partition tolerance.

OneFS does not compromise on consistency. It uses a simple quorum to prevent partitioning, or ‘split-brain’ conditions, which can arise if the cluster temporarily divides into two clusters. This is a prerequisite of CAP.

The quorum rule guarantees that, regardless of how many nodes fail or come back online, any write that takes place can be made consistent with all previous writes. As such, cluster quorum dictates the number of nodes required to move to a given data protection level. For an erasure-code (FEC) based protection level of N+M, the cluster must contain at least 2M+1 nodes. For example, a minimum of seven nodes is required for a +3n protection level. This allows for the simultaneous loss of three nodes while still maintaining a quorum of four nodes, so the cluster remains fully operational.
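The 2M+1 rule and the majority quorum can be checked with a few lines of arithmetic. The following is a minimal sketch, not OneFS code: the function names `min_nodes_for_protection` and `quorum_size` are hypothetical, and it assumes quorum means a strict majority of the cluster's nodes, as described above.

```python
def min_nodes_for_protection(m: int) -> int:
    """Smallest cluster size for a +Mn protection level, per the 2M+1 rule."""
    if m < 1:
        raise ValueError("m must be at least 1")
    return 2 * m + 1


def quorum_size(total_nodes: int) -> int:
    """Number of nodes in a strict majority (assumed meaning of quorum)."""
    if total_nodes < 1:
        raise ValueError("total_nodes must be at least 1")
    return total_nodes // 2 + 1


# Illustrative check: after losing M nodes from a minimum-size cluster,
# the survivors still form a quorum.
for m in (1, 2, 3, 4):
    nodes = min_nodes_for_protection(m)
    survivors = nodes - m
    print(f"+{m}n: min nodes={nodes}, quorum={quorum_size(nodes)}, "
          f"survivors after {m} failures={survivors}")
```

With 2M+1 nodes, losing M nodes leaves M+1 survivors, which is exactly the majority of 2M+1. This is why the minimum cluster size and the tolerated failure count are tied together.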

If a cluster drops below quorum, the file system is automatically placed into a protected, read-only state that denies writes but still allows read access to the available data. In this state, it will not accept write requests from any protocol, regardless of any particular node pool membership issues. Where a protection level is set too high for OneFS to achieve using FEC, the default behavior is to protect that data using mirroring instead, which has a negative impact on space utilization.

Since OneFS does not compromise on consistency, a mechanism is required to manage a cluster’s transient state and quorum. As such, the primary role of the OneFS Group Management Protocol (GMP) is to help create and maintain a group of synchronized nodes.

A group is a given set of nodes that have synchronized state, and a cluster may form multiple groups as connection state changes. Quorum is a property of the GMP group, which helps enforce consistency across node disconnects and other transient events. Having a consistent view of the cluster state is crucial, since initiators need to know which nodes and drives are available to write to.
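To illustrate why quorum is evaluated per group, consider a cluster that splits into two groups. The sketch below is a simplified model and not the GMP implementation: `has_quorum` and `group_mode` are hypothetical helpers, node IDs are plain integers, and a group is assumed to be writable only when it holds a strict majority of the cluster's nodes.

```python
def has_quorum(group, cluster_size: int) -> bool:
    """True when the group holds a strict majority of the cluster's nodes."""
    return len(group) > cluster_size // 2


def group_mode(group, cluster_size: int) -> str:
    """Assumed behavior: groups without quorum fall back to read-only."""
    return "read-write" if has_quorum(group, cluster_size) else "read-only"


# Hypothetical seven-node cluster that splits into two groups.
cluster_size = 7
groups = [frozenset({1, 2, 3, 4}), frozenset({5, 6, 7})]

for group in groups:
    print(sorted(group), group_mode(group, cluster_size))
```

Because two disjoint groups cannot both hold a strict majority, at most one side of a split accepts writes, which is the property that rules out split-brain. In an even split, such as three nodes on each side of a six-node cluster, neither group has quorum under this model.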