
Cluster capacity management


When a cluster or any of its node pools becomes more than 95% full, OneFS can experience slower performance and possible workflow interruptions, particularly in degraded mode and during high-transaction or write-latency-sensitive operations. Furthermore, when a large cluster approaches full capacity (over 98% full), the following issues can occur:

- Performance degradation in some cases

- Workflow disruptions: failed file operations and an inability to write data.

- Inability to make configuration changes or run commands to delete data and free up space

- Increased disk and node rebuild times.

To ensure that a cluster or its constituent pools do not run out of space:

- Add new nodes to existing clusters or pools

- Replace smaller-capacity nodes with larger-capacity nodes

- Create more clusters.

If you decide to add new nodes to an existing large cluster or pool, contact your sales team to order the nodes well before the cluster or pool runs short on space. The recommendation is to start planning for additional capacity when the cluster or pool reaches 75% full. This allows enough time to receive and install the new hardware while still maintaining sufficient free space.

The following table presents a suggested timeline for cluster capacity planning:

Table 8. Capacity planning timeline

| Used capacity | Action |
| --- | --- |
| 75% | Plan additional node purchases. |
| 80% | Receive delivery of the new hardware. |
| 85% | Rack and install the new node or nodes. |
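The timeline in Table 8 can be expressed as a simple threshold lookup. The following is a minimal Python sketch, not a OneFS tool: the function name `planning_action` and the constant `PLANNING_TIMELINE` are hypothetical, and the used and total byte counts are assumed to come from whatever capacity reporting you already have.

```python
from typing import Optional

# Thresholds and actions transcribed from Table 8 (used capacity in percent),
# ordered from highest to lowest so the first match is the most urgent one.
PLANNING_TIMELINE = [
    (85.0, "Rack and install the new node or nodes."),
    (80.0, "Receive delivery of the new hardware."),
    (75.0, "Plan additional node purchases."),
]


def planning_action(used_bytes: int, total_bytes: int) -> Optional[str]:
    """Return the Table 8 action for the highest threshold reached, or None."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    used_pct = 100.0 * used_bytes / total_bytes
    for threshold, action in PLANNING_TIMELINE:
        if used_pct >= threshold:
            return action
    return None  # below 75% used: no action listed in the table
```

Evaluating this per node pool, as well as for the cluster as a whole, matches the guidance that either can run short on space.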

If an organization’s data availability and protection SLA varies across different data categories (for example, home directories, file services), ensure that any snapshot, replication, and backup schedules are configured accordingly to meet the required availability and recovery objectives, and fit within the overall capacity plan.

Consider configuring a separate accounting quota for /ifs/home and /ifs/data directories (or wherever data and home directories are provisioned) to monitor aggregate disk space usage and issue administrative alerts as necessary to avoid running low on overall capacity.

For optimal performance in a cluster of any size, the recommendation is to maintain at least 10% free space in each pool of the cluster.

To better protect smaller clusters (containing 3 to 7 nodes), we recommend that you maintain 15 to 20% free space. A full smartfail of a node in a smaller cluster may require more than one node's worth of space. Maintaining 15 to 20% free space allows the cluster to continue operating while Dell helps with recovery plans.
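The free-space guidance above can be checked with a short calculation. This is an illustrative Python sketch only: the function names are hypothetical, and the rule for clusters with fewer than three nodes is an assumption, because the text covers only clusters of three or more nodes.

```python
from typing import Tuple


def free_space_target_pct(node_count: int) -> Tuple[float, float]:
    """Return the (minimum, preferred) free-space percentage for a cluster."""
    if node_count < 3:
        # Assumption: sizes below 3 nodes are outside the guidance in the text.
        raise ValueError("guidance covers clusters of 3 or more nodes")
    if node_count <= 7:
        return (15.0, 20.0)  # smaller clusters: 15 to 20% free
    return (10.0, 10.0)      # any other size: 10% free in each pool


def meets_free_space_target(free_bytes: int, total_bytes: int,
                            node_count: int) -> bool:
    """True if the free percentage is at or above the minimum target."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    minimum, _preferred = free_space_target_pct(node_count)
    return 100.0 * free_bytes / total_bytes >= minimum
```

For example, a 4-node cluster with 12% free space does not meet the target, while a 20-node cluster with the same percentage does.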

Plan for contingencies: having a fully updated backup of your data can limit the risk of data loss if a node fails.

Maintaining appropriate protection levels

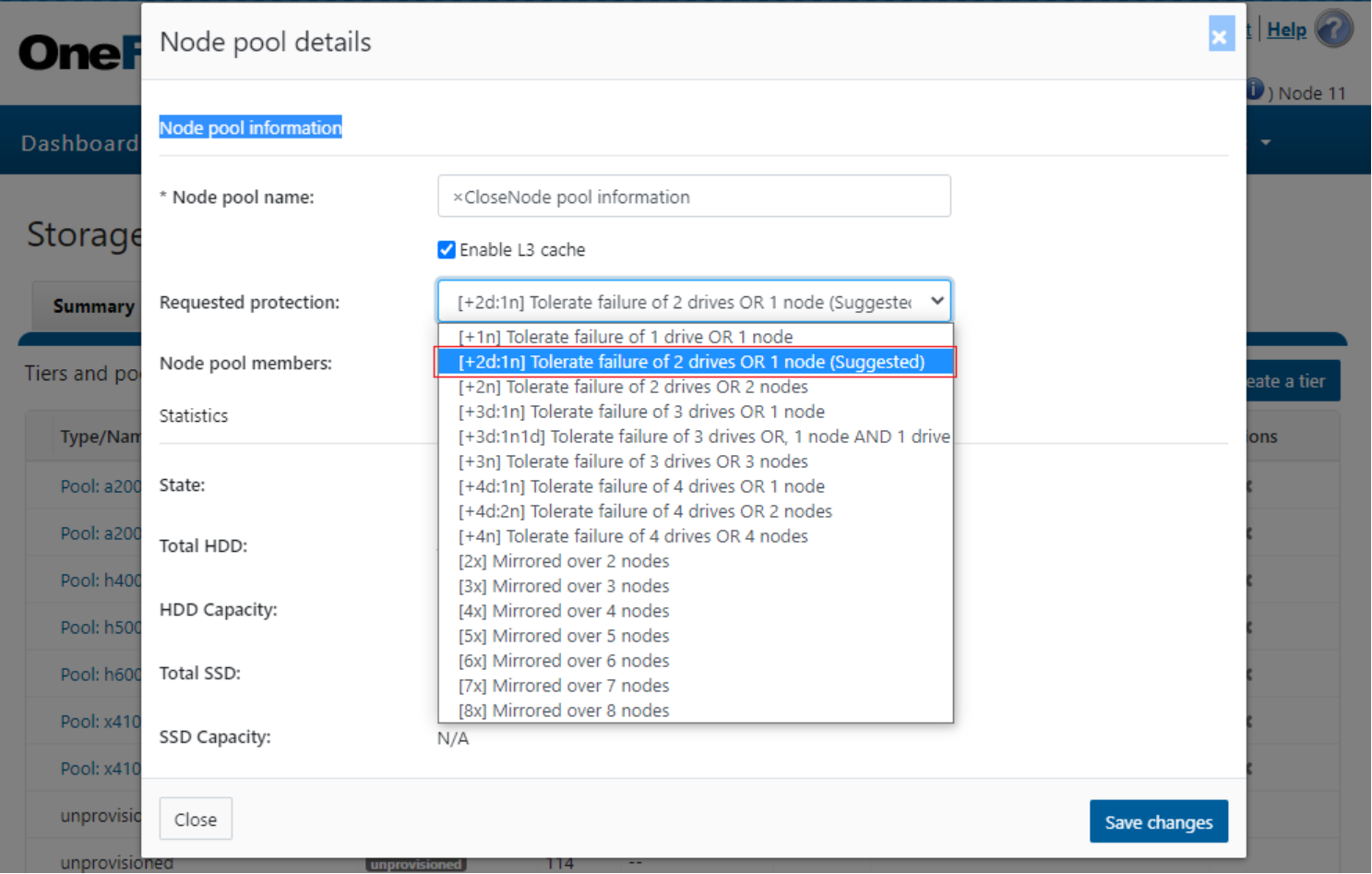

Ensure that your cluster and pools are protected at the appropriate level. Every time you add nodes, re-evaluate protection levels. OneFS includes a ‘suggested protection’ function that calculates a recommended protection level based on cluster configuration, and alerts you if the cluster falls below this suggested level.

OneFS supports several protection schemes. These include the ubiquitous +2d:1n, which protects against two drive failures or one node failure.

The best practice is to use the recommended protection level for a particular cluster configuration. This recommended level of protection is clearly marked as ‘suggested’ in the OneFS WebUI storage pools configuration pages and is typically configured by default. For all current hardware configurations, the recommended protection level is ‘+2d:1n’ or ‘+3d:1n1d’.

Figure 20. OneFS suggested protection level

Monitoring cluster capacity

To monitor cluster capacity:

Configure alerts. Set up event notification rules so that you will be notified when the cluster begins to reach capacity thresholds. Make sure to enter a current email address in order to receive the notifications.

Monitor alerts. The cluster sends notifications when any of its node pools have reached 90 percent and 97 percent capacity. On some clusters, 5 percent (or even 1 percent) capacity remaining might mean that a lot of space is still available, so you might be inclined to ignore these notifications. However, it is best to pay attention to the alerts, closely monitor the cluster, and have a plan in place to take action when necessary.
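To put a percentage alert into absolute terms, it can help to compute the free space that remains when a threshold is crossed. The sketch below is a hypothetical Python helper, not part of OneFS; only the 90 and 97 percent thresholds come from the text above.

```python
from typing import Optional

# Node pool notification thresholds named in the text (percent used),
# checked from highest to lowest.
ALERT_THRESHOLDS_PCT = (97, 90)


def highest_alert_crossed(used_bytes: int, total_bytes: int) -> Optional[int]:
    """Return the highest notification threshold reached, or None."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    used_pct = 100 * used_bytes / total_bytes
    for threshold in ALERT_THRESHOLDS_PCT:
        if used_pct >= threshold:
            return threshold
    return None


def free_space_tib(used_bytes: int, total_bytes: int) -> float:
    """Return the remaining free space in TiB."""
    return (total_bytes - used_bytes) / 2**40
```

On a 1000 TiB pool that is 95% used, the 90 percent alert has fired even though 50 TiB is still free, which is why the remaining percentage alone can be misleading.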

Monitor ingest rate. It is very important to understand the rate at which data is coming into the cluster or pool. Options to do this include:

- SNMP

- SmartQuotas

- FSAnalyze

Use SmartQuotas to monitor and enforce administrator-defined storage limits. SmartQuotas manages storage use, monitors disk storage, and issues alerts when disk storage limits are exceeded. Although it does not provide the same detail of the file system that FSAnalyze does, SmartQuotas maintains a real-time view of space utilization so that you can quickly obtain the information you need.

Run FSAnalyze jobs. FSAnalyze is a job-engine job that the system runs to create data for InsightIQ file system analytics tools. FSAnalyze provides details about data properties and space usage within the /ifs directory. Unlike SmartQuotas, FSAnalyze updates its views only when the FSAnalyze job runs. Since FSAnalyze is a fairly low-priority job, higher-priority jobs can sometimes preempt it, so it can take a long time to gather all the data. An InsightIQ license is required to run an FSAnalyze job.
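Once an ingest rate is known, it can be turned into an estimate of how long a pool has before it reaches a planning threshold. The following Python sketch assumes linear growth between two usage samples, which real workloads may not follow; the function names are hypothetical, and the usage samples are assumed to come from SNMP, SmartQuotas, or FSAnalyze reporting.

```python
import math


def ingest_rate_per_day(used_then: float, used_now: float,
                        days_elapsed: float) -> float:
    """Average growth per day between two usage samples (same units)."""
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    return (used_now - used_then) / days_elapsed


def days_until_threshold(used: float, total: float,
                         ingest_per_day: float, threshold_pct: float) -> float:
    """Days until usage reaches threshold_pct of total at a constant rate."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 < threshold_pct <= 100:
        raise ValueError("threshold_pct must be in (0, 100]")
    headroom = total * threshold_pct / 100 - used
    if headroom <= 0:
        return 0.0       # threshold already reached
    if ingest_per_day <= 0:
        return math.inf  # usage is flat or shrinking
    return headroom / ingest_per_day
```

For example, a pool holding 700 TB of 1000 TB that grew from 600 TB over the previous 20 days is ingesting 5 TB per day and reaches 75% used in about 10 days.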

Managing data

Regularly archive data that is rarely accessed and delete any unused and unwanted data. Ensure that pools do not become too full by setting up file pool policies to move data to other tiers and pools.

Provisioning additional capacity

To ensure that your cluster or pools do not run out of space, you can create more clusters, replace smaller-capacity nodes with larger-capacity nodes, or add new nodes to existing clusters or pools. If you decide to add new nodes to an existing cluster or pool, contact your sales representative to order the nodes long before the cluster or pool runs out of space. Dell recommends that you begin the ordering process when the cluster or pool reaches 75% used capacity, consistent with the capacity planning timeline in Table 8. This allows enough time to receive and install the new equipment while still maintaining enough free space.

Managing snapshots

Sometimes a cluster has many old snapshots that take up a lot of space. Reasons for this include inefficient deletion schedules, a degraded cluster that prevents jobs from running, and an expired SnapshotIQ license.

Ensuring all nodes are supported and compatible

Each version of OneFS supports only certain nodes. See the “OneFS and node compatibility” section of the Supportability and Compatibility Guide for a list of which nodes are compatible with each version of OneFS. When upgrading OneFS, make sure that the new version supports your existing nodes. If it does not, you might need to replace the nodes.

Space and performance are optimized when all nodes in a pool are compatible. When you add new nodes to a cluster, OneFS automatically provisions nodes into pools with other nodes of compatible type, hard drive capacity, SSD capacity, and RAM. Occasionally, however, the system might put a node into an unexpected location. If you believe that a node has been placed into a pool incorrectly, contact Dell Technical Support for assistance. Different versions of OneFS have different rules regarding what makes nodes compatible.

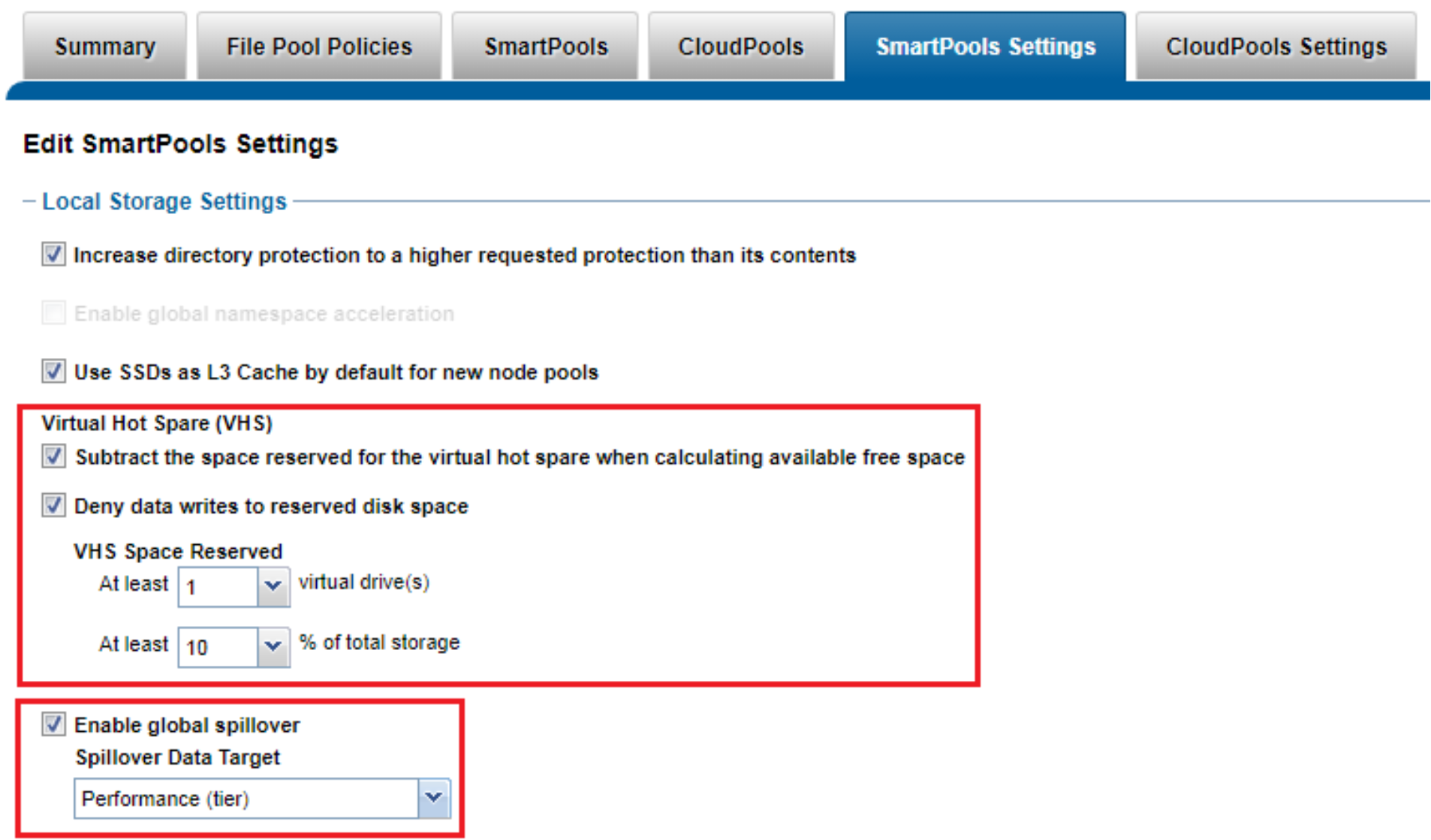

Enabling Virtual Hot Spare

The purpose of Virtual Hot Spare (VHS) is to keep space in reserve in case you need to smartfail drives when the cluster gets close to capacity. Enabling VHS will not give you more free space, but it will help protect your data in the event that space becomes scarce. VHS is enabled by default. Dell strongly recommends that you do not disable VHS unless directed by a Support engineer. If you disable VHS in order to free some space, the space you just freed will probably fill up again quickly with new writes. At that point, if a drive were to fail, you might not have enough space to smartfail the drive and reprotect its data, potentially leading to data loss. If VHS is disabled and you upgrade OneFS, VHS will remain disabled. If VHS is disabled on your cluster, first check to make sure the cluster has enough free space to safely enable VHS, and then enable it.

Enabling Spillover

Spillover allows data that is being sent to a full pool to be diverted to an alternate pool. Spillover is enabled by default on clusters that have more than one pool. If you have a SmartPools license on the cluster, you can disable Spillover. However, it is recommended that you keep Spillover enabled. If a pool is full and Spillover is disabled, you might get a “no space available” error but still have a large amount of space left on the cluster.
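The effect of the Spillover setting can be illustrated with a toy model. The Python sketch below is not OneFS's actual placement logic: the `Pool` class, `place_write` function, and `NoSpaceError` exception are all hypothetical, and the model only shows why a write can fail with a "no space available" error while other pools still have room.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pool:
    name: str
    capacity: int
    used: int = 0

    @property
    def free(self) -> int:
        return self.capacity - self.used


class NoSpaceError(OSError):
    """Raised when a write cannot be placed in any permitted pool."""


def place_write(size: int, target: Pool, others: List[Pool],
                spillover_enabled: bool = True) -> str:
    """Place a write and return the name of the pool that received it."""
    if target.free >= size:
        target.used += size
        return target.name
    if spillover_enabled:
        # Divert to the first alternate pool with enough room.
        for pool in others:
            if pool.free >= size:
                pool.used += size
                return pool.name
    raise NoSpaceError("no space available")
```

With a nearly full target pool and a mostly empty second pool, the same write succeeds when Spillover is enabled and fails when it is disabled, even though the cluster as a whole has plenty of free space.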

Figure 21. OneFS SmartPools VHS and Spillover configuration

Confirm that:

- There are no cluster issues.

- OneFS configuration is as expected.

In summary, best practices on planning and managing capacity on a cluster include the following:

- Maintain sufficient free space.

- Plan for contingencies.

- Manage your data.

- Maintain appropriate protection levels.

- Monitor cluster capacity and data ingest rate.

- Consider configuring a separate accounting quota for /ifs/home and /ifs/data directories (or wherever data and home directories are provisioned) to monitor aggregate disk space usage and issue administrative alerts as necessary to avoid running low on overall capacity.

- Ensure that any snapshot, replication, and backup schedules meet the required availability and recovery objectives and fit within the overall capacity.

- Manage snapshots.

- Use InsightIQ, ClusterIQ, and DataIQ for usage forecasting and for verifying cluster health.