
AirGap Enterprise design considerations


This section describes design considerations for AirGap Enterprise.

Network design

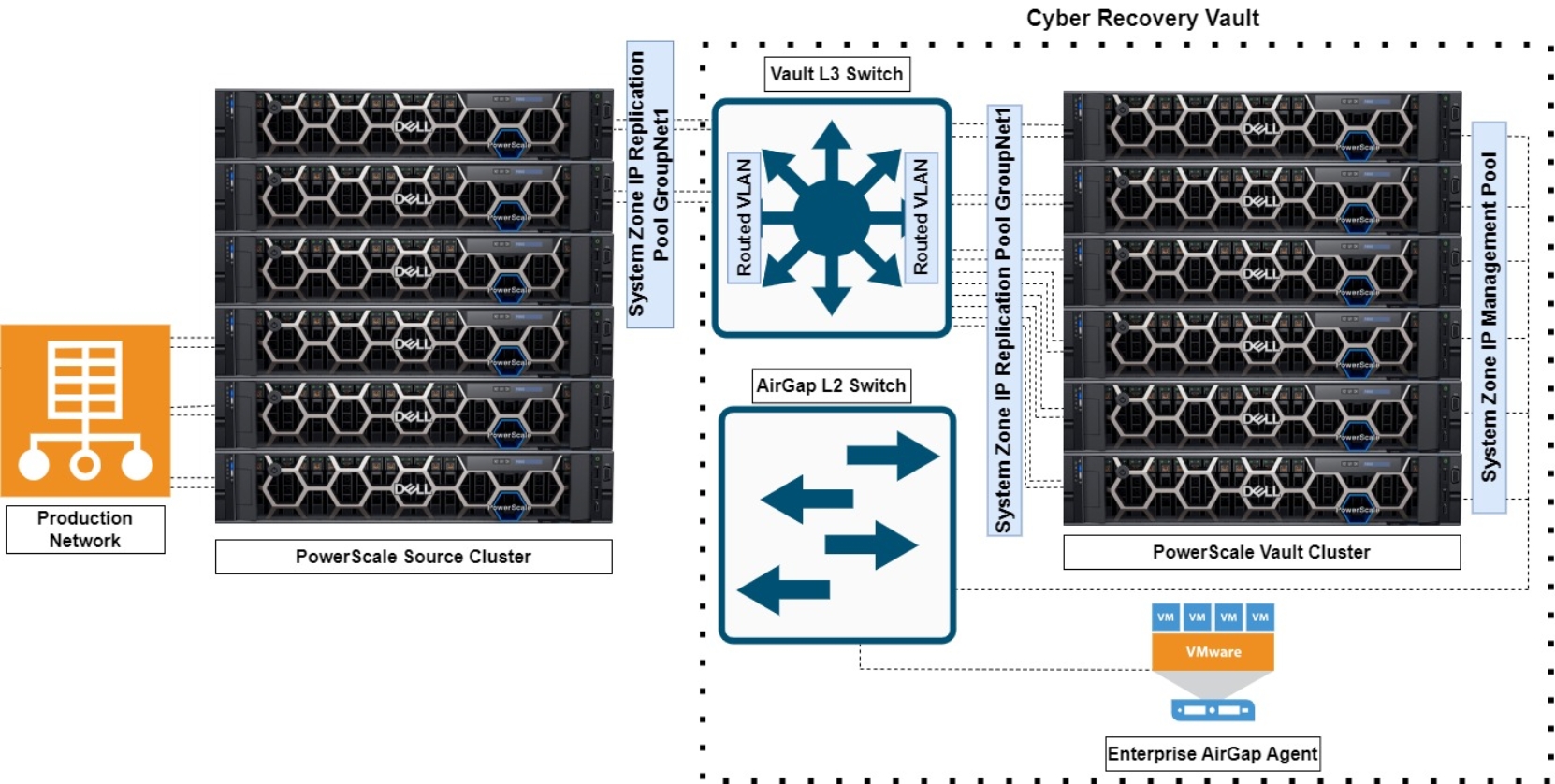

On the PowerScale Source Cluster, some of the nodes are connected through a Vault L3 Switch, located in the Cyber Recovery Vault, to the PowerScale Vault Cluster. Although the number of nodes connected to the Vault L3 Switch varies based on cluster size and workflow requirements, it is recommended that at least two source cluster nodes connect their front-end network ports through the Vault L3 Switch. The rest of the nodes on the source cluster connect to the production network. All the PowerScale Vault Cluster nodes connect to the Vault L3 Switch as shown in the following figure.

Figure 31. PowerScale Source Cluster to Vault Cluster topology

The source cluster nodes that connect to the Vault L3 Switch require a direct connection without any additional network hops for optimal security. The source and vault cluster nodes connect to the Vault L3 Switch through their respective System Zone IP Replication Pool Groupnet1. The Vault L3 Switch routes the VLAN from the source cluster to the VLAN for the vault cluster.

Inside the Cyber Recovery Vault, the PowerScale Vault Cluster management ports connect through a System Zone IP Management Pool to the AirGap L2 Switch. The AirGap L2 Switch also connects to the VMware ESXi host running the AirGap Enterprise vault agent.

Considering that each node on the source cluster can only connect to either the Vault L3 Switch or the production network, a common question is how to split the nodes between the networks. The best practice is for at least two of the source cluster nodes to connect to the Vault L3 Switch. Additional source cluster nodes can connect to the Vault L3 Switch, depending on the source cluster size, workflow, and recovery requirements.
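The node-split guidance above can be expressed as a simple design-time check. The following is a minimal sketch, not a Dell tool: the `Node` structure, the network labels, and the `check_topology` function are hypothetical names introduced for illustration, and the only rules encoded are the ones stated in this section (at least two source nodes on the Vault L3 Switch, every vault node on the Vault L3 Switch).

```python
from dataclasses import dataclass

# Hypothetical labels used only in this sketch.
VAULT_SWITCH = "vault_l3_switch"
PRODUCTION = "production"
MIN_SOURCE_NODES_ON_VAULT_SWITCH = 2  # best practice stated in this section


@dataclass(frozen=True)
class Node:
    name: str
    cluster: str  # "source" or "vault"
    network: str  # VAULT_SWITCH or PRODUCTION


def check_topology(nodes):
    """Return a list of problems; an empty list means the layout passes."""
    problems = []
    source_on_switch = [
        n for n in nodes if n.cluster == "source" and n.network == VAULT_SWITCH
    ]
    if len(source_on_switch) < MIN_SOURCE_NODES_ON_VAULT_SWITCH:
        problems.append(
            f"only {len(source_on_switch)} source node(s) on the Vault L3 Switch; "
            f"at least {MIN_SOURCE_NODES_ON_VAULT_SWITCH} recommended"
        )
    for n in nodes:
        # Every vault cluster node is expected to connect to the Vault L3 Switch.
        if n.cluster == "vault" and n.network != VAULT_SWITCH:
            problems.append(f"vault node {n.name} is not on the Vault L3 Switch")
    return problems


if __name__ == "__main__":
    layout = [
        Node("src-1", "source", VAULT_SWITCH),
        Node("src-2", "source", VAULT_SWITCH),
        Node("src-3", "source", PRODUCTION),
        Node("vlt-1", "vault", VAULT_SWITCH),
    ]
    print(check_topology(layout) or "layout passes")
```

A check like this is most useful early in the design phase, when the number of source nodes dedicated to replication is still being negotiated against production capacity.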

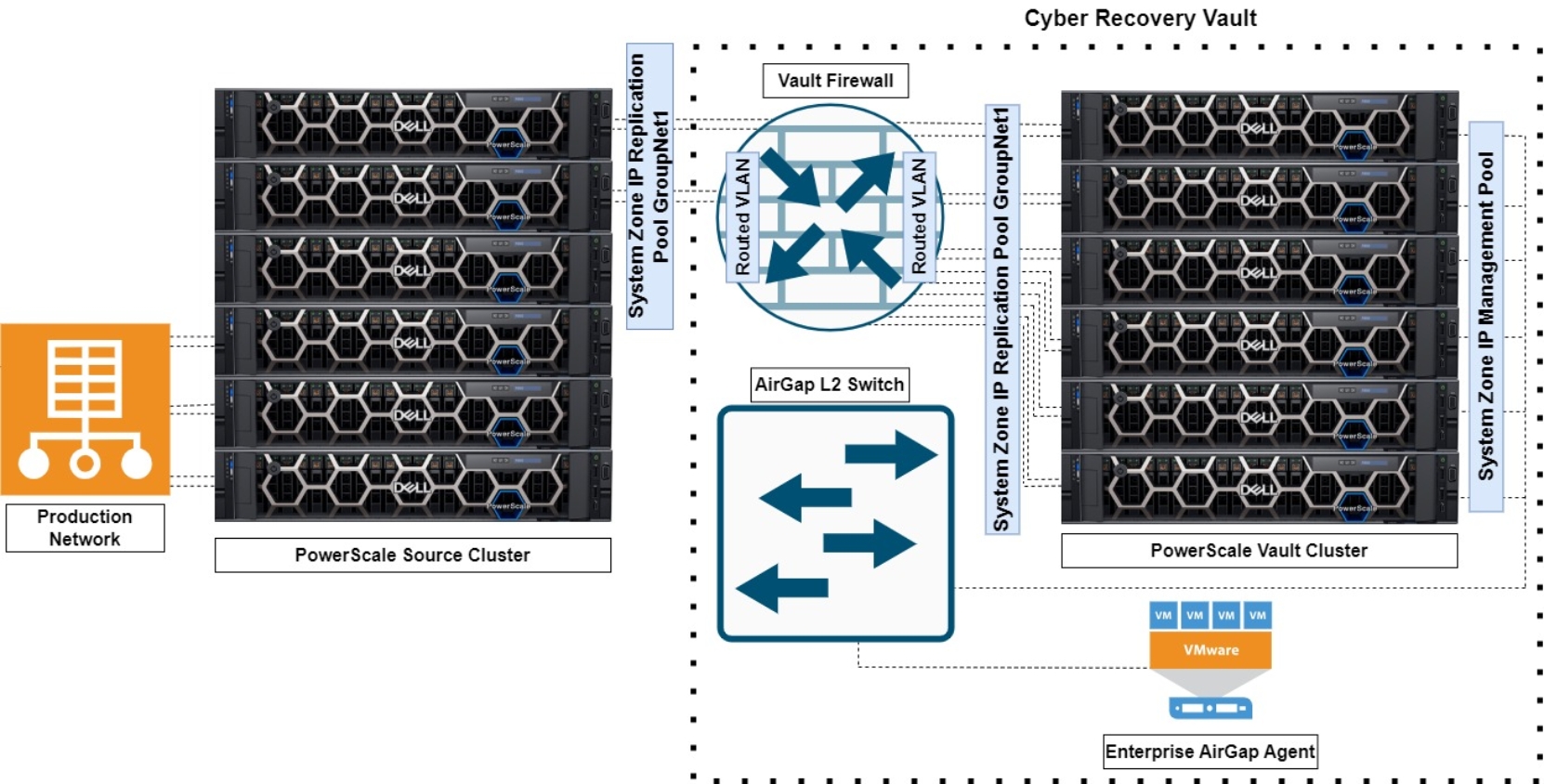

Firewall

Adding a firewall to the Cyber Recovery Vault is an optional configuration. The minimum best practice is having the Vault L3 Switch manage replication into the Cyber Recovery Vault as explained in the section Network design. A firewall could be used in place of the Vault L3 Switch routing between the source cluster and the vault cluster as shown in the following figure.

Figure 32. Cyber Recovery Vault with Vault Firewall

Depending on the Vault L3 Switch vendor, configuration, and release, a firewall provides additional protection in most cases. The level of additional protection is difficult to gauge because each switch and firewall vendor implements different security functions. Consider how the specific Vault L3 Switch compares with the specific firewall.

Traditionally, firewalls provide additional security over an L3 switch. If a firewall is configured to block all incoming traffic, any host requesting data from outside the Cyber Recovery Vault would be denied. An Access Control List (ACL) could be configured to allow traffic only from the source cluster. Another option is a reflexive ACL, which observes an outbound connection and permits return traffic only on that connection. Stateful firewalls track the state of each connection, up to an inactivity timeout, and block traffic once the connection is closed.
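The filtering behavior described above can be illustrated with a small model. This is a simplified sketch for reasoning about the concepts only; it does not represent any vendor's firewall or ACL implementation. The `StatefulFilter` class, its method names, and the host labels are hypothetical. It combines a static allow list (the ACL for the source cluster) with reflexive, stateful handling of return traffic that expires after an inactivity timeout.

```python
class StatefulFilter:
    """Toy model: default-deny inbound, static ACL, reflexive return traffic."""

    def __init__(self, allowed_sources, idle_timeout):
        self.allowed_sources = set(allowed_sources)  # static ACL entries
        self.idle_timeout = idle_timeout             # seconds of allowed inactivity
        self._last_seen = {}  # (inside_host, outside_host) -> last activity time

    def outbound(self, inside_host, outside_host, now):
        # Record the outbound connection so return traffic can be matched.
        self._last_seen[(inside_host, outside_host)] = now
        return True

    def inbound(self, outside_host, inside_host, now):
        # Static ACL: traffic from the source cluster is always permitted.
        if outside_host in self.allowed_sources:
            return True
        key = (inside_host, outside_host)
        last = self._last_seen.get(key)
        if last is None:
            return False  # no matching outbound connection: default deny
        if now - last > self.idle_timeout:
            del self._last_seen[key]  # state expired after inactivity
            return False
        self._last_seen[key] = now  # activity refreshes the timeout
        return True

    def close(self, inside_host, outside_host):
        # Closing the connection removes its state immediately.
        self._last_seen.pop((inside_host, outside_host), None)


if __name__ == "__main__":
    fw = StatefulFilter(allowed_sources={"source-cluster"}, idle_timeout=60)
    print(fw.inbound("source-cluster", "vault-cluster", now=0))
    print(fw.inbound("unknown-host", "vault-cluster", now=0))
```

In this model, an unsolicited inbound packet from any host other than the source cluster is denied, while a reply to a connection initiated from inside the vault is accepted only until the connection closes or goes idle.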

A Next-Generation Firewall (NGFW) would provide an added layer of security, using deep packet inspection to heuristically determine what type of data is passing through or which application is communicating. The NGFW provides flexibility to block or allow data with granular options. For example, the NGFW could detect and block malware in a data stream. Traffic could be limited to and from a single application or host. Because the NGFW would be in the Cyber Recovery Vault, a maintenance window must be planned to ensure that its software is current against threats.

Another factor to consider with a firewall is the impact on data replication speed. The additional packet filtering does consume time, but the impact varies with the firewall version and vendor, and is minimal with newer-generation firewalls.

Adding a firewall to the Cyber Recovery Vault can also create additional management overhead. The device must be configured and then updated on a regular cadence. Access roles must be configured, and other overhead should be considered during the design phase.

It is important to understand that the network interfaces on the vault cluster are added only for replication and then removed when not in use. Further, OneFS releases 9.5 and later include a built-in firewall. For more information about the OneFS firewall, see the white paper PowerScale: Network Design Considerations | Dell Technologies Info Hub.

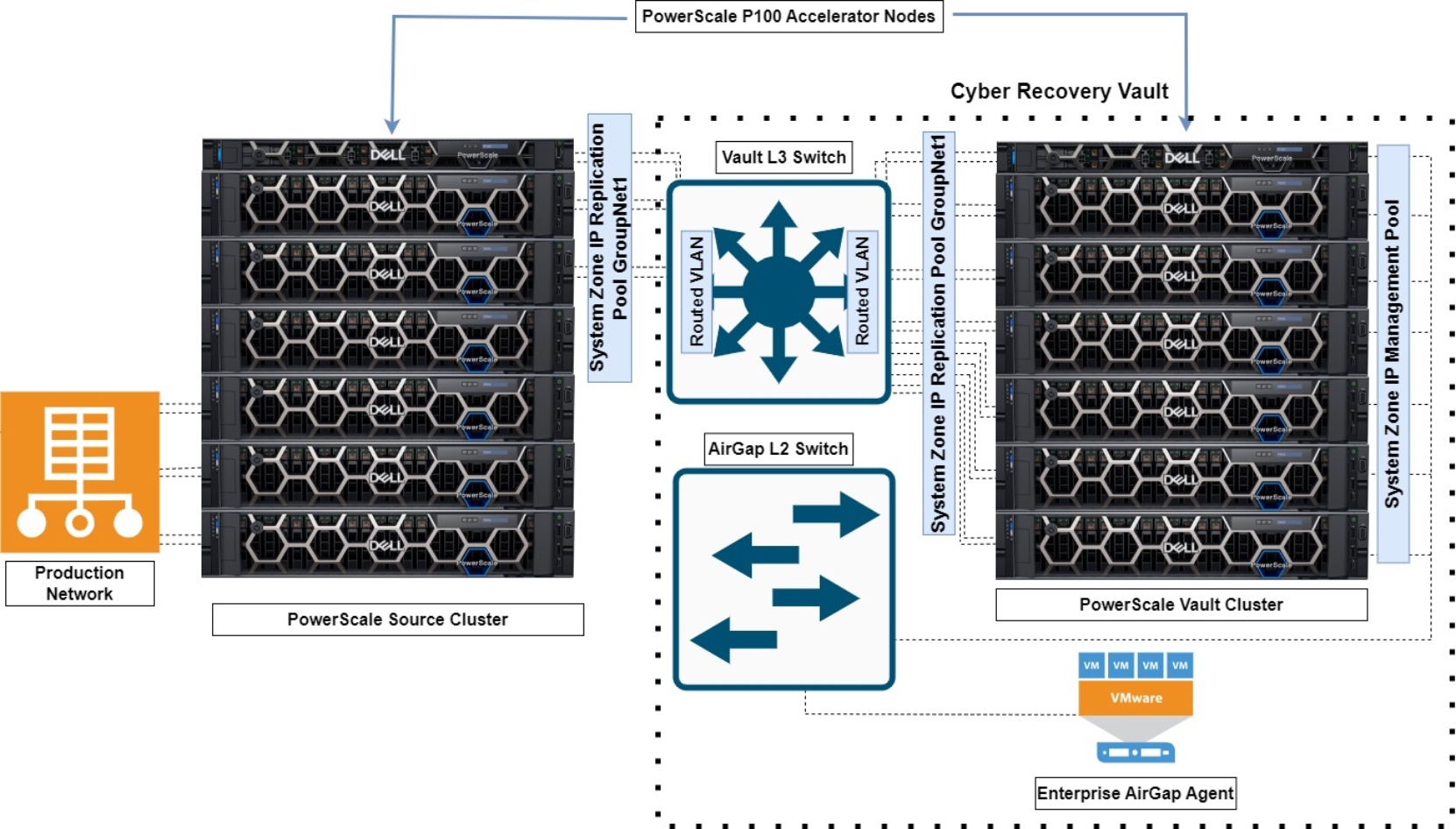

Accelerator nodes

Given that not all the source and vault cluster nodes are connected through the Vault L3 Switch, adding accelerator nodes provides additional replication throughput. If accelerator nodes are to be used, as a best practice add them to both the source and vault clusters. Further, ensure that they participate in the replication and are connected through the Vault L3 Switch as shown in the following figure.

Figure 33. PowerScale Source to Vault Cluster Topology with Accelerator Nodes

During SyncIQ replication, the nodes generate worker streams between the clusters. The accelerator nodes read and write data from other nodes in the cluster. This optimizes the overall cluster throughput, considering that not all of the source cluster nodes are connected through the Vault L3 Switch.

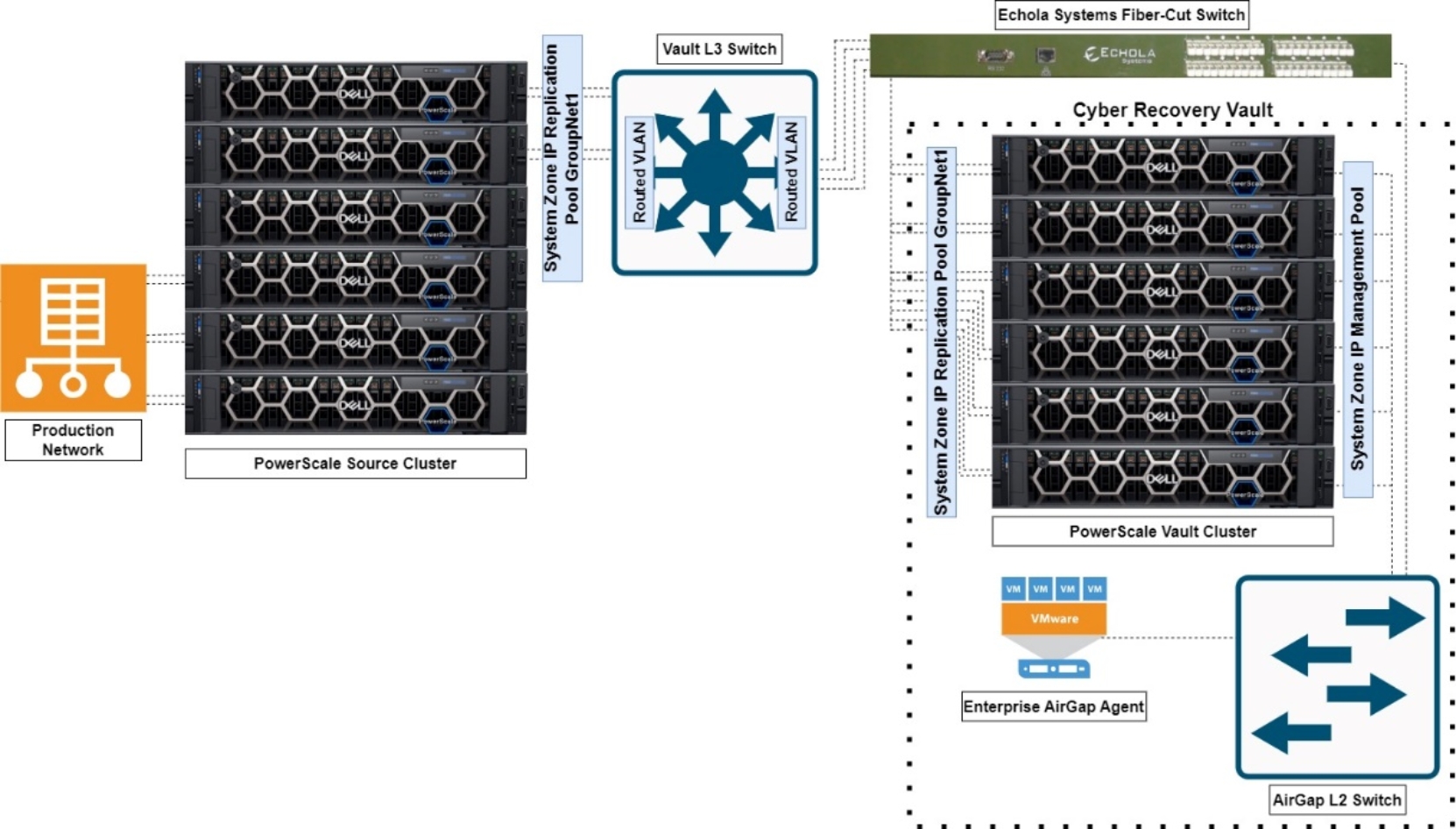

Fiber-cut switch

The Cyber Recovery Vault can be further isolated using a fiber-cut kill switch, simulating a real-world fiber-cut scenario. As an optional part of the Cyber Recovery Vault, the fiber-cut switch provides electrical and electromagnetic isolation. An out-of-band management channel is used to isolate the network using fiber-cut simulation and REST APIs/CLIs. Further, it can also function as a kill switch for better containment by blocking the lateral movement of ransomware. Using the fiber-cut switch still allows for the choice of either the Vault L3 Switch or the Vault Firewall because it is physically placed outside the vault, as shown in the following figure.

Figure 34. PowerScale Source to Vault Cluster Topology with Echola fiber-cut switch

For more information about the Echola Systems fiber-cut switch, see Echola Systems Optical switch products.

SyncIQ considerations

Although the considerations here are specific to a source and vault cluster, the general SyncIQ design considerations for a source and target cluster are applicable. For more information about SyncIQ design considerations, see Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub.

Vault cluster license requirements

The vault cluster requires SmartConnect Advanced, SyncIQ, and SnapshotIQ licenses. SmartDedupe and SmartLock are optional, depending on the data requirements.
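The license requirement above lends itself to a simple pre-deployment check. The following is a minimal sketch in which the license names come from this section, while the `missing_licenses` function and the way the installed list is obtained are assumptions for illustration. It does not query a cluster; the installed licenses are passed in as plain strings.

```python
# Required vault cluster licenses, as listed in this section.
REQUIRED_VAULT_LICENSES = frozenset({"SmartConnect Advanced", "SyncIQ", "SnapshotIQ"})
# Optional licenses, depending on the data requirements.
OPTIONAL_VAULT_LICENSES = frozenset({"SmartDedupe", "SmartLock"})


def missing_licenses(installed):
    """Return the required licenses absent from `installed`, sorted by name."""
    return sorted(REQUIRED_VAULT_LICENSES - set(installed))


if __name__ == "__main__":
    # Hypothetical inventory gathered by the administrator.
    print(missing_licenses(["SyncIQ", "SmartLock"]))
```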

Source and vault cluster node platforms

During the design phase, consider how the node platforms on the source and vault cluster affect the overall data replication performance. When performance nodes on the source cluster replicate to archive nodes on the vault cluster, the overall data replication performance is limited by the lower performance of the vault cluster’s nodes. For example, if a source cluster is composed of F900 nodes and the vault cluster is composed of A3000 nodes, the replication performance reaches a threshold because the A3000 CPUs cannot perform at the same level as the F900 CPUs.

Depending on the workflow and replication requirements, the longer replication times may not be a concern. However, if replication performance is time sensitive, consider the node types and associated CPUs on the source and vault clusters, because they can become a bottleneck for overall data replication times.

For maximum SyncIQ efficiency, a matching node platform for both clusters is recommended, because the number of SyncIQ workers is based on the node’s CPU. If the vault cluster has a lower performance node, it would not be able to maintain the source cluster’s stream throughput.
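The bottleneck described above can be reasoned about with a simple "slowest stage wins" model. This is a rough sketch under stated assumptions, not a SyncIQ sizing formula: the function names are hypothetical, and the throughput figures in the usage example are placeholders rather than measured values for any node platform.

```python
def effective_replication_rate(source_rate, vault_rate, link_rate):
    """Sustained rate is bounded by the slowest stage (all rates in the same unit)."""
    return min(source_rate, vault_rate, link_rate)


def limiting_factor(source_rate, vault_rate, link_rate):
    """Name the stage that bounds replication throughput (first match on ties)."""
    stages = {"source": source_rate, "vault": vault_rate, "link": link_rate}
    return min(stages, key=stages.get)


if __name__ == "__main__":
    # Placeholder rates in GB/s: a fast source cluster, a slower archive-class
    # vault cluster, and the network path through the Vault L3 Switch.
    rates = dict(source_rate=8.0, vault_rate=2.0, link_rate=5.0)
    print(effective_replication_rate(**rates), limiting_factor(**rates))
```

With these placeholder values, the vault cluster bounds the replication rate, which mirrors the performance-to-archive mismatch described in this section. Matching the node platforms moves the limit to whichever of the remaining stages is slowest.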

Business continuity dataset and cluster node platforms

During the design phase, consider how the business continuity dataset that is stored on the PowerScale Vault Cluster impacts the node quantity and platform of the source and vault clusters. The first step is to identify the business continuity dataset, which typically requires agreement among several departments.

When the dataset is defined, the next step is to consider how quickly the dataset is changing. Based on the frequency of dataset changes, from a business continuity perspective, organizations must consider the stale data that occurs as the dataset ages from the last update. From this data point, extract the size and frequency of the dataset changes. If the dataset has significant changes in a short time span, this will impact how quickly the dataset can be replicated from the source to vault cluster. The dataset replication speed depends on many factors, but mainly the source and vault cluster sizes, available bandwidth, node platforms, and overall cluster resources. If the source and vault cluster are composed of a larger quantity of nodes, data replication is faster. Further, if the source and vault cluster nodes are performance nodes, more SyncIQ streams are created between the clusters, increasing replication throughput.
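To make the relationship between change rate and replication speed concrete, the following sketch estimates how long one replication cycle would take and whether it fits a given window. It is a back-of-the-envelope model only: the function names and sample figures are hypothetical, and it assumes a constant sustained throughput, which real replication jobs do not guarantee.

```python
SECONDS_PER_HOUR = 3600


def replication_hours(changed_gb, throughput_gb_per_s):
    """Hours needed to replicate `changed_gb` at a constant sustained rate."""
    if throughput_gb_per_s <= 0:
        raise ValueError("throughput must be positive")
    return changed_gb / throughput_gb_per_s / SECONDS_PER_HOUR


def fits_window(changed_gb, throughput_gb_per_s, window_hours):
    """True if the changed data can be replicated within the window."""
    return replication_hours(changed_gb, throughput_gb_per_s) <= window_hours


if __name__ == "__main__":
    # Placeholder figures: 7,200 GB changed since the last update,
    # replicated during a 4-hour window.
    for rate in (1.0, 0.25):
        hours = replication_hours(7200, rate)
        print(f"{rate} GB/s -> {hours:.1f} h, fits: {fits_window(7200, rate, 4)}")
```

If the estimate does not fit the window, the options are the ones this section names: more nodes, higher-performance node platforms, more bandwidth, or a smaller business continuity dataset.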

Access control and management

On the source cluster, administrators should be assigned the minimum required Role Based Access Control (RBAC) privileges. On the vault cluster, only the Chief Security Officer (CSO) or other senior security management staff should have access. For more information about configuring RBAC for OneFS, see Introduction to Role Based Access Control | PowerScale OneFS Authentication, Identity Management, and Authorization | Dell Technologies Info Hub.

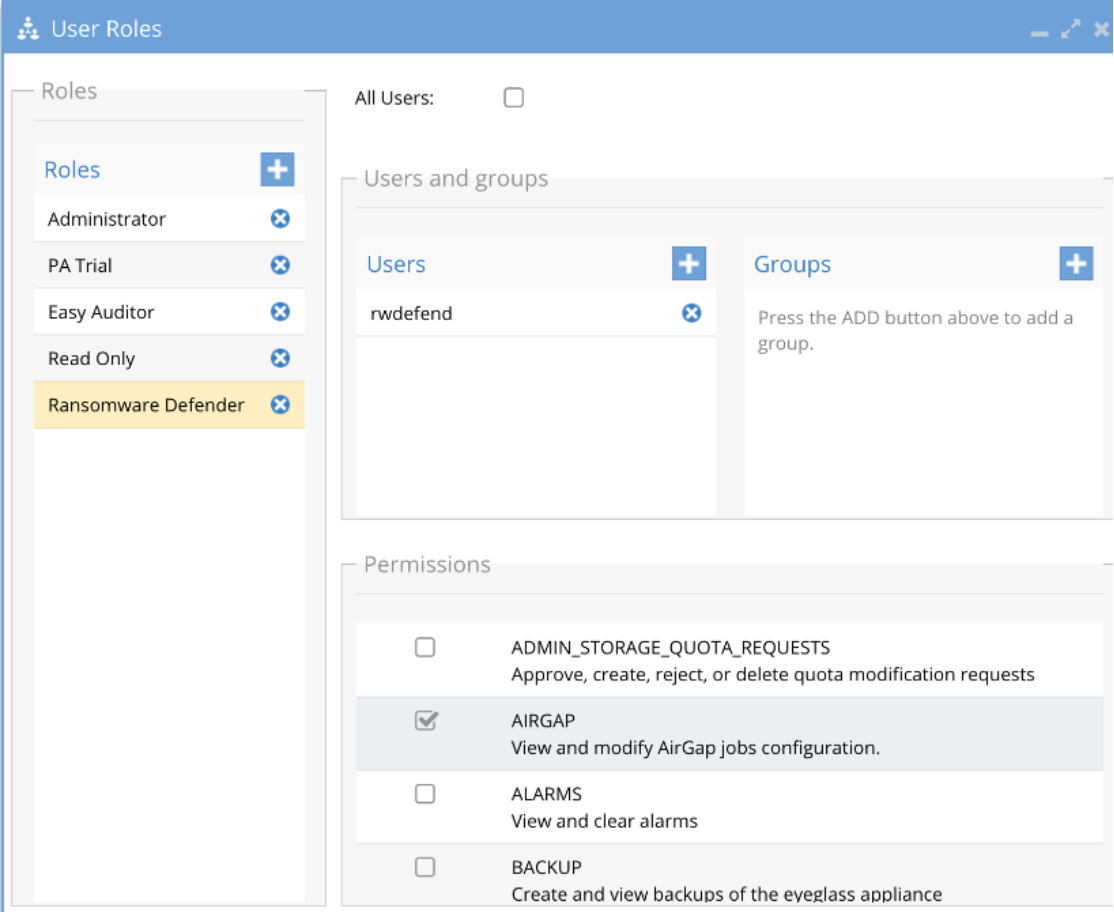

The Cyber Recovery Vault should follow access permissions similar to those of the source and vault clusters. Although AirGap Enterprise is part of the Ransomware Defender module, it allows for a separate user role to manage the AirGap function. As with vault cluster access, it is best practice to assign the role of managing AirGap Enterprise to the CSO or other senior security management staff. The administrators managing Ransomware Defender must be separate from the staff managing the AirGap function. Ransomware Defender provides a dedicated role option for AirGap, as shown in the following figure.

Figure 35. AirGap user role in Ransomware Defender
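The separation between Ransomware Defender administrators and AirGap managers can be audited with a short script. The following is a minimal sketch: the role labels, the `separation_violations` function, and the user names are hypothetical and do not correspond to actual Ransomware Defender role identifiers. The role assignments are supplied as a plain mapping rather than read from the product.

```python
# Hypothetical role labels for this sketch only.
DEFENDER_ADMIN = "ransomware_defender_admin"
AIRGAP_ADMIN = "airgap_admin"


def separation_violations(assignments, role_a=DEFENDER_ADMIN, role_b=AIRGAP_ADMIN):
    """Return users who hold both roles, sorted by name."""
    return sorted(
        user
        for user, roles in assignments.items()
        if role_a in roles and role_b in roles
    )


if __name__ == "__main__":
    assignments = {
        "cso": {AIRGAP_ADMIN},
        "storage-admin": {DEFENDER_ADMIN},
        "contractor": {DEFENDER_ADMIN, AIRGAP_ADMIN},  # violates separation
    }
    print(separation_violations(assignments))
```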

Jump server

Optionally, a jump server can be configured to reside inside the Cyber Recovery Vault, providing an option for access into the Cyber Recovery Vault. Although the jump server eases the administration of the Cyber Recovery Vault, consider the security impacts because it is a device with access outside the vault. Ensure that the jump server is configured similarly to the vault cluster, where only the Chief Security Officer (CSO) or other senior security management staff have access. The applications on the jump server must also be limited and configured for minimal access.