
Load balancing and failover – SmartConnect


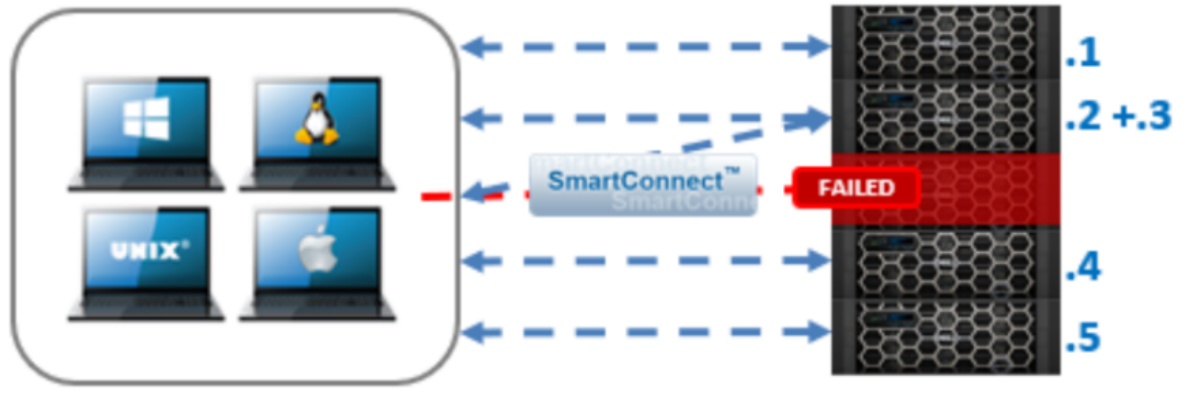

SmartConnect supports dynamic NFS failover and failback for Linux and UNIX clients, and SMB3 continuous availability for Windows clients. When a node fails or is taken down for preventative maintenance, all in-flight reads and writes are handed off to another node in the cluster, which completes them without interrupting users or applications.

During failover, clients are redistributed evenly across the remaining nodes in the cluster, which keeps the performance impact to a minimum. If a node goes down for any reason, including a failure, the virtual IP addresses on that node are seamlessly migrated to other nodes in the cluster. When the offline node comes back online, SmartConnect automatically rebalances the NFS and SMB3 clients across the entire cluster to maximize storage and performance utilization. For periodic system maintenance and software updates, this functionality allows per-node rolling upgrades while the cluster remains fully available for the duration of the maintenance window.
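The failover and failback behavior described above can be sketched as a toy model. This is a minimal illustration under stated assumptions, not the OneFS implementation: it assumes a pool is simply a mapping from node name to the dynamic IPs that node holds, that a failed node's IPs go to the least-loaded survivor, and that failback is a naive round-robin respread. The names `fail_node`, `rebalance`, and `Pool` are hypothetical.

```python
import ipaddress

# Hypothetical model: pool = {node name: [dynamic IPs the node currently holds]}.
Pool = dict[str, list[str]]


def fail_node(pool: Pool, failed: str) -> Pool:
    """Move every IP held by `failed` onto the surviving node holding the fewest IPs."""
    survivors = {node: list(ips) for node, ips in pool.items() if node != failed}
    if not survivors:
        raise ValueError("no surviving nodes can take over the IPs")
    for ip in pool[failed]:
        # Least-loaded survivor first; ties are broken by node name for determinism.
        target = min(survivors, key=lambda node: (len(survivors[node]), node))
        survivors[target].append(ip)
    return survivors


def rebalance(pool: Pool, online: list[str]) -> Pool:
    """Spread all IPs round robin across the online nodes (a naive failback)."""
    all_ips = sorted((ip for ips in pool.values() for ip in ips), key=ipaddress.ip_address)
    balanced: Pool = {node: [] for node in online}
    for i, ip in enumerate(all_ips):
        balanced[online[i % len(online)]].append(ip)
    return balanced


if __name__ == "__main__":
    # Four nodes, two dynamic IPs each.
    pool = {f"node-{i}": [f"10.0.0.{2 * i - 1}", f"10.0.0.{2 * i}"] for i in range(1, 5)}
    degraded = fail_node(pool, "node-3")
    print({node: len(ips) for node, ips in degraded.items()})
    restored = rebalance(degraded, ["node-1", "node-2", "node-3", "node-4"])
    print({node: len(ips) for node, ips in restored.items()})
```

In this run, node-3's two IPs land on node-1 and node-2, leaving a 3/3/2 split; after node-3 returns, every node holds two IPs again. The naive `rebalance` may move more IPs than strictly necessary; a production rebalancer would likely try to minimize client moves.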

Figure 5. Seamless client failover with SmartConnect

SmartConnect also acts as a DNS delegation server that returns IP addresses for SmartConnect zones, typically to load-balance client connections to the cluster.
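The delegation flow can be pictured with a toy resolver. This sketch assumes the site DNS server delegates the SmartConnect zone name to the SmartConnect service, which then answers each query with a single node IP chosen round robin. `SiteDns`, `SmartConnectService`, and the example hostnames and addresses are hypothetical, and real DNS details (record types, TTLs, caching) are deliberately left out.

```python
import itertools


class SmartConnectService:
    """Toy stand-in for the SmartConnect DNS service (hypothetical class).

    It answers only for the zone names it owns, handing out one node IP per
    query, round robin within each zone.
    """

    def __init__(self, zones: dict[str, list[str]]):
        self._cycles = {name: itertools.cycle(ips) for name, ips in zones.items()}

    def answer(self, name: str) -> str:
        if name not in self._cycles:
            raise KeyError(f"NXDOMAIN: {name} is not a SmartConnect zone")
        return next(self._cycles[name])


class SiteDns:
    """Toy site DNS server: static A records plus delegated subdomains."""

    def __init__(self, a_records: dict[str, str], delegated: dict[str, SmartConnectService]):
        self.a_records = a_records
        self.delegated = delegated  # delegated zone name -> service that answers for it

    def resolve(self, name: str) -> str:
        # Any name inside a delegated zone is answered by the delegate, not locally.
        for zone, service in self.delegated.items():
            if name == zone or name.endswith("." + zone):
                return service.answer(name)
        return self.a_records[name]


if __name__ == "__main__":
    sc = SmartConnectService({"eda.cluster.example.com": ["10.0.0.1", "10.0.0.2", "10.0.0.3"]})
    dns = SiteDns({"license.example.com": "10.1.0.5"}, {"eda.cluster.example.com": sc})
    # Successive lookups of the zone name cycle through the node IPs.
    print([dns.resolve("eda.cluster.example.com") for _ in range(4)])
```

Because the site DNS server only forwards the delegated zone, ordinary names such as `license.example.com` keep resolving from local records, while every client mount of the zone name receives a node IP chosen by SmartConnect.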

SmartConnect load-balances incoming network connections across SmartConnect zones composed of nodes, network interfaces, and pools. The available load-balancing policies are Round Robin, Connection Count, CPU Utilization, and Network Throughput. Round Robin and Connection Count are the most commonly used, but they might not suit every workload. It is important to understand whether front-end connections are evenly distributed, measured either by connection count or by bandwidth. Front-end connection distribution can be monitored with InsightIQ or the WebUI. Because each workload is unique, understand how each load-balancing policy works, and test the chosen policy in a lab environment before rolling it out to production.
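To make the four policies and the "evenly distributed" check concrete, here is an illustrative sketch. It assumes each policy simply picks the node with the lowest value of its metric (or the next node in turn, for Round Robin), and it measures imbalance with the coefficient of variation of per-node values. `NodeStats`, `Balancer`, `imbalance`, and the policy strings are hypothetical; this is not how SmartConnect computes its decisions internally.

```python
import itertools
import statistics
from dataclasses import dataclass


@dataclass
class NodeStats:
    """Hypothetical per-node metrics a balancer might sample."""
    name: str
    connections: int
    cpu_pct: float
    throughput_mbps: float


class Balancer:
    """Illustrative node selection for the four policy names in the text."""

    def __init__(self, nodes: list[NodeStats]):
        self.nodes = nodes
        self._rr = itertools.cycle(range(len(nodes)))

    def pick(self, policy: str) -> NodeStats:
        if policy == "round_robin":
            node = self.nodes[next(self._rr)]
        elif policy == "connection_count":
            node = min(self.nodes, key=lambda n: n.connections)
        elif policy == "cpu_utilization":
            node = min(self.nodes, key=lambda n: n.cpu_pct)
        elif policy == "network_throughput":
            node = min(self.nodes, key=lambda n: n.throughput_mbps)
        else:
            raise ValueError(f"unknown policy: {policy}")
        # Only the connection count is updated here; CPU and throughput stay at
        # their last sampled values until the (hypothetical) next refresh.
        node.connections += 1
        return node


def imbalance(values: list[float]) -> float:
    """Coefficient of variation across nodes: 0.0 means perfectly even."""
    mean = statistics.fmean(values)
    return statistics.pstdev(values) / mean if mean else 0.0


if __name__ == "__main__":
    nodes = [NodeStats("node-1", 10, 50.0, 900.0),
             NodeStats("node-2", 2, 80.0, 100.0),
             NodeStats("node-3", 5, 20.0, 400.0)]
    lb = Balancer(nodes)
    print([lb.pick("connection_count").name for _ in range(6)])
    print(round(imbalance([n.connections for n in nodes]), 3))
```

Running `imbalance` on connection counts and again on per-node bandwidth is one way to express the two views of "evenly distributed" mentioned above; a policy can look balanced by one measure and skewed by the other.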