Host configuration

The CSI Driver for PowerMax relies on the host's capabilities and configuration to access storage over the chosen protocol. The driver therefore has prerequisites and limitations for accessing the storage backend.

Multipathing

Multipathing is a key part of the host configuration. The CSI Driver for PowerMax relies on device mapper multipathing or Dell PowerPath.

MPIO configuration

The minimal multipath.conf configuration that the driver requires is as follows:

    defaults {
        user_friendly_names yes
        find_multipaths yes
    }

    blacklist { }
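As a quick sanity check, the two settings above can be verified against the text of an existing multipath.conf. The following is a minimal sketch, not part of the driver: the function names are hypothetical, and the parser handles only the simple `key value` lines inside the `defaults` section.

```python
import re

# The two settings the paper lists as the minimum for the driver.
REQUIRED_DEFAULTS = {"user_friendly_names": "yes", "find_multipaths": "yes"}


def parse_defaults(conf_text):
    """Return the key/value pairs found inside the defaults { ... } section."""
    match = re.search(r"defaults\s*\{(.*?)\}", conf_text, re.DOTALL)
    if match is None:
        return {}
    settings = {}
    for line in match.group(1).splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        parts = line.split(None, 1)
        if len(parts) == 2:
            settings[parts[0]] = parts[1].strip().strip('"')
    return settings


def missing_defaults(conf_text):
    """List the required settings that are absent or set to another value."""
    found = parse_defaults(conf_text)
    return sorted(k for k, v in REQUIRED_DEFAULTS.items() if found.get(k) != v)


SAMPLE = """defaults {
    user_friendly_names yes
    find_multipaths yes
}
blacklist { }
"""

if __name__ == "__main__":
    problems = missing_defaults(SAMPLE)
    print("OK" if not problems else "Missing or wrong: " + ", ".join(problems))
```

On a real host, the text would typically be read from /etc/multipath.conf instead of the embedded sample.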

Certain distributions, including OpenShift, use an immutable operating system. In those cases, you must ensure that:

- The package device-mapper-multipath is installed.

- The service multipathd is started.

In OpenShift, these changes can be applied with MachineConfig.
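One way to prepare such a MachineConfig is to generate it from the multipath.conf content. The sketch below is illustrative only: the object name `99-worker-multipath`, the `worker` role, and Ignition spec version 3.2.0 are assumptions to adapt to the cluster, and it assumes the device-mapper-multipath package is already present in the node image.

```python
import base64
import json

MULTIPATH_CONF = """defaults {
    user_friendly_names yes
    find_multipaths yes
}
blacklist { }
"""


def build_machine_config(conf_text, role="worker", name="99-worker-multipath"):
    """Build a MachineConfig that writes multipath.conf and enables multipathd.

    The name, role, and Ignition version are assumptions for illustration.
    """
    encoded = base64.b64encode(conf_text.encode("utf-8")).decode("ascii")
    return {
        "apiVersion": "machineconfiguration.openshift.io/v1",
        "kind": "MachineConfig",
        "metadata": {
            "name": name,
            "labels": {"machineconfiguration.openshift.io/role": role},
        },
        "spec": {
            "config": {
                "ignition": {"version": "3.2.0"},
                "storage": {
                    "files": [
                        {
                            "path": "/etc/multipath.conf",
                            "mode": 0o644,  # serialized as decimal 420
                            "overwrite": True,
                            "contents": {
                                "source": "data:text/plain;charset=utf-8;base64,"
                                + encoded
                            },
                        }
                    ]
                },
                "systemd": {
                    "units": [{"name": "multipathd.service", "enabled": True}]
                },
            }
        },
    }


if __name__ == "__main__":
    # JSON is valid input for `oc apply -f`, so no YAML library is needed.
    print(json.dumps(build_machine_config(MULTIPATH_CONF), indent=2))
```

The printed JSON can be saved to a file and applied with `oc apply -f`. Applying a MachineConfig usually triggers a rolling reboot of the nodes in the targeted pool.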

PowerPath configuration

PowerPath is particularly useful with SRDF Metro because, unlike multipathd, it prioritizes live paths when a path fails.

PowerPath is distributed as a Linux package. For information about module configuration, see the PowerPath for Linux Installation and Administration Guide.

iSCSI and CHAP

If you use iSCSI with Challenge Handshake Authentication Protocol (CHAP), the credentials are configured in the driver's own configuration rather than in iscsid.conf.

For more details on PowerMax iSCSI configuration, see the iSCSI Implementation for Dell Storage Arrays Running PowerMaxOS white paper.
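To illustrate what keeping the CHAP credential in the driver's configuration rather than in iscsid.conf can look like, the sketch below builds a Kubernetes Secret manifest. It is a sketch under stated assumptions: the secret name `powermax-creds`, the namespace `powermax`, and the key names `username`, `password`, and `chapsecret` are assumptions that vary by driver release and must be checked against the driver documentation for the installed version.

```python
import base64
import json


def _b64(value):
    """Base64-encode a string, as required for the `data` field of a Secret."""
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def build_chap_secret(username, password, chap_secret,
                      name="powermax-creds", namespace="powermax",
                      chap_key="chapsecret"):
    """Build a Secret manifest carrying array credentials and the CHAP secret.

    The name, namespace, and key names are assumptions, not confirmed values.
    """
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "data": {
            "username": _b64(username),
            "password": _b64(password),
            chap_key: _b64(chap_secret),
        },
    }


if __name__ == "__main__":
    # Placeholder values only; never commit real credentials.
    manifest = build_chap_secret("unisphere-user", "changeme", "example-chap")
    print(json.dumps(manifest, indent=2))
```

Because the secret is held as a Kubernetes object, it can be rotated without editing files on each node.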

Fibre Channel access for virtual machines running in VMware ESXi

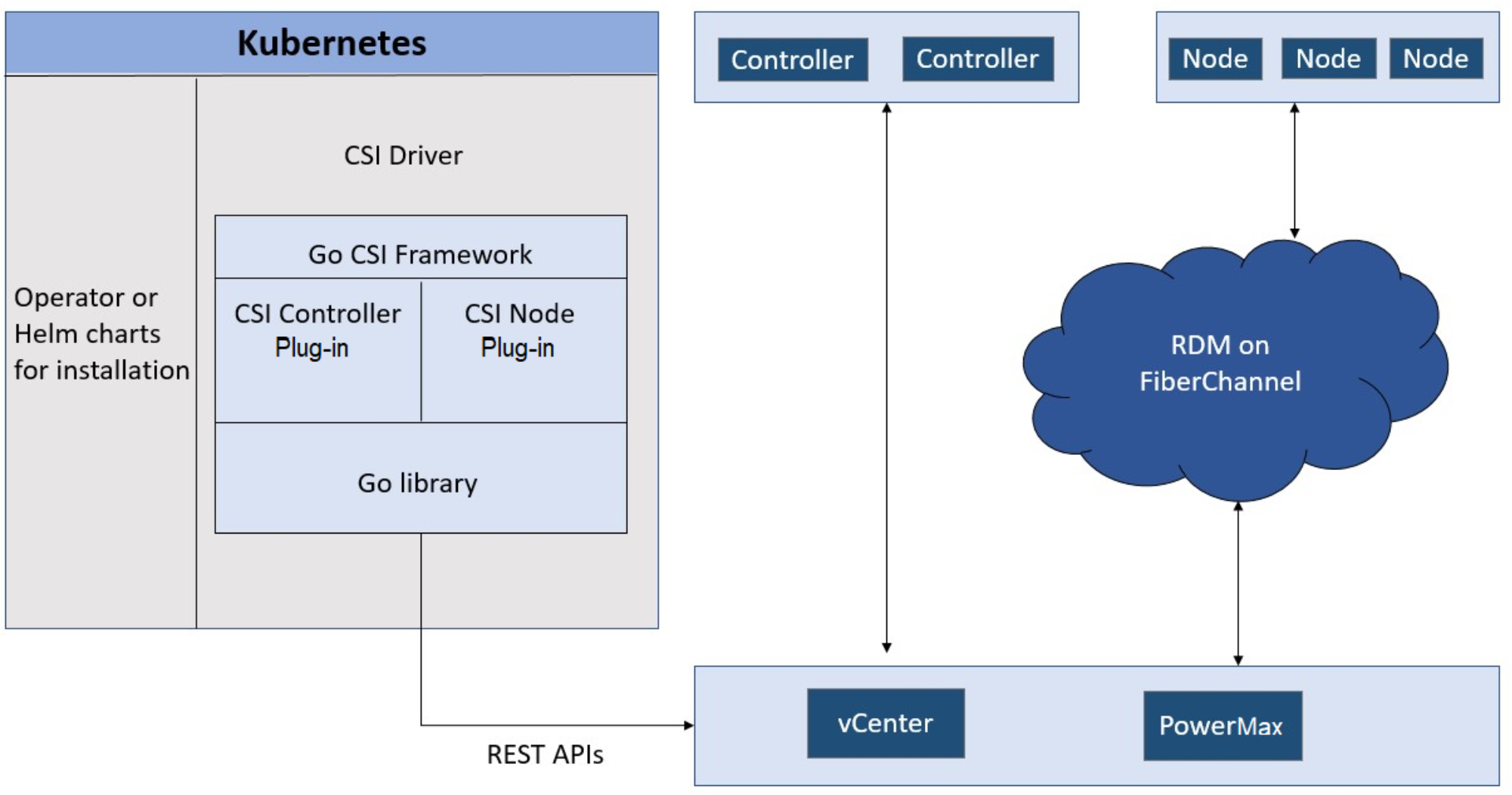

Beginning with version 2.5.0 of the CSI Driver for PowerMax, you can create LUNs that are accessible in FC by VMware ESXi virtual machines using raw device mapping functionality. In CSI and CSM documentation, this feature is referred to as “Auto RDM over FC.” The concept is that the CSI Driver for PowerMax connects to both Unisphere and the vSphere API to create the respective objects.

Figure 9. Auto RDM over FC

Once deployed with “Auto RDM,” the driver can only function in that mode. You cannot combine iSCSI and FC access within the same driver installation.

RDM usage limitations apply. For more information, see RDM Considerations and Limitations in the VMware vSphere documentation.

Note: The driver must be deployed with a single initiator group (not a host group or a cascaded initiator group). In addition, vSphere vMotion is not supported at this stage.

Unsupported protocols

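At the time of publication of this paper, FC is supported only on bare-metal hosts, with the exception of the Auto RDM over FC mode for VMware ESXi virtual machines described above. Neither the driver nor FC access for virtual machines running Kubernetes supports end-to-end NVMe.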