Home > Storage > PowerScale (Isilon) > Product Documentation > Data Efficiency > Next-Generation Storage Efficiency with Dell PowerScale SmartDedupe > SmartDedupe job reports

SmartDedupe job reports

-

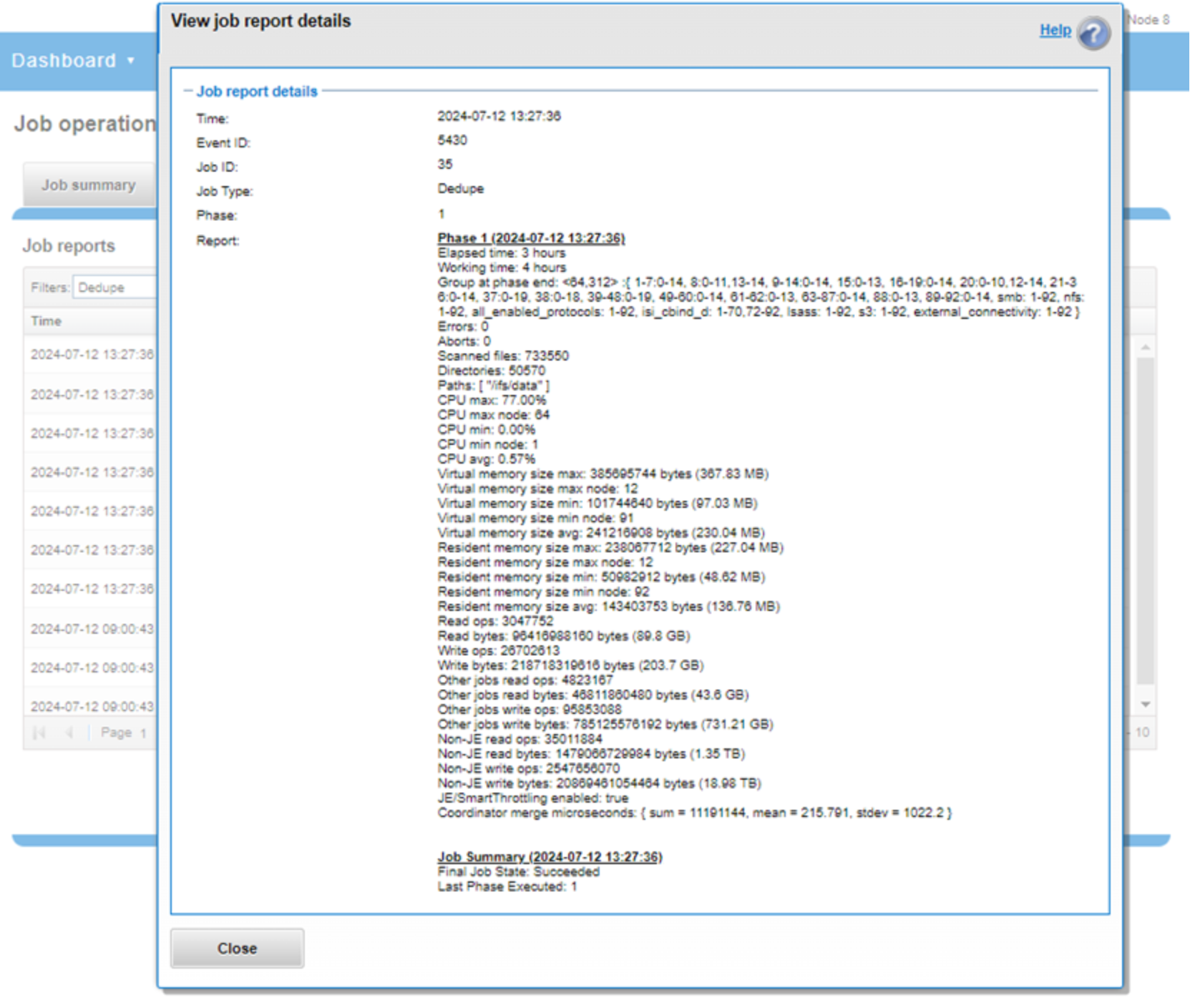

Once the SmartDedupe job has run to completion, or has been terminated, a full deduplication job report is available. This report can be accessed from the WebUI by going to Cluster Management > Job Operations > Job Reports and selecting View Details, under the Action column, for the job.

Figure 17. Example of WebUI deduplication job report

The job report contains the following relevant deduplication metrics.

Table 1. Deduplication job report statistics

Report field

Description of metric

Start time

When the deduplication job started.

End time

When the deduplication job finished.

Scanned blocks

Total number of blocks scanned under configured path or paths.

Sampled blocks

Number of blocks that OneFS created index entries for.

Created dedupe requests

Total number of deduplication requests created. A deduplication request gets created for each matching pair of data blocks. For example, three data blocks all match, two requests are created: One request to pair file1 and file2 together, the other request to pair file2 and file3 together.

Successful dedupe requests

Number of deduplication requests that completed successfully.

Failed dedupe requests

Number of deduplication requests that failed. If a deduplication request fails, it does not mean that the SmartDedupe job also failed. A deduplication request can fail for any number of reasons. For example, the file might have been modified since it was sampled.

Skipped files

Number of files that were not scanned by the deduplication job. The primary reason is that the file has already been scanned and has not been modified since. Another reason for a file to be skipped is if it is smaller than 32 KB. Such files are considered too small and do not provide enough space-saving benefit to offset the fragmentation they will cause.

Index entries

Number of entries that currently exist in the index.

Index lookup attempts

Cumulative total number of lookups that have been done by prior and current deduplication jobs. A lookup is when the deduplication job attempts to match a block that has been indexed with a block that has not been indexed.

Index lookup hits

Total number of lookup hits that have been done by earlier deduplication jobs plus the number of lookup hits done by this deduplication job. A hit is a match of a sampled block with a block in index.

Dedupe job reports are also available from the CLI through the isi job reports view <job_id> command.

Note: From a processing and reporting stance, the Job Engine considers the deduplication job to consist of a single process or phase. The Job Engine events list reports that Dedupe Phase1 has ended and succeeded. This indicates that an entire SmartDedupe job, including all four internal deduplication phases (sampling, duplicate detection, block sharing, and index update), has successfully completed.

For example:

# isi job events list --job-type dedupe

Time Message

------------------------------------------------------

2020-02-01T13:39:32 Dedupe[1955] Running

2020-02-01T13:39:32 Dedupe[1955] Phase 1: begin dedupe

2020-02-01T14:20:32 Dedupe[1955] Phase 1: end dedupe

2020-02-01T14:20:32 Dedupe[1955] Phase 1: end dedupe

2020-02-01T14:20:32 Dedupe[1955] Succeeded

Figure 18. Example of CLI dedupe job events list

For deduplication reporting across multiple OneFS clusters, SmartDedupe is also integrated with InsightIQ cluster reporting and analysis product. A report detailing the space savings delivered by deduplication is available through the InsightIQ File Systems Analytics module.