
Visual Studio Code + Continue Plugin


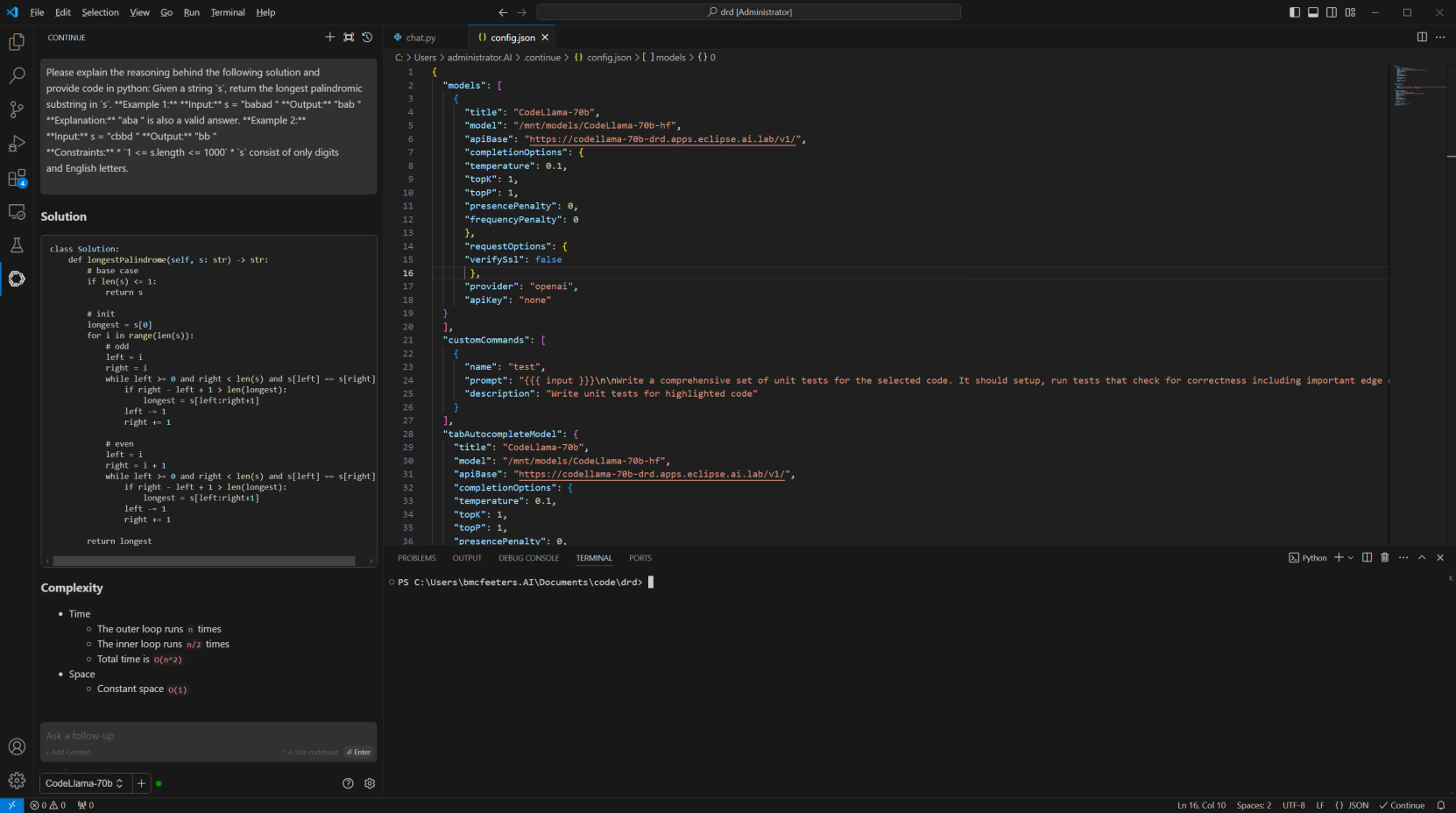

To test the efficacy of the newly trained model, Visual Studio Code is used to simulate real-world code generation and explanation. Because VS Code has no native interface to LLM inference services, the "Continue" plugin is installed and configured to submit queries to an inference service that serves first our original model and then the newly trained model.

The Continue plugin is easily installed from the VS Code marketplace. Configuration is simple: only some basic metadata about the model being served and the inference endpoint are required.

If resources are available to run multiple simultaneous inference servers, then additional models can be defined in the Continue configuration for easy switching and testing.
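As a rough illustration of what such a configuration might contain, the Python sketch below builds two model entries and prints them as JSON. It is not the configuration used for the tests in this paper. The field names (`title`, `provider`, `model`, `apiBase`) follow the JSON configuration format that Continue has used, but they can differ between plugin versions and should be checked against the installed release. The endpoint URLs and model names are hypothetical placeholders, and the sketch assumes the inference service exposes an OpenAI-compatible API.

```python
import json


def continue_model_entry(title: str, model: str, api_base: str) -> dict:
    """Build one model entry for a Continue-style configuration.

    Assumption: the inference server speaks an OpenAI-compatible API,
    so the provider is set to "openai" and api_base is its base URL.
    """
    return {
        "title": title,        # label shown in the model picker
        "provider": "openai",  # assumed OpenAI-compatible endpoint
        "model": model,        # model name as registered on the server
        "apiBase": api_base,   # hypothetical inference endpoint URL
    }


# Two entries allow switching between the base and fine-tuned models.
# All names and URLs below are placeholders, not values from the paper.
config = {
    "models": [
        continue_model_entry(
            "Code Llama 70b (base)",
            "codellama-70b",
            "http://base-model.example.com/v1",
        ),
        continue_model_entry(
            "Code Llama 70b (LoRA fine-tuned)",
            "codellama-70b-lora",
            "http://tuned-model.example.com/v1",
        ),
    ]
}

print(json.dumps(config, indent=2))
```

With both entries defined, each inference server appears as a separate model in the plugin, which makes a side-by-side comparison of the two models straightforward.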

Figure 6. Base model inferencing with VS Code

As seen in Figure 6, the original Code Llama 70b model responds to our chat prompt asking about finding the largest palindrome inside a string using the Python programming language.

A solution is generated in Python as requested, and the model also returns information about the time and space complexity of the resulting code.
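For readers unfamiliar with the problem posed in the prompt, one common approach is sketched below. This is an illustrative reference written for this discussion, not the output of either model. It interprets "largest palindrome" as the longest palindromic substring, and the function name `longest_palindrome` is our own choice. The technique is expand-around-center: every position is treated as the middle of a candidate palindrome and widened while the characters on both sides match.

```python
def longest_palindrome(text: str) -> str:
    """Return the longest palindromic substring of text.

    On ties, the substring that starts earliest is returned.
    """
    best_start, best_len = 0, 0
    for center in range(len(text)):
        # Try an odd-length palindrome centered on one character,
        # then an even-length one centered between two characters.
        for left, right in ((center, center), (center, center + 1)):
            while left >= 0 and right < len(text) and text[left] == text[right]:
                left -= 1
                right += 1
            # The loop overshoots by one step on each side.
            length = right - left - 1
            if length > best_len:
                best_start, best_len = left + 1, length
    return text[best_start:best_start + best_len]


print(longest_palindrome("forgeeksskeegfor"))
```

This sketch runs in O(n^2) time and uses O(1) additional space beyond the returned substring, which is the kind of complexity information the model is described as reporting alongside its answer.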

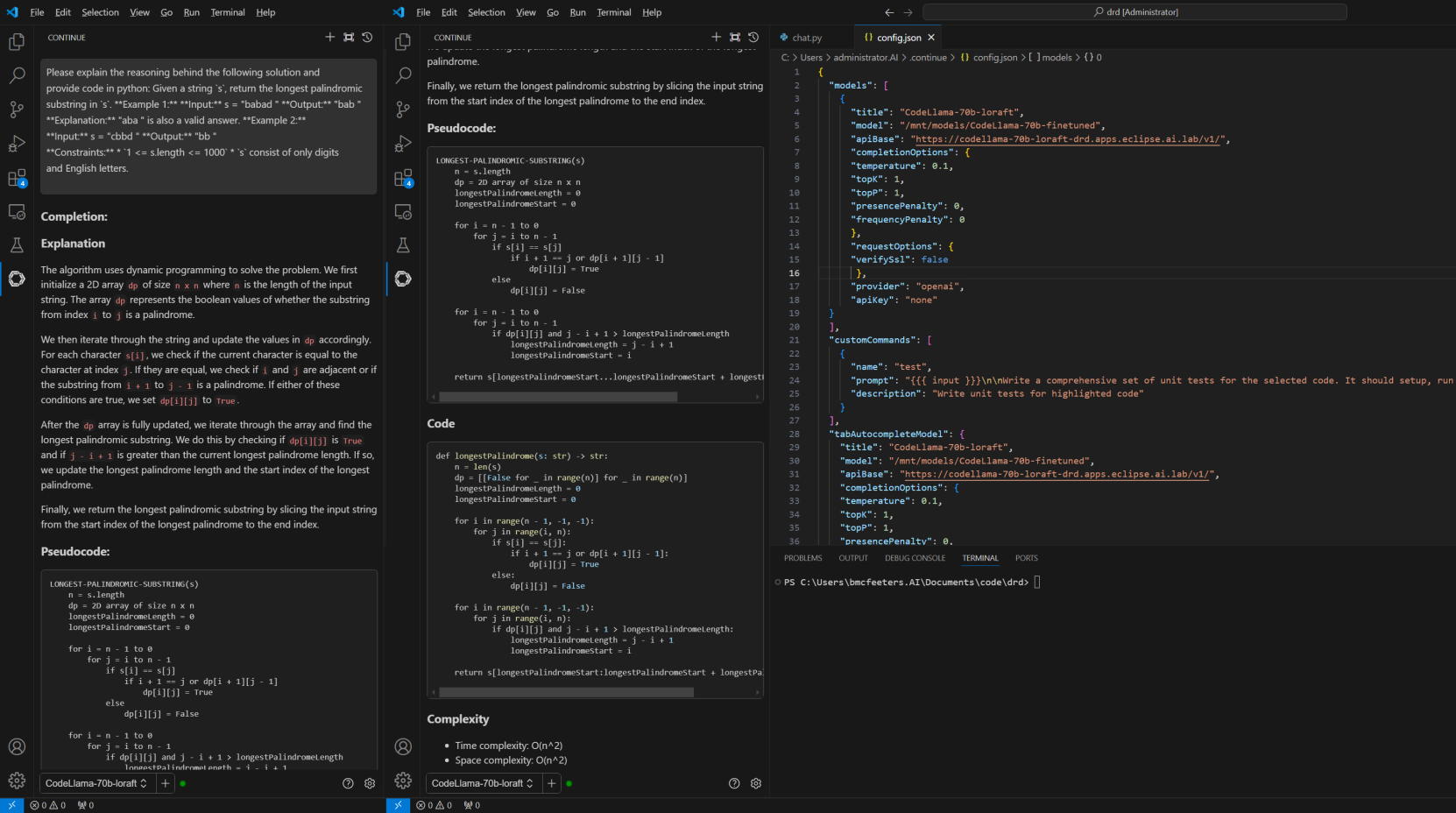

When the same question is put to the model fine-tuned with LoRA, we observe a different and qualitatively[3] better result in Figure 7. The model returns the explanation section we had requested, along with pseudocode, actual Python code, and complexity characteristics as before.

Figure 7. Inference results in VS Code with fine-tuned model