
MLPerf Inference v1.0 performance results


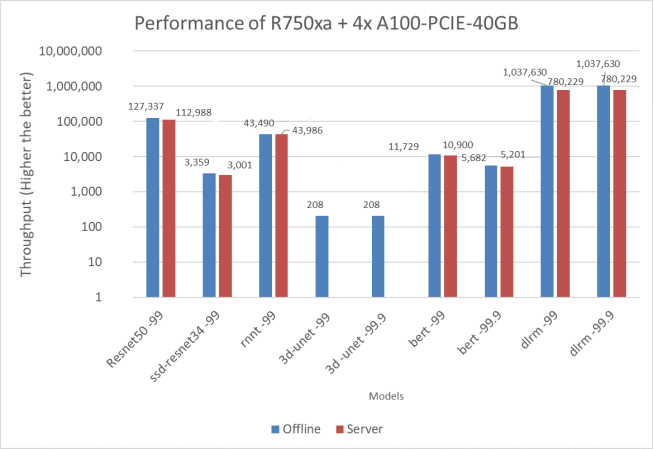

The MLPerf Inference benchmark measures how fast a system can perform deep learning inference using a trained model in various deployment scenarios. The following figure shows results for the Offline and Server scenarios of the MLPerf Inference benchmark, plotted on an exponentially scaled y-axis:

Figure 5. ResNet50, SSD-ResNet34, RNN-T, BERT, DLRM Offline, and 3D-UNet performance of the PowerEdge R750xa server

Key takeaways include:

- The PowerEdge R750xa server can run all models and meet the target accuracy requirements that MLPerf Inference v1.0 defines.

- The PowerEdge R750xa server delivers promising performance relative to other submitted results.

- All results in the official table are verified by MLCommons™. As of the time of submission, the PowerEdge R750xa server results are on line 44.

Note: Due to time constraints, 3D-UNet and DLRM results were not submitted. Figure 5 includes unverified results of these benchmarks.