How iSCSI compares with other storage transport protocols


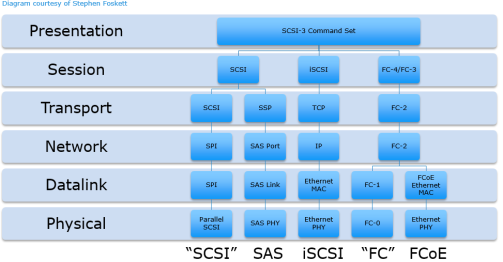

The diagram below shows the similarities and differences between iSCSI and other storage transport protocols. All use the standard network layer model but only iSCSI uses the standard IP protocol.

Figure 4. iSCSI and other SCSI transports
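Because iSCSI travels over the standard TCP/IP stack, each SCSI I/O is wrapped in an iSCSI PDU, a TCP segment, an IP packet, and an Ethernet frame. The Python sketch below estimates how many Ethernet frames a single 8 KiB I/O occupies and what share of the wire bytes is actual data, which illustrates why jumbo frames matter for iSCSI. It is a simplified model, not a measurement: it assumes IPv4 and TCP headers without options, no header or data digests, the whole I/O carried in one iSCSI PDU, and it ignores TCP acknowledgments. The function name `frames_and_efficiency` is hypothetical.

```python
import math

# Assumed header sizes in bytes (IPv4 and TCP without options).
IP_HEADER = 20
TCP_HEADER = 20
ETH_HEADER_AND_FCS = 18    # 14-byte Ethernet header + 4-byte frame check sequence
ETH_PREAMBLE_AND_GAP = 20  # 8-byte preamble/SFD + 12-byte minimum inter-frame gap
ISCSI_BHS = 48             # iSCSI Basic Header Segment, once per PDU


def frames_and_efficiency(io_bytes: int, mtu: int) -> tuple[int, float]:
    """Estimate frames per I/O and the share of wire bytes that is SCSI data.

    Simplified model: the I/O fits in one iSCSI PDU with no digests or
    additional header segments, and TCP acknowledgments are ignored.
    """
    mss = mtu - IP_HEADER - TCP_HEADER       # TCP payload that fits in one frame
    tcp_payload = ISCSI_BHS + io_bytes       # the iSCSI PDU carried by TCP
    frames = math.ceil(tcp_payload / mss)
    per_frame_overhead = IP_HEADER + TCP_HEADER + ETH_HEADER_AND_FCS + ETH_PREAMBLE_AND_GAP
    wire_bytes = tcp_payload + frames * per_frame_overhead
    return frames, io_bytes / wire_bytes


if __name__ == "__main__":
    for mtu in (1500, 9000):
        frames, efficiency = frames_and_efficiency(8192, mtu)
        print(f"MTU {mtu}: {frames} frame(s), {efficiency:.1%} of wire bytes are data")
```

Under these assumptions, an 8 KiB I/O needs six frames at the standard 1500-byte MTU but only one frame at a 9000-byte MTU, so the host and array handle far fewer packets per I/O, and data efficiency rises from about 94% to about 98.5%.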

Fibre Channel and Serial Attached SCSI (SAS) are the primary storage transport protocols deployed in data centers today. With the proliferation of 10 GbE networks and the move to lower-cost converged infrastructures over the last few years, iSCSI deployment has grown significantly. FCoE deployments have also grown, but FCoE still lags far behind FC, SAS, and iSCSI. This is primarily because FCoE requires a lossless Ethernet network, which means implementing additional technologies such as end-to-end Data Center Bridging (DCB). These requirements add cost and complexity to the Ethernet solution, greatly reducing any cost advantage that Ethernet has over traditional Fibre Channel.

The table below summarizes the differences between the Fibre Channel and iSCSI storage protocols and the advantages of each.

Table 2. Fibre Channel and iSCSI comparison

| Criterion | iSCSI | FC |
| --- | --- | --- |
| Description | Interconnect technology that uses Ethernet and TCP/IP to transport SCSI commands between initiators and targets | Transport protocol used to transfer SCSI command sets between initiators and targets |
| Architecture | Uses the standard OSI-based network model: SCSI commands are sent in TCP/IP packets over Ethernet | Uses its own five-layer model (FC-0 through FC-4) that starts at the physical layer and progresses to the upper-level protocols |
| Scalability Score | Good. The specification sets no limit on the number of devices, but vendor limitations apply. Larger implementations can see performance issues due to an increasing number of hops, spanning tree, and other issues. | Excellent. Up to 16 million SAN devices using a switched fabric. Achieves a linear performance profile as the SAN scales outward when proper edge-core-edge fabric topologies are used. |
| Performance Score | Good. Not particularly well suited for large amounts of small-block I/O (≤ 8 KB) due to TCP overhead. Requires jumbo frames end to end for best performance. Well suited for mixed workloads with low to mid IOPS requirements. Higher performance requires TCP offload NICs to save CPU cycles on host and storage. | Excellent. Well suited for all I/O types and sizes. Scales well as performance demands increase. Well suited for high-IOPS, high-throughput environments. No offloading required. |
| Virtualization Capability Score | Excellent. The storage array can present iSCSI storage directly to a virtual machine's initiator IQN. | Fair to Good. FC SAN storage can be presented directly to a virtual HBA using N_Port ID Virtualization (NPIV). Note: The Gen 7 specification will include integrated VM awareness and should close the gap with iSCSI in the future. |
| Investment Score | Good to Excellent. Can use an existing Ethernet network; however, adding technologies to make the network lossless and to boost performance adds complexity and cost. | Fair to Good. Initial FC infrastructure costs per port are high (although prices have declined in recent years). Additional operating costs are incurred for the specialized network infrastructure, and administrators need specialized training. |
| IT Expertise Required Score | Good. Network management teams understand Ethernet but could require some storage and IP cross-training. | Fair. Requires specialized FC networking training. |
| Management Ease of Use Score | Fair. Can use existing network infrastructure, but host provisioning and device discovery require roughly 3x the steps of Fibre Channel device provisioning. CHAP management in larger implementations can be daunting. | Good. Most HBAs autodetect new devices with a rescan, and no host reboot is required. Switch zoning must be set up properly. |
| Security Score | Fair. Requires CHAP for authentication, VLANs or isolated physical networks for separation, and IPsec for on-wire encryption. | Excellent. The specification has built-in hardware-level authentication and encryption, and switch port or WWPN zoning provides separation on the fabric. |
| Strengths Summary | Cost, good performance, ease of virtualization, and the pervasiveness of Ethernet networks in data center and cloud infrastructures. Flexible feature vs. cost trade-offs. | High performance, scalability, and enterprise-class reliability and availability. Mature ecosystem. Future-ready protocol: 32 Gb FC is currently available for FC-NVMe deployments. |
| Weakness Summary | TCP overhead with workloads that have large amounts of small-block I/O. CHAP management and cumbersome host provisioning. Questions about its future: will NVMe and NVMe-oF send iSCSI the way of the dodo? | Higher initial investment. Higher operational costs because FC requires separate network infrastructure. Not well suited for virtualized or cloud-based applications. |
| Optimal Environments | SMBs and enterprise, departmental, and remote offices. Very well suited for converged infrastructures and application consolidation, for example: business applications running on small to mid-sized Oracle environments; all Microsoft business applications such as Exchange, SharePoint, and SQL Server. | Enterprises with complex SANs that need high IOPS and throughput, for example: a non-stop corporate backbone including mainframe; high-intensity OLTP/OLAP transaction processing for Oracle, IBM DB2, and large SQL Server databases; quick-response networks for imaging and data warehousing; all Microsoft business applications such as Exchange, SharePoint, and SQL Server. |
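The table lists CHAP as iSCSI's authentication mechanism and as a management burden in larger deployments. During iSCSI login the target sends an identifier (CHAP_I) and a challenge (CHAP_C), and the initiator answers with its name (CHAP_N) and a response (CHAP_R) computed as in RFC 1994. The Python sketch below shows only that MD5 computation and the target's check; the helper names and the secret value are hypothetical, and this is an illustration of the exchange, not a way to configure CHAP on an array.

```python
import hashlib
import hmac
import secrets


def chap_md5_response(identifier: int, secret: bytes, challenge: bytes) -> bytes:
    """CHAP response per RFC 1994: MD5 over identifier octet, shared secret, challenge."""
    if not 0 <= identifier <= 255:
        raise ValueError("CHAP identifier must fit in a single octet")
    return hashlib.md5(bytes([identifier]) + secret + challenge).digest()


def target_accepts(identifier: int, secret: bytes, challenge: bytes, response: bytes) -> bool:
    """Target side: recompute the expected response and compare in constant time."""
    expected = chap_md5_response(identifier, secret, challenge)
    return hmac.compare_digest(expected, response)


if __name__ == "__main__":
    # Hypothetical shared secret configured on both initiator and target.
    shared_secret = b"example-chap-secret"
    # Target: pick a one-octet identifier (CHAP_I) and a random challenge (CHAP_C).
    chap_i = secrets.randbelow(256)
    chap_c = secrets.token_bytes(16)
    # Initiator: compute the response (CHAP_R) from the values it received.
    chap_r = chap_md5_response(chap_i, shared_secret, chap_c)
    print("accepted:", target_accepts(chap_i, shared_secret, chap_c, chap_r))
```

Because every response depends on a secret shared between an initiator and a target (plus a separate secret for the reverse direction when mutual CHAP is used), the number of secrets to track grows with the number of hosts, which is one reason CHAP management becomes daunting at scale.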

The table above outlines the strengths and weaknesses of Fibre Channel and iSCSI when the protocols are being considered for SMB and enterprise-level SANs. Each customer has their own set of criteria for evaluating storage interfaces for their environment. For most small enterprise and SMB environments looking to implement a converged, virtualized environment, the determining factors are upfront cost, scalability, hypervisor integration, availability, performance, and the amount of IT expertise required to manage the environment. The table shows that iSCSI provides a good balance of these factors, and when price to performance is compared between iSCSI and Fibre Channel, iSCSI proves to be a compelling solution. In many data centers, particularly in the SMB space, workloads do not generate enough IOPS to saturate even a 1 Gbps link. At the time of this writing, 10 Gbps networks are becoming legacy in the data center, and 25 Gbps and faster networks are increasingly deployed as the network backbone. This makes iSCSI a viable option for future growth as throughput demands increase.
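The point about IOPS and bandwidth follows from throughput = IOPS × block size. The short Python sketch below converts an IOPS rate at a fixed block size into link utilization. The workload in the example (10,000 IOPS at 8 KiB) is an illustrative assumption, not a measurement, and the calculation ignores protocol overhead.

```python
def throughput_gbps(iops: float, block_size_kib: float) -> float:
    """Payload throughput in Gbit/s for a given IOPS rate and block size (KiB)."""
    bytes_per_second = iops * block_size_kib * 1024
    return bytes_per_second * 8 / 1e9


def link_utilization(iops: float, block_size_kib: float, link_gbps: float) -> float:
    """Fraction of a link's nominal bandwidth consumed, ignoring protocol overhead."""
    return throughput_gbps(iops, block_size_kib) / link_gbps


if __name__ == "__main__":
    # Illustrative workload, not a measured one: 10,000 IOPS at 8 KiB.
    for link in (1, 10, 25):
        share = link_utilization(10_000, 8, link)
        print(f"{link:>2} Gbps link: {share:.1%} utilized")
```

For this example workload, 10,000 IOPS at 8 KiB is about 0.66 Gbps: roughly two-thirds of a 1 Gbps link and under 3% of a 25 Gbps link.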

Another reason iSCSI is considered an excellent match for converged, virtualized environments is that it fits extremely well with a converged network vision. Isolating iSCSI NICs on a virtualized host allows each NIC to have its own virtual switch and specific QoS settings. Virtual machines can be provisioned with iSCSI storage directly through these virtual switches, bypassing the management operating system and reducing I/O path overhead.
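When a VM's in-guest initiator connects directly through a virtual switch, the array presents storage to that initiator's IQN. The Python sketch below builds and loosely checks names in the `iqn.` format used by iSCSI (iqn.yyyy-mm.reversed-domain[:unique-string], at most 223 bytes). The helper `make_vm_iqn` and its host/VM naming scheme are hypothetical, and the pattern accepts only a conservative lowercase subset of the characters the specification permits.

```python
import re

# Conservative subset of the "iqn." name format:
# iqn.<yyyy-mm>.<reversed domain>[:<unique string>]
_LABEL = r"[a-z0-9](?:[a-z0-9-]*[a-z0-9])?"
IQN_PATTERN = re.compile(
    r"^iqn\.\d{4}-(?:0[1-9]|1[0-2])\."
    + _LABEL + r"(?:\." + _LABEL + r")*"
    + r"(?::[a-z0-9.:-]+)?$"
)
MAX_ISCSI_NAME_BYTES = 223


def make_vm_iqn(date: str, domain: str, host: str, vm: str) -> str:
    """Hypothetical naming scheme: one IQN per VM, tagged with host and VM name."""
    reversed_domain = ".".join(reversed(domain.lower().split(".")))
    return f"iqn.{date}.{reversed_domain}:{host.lower()}-{vm.lower()}"


def is_valid_iqn(name: str) -> bool:
    """Loose check: length limit plus the conservative pattern above."""
    return (
        len(name.encode("utf-8")) <= MAX_ISCSI_NAME_BYTES
        and IQN_PATTERN.match(name) is not None
    )


if __name__ == "__main__":
    iqn = make_vm_iqn("2024-01", "example.com", "hv01", "sqlvm1")
    print(iqn, is_valid_iqn(iqn))
```

Giving each VM its own predictable IQN keeps array-side host and masking definitions readable when many in-guest initiators connect through the same virtual switches.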

Making a sound investment in the right storage protocol is a critical step for any organization. Understanding the strengths and weaknesses of each available storage protocol is essential. By choosing iSCSI, a customer can be confident of implementing a SAN that meets most of their storage workload requirements at a potentially lower cost.