
Deployment considerations for iSCSI


The following information needs to be considered and understood when deploying iSCSI into an environment.

Network considerations

Network design is key to making sure iSCSI works properly and delivers the expected performance in any environment. The following are best practice considerations for iSCSI networks:

- Networks of 10 GbE or faster are essential for enterprise production-level iSCSI. Anything slower than 10 GbE should be relegated to test and development.

- iSCSI should be considered a local-area technology, not a wide-area technology, because of latency issues and security concerns.

- Separate iSCSI traffic from general traffic by using either separate physical networks or layer-2 VLANs. Best practice is to have a dedicated LAN for iSCSI traffic and not share the network with other network traffic. Aside from minimizing network congestion, isolating iSCSI traffic on its own physical network or VLAN is considered a must for security as iSCSI traffic is transmitted in an unencrypted format across the LAN.

- Implement jumbo frames (by increasing the default network MTU from 1500 to 9000) in order to deliver additional throughput especially for small block read and write traffic. However, care must be taken if jumbo frames are to be implemented as they require all devices on the network to be jumbo frame compliant and have jumbo frames enabled. When implementing jumbo frames, set host and storage MTUs to 9000 and set switches to higher values such as 9216 (where possible).

- To minimize latency, avoid routing iSCSI traffic between hosts and storage arrays. Try to keep hops to a minimum. Ideally host and storage should co-exist on the same subnet and be one hop away maximum.

- Enable “trunk mode” on network switch ports. Many switch manufacturers ship their switch ports in “access mode” by default. Access mode allows only one VLAN per port and assumes that only the default VLAN (VLAN 0) will be used in the configuration. Once an additional VLAN is added to the default port configuration, the switch port needs to be in trunk mode, because trunk mode allows multiple VLANs per physical port.
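The jumbo frame guidance above (hosts and storage at MTU 9000, switches at 9000 or higher, with every device on the path compliant) can be sketched as a simple consistency check. This is a minimal illustration, not a Dell EMC tool: the function name `check_jumbo_path` and the device names are hypothetical, and the MTU values are assumed to have been collected by hand or by other tooling.

```python
# Hypothetical helper: validates MTU values that were gathered elsewhere.
HOST_STORAGE_MTU = 9000  # endpoint MTU recommended in the text


def check_jumbo_path(endpoint_mtus, switch_mtus):
    """Return a list of problems found in a jumbo frame configuration.

    endpoint_mtus: dict of host/storage port name -> configured MTU
    switch_mtus:   dict of switch port name -> configured MTU
    """
    problems = []
    # Hosts and storage ports should all be set to exactly 9000.
    for name, mtu in endpoint_mtus.items():
        if mtu != HOST_STORAGE_MTU:
            problems.append(f"{name}: endpoint MTU {mtu}, expected {HOST_STORAGE_MTU}")
    # Switch ports must carry at least the endpoint MTU (9216 is typical).
    for name, mtu in switch_mtus.items():
        if mtu < HOST_STORAGE_MTU:
            problems.append(f"{name}: switch MTU {mtu} is below {HOST_STORAGE_MTU}")
    return problems


if __name__ == "__main__":
    issues = check_jumbo_path(
        {"host1-nic0": 9000, "array-port-1": 9000},
        {"switch1-port7": 9216, "switch1-port8": 1500},
    )
    for issue in issues:
        print(issue)
```

A single port left at the default MTU of 1500, as with the hypothetical `switch1-port8` here, is enough to break the jumbo frame path, which is why every device is checked.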

Multipathing and availability considerations

The following are iSCSI considerations with regard to multipathing and availability:

- Deploy port binding iSCSI configurations with multipathing software enabled on the host rather than use a multiple-connection-per-session (MC/S) iSCSI configuration. MC/S was created when most host operating systems did not have standard operating-system-level multipathing capabilities. MC/S is prone to command bottlenecks at higher IOPS. Also, there is inconsistent support for MC/S across vendors.

- Use the "Round Robin (RR)" load balancing policy for Windows host based multipathing software (MPIO) and Linux systems using DM-Multipath. Round Robin uses an automatic path selection rotating through all available paths, enabling the distribution of load across the configured paths. This path policy can help improve I/O throughput. For active/passive storage arrays, only the paths to the active controller will be used in the Round Robin policy. For active/active storage arrays, all paths will be used in the Round Robin policy.

- For Linux systems using DM-Multipath, change “path_grouping_policy” from “failover” to “multibus” in the multipath.conf file. This allows the load to be balanced over all paths. If one path fails, the load is balanced over the remaining paths. With “failover”, only a single path is used at a time, negating any performance benefit. Ensure that all paths are active by using the “multipath -l” command. If paths display an “enabled” status, they are in failover mode.

- Use the “Symmetrix Optimized” algorithm for Dell EMC PowerPath software. This is the default policy, so administrators do not need to change or tweak configuration parameters. PowerPath selects a path for each I/O according to the load balancing and failover policy for that logical device. The best path is chosen according to the algorithm. Due to the proprietary design and patents of PowerPath, the exact algorithm for this policy cannot be detailed here.

- Do not use NIC teaming on NICs dedicated for iSCSI traffic. Use multipathing software such as native MPIO or PowerPath for path redundancy.
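As an illustration of the DM-Multipath settings described above, a multipath.conf fragment might look like the following. This is a sketch only: placing the settings in the `defaults` section is an assumption, and a device-specific section or the distribution's built-in defaults for the array may override it, so verify against the host connectivity documentation for your operating system.

```
defaults {
    # Group all paths into one priority group so I/O is spread across them
    path_grouping_policy    multibus
    # Rotate I/O across the paths in the group (Round Robin)
    path_selector           "round-robin 0"
}
```

After reloading the multipath configuration, the “multipath -l” output can be used to confirm that all paths are active.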

Resource consumption considerations

When designing an iSCSI SAN, one of the primary resource considerations is the CPU consumption required to process the iSCSI traffic on both the host initiator and the storage array target. As a guideline, network traffic processing typically consumes 1 GHz of CPU cycles for every 1 Gbps (125 MBps) of TCP throughput. TCP throughput can vary greatly depending on workload; however, many network design teams use this rule to get a “ballpark” idea of CPU resource sizing requirements for iSCSI traffic. The use of this rule in sizing CPU resources is best shown by an example.

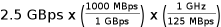

Consider the following: the total throughput of an iSCSI workload is estimated to be 2.5 GBps. This means that both the host and storage environments must be sized properly from a CPU perspective to handle the estimated 2.5 GBps of iSCSI traffic. Using the general guideline, processing the 2.5 GBps of iSCSI traffic will consume:

2.5 GBps × 8 bits per byte = 20 Gbps; 20 Gbps × 1 GHz per Gbps = 20 GHz of CPU consumption

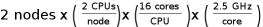

This means that an estimated 20 GHz of CPU resources will be consumed on both the host initiator and storage array target side of the environment to process the iSCSI traffic. To further examine the impact of this requirement, say the host initiator environment consists of a heavily virtualized dual node cluster. Each node has 2 x 16 core 2.5 GHz CPUs. This means that the host initiator environment has a total of:

2 nodes × 2 CPUs × 16 cores × 2.5 GHz = 160 GHz of CPU processing power

The estimated consumption of 20 GHz CPU cycles to process the 2.5 GBps of iSCSI traffic represents 12.5% of the total 160 GHz processing power of the host initiator environment. In many virtualized environments, a two-node cluster is considered a small implementation. Many modern virtualized environments will consist of many nodes, with each node being dual CPU and multi-core. In these environments, 20 GHz of CPU consumption might seem trivial; however, in heavily virtualized environments, every CPU cycle is valuable.
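The sizing arithmetic in this example can be reproduced with a short script. This is a minimal sketch of the 1 GHz per 1 Gbps rule of thumb only; the function names are hypothetical, and real CPU consumption will vary with workload as noted earlier.

```python
# Rule of thumb from the text: 1 GHz of CPU per 1 Gbps of TCP throughput.
GHZ_PER_GBPS = 1.0


def iscsi_cpu_ghz(throughput_gbytes_per_s):
    """Estimate CPU (GHz) consumed to process iSCSI throughput given in GBps."""
    throughput_gbps = throughput_gbytes_per_s * 8  # gigabytes/s -> gigabits/s
    return throughput_gbps * GHZ_PER_GBPS


def cluster_cpu_ghz(nodes, cpus_per_node, cores_per_cpu, core_ghz):
    """Total CPU capacity (GHz) of a cluster of identical nodes."""
    return nodes * cpus_per_node * cores_per_cpu * core_ghz


# Values from the example: 2.5 GBps workload, two nodes with 2 x 16-core 2.5 GHz CPUs.
required = iscsi_cpu_ghz(2.5)
available = cluster_cpu_ghz(nodes=2, cpus_per_node=2, cores_per_cpu=16, core_ghz=2.5)
share = required / available

print(f"{required:.1f} GHz of {available:.1f} GHz ({share:.1%})")
# prints: 20.0 GHz of 160.0 GHz (12.5%)
```

The same estimate applies to the storage array target side, where the available CPU capacity would be replaced with the capacity of the directors handling the iSCSI ports.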

CPU resource conservation is even more important on the storage side of the environment as CPU resources are often more limited than on the host initiator side.

The impact on CPU resources by iSCSI traffic can be minimized by deploying the following into the iSCSI SAN environment:

- To make full use of the environment's CPU resources, distribute the iSCSI traffic across as many host nodes and storage director ports as possible.

- Employ NICs with a built-in TCP Offload Engine (TOE). TOE NICs offload the processing of the datalink, network, and transport layers from the CPU and process it on the NIC itself.

- For heavily virtualized servers, use NICs that support Single Root I/O Virtualization (SR-IOV). Using SR-IOV allows the guest to bypass the hypervisor and access I/O devices (including iSCSI) directly. In many instances, this can significantly reduce the server CPU cycles required to process the iSCSI I/O.

Note: The introduction of TOE and SR-IOV into an iSCSI environment can add complexity and cost. Careful analysis must be done to ensure that the additional complexity and cost are worth the increased performance.