
Red Hat OpenShift Data Foundation storage


Red Hat OpenShift Data Foundation provides persistent storage to applications. It consumes either the local disks of the compute nodes or storage that is dynamically provisioned through a standard OpenShift Container Platform cluster storage class.

Prerequisites

Ensure that:

- At least three compute nodes are running in the OpenShift cluster

- No partitions are configured on the disks that OpenShift Data Foundation will use
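The node-count prerequisite can also be checked from the command line. The following is a minimal sketch, assuming that the compute nodes carry the default node-role.kubernetes.io/worker label and that the oc client is logged in to the cluster. The helper name count_ready_workers is hypothetical and is not part of the guide.

```shell
# Count worker nodes whose STATUS column is exactly "Ready".
# Nodes reported as "Ready,SchedulingDisabled" or "NotReady" are not counted.
count_ready_workers() {
  oc get nodes -l node-role.kubernetes.io/worker --no-headers \
    | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Run the check only when the oc client is installed.
if command -v oc >/dev/null 2>&1; then
  ready=$(count_ready_workers)
  if [ "$ready" -ge 3 ]; then
    echo "OK: $ready Ready compute nodes"
  else
    echo "Need at least 3 Ready compute nodes, found $ready"
  fi
fi
```

To inspect the disks of a node for existing partitions, one option is to run `oc debug node/<node name> -- chroot /host lsblk` and review the output for each compute node.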

Installing Local Storage Operator

Follow steps 1 to 4 in Provisioning local storage.

Installing OpenShift Data Foundation Operator

To install the OpenShift Data Foundation operator:

- To specify a blank node selector for the openshift-storage namespace, run:

$ oc annotate namespace openshift-storage openshift.io/node-selector=

This command overrides the cluster-wide default node selector for OpenShift Data Foundation.

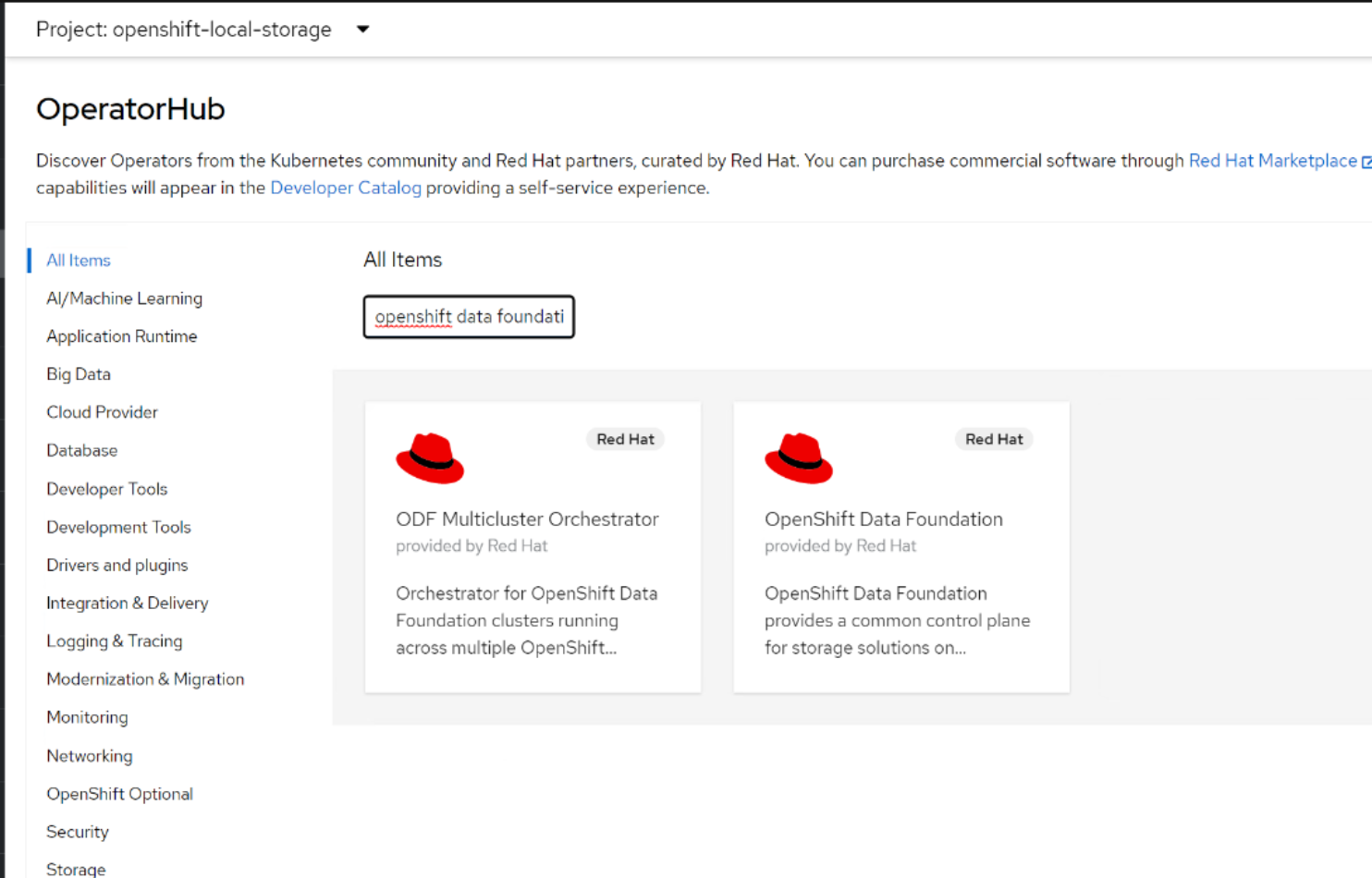

- Log in to the OpenShift web console and select Operators > OperatorHub.

- Search for the OpenShift Data Foundation operator.

Figure 30. OpenShift Data Foundation operator

- Select the operator and click Install.

- Select the default openshift-storage namespace and update any other option as required.

- Click Install.

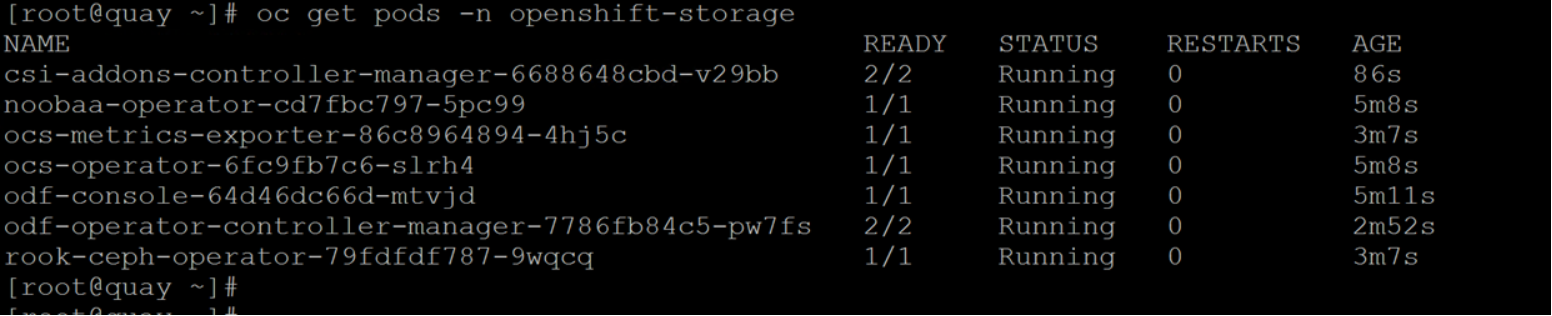

- Verify that the pods are in the “Running” state.

Figure 31. OpenShift Data Foundation pods status
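The pod status can also be checked with the oc client instead of the web console. The following is a minimal sketch, assuming that the operator was installed in the default openshift-storage namespace. The helper name list_unready_pods is hypothetical.

```shell
# Print the name and status of every pod in openshift-storage whose
# STATUS column is neither "Running" nor "Completed".
# Empty output means that every listed pod reports Running or Completed.
list_unready_pods() {
  oc get pods -n openshift-storage --no-headers \
    | awk '$3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# Run the check only when the oc client is installed.
if command -v oc >/dev/null 2>&1; then
  list_unready_pods
fi
```

Because operator pods can take a few minutes to start, it may be necessary to repeat the check until the output is empty.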

Create the OpenShift Data Foundation cluster

To create and configure the OpenShift Data Foundation cluster:

- Log in to the OpenShift web console and select Operators > Installed Operators > OpenShift Data Foundation.

- Click Create StorageSystem.

- On the Backing storage page:

  - Select Full Deployment as the Deployment type.

  - Select Create a new StorageClass using the local storage devices.

  - Click Next.

- On the Create local volume set page:

  - Enter a name for the LocalVolumeSet and the StorageClass.

  - Select one of the following:

    - Disks on all nodes: Uses the available disks that match the selected filters on all the nodes.

    - Disks on selected nodes: Uses the available disks that match the selected filters only on the selected nodes.

  - From the Disk Type list, select SSD/NVMe.

  - Expand the Advanced section and set the options as described in the following table:

Table 5. Advanced options for OpenShift Data Foundation disks

| Parameter | Description |
| --- | --- |
| Volume mode | Block is selected by default. |
| Device Type | Select one or more device types from the drop-down menu. |
| Disk Size | Set the minimum device size to 100 GB, and set the maximum to the largest available device size that needs to be included. |
| Maximum Disks Limit | The maximum number of PVs that can be created on a node. If this field is left empty, PVs are created for all the available disks on the matching nodes. |

  - Click Next.

    A prompt asks you to confirm the creation of the LocalVolumeSet.

  - Click Yes to continue.
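For reference, the options selected on the Create local volume set page correspond roughly to a LocalVolumeSet resource. The following sketch writes such a manifest to a file for inspection. The resource name local-block, the file name, and the maxDeviceCount value are hypothetical, and the openshift-local-storage namespace assumes a default Local Storage Operator installation. The web console generates its own resource, so treat this only as an illustration of the fields involved.

```shell
# Write an illustrative LocalVolumeSet manifest (not applied to the cluster).
cat > localvolumeset-sketch.yaml <<'EOF'
apiVersion: local.storage.openshift.io/v1alpha1
kind: LocalVolumeSet
metadata:
  name: local-block                  # hypothetical name
  namespace: openshift-local-storage # assumed default operator namespace
spec:
  storageClassName: local-block      # hypothetical StorageClass name
  volumeMode: Block                  # "Volume mode" in Table 5
  maxDeviceCount: 4                  # "Maximum Disks Limit" (hypothetical value)
  deviceInclusionSpec:
    deviceTypes:                     # "Device Type"
      - disk
    deviceMechanicalProperties:      # SSD/NVMe disk type
      - NonRotational
    minSize: 100Gi                   # approximates the 100 GB minimum "Disk Size"
EOF

echo "Wrote localvolumeset-sketch.yaml"
```

Leaving out maxDeviceCount mirrors an empty Maximum Disks Limit field, in which case PVs are created for all matching disks.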

- On the Capacity and nodes page:

  - Select Available raw capacity.

    After some time, this field is populated with the capacity value based on all the attached disks that are associated with the storage class. The Selected nodes list shows the nodes based on the storage class.

  - (Optional) Select Taint nodes to dedicate the selected nodes for OpenShift Data Foundation.

  - Click Next.

- On the Security and network page:

  - (Optional) Select Enable data encryption for block and file storage, and then select one or both of the following Encryption levels:

    - Cluster-wide encryption: Encrypts the entire cluster (block and file).

    - StorageClass encryption: Creates encrypted persistent volumes (block only) using an encryption-enabled storage class. This encryption type requires an advanced subscription.

  - Select one of the following:

    - Default (SDN) if you are using a single network.

    - Custom (Multus) if you are using multiple network interfaces. For this option:

      - Select a Public Network Interface from the drop-down menu.

      - Select a Cluster Network Interface from the drop-down menu.

    Note: At the time of publication, Custom is a Tech Preview feature.

  - Click Next.

- On the Review and create page, review the configuration details.

  To modify any configuration settings, click Back to go back to the previous configuration page.

- Click Create StorageSystem.

- To verify the status of the installed storage cluster, in the OpenShift web console, select Operators > Installed Operators > OpenShift Data Foundation > Storage System > ocs-storagecluster-storagesystem > Resources.

  Verify that StorageCluster has a status of Ready and has a green tick mark next to it.

  The Local Storage Operator discovers all the disks and creates PVs on the cluster.

- Verify that the following storage classes were created along with the OpenShift Data Foundation cluster:

  - ocs-storagecluster-ceph-rbd

  - ocs-storagecluster-cephfs

  - openshift-storage.noobaa.io

  - ocs-storagecluster-ceph-rgw
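The same verification can be sketched with the oc client. The example below assumes that the StorageCluster resource is named ocs-storagecluster in the openshift-storage namespace, which is inferred from the storage system name and is not stated in this guide. The helper name missing_storage_classes is hypothetical.

```shell
# Storage classes that the guide expects after cluster creation.
EXPECTED_CLASSES="ocs-storagecluster-ceph-rbd ocs-storagecluster-cephfs openshift-storage.noobaa.io ocs-storagecluster-ceph-rgw"

# Print each expected storage class that is not present on the cluster.
# Empty output means that all four storage classes exist.
missing_storage_classes() {
  present=$(oc get storageclass --no-headers -o custom-columns=NAME:.metadata.name)
  for sc in $EXPECTED_CLASSES; do
    printf '%s\n' "$present" | grep -qxF "$sc" || echo "$sc"
  done
}

# Run the checks only when the oc client is installed.
if command -v oc >/dev/null 2>&1; then
  # Assumed resource name; prints the phase, which should be "Ready".
  oc get storagecluster ocs-storagecluster -n openshift-storage \
    -o jsonpath='{.status.phase}{"\n"}'
  missing_storage_classes
fi
```

If the StorageCluster has a different name in your deployment, `oc get storagecluster -n openshift-storage` lists the available resources.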

Create a CephFS PVC

CephFS is a file system built on the Ceph storage cluster. The CephFS storage class can be used to provision a shared file system.

To create a PVC using ocs-storagecluster-cephfs:

- Create a YAML file based on this sample file, and then use it to create a PVC with the ocs-storagecluster-cephfs storage class:

[core@csah-pri ~]$ oc create -f <YAML file>

- Verify that the volume is created:

[core@csah-pri ~]$ oc get pvc -n odf-test

NAME                       STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS                AGE
odf-cephfs-daemonset-pvc   Bound    pvc-44d3a805-0e6b-48b1-a8d6-0ab6c56e1975   50Gi       RWX            ocs-storagecluster-cephfs   29s

- Create a DaemonSet or pod and attach the volume (see this sample file for guidance):

[core@csah-pri ~]$ oc create -f <YAML file>

- Verify that the pod is created:

[core@csah-pri ~]$ oc get pods -n odf-test
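The sample files referenced in this procedure are not reproduced here, so the following is only a sketch of what such a manifest might look like. The PVC name, namespace, size, and access mode are taken from the sample output above. The DaemonSet name, labels, container image, mount path, and file name are hypothetical, and the odf-test namespace is assumed to exist already.

```shell
# Write an illustrative CephFS PVC and a DaemonSet that mounts it.
cat > odf-cephfs-sketch.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: odf-cephfs-daemonset-pvc
  namespace: odf-test
spec:
  accessModes:
    - ReadWriteMany                 # RWX: shared by pods on several nodes
  resources:
    requests:
      storage: 50Gi
  storageClassName: ocs-storagecluster-cephfs
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: odf-cephfs-daemonset        # hypothetical name
  namespace: odf-test
spec:
  selector:
    matchLabels:
      app: odf-cephfs-test          # hypothetical label
  template:
    metadata:
      labels:
        app: odf-cephfs-test
    spec:
      containers:
        - name: app
          image: registry.access.redhat.com/ubi8/ubi-minimal  # assumed image
          command: ["sleep", "infinity"]
          volumeMounts:
            - name: shared-data
              mountPath: /mnt/cephfs  # hypothetical mount path
      volumes:
        - name: shared-data
          persistentVolumeClaim:
            claimName: odf-cephfs-daemonset-pvc
EOF

echo "Wrote odf-cephfs-sketch.yaml"
```

The file can then be passed to `oc create -f odf-cephfs-sketch.yaml`. A DaemonSet suits a ReadWriteMany volume because every pod, one per schedulable node, mounts the same shared file system.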

Create a Ceph RBD PVC

Ceph RBD is Ceph’s block storage component that distributes data and workload across the Ceph cluster. Ceph RBD can be used to provision block storage.

To create a PVC using ocs-storagecluster-ceph-rbd:

- Use this sample YAML file to create a PVC by using the ocs-storagecluster-ceph-rbd storage class:

[core@csah-pri ~]$ oc create -f <YAML file>

- Verify that the PVC is created successfully:

[core@csah-pri ocs]$ oc get pvc odf-rbd-pvc -n odf-test

NAME          STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS                  AGE
odf-rbd-pvc   Bound    pvc-7742925d-8ecb-4d49-8b4b-00313d8d7c85   100Gi      RWO            ocs-storagecluster-ceph-rbd   27s

- Create a pod and attach the volume to the pod (refer to this sample file for guidance):

[core@csah-pri ~]$ oc create -f <YAML file>

- Verify that the pod is created:

[core@csah-pri ~]$ oc get pods -n odf-test
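As with CephFS, the referenced sample files are not reproduced here, so the following is only a sketch. The PVC size and access mode come from the sample output above, and the PVC name is written in lowercase because Kubernetes resource names must be lowercase. The odf-test namespace is assumed from the pod check, and the pod name, container image, mount path, and file name are hypothetical.

```shell
# Write an illustrative Ceph RBD PVC and a pod that mounts it.
cat > odf-rbd-sketch.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: odf-rbd-pvc
  namespace: odf-test               # assumed namespace
spec:
  accessModes:
    - ReadWriteOnce                 # RWO: mounted read-write by a single node
  resources:
    requests:
      storage: 100Gi
  storageClassName: ocs-storagecluster-ceph-rbd
---
apiVersion: v1
kind: Pod
metadata:
  name: odf-rbd-pod                 # hypothetical name
  namespace: odf-test
spec:
  containers:
    - name: app
      image: registry.access.redhat.com/ubi8/ubi-minimal  # assumed image
      command: ["sleep", "infinity"]
      volumeMounts:
        - name: block-data
          mountPath: /mnt/rbd       # hypothetical mount path
  volumes:
    - name: block-data
      persistentVolumeClaim:
        claimName: odf-rbd-pvc
EOF

echo "Wrote odf-rbd-sketch.yaml"
```

The file can then be passed to `oc create -f odf-rbd-sketch.yaml`. A single pod is used here rather than a DaemonSet because a ReadWriteOnce volume can be mounted read-write by only one node at a time.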