
IOzone sequential N-N reads and writes


To evaluate sequential reads and writes, Dell Technologies used IOzone benchmark version 3.492 in sequential read and write mode. We conducted the tests at multiple thread counts, starting at one thread and doubling up to 1024 threads. Because this test uses one file per thread, the number of files generated at each thread count equals the thread count. The threads were distributed across 16 physical client nodes in round-robin fashion.
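The round-robin placement can be expressed through the client file that IOzone reads in cluster mode (`-+m`), where each line names a client, a working directory, and the path to the iozone binary. The sketch below generates such a file. The hostnames `node01` to `node16`, the working directory, and the `/usr/bin/iozone` path are hypothetical placeholders, not the values used in these tests.

```shell
# Sketch: write an IOzone -+m client file that spreads THREADS threads
# round-robin over NCLIENTS client nodes, one line per thread:
#   <client hostname> <working directory> <path to iozone binary>
# Hostnames (nodeNN), the working directory, and the iozone path are
# hypothetical defaults; override arguments 3 and 4 as needed.
make_threadlist() {
  local threads=$1 nclients=$2
  local workdir=${3:-/mnt/beegfs/iozone-dfiles}
  local iozone_bin=${4:-/usr/bin/iozone}
  local t=0 client
  while [ "$t" -lt "$threads" ]; do
    # Thread t lands on client (t mod nclients) + 1:
    # node01, node02, ..., node16, then back to node01.
    client=$(( t % nclients + 1 ))
    printf 'node%02d %s %s\n' "$client" "$workdir" "$iozone_bin"
    t=$(( t + 1 ))
  done
}
```

For example, `make_threadlist 64 16 > /path/to/threadlist` places four threads on each of the 16 clients.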

Dell Technologies converted the throughput results reported by the tool from KiB/s to GB/s. To minimize the effects of server-side caching, an aggregate file size of 8 TB was used for every thread count except the single-thread run, which used 1 TB. Within any given test, the aggregate file size was divided equally among the threads. A record size of 1 MiB was used for all runs. Operating system caches on the client nodes were dropped between tests, between iterations, and between the write and read phases.
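The per-thread file size and the unit conversion described above reduce to simple arithmetic. This sketch assumes the 8 TB and 1 TB aggregates are binary units (8192 GiB and 1024 GiB), since IOzone's `g` suffix for `-s` is binary, and that GB/s means 10^9 bytes per second. The paper states neither convention, and the function names are hypothetical.

```shell
# Sketch: per-thread IOzone -s value for a given thread count.
# Assumption: 1 TB and 8 TB aggregates are taken as 1024 GiB and 8192 GiB.
per_thread_size() {
  local threads=$1 total_gib
  if [ "$threads" -eq 1 ]; then
    total_gib=1024   # 1 TB aggregate for the single-thread run
  else
    total_gib=8192   # 8 TB aggregate for every other thread count
  fi
  # Thread counts are powers of two, so the division is exact.
  echo "$(( total_gib / threads ))g"
}

# Sketch: convert an IOzone KiB/s figure to GB/s.
# Assumption: 1 KiB = 1024 bytes and 1 GB = 10^9 bytes.
kib_to_gbps() {
  awk -v k="$1" 'BEGIN { printf "%.2f\n", k * 1024 / 1e9 }'
}
```

Under these assumptions, a 64-thread run uses `-s 128g` per thread, and an IOzone result of 30283203 KiB/s converts to 31.01 GB/s. On Linux, running `sync; echo 3 > /proc/sys/vm/drop_caches` as root is one common way to drop client caches between phases; the paper does not say which method was used.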

The commands used for the sequential N-N tests are:

Sequential writes: iozone -i 0 -c -e -w -r 1m -s $SIZE -t $THREAD -+n -+m /path/to/threadlist

Sequential reads: iozone -i 1 -c -e -r 1m -s $SIZE -t $THREAD -+n -+m /path/to/threadlist
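At each thread count, the two commands run as a write pass followed by a read pass over the same files. The dry-run sketch below only prints that sequence so each flag can be read in context. The 1 TB and 8 TB aggregates (taken as 1024 GiB and 8192 GiB), the `THREADLIST` variable, and the placeholder cache-drop step are assumptions, not the exact harness used for these results.

```shell
# Dry-run sketch of the sequential N-N sweep: prints commands, runs nothing.
# Assumptions: aggregate sizes in GiB, THREADLIST path, cache-drop placeholder.
THREADLIST=${THREADLIST:-/path/to/threadlist}

sweep() {
  for t in 1 2 4 8 16 32 64 128 256 512 1024; do
    if [ "$t" -eq 1 ]; then size=1024g; else size=$(( 8192 / t ))g; fi
    # -i 0: write test; -c and -e: include close() and flush in the timing;
    # -w: keep the files so the read pass can reuse them; -r 1m: 1 MiB records;
    # -t: throughput mode with t threads; -+n: no retests;
    # -+m: cluster mode, clients listed in $THREADLIST
    echo "iozone -i 0 -c -e -w -r 1m -s $size -t $t -+n -+m $THREADLIST"
    echo "# drop client caches here"
    # -i 1: read test against the files left by the write pass
    echo "iozone -i 1 -c -e -r 1m -s $size -t $t -+n -+m $THREADLIST"
    echo "# drop client caches here"
  done
}
```

Keeping `-w` on the write pass matters because the read test needs the files to still exist.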

For BeeGFS, the default chunk size is 512 KiB and the default stripe count is 4. Both the chunk size and the number of targets per file (stripe count) can be configured per directory or per file. For all of these tests, the BeeGFS chunk size was set to 1 MiB and the stripe count to 1, as shown below:

$ beegfs-ctl --setpattern --numtargets=1 --chunksize=1m /mnt/beegfs/iozone-dfiles/

$ beegfs-ctl --getentryinfo --mount=/mnt/beegfs/ /mnt/beegfs/iozone-dfiles/ --verbose

Entry type: directory

EntryID: 0-635AFA5A-1

ParentID: root

Metadata node: metaB-numa0-3 [ID: 9]

Stripe pattern details:

+ Type: RAID0

+ Chunksize: 1M

+ Number of storage targets: desired: 1

+ Storage Pool: 1 (Default)

Inode hash path: 1A/28/0-635AFA5A-1
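Before a run, the stripe settings can be checked by parsing the `beegfs-ctl --getentryinfo` output. This hypothetical `check_pattern` helper matches on the field labels in the sample output shown in this section (`Chunksize:` and `Number of storage targets:`); other BeeGFS versions may format this output differently.

```shell
# Sketch: read `beegfs-ctl --getentryinfo` output on stdin and confirm the
# stripe pattern used in these tests (1M chunks, one desired target).
# Field labels are taken from the sample output; other versions may differ.
check_pattern() {
  awk -F': ' '
    /Chunksize:/ { chunk = $2 }                    # "+ Chunksize: 1M" -> 1M
    /Number of storage targets:/ { targets = $NF } # "... desired: 1" -> 1
    END {
      if (chunk == "1M" && targets == "1") { print "ok"; exit 0 }
      print "unexpected pattern: chunksize=" chunk " targets=" targets
      exit 1
    }'
}
```

Usage: `beegfs-ctl --getentryinfo --mount=/mnt/beegfs/ /mnt/beegfs/iozone-dfiles/ --verbose | check_pattern`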

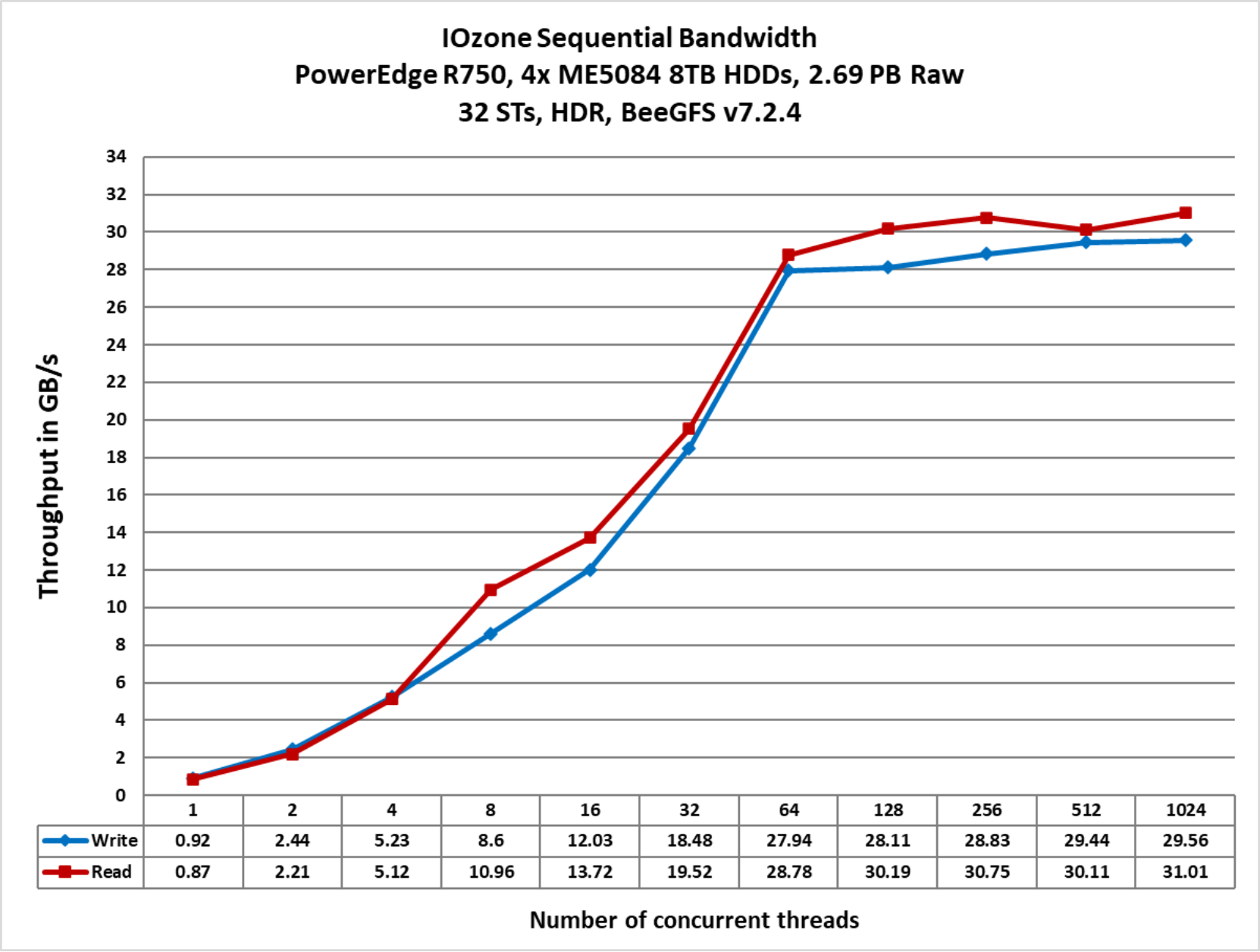

Figure 7 shows the sequential N-N performance of the solution:

Figure 7. Sequential N-N reads and writes

As the figure shows, a peak read throughput of 31.01 GB/s and a peak write throughput of 29.56 GB/s were attained at 1024 threads. Read and write throughput scale linearly as the thread count increases, then level off as the system approaches its maximum; the peaks at 1024 threads fall within this saturated region. The sustained performance of this configuration is approximately 30 GB/s for reads and approximately 29 GB/s for writes. Above 4 threads, reads tend to be slightly higher than writes.