
Use Cases


Marginal links are often the result of aging SANs with components that have been in place for many years and are slowly deteriorating. For example, today’s SANs support 16 Gigabit per second (16Gb/s) optics in switches that can still connect 4Gb/s HBAs, even though 4Gb/s Fibre Channel was introduced in 2004. Questionable or malfunctioning components along a SAN path can have severe impacts and frequently lead to application degradation, crashes, and outages.

In some ways, it would be preferable for these components to fail completely, which would allow quick fault isolation and component replacement. Instead, marginal links are intermittent but persistent, which makes them difficult to troubleshoot. In the meantime, end users are impacted by application unavailability or poor performance. Without Fabric Notification support, solutions such as multi-pathing software also struggle with the intermittent nature of these marginal links (see Figure 1 below).

Figure 1. Multi-Pathing Software with Link Integrity Issues

In this diagram, PowerPath or MPIO has not declared the primary path to be down because the marginal link fails only intermittently. However, throughput on the link is near zero. Frames continue to be sent down the bad link, which makes the situation worse and can create congestion.

Congestion occurs when the rate of frames entering the fabric exceeds the rate of frames exiting the fabric. The most common cause is oversubscription of bandwidth. Congestion can begin as a minor issue and go unnoticed initially. Eventually, as application demands grow, congestion can worsen. A common congestion scenario is the mixing of lower speed HBAs with higher speed storage adapter ports. This mismatch can occur when storage arrays with modern 32Gb/s Fibre Channel adapter ports are introduced into SANs with HBAs that run at slower speeds such as 4Gb/s and 8Gb/s. These faster storage array ports can easily overrun the lower speed HBA ports.
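The definition above can be illustrated with simple arithmetic. The following Python sketch is illustrative only: the function names are hypothetical, and it treats the nominal port speeds (32Gb/s and 8Gb/s) as sustained data rates, which is a simplification.

```python
def speed_mismatch_ratio(storage_port_gbps: float, hba_gbps: float) -> float:
    """How many times faster the storage port can send than the HBA can receive."""
    return storage_port_gbps / hba_gbps


def backlog_gbits(ingress_gbps: float, egress_gbps: float, seconds: float) -> float:
    """Gigabits left queued in the fabric after `seconds` of sustained traffic.

    Assumption: rates are constant and nothing throttles the sender.
    A backlog only builds when frames enter faster than they exit.
    """
    surplus_gbps = max(0.0, ingress_gbps - egress_gbps)
    return surplus_gbps * seconds


# A 32Gb/s storage port sending READ data to an 8Gb/s HBA
print(speed_mismatch_ratio(32, 8))   # 4.0
print(backlog_gbits(32, 8, 0.5))     # 12.0
# Matched speeds: no backlog builds up
print(backlog_gbits(32, 32, 0.5))    # 0.0
```

In a real fabric, buffer credits stop the sender long before a backlog of this size could accumulate. The point of the sketch is only that any sustained surplus of ingress over egress has to be absorbed somewhere, and that pressure is what appears as congestion.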

Figure 2 is an example of a SAN that is not experiencing congestion. Both Host 1 and Host 2 are performing READ commands to the array. Because both the array and the hosts are attached at 32Gb/s and there is sufficient ISL bandwidth (for example, 64Gb/s), there is no congestion in the SAN.

Figure 2. No Congestion

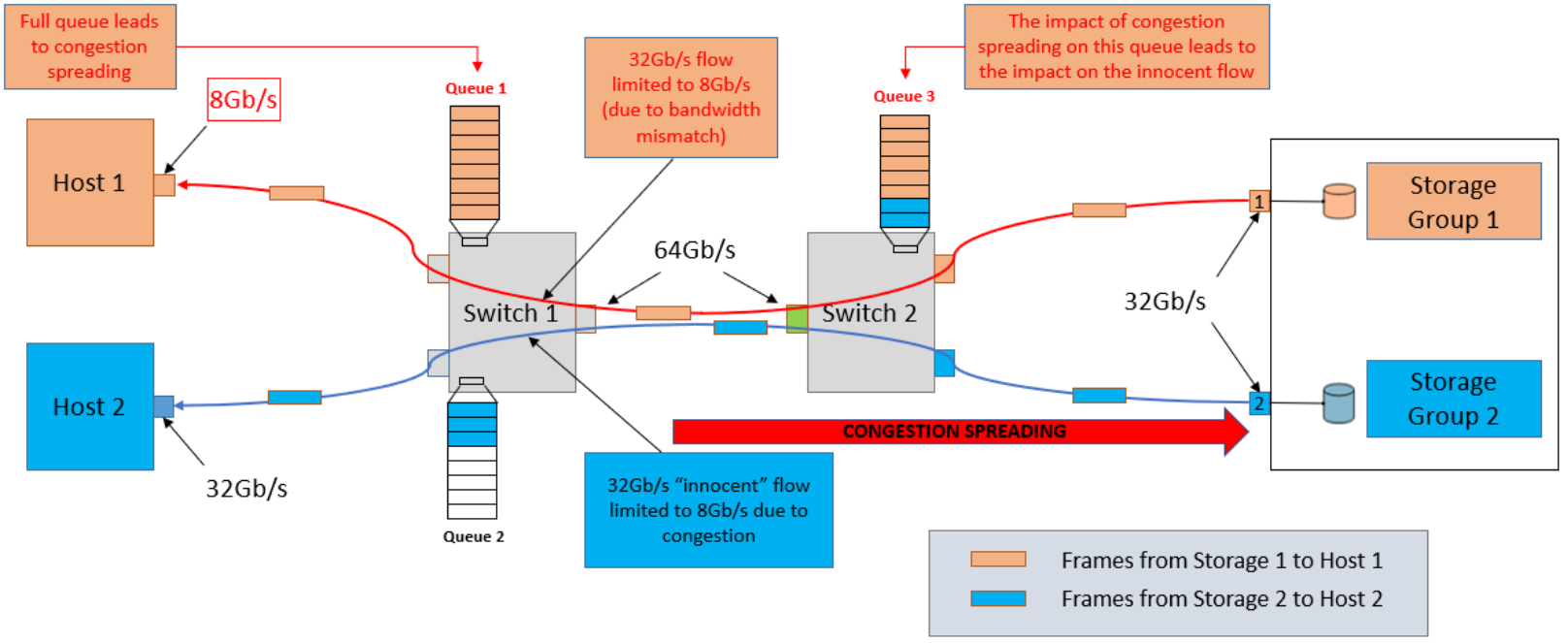

Figure 3 shows an example of a SAN that is experiencing congestion spreading due to oversubscription. Due to the nature of Fibre Channel, congestion can spread from one flow to other flows that would not otherwise be oversubscribed. Because the storage array interface transmits data at a rate (such as 32Gb/s) that is greater than the speed of the attached HBA (8Gb/s in this example), Host 1 is unable to receive the data at the rate at which the storage transmits it, and the immediate impact is the queuing of frames. As Queue 1 fills, the congestion spreads back to the source of the data. Because both Host 1 and Host 2 share the same Inter Switch Link (ISL), this congestion can, and in this case does, impact the "innocent flow" between Host 2 and Storage 2, reducing its throughput from 32Gb/s to 8Gb/s.

Figure 3. Congestion
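The Figure 2 and Figure 3 scenarios can be approximated with a deliberately crude model. The Python sketch below is not how a switch schedules frames: it assumes that all flows share a single ISL queue served in order, that the ISL itself has enough bandwidth, and that once any receiver is slower than its source, every flow on that ISL drains at the slowest receiver's rate. The `Flow` class and `effective_throughput` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    name: str
    source_gbps: float     # speed of the storage port sending READ data
    receiver_gbps: float   # speed of the HBA receiving it


def effective_throughput(flows: list[Flow]) -> dict[str, float]:
    """Estimate per-flow throughput for flows that share one ISL queue."""
    # Rate each flow could sustain if it had the ISL to itself
    standalone = {f.name: min(f.source_gbps, f.receiver_gbps) for f in flows}

    # Flows whose receiver cannot keep up with the source
    slow_drainers = [f for f in flows if f.source_gbps > f.receiver_gbps]
    if not slow_drainers:
        return standalone

    # Assumed: the shared queue backs up, so every flow is paced
    # by the slowest receiver among the oversubscribed flows
    slowest = min(f.receiver_gbps for f in slow_drainers)
    return {name: min(rate, slowest) for name, rate in standalone.items()}


# Figure 2: both hosts attached at 32Gb/s
print(effective_throughput([Flow("Host 1", 32.0, 32.0), Flow("Host 2", 32.0, 32.0)]))
# {'Host 1': 32.0, 'Host 2': 32.0}

# Figure 3: Host 1 attached at 8Gb/s, Host 2 still at 32Gb/s
print(effective_throughput([Flow("Host 1", 32.0, 8.0), Flow("Host 2", 32.0, 32.0)]))
# {'Host 1': 8.0, 'Host 2': 8.0}
```

The second result reproduces the outcome described for Figure 3: the flow to Host 2 is not oversubscribed on its own, yet it is held to 8Gb/s because it shares the congested ISL.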

Congestion is addressed in many ways, both with Fabric Notifications and independently of them. The latest Fibre Channel switches and directors have operating system features that help address congestion in different ways. Brocade’s Traffic Optimizer uses Performance Groups to organize flows across virtual channels by grouping I/Os with similar characteristics (for example, by Fibre Channel speed or by protocol, such as SCSI versus NVMe). By grouping I/Os in this fashion, the causes of congestion are mitigated. For more information on Traffic Optimizer, see the Brocade Traffic Optimizer technical brief.

Cisco uses a different technique for detecting and addressing congestion through a feature called Dynamic Ingress Rate Limiting (DIRL). With DIRL, ingress data is limited at the port level when egress congestion is detected. Congested initiators therefore request less data, which helps relieve congestion. For more information on DIRL, see the Cisco Dynamic Ingress Rate Limiting Solution Overview.
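The general idea of limiting ingress in response to egress congestion can be sketched as a small feedback loop. The Python example below is not Cisco's algorithm or configuration: the function name, the reduction and recovery factors, and the sampling scheme are all assumptions chosen only to show the shape of the mechanism.

```python
def adjust_ingress_limit(
    current_gbps: float,
    line_rate_gbps: float,
    egress_congested: bool,
    reduce_factor: float = 0.5,    # hypothetical: halve the limit when congested
    recover_factor: float = 1.25,  # hypothetical: raise it by 25% when clear
) -> float:
    """Return the next ingress rate limit for a port.

    While egress congestion is detected the limit is cut; once it clears,
    the limit is raised step by step, never beyond the port's line rate.
    """
    if egress_congested:
        return current_gbps * reduce_factor
    return min(line_rate_gbps, current_gbps * recover_factor)


# Four hypothetical sampling intervals on a 32Gb/s port
limit = 32.0
history = []
for congested in [True, True, False, False]:
    limit = adjust_ingress_limit(limit, 32.0, congested)
    history.append(limit)

print(history)  # [16.0, 8.0, 10.0, 12.5]
```

Cutting the limit quickly and restoring it gradually is a common pattern in rate control because it relieves congestion fast while avoiding an immediate return to the rate that caused it.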

Finally, the latest HBAs from Emulex and Marvell also have dynamic I/O throttling features that work in conjunction with Fabric Notifications. See the Fabric Notifications with Fibre Channel Host Bus Adapters (HBAs) chapter later in this whitepaper for more information and references.