
Overview


Advances to 7 nm, 5 nm, and 3 nm process nodes have introduced complex design challenges for chipmakers, in particular a growing need for scalable compute power and storage performance to support shorter design cycles and faster time to market.

To keep pace with market demand and an increasingly competitive landscape, chipmakers are considering hybrid cloud solutions that scale compute resources quickly, shortening design cycles and time to market.

Cloud Burst is one way to extend compute capacity into public cloud providers so that EDA workloads can run on demand. Bursting EDA workloads to the cloud is not easy, however, and comes with its own set of challenges.

The first challenge is to build an automated architecture for bursting EDA workloads to the cloud. This includes setting up license servers and the job scheduler, and automating the provisioning of compute, network, and storage resources in the cloud.

The next challenge is to identify which data must be copied or synchronized for Cloud Burst EDA workloads. There is no simple answer, and it varies with the EDA design flows and tools in use. A tremendous number of files and directories (millions, sometimes billions) are scattered across NFS storage and can easily reach petabytes in total size. How much data must be available in the cloud? How do you transfer data from on-premises to the cloud? How long does the transfer take? How do you keep cloud data in sync with on-premises?
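As a rough illustration of the "how long does the transfer take" question, the sketch below estimates bulk-copy time from data size and link speed. The function name, the 10 Gb/s link, and the `efficiency` factor are assumptions chosen for illustration; they are not measurements from this testing, and the estimate ignores per-file overhead, which can dominate when the dataset consists of millions of small files.

```python
def transfer_time_hours(data_bytes: float, link_gbps: float,
                        efficiency: float = 1.0) -> float:
    """Estimate the hours needed to copy data_bytes over a WAN link.

    link_gbps  -- nominal link speed in gigabits per second (assumed value)
    efficiency -- fraction of the nominal speed actually achieved, in (0, 1]
                  (assumed value; real links rarely sustain 1.0)
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be > 0 and efficiency in (0, 1]")
    bits = data_bytes * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


PB = 10**15  # one petabyte, decimal

# 1 PB over an ideal 10 Gb/s link: roughly 222 hours, a little over 9 days.
hours = transfer_time_hours(1 * PB, link_gbps=10)
print(f"{hours:.1f} hours ({hours / 24:.1f} days)")
```

Even under ideal assumptions, a full copy of a petabyte-scale dataset takes days, which is why deciding what data actually needs to be in the cloud matters so much.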

Vcinity addresses these challenges. Its solution helps you optimize your data strategy by enhancing global data availability across your hybrid multicloud environment, whether you choose to instantly access data in place from a remote location (edge, core, or public or private cloud) or rapidly move data from edge to core to cloud.

Accelerate time to insights

Take meaningful action on data sooner, no matter its location, with data movement up to 11x faster and real-time remote data access.

Increase agility across hybrid cloud

Expand edge and hybrid deployments and ease cloud adoption with the flexibility to work on data anywhere: on-premises, at the edge, or in the cloud.

Decrease total cost of ownership

Reduce costs associated with data duplication and transfers, and increase the effectiveness of current and future physical infrastructure.

Improve security profile

Eliminate unnecessary data movement, reduce liabilities associated with copy management, and better protect your data.

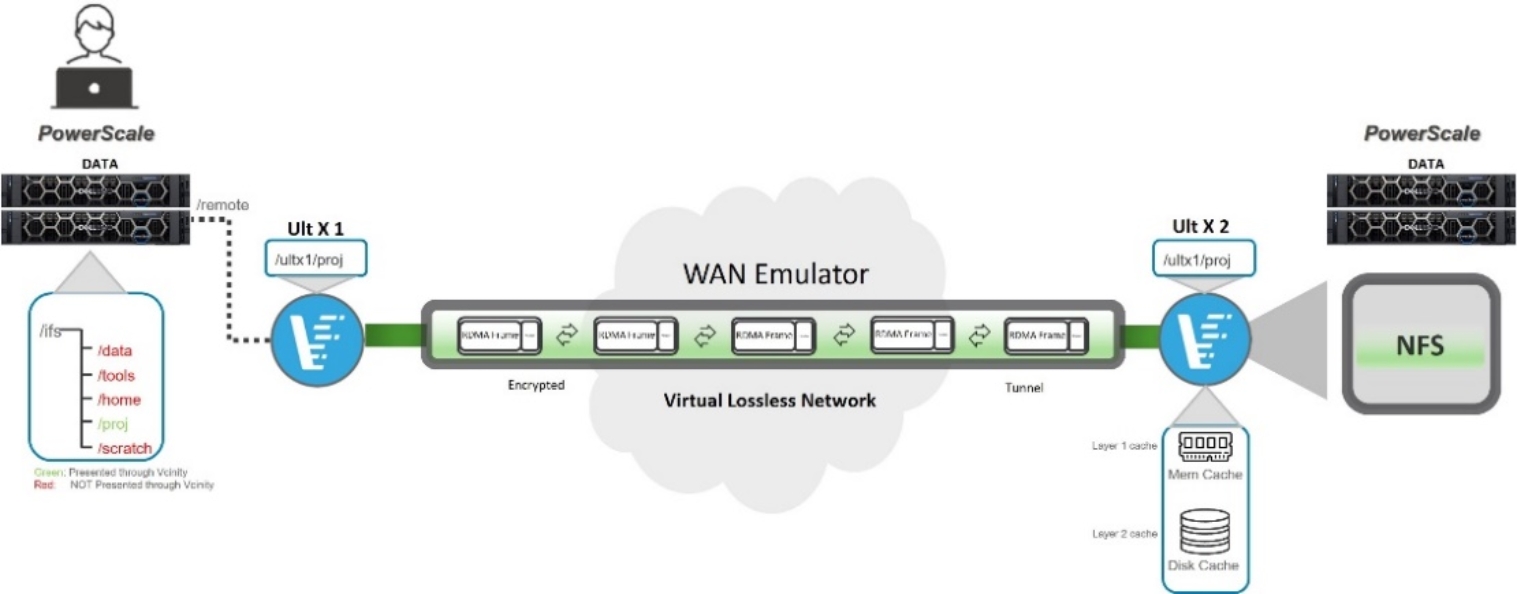

Figure 1. Vcinity data access with PowerScale

This document uses the Synthetic EDA workload benchmark to demonstrate a cloud burst solution built on Dell PowerScale scale-out NAS storage and Vcinity. The testing focuses on Vcinity cache performance, with WAN latency emulated in our lab.

The Synthetic EDA workload benchmark submits Android source code build jobs to an EDA simulation farm or HPC cluster through a job scheduler, much as EDA jobs are submitted in a production environment. This creates a highly concurrent I/O pattern and yields results that reflect real-world conditions better than traditional benchmarks do.

In a real-world scenario, all files and libraries are located on-premises, and design teams burst EDA workloads to the cloud. Vcinity creates a secure tunnel between the on-premises site and the cloud, so all files and directories are immediately available to the remote compute services. When a file is read for the first time, its data is transferred to the cloud and stored in cache. Subsequent reads are served from the cache, so they no longer incur the WAN transfer.
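The first-read and subsequent-read behavior described above follows the general read-through cache pattern, which can be sketched in a few lines. This is a generic illustration, not Vcinity's implementation: the class `ReadThroughCache`, the `fake_remote_fetch` helper, and the file path are all hypothetical, and the sketch omits eviction and invalidation.

```python
from typing import Callable, Dict, List


class ReadThroughCache:
    """Generic read-through cache: fetch remotely on a miss, then serve locally."""

    def __init__(self, fetch_remote: Callable[[str], bytes]) -> None:
        self._fetch_remote = fetch_remote   # stands in for a read across the WAN
        self._store: Dict[str, bytes] = {}  # stands in for cloud-side cache storage
        self.hits = 0
        self.misses = 0

    def read(self, path: str) -> bytes:
        if path in self._store:
            # Cache hit: no remote transfer is needed.
            self.hits += 1
            return self._store[path]
        # Cache miss: fetch from the remote source once and keep a copy.
        self.misses += 1
        data = self._fetch_remote(path)
        self._store[path] = data
        return data


remote_calls: List[str] = []


def fake_remote_fetch(path: str) -> bytes:
    """Hypothetical stand-in for reading a file from on-premises storage."""
    remote_calls.append(path)
    return f"contents of {path}".encode()


cache = ReadThroughCache(fake_remote_fetch)
first = cache.read("/proj/lib/cell.lef")   # miss: goes to the remote source
second = cache.read("/proj/lib/cell.lef")  # hit: served from the cache
print(cache.misses, cache.hits, len(remote_calls))
```

In this model only the first read of each file pays the WAN cost, so workloads that reread the same libraries and source files many times benefit the most.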

The purpose of this testing was to measure the performance difference between running these jobs, which touch millions (or billions) of files, locally and running them remotely through the Vcinity solution, with and without its caching capabilities.