Deployment process

To deploy a Red Hat OpenShift cluster, use one of the following options:

- Full control: User Provisioned Infrastructure (UPI)-based deployment offers maximum flexibility and enables you to provision, configure, and manage the required infrastructure and resources (bootstrap machine, networking, load balancer, DNS, DHCP, and so on) for the cluster. A Cluster System Admin Host (CSAH) facilitates the cluster deployment and hosts the required services. For more information, see Installing a user-provisioned cluster on bare metal.

- Automated: Installer Provisioned Infrastructure (IPI)-based deployment is an automated approach for OpenShift cluster deployment. The installer uses the baseboard management controller (BMC) on each cluster host for provisioning. A CSAH node or a provisioner node is required to initiate the cluster deployment. For more information, see Deploying installer-provisioned clusters on bare metal.

- Interactive: This approach uses the Assisted Installer from the Red Hat Hybrid Cloud Console to enter configurable parameters and generate a discovery ISO. (Access to the portal requires Red Hat login credentials.) The servers are booted using this ISO to install Red Hat Enterprise Linux CoreOS (RHCOS) and an agent. The Assisted Installer and the agent provide preinstallation validation and installation for the cluster. For more information, see Assisted Installer for OpenShift Container Platform.

- Local agent-based: This approach uses the Assisted Installer from the Red Hat Hybrid Cloud Console for clusters that are confined in an air-gapped network. (Access to the portal requires Red Hat login credentials.) To perform this installation, you must download and configure the agent-based installer. For more information, see Installing a cluster with the Agent-based installer.

OpenShift cluster topologies

Different topologies are available with OpenShift Container Platform 4.12 for different workload requirements, with varying levels of server hardware footprints and high availability (HA).

- Single node cluster: Single Node OpenShift (SNO) bundles control-plane and data-plane capabilities into a single server and provides users with a consistent experience across the sites where OpenShift is deployed.

OpenShift Container Platform on a single node is a specialized installation that requires the creation of a special ignition configuration ISO. The primary use case for SNO is edge computing workloads. The main tradeoff with a single node is the lack of HA.

- Compact cluster: A compact cluster consists of three nodes hosting both the control plane and the data plane, allowing small footprint deployments of OpenShift for testing, development, and production environments.

The three-node cluster can be expanded with additional compute nodes, but the initial expansion requires that at least two compute nodes be added simultaneously, because the ingress controller deploys two router pods on compute nodes for full functionality. You can add further compute nodes later as necessary.

- Standard HA cluster: A standard cluster or the remote compute node cluster consists of five or more nodes. The control plane is hosted on three dedicated nodes, separating it out from the compute nodes. A minimum of two nodes are required for hosting the data plane. This topology offers the highest level of HA.
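The node counts behind these three topologies can be summarized in a few lines of Python. This is an illustrative sketch only: the function name `classify_topology` and its parameters are hypothetical and are not part of any OpenShift or Dell tooling. It assumes that `control_plane` counts the nodes that host the control plane and `compute` counts dedicated compute nodes.

```python
def classify_topology(control_plane: int, compute: int) -> str:
    """Map node counts to the topology names used in this guide (hypothetical helper)."""
    if control_plane == 1 and compute == 0:
        # SNO: control plane and data plane on one server, no HA
        return "single-node"
    if control_plane == 3 and compute == 0:
        # Three nodes host both the control plane and the data plane
        return "compact"
    if control_plane == 3 and compute >= 2:
        # Three dedicated control-plane nodes plus at least two compute nodes
        return "standard-ha"
    # Not one of the three topologies described in this guide
    return "unsupported"


print(classify_topology(3, 2))
```

A count of three control-plane nodes and a single compute node falls through to `"unsupported"` in this sketch, which mirrors the rule that the initial expansion of a compact cluster adds at least two compute nodes.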

Prerequisites

Ensure that:

- The network switches and servers are provisioned.

- Network cabling is complete.

- Internet connectivity has been provided to the cluster.

CSAH node

The deployment begins with initial switch provisioning. Initial switch provisioning enables preparation and installation of the CSAH node by:

- Installing Red Hat Enterprise Linux 8

- Subscribing to the necessary repositories

- Creating an Ansible user account

- Cloning a GitHub Ansible playbook repository from the Dell ESG container repository

- Running an Ansible playbook to initiate the installation process

Dell Technologies has generated Ansible playbooks that fully prepare both CSAH nodes. See User-provisioned infrastructure. For a single-node OpenShift Container Platform deployment, the Ansible playbook sets up a DHCP server and a DNS server. The CSAH node is also used as an admin host to perform operations and management tasks on Single Node OpenShift (SNO).

Note: For enterprise sites, consider deploying appropriately hardened DHCP and DNS servers and using resilient multiple-node HAProxy configuration. The Ansible playbook for this design can deploy multiple CSAH nodes for resilient HAProxy configuration. This design guide provides CSAH Ansible playbooks for reference at the implementation stage.

The deployment process for OpenShift nodes varies depending on the cluster topology, that is, whether it is multinode or single-node.

OpenShift cluster bootstrapping process

The Ansible playbook creates a YAML file called install-config.yaml to control deployment of the bootstrap node.

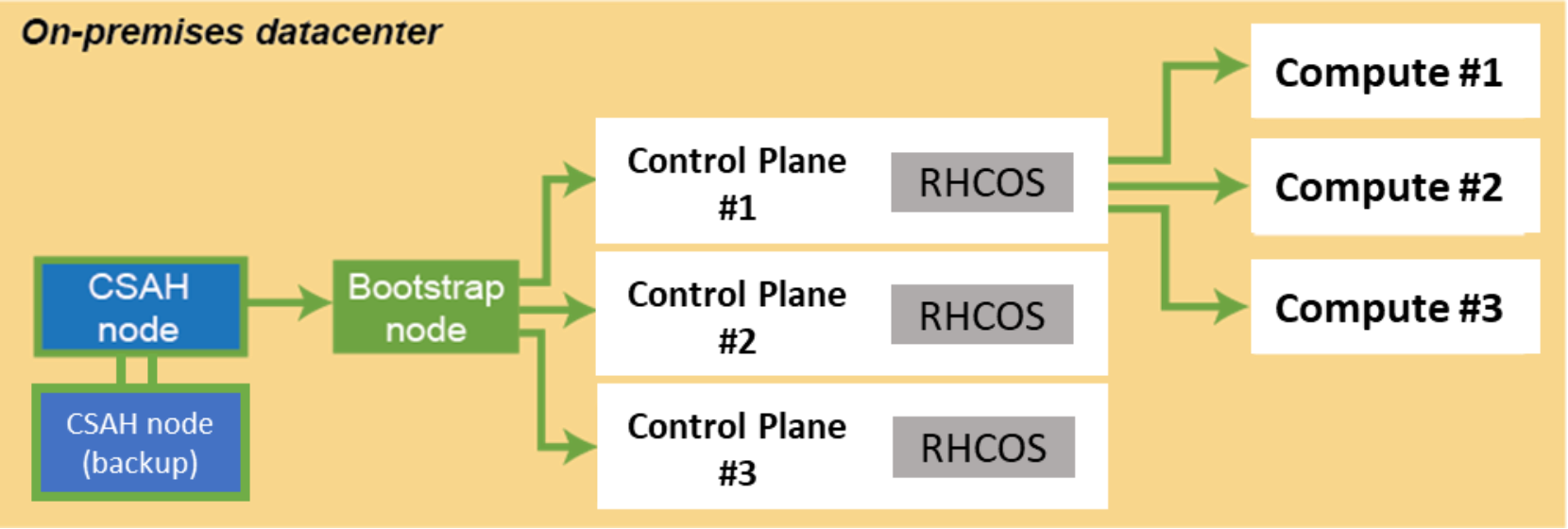

An ignition configuration control file starts the bootstrap node, as shown in the following figure:

Figure 1. Installation workflow: Creating the bootstrap, control-plane, and compute nodes

Note: An installation that is driven by ignition configuration generates security certificates that expire after 24 hours. You must install the cluster before the certificates expire, and the cluster must operate in a viable (nondegraded) state so that the first certificate rotation can be completed.
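To make the 24-hour window concrete, the following Python sketch computes the time by which the installation should be complete. It is a planning aid under stated assumptions, not part of the installer: the helper names are hypothetical, and it assumes the 24-hour period is counted from the moment the ignition configuration files are generated.

```python
from datetime import datetime, timedelta, timezone

# Certificate lifetime stated in the note above
CERT_LIFETIME = timedelta(hours=24)


def install_deadline(ignition_created_at: datetime) -> datetime:
    """Return the time at which the initial certificates expire (hypothetical helper)."""
    return ignition_created_at + CERT_LIFETIME


def time_remaining(ignition_created_at: datetime, now: datetime) -> timedelta:
    """Return the time left before expiry, never less than zero."""
    return max(install_deadline(ignition_created_at) - now, timedelta(0))


# Example timestamp chosen for illustration only
created = datetime(2023, 1, 10, 9, 0, tzinfo=timezone.utc)
print(install_deadline(created).isoformat())
```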

The cluster bootstrapping process consists of 10 phases:

- After startup, the bootstrap VM creates the resources that are required to start the control-plane nodes. Interrupting this process can prevent the OpenShift control plane from coming up.

- The bootstrap machine starts a single-node etcd cluster and a temporary Kubernetes control plane.

- The control-plane nodes pull resource information from the bootstrap VM to bring them up to a viable state.

- The temporary control plane schedules the production control plane to the actual control-plane nodes.

- The Cluster Version Operator (CVO) comes online and installs the etcd Operator. The etcd Operator scales up etcd on all control-plane nodes.

- The temporary control plane is shut down, handing control over to the now viable control-plane nodes.

- OpenShift Container Platform components are pulled into the control of the control-plane nodes.

- The bootstrap VM is shut down.

- The control-plane nodes now drive creation and instantiation of the compute nodes.

- The control plane adds operator-based services to complete the deployment of the OpenShift Container Platform ecosystem.

The cluster is now viable and can be placed into service in readiness for Day-2 operations. You can expand the cluster by adding more compute nodes for your requirements.

User-provisioned infrastructure installation

Dell Technologies has generated Ansible playbooks that fully prepare both CSAH nodes. Before the installation of the OpenShift Container Platform 4.12 cluster begins, the Ansible playbook sets up the PXE server, DHCP server, DNS server, HAProxy, and HTTP server. If a second CSAH node is deployed, the playbook also sets up DNS, HAProxy, HTTP, and KeepAlived services on that node. The playbook creates ignition files to drive installation of the bootstrap, control-plane, and compute nodes. It also starts the bootstrap VM to initialize control-plane components. The playbook presents a list of node types that must be deployed in top-down order.

Installer-provisioned infrastructure installation

The installer-provisioned infrastructure-based installation on bare metal nodes provisions and configures the infrastructure on which an OpenShift Container Platform cluster runs. With this approach, OpenShift Container Platform manages all aspects of the cluster.

The CSAH node is used as the provisioner node for this approach and hosts infrastructure services such as DNS and an optional DHCP server. The bootstrap VM is hosted on the CSAH node for cluster setup. Dell-created Ansible playbooks set up CSAH nodes and automate the predeployment tasks, including configuring the CSAH node, downloading the OpenShift installer, installing the OpenShift client, and creating the manifest files required by the installer.

Cluster deployment using the IPI approach is a two-phase process. In Phase 1, the bootstrap VM is created on the CSAH node, and the bootstrap process is started. Two virtual IP addresses, API and Ingress, are used to access the cluster. In Phase 2, cluster nodes are provisioned using the virtual media-based Integrated Dell Remote Access Controller (iDRAC) option. After the nodes are booted, the temporary control plane is moved to the appropriate cluster nodes along with the virtual IP addresses. The bootstrap VM is destroyed, and the remaining cluster provisioning tasks are carried out by the controllers.

Assisted Installer installation

The Assisted Installer offers an interactive cluster installation approach. You create a cluster configuration using the web-based UI or the RESTful API. A CSAH node is used to access the cluster and to host the DNS and DHCP servers.

The Assisted Installer interface prompts you for required values and provides appropriate default values for the remaining parameters unless you change them in the user interface or the API. After you have entered all the required details, a bootable discovery ISO is generated that is used to boot the cluster nodes. Along with RHCOS, the bootable ISO also contains an agent that handles cluster provisioning. The bootstrapping process completes on one of the cluster nodes. A bootstrap node or VM is not required. After the nodes are discovered on the Assisted Installer console, you can select node role (control plane or compute), installation disk, and networking options. The Assisted Installer performs prechecks before initiating the installation.

You can monitor the cluster installation status or download installation logs and kubeadmin user credentials from the Assisted Installer console.

Agent-based installer installation

Agent-based installation is similar to the Assisted Installer approach, except that you must first download and install the agent-based installer on the CSAH node. This deployment approach is recommended for clusters with an air-gapped or disconnected network.

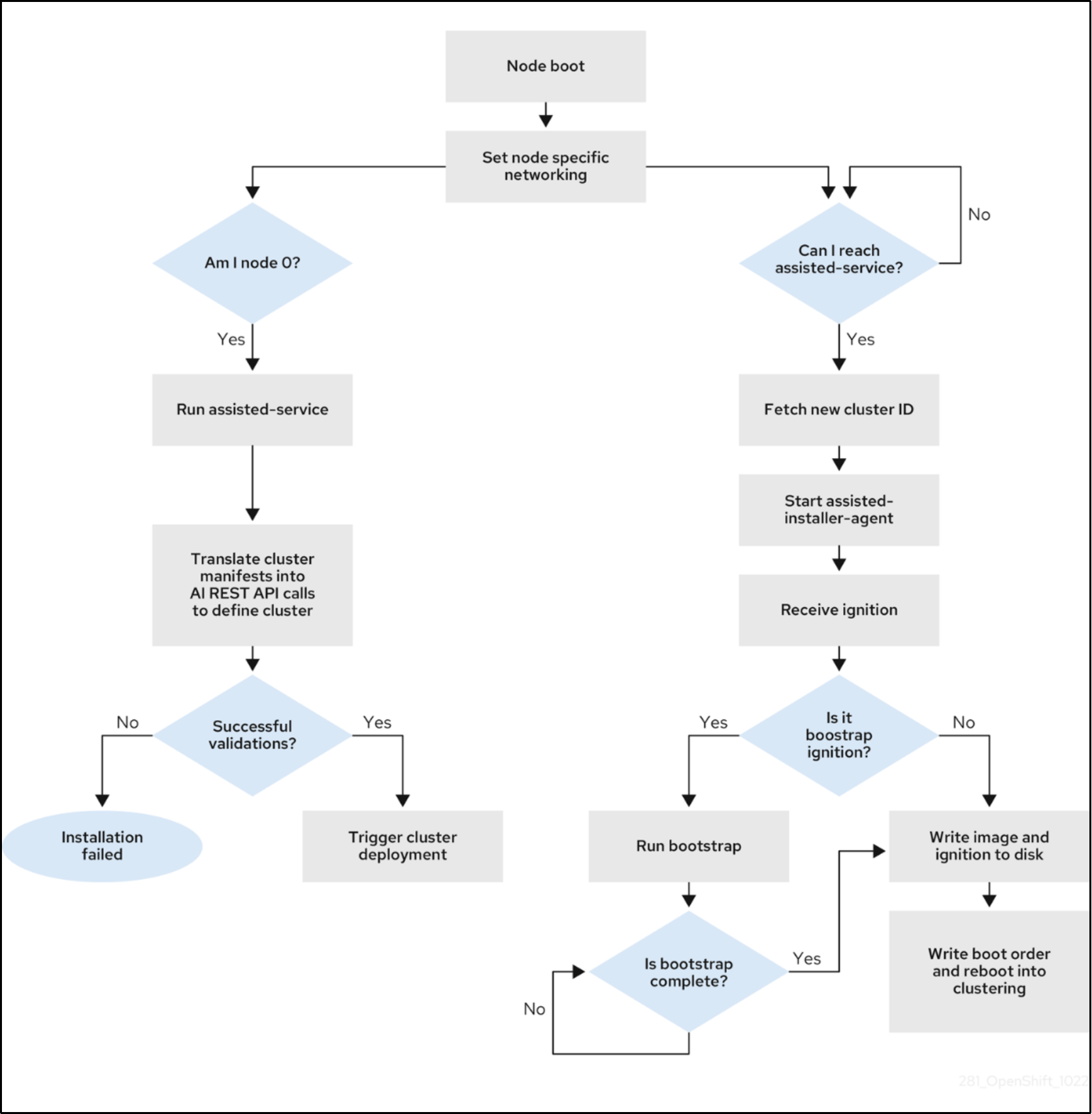

The bootable ISO used by this approach contains the assisted discovery agent and the assisted service. The assisted service runs only one of the control plane nodes, and the node eventually becomes the bootstrap host. The assisted service ensures that all the cluster hosts meet the requirements and then triggers an OpenShift Container Platform cluster deployment.

The following figure shows the node installation workflow:

Figure 2.

Cluster node installation workflowDisconnected installation mirroring

A mirror registry enables disconnected installations by hosting the container images that the cluster requires. The mirror registry must have access both to the Internet, to obtain the necessary container images, and to the private network. You can use:

- Red Hat Quay: Red Hat Quay is an enterprise-quality container registry. Use Red Hat Quay to build and store container images, then make them available to deploy across your enterprise. The Red Hat Quay Operator provides a method to deploy and manage Red Hat Quay on an OpenShift cluster. For more information, see Quay for proof-of-concept purposes and Quay Operator.

- Mirror registry for Red Hat OpenShift: a small-scale container registry that is included with OpenShift Container Platform subscriptions. Use this registry if a large-scale registry is unavailable. For more information, see Creating mirror registry for Red Hat OpenShift.

To use the mirror images for a disconnected installation, update the install-config.yaml file with the imageContentSources section that contains the information about the mirrors, as shown in the following example:

    imageContentSources:
    - mirrors:
      - ipi-prov.dcws.lab:8443/ocp4/openshift412
      source: quay.io/openshift-release-dev/ocp-release
    - mirrors:
      - ipi-prov.dcws.lab:8443/ocp4/openshift412
      source: quay.io/openshift-release-dev/ocp-v4.0-art-dev
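When the same mirror repository serves several source repositories, the section can be generated rather than typed by hand. The following Python sketch is one possible way to do this and is not taken from the Dell playbooks; the function name `image_content_sources` is hypothetical, and the mirror and source values are simply the ones from the example above.

```python
from typing import List


def image_content_sources(mirror_repo: str, sources: List[str]) -> str:
    """Render an imageContentSources section as YAML text (hypothetical helper)."""
    lines = ["imageContentSources:"]
    for source in sources:
        # One list entry per source repository, each pointing at the same mirror
        lines.append("- mirrors:")
        lines.append(f"  - {mirror_repo}")
        lines.append(f"  source: {source}")
    return "\n".join(lines)


snippet = image_content_sources(
    "ipi-prov.dcws.lab:8443/ocp4/openshift412",
    [
        "quay.io/openshift-release-dev/ocp-release",
        "quay.io/openshift-release-dev/ocp-v4.0-art-dev",
    ],
)
print(snippet)
```

The printed text is intended to be pasted into, or appended to, install-config.yaml. Review it against your own registry host name and repository path before use.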

Zero-Touch Provisioning

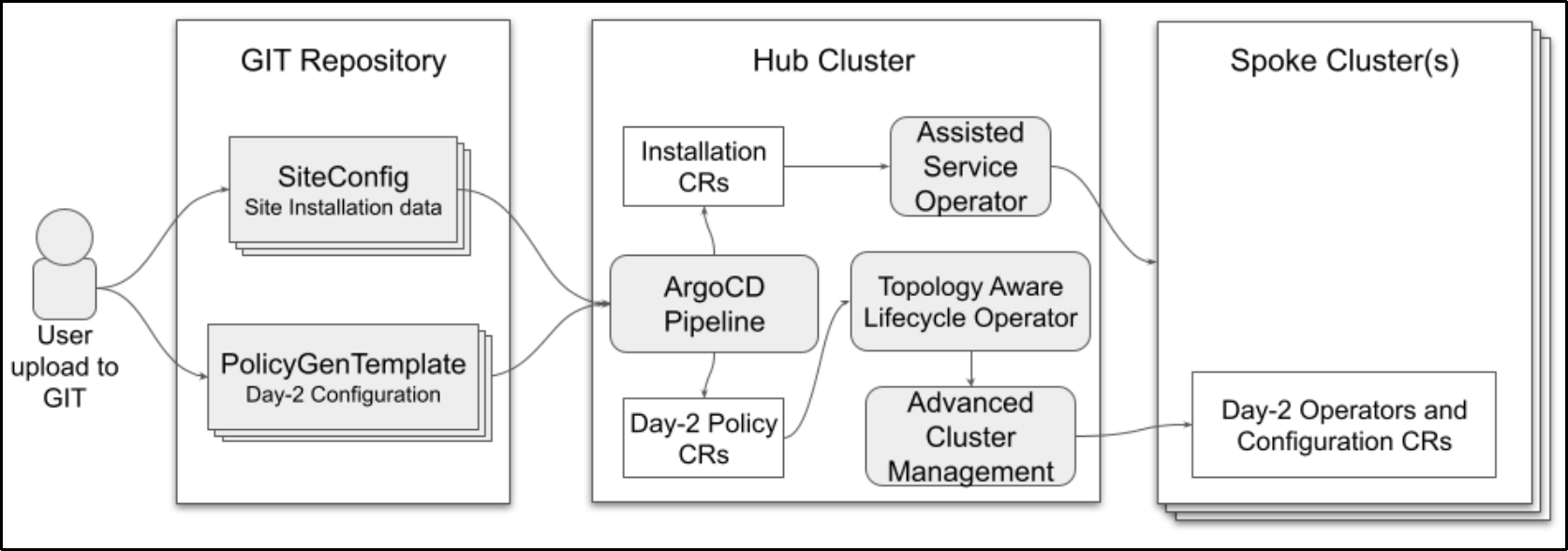

Zero-Touch Provisioning (ZTP) provisions new edge sites using declarative configurations. ZTP can deploy an OpenShift cluster quickly and reliably on any environment—edge, remote office/branch office (ROBO), disconnected, or air-gapped. ZTP can deploy and deliver OpenShift 4.12 clusters in a hub-spoke architecture, where a unique hub cluster can manage multiple spoke clusters.

The following diagram shows how ZTP works in a far edge:Figure 3. OpenShift spoke cluster deployment using ZTP

ZTP uses the GitOps deployment practices for infrastructure deployment. Declarative specifications are stored in Git repositories in the form of predefined patterns such as YAML. Red Hat Advanced Cluster Management (ACM) for Kubernetes uses the declarative output for multisite deployment. GitOps addresses reliability issues by providing traceability, RBAC, and a single source of truth regarding the state of each site.

SiteConfig uses ArgoCD as the engine for the GitOps method of site deployment. After you complete a site plan that contains all the required parameters for deployment, a policy generator creates the manifests and applies them to the hub cluster.

The following figure shows the ZTP flow that is used in a spoke cluster deployment:

Figure 4. ZTP deployment flow

OpenShift Virtualization

OpenShift Virtualization is an add-on to OpenShift Container Platform that enables you to run and manage VM workloads alongside container workloads. An enhanced web console provides a graphical portal to manage these virtualized resources alongside the OpenShift Container Platform cluster containers and infrastructure.

Components

OpenShift Virtualization consists of:

- Compute: virt-operator

- Storage: cdi-operator

- Network: cluster-network-addons-operator

- Scaling: ssp-operator

- Templating: tekton-tasks-operator

For more information about these components, see OpenShift Virtualization architecture.

Requirements

Take account of the following requirements when planning the OpenShift Virtualization deployment:

- Virtualization must be enabled in server processor settings.

- Control-plane nodes or the compute nodes must have RHCOS installed. Red Hat Enterprise Linux compute nodes are not supported.

- The OpenShift cluster must have at least two compute or data plane nodes available at the time of cluster installation for HA. The OpenShift Virtualization live migration feature requires the cluster-level HA flag to be set to true. This flag is set at the time of cluster installation and cannot be changed afterwards. Although OpenShift Virtualization can be deployed on a single-node cluster, HA is not available in this case.

- The live migration feature requires shared storage among the compute or data plane nodes. For live migration to work, the underlying storage that is used for OpenShift Virtualization must support and use ReadWriteMany (RWX) access mode.

- If Single Root I/O Virtualization (SR-IOV) is to be used, ensure that the network interface controllers (NICs) are supported.

- As an add-on feature, OpenShift Virtualization imposes additional overhead that must be accounted for during the planning phase. Oversubscribing the physical resources in a cluster can affect performance. For more information, see physical resource overhead requirements.
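Several of these requirements can be checked at planning time. The following Python sketch collects the reasons why a planned cluster would not support live migration, based only on the requirements listed above. The `ClusterPlan` class, its fields, and the `live_migration_blockers` function are hypothetical names introduced for illustration; they do not correspond to any OpenShift API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ClusterPlan:
    """Planning inputs for an OpenShift Virtualization deployment (hypothetical)."""
    compute_nodes: int                # compute nodes available at installation time
    storage_access_modes: List[str]   # access modes offered by the planned storage
    cpu_virtualization_enabled: bool  # virtualization enabled in processor settings


def live_migration_blockers(plan: ClusterPlan) -> List[str]:
    """Return the requirements from this section that the plan does not meet."""
    blockers = []
    if not plan.cpu_virtualization_enabled:
        blockers.append("virtualization is not enabled in the server processor settings")
    if plan.compute_nodes < 2:
        blockers.append("fewer than two compute nodes at installation time, so HA is unavailable")
    if "ReadWriteMany" not in plan.storage_access_modes:
        blockers.append("storage does not offer ReadWriteMany (RWX) access mode")
    return blockers


plan = ClusterPlan(compute_nodes=1,
                   storage_access_modes=["ReadWriteOnce"],
                   cpu_virtualization_enabled=True)
for reason in live_migration_blockers(plan):
    print(reason)
```

An empty list means that none of the checked requirements is violated. It does not cover SR-IOV NIC support or resource overhead, which still need separate review.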