Deployment process

Dell Technologies has simplified the process of bootstrapping the OpenShift Container Platform 4.10 multinode cluster.

Prerequisites

Ensure that:

- The cluster is provisioned with network switches and servers.

- Network cabling is complete.

- Internet connectivity has been provided to the cluster.

CSAH node

The deployment begins with initial switch provisioning, which enables preparation and installation of the CSAH node. Preparing the CSAH node consists of:

- Installing Red Hat Enterprise Linux 8

- Subscribing to the necessary repositories

- Creating an Ansible user account

- Cloning a GitHub Ansible playbook repository from the Dell ESG container repository

- Running an Ansible playbook to initiate the installation process

Dell Technologies provides Ansible playbooks that fully prepare the CSAH nodes. Before the installation of the OpenShift Container Platform 4.10 cluster begins, the Ansible playbook sets up the PXE server, DHCP server, DNS server, HAProxy, and HTTP server. If a second CSAH node is deployed, the playbook also sets up DNS, HAProxy, HTTP, and KeepAlived services on that node. The playbook creates ignition files to drive installation of the bootstrap, control-plane, and compute nodes, and also starts the bootstrap VM to initialize control-plane components. The playbook presents a list of node types that must be deployed in the order listed, from top to bottom.

For a single-node OpenShift Container Platform deployment, the Ansible playbook sets up a DHCP server and a DNS server. The CSAH node is also used as an admin host to perform operations and management tasks on Single Node OpenShift (SNO).

Note: For enterprise sites, consider deploying appropriately hardened DHCP and DNS servers and using resilient multiple-node HAProxy configuration. The Ansible playbook for this design can deploy multiple CSAH nodes for resilient HAProxy configuration. This guide provides CSAH Ansible playbooks for reference at the implementation stage.

The deployment process for OpenShift nodes varies according to whether the cluster topology is multinode or single-node.

Multinode clusters

The Ansible playbook creates a YAML file called install-config.yaml to control deployment of the bootstrap node. For more information, see the Red Hat OpenShift Container Platform 4.10 on Dell Infrastructure Implementation Guide.
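For orientation, the following Python sketch assembles the kind of content that an install-config.yaml for a user-provisioned bare-metal cluster typically carries and prints it as JSON, which is also valid YAML. The field names follow the documented install-config.yaml schema. Every value (cluster name, domain, network ranges, network type, replica counts, pull secret, SSH key) is a placeholder assumption, and the file that the Dell playbook actually generates may differ.

```python
import json


def build_install_config(cluster_name, base_domain, pull_secret, ssh_key,
                         control_plane_replicas=3):
    """Return a dict shaped like an install-config.yaml (placeholder values)."""
    return {
        "apiVersion": "v1",
        "baseDomain": base_domain,
        "metadata": {"name": cluster_name},
        # Assumption: on user-provisioned infrastructure the compute nodes
        # are booted separately, so the installer replica count is 0.
        "compute": [{"name": "worker", "replicas": 0}],
        "controlPlane": {"name": "master", "replicas": control_plane_replicas},
        "networking": {
            "clusterNetwork": [{"cidr": "10.128.0.0/14", "hostPrefix": 23}],
            "serviceNetwork": ["172.30.0.0/16"],
            "networkType": "OpenShiftSDN",  # assumed network type
        },
        "platform": {"none": {}},
        "pullSecret": pull_secret,
        "sshKey": ssh_key,
    }


config = build_install_config(
    cluster_name="ocp",                        # hypothetical name
    base_domain="example.com",                 # hypothetical domain
    pull_secret='{"auths": {}}',               # placeholder, not a real secret
    ssh_key="ssh-ed25519 PLACEHOLDER user@csah",  # placeholder key
)
print(json.dumps(config, indent=2))
```

Building the structure in code rather than by hand makes it easier to keep the control-plane replica count and network ranges consistent across rebuilds.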

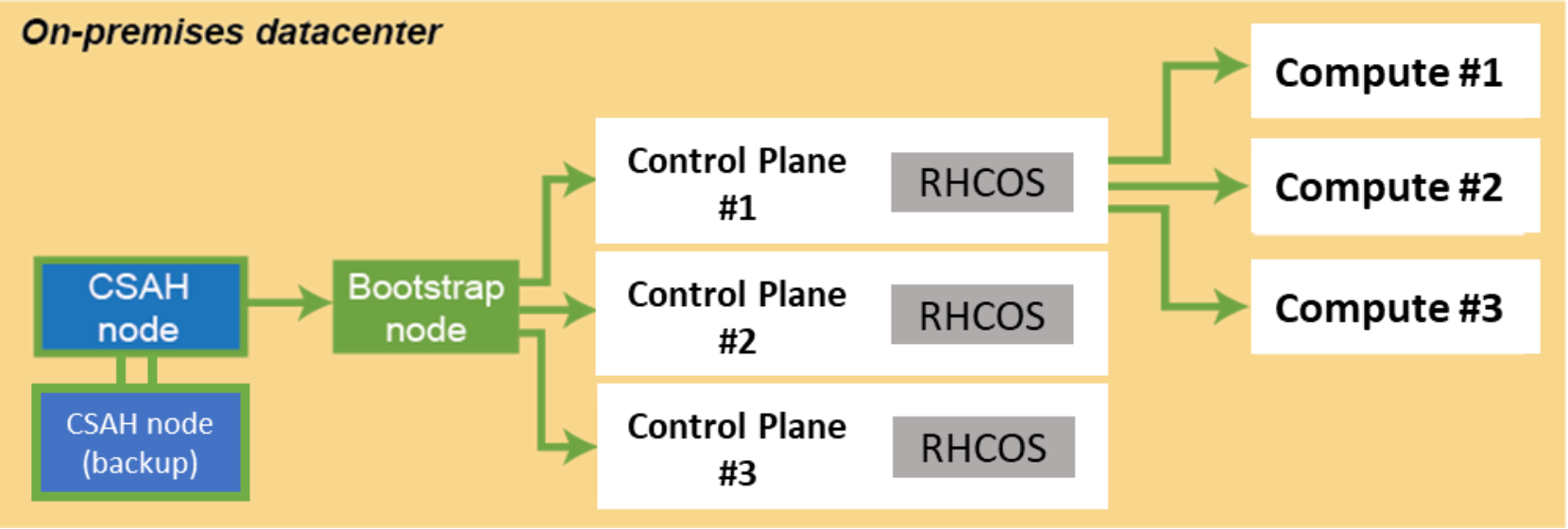

An ignition configuration control file starts the bootstrap node, as shown in the following figure:

Figure 3. Installation workflow: Creating the bootstrap, control-plane, and compute nodes

Note: An installation that is driven by ignition configuration generates security certificates that expire after 24 hours. You must install the cluster before the certificates expire, and the cluster must operate in a viable (nondegraded) state so that the first certificate rotation can be completed.
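The 24-hour limit can be tracked with simple date arithmetic. The following Python sketch computes the installation deadline and the time remaining. The timestamps are hypothetical, and the sketch assumes that you record the time at which the ignition files were generated.

```python
from datetime import datetime, timedelta, timezone

CERT_LIFETIME = timedelta(hours=24)  # lifetime stated in the note above


def install_deadline(ignition_created_at):
    """Latest time by which the cluster installation must be complete."""
    return ignition_created_at + CERT_LIFETIME


def time_remaining(ignition_created_at, now):
    """Time left before the initial certificates expire (never negative)."""
    remaining = install_deadline(ignition_created_at) - now
    return max(remaining, timedelta(0))


# Hypothetical timestamps for illustration only.
created = datetime(2022, 6, 1, 9, 0, tzinfo=timezone.utc)
now = datetime(2022, 6, 1, 21, 30, tzinfo=timezone.utc)

print(install_deadline(created).isoformat())  # 2022-06-02T09:00:00+00:00
print(time_remaining(created, now))           # 11:30:00
```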

The cluster bootstrapping process consists of the following phases:

- After startup, the bootstrap VM creates the resources that are required to start the control-plane nodes. Interrupting this process can prevent the OpenShift control plane from coming up.

- The control-plane nodes pull resource information from the bootstrap VM to reach a viable state. This resource information is used to form the etcd control plane cluster.

- The bootstrap VM instantiates a temporary Kubernetes control plane that is backed by the new etcd cluster.

- The temporary control plane loads the application workload control plane to the control-plane nodes.

- The temporary control plane is shut down, handing control over to the now viable control-plane nodes.

- OpenShift Container Platform components are brought under the control of the control-plane nodes.

- The bootstrap VM is shut down.

- The control-plane nodes now drive creation and instantiation of the compute nodes.

- The control plane adds operator-based services to complete the deployment of the OpenShift Container Platform ecosystem.
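Because each phase depends on the one before it, the sequence above can be modeled as an ordered enumeration. The following Python sketch is illustrative only: the phase names are hypothetical labels for the bullets above, not identifiers used by the OpenShift installer.

```python
from enum import IntEnum


class BootstrapPhase(IntEnum):
    """Hypothetical labels for the bootstrapping phases, in order."""
    BOOTSTRAP_RESOURCES_CREATED = 1
    CONTROL_PLANE_NODES_FETCH_RESOURCES = 2
    TEMPORARY_CONTROL_PLANE_STARTED = 3
    WORKLOAD_CONTROL_PLANE_LOADED = 4
    TEMPORARY_CONTROL_PLANE_SHUT_DOWN = 5
    OPENSHIFT_COMPONENTS_HANDED_OVER = 6
    BOOTSTRAP_VM_SHUT_DOWN = 7
    COMPUTE_NODES_CREATED = 8
    OPERATOR_SERVICES_ADDED = 9


def next_phase(current):
    """Return the phase that follows `current`, or None after the last one."""
    if current is BootstrapPhase.OPERATOR_SERVICES_ADDED:
        return None
    return BootstrapPhase(current + 1)


def bootstrap_vm_needed(current):
    """The bootstrap VM is required until its shutdown phase is reached."""
    return current < BootstrapPhase.BOOTSTRAP_VM_SHUT_DOWN


def is_valid_sequence(observed):
    """True if the observed phases start at the first phase with no gaps."""
    observed = list(observed)
    return observed == list(BootstrapPhase)[: len(observed)]


print(next_phase(BootstrapPhase.BOOTSTRAP_VM_SHUT_DOWN).name)
print(bootstrap_vm_needed(BootstrapPhase.TEMPORARY_CONTROL_PLANE_STARTED))
```

A model like this makes it straightforward to flag an out-of-order or skipped phase when reviewing installation logs.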

The cluster is now viable and can be placed into service in readiness for Day-2 operations. You can expand the cluster by adding more compute nodes to suit your requirements.

Virtualized compute nodes on VMware

Changes in business needs can lead to a fluctuating demand for resources, resulting in either an underpowered or an overprovisioned OpenShift cluster. Virtualized compute nodes can efficiently address the short-term or temporary need for additional capacity in an existing cluster. VMs require a short time for initial setup and can be easily removed from an OpenShift cluster when the demand levels are back to normal. VMs built on VMware vCenter® leverage VMware HA capabilities to offer best-in-class fault tolerance both at network and host level. VMware virtualized compute nodes can boost efficiency and flexibility with reduced downtime.

To validate virtualized compute nodes, the Dell OpenShift engineering team used a vSphere deployment with three hosts in a cluster managed by VMware vCenter. The following table shows the required versions of the vSphere virtual environment products for virtualized compute nodes:

Table 5. Required VMware versions

| Virtual environment product | Required version |
| --- | --- |
| VM hardware version | 16 or later |
| vSphere ESXi hosts | 7 or later |
| vCenter host | 7 or later |
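A pre-deployment check against these minimum versions could look like the following Python sketch. The dictionary keys and the shape of the `environment` input are assumptions made for illustration. The sketch does not query vCenter, so the version strings must be collected separately.

```python
# Minimum major versions taken from the table above.
MINIMUM_VERSIONS = {
    "vm_hardware": 16,
    "esxi": 7,
    "vcenter": 7,
}


def major_version(version):
    """Extract the leading integer from a string such as '7.0.3'."""
    return int(str(version).split(".")[0])


def unmet_requirements(environment):
    """Return the products that are missing or below the required minimum."""
    problems = []
    for product, minimum in MINIMUM_VERSIONS.items():
        found = environment.get(product)
        if found is None or major_version(found) < minimum:
            problems.append(product)
    return problems


# Hypothetical environment: VM hardware version is too old.
env = {"vm_hardware": "15", "esxi": "7.0.3", "vcenter": "7.0.3"}
print(unmet_requirements(env))  # ['vm_hardware']
```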

Single-node OpenShift

Installing OpenShift Container Platform on a single node alleviates some of the requirements for high availability (HA) and large-scale clusters. The administration host requirement is addressed by deploying a CSAH node.

OpenShift Container Platform on a single node is a specialized installation that requires the creation of a special ignition configuration ISO. This discovery ISO can be generated using the OpenShift Assisted Installer (AI). The high-level steps for installing Single Node OpenShift (SNO) are:

- In the Assisted Installer, click Create Cluster.

- When prompted by the wizard, select Install single node OpenShift (SNO).

- Enter the required details: cluster name, base domain, Secure Shell (SSH) public key, and pull secret.

- Generate and download the discovery ISO.

- Download a minimal image file.

- Boot the server using the virtual media.

- Select a subnet from the list of available subnets.

- Review the settings and create the cluster.
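Before entering the required details in the wizard, it can help to sanity-check them locally. The following Python sketch is a set of simple heuristics under stated assumptions: the class name and checks are hypothetical, they are not the validation that the Assisted Installer performs, and they rely only on the facts that a pull secret is a JSON document with an `auths` entry and that an SSH public key begins with its key type.

```python
import json
from dataclasses import dataclass


@dataclass
class SnoClusterDetails:
    """Hypothetical container for the details entered in the wizard."""
    cluster_name: str
    base_domain: str
    ssh_public_key: str
    pull_secret: str

    def problems(self):
        """Return a list of problems; an empty list means the input looks usable."""
        issues = []
        if not self.cluster_name:
            issues.append("cluster name is empty")
        if "." not in self.base_domain:
            issues.append("base domain should look like 'example.com'")
        if not self.ssh_public_key.startswith(("ssh-", "ecdsa-")):
            issues.append("SSH key does not look like a public key")
        try:
            parsed = json.loads(self.pull_secret)
            if not isinstance(parsed, dict) or "auths" not in parsed:
                issues.append("pull secret has no 'auths' entry")
        except json.JSONDecodeError:
            issues.append("pull secret is not valid JSON")
        return issues


details = SnoClusterDetails(
    cluster_name="sno1",                      # hypothetical
    base_domain="example.com",                # hypothetical
    ssh_public_key="ssh-ed25519 PLACEHOLDER user@csah",
    pull_secret='{"auths": {}}',              # placeholder, not a real secret
)
print(details.problems())  # []
```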

The node reboots several times before the installation is complete. You can monitor the installation status from the AI console.

Zero-Touch Provisioning

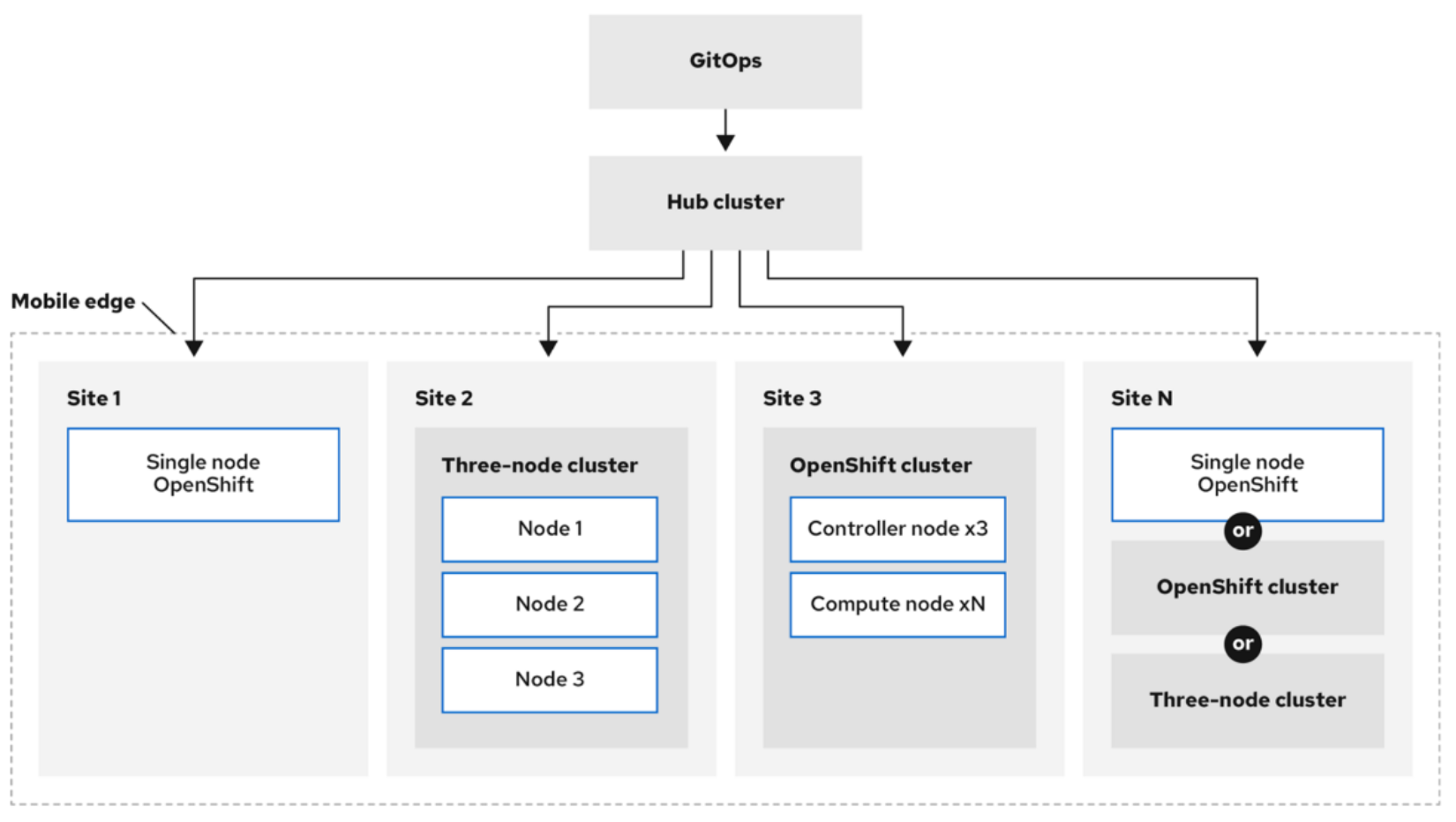

Zero-Touch Provisioning (ZTP) provisions new edge sites using declarative configurations. Partners or customers deploy a relocatable OpenShift cluster on their preferred hardware to bring fully operational OpenShift clusters up quickly and reliably for their environment (edge, remote office/branch office (ROBO), disconnected, or air-gapped). ZTP can deploy and deliver OpenShift 4 clusters in a hub-spoke architecture, where a unique hub cluster can manage multiple spoke clusters.

The following diagram shows how ZTP works within a far edge:Figure 4. OpenShift spoke cluster deployment using ZTP

ZTP uses GitOps deployment practices for infrastructure deployment. Declarative specifications are stored in Git repositories in predefined formats such as YAML. The Open Cluster Manager (OCM) uses the declarative output for multisite deployment. GitOps addresses reliability issues by providing traceability, RBAC, and a single source specifying the state (configuration) of each site.
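The benefit of a single source of state can be illustrated by comparing a declared site configuration with what is observed at the site. The following Python sketch is a generic illustration of that idea, not how OCM or any GitOps engine is implemented. The function name and the configuration keys are hypothetical.

```python
def config_drift(desired, observed):
    """Return {key: (desired_value, observed_value)} for every mismatch."""
    drift = {}
    for key in sorted(set(desired) | set(observed)):
        want, have = desired.get(key), observed.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical declared state, as it might be stored in Git.
desired_site = {
    "clusterName": "edge-site-1",
    "baseDomain": "example.com",
    "nodes": 1,
}

# Hypothetical state observed at the site.
observed_site = {
    "clusterName": "edge-site-1",
    "baseDomain": "lab.example.com",
    "nodes": 1,
}

print(config_drift(desired_site, observed_site))
```

An empty result means that the site matches its declaration. Any other result identifies exactly which settings must be reconciled.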

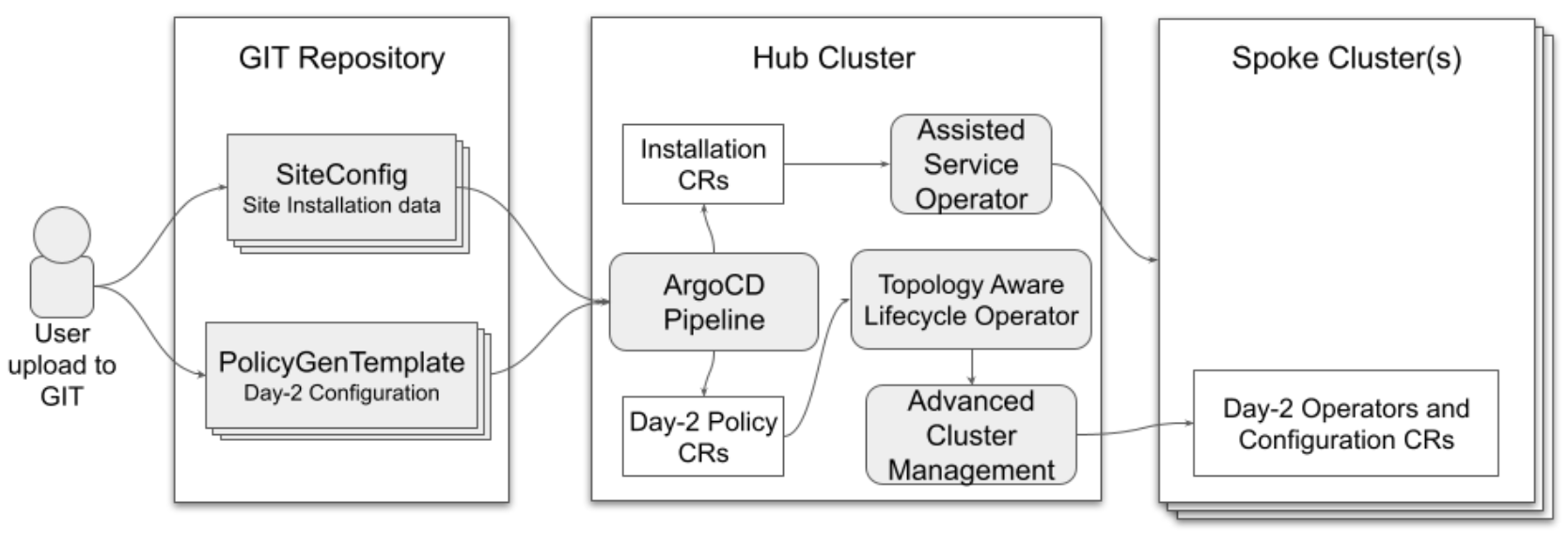

SiteConfig uses ArgoCD as the engine for the GitOps method of site deployment. After completing a site plan that contains all the required parameters for deployment, a policy generator creates the manifests and applies them to the hub cluster.

The following figure shows the ZTP flow that is used in a spoke cluster deployment:Figure 5. ZTP deployment flow