Storage design for OpenShift Container Platform

Introduction

Note: This chapter discusses storage concepts related to the Dell EMC Ready Stack for Red Hat OpenShift 4.3, including the Container Storage Interface (CSI) and the CSI drivers for Dell EMC storage products. For this release, we validated CSI with Dell EMC PowerMax and Isilon H500 storage, and the Dell EMC Ready Stack for Red Hat OpenShift 4.3 Deployment Guide reflects that choice. The CSI drivers that support OpenShift Container Platform 4.3 are available in OperatorHub.

Stateful applications create a demand for persistent storage. All storage within OpenShift Container Platform 4.3 is managed separately from compute (worker node) resources and from the networking and connectivity infrastructure. The CSI API is designed to abstract storage use and enable storage portability.

The following Kubernetes storage concepts apply to this solution:

- Persistent volume (PV): The physical LUN or file share on the storage array, represented as a cluster object. PVCs are created against PVs, and the life cycle of a PV is independent of any pod that uses it.

- Persistent volume claim (PVC): A request for storage that a user creates and that Kubernetes binds to a specific PV.

- Storage class: A logical construct that defines how storage is allocated for a specific group of users.

- CSI driver: The software that orchestrates persistent volume provisioning and deprovisioning on the storage array.

These resources are logical constructs that are used within the Kubernetes container infrastructure to maintain storage for all the components of the container ecosystem that depend on storage. Developers and operators can deploy applications and provision or deprovision persistent storage without having any specific technical knowledge of the underlying storage technology.

The OpenShift Container Platform administrator is responsible for provisioning storage classes and making them available to the cluster’s tenants.
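As a rough illustration of how these objects relate, the following Python sketch builds a storage class and a claim that references it, then serializes both as JSON (the Kubernetes API accepts JSON as well as YAML). The field names are standard Kubernetes manifest fields, but the class name, provisioner string, project name, and size are hypothetical placeholders and are not values from this guide.

```python
import json

# Hypothetical values: "example-block-gold", "csi.example.com", and
# "example-project" are placeholders, not names taken from this guide.
storage_class = {
    "apiVersion": "storage.k8s.io/v1",
    "kind": "StorageClass",
    "metadata": {"name": "example-block-gold"},
    "provisioner": "csi.example.com",   # name registered by the CSI driver
    "reclaimPolicy": "Delete",          # what happens to the PV after release
    "volumeBindingMode": "Immediate",
}

claim = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "app-data", "namespace": "example-project"},
    "spec": {
        # The tenant only names the class; array details stay hidden.
        "storageClassName": storage_class["metadata"]["name"],
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "8Gi"}},
    },
}


def to_manifest(obj: dict) -> str:
    """Serialize a manifest dictionary as indented JSON."""
    return json.dumps(obj, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(to_manifest(storage_class))
    print(to_manifest(claim))
```

The point of the sketch is the division of responsibility: the administrator owns the storage class object, and a tenant refers to it only by name in the claim.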

Storage is consumed through PVCs in one of two ways: statically or dynamically. With static provisioning, a PV is assigned to a PVC, and the PVC is then attached to a specific pod or pods.

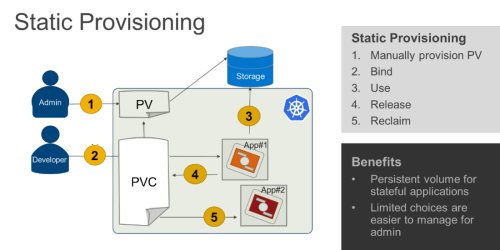

Static storage provisioning

To provision static persistent storage, an administrator preprovisions PVs to be used by Kubernetes tenants. When a user makes a persistent storage request by creating a PVC, Kubernetes finds the closest matching available PV. Static provisioning is not the most efficient method for using storage, but it might be preferred when it is necessary to restrict users from PV provisioning or if the CSI driver does not support dynamic provisioning.
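The "closest matching" behavior can be pictured with a small model. The sketch below is a simplification and not the Kubernetes binder itself: it assumes that a claim is satisfied by the smallest unbound PV that is at least as large as the request and that offers every requested access mode. The `Volume` class and `closest_match` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional


@dataclass
class Volume:
    """A preprovisioned PV, reduced to the fields this sketch needs."""
    name: str
    size_gib: int
    access_modes: FrozenSet[str]
    bound: bool = False


def closest_match(volumes: List[Volume], request_gib: int,
                  requested_modes: Iterable[str]) -> Optional[Volume]:
    """Bind and return the smallest suitable unbound volume, or None."""
    wanted = set(requested_modes)
    candidates = [
        v for v in volumes
        if not v.bound and v.size_gib >= request_gib and wanted <= v.access_modes
    ]
    if not candidates:
        return None  # in this model, the claim would stay pending
    best = min(candidates, key=lambda v: v.size_gib)
    best.bound = True  # a bound volume is no longer available to other claims
    return best


if __name__ == "__main__":
    pool = [
        Volume("pv-small", 5, frozenset({"ReadWriteOnce"})),
        Volume("pv-medium", 10, frozenset({"ReadWriteOnce"})),
        Volume("pv-large", 50, frozenset({"ReadWriteOnce", "ReadWriteMany"})),
    ]
    match = closest_match(pool, 8, ["ReadWriteOnce"])
    print(match.name if match else "pending")
```

The model also shows why static provisioning can waste capacity: an 8 GiB request that lands on a 10 GiB volume leaves the remainder unusable by other claims.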

The following figure illustrates the static storage provisioning workflow in this solution:

Figure 5. Static storage provisioning workflow

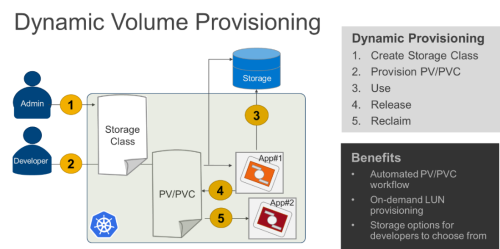

Dynamic persistent storage provisioning

Dynamic persistent storage provisioning, the most flexible provisioning method, enables Kubernetes users to obtain PVs on demand. With dynamic provisioning, LUN export provisioning is fully automated.

The following figure shows the dynamic storage provisioning workflow in this solution:

Figure 6. Dynamic storage provisioning workflow and benefits

After a PV is bound to a PVC, that PV cannot be bound to another PVC. Because a PVC belongs to a project, this restriction ties the PV to a single namespace, that of the binding project. A PV that is created dynamically is provisioned from a storage class and is automatically consumed as a cluster resource.
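The following toy model sketches the two ideas in this section: a volume is created on demand to fit a claim, and a bound volume cannot be bound again. It is not a CSI driver and makes no calls to a storage array; `ToyProvisioner`, `PV`, and `bind` are hypothetical names, and the generated volume names are arbitrary.

```python
import itertools
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class PV:
    name: str
    size_gib: int
    storage_class: str
    claim_ref: Optional[str] = None  # "namespace/claim" once bound


def bind(pv: PV, namespace: str, claim_name: str) -> None:
    """Bind a volume to exactly one claim; refuse a second binding."""
    if pv.claim_ref is not None:
        raise ValueError(f"{pv.name} is already bound to {pv.claim_ref}")
    pv.claim_ref = f"{namespace}/{claim_name}"


class ToyProvisioner:
    """Stand-in for the provisioning role of a CSI driver (illustration only)."""

    def __init__(self, storage_class: str) -> None:
        self.storage_class = storage_class
        self._ids = itertools.count(1)
        self.volumes: Dict[str, PV] = {}

    def provision(self, namespace: str, claim_name: str, size_gib: int) -> PV:
        # A real driver would create and export a LUN or share at this point.
        pv = PV(f"pv-{next(self._ids):04d}", size_gib, self.storage_class)
        bind(pv, namespace, claim_name)
        self.volumes[pv.name] = pv
        return pv


if __name__ == "__main__":
    provisioner = ToyProvisioner("example-block-gold")
    volume = provisioner.provision("example-project", "app-data", 8)
    print(volume)
```

Because the volume is created to match the request, it is sized exactly and bound immediately, which is the main contrast with the static workflow.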

PV types

OpenShift Container Platform natively supports the following PV types:

- AWS Elastic Block Store (EBS)

- Azure Disk

- Azure File

- Cinder

- Fibre Channel (FC) (can only be assigned and attached to a node)

- GCE Persistent Disk

- HostPath (local disk)

- iSCSI (generic)

- Local volume

- NFS (generic)

- Red Hat OpenShift Container Storage (RHOCS)

- VMware vSphere

The CSI API extends the storage types that can be used within an OpenShift Container Platform solution.

Dell EMC CSI drivers for most Dell EMC storage arrays are available in OperatorHub. However, only the PowerMax and Isilon CSI drivers were validated as part of this release.

PV capacity

Each PV has a predetermined storage capacity that is set in the capacity definition parameter. A pod that is launched within the container platform can set or request storage capacity. Expect the choice of control parameters to expand as the CSI API is extended and as it matures.
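Capacity values in Kubernetes manifests are strings such as `8Gi` or `10G`, so comparing a PV's capacity with a claim's request means converting both to bytes. The sketch below handles only whole numbers with a few common suffixes; it is a simplified parser written for illustration, and `parse_quantity` and `satisfies` are hypothetical helper names, not part of any Kubernetes client library.

```python
import re

# Binary suffixes are powers of 1024; decimal suffixes are powers of 1000.
_UNITS = {
    "": 1,
    "k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12,
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40,
}

_QUANTITY = re.compile(r"(\d+)(Ki|Mi|Gi|Ti|k|M|G|T)?")


def parse_quantity(text: str) -> int:
    """Convert a simple quantity string such as '8Gi' to a byte count."""
    match = _QUANTITY.fullmatch(text.strip())
    if match is None:
        raise ValueError(f"unsupported quantity: {text!r}")
    number, suffix = match.groups()
    return int(number) * _UNITS[suffix or ""]


def satisfies(pv_manifest: dict, pvc_manifest: dict) -> bool:
    """Return True if the PV capacity covers the claim's storage request."""
    capacity = parse_quantity(pv_manifest["spec"]["capacity"]["storage"])
    request = parse_quantity(
        pvc_manifest["spec"]["resources"]["requests"]["storage"]
    )
    return capacity >= request


if __name__ == "__main__":
    pv = {"spec": {"capacity": {"storage": "8G"}}}
    pvc = {"spec": {"resources": {"requests": {"storage": "8Gi"}}}}
    print(satisfies(pv, pvc))
```

The example values are chosen to show a common pitfall: `8G` (decimal) is smaller than `8Gi` (binary), so a PV sized with one suffix might not satisfy a claim written with the other.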

PV access modes

A resource provider can determine how the PV is created and can set the storage control parameters. Access mode support is specific to the type of storage volume that is provisioned as a PV. The provider's capabilities determine which access modes are possible, while the configuration of each PV determines the modes that the specific volume supports. For example, NFS can support multiple read/write clients, but a specific NFS PV might be configured as read-only.

Claims are matched to volumes on two criteria: access modes and size. A claim's access modes represent a request, so a claim might be granted a volume that supports more modes than it requested, but never fewer.
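The two levels of access-mode checking described here can be sketched as set comparisons. In this illustration, the capability table is an assumption: the `nfs` entry follows the NFS example in the text, and `example-block` is a hypothetical provider added only for contrast. The function names are likewise hypothetical.

```python
RWO, ROX, RWX = "ReadWriteOnce", "ReadOnlyMany", "ReadWriteMany"

# Illustrative provider capabilities, not an authoritative support matrix.
PROVIDER_MODES = {
    "nfs": {RWO, ROX, RWX},
    "example-block": {RWO},  # hypothetical single-writer provider
}


def valid_pv_modes(provider: str, pv_modes) -> bool:
    """A PV can only be configured with modes its provider is capable of."""
    return set(pv_modes) <= PROVIDER_MODES[provider]


def claim_matches(requested_modes, pv_modes) -> bool:
    """A claim matches when the PV offers every mode the claim requests."""
    return set(requested_modes) <= set(pv_modes)


if __name__ == "__main__":
    read_only_nfs_pv = [ROX]  # NFS could offer more, but this PV does not
    print(valid_pv_modes("nfs", read_only_nfs_pv))
    print(claim_matches([RWX], read_only_nfs_pv))
    print(claim_matches([ROX], [ROX, RWX]))
```

In this model, a read-only NFS PV is a valid configuration but does not satisfy a claim for `ReadWriteMany`, while a claim for `ReadOnlyMany` can be granted a volume that also offers `ReadWriteMany`.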