
The following host-side configuration is required to use an NVIDIA GPU in the ESXi host:

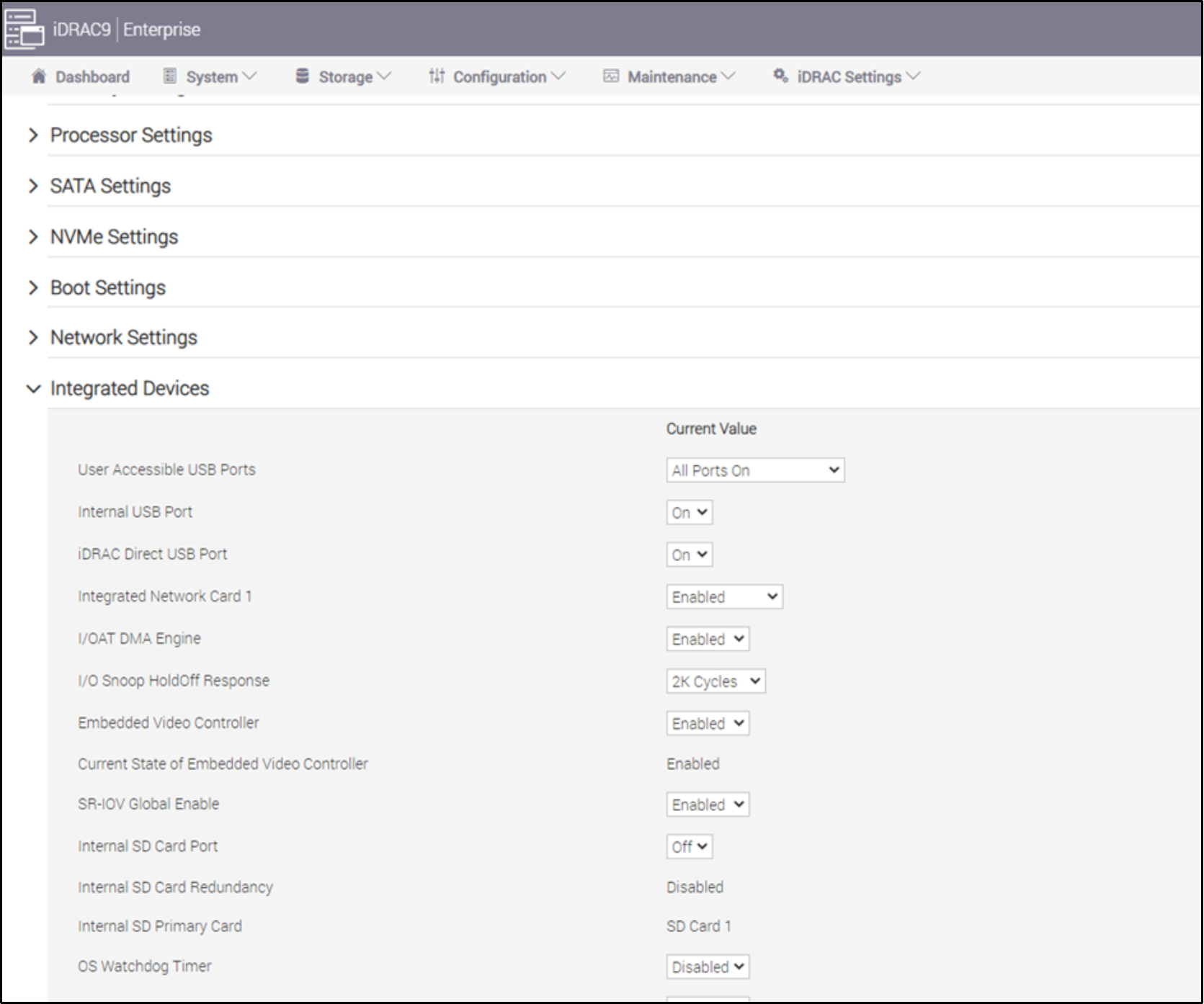

1. In the iDRAC of each CO node, enable SR-IOV as shown in the following figure so that the hypervisor can create virtual instances of a PCIe device.

Figure 5. SR-IOV option at iDRAC of CO node

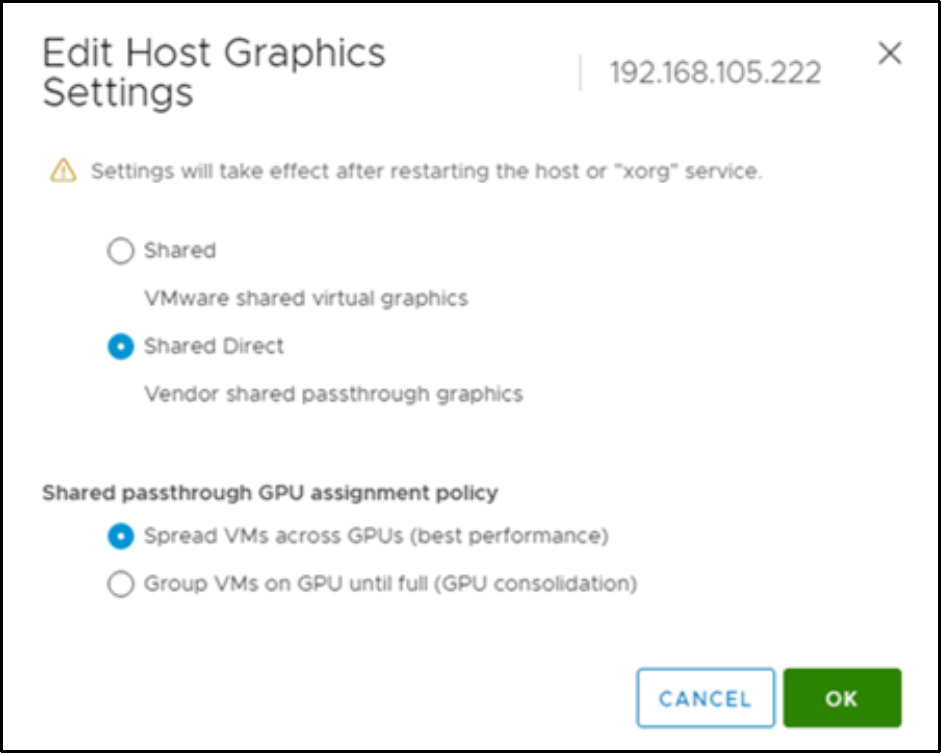

2. For each ESXi host in vSphere, enable the Shared Direct option in the Host Graphics section as shown in the following figure. The Shared Direct option enables passthrough graphics on the ESXi host, which allows the NVIDIA GPUs to process workloads that the virtual machines pass through to them.

Figure 6. Shared Direct option for ESXi host

3. From an SSH session to the ESXi host, run the following command to verify that Shared Passthrough is configured:

[root@node1:~] esxcli graphics host get

Default Graphics Type: SharedPassthru

Shared Passthru Assignment Policy: Performance
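The check in step 3 can also be scripted when there are several hosts to verify. The following is a minimal sketch, assuming the output format shown above. The function name graphics_type is hypothetical, and the script assumes that awk is available in the ESXi shell.

```shell
#!/bin/sh
# Sketch: extract the default graphics type from the output of
# `esxcli graphics host get`. graphics_type is a hypothetical helper name;
# the field label comes from the sample output in step 3.
graphics_type() {
    # Read the command output on stdin and print the value after the label.
    awk -F': *' '/Default Graphics Type:/ {
        sub(/[ \t\r]+$/, "", $2)   # drop trailing whitespace
        print $2
    }'
}

# Usage on the ESXi host:
#   if [ "$(esxcli graphics host get | graphics_type)" = "SharedPassthru" ]; then
#       echo "Shared Direct is active"
#   else
#       echo "Shared Direct is not active; recheck step 2"
#   fi
```

The helper only parses text, so it can be tried against saved command output before it is used on a live host.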

4. Reboot the node after making the above changes.

5. Download the VIB package that matches your hypervisor version from the Software Downloads section in the NVIDIA Licensing Portal.

6. Extract the downloaded file and copy the resulting files to a shared datastore accessible by all the ESXi hosts with NVIDIA GPUs.

7. From an SSH session, install the NVIDIA VIB package by running the following esxcli commands on the ESXi host:

esxcli system maintenanceMode set --enable true

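esxcli software vib install -d /vmfs/volumes/<datastore>/<host-driver-component>.zip

esxcli software vib install -d /vmfs/volumes/<datastore>/<GPU-management-daemon-component>.zip

If the driver is supplied as a .vib file instead of a .zip component, use the -v option with the full path to the file, for example:

esxcli software vib install -v /vmfs/volumes/powerflex-PFCompute-ds-1/NVIDIA-AIE_ESXi_7.0.2_Driver_510.85.03-1OEM.702.0.0.17630552.vib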

esxcli system maintenanceMode set --enable false

For more information about installing the NVIDIA GPU Management daemon and the NVIDIA vGPU hypervisor host driver, see the Installing and Updating the NVIDIA Virtual GPU Manager for VMware vSphere section of the NVIDIA Virtual GPU Software User Guide.
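The install sequence in step 7 can be wrapped in a small function so that the host does not stay in maintenance mode if an install fails. This is a sketch, not a supported procedure: the function name install_nvidia_components and the example paths are hypothetical. It assumes the usual esxcli convention that -d takes a .zip bundle and -v takes a single .vib file.

```shell
#!/bin/sh
# Sketch only: install_nvidia_components is a hypothetical helper name.
# Pass the full datastore path of each package to install, in order.
install_nvidia_components() {
    # Enter maintenance mode; stop here if that fails.
    esxcli system maintenanceMode set --enable true || return 1

    rc=0
    for pkg in "$@"; do
        case "$pkg" in
            *.zip) opt=-d ;;   # .zip bundle or component: install as a depot
            *.vib) opt=-v ;;   # single VIB file
            *) echo "unrecognized package type: $pkg" >&2; rc=1; break ;;
        esac
        if ! esxcli software vib install "$opt" "$pkg"; then
            echo "install failed: $pkg" >&2
            rc=1
            break
        fi
    done

    # Leave maintenance mode whether or not the installs succeeded.
    esxcli system maintenanceMode set --enable false || rc=1
    return "$rc"
}

# Usage on the ESXi host (the paths are placeholders):
#   install_nvidia_components \
#       /vmfs/volumes/<datastore>/<host-driver-component>.zip \
#       /vmfs/volumes/<datastore>/<GPU-management-daemon-component>.zip
```

The function stops at the first failed install and returns a nonzero status, so a calling script can skip the later reboot and verification steps.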

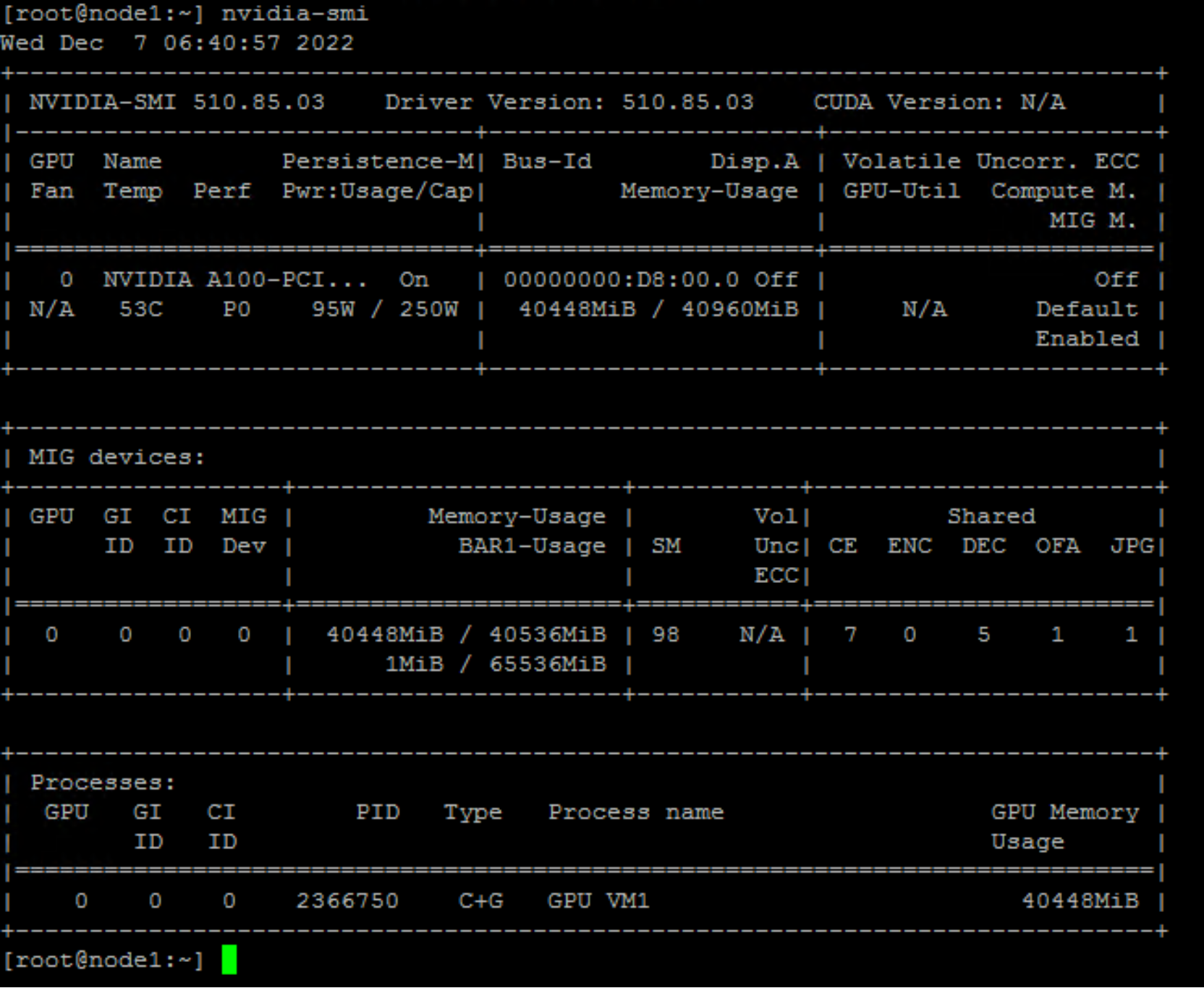

8. Run the nvidia-smi command to verify that the GPU is detected.

Figure 7. nvidia-smi output

9. Run the following commands to verify that the driver is present in the ESXi VIB list and that the GPU virtualization mode is Host VGPU:

[root@node1:~] esxcli software vib list | grep NVIDIA

NVIDIA-AIE_ESXi_7.0.2_Driver 510.85.03-1OEM.702.0.0.17630552 NVIDIA VMwareAccepted 2022-12-01

[root@node1:~] nvidia-smi -q | grep Virtualization

GPU Virtualization Mode

Virtualization Mode : Host VGPU

[root@node1:~]
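The virtualization mode check can be scripted in the same way as the other checks. The following sketch assumes the `nvidia-smi -q` output format shown above. The function name virtualization_mode is hypothetical, and awk is assumed to be available in the ESXi shell.

```shell
#!/bin/sh
# Sketch: print the GPU virtualization mode reported by `nvidia-smi -q`.
# virtualization_mode is a hypothetical helper name. With several GPUs it
# prints one line per GPU.
virtualization_mode() {
    # Match only the "Virtualization Mode : <value>" line, not the
    # "GPU Virtualization Mode" section header, which has no colon.
    awk -F':' '/Virtualization Mode[ \t]*:/ {
        value = $2
        gsub(/^[ \t]+|[ \t\r]+$/, "", value)   # trim surrounding whitespace
        print value
    }'
}

# Usage on the ESXi host:
#   nvidia-smi -q | virtualization_mode
```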

10. Some GPUs, vGPU types, and hypervisor software versions do not support Error Correction Code (ECC) memory with NVIDIA vGPU. In that case, disable ECC with the following command. For more information about ECC memory, see Disabling and Enabling ECC Memory in the Virtual GPU Software User Guide.

[root@node1:~] nvidia-smi -e 0

11. Run the following command to enable the Multi-Instance GPU (MIG) feature on the ESXi node:

[root@node1:~] nvidia-smi -mig 1

12. Reboot the host, then verify the host setup by running the nvidia-smi -q command. The ESXi host setup is now complete.
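After the final reboot, the ECC and MIG settings from steps 10 and 11 can be checked per GPU. This is a sketch under stated assumptions. It relies on the `--query-gpu` fields `ecc.mode.current` and `mig.mode.current`, which are standard nvidia-smi query properties, but their availability in the ESXi build of nvidia-smi is an assumption. The function name check_ecc_mig is hypothetical.

```shell
#!/bin/sh
# Sketch: confirm that every GPU reports ECC disabled and MIG enabled.
# check_ecc_mig is a hypothetical helper name. It reads CSV lines of the
# form "<ecc mode>, <mig mode>" on stdin, one line per GPU.
check_ecc_mig() {
    status=0
    gpu=0
    while IFS=, read -r ecc mig; do
        # Strip the spaces that follow the CSV separator.
        ecc=$(printf '%s' "$ecc" | tr -d ' \r')
        mig=$(printf '%s' "$mig" | tr -d ' \r')
        echo "GPU $gpu: ECC=$ecc MIG=$mig"
        # Any GPU that differs from the expected state fails the check.
        [ "$ecc" = "Disabled" ] && [ "$mig" = "Enabled" ] || status=1
        gpu=$((gpu + 1))
    done
    return "$status"
}

# Usage on the ESXi host (assumes these query fields are supported there):
#   nvidia-smi --query-gpu=ecc.mode.current,mig.mode.current \
#       --format=csv,noheader | check_ecc_mig
```

A GPU that does not support ECC or MIG may report a value such as [N/A], which this sketch treats as a mismatch. Adjust the expected values if ECC was left enabled on purpose.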