Memory requirements

When selecting a DIMM size, consider future memory upgrade requirements to avoid having to replace existing DIMMs later.

Intel Optane memory

S5000 Series servers with Cascade Lake CPUs in combination with Intel® Optane™ Persistent Memory (PMem) can lower the TCO for SAP HANA environments and increase the overall memory capacity within a machine.

For the SAP configuration rules for the use of DCPMM with SAP HANA, see SAP Note 2813454: Recommendations for Persistent Memory Configuration with BW/4HANA (access requires SAP login credentials). All S5000 Series servers of up to eight sockets in a glueless architecture support all the Intel® DCPMM operation modes that SAP supports for use with SAP HANA.

The memory limits for these Cascade Lake systems are:

- Six-socket systems: 36 x 256 GB = 9 TB PMEM + 36 x 128 GB = 4.5 TB DRAM, yielding 13.5 TB.

- Eight-socket systems: 48 x 256 GB = 12 TB PMEM + 48 x 128 GB = 6 TB DRAM, yielding 18 TB.
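The arithmetic behind these limits can be checked with a short sketch. The function name `total_memory_tb` is hypothetical and introduced only for illustration; it assumes an equal number of PMem and DRAM modules and 1 TB = 1024 GB, which matches the figures above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: total memory in TB for <count> PMem modules plus
# <count> DRAM modules. Assumes 1 TB = 1024 GB, as in the limits above.
total_memory_tb() {
    local modules_per_type=$1 pmem_gb=$2 dram_gb=$3
    awk -v n="$modules_per_type" -v p="$pmem_gb" -v d="$dram_gb" \
        'BEGIN { printf "%.1f\n", (n * p + n * d) / 1024 }'
}

total_memory_tb 36 256 128   # six-socket system
total_memory_tb 48 256 128   # eight-socket system
```

The two calls correspond to the six-socket and eight-socket populations listed above.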

For more information about Intel® Optane™ memory, see SAP Note 2700084: FAQ: SAP HANA Persistent Memory (access requires SAP login credentials).

CPU and memory requirements

Intel® Optane™ memory on SAP HANA supports databases of up to 18 TB of memory within eight-socket servers. This exceeds the physical capabilities of DRAM with the same number of sockets. SAP HANA supports PMem only in App Direct mode for production systems.

Note: At the time of publication, memory mode is supported only for nonproduction systems.

Sizing recommendations for storage with PMem

You can use any internal storage or certified Dell SAP HANA external storage. For more information, see the disk sizing requirements for scale-up systems in the SAP HANA TDI Storage Requirements white paper. The white paper is included as an attachment in SAP Note 1900823: SAP HANA Storage Connector API (access requires SAP login credentials).

Scale-out systems are more complex, as shown in the following table:

Table 5. Recommended storage sizes for scale-out systems

| Volume | Size |
| --- | --- |
| /hana/data | 1.2 x anticipated net data size on disk, or 1 x total main memory (DRAM + PMEM) |
| /hana/log | 512 GB |
| /hana/shared | 1 TB |
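As a rough illustration of the /hana/data row, the following sketch prints both candidate sizes so that they can be compared. The function name `hana_data_size_candidates` and the input values (4000 GB of net data, 6144 GB of DRAM, 12288 GB of PMem) are assumptions chosen for the example, not values from a validated configuration. The table does not state which of the two sizes takes precedence, so the sketch reports both.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print both /hana/data sizing candidates in GB.
#   $1 = anticipated net data size on disk (GB)
#   $2 = DRAM capacity (GB)
#   $3 = PMem capacity (GB)
hana_data_size_candidates() {
    local net_data_gb=$1 dram_gb=$2 pmem_gb=$3
    awk -v n="$net_data_gb" -v d="$dram_gb" -v p="$pmem_gb" 'BEGIN {
        printf "by_net_data_gb=%.0f\n", 1.2 * n     # 1.2 x net data size
        printf "by_main_memory_gb=%.0f\n", d + p    # 1 x (DRAM + PMem)
    }'
}

# Example values only (assumed, not measured).
hana_data_size_candidates 4000 6144 12288
```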

Preparing the Intel Optane Persistent Memory

To configure the Intel® Optane™ memory DIMMs, you must:

- Create a goal configuration from the BIOS environment

- Create the namespaces with an installed operating system

Creating a goal configuration

Create the goal configuration in the BIOS setup utility. You can perform this step without having any operating system on the machine. The ndctl tool is needed only at operating system level: namespace creation requires that an SAP HANA supported operating system that includes the ndctl tool is installed on the machine.

The BIOS configuration information is available in the S5000 Series Technical State Release Note. See “Configuring DCPMM Modes (section 1.9.2)” of the guide for the BIOS configuration steps.

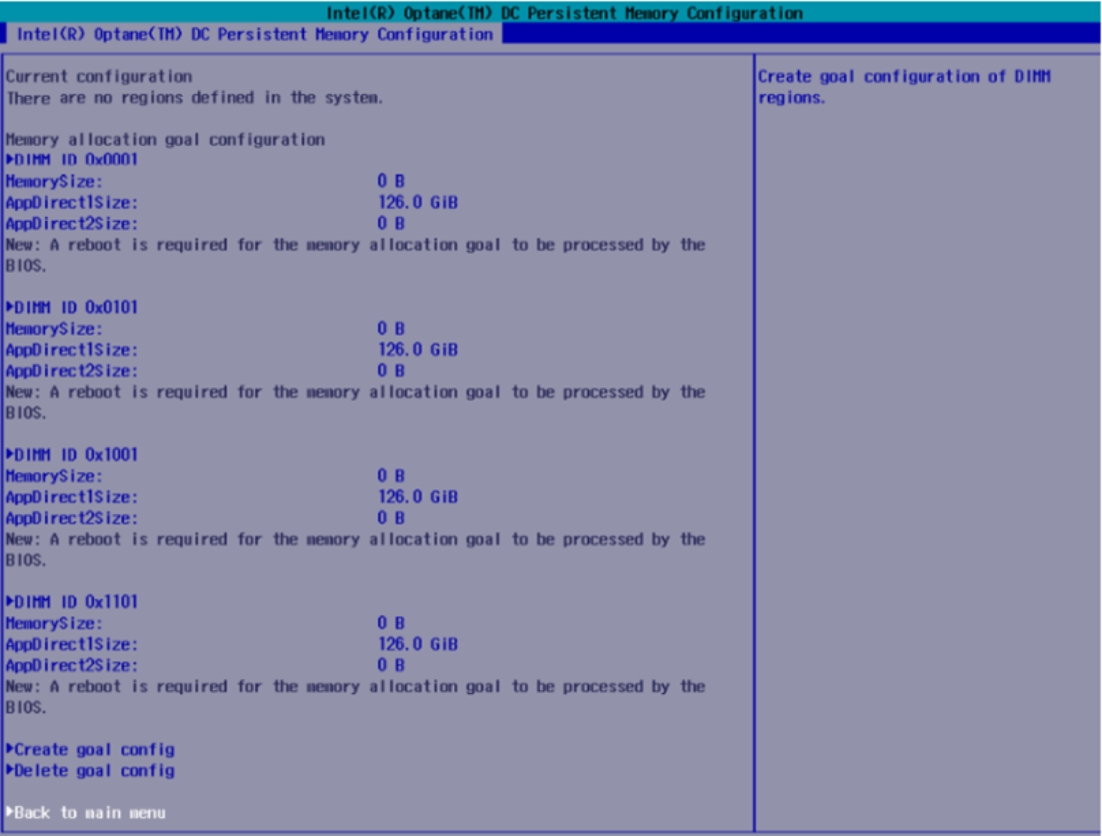

The following figure shows the goal configuration dialog in the BIOS setup utility:

Figure 1. Configuring memory in Application Direct mode

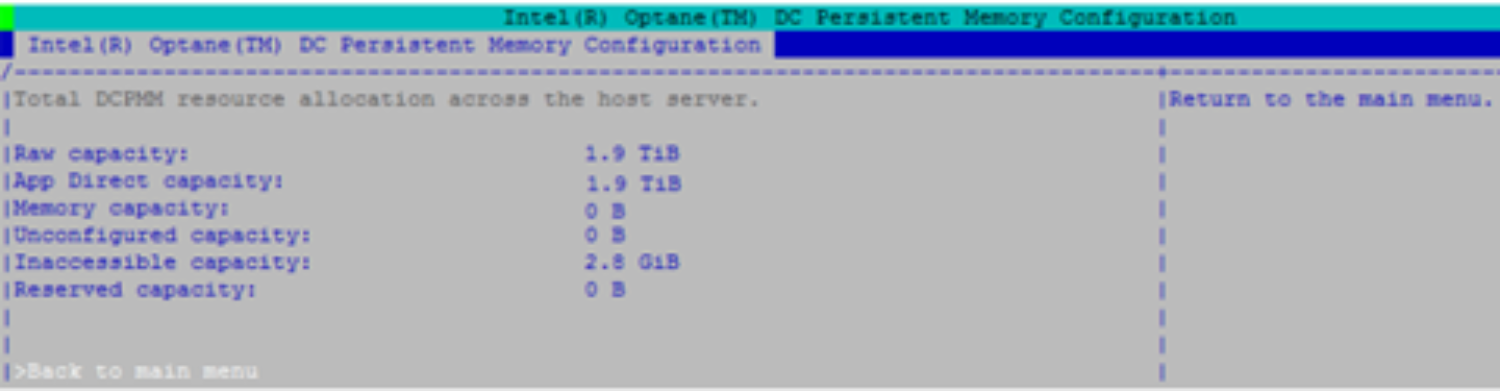

You can check the DCPMM modes in the BIOS setup utility or from the Linux command lines, as shown in the following figure. For more information, see “Checking DCPMM Modes (section 1.9.3)” the S5000 Series Technical State Release Note.

Figure 2. Configuring capacity in Application Direct mode

Creating the namespaces

After you complete the goal configuration, you must allocate the namespaces.

To list all unallocated namespaces, run the ndctl command using the -i flag:

```
linux:~ # ndctl list -i
[
  {
    "dev":"namespace24.0",
    "mode":"raw",
    "size":0,
    "uuid":"00000000-0000-0000-0000-000000000000",
    "sector_size":512,
    "state":"disabled",
    "numa_node":0
  },
  {
    "dev":"namespace26.0",
    "mode":"raw",
    "size":0,
    "uuid":"00000000-0000-0000-0000-000000000000",
    "sector_size":512,
    "state":"disabled",
    "numa_node":2
  },
  {
    "dev":"namespace25.0",
    "mode":"raw",
    "size":0,
    "uuid":"00000000-0000-0000-0000-000000000000",
    "sector_size":512,
    "state":"disabled",
    "numa_node":1
  },
  {
    "dev":"namespace27.0",
    "mode":"raw",
    "size":0,
    "uuid":"00000000-0000-0000-0000-000000000000",
    "sector_size":512,
    "state":"disabled",
    "numa_node":3
  }
]
```
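On systems with many regions, it can help to reduce this listing to the names of the namespaces that still have a size of 0. The following sketch does so with awk. The function name `unallocated_namespaces` is hypothetical, and the sketch assumes that the JSON is printed with one key per line, as in the listing above. The embedded sample is shortened for the example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ndctl list -i` style JSON on stdin and print
# the name of every namespace whose size is 0.
# Assumes one key per line, with "dev" appearing before "size".
unallocated_namespaces() {
    awk -F'"' '
        /"dev":/   { dev = $4 }    # remember the most recent device name
        /"size":0/ { print dev }   # size 0 means no capacity allocated yet
    '
}

# Shortened sample input; on a real system, pipe in `ndctl list -i`.
unallocated_namespaces <<'EOF'
[
  {
    "dev":"namespace24.0",
    "mode":"raw",
    "size":0
  },
  {
    "dev":"namespace26.0",
    "mode":"raw",
    "size":0
  }
]
EOF
```

On a live system, the equivalent call would be `ndctl list -i | unallocated_namespaces`.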

Creating namespaces on the configured regions

Depending on the number of populated sockets in the system, you might need to repeat the namespace creation procedure two or more times. Run the following command once for each region:

```
linux:~ # ndctl create-namespace
{
  "dev":"namespace24.0",
  "mode":"fsdax",
  "size":"744.19 GiB (799.06 GB)",
  "uuid":"8bc41612-bebc-4ead-bbca-6cc6f0b93be0",
  "raw_uuid":"3c1b2d89-31ac-4686-a6d0-ac22260d7515",
  "sector_size":512,
  "blockdev":"pmem24",
  "numa_node":0
}

linux:~ # ndctl create-namespace
{
  "dev":"namespace26.0",
  "mode":"fsdax",
  "size":"744.19 GiB (799.06 GB)",
  "uuid":"2785910e-a01d-42fc-990c-0b8f9563e49e",
  "raw_uuid":"6f116048-9f99-4d14-b3f8-b47a8473da7e",
  "sector_size":512,
  "blockdev":"pmem26",
  "numa_node":2
}

linux:~ # ndctl create-namespace
{
  "dev":"namespace25.0",
  "mode":"fsdax",
  "size":"744.19 GiB (799.06 GB)",
  "uuid":"a4809f98-69c4-4fb0-b3f9-4cbb9293716f",
  "raw_uuid":"84272619-6a3c-42ea-8d31-95198ff67589",
  "sector_size":512,
  "blockdev":"pmem25",
  "numa_node":1
}

linux:~ # ndctl create-namespace
{
  "dev":"namespace27.0",
  "mode":"fsdax",
  "size":"744.19 GiB (799.06 GB)",
  "uuid":"ea4cf63a-9910-408a-bae6-6b503e734dd8",
  "raw_uuid":"feae79ac-f0ab-4bf2-a7d7-4b6591dcbe0d",
  "sector_size":512,
  "blockdev":"pmem27",
  "numa_node":3
}
```
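Instead of repeating the command by hand, the calls can be driven from a loop that names each region explicitly. The following is a minimal sketch: the function name `create_fsdax_namespaces` and the `RUN` switch are hypothetical, and the region names region24 to region27 are an assumption inferred from the namespace names in this example. Check the actual region names with `ndctl list -R` first. By default, the sketch only prints the commands that it would run.

```shell
#!/usr/bin/env bash
# Hypothetical helper: one fsdax namespace per region.
# Dry run by default; set RUN=1 to actually call ndctl.
create_fsdax_namespaces() {
    local region
    for region in "$@"; do
        if [ "${RUN:-0}" = "1" ]; then
            ndctl create-namespace --mode=fsdax --region="$region"
        else
            echo "ndctl create-namespace --mode=fsdax --region=$region"
        fi
    done
}

# Region names are assumed from the namespace24.0 ... namespace27.0 example.
create_fsdax_namespaces region24 region25 region26 region27
```

Naming the region on each call makes the result independent of the order in which ndctl would otherwise pick a free region.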

Configuring FS-DAX and creating and mounting the partitions

You now have either two or four block devices configured in the system. The names of the block devices consist of pmem followed by a number and appear in the “blockdev” field of the output from the previous step. In our example, the names are /dev/pmem24, /dev/pmem25, /dev/pmem26, and /dev/pmem27.

To create an xfs file system on each device, run the following mkfs.xfs commands:

```
linux:~ # mkfs.xfs /dev/pmem24
meta-data=/dev/pmem24            isize=512    agcount=4, agsize=48770944 blks
         =                       sectsz=4096  attr=2, projid32bit=1
         =                       crc=1        finobt=1, sparse=0, rmapbt=0, reflink=0
data     =                       bsize=4096   blocks=195083776, imaxpct=25
         =                       sunit=0      swidth=0 blks
naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
log      =internal log           bsize=4096   blocks=95255, version=2
         =                       sectsz=4096  sunit=1 blks, lazy-count=1
realtime =none                   extsz=4096   blocks=0, rtextents=0

linux:~ # mkfs.xfs /dev/pmem25
meta-data=/dev/pmem25            isize=512    agcount=4, agsize=48770944 blks
         =                       sectsz=4096  attr=2, projid32bit=1
         =                       crc=1        finobt=1, sparse=0, rmapbt=0, reflink=0
data     =                       bsize=4096   blocks=195083776, imaxpct=25
         =                       sunit=0      swidth=0 blks
naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
log      =internal log           bsize=4096   blocks=95255, version=2
         =                       sectsz=4096  sunit=1 blks, lazy-count=1
realtime =none                   extsz=4096   blocks=0, rtextents=0

linux:~ # mkfs.xfs /dev/pmem26
meta-data=/dev/pmem26            isize=512    agcount=4, agsize=48770944 blks
         =                       sectsz=4096  attr=2, projid32bit=1
         =                       crc=1        finobt=1, sparse=0, rmapbt=0, reflink=0
data     =                       bsize=4096   blocks=195083776, imaxpct=25
         =                       sunit=0      swidth=0 blks
naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
log      =internal log           bsize=4096   blocks=95255, version=2
         =                       sectsz=4096  sunit=1 blks, lazy-count=1
realtime =none                   extsz=4096   blocks=0, rtextents=0

linux:~ # mkfs.xfs /dev/pmem27
meta-data=/dev/pmem27            isize=512    agcount=4, agsize=48770944 blks
         =                       sectsz=4096  attr=2, projid32bit=1
         =                       crc=1        finobt=1, sparse=0, rmapbt=0, reflink=0
data     =                       bsize=4096   blocks=195083776, imaxpct=25
         =                       sunit=0      swidth=0 blks
naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
log      =internal log           bsize=4096   blocks=95255, version=2
         =                       sectsz=4096  sunit=1 blks, lazy-count=1
realtime =none                   extsz=4096   blocks=0, rtextents=0
```
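Because mkfs.xfs destroys any data on its target, a guarded loop is one way to format several devices in a single pass. The following sketch is an illustration only: the function name `format_pmem_devices` and the `RUN` switch are hypothetical. By default, it prints the commands without running them. With `RUN=1`, it formats only paths that exist as block devices.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create an xfs file system on each given device.
# Dry run by default; set RUN=1 to actually call mkfs.xfs.
format_pmem_devices() {
    local dev
    for dev in "$@"; do
        if [ "${RUN:-0}" != "1" ]; then
            echo "mkfs.xfs $dev"
        elif [ -b "$dev" ]; then
            mkfs.xfs "$dev"
        else
            echo "skipping $dev: not a block device" >&2
        fi
    done
}

format_pmem_devices /dev/pmem24 /dev/pmem25 /dev/pmem26 /dev/pmem27
```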

Creating /etc/fstab entries for PMEM devices

For each PMEM device:

- Create a directory on which to mount the device by running the following command:

```
linux:~ # mkdir -p /hana/pmem/0; mkdir /hana/pmem/1; mkdir /hana/pmem/2; mkdir /hana/pmem/3
```

- To persist the mounts across system reboots, add a mount entry to /etc/fstab for each namespace, as shown in the following lines.

Note: The x-systemd.device-timeout parameter sets how long the system waits for the device to be ready. This example allows 1200 seconds (20 minutes) of initialization time. Decrease this value if necessary, depending on your landscape needs.

```
/dev/pmem24 /hana/pmem/0 xfs noatime,dax,x-systemd.device-timeout=1200 1 2
/dev/pmem25 /hana/pmem/1 xfs noatime,dax,x-systemd.device-timeout=1200 1 2
/dev/pmem26 /hana/pmem/2 xfs noatime,dax,x-systemd.device-timeout=1200 1 2
/dev/pmem27 /hana/pmem/3 xfs noatime,dax,x-systemd.device-timeout=1200 1 2
```

- Mount all the file systems and verify that they are properly mounted by running the following commands:

```
linux:~ # mount -a -t xfs
linux:~ # df -h|egrep "File|pmem"
Filesystem      Size  Used Avail Use% Mounted on
/dev/pmem24     744G  792M  744G   1% /hana/pmem/0
/dev/pmem25     744G  792M  744G   1% /hana/pmem/1
/dev/pmem26     744G  792M  744G   1% /hana/pmem/2
/dev/pmem27     744G  792M  744G   1% /hana/pmem/3
```
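The /etc/fstab entries for the PMEM devices follow a fixed pattern, so they can be generated rather than typed. The following sketch prints one line per device, with mount points numbered from 0 under /hana/pmem. The function name `pmem_fstab_lines` is hypothetical. The sketch only writes to standard output, so review the result before appending it to /etc/fstab.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one /etc/fstab line per PMem device.
#   $1     = device timeout in seconds
#   $2...  = block devices, mounted at /hana/pmem/0, /hana/pmem/1, ...
pmem_fstab_lines() {
    local timeout_s=$1
    shift
    local i=0 dev
    for dev in "$@"; do
        printf '%s /hana/pmem/%d xfs noatime,dax,x-systemd.device-timeout=%s 1 2\n' \
            "$dev" "$i" "$timeout_s"
        i=$((i + 1))
    done
}

pmem_fstab_lines 1200 /dev/pmem24 /dev/pmem25 /dev/pmem26 /dev/pmem27
```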

Deploying and configuring SAP HANA

SAP HANA must be made aware of the new Intel Optane memory DIMMs. Follow these steps for your SAP HANA installation:

- Existing installation: Upgrade existing installations to SAP HANA SPS03 or later. In the [persistence] section of the global.ini file, add a line with a semicolon-separated list of the mount points of all mounted pmem devices, as shown in the following example:

```
[persistence]
basepath_persistent_memory_volumes=/hana/pmem/0;/hana/pmem/1;/hana/pmem/2;/hana/pmem/3
```

- New installation: Run the hdblcm tool with two options in addition to the normal installation parameters:

```
--use_pmem --pmempath=/hana/pmem
```

HDBLCM determines and uses all pmem devices below the /hana/pmem folder.

SAP HANA uses the persistent memory devices and loads data to them. You can also move databases and tables individually to a specific region (DRAM or PMem).
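For an existing installation, the global.ini value is the list of mount points joined with semicolons. The following sketch builds that line from its arguments. The function name `pmem_basepath_line` is hypothetical, and the sketch only prints the line. It does not edit global.ini.

```shell
#!/usr/bin/env bash
# Hypothetical helper: join the given mount points with ';' and print the
# basepath_persistent_memory_volumes line for the [persistence] section.
pmem_basepath_line() {
    local IFS=';'   # "$*" joins arguments with the first character of IFS
    printf 'basepath_persistent_memory_volumes=%s\n' "$*"
}

pmem_basepath_line /hana/pmem/0 /hana/pmem/1 /hana/pmem/2 /hana/pmem/3
```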

Summary

Dell Technologies conducted a test with SAP Business Warehouse on SAP HANA (BWoH) by running complex queries through the whole SAP HANA stack (hardware, operating system, database, applications). The test results showed a similar read performance between DRAM and Intel Optane memory, indicating that this technology facilitates access to greater amounts of data while balancing TCO.