
SAP HANA installation with NAS/NFS connectivity


Installing an SAP HANA scale-out cluster

Overview

To install an SAP HANA scale-out cluster on PowerFlex File storage, you must:

- Configure the /etc/fstab file on each SAP HANA client and mount all the SAP HANA data, log, and shared directories from the PowerFlex File storage to the SAP HANA hosts.

- Install an SAP HANA scale-out instance with the SAP HANA hdblcm command-line tool by using the NAS storage directories that you previously created.

Prerequisites

The configuration example in this guide assumes that the following basic installation and configuration operations are complete on the SAP HANA nodes:

- The operating system is installed and configured according to the SAP recommendations. The example in this guide uses SUSE Linux Enterprise Server 15 SP1 for SAP Applications.

- The mount-point directories /hana/shared, /hana/data/SID/mnt0000x (data), and /hana/log/SID/mnt0000x (log) have been created on each of the SAP HANA nodes with 775 permissions.

- All network settings and bandwidth requirements for internode communications are configured according to the SAP requirements.

- SSH keys have been exchanged between all SAP HANA nodes.

- System time synchronization has been configured through an NTP server.

- The SAP HANA installation DVD ISO file has been downloaded from the SAP website and is available on a shared file system.

Note: SAP HANA can only be installed on certified server hardware. A certified SAP HANA expert must perform the installation.
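As an illustration of the mount-point prerequisite, the following Python sketch builds the directory list for a given SID and creates each directory with 775 permissions. The function names, the `root` parameter (included so that the sketch can be tried in a scratch directory), and the assumption of one mnt0000x directory per storage partition are illustrative and not part of the validated design.

```python
import os


def mount_points(sid, partitions):
    """Return the SAP HANA mount-point paths for `sid` (hypothetical helper)."""
    paths = ["/hana/shared"]
    for n in range(1, partitions + 1):
        # mnt0000x naming from the prerequisites: mnt00001, mnt00002, ...
        paths.append("/hana/data/%s/mnt%05d" % (sid, n))
        paths.append("/hana/log/%s/mnt%05d" % (sid, n))
    return paths


def create_mount_points(root, sid, partitions):
    """Create the mount points below `root` and set mode 775 on each one."""
    created = []
    for path in mount_points(sid, partitions):
        target = os.path.join(root, path.lstrip("/"))
        os.makedirs(target, exist_ok=True)
        # chmod explicitly, because makedirs() is subject to the process umask.
        os.chmod(target, 0o775)
        created.append(target)
    return created
```

For a 2+1 layout such as the one in this guide (two workers and one standby), `mount_points("PFX", 2)` returns /hana/shared plus two data and two log directories. Calling `create_mount_points("/", "PFX", 2)` as root on each node would create them in place.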

After all the file systems are mounted, you are ready to install the SAP HANA scale-out cluster. Our example uses the hdblcm tool to install the SAP HANA 2+1 scale-out cluster. For more information, see the SAP HANA Studio Installation and Update Guide.
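Before starting hdblcm, it can help to confirm that every required directory is actually mounted over NFS. The following Python sketch parses text in the /proc/mounts format and reports the required mount points that are missing. The function names are hypothetical, and the check only looks at the file-system type (nfs or nfs4), not at mount options.

```python
def nfs_mounts(mounts_text):
    """Map mount point -> file-system type for NFS entries in /proc/mounts text."""
    found = {}
    for line in mounts_text.splitlines():
        fields = line.split()
        # /proc/mounts fields: device, mount point, fs type, options, dump, pass
        if len(fields) >= 3 and fields[2] in ("nfs", "nfs4"):
            found[fields[1]] = fields[2]
    return found


def missing_mounts(mounts_text, required):
    """Return the required mount points that are not mounted over NFS."""
    mounted = nfs_mounts(mounts_text)
    return [path for path in required if path not in mounted]
```

On a SAP HANA node, the input would typically be the contents of /proc/mounts, for example `missing_mounts(open("/proc/mounts").read(), ["/hana/shared", "/hana/data/PFX/mnt00001", "/hana/log/PFX/mnt00001"])`. An empty list means that all listed directories are mounted.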

Installation steps

Ensure that the installation DVD ISO file has been extracted to a shared software-repository file system that is mounted on all hosts. Run the following command from the extracted installation folder:

#/SAPShareNew/software/SAP/HANA/hana2_rev2065/SAP_HANA_DATABASE/hdblcm

SAP HANA Lifecycle Management - SAP HANA Database 2.00.065.00.1665753120

************************************************************

Scanning software locations...

Detected components:

SAP HANA Database (2.00.065.00.1665753120) in /SAPShareNew/software/SAP/HANA/hana2_rev2053/SAP_HANA_DATABASE/server

Choose an action

Index | Action | Description

-----------------------------------------------

1 | install | Install new system

2 | extract_components | Extract components

3 | Exit (do nothing) |

Enter selected action index [3]: 1

SAP HANA Database version '2.00.065.00.1665753120' will be installed.

Select additional components for installation:

Index | Components | Description

---------------------------------------------------------------------------------------------

1 | all | All components

2 | server | No additional components

3 | client | Install SAP HANA Database Client version 2.7.21.1611351107

Enter comma-separated list of the selected indices [3]:

Enter Installation Path [/hana/shared]:

Enter Local Host Name [hana01]:

Do you want to add hosts to the system? (y/n) [n]: y

Enter comma-separated host names to add: hana02,hana03

Enter Root User Name [root]:

Collecting information from host 'hana02'...

Collecting information from host 'hana03'...

Information collected from host 'hana03'.

Information collected from host 'hana02'.

Select roles for host 'hana02':

Index | Host Role | Description

-------------------------------------------------------------------

1 | worker | Database Worker

2 | standby | Database Standby

….

Enter comma-separated list of selected indices [1]: 1

Enter Host Failover Group for host 'hana02' [default]:

Enter Storage Partition Number for host 'hana02' [<<assign automatically>>]:

Enter Worker Group for host 'hana02' [default]:

Select roles for host 'hana03':

Index | Host Role | Description

-------------------------------------------------------------------

1 | worker | Database Worker

2 | standby | Database Standby

….

Enter comma-separated list of selected indices [1]: 2

Enter Host Failover Group for host 'hana03' [default]:

Enter Worker Group for host 'hana03' [default]:

Enter SAP HANA System ID: PFX

Enter Instance Number [00]:

Enter Local Host Worker Group [default]:

Index | System Usage | Description

-------------------------------------------------------------------------------

1 | production | System is used in a production environment

2 | test | System is used for testing, not production

3 | development | System is used for development, not production

4 | custom | System usage is neither production, test nor development

Select System Usage / Enter Index [4]:

Enter Location of Data Volumes [/hana/data/PFX]:

Enter Location of Log Volumes [/hana/log/PFX]:

Restrict maximum memory allocation? [n]:

Enter Certificate Host Name For Host 'hana01' [hana01]:

Enter Certificate Host Name For Host 'hana02' [hana02]:

Enter Certificate Host Name For Host 'hana03' [hana03]:

Enter System Administrator (nasadm) Password:

Confirm System Administrator (nasadm) Password:

Enter System Administrator Home Directory [/usr/sap/PFX/home]:

Enter System Administrator Login Shell [/bin/sh]:

Enter System Administrator User ID [1001]:

Enter System Database User (SYSTEM) Password:

Confirm System Database User (SYSTEM) Password:

Restart system after machine reboot? [n]:

……..

Do you want to continue? (y/n): y

Installing components...

Installing SAP HANA Database...

Preparing package …..

Creating System...

Extracting software...

Installing package

Starting SAP HANA Database system...

All server processes started on host 'hana01' (worker).

Importing delivery units...

Adding 2 additional hosts in parallel

Adding host 'hana03'...

Adding host 'hana02'...

hana02: Adding host 'hana02' to instance '00'...

hana03: Adding host 'hana03' to instance '00'...

hana02: Starting SAP HANA Database...

hana03: Starting SAP HANA Database...

hana03: All server processes started on host 'hana03' (standby).

hana02: All server processes started on host 'hana02' (worker).

Installing Resident hdblcm...

Installing SAP HANA Database Client...

….

Registering SAP HANA Database Components on Local Host...

Regenerating SSL certificates...

Deploying SAP Host Agent configurations...

Updating SAP HANA Database Instance Integration on Remote Hosts...

Updating SAP HANA Database instance integration on host 'hana02'...

Updating SAP HANA Database instance integration on host 'hana03'...

Creating Component List...

SAP HANA Database System installed
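The installer output above reports the role with which each host started. As a quick sanity check, a saved copy of that output can be scanned for these messages. The following Python sketch assumes that the output was captured to a text file or string; the function names and the `expected` mapping are hypothetical.

```python
import re

# Matches lines such as:
#   hana03: All server processes started on host 'hana03' (standby).
STARTED = re.compile(r"All server processes started on host '([^']+)' \((\w+)\)\.")


def started_hosts(log_text):
    """Return {host: role} for every host that the installer reports as started."""
    return {m.group(1): m.group(2) for m in STARTED.finditer(log_text)}


def check_layout(log_text, expected):
    """Return the hosts whose reported role is missing or differs from `expected`."""
    actual = started_hosts(log_text)
    return sorted(h for h, role in expected.items() if actual.get(h) != role)
```

For the 2+1 example, `check_layout(text, {"hana01": "worker", "hana02": "worker", "hana03": "standby"})` returns an empty list when all three hosts started with the intended roles.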

Implementing STONITH with the HA/DR provider for SAP HANA

Note: This section applies only to multihost SAP HANA scale-out instances on NAS that use host autofailover.

On failover, the database on the standby host must have read-access and write-access to the files of the failed active host. If the failed host can still write to these files, the files might become corrupted. Preventing this corruption is called fencing.

When you use shared file systems such as PowerFlex File storage and NFSv3 or NFSv4, the STONITH method is implemented to achieve proper fencing capabilities and ensure that locks are always freed.

Note: For multihost SAP HANA scale-out instances that use host autofailover with NFSv3, the STONITH (SAP HANA HA/DR provider) implementation is mandatory. With NFSv4, a locking mechanism based on lease time is available that can be used for I/O fencing, so STONITH is not required. However, STONITH can still be used to speed up failover and ensure that locks are always released.

In such a setup, the storage connector API can be used for invoking the STONITH calls. During failover, the SAP HANA leading host calls the STONITH method of the custom storage connector with the hostname of the failed host as the input value.

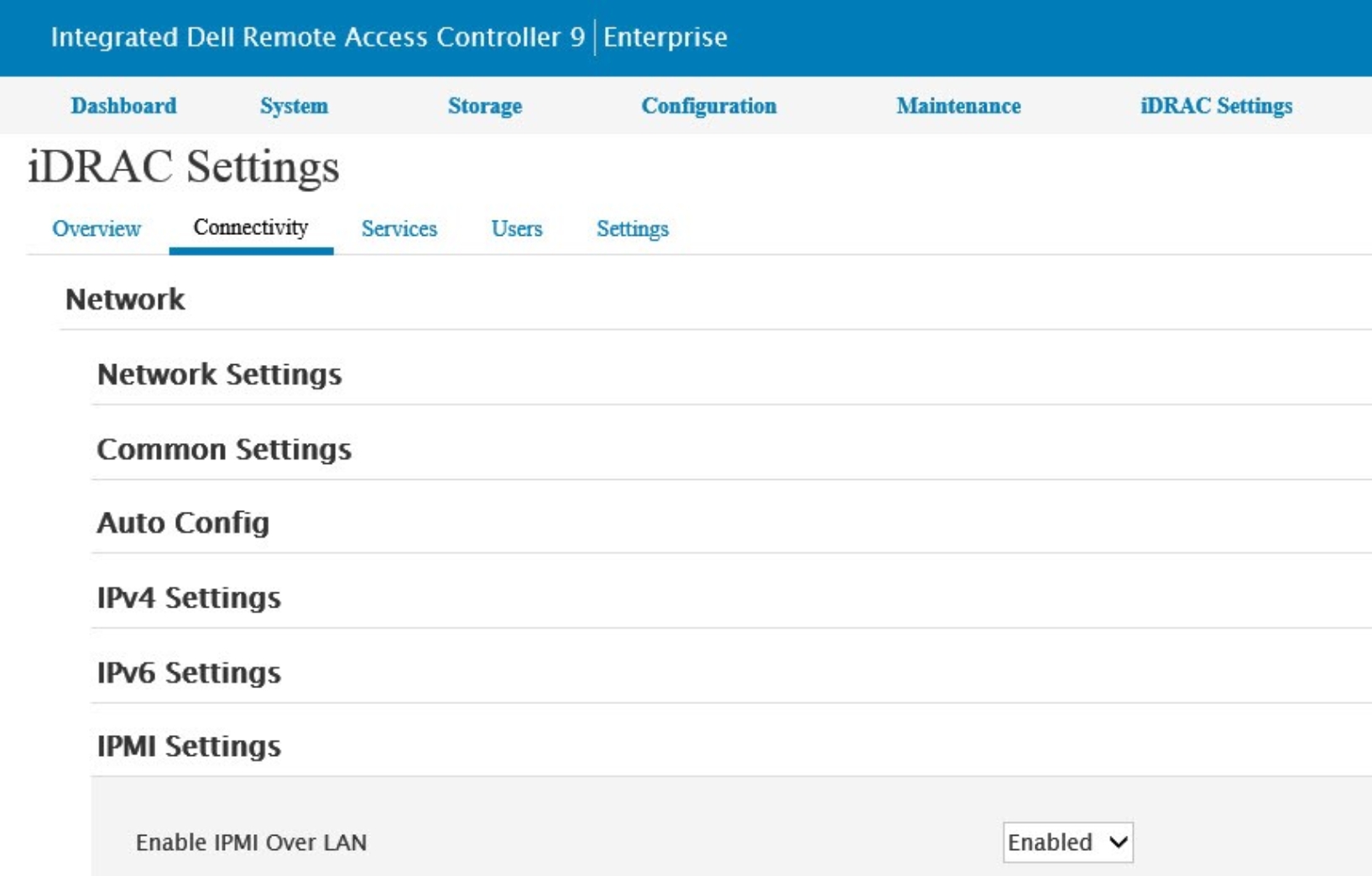

A mapping of hostnames to management network addresses is maintained in each host by the /etc/hosts file, which is used to send a reboot signal to the server through the management network (see step 3 of Enable IPMI over LAN). When the host restarts, it automatically starts in the standby host role. The STONITH example uses the Intelligent Platform Management Interface (IPMI) in bare-metal deployments with PowerEdge servers.

Enable IPMI over LAN

For PowerEdge servers, configure IPMI over LAN for iDRAC to enable IPMI commands over LAN channels to any external systems.

To enable IPMI over LAN:

The iDRAC Settings page opens:

Figure 13. iDRAC Settings page

- Ensure that IPMI Over LAN is enabled, and then click Apply.

- Repeat the preceding steps for each host that is used in the SAP HANA scale-out instance.

STONITH resolves the IPMI address of each host through the /etc/hosts file, which maps IPMI IP addresses to names that follow a standard naming convention (the hostname with an -ipmi suffix):

#

# IPMI mapping

#

10.230.79.85 hana01-ipmi

10.230.79.86 hana02-ipmi

10.230.79.87 hana03-ipmi

- Verify that the IPMI tool is working on each SAP HANA host by running the following command as root:

ipmitool power status -H hana01-ipmi -U root -P xxxx

If IPMI is working successfully, the output is Chassis Power is on.

- Set the set-user-ID bit on ipmitool so that the sidadm user can run it with the required permissions:

chmod u+s /usr/bin/ipmitool
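The mapping and the power-status check above can also be verified programmatically. The following Python sketch checks that every SAP HANA host has a matching -ipmi entry in /etc/hosts-style text, builds an ipmitool argument list, and interprets the result. The function names are hypothetical. Two choices differ from the commands in this guide and are assumptions: the sketch passes `-I lanplus` (as the STONITH hook does) and uses the ipmitool `-E` option, which reads the password from the IPMI_PASSWORD environment variable instead of the command line.

```python
def ipmi_names(hosts_text):
    """Return the set of names ending in -ipmi defined in /etc/hosts text."""
    names = set()
    for line in hosts_text.splitlines():
        entry = line.split("#", 1)[0].split()  # drop comments, split fields
        # entry[0] is the IP address; the remaining fields are names and aliases
        names.update(name for name in entry[1:] if name.endswith("-ipmi"))
    return names


def hosts_without_ipmi(hosts_text, hana_hosts):
    """Return the SAP HANA hosts that have no <host>-ipmi entry."""
    known = ipmi_names(hosts_text)
    return [h for h in hana_hosts if "%s-ipmi" % h not in known]


def power_status_command(host, user):
    """Build the ipmitool argument list for a power-status query.

    -E (assumption, see lead-in) takes the password from IPMI_PASSWORD.
    """
    return ["ipmitool", "-I", "lanplus", "-H", "%s-ipmi" % host,
            "-U", user, "-E", "power", "status"]


def power_is_on(output):
    """Return True if the ipmitool output reports that the chassis is on."""
    return "Chassis Power is on" in output
```

The argument list can be passed to `subprocess.run(..., capture_output=True, text=True)` on a host where ipmitool is installed, and the captured standard output handed to `power_is_on()`.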

Create a custom HA/DR STONITH provider

To create your own HA/DR provider, perform the following steps and then add the hook method that you want to use. For more information, see the SAP HANA Administration Guide. The example in this guide uses STONITH, which was written, tested, and validated for use with certified PowerEdge servers in SAP HANA scale-out scenarios.

To create the Dell recommended custom HA/DR STONITH provider:

- As sidadm, create a directory for the HA/DR provider. The directory must be within the /hana/shared storage of the SAP HANA installation but outside the <SID> directory structure. The following example uses /hana/shared/HANA_Hooks as the location.

- Copy exe/python_support/hdb_ha_dr/HADRDummy.py from an installed SAP HANA system to the new location. For example, copy the file and rename it to: /hana/shared/HANA_Hooks/HA_STONITH_Hook.py

- Customize the contents of the new file by renaming the Python class to match the file name (HA_STONITH_Hook). Within the HA_STONITH_Hook.py file, the Dell SAP engineering team also customized def __init__(), def about(), and the STONITH hook def stonith(), as shown in the following example:

"""

Sample for a HA/DR hook provider.

When using your own code in here, please copy this file to location on /hana/shared outside the HANA installation.
This file will be overwritten with each hdbupd call! To configure your own changed version of this file, please add
to your global.ini lines similar to this:

[ha_dr_provider_<HA_STONITH_Hook>]
provider = <HA_STONITH_Hook>
path = /hana/shared/HANA_Hooks
execution_order = 1

For all hooks, 0 must be returned in case of success.
"""
from hdb_ha_dr.client import HADRBase, Helper
import os, time


class HA_STONITH_Hook(HADRBase):

    def __init__(self, *args, **kwargs):
        # delegate construction to base class
        super(HA_STONITH_Hook, self).__init__(*args, **kwargs)

    def about(self):
        return {"provider_company" : "Dell",
                "provider_name" : "HA_STONITH_Hook", # provider name = class name
                "provider_description" : "Dell STONITH HOOK for SAP HANA",
                "provider_version" : "2.0"}

    def startup(self, hostname, storage_partition, system_replication_mode, **kwargs):
        self.tracer.debug("enter startup hook; %s" % locals())
        self.tracer.debug(self.config.toString())
        self.tracer.info("leave startup hook")
        return 0

    def shutdown(self, hostname, storage_partition, system_replication_mode, **kwargs):
        self.tracer.debug("enter shutdown hook; %s" % locals())
        self.tracer.debug(self.config.toString())
        self.tracer.info("leave shutdown hook")
        return 0

    def failover(self, hostname, storage_partition, system_replication_mode, **kwargs):
        self.tracer.debug("enter failover hook; %s" % locals())
        self.tracer.debug(self.config.toString())
        self.tracer.info("leave failover hook")
        return 0

    def stonith(self, failingHost, **kwargs):
        self.tracer.debug("enter HANA HA stonith hook; %s" % locals())
        self.tracer.debug(self.config.toString())
        self.tracer.info( "Stonith - rebooting failing host %s" % failingHost)
        ipmi_host = "%s-ipmi" % failingHost
        power_cycle = "ipmitool power cycle -I lanplus -H %s -U root -P Xxxxxxxx " % ipmi_host
        power_on = "ipmitool power on -I lanplus -H %s -U root -P Xxxxxxxxx " % ipmi_host
        rc = os.system(power_cycle)
        time.sleep(10)
        if rc == 0:
            msg = "Power cycle successfully executed to the failed host %s" % failingHost
            self.tracer.info(msg)
            rc = 0
        elif rc != 0:
            msg = "failed to power cycle %s, will try again" % failingHost
            self.tracer.info(msg)
            rc = os.system(power_on)
            time.sleep(10)
            if rc == 0:
                msg = "Successfully powered on %s" % failingHost
                self.tracer.info(msg)
                rc = 0
            elif rc != 0:
                msg = "unable to power cycle %s - Please CHECK" % failingHost
                self.tracer.info(msg)
                return 1
        self.tracer.info("leaving HANA HA stonith hook")
        return rc

    def preTakeover(self, isForce, **kwargs):
        """Pre takeover hook."""
        self.tracer.info("%s.preTakeover method called with isForce=%s" % (self.__class__.__name__, isForce))
        if not isForce:
            # run pre takeover code
            # run pre-check, return != 0 in case of error => will abort takeover
            return 0
        else:
            # possible force-takeover only code
            # usually nothing to do here
            return 0

    def postTakeover(self, rc, **kwargs):
        """Post takeover hook."""
        self.tracer.info("%s.postTakeover method called with rc=%s" % (self.__class__.__name__, rc))
        if rc == 0:
            # normal takeover succeeded
            return 0
        elif rc == 1:
            # waiting for force takeover
            return 0
        elif rc == 2:
            # error, something went wrong
            return 0

    def srConnectionChanged(self, parameters, **kwargs):
        self.tracer.debug("enter srConnectionChanged hook; %s" % locals())
        # Access to parameters dictionary
        hostname = parameters['hostname']
        port = parameters['port']
        volume = parameters['volume']
        serviceName = parameters['service_name']
        database = parameters['database']
        status = parameters['status']
        databaseStatus = parameters['database_status']
        systemStatus = parameters['system_status']
        timestamp = parameters['timestamp']
        isInSync = parameters['is_in_sync']
        reason = parameters['reason']
        siteName = parameters['siteName']
        self.tracer.info("leave srConnectionChanged hook")
        return 0

    def srReadAccessInitialized(self, parameters, **kwargs):
        self.tracer.debug("enter srReadAccessInitialized hook; %s" % locals())
        # Access to parameters dictionary
        database = parameters['last_initialized_database']
        databasesNoReadAccess = parameters['databases_without_read_access_initialized']
        databasesReadAccess = parameters['databases_with_read_access_initialized']
        timestamp = parameters['timestamp']
        allDatabasesInitialized = parameters['all_databases_initialized']
        self.tracer.info("leave srReadAccessInitialized hook")
        return 0

    def srServiceStateChanged(self, parameters, **kwargs):
        self.tracer.debug("enter srServiceStateChanged hook; %s" % locals())
        # Access to parameters dictionary
        hostname = parameters['hostname']
        service = parameters['service_name']
        port = parameters['service_port']
        status = parameters['service_status']
        previousStatus = parameters['service_previous_status']
        timestamp = parameters['timestamp']
        daemonStatus = parameters['daemon_status']
        databaseId = parameters['database_id']
        databaseName = parameters['database_name']
        databaseStatus = parameters['database_status']
        self.tracer.info("leave srServiceStateChanged hook")
        return 0

    def srSecondaryUnregistered(self, parameters, **kwargs):
        self.tracer.debug("enter srSecondaryUnregistered hook; %s" % locals())
        # Access to parameters dictionary
        siteName = parameters['site_name']
        siteId = parameters['site_id']
        reason = parameters['reason']
        self.tracer.info("leave srSecondaryUnregistered hook")
        # ……….
        return 0
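The control flow of the stonith hook (power-cycle the failed host, fall back to a power-on if the cycle fails, and return a nonzero value only if both attempts fail) can be isolated so that it is testable without a BMC. The following Python sketch is one possible refactoring, not the validated Dell implementation. The `fence_host` name, the `run` and `log` callables, and the `wait` parameter are hypothetical.

```python
import time


def fence_host(failing_host, run, log, wait=10):
    """Power-cycle `failing_host`; fall back to power-on if the cycle fails.

    `run(action, ipmi_host)` is a hypothetical callable that issues the IPMI
    power action ("cycle" or "on") and returns 0 on success.
    `log(message)` receives progress messages, for example self.tracer.info.
    Returns 0 on success and 1 if both attempts fail.
    """
    ipmi_host = "%s-ipmi" % failing_host
    log("Stonith - rebooting failing host %s" % failing_host)

    rc = run("cycle", ipmi_host)
    time.sleep(wait)  # give the BMC time to act, as in the hook above
    if rc == 0:
        log("Power cycle successfully executed to the failed host %s" % failing_host)
        return 0

    log("failed to power cycle %s, trying power on" % failing_host)
    rc = run("on", ipmi_host)
    time.sleep(wait)
    if rc == 0:
        log("Successfully powered on %s" % failing_host)
        return 0

    log("unable to power cycle %s - Please CHECK" % failing_host)
    return 1
```

Inside the provider class, the hook body could then reduce to a single call such as `return fence_host(failingHost, run_ipmitool, self.tracer.info)`, where `run_ipmitool` is a hypothetical wrapper that invokes ipmitool with an argument list. Keeping the command construction in one wrapper also avoids repeating the credentials in two command strings.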

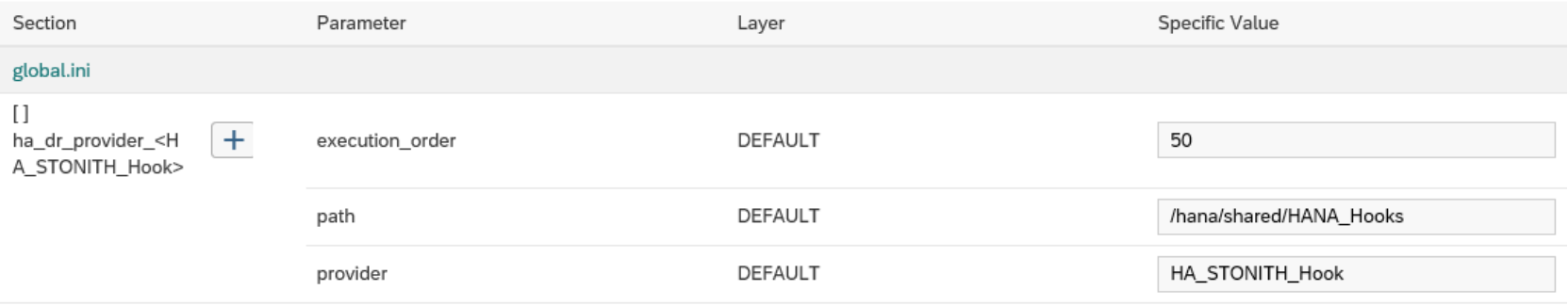

Install the HA/DR provider script

Add, configure, and monitor your custom provider scripts in the SAP HANA Cockpit.

- After the HA/DR provider script is created, install the script on an SAP HANA system by adding an ha_dr_provider_<classname> section to the global.ini file with the following parameters:

provider: Class name

path: Location of the script

execution_order: Ordering of the HA/DR provider (if there is more than one; this is a number from 1 through 99)

For example, add the following details to the global.ini file:

[ha_dr_provider_HA_STONITH_Hook]

provider = HA_STONITH_Hook

path = /hana/shared/HANA_Hooks

execution_order = 50

- Using the SAP HANA Cockpit, in your SAP HANA database, select Database Administration > Manage system configuration.

You can add, configure, and monitor the HA/DR provider information, as shown in the following figure:

Figure 14. SAP HANA Cockpit: ha_dr_provider section in global.ini

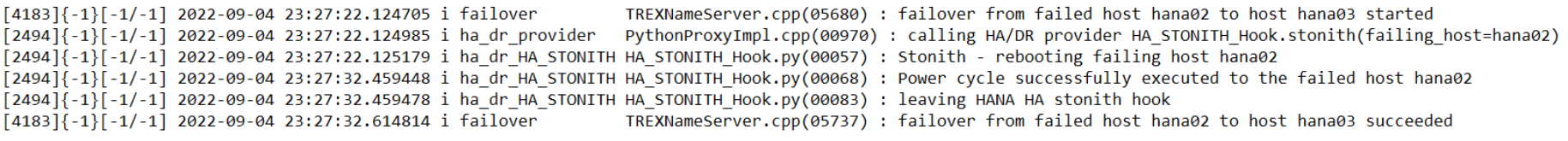
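The three parameters of the provider section lend themselves to a simple consistency check before SAP HANA is restarted. The following Python sketch uses the standard configparser module to validate a global.ini fragment. The function name is hypothetical, and the sketch assumes that the file content is parseable as a plain INI file, which might not hold for every global.ini.

```python
import configparser


def check_provider_section(ini_text, class_name):
    """Return a list of problems found in the ha_dr_provider section."""
    # interpolation=None so that '%' characters in other values are left alone
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)

    section = "ha_dr_provider_%s" % class_name
    if not parser.has_section(section):
        return ["missing section [%s]" % section]

    problems = []
    if parser.get(section, "provider", fallback=None) != class_name:
        problems.append("provider must equal the class name")
    if not parser.get(section, "path", fallback=""):
        problems.append("path is not set")
    order = parser.get(section, "execution_order", fallback="")
    if not (order.isdigit() and 1 <= int(order) <= 99):
        problems.append("execution_order must be a number from 1 through 99")
    return problems
```

An empty list means that the section exists, that provider matches the class name, that path is set, and that execution_order is within the documented range.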

Verify the installation of the HA/DR provider script HA_STONITH_Hook.py

All scripts are loaded during the startup phase of the name server. To confirm that the provider loaded, monitor the name server trace file, which records general information about the ha_dr_provider and its return codes.

Perform host autofailovers to ensure that the failovers work as expected and that STONITH has been implemented on the failed host. The following figure shows an example of output from the name server trace file following a host autofailover and successful implementation of STONITH:

Figure 15. Ha_dr_provider output from the leading name server trace file
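When reviewing a trace file after a failover test, it can be convenient to filter for the messages that the STONITH hook writes through its tracer. The following Python sketch matches only on the message texts used in the hook example in this guide; it makes no assumption about the trace file location or line format. The function name is hypothetical.

```python
# Message fragments written by the stonith hook example in this guide
HOOK_MESSAGES = (
    "Stonith - rebooting failing host",
    "Power cycle successfully executed to the failed host",
    "failed to power cycle",
    "Successfully powered on",
    "unable to power cycle",
)


def stonith_trace_lines(lines):
    """Yield the trace lines that contain one of the hook's log messages."""
    for line in lines:
        if any(message in line for message in HOOK_MESSAGES):
            yield line.rstrip("\n")
```

Because the function accepts any iterable of lines, an open file object for the name server trace file can be passed directly, and the matching lines printed or counted.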