
Storage provisioning


Dell Unity offers both block and file provisioning in the same enclosure. Drives are provisioned into Pools that can be used to host both block and file data. Connectivity is offered for both block and file protocols. For block connectivity, iSCSI and/or Fibre Channel may be used to access LUNs, Consistency Groups, Thin Clones, VMware Datastores (VMFS), and VMware Virtual Volumes. For file connectivity, NAS Servers are used to host File Systems that can be accessed through SMB Shares or NFS Shares. NAS Servers are also used to host VMware NFS Datastores and VMware Virtual Volumes.

Unity supports two different types of Pools: Traditional and Dynamic Pools. Due to differences in usage and behavior for each type of Pool, each type is discussed in separate sections.

Traditional Pools

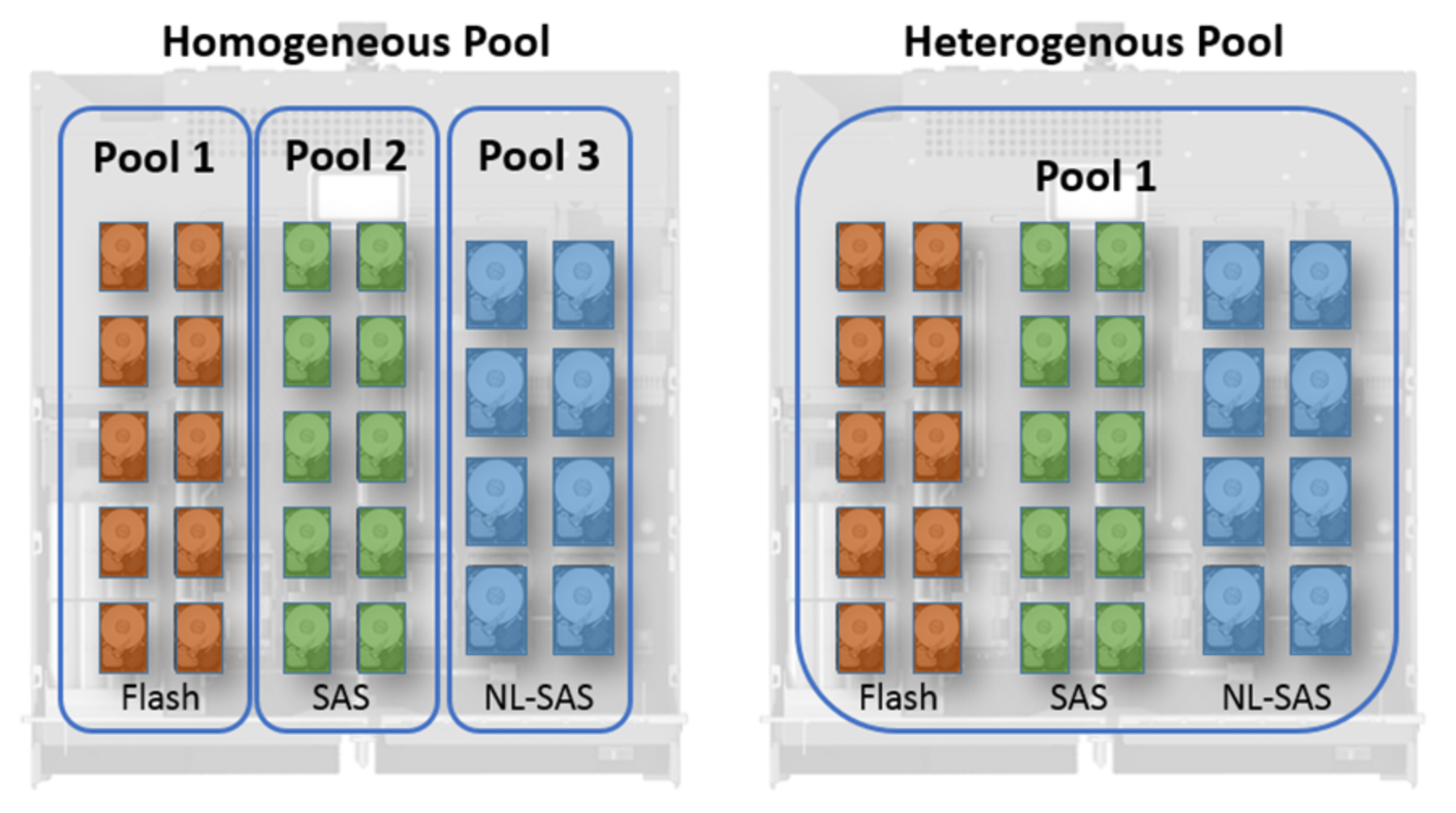

All storage resources are provisioned from Pools, whether Traditional or Dynamic. In general, a Pool is a collection of physical drives arranged into an aggregate group, with some form of RAID applied to the drives to provide redundancy. Traditional Pools can consist of drives of varying types. These drives are sorted into one of three tiers: Extreme Performance (Flash), Performance (SAS), and Capacity (NL-SAS). For hybrid systems, Pools can be configured to contain multiple tiers of drives. This is known as a heterogeneous Pool. When combined with FAST VP, heterogeneous Pools can provide efficient balancing of data between tiers without requiring user intervention. In another available configuration, Pools can contain a single tier of drives. This is known as a homogeneous Pool (Figure 3).

Figure 3. Pool Layouts

Each tier in a Pool can have a different RAID configuration set. The supported RAID configurations for each tier are listed in Dell Unity Family Configuring Pools on Dell Support. Another consideration for Pools is the Hot Spare Policy. The Dell Unity system reserves 1 drive per 31 drives of the same type to serve as a spare for the system. In other words, given 31 drives of the same type on a Dell Unity system, 1 of those drives will be marked as a hot spare and cannot be used in a Pool. If a 32nd drive is added to the system, the policy is updated and a second drive will be reserved as a hot spare. A spare drive can replace a faulted drive in a Pool if it matches the drive type of the faulted drive. Any unbound drive can serve as a spare, but Dell Unity will always enforce the “1 per 31” rule.
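The "1 per 31" rule can be expressed as simple arithmetic. The following Python sketch is illustrative only: the function name is hypothetical, and it assumes that the reservation rounds up for every started group of 31 drives of one type, which matches the 31-drive and 32-drive cases described above. Behavior for very small drive counts is not described in this paper and is an assumption here.

```python
DRIVES_PER_SPARE = 31  # the "1 per 31" ratio described above


def reserved_hot_spares(drive_count: int) -> int:
    """Return how many drives of a single type are reserved as hot spares.

    Assumption: one spare is reserved for every started group of 31 drives.
    """
    if drive_count < 0:
        raise ValueError("drive_count must not be negative")
    # Integer ceiling division: 31 drives -> 1 spare, 32 drives -> 2 spares.
    return (drive_count + DRIVES_PER_SPARE - 1) // DRIVES_PER_SPARE


if __name__ == "__main__":
    for count in (31, 32, 62, 63):
        print(count, "drives ->", reserved_hot_spares(count), "spare(s)")
```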

If a drive fails, the Dell Unity system will try to locate a spare. The system has four criteria for selecting a drive replacement: Type, Bus, Size, and Enclosure. The system will start by finding all spares of the same drive type and then will look for a drive on the same bus. If a drive on the same bus is found, the system will locate any drives that are the same size or greater than the faulted drive. Finally, if any valid drives are in the same enclosure as the faulted drive, one will be chosen. If during this search a valid drive cannot be located, the system will widen its search in reverse order until a suitable replacement is found.
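The selection order above can be sketched in Python. This is not the Dell Unity implementation. The `Drive` class, its fields, and `pick_spare` are hypothetical names, and the sketch makes one interpretive assumption: drive type and sufficient size are treated as hard requirements, while "widening in reverse order" is modeled as relaxing the enclosure preference first and the bus preference second.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Drive:
    """Hypothetical drive record used only for this illustration."""
    name: str
    drive_type: str
    bus: int
    size_gb: int
    enclosure: int


def pick_spare(failed: Drive, spares: Sequence[Drive]) -> Optional[Drive]:
    """Pick a replacement using the Type, Bus, Size, Enclosure order."""
    # Assumed hard requirements: same drive type, same size or greater.
    valid = [
        s for s in spares
        if s.drive_type == failed.drive_type and s.size_gb >= failed.size_gb
    ]
    # Preferences from strictest to widest; each later entry relaxes one criterion.
    preferences = (
        lambda s: s.bus == failed.bus and s.enclosure == failed.enclosure,
        lambda s: s.bus == failed.bus,
        lambda s: True,
    )
    for matches in preferences:
        for spare in valid:
            if matches(spare):
                return spare
    return None  # no suitable spare exists
```

For example, given a failed SAS drive on bus 0 in enclosure 1, a spare on bus 0 in enclosure 1 is chosen ahead of a spare on bus 0 in another enclosure, which in turn is chosen ahead of a spare on a different bus.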

For more information about how drive sparing is handled, see the Dell Unity: High Availability white paper.

Dynamic Pools

Dynamic Pools, released in Dell Unity OE version 4.2.x for Dell Unity All Flash systems, increase the flexibility of configuration options within a Dell Unity system with an entirely redesigned Pool structure. In this release, Dynamic Pools replace the existing Pool technology, now called Traditional Pools, as the default Pool type created within Unisphere for Dell Unity All Flash systems. As with Traditional Pools, Dynamic Pools can be created, expanded, and deleted, but they include other improvements.

Because the RAID width multiple does not apply when expanding a Dynamic Pool, the user can also expand by a specific target capacity. In most cases, the user can add a single drive to the Pool to increase its capacity. These features provide flexible deployment models, which improve the planning and provisioning process. The total cost of ownership of the configuration is also reduced because drives do not have to be added in RAID width multiples.
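The cost difference can be illustrated with a small calculation. The Python sketch below is a simplified model, not a sizing tool: the function name is hypothetical, the RAID width of 5 (for example, RAID 5 in a 4+1 layout) is only an example value, and the sketch ignores the exceptions in which a Dynamic Pool cannot be expanded by a single drive, which this paper does not detail.

```python
def drives_needed_for_expansion(drives_wanted: int, raid_width: int, dynamic: bool) -> int:
    """Return how many drives must be added to gain at least `drives_wanted` drives.

    Assumption: a Traditional Pool expands only in whole multiples of the
    RAID width, while a Dynamic Pool accepts any drive count.
    """
    if drives_wanted < 1 or raid_width < 1:
        raise ValueError("drives_wanted and raid_width must be at least 1")
    if dynamic:
        return drives_wanted
    # Round up to the next whole RAID group.
    groups = -(-drives_wanted // raid_width)
    return groups * raid_width


if __name__ == "__main__":
    # Wanting one more drive with an example RAID width of 5:
    print(drives_needed_for_expansion(1, 5, dynamic=False))  # Traditional Pool
    print(drives_needed_for_expansion(1, 5, dynamic=True))   # Dynamic Pool
```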

As Dynamic Pools are created without using fixed width RAID groups, rebuild operations are different from rebuilds occurring within a Traditional Pool. When a drive is failing or has failed with a Traditional Pool, a Hot Spare is engaged and used to replace the bad drive. This replacement is one-to-one, and the speed of the proactive copy or rebuild operation is limited by the fixed width of the private RAID group and the single targeted Hot Spare. With Dynamic Pools, regions of RAID and regions within the Pool that will be used for drive replacement are spread across the drives within the Pool. In this design, multiple regions of a failing or failed drive can be worked on simultaneously. As the space for the replacement is spread across the drives in the Pool, the proactive copy or rebuild operations are also targeted to more than one drive. These features significantly reduce the amount of time required to replace a failed drive.

In Dell Unity OE version 4.2.x or later, for All Flash systems, all new Pools are Dynamic Pools when created in the Unisphere GUI. To create Traditional Pools, you can use Unisphere CLI or REST API.

Dell Unity OE version 5.2 and later versions include support for Dynamic Pools on Hybrid systems. All newly created Pools on a Hybrid system, including multi-tiered Pools, are created as Dynamic Pools by default.

For more information about Dynamic Pools, see the Dell Unity: Dynamic Pools white paper.

LUNs

LUNs are block-level storage resources that can be accessed by hosts over iSCSI or Fibre Channel connections. A user can create, view, manage, and delete LUNs in any of the management interfaces – Unisphere, Unisphere CLI, and REST API. A Pool is required to provision LUNs. LUNs may be replicated in either an asynchronous or synchronous manner, and snapshots of LUNs may be taken.

With multi-LUN create, a user can create multiple LUNs at a time in a single wizard. However, certain settings, such as the replication configuration, must be manually added after the LUNs are created. Multi-LUN create is intended to create multiple independent resources at once. Users looking to configure similar host access, snapshot, and replication settings on a group of LUNs can leverage Consistency Groups. In Dell Unity OE version 4.5 and later, multi-LUN create lets users specify a starting point for the appended number, allowing users to continue the numbering scheme for preexisting LUNs.
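The numbering behavior in Dell Unity OE version 4.5 and later can be pictured with a short Python sketch. The function name and the `base-number` naming format with a hyphen separator are assumptions made for illustration; this paper does not specify the exact format that Unisphere uses.

```python
from typing import List


def multi_lun_names(base_name: str, count: int, start: int = 1, separator: str = "-") -> List[str]:
    """Generate LUN names by appending consecutive numbers to a base name.

    Assumption: names take the form <base_name><separator><number>.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    return [f"{base_name}{separator}{n}" for n in range(start, start + count)]


if __name__ == "__main__":
    # Three LUNs already exist as db-1 through db-3, so continue from 4.
    print(multi_lun_names("db", 3, start=4))
```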

In Dell Unity OE version 4.4 or later, Unisphere will prevent the user from deleting a block resource that has host access assigned to it. To delete the host-accessible block resource, the user needs to first remove the host access. Host access can be removed by selecting a LUN in the Block page and using the More Actions dropdown, or through the LUN properties window. Additionally, in Dell Unity OE version 4.4, Unisphere allows the user to set a custom Host LUN ID during creation of LUNs and VMware vStorage VMFS Datastores. Once the resource is created, the user can modify the Host LUN IDs from the block resource’s properties page under the Access tab or the host properties page.

In Dell Unity OE version 5.1, users can logically group hosts and block resources within a host group. Host groups can be created and managed from the Host Groups tab under the Hosts page and help to streamline host/resource access operations. A host group can be one of two types, General or ESX, and the type persists for the life of the group. A General host group allows one or more non-ESXi hosts and LUNs to be grouped. An ESX host group allows VMware ESXi hosts to be grouped with LUNs and/or VMFS datastores. For more information about host groups, see the Dell Unity: Unisphere Overview white paper.
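The host group rules can be summarized as a small data model. The Python sketch below is only an illustration of the rules described above. The class, method, and resource kind names are hypothetical and do not correspond to Unisphere, Unisphere CLI, or REST API objects.

```python
from enum import Enum


class GroupType(Enum):
    GENERAL = "General"
    ESX = "ESX"


# Resource kinds each group type may contain, per the description above.
ALLOWED_RESOURCES = {
    GroupType.GENERAL: {"LUN"},
    GroupType.ESX: {"LUN", "VMFS"},
}


class HostGroup:
    """Hypothetical model of a host group with a fixed type."""

    def __init__(self, name: str, group_type: GroupType) -> None:
        self.name = name
        self._group_type = group_type  # set once at creation
        self.hosts = set()
        self.resources = set()

    @property
    def group_type(self) -> GroupType:
        # Read-only: the type persists for the life of the group.
        return self._group_type

    def add_host(self, host_name: str, is_esxi: bool) -> None:
        if is_esxi != (self._group_type is GroupType.ESX):
            raise ValueError(f"{host_name} does not match group type {self._group_type.value}")
        self.hosts.add(host_name)

    def add_resource(self, resource_name: str, kind: str) -> None:
        if kind not in ALLOWED_RESOURCES[self._group_type]:
            raise ValueError(f"{kind} is not allowed in a {self._group_type.value} host group")
        self.resources.add(resource_name)
```

In this model, an ESX group accepts an ESXi host and a VMFS datastore, while adding a non-ESXi host to it, or a VMFS datastore to a General group, raises an error.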

Consistency Groups

A Consistency Group arranges a set of LUNs into a group. This is especially useful for managing multiple LUNs that are similar in nature or interrelated, as management actions taken on a Consistency Group will apply to all LUNs in the group. For example, taking a snapshot of a Consistency Group will take a snapshot of each LUN in the Consistency Group at the same point in time. This can ensure backup and crash consistency between LUNs. Consistency Groups can be replicated using asynchronous or synchronous means, and operations on the Consistency Group replication session, such as failover and failback, will be performed on all the LUNs in the Consistency Group.

VMware Datastores

VMware Datastores are storage resources preconfigured to be used with VMware vCenter and ESXi hosts. Creating VMware Datastores in Unisphere and assigning host access to your VMware resources will create your datastore on the Dell Unity system and automatically configure the datastore in your VMware environment. VMware vStorage VMFS Datastores are block storage objects that are connected through iSCSI or Fibre Channel.

In Dell Unity OE version 4.3 and later, users can create version 6 VMware vStorage VMFS Datastores from Unisphere CLI or REST API. In Dell Unity OE version 4.5 or later, users can create version 5 or version 6 VMware vStorage VMFS Datastores from Unisphere.

Thin Clones

A Thin Clone is a read/write copy of a snapshot of a block storage resource (a LUN, a LUN within a Consistency Group, or a VMware VMFS Datastore). The Thin Clone shares the sources of block storage resources. When a Thin Clone is created, all the data is instantly available on the Thin Clone. Ongoing data changes on a Thin Clone do not affect the Base resource, and vice versa. Any changes to the Thin Clone do not affect the snapshot source.

Thin Clones can be refreshed to return to a previous image or to the original snapshot image. In Dell Unity OE version 4.2.1 and later, a LUN can be refreshed by any snapshot created under the Base LUN, including snapshots of related Thin Clones. This allows a user to push changes made on a Thin Clone back to the Base LUN.

VMware Virtual Volumes (Block)

Dell Unity supports VMware Virtual Volumes served over a block Protocol Endpoint. The Protocol Endpoint serves as a data path on demand from an ESXi host’s Virtual Machines to the Virtual Volumes hosted on the Dell Unity system. The Protocol Endpoint may be defined using iSCSI or Fibre Channel.

For more information about VMware datastores, Virtual Volumes, and other virtualization technologies related to Dell Unity, see the Dell Unity: Virtualization Integration white paper.