
Dell Unity supports NFSv3 through NFSv4.1. NFS can be enabled on a per NAS server basis, which only enables the NFSv3 protocol. After enabling NFSv3, administrators have the option to enable additional options such as vVols, NFSv4, and Secure NFS. Afterward, when creating an NFS file system and share, default host access can also be set on a share level with exceptions defined for specific hosts.

In OE version 4.4 and earlier, NFSv3 must be enabled before NFSv4 can be enabled. OE version 4.5 adds the ability to enable NFSv3 or NFSv4 independently. This is useful for customers who only use NFSv4 and want to leave the NFSv3 protocol disabled. NFSv4 is not supported with vVols.

Starting with OE version 4.4, NFS share names can contain the “/” character, except as the first character. Previously, the “/” character was prohibited in share names because it is reserved to indicate a directory on UNIX systems. Allowing “/” in the share name enables administrators to create a virtual namespace that differs from the actual path used by the share. While NFSv3 clients can mount shares that include “/” in the name, NFSv4 clients cannot due to protocol constraints. NFSv4 clients must use the actual path of the share to mount it.

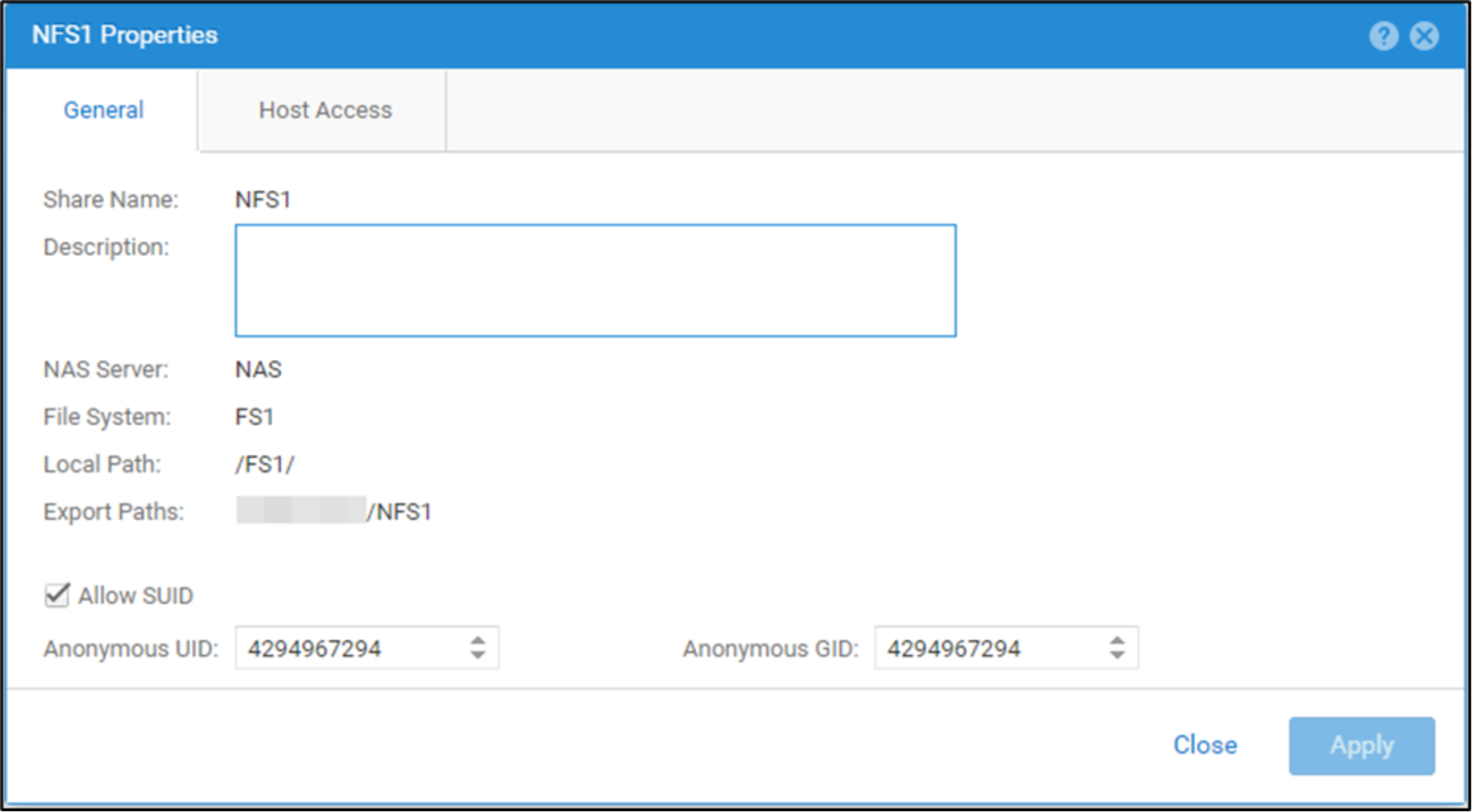

By default, NFS clients can set the setuid and setgid bits on files and directories stored on an NFS export. Files that have the setuid or setgid bits set can be identified because the execute bit changes from x to s for the owner or group, respectively. If set on a file, these bits allow users to run an executable with the permissions of the owner or group, respectively. If the setgid bit is set on a directory, new files and subdirectories inherit the GID from their parent directory, rather than the primary GID of the user that is creating the file. The setuid bit is ignored if it is set on a directory on most UNIX and Linux systems. Because these bits allow users to run specific executables with temporarily elevated permissions (such as root), they may be a security concern for some customers.

OE version 4.4 also introduces the ability to allow or prevent clients from setting the setuid and setgid bits on any files and directories residing on the NFS share. By default, this is allowed, and the setting can be changed when creating or modifying an NFS share. If it is disabled on an existing NFS share, any existing files that already have the setuid or setgid bits configured are not changed. However, any future attempts to set or unset these bits are not allowed. Also, if files that have these bits enabled are copied to the share, these bits are removed. The allow SUID option is shown in the figure below.

Figure 26. Allow SUID and anonymous UID/GID

OE version 4.4 also introduces the ability to configure the anonymous UID and GID attributes. If a client is granted access to an NFS share without allowing root access, the root user on that client may still attempt to access the share. In this case, the root user is mapped to anonymous UID and GID 4294967294, which is typically associated with the nobody user. A custom anonymous UID and GID can be configured on the NFS share during creation or modification of the share as shown in the figure above. By default, these are set to 4294967294 and can be changed to any valid UID and GID. If the client is granted root access to the share or if Secure NFS is used, the anonymous UID and GID are not used.

NFS protocol-related options are shown in the table below.

Table 3. NFS options

| Protocol options | Level | Default |
| --- | --- | --- |
| NFSv4 | NAS server | Disabled |
| Secure NFS (with Kerberos™) | NAS server | Disabled |
| vVols (NFS Protocol Endpoint) | NAS server | Disabled |
| Default Host Access | Share | No Access |
| Allow SUID | Share | Enabled |
| Anonymous UID and GID | Share | 4294967294 |

Parameters

By default, NAS servers query lookup services in the following order: local files, LDAP/NIS, and then DNS. In OE version 4.5, the ns.switch parameter is available. This parameter is used to control which services are queried and the order that they are searched. It can specify different services for different types of object lookups. The available options are passwd, group, hosts, and netgroups. The available resolvers are files, nis, ldap, and dns. The default is NULL, which means the default order is used. If you want to use a custom lookup order, edit this using the same syntax as the nsswitch.conf file, but concatenate the lines. For example, if you only want to use local files for user lookups and only DNS for host lookups, set this to passwd: files hosts: dns.
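The concatenation rule described above can be illustrated with a short Python sketch. The helper name `build_ns_switch` is hypothetical and is not part of any Dell tool; it only assembles and checks the string, using the lookup types and resolvers listed in this section. How the value is then applied to the NAS server is outside the scope of the sketch.

```python
# Lookup types and resolvers as listed in the text above.
ALLOWED_LOOKUPS = {"passwd", "group", "hosts", "netgroups"}
ALLOWED_RESOLVERS = {"files", "nis", "ldap", "dns"}


def build_ns_switch(lookups):
    """Concatenate nsswitch.conf-style entries into a single-line value.

    `lookups` maps a lookup type to its ordered list of resolvers.
    """
    parts = []
    for lookup, resolvers in lookups.items():
        if lookup not in ALLOWED_LOOKUPS:
            raise ValueError(f"unknown lookup type: {lookup}")
        if not resolvers:
            raise ValueError(f"no resolvers given for: {lookup}")
        unknown = [r for r in resolvers if r not in ALLOWED_RESOLVERS]
        if unknown:
            raise ValueError(f"unknown resolver(s) for {lookup}: {unknown}")
        # One nsswitch.conf line, for example "passwd: files"
        parts.append(f"{lookup}: {' '.join(resolvers)}")
    # The lines are joined with spaces instead of newlines.
    return " ".join(parts)


value = build_ns_switch({"passwd": ["files"], "hosts": ["dns"]})
print(value)  # passwd: files hosts: dns
```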

When using a string to define the host access list for an NFS share, netgroups should be prefixed with an @ symbol to differentiate them from hostnames. When using registered hosts, the @ symbol is not required since these entries are defined according to their host object type.

In the string, the system treats all entries that begin with @ solely as netgroups. Entries that do not begin with @ are resolved as hostname first and, if that fails, as a netgroup second. This could cause a performance impact due to unnecessary and unintentional netgroup lookups. OE version 4.4 and later adds a parameter to disable the netgroup lookup behavior for unresolved hostnames. The nfs.netgroupprefix parameter can be configured to:

- 0 (default)

  - Entries that begin with @: Treated only as a netgroup

  - Entries that do not begin with @: Treated as a hostname first and as a netgroup second

- 1

  - Entries that begin with @: Treated only as a netgroup (no change)

  - Entries that do not begin with @: Treated only as a hostname
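As a rough model of the two settings, the following Python sketch returns the lookups that would be attempted for a single entry in the host access string. The function name `resolution_plan` is hypothetical and the code only mirrors the behavior described above; it does not reflect the actual NAS server implementation.

```python
def resolution_plan(entry, netgroup_prefix=0):
    """Return the ordered lookups attempted for one host access entry.

    `netgroup_prefix` models the value of nfs.netgroupprefix (0 or 1).
    """
    if netgroup_prefix not in (0, 1):
        raise ValueError("nfs.netgroupprefix must be 0 or 1")
    if entry.startswith("@"):
        # Prefixed entries are always treated only as netgroups.
        return ["netgroup"]
    if netgroup_prefix == 0:
        # Default: fall back to a netgroup lookup if the hostname fails.
        return ["hostname", "netgroup"]
    # With the parameter set to 1, no netgroup fallback occurs.
    return ["hostname"]


for setting in (0, 1):
    for entry in ("@engineering", "host1.dell.com"):
        print(setting, entry, resolution_plan(entry, setting))
```

With the parameter set to 1, a mistyped hostname fails after a single lookup instead of triggering an additional netgroup query.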

The NFSv4 protocol allows the owner and group attributes of a file to be identified as either a numeric ID or a string in the packet. Some legacy NFSv4 clients require the use of the string format as they do not support numeric IDs. OE version 4.4 adds two parameters to control this behavior, enabling support for some of the legacy clients. Parameters nfsv4.numericId and nfsv4.domain are described below.

- nfsv4.numericId: Specifies if NFSv4 user and group attributes are handled as numeric IDs or user@domain strings

  - 0: Uses strings (user@dell.com)

  - 1 (default): Uses numeric IDs (UID 100 and GID 100)

If nfsv4.numericId is set to 0, a UNIX Directory Service (UDS) must be configured to translate the numeric UID and GID to the usernames. The domain is retrieved from a second parameter:

- nfsv4.domain: Specifies the domain to be used for the usernames. This is only used if nfsv4.numericId is set to 0. If this parameter is empty, the realm of the NFS server (if Secure NFS is enabled) is used as the domain name.
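The interaction between the two parameters can be sketched in Python as follows. This is an illustrative model only: `format_owner`, the `uid_to_name` mapping (standing in for the UNIX Directory Service), and the error handling for unresolved IDs or a missing domain are all assumptions, not documented system behavior.

```python
def format_owner(uid, numeric_id=1, domain="", realm="", uid_to_name=None):
    """Model how an NFSv4 owner attribute could be rendered.

    numeric_id -- models nfsv4.numericId (1 = numeric IDs, 0 = strings)
    domain     -- models nfsv4.domain
    realm      -- Kerberos realm of the NFS server, used if domain is empty
    uid_to_name -- hypothetical stand-in for a UNIX Directory Service lookup
    """
    if numeric_id == 1:
        # Numeric IDs are carried as decimal digits.
        return str(uid)
    names = uid_to_name or {}
    if uid not in names:
        # Assumption: an unresolved UID is treated as an error in this sketch.
        raise LookupError(f"UID {uid} not found in the directory service")
    effective_domain = domain or realm
    if not effective_domain:
        raise ValueError("no nfsv4.domain value and no Kerberos realm available")
    return f"{names[uid]}@{effective_domain}"


directory = {100: "user"}  # hypothetical directory contents
print(format_owner(100))                                            # 100
print(format_owner(100, 0, domain="dell.com", uid_to_name=directory))  # user@dell.com
```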

NFSv4 supports file delegations, which is the process of assigning management of a file to the client. This greatly reduces the number of transactions required between the NAS server and the client, which results in increased efficiency, reduced network traffic, and potentially improved performance. Read delegations can be provided to multiple clients simultaneously since they only prevent write access while the data is being read. Write delegations are exclusive and are used to prevent read access by other clients while new data is being written. Since NFSv4 delegations provide several advantages, they are enabled by default. However, in specific instances such as troubleshooting or issue reproduction, this feature may need to be disabled. Disabling this feature may result in increased network traffic. OE version 4.3 also includes a parameter to disable NFSv4 delegation. The nfsv4.delegationsEnabled parameter can be configured to:

- 0: Disables NFSv4 file delegations

- 1 (default): Enables NFSv4 file delegations

Dell Unity systems use NFS export aliases, meaning each NFS export has a path and a name alias. An NFS client can mount the NFS export by using either the path or name. For example, if the path is /filesystem1/ and the export name is FS1, either can be used to mount the export. In addition, both options also show up in the showmount -e output even though they are the same export. To prevent duplicates in the showmount output, a parameter is available in OE version 4.3 to control this behavior. The nfs.showExportLevel parameter can be configured to:

- 0 (default): Show both the export path and name

- 1: Show only the export path

- 2: Show only the export name
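The effect of the three values can be modeled with a small Python sketch. The function `showmount_entries` is hypothetical, and the ordering of path and name for each export is an assumption; the sketch only shows which entries would be listed, not the exact showmount output format.

```python
def showmount_entries(exports, show_export_level=0):
    """List the entries that would appear for each (path, name) export.

    `show_export_level` models the nfs.showExportLevel parameter.
    """
    if show_export_level not in (0, 1, 2):
        raise ValueError("nfs.showExportLevel must be 0, 1, or 2")
    entries = []
    for path, name in exports:
        if show_export_level in (0, 1):
            entries.append(path)  # export path
        if show_export_level in (0, 2):
            entries.append(name)  # export name alias
    return entries


exports = [("/filesystem1/", "FS1")]
for level in (0, 1, 2):
    print(level, showmount_entries(exports, level))
```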

NFS is designed as a simple and efficient protocol. Oracle takes this a step further with their Oracle® direct NFS (dNFS) client. dNFS is built into the database’s kernel, enabling performance improvements compared to traditional NFS. A traditional NFS kernel goes through four layers of the I/O stack while the dNFS only needs to go through two. This is accomplished by bypassing the operating system level caches and eliminating operating system write-ordering locks. This enables dNFS to generate precise requests without any user configuration or tuning. Memory consumption is also reduced since data only needs to be cached in user space, eliminating the need for a copy to exist in the kernel space. Performance can also be further enhanced by configuring multiple network interfaces for load balancing purposes. All OE versions support Oracle dNFS in single node configurations. Starting with OE version 4.2, Oracle Real Application Clusters (RAC) are also supported. To use Oracle RAC, the nfs.transChecksum parameter must be enabled. This parameter ensures that each transaction carries a unique ID and avoids the possibility of conflicting IDs that result from the reuse of relinquished ports. The nfs.transChecksum parameter can be configured to:

- 0 (default): Does not compute a CRC

- 1: Computes a CRC on the first 200 bytes to check if an XID entry matches the request
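To illustrate why a checksum helps when transaction IDs (XIDs) can collide, the following Python sketch builds a lookup key for a request cache. It is a conceptual model only: `cache_key` is a hypothetical name, and the use of CRC-32 from `zlib` is an assumption, since the text does not state which CRC variant the system computes.

```python
import zlib


def cache_key(xid, request, trans_checksum=0):
    """Build a key used to match an incoming request to a cached XID entry.

    `trans_checksum` models the nfs.transChecksum parameter.
    """
    if trans_checksum == 1:
        # Checksum only the first 200 bytes of the request.
        return (xid, zlib.crc32(request[:200]))
    # Without the checksum, the XID alone identifies the entry.
    return (xid, None)


first = b"WRITE block a"
second = b"WRITE block b"  # different request that reuses the same XID

print(cache_key(7, first, 0) == cache_key(7, second, 0))  # True: treated as the same entry
print(cache_key(7, first, 1) == cache_key(7, second, 1))  # False: told apart by the CRC
```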

For more information about NAS server parameters and how to configure them, reference the Service Commands document on Dell Technologies Info Hub.

NFSv4

NFSv4 is a version of the NFS protocol that differs considerably from previous implementations. Unlike NFSv3, this version is a stateful protocol, meaning that it maintains session state rather than treating each request as an independent transaction that needs no preexisting information. This behavior is similar to that of SMB in Windows environments. NFSv4 brings support for several new features including NFS ACLs that expand on the existing mode-bit-based access control in previous versions of the protocol.

While Dell Unity fully supports the majority of the NFSv4 and v4.1 functionality described in the relevant RFCs, directory delegation and pNFS are not supported. Some of these features do not require any special configuration on the Dell Unity system, such as NFSv4.1 session trunking. To enable this, create multiple interfaces on the NAS server and then point the host to all the available IP addresses.

To configure NFSv4, you must first enable NFSv4 on the NAS server and then create an NFS file system and share. Then, the file system can be mounted on the host using the NFSv4 mount option.

Starting with OE version 4.2, NFS datastores can also be mounted using NFSv4. When creating NFS datastores on earlier versions of OE, the NFSv3 protocol is always used. If you want to use NFSv4, ensure NFSv4 is enabled on the NAS server. When creating a new datastore, select NFSv4 on the configuring host access page and provide host access to the ESXi servers. This process creates the datastore and automatically mounts it on the ESXi servers using NFSv4.

Secure NFS

Traditionally, NFS is not the most secure protocol, because it trusts the client to authenticate users as well as build user credentials and send these in clear text over the network. With the introduction of secure NFS, Kerberos can be used to secure data transmissions through user authentication as well as data signing through encryption. Kerberos is a well-known, strong authentication protocol where a single key distribution center, or KDC, is trusted rather than each individual client. There are three different modes available:

- krb5: Use Kerberos for authentication only

- krb5i: Use Kerberos for authentication and include a hash to ensure data integrity

- krb5p: Use Kerberos for authentication, include a hash, and encrypt the data in-flight
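On a Linux client, the protocol version and the Kerberos mode are typically selected through the `vers=` and `sec=` options of the NFS mount command. The Python sketch below assembles such a command as an illustration. The helper `build_mount_command`, the server name `nas01.example.com`, and the paths are hypothetical, and other client operating systems may use different option names.

```python
import shlex

NFS_VERSIONS = {"3", "4", "4.0", "4.1"}
SECURITY_MODES = {"sys", "krb5", "krb5i", "krb5p"}  # "sys" is traditional AUTH_SYS


def build_mount_command(server, export, mount_point, version="4.1", sec="sys"):
    """Return a Linux mount command as an argument list."""
    if version not in NFS_VERSIONS:
        raise ValueError(f"unsupported NFS version: {version}")
    if sec not in SECURITY_MODES:
        raise ValueError(f"unsupported security mode: {sec}")
    options = f"vers={version},sec={sec}"
    return ["mount", "-t", "nfs", "-o", options, f"{server}:{export}", mount_point]


command = build_mount_command("nas01.example.com", "/fs1", "/mnt/fs1", sec="krb5p")
print(shlex.join(command))
# mount -t nfs -o vers=4.1,sec=krb5p nas01.example.com:/fs1 /mnt/fs1
```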

To enable Secure NFS, both DNS and NTP must be configured. Also, a UNIX Directory Service such as NIS, LDAP, or Local File must be enabled, and a Kerberos realm must exist. LDAPS (LDAP over SSL) is generally used for Secure NFS to avoid weaknesses in the security chain. If an Active Directory domain-joined SMB server exists on the NAS server, that Kerberos realm may be leveraged. Otherwise, a custom realm can be configured for use in Unisphere.

Starting with OE version 4.2, NFS datastores can also be mounted using Secure NFS with Kerberos. When creating NFS datastores on earlier versions of the OE, the NFSv3 protocol is always used. If you want to use Secure NFS with Kerberos, ensure Secure NFS is enabled on the NAS server. You must also configure DNS, NTP, domain, and NFS Kerberos credentials on the ESXi server. When creating a new datastore, select NFSv4, provide the Kerberos NFS Owner name, and provide either read/write or read-only access to your ESXi hosts. This process creates the datastore and automatically mounts it to the ESXi hosts using secure NFS.

vVols

Virtual Volumes (vVols) is a storage framework introduced in VMware vSphere 6.0 that is based on the VASA 2.0 protocol. vVols enable VM-granular features and Storage Policy Based Management (SPBM). Enabling this option allows clients to access NFS vVol datastores through a NAS server.

Using vVols with IP multi-tenancy is not supported. You are prohibited from enabling the vVol Protocol Endpoint on a NAS server that has a tenant association. To use vVols, you must use a NAS server that has NFSv3 enabled and does not have a tenant assigned.

For more information about vVols, reference the Dell Technologies Info Hub.

Host access

The default host access option determines the access permissions for all hosts with network connectivity to the NFS storage resource. The available options are:

- No Access (Default)

- Read-Only

- Read-Only, allow Root (OE version 4.4 or later for file systems)

- Read/Write

- Read/Write, allow Root

For hosts that need something other than the default, different access levels can be configured by adding hosts, subnets, or netgroups to the override list with one of the access options above. To do this, these resources must first be registered on the system. This can be done on the Hosts (ACCESS) page in Unisphere. The following information is required for registration, and all other fields are optional:

- Host: Name and IP Address/Hostname

- Subnet: Name, IP Address, and Subnet Mask/Prefix Length

- Netgroup: Name and Netgroup Name

For hostname resolution, the search order is local files, UNIX directory service, and then DNS. This means if Local Files are configured, they are always queried first to resolve a hostname. If the name cannot be resolved or if Local Files are not configured, then the NAS server queries the configured UNIX Directory Service (LDAP or NIS), if it is configured. If the name still cannot be resolved or if neither Local Files nor UNIX Directory Service is configured, then DNS is used. This behavior can be customized by configuring the ns.switch parameter on the NAS server.
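The default fallback order can be modeled with a short Python sketch. The function `resolve_hostname` is hypothetical, and each source is represented by a plain dictionary (or `None` when not configured) purely for illustration.

```python
def resolve_hostname(name, local_files=None, directory_service=None, dns=None):
    """Resolve a hostname using the default search order.

    Each source is a dict of hostname -> address, or None if not configured.
    Returns (source_name, address), or None if no source resolves the name.
    """
    search_order = (
        ("local files", local_files),
        ("UNIX directory service", directory_service),
        ("DNS", dns),
    )
    for source_name, source in search_order:
        if source is None:
            continue  # source is not configured, so try the next one
        address = source.get(name)
        if address is not None:
            return source_name, address
    return None


local = {"host1": "10.10.10.10"}          # hypothetical local hosts file
dns = {"host1": "10.10.10.99", "host2": "10.10.10.20"}  # hypothetical DNS records

print(resolve_hostname("host1", local_files=local, dns=dns))  # ('local files', '10.10.10.10')
print(resolve_hostname("host2", local_files=local, dns=dns))  # ('DNS', '10.10.10.20')
```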

Starting with OE version 4.4, NFS host registration is optional. Instead, host access can be managed by specifying a comma-separated string. This is designed to simplify management and improve ease of use. When configuring access to an NFS share, you can either enter a comma-separated list of hosts or select from a list of registered hosts on the system. For each NFS share, only one host access method can be used.

As part of this change, you may see the host registration-based method of configuring NFS access referred to as Advanced Host Management, such as in UEMCLI. If Advanced Host Management is enabled, the NFS share is using the host registration-based method for configuring NFS access. If Advanced Host Management is disabled, the NFS share is using the comma-separated string method of configuring NFS access.

The string can contain any combination of entries listed in the table below and is limited to 7000 characters. If replication is configured, this string is also replicated to the destination, so no reconfiguration of host access is required in the event of a failover.

Table 4. NFS host access

| Name | Example | Notes |
| --- | --- | --- |
| Hostname | host1.dell.com | Hostname should be defined in the local hosts file, NIS, LDAP, or DNS. |
| IPv4 or IPv6 Address | 10.10.10.10 or fd00:c6:a8:1::1 | |
| Subnet | 10.10.10.10/255.255.255.0 or 10.10.10.10/24 | IP address/netmask or IP address/prefix. |
| Netgroup | @netgroup | Netgroup should be defined in the local netgroup file or UDS. Netgroup entries should be prefixed with @ to differentiate them from hostnames. |
| DNS Domain | *.dell.com | The DNS Server must support reverse lookups and the ns.switch parameter should not exclude DNS. Domain entries should be prefixed with * and follow the Linux convention. This option is only available when using host strings. |
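The entry types in Table 4 can be told apart with a few simple checks, sketched below in Python using the standard `ipaddress` module. The functions `classify_entry` and `parse_host_access` are hypothetical helpers for pre-checking a string before it is entered; they are not the validation logic used by the system, and the treatment of any non-IP entry as a hostname is an assumption.

```python
import ipaddress

MAX_LENGTH = 7000  # character limit stated for the host access string


def classify_entry(entry):
    """Classify one entry of a host access string by its Table 4 type."""
    if entry.startswith("@"):
        return "netgroup"
    if entry.startswith("*"):
        return "dns domain"
    if "/" in entry:
        # Accepts both address/netmask and address/prefix forms;
        # raises ValueError if the subnet is malformed.
        ipaddress.ip_network(entry, strict=False)
        return "subnet"
    try:
        ipaddress.ip_address(entry)
        return "ip address"
    except ValueError:
        # Assumption: anything else is treated as a hostname.
        return "hostname"


def parse_host_access(value):
    """Split a comma-separated host access string and classify each entry."""
    if len(value) > MAX_LENGTH:
        raise ValueError(f"host access string exceeds {MAX_LENGTH} characters")
    entries = (part.strip() for part in value.split(","))
    return [(entry, classify_entry(entry)) for entry in entries if entry]


hosts = "host1.dell.com, 10.10.10.10, fd00:c6:a8:1::1, 10.10.10.10/24, @netgroup, *.dell.com"
for entry, kind in parse_host_access(hosts):
    print(f"{entry}: {kind}")
```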

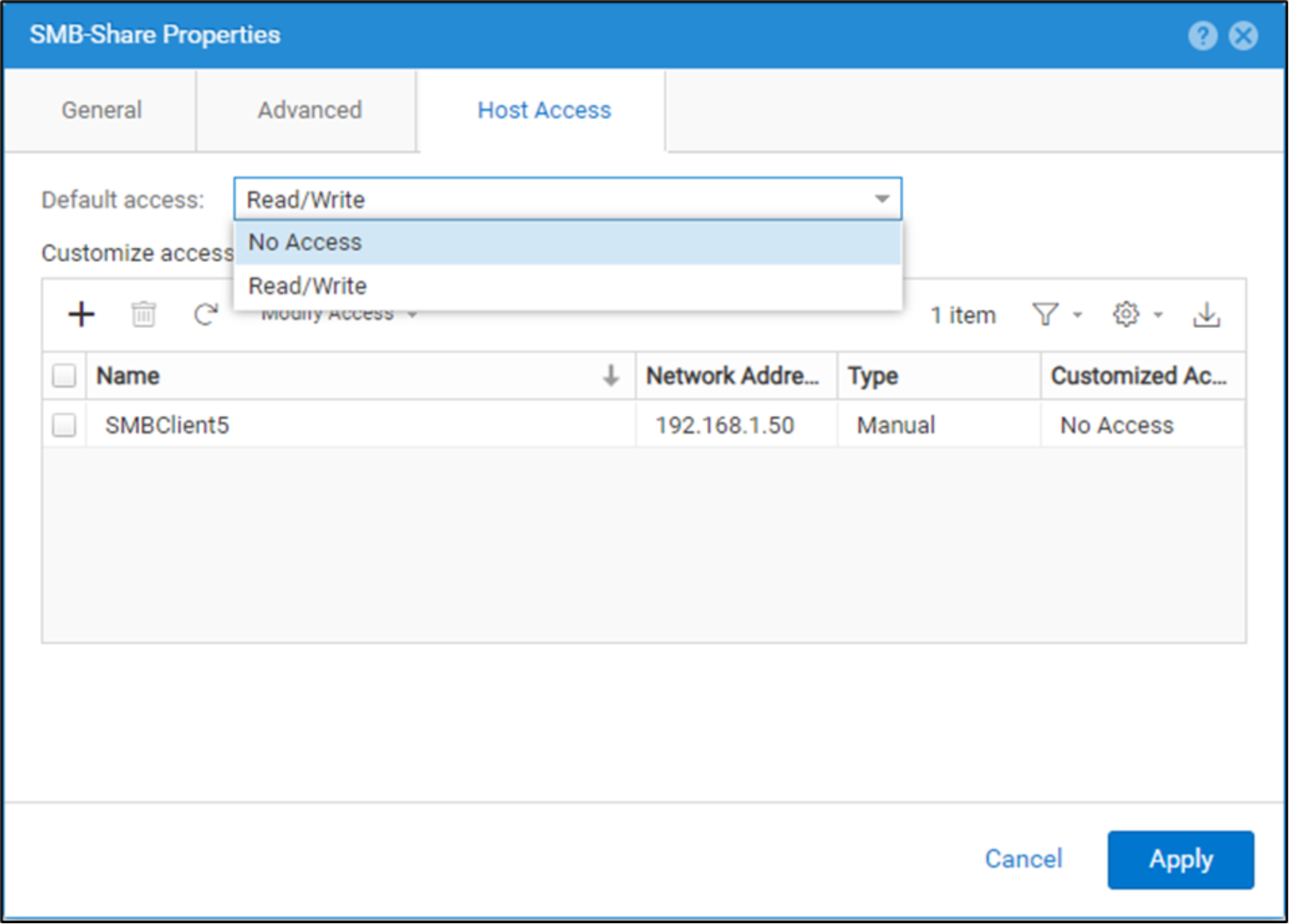

Starting with OE version 5.4, host access can now be configured at the SMB share level. A new Host Access tab has been added under SMB share properties in Unisphere. Like NFS host access, users have the flexibility to set default and customized access (override list) on SMB shares.

The access types for both options include:

- No Access

- Read/Write (Default)

Figure 27. SMB host access

Host access on SMB shares can also be set by using UEMCLI. Host access can only be configured after the SMB share is created, and only users with the admin or storage admin role can configure it.

For SMB host access, hosts can be defined as:

- Hostname

- IPv4/IPv6 Address

- Subnet

Like NFS, SMB share host access can leverage registered hosts on the system or use a comma-separated list.
