
ADAS Deep Learning Reference Architecture


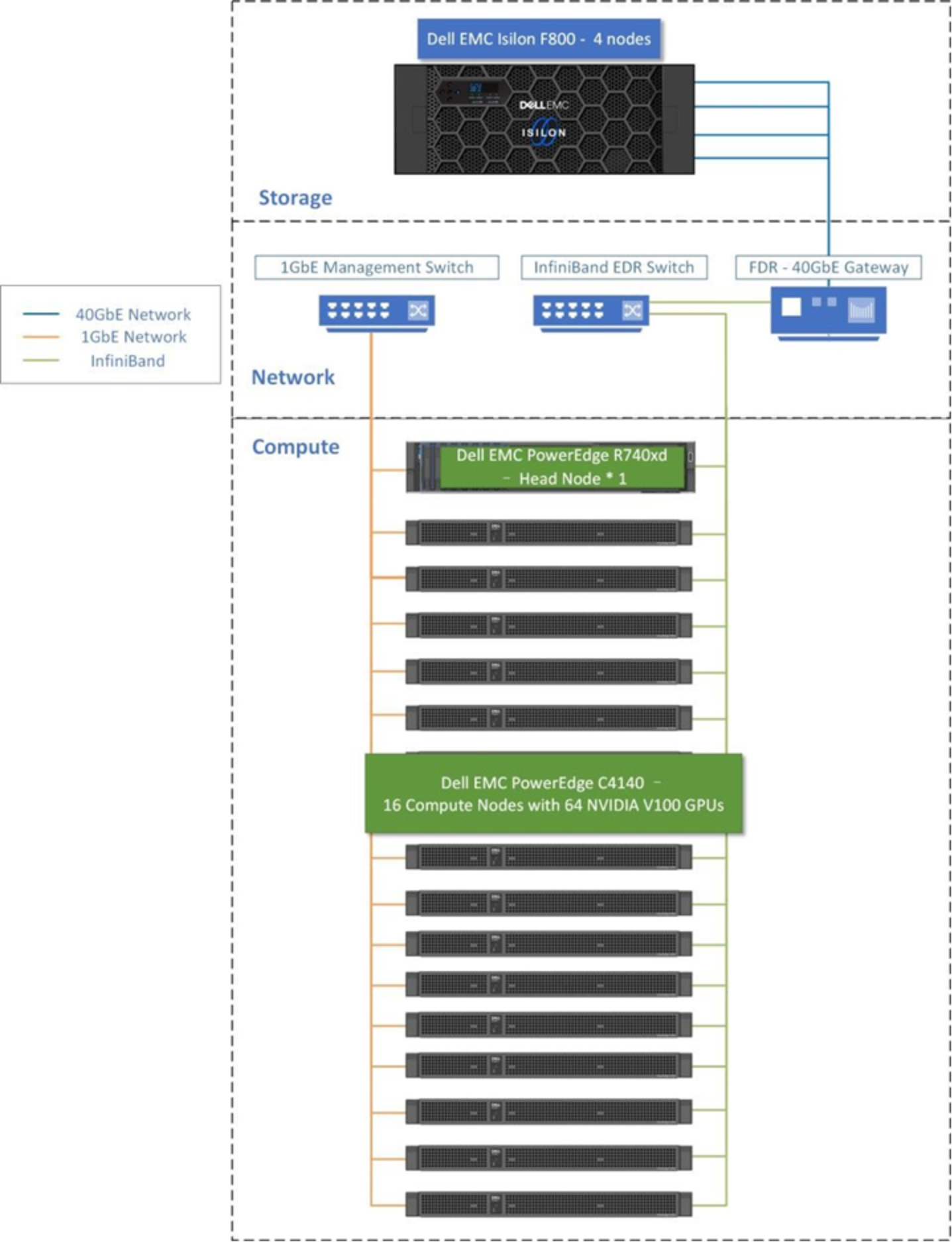

Note: In a customer deployment, the number of Dell PowerEdge compute servers and PowerScale storage nodes will vary and can be scaled independently to meet the requirements of the specific DL workloads.

Figure 5. Dell ADAS / AD DL Reference Architecture

The hardware architecture includes these key components:

- Compute: High-performance GPU server nodes that can support compute-intensive workloads are central to any DL solution. We used 16 PowerEdge C4140 servers with four V100 GPUs each (64 GPUs total) in this solution for the distributed DL training workload.

The Dell PowerEdge R740xd serves as the head node. This is Dell's latest two-socket, 2U rack server, and it supports the memory capacity, I/O, and network options that the head node requires. The head node handles cluster administration and cluster management, and also acts as the NFS server, user login node, and compilation node.

- Storage: High-performance storage is a critical component of DL solutions. The Isilon (PowerScale) scale-out NAS used here is well suited to modern DL applications: it offers the flexibility to handle any data type, scalability for data sets in the petabyte range, and the concurrency to serve massive numbers of simultaneous I/O requests from the GPUs. In this solution, the NFS share can be mounted on either the head node or each compute node.

- Networking: The solution consists of three network fabrics. The first is a 1 Gigabit Ethernet fabric that connects the head node and all compute nodes. The recommended Ethernet switch is the Dell Networking S3048-ON, which has 48 ports. This connection is used primarily by Bright Cluster Manager for deployment, maintenance, and monitoring of the solution. The second fabric connects the head node and all compute nodes through 100 Gb/s EDR InfiniBand. The third switch in the solution, the gateway switch, connects the Isilon F800 to the EDR InfiniBand switch through Isilon's front-end 40 Gigabit Ethernet connection.
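The compute sizing above (16 servers with four GPUs each) can be illustrated with a minimal Python sketch. The node and GPU counts come from the text; the `ComputeCluster` class and the node-major rank numbering are assumptions made for illustration, not something the reference architecture specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeCluster:
    """Compute tier as described above: identical GPU servers."""

    nodes: int = 16          # PowerEdge C4140 servers (from the text)
    gpus_per_node: int = 4   # V100 GPUs per server (from the text)

    @property
    def total_gpus(self) -> int:
        """Number of GPUs available to a distributed training job."""
        return self.nodes * self.gpus_per_node

    def global_rank(self, node_index: int, local_gpu: int) -> int:
        """Assumed node-major numbering: node 0 holds ranks 0..3, and so on."""
        if not 0 <= node_index < self.nodes:
            raise ValueError("node_index out of range")
        if not 0 <= local_gpu < self.gpus_per_node:
            raise ValueError("local_gpu out of range")
        return node_index * self.gpus_per_node + local_gpu


cluster = ComputeCluster()
print(cluster.total_gpus)          # 64
print(cluster.global_rank(15, 3))  # 63
```

Because compute and storage scale independently, a deployment with a different server count would only change the `nodes` value in a sketch like this.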

The software architecture includes these key components:

- Resource scheduler and management: Bright Cluster Manager is used in this solution to deploy and manage the clustered infrastructure. It provides all cluster software, including the operating system, GPU drivers and libraries, InfiniBand drivers and libraries, MPI middleware, the Slurm scheduler, and more.

- Docker containers or virtual machines: There has been a dramatic rise in the use of software containers for simplifying deployment of applications at scale. You can use either virtual machines or containers that encapsulate all of the application's dependencies to provide reliable execution of DL training jobs. Docker containers are now more widely used for bundling an application with all its libraries, configuration files, and environment variables so that the execution environment is always the same. To enable portability in Docker images that leverage GPUs, NVIDIA developed the Docker Engine Utility for NVIDIA GPUs, also known as the NVIDIA Container Toolkit, an open-source project that provides a command-line tool. The publicly available CSI driver for the PowerScale scale-out NAS provides support for provisioning persistent storage.
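As a rough illustration of the container approach, the following Python sketch assembles a `docker run` command line for a GPU training container that reads its data from the NFS share. The `--gpus` flag is the Docker CLI option that relies on the NVIDIA Container Toolkit being installed on the host. The image name, the host mount point `/mnt/isilon`, the training script, and the helper `docker_gpu_command` are all hypothetical and are not taken from the reference architecture.

```python
import shlex
from typing import List, Sequence


def docker_gpu_command(
    image: str,
    command: Sequence[str],
    nfs_mount: str,
    container_path: str = "/data",
) -> List[str]:
    """Build (but do not run) a `docker run` argv for a GPU training job."""
    return [
        "docker", "run", "--rm",
        # Expose all host GPUs to the container (needs NVIDIA Container Toolkit).
        "--gpus", "all",
        # Bind-mount the host's NFS mount point into the container, read-only.
        "-v", f"{nfs_mount}:{container_path}:ro",
        image,
        *command,
    ]


argv = docker_gpu_command(
    image="example/adas-training:latest",   # hypothetical image name
    command=["python", "train.py", "--data-dir", "/data"],  # hypothetical script
    nfs_mount="/mnt/isilon",                 # hypothetical host mount point
)
print(shlex.join(argv))
```

The sketch only builds and prints the command. In a cluster like the one described here, launching it on each compute node would normally be left to the scheduler rather than run by hand.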