
Present ME5 storage to Hyper-V hosts and VMs

Hyper-V supports DAS (SAS, FC, iSCSI) and SAN (FC, iSCSI) configurations with ME5.

See the Dell PowerVault ME5 Administrator’s Guide and the Dell PowerVault ME5 Deployment Guide at Dell Technologies Support for an in-depth review of transports and cabling options.

Transport options

Deciding which transport to use is based on customer preference and factors such as the size of the environment, cost of the hardware, and the required support expertise.

iSCSI has grown in popularity for several reasons, such as improved performance with the higher bandwidth connectivity options now available. A converged Ethernet configuration also reduces complexity and cost. Small office, branch office, and edge use cases benefit when minimizing complexity and hardware footprints with converged networks.

Regardless of the transport, it is a best practice to ensure redundant paths to each host by configuring MPIO. For test or development environments that can accommodate downtime without business impact, a less-costly, less-resilient design that uses a single path may be acceptable.

Mixed transports

In a Hyper-V environment, all hosts that are clustered should be configured to use a single common transport (FC, iSCSI, or SAS).

Microsoft provides only limited support for mixing transports on the same host. Mixing transports is not recommended as a best practice, but it can be justified temporarily in some use cases.

For example, when migrating from one transport type to another, both transports may need to be available to a host during a transition period. If mixed transports must be used, use a single transport for each volume that is mapped to the host.

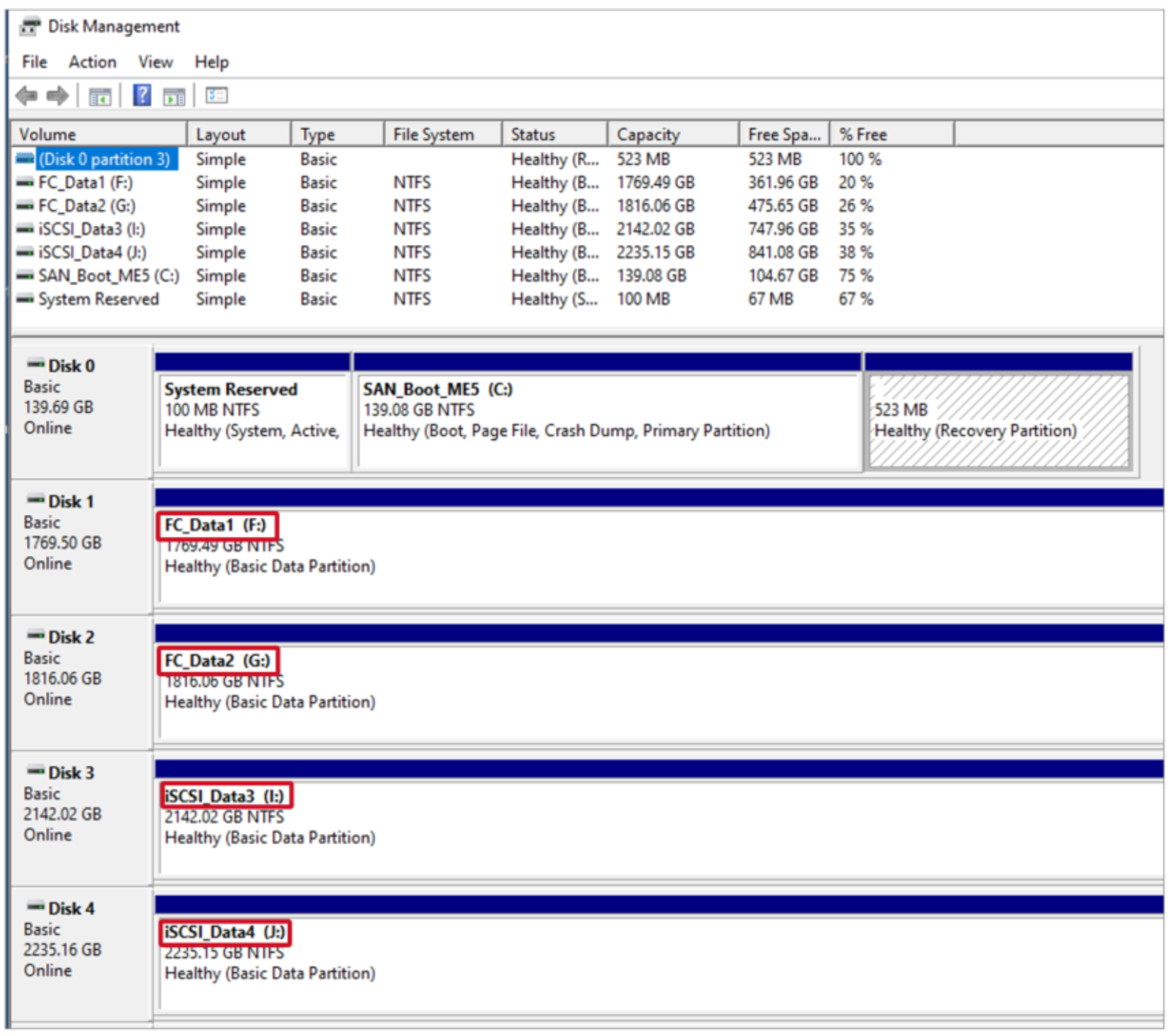

Figure 12. A host with two FC volumes and two iSCSI volumes mapped concurrently

Consider the following example:

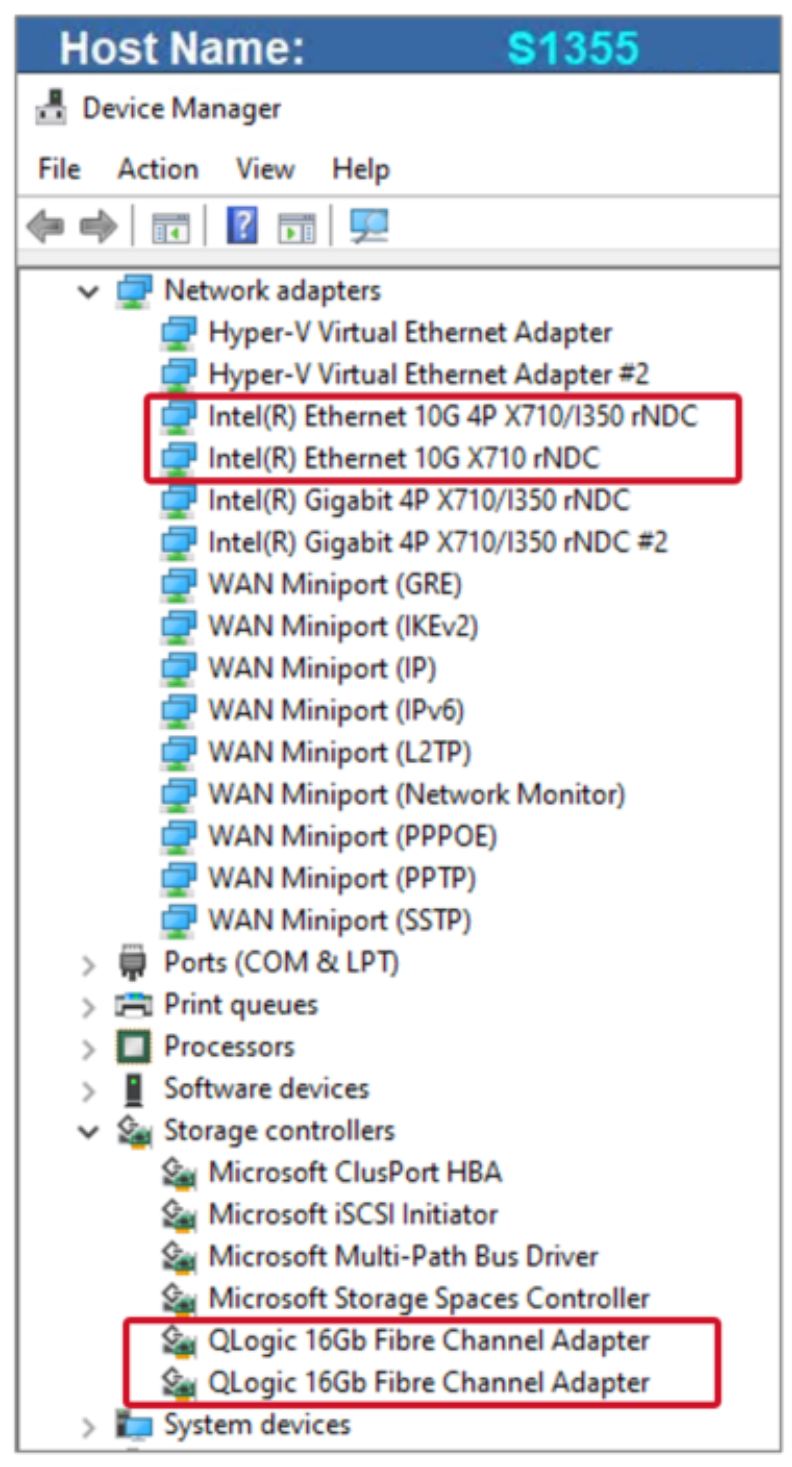

- A host has FC HBAs that support FC. The same host also has NICs that support iSCSI. The host is connected to both storage networks (FC and iSCSI) using MPIO.

Figure 13. Host server with iSCSI NICs and FC HBAs

- An existing FC volume is mapped to the host from a legacy storage array that is being retired.

- Create a host object on the new ME5 array that uses iSCSI mappings.

- Map a new volume on the ME5 to the host using iSCSI. After discovery, the host will display two volumes:

  - The first volume is the FC volume from the legacy storage array.

  - The new volume is the iSCSI volume from the ME5 array.

- Migrate the workload from the existing FC volume to the new iSCSI volume on the ME5 array.

- Discontinue the legacy FC volume.

Note: Do not attempt to map a volume to a Windows host using more than one transport. Mixing transports for the same volume will result in unpredictable, service-affecting I/O behavior in path failure scenarios. Map each volume using one transport only.
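The rule in the preceding note can be checked against an inventory of volume mappings. The following Python example is a minimal sketch only. The mapping records, the volume names, and the function name `find_mixed_transport_volumes` are hypothetical and are not part of any ME5 or Windows interface.

```python
from collections import defaultdict


def find_mixed_transport_volumes(mappings):
    """Return {volume: sorted transports} for volumes mapped over more than one transport.

    `mappings` is a hypothetical inventory: an iterable of (volume, transport) pairs.
    """
    transports_by_volume = defaultdict(set)
    for volume, transport in mappings:
        # Normalize case so "iSCSI" and "ISCSI" count as the same transport.
        transports_by_volume[volume].add(transport.upper())
    return {
        volume: sorted(transports)
        for volume, transports in transports_by_volume.items()
        if len(transports) > 1
    }


# Hypothetical example data for a host in a migration window.
example_mappings = [
    ("legacy-vol01", "FC"),
    ("legacy-vol01", "FC"),   # second path over the same transport: acceptable
    ("me5-vol01", "iSCSI"),
    ("me5-vol02", "iSCSI"),
    ("me5-vol02", "FC"),      # same volume over two transports: flagged
]

mixed = find_mixed_transport_volumes(example_mappings)
```

An empty result means that every volume in the inventory uses a single transport, even if the host as a whole uses more than one.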

MPIO best practices

Windows Server and Hyper-V natively support MPIO. A Device Specific Module (DSM) provides MPIO support. The DSM that is bundled with the Windows Server operating system is fully supported with ME5 arrays.

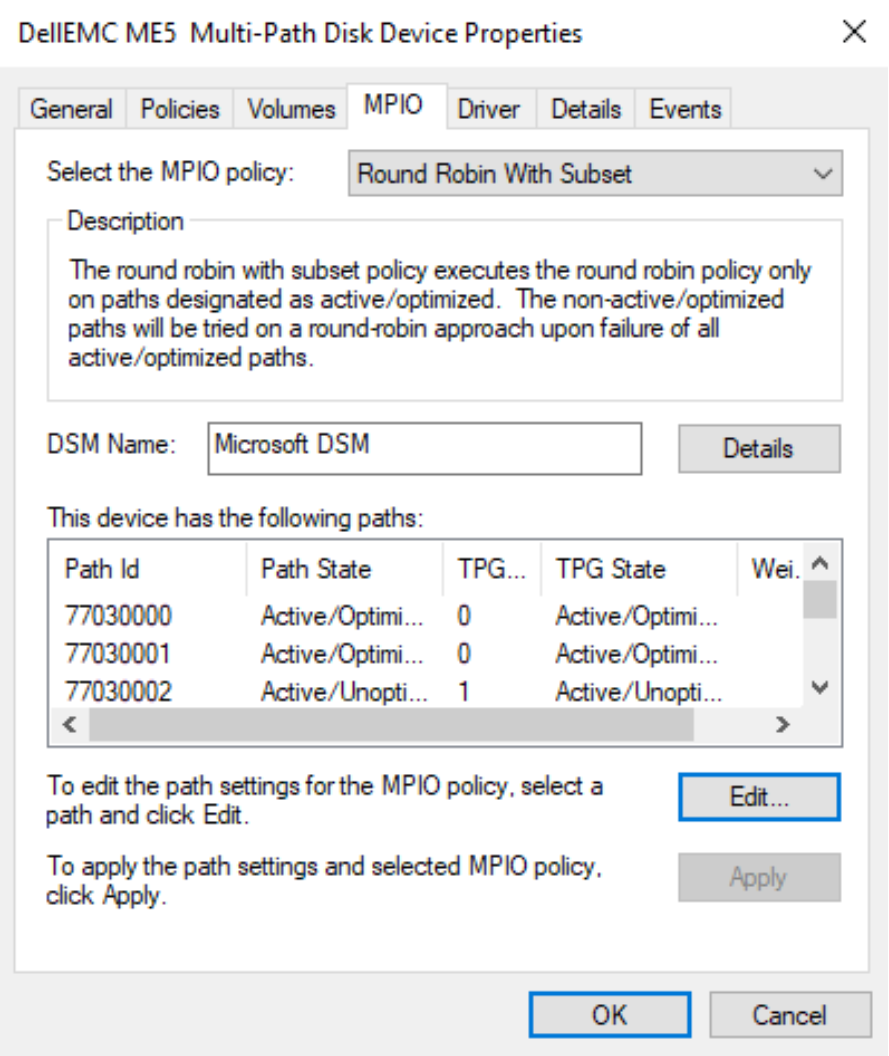

Windows and Hyper-V hosts default to the Round Robin with Subset policy with ME5 storage. Round Robin with Subset will work well for most Hyper-V environments. Specify a different supported MPIO policy if necessary.

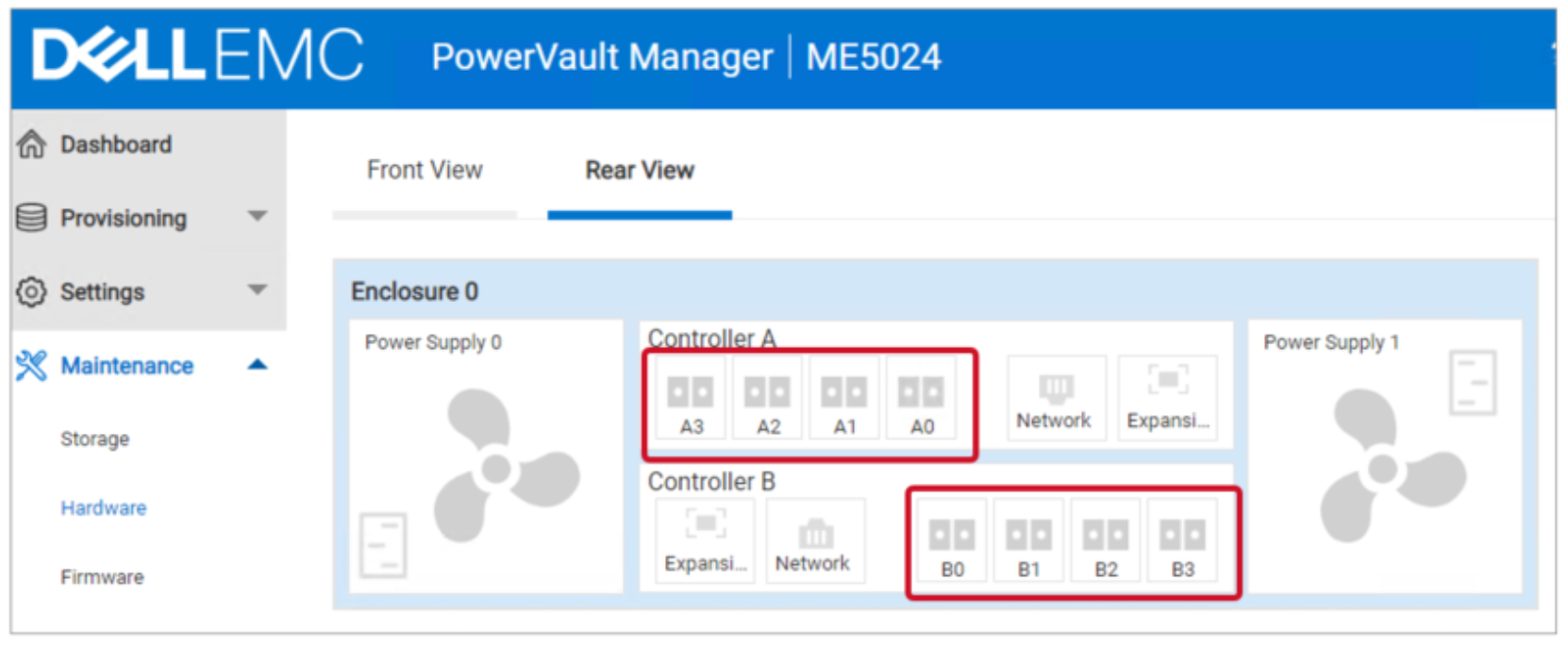

In this example, each ME5 storage controller (Controller A and Controller B) has four FC front-end (FE) paths connected to dual fabrics, for eight paths total. Connecting fewer FE paths, such as two on each controller for four paths total, is also acceptable.

Figure 14. Controller front-end ports A0 – A3 and B0 – B3

In Figure 15, a volume mapped from ME5 to a host lists eight paths in total:

- Four paths are optimized (to the primary controller for that volume).

- Four paths are unoptimized (to the secondary or standby controller for that volume).

Figure 15. Verify MPIO settings (Microsoft DSM)

The Active/Optimized paths are associated with the ME5 storage controller that the volume is assigned to. The Active/Unoptimized paths are associated with the secondary or standby ME5 storage controller for that same volume.
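The relationship between path states and controllers can be expressed as a simple consistency check. The following Python example is a sketch under stated assumptions: the `(port, state)` tuples are hypothetical input (for example, transcribed from the MPIO properties of a disk), and the first letter of each port name is assumed to identify the controller, as in the A0 – A3 and B0 – B3 naming of Figure 14.

```python
OPTIMIZED = "Active/Optimized"
UNOPTIMIZED = "Active/Unoptimized"


def check_volume_paths(paths):
    """Return a list of problems found in the paths of one volume.

    `paths` is a list of (port, state) tuples such as ("A0", "Active/Optimized").
    The first character of the port name is assumed to name the controller.
    """
    problems = []
    optimized = {port[0] for port, state in paths if state == OPTIMIZED}
    unoptimized = {port[0] for port, state in paths if state == UNOPTIMIZED}

    if len(paths) < 2:
        problems.append("fewer than two paths: no path redundancy")
    if len(optimized) != 1:
        problems.append("optimized paths should lead to exactly one controller")
    if len(unoptimized) != 1:
        problems.append("unoptimized paths should lead to exactly one controller")
    if optimized & unoptimized:
        problems.append("a controller reports both optimized and unoptimized paths")
    return problems


# Eight paths as described for Figure 15: four per controller.
eight_paths = (
    [(f"A{i}", OPTIMIZED) for i in range(4)]
    + [(f"B{i}", UNOPTIMIZED) for i in range(4)]
)

problems = check_volume_paths(eight_paths)
```

For the eight-path layout described above, the check reports no problems. A volume with a single path, or with optimized paths on both controllers, would be reported.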

When creating volumes on PowerVault, the wizard will alternate controller ownership in a round-robin fashion to help load balance the controllers. Administrators can override this behavior and select a specific controller when creating a volume.
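As an illustration of alternating ownership, the following Python sketch assigns volumes to Controller A and Controller B in turn and honors explicit overrides. It is not the wizard's actual implementation. The function name `assign_owners` and the volume names are hypothetical, and the sketch assumes that an overridden volume does not advance the rotation.

```python
from itertools import cycle


def assign_owners(volume_names, overrides=None):
    """Alternate controller ownership between "A" and "B" for new volumes.

    `overrides` maps a volume name to a controller chosen by the administrator.
    Assumption: an overridden volume does not consume a turn in the rotation.
    """
    overrides = overrides or {}
    rotation = cycle(["A", "B"])
    owners = {}
    for name in volume_names:
        if name in overrides:
            owners[name] = overrides[name]
        else:
            owners[name] = next(rotation)
    return owners


volumes = ["vol1", "vol2", "vol3", "vol4"]
default_owners = assign_owners(volumes)
pinned_owners = assign_owners(volumes, overrides={"vol2": "A"})
```

With no overrides, ownership alternates A, B, A, B. Pinning many volumes to one controller defeats the load balancing that the rotation provides.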

Best practice recommendations include the following:

- Do not change MPIO registry settings on the Windows or Hyper-V host (such as time-out values) unless directed by ME5 documentation or Dell Technologies support.

- In SAN mode, connect all available FE ports on the ME5 array using your preferred transport to maximize throughput and performance.

- Configure dual fabrics and storage networks for switch and path level redundancy.

- Configure each host to use at least two ports with a SAN or DAS configuration (iSCSI, SAS, or FC). Configure host MPIO settings to protect against a controller or path failure.

- Verify that software versions are current for all components in the data path:

  - ME5 controller firmware

  - Data and FC switch firmware

  - Boot code, firmware, and drivers for HBAs, NICs, SAS cards, and converged network adapters (CNAs)

- Verify that all hardware is supported according to the latest version of the Dell PowerVault ME5 Support Matrix at Dell Technologies Support.

Guest VMs and block storage options

ME5 block storage can also be presented directly to Hyper-V guest VMs using the following methods:

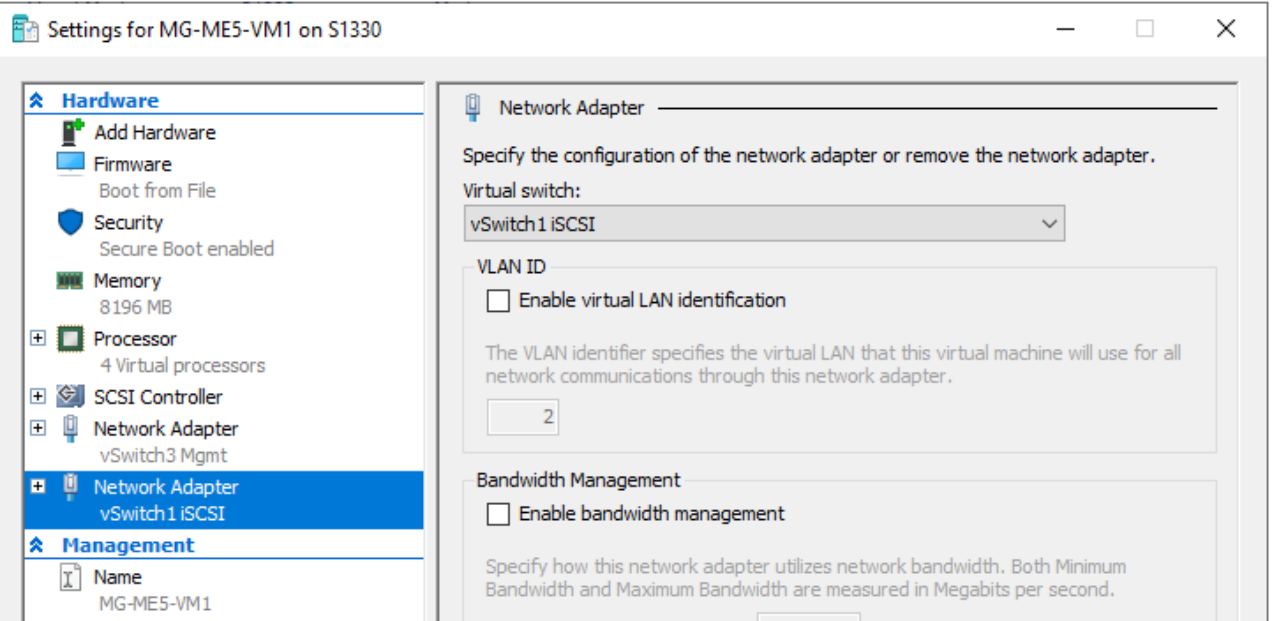

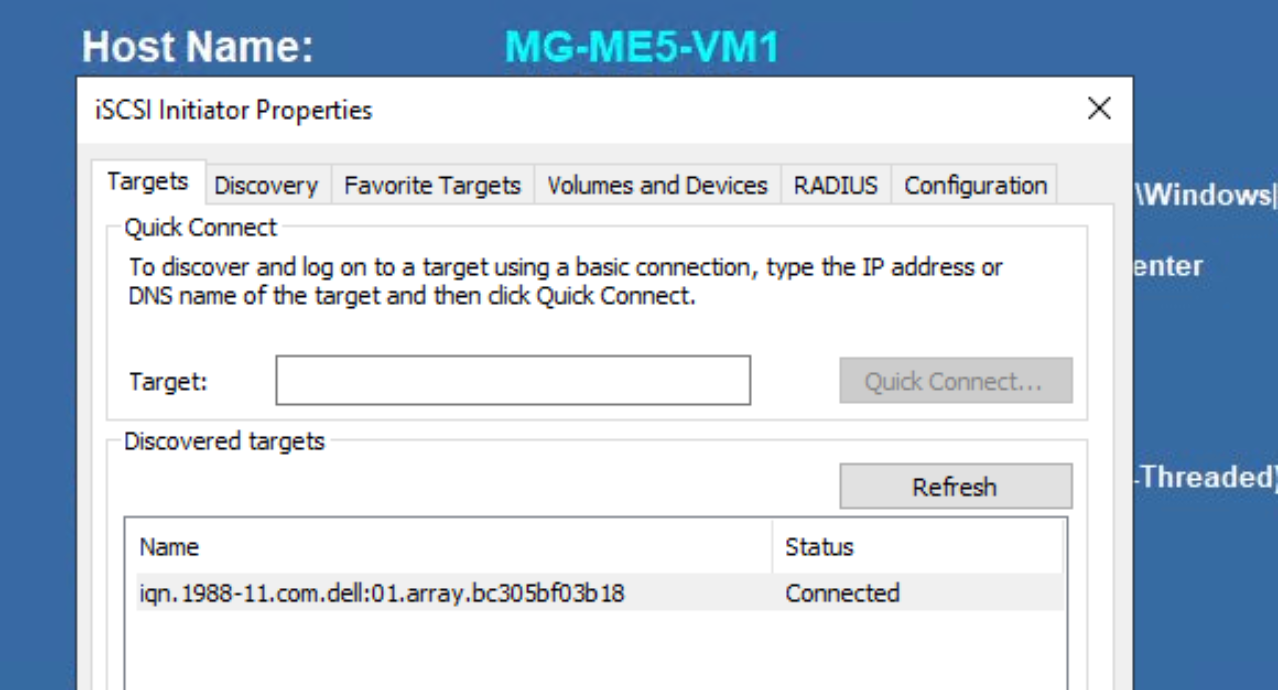

In-guest iSCSI: Configure the host and VM network so the VM can access ME5 iSCSI volumes through a Hyper-V host or cluster network.

- Configure in-guest iSCSI on the VM. The setup is similar to iSCSI on a physical host.

- MPIO is supported on the VM if multiple paths are available to the VM, and the multipath I/O feature is installed and configured.

Figure 16. In-guest iSCSI

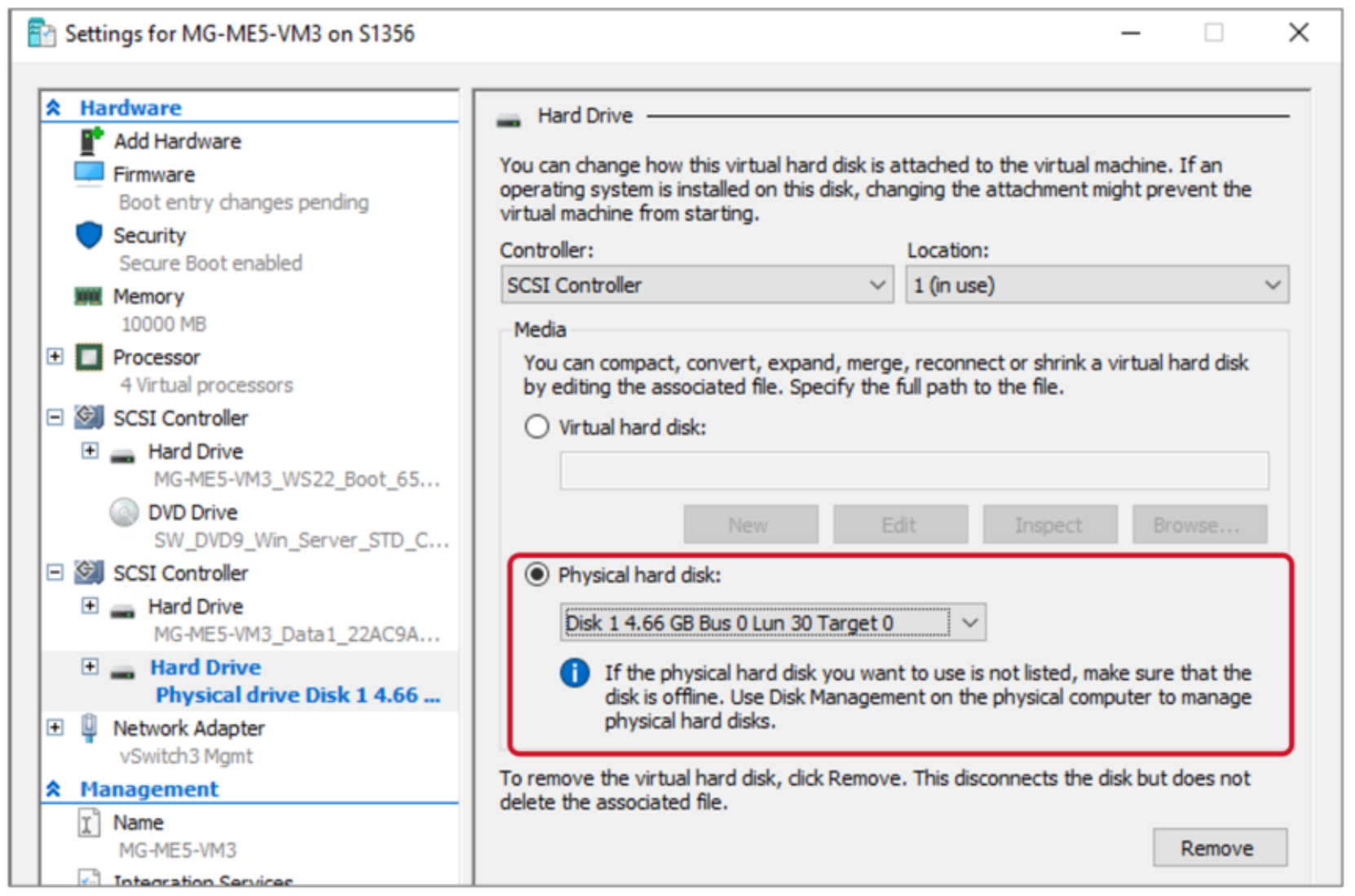

Physical disks: Physical disks presented to a Hyper-V VM are often referred to as pass-through disks. A pass-through disk is mapped to a Hyper-V host or cluster, and I/O access is passed through directly to a Hyper-V guest VM. The Hyper-V host or cluster has visibility to a pass-through disk and assigns it a LUN ID, but does not have I/O access. Hyper-V keeps the disk in a reserved state. Only the guest VM has I/O access.

- Use of pass-through disks is a legacy configuration that was introduced with Hyper-V 2008.

- Pass-through disks are no longer necessary because of the feature enhancements with newer releases of Hyper-V (generation 2 guest VMs, VHDX format, and shared VHDs).

- Use of pass-through disks is now discouraged, other than for temporary or specific use cases.

Figure 17. Hyper-V VMs support physical (pass-through) disks

In-guest iSCSI and pass-through disk use cases

ME5 arrays support in-guest iSCSI and pass-through disks (direct-attached disks) mapped to guest VMs. However, using direct-attached storage for guest VMs is not recommended as a best practice unless there is a specific use case that requires it. Typical use cases include:

- Performance: Direct-attached disks bypass the host server file system and so offer slightly better performance than a VHD or VHDX. However, for most workloads there is no significant difference in performance between a direct-attached disk and a virtual hard disk.

- Clustering: VM clustering on legacy Hyper-V platforms requires the use of direct-attached disks. Shared VHDs are preferred for VM clustering with Windows Server 2012 R2 and newer.

- Troubleshooting: Use of a direct-attached disk can be helpful if you need to troubleshoot the I/O performance of a volume and it must be isolated from all other servers and workloads.

- Custom snapshot or replication policy: It may be necessary in some use cases to apply a custom ME5 snapshot or replication policy to a specific disk (volume).

  - The preferred method is to place a virtual hard disk on a dedicated cluster shared volume (CSV) in a one-to-one configuration. Then, apply ME5 snapshots and replication to the CSV.

- Capacity: Legacy VHDs support a maximum size of 2 TB. VHDX supports a maximum size of 64 TB. If a data volume will exceed these limits, you may need to use in-guest iSCSI or a pass-through disk. The maximum supported size of a direct-attached disk is a function of the VM operating system.
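The capacity limits in the preceding list can be turned into a quick sizing check. The following Python example is a minimal sketch that uses the rounded limits stated in this section (2 TB for VHD and 64 TB for VHDX). The function name `disk_options` and the option labels are hypothetical.

```python
VHD_MAX_TB = 2     # legacy VHD limit as stated in this section
VHDX_MAX_TB = 64   # VHDX limit as stated in this section


def disk_options(required_tb):
    """Return the disk types that can hold a data volume of `required_tb` terabytes."""
    if required_tb <= 0:
        raise ValueError("required_tb must be positive")
    options = []
    if required_tb <= VHD_MAX_TB:
        options.append("VHD")
    if required_tb <= VHDX_MAX_TB:
        options.append("VHDX")
    if not options:
        # Beyond the virtual hard disk limits, only direct-attached storage remains.
        options.append("direct-attached (in-guest iSCSI or pass-through)")
    return options


small = disk_options(1)
medium = disk_options(10)
large = disk_options(100)
```

A 10 TB data volume fits only in a VHDX, and a 100 TB volume requires direct-attached storage, subject to the limits of the VM operating system.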

In-guest iSCSI and pass-through disk storage limitations

- Native Hyper-V Snapshots: The ability to perform native Hyper-V snapshots is lost. However, the ability to leverage ME5 snapshots of the underlying volume is unaffected.

- Complexity: Use of direct-attached volumes increases complexity, requiring more management overhead.

- Mobility: VM mobility is reduced because direct-attached disks create a dependency on the physical hardware layer.

- Scale: Each pass-through disk consumes a LUN ID on each host in a Hyper-V cluster. Extensive use of pass-through disks quickly becomes impractical and unmanageable at scale on a Hyper-V cluster. Use pass-through disks sparingly if they are required.

- Differencing Disks: The use of a pass-through disk as a boot volume on a guest VM prevents the use of a differencing disk.

Note: Legacy Hyper-V environments that are using direct-attached disks for guest VM clustering should consider switching to shared virtual hard disks when migrating to a newer Hyper-V version.

ME5 storage and Hyper-V clusters

Use a consistent LUN number when mapping shared volumes: quorum disks, cluster disks, and cluster shared volumes. Leverage host groups on the ME5 array to simplify the task of assigning consistent LUN numbers.

Note: Hyper-V hosts that use boot-from-SAN cannot be added to ME5 host groups. See the Boot from SAN section of this white paper for details.

Changing LUN IDs after initial assignment by ME5 may be necessary to make them consistent. By default, when mapping a new volume to a host group or group of hosts, PowerVault Manager assigns the next available LUN ID that is common to all of the hosts.
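The idea of a common LUN ID can be illustrated with a short Python sketch. It is not the logic that PowerVault Manager uses. The function names, host names, and the LUN range bounds (`first_lun` and `last_lun`) are assumptions for illustration only.

```python
def next_common_lun_id(used_by_host, first_lun=0, last_lun=255):
    """Return the lowest LUN ID that is unused on every host.

    `used_by_host` maps a host name to the set of LUN IDs already in use there.
    The default range bounds are assumptions, not ME5 limits.
    """
    used = set().union(*used_by_host.values())
    for lun in range(first_lun, last_lun + 1):
        if lun not in used:
            return lun
    raise ValueError("no common LUN ID is available in the given range")


def inconsistent_volumes(lun_by_host):
    """Return {volume: {host: lun}} for volumes whose LUN ID differs between hosts.

    `lun_by_host` maps a host name to a {volume: lun} dictionary.
    """
    seen = {}
    for host, volumes in lun_by_host.items():
        for volume, lun in volumes.items():
            seen.setdefault(volume, {})[host] = lun
    return {
        volume: hosts
        for volume, hosts in seen.items()
        if len(set(hosts.values())) > 1
    }


# Hypothetical two-node cluster.
next_lun = next_common_lun_id({"hv01": {0, 1, 2}, "hv02": {0, 1, 3}})
mismatched = inconsistent_volumes({
    "hv01": {"quorum": 0, "csv1": 1},
    "hv02": {"quorum": 0, "csv1": 2},
})
```

In this example, LUN IDs 2 and 3 are each in use on one host only, so the first ID that is free on both hosts is 4. The second check reports `csv1` because it is mapped with a different LUN ID on each host.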

Volume design considerations for ME5 storage

Each cluster shared volume (CSV) can support one VM or many VMs. How many VMs to place on a CSV is a function of user preference, the workload, and how ME5 storage features such as snapshots and replication will be used. Placing multiple VMs on a CSV is a good design starting point in most scenarios. Adjust this strategy for specific use cases.

Some advantages for a many-to-one strategy include the following:

- Avoid volume sprawl: Fewer ME5 array volumes are easier to manage.

- Efficiency: It is quicker and easier to deploy a VM to an existing CSV.

Some advantages for a one-to-one strategy include the following:

- I/O isolation: It is easier to isolate and monitor disk I/O patterns for a specific Hyper-V guest VM or workload.

- Ease of recovery: It is easy to quickly restore a guest VM by recovering the underlying CSV using an ME5 snapshot.

- Replication control: One-to-one gives administrators more granular control over what data gets replicated when ME5 volumes are replicated to another location.

- Move large VMs quickly: Use of native Hyper-V tools to migrate VMs is preferred. However, for large VMs, it might be easier to move a guest VM from one host or cluster to another by remapping the volume. Remapping the CSV (or using an ME5 snapshot) avoids having to copy a VM and its data over the network.

Other strategies include placing VHDs with a common purpose on a CSV. For example, place boot VHDs on a common CSV, and place data VHDs on other CSVs.